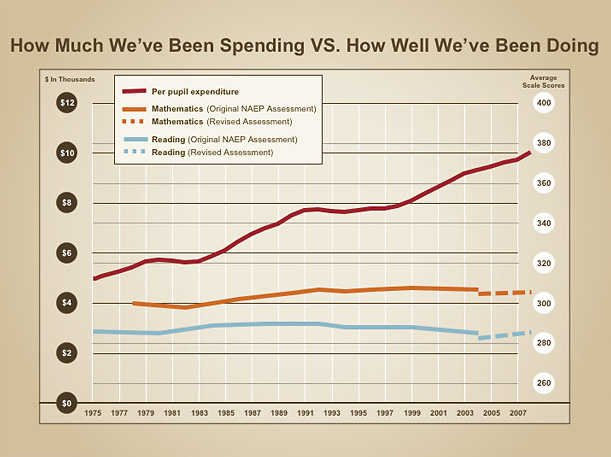

I’ll likely regret writing this post at some point. But this is a really, really important issue and one that undermines a very large number of prominent research studies on the effectiveness of various school reforms, especially when evaluated in high poverty contexts.

I blogged about this a few weeks back – the problems of poverty measurement in educational research. But this issue continues to come up in e-mails and other conversations. And it’s a critically important issue that so many researchers callously overlook. My sensitivity to this issue is heightened by the potential problems emergent from using bad poverty measurement in models to be used for rating and comparing teacher effectiveness.

Here, I pose a challenge to my research colleagues out there.

3 Reporting Rules for Studies/Models Using Crude Poverty Measures

Rule 1: Descriptive/Distribution Reporting of Poverty Measure

If using a single dummy variable to identify kids as qualifying for free or reduced price lunch, include sufficient descriptive statistics to show just how much or how little variance you are actually picking up with this measure. For example, if using this single “low income” indicator, report how many students qualify, and how many students within each nested group.

If, for example, you’ve got 70% or more of your sample identified with this single “low income” dummy variable, then you are assuming that 70% to be statistically equally poor. If 60% of the classrooms in your sample have 80% or more students who qualify, you are essentially classifying all of those classrooms as being statistically similar. HAVE THE INTEGRITY TO POINT THAT OUT.
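Here’s roughly what that kind of descriptive reporting could look like in practice. This is only a minimal sketch: the function name, the `(classroom_id, flag)` input layout, and the toy records are all made up for illustration, and the 80% cutoff is just the figure used in this post.

```python
from collections import defaultdict

def describe_low_income_flag(students, threshold=0.80):
    """Summarize how much variation a single low-income dummy picks up.

    students: list of (classroom_id, flag) pairs, flag is 1 if the student
    qualifies for free or reduced price lunch, else 0 (hypothetical layout).
    """
    by_class = defaultdict(list)
    for classroom_id, flag in students:
        by_class[classroom_id].append(flag)

    # Share of flagged students within each classroom (the nested group)
    class_shares = {c: sum(flags) / len(flags) for c, flags in by_class.items()}

    n_students = len(students)
    n_flagged = sum(flag for _, flag in students)
    n_high = sum(1 for share in class_shares.values() if share >= threshold)

    return {
        "students": n_students,
        "share_flagged": n_flagged / n_students,
        "classrooms": len(class_shares),
        "share_classrooms_at_or_above_threshold": n_high / len(class_shares),
        "min_class_share": min(class_shares.values()),
        "max_class_share": max(class_shares.values()),
    }

# Made-up toy records for three classrooms, NOT real data
toy = [("A", 1), ("A", 1), ("A", 1), ("A", 1), ("A", 0),
       ("B", 1), ("B", 1), ("B", 1), ("B", 1), ("B", 1),
       ("C", 1), ("C", 0), ("C", 0), ("C", 1), ("C", 0)]

report = describe_low_income_flag(toy)
for key, value in report.items():
    print(key, value)
```

If the share of classrooms at or above the threshold comes back large, that is the number to put in the paper, right next to the model results.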

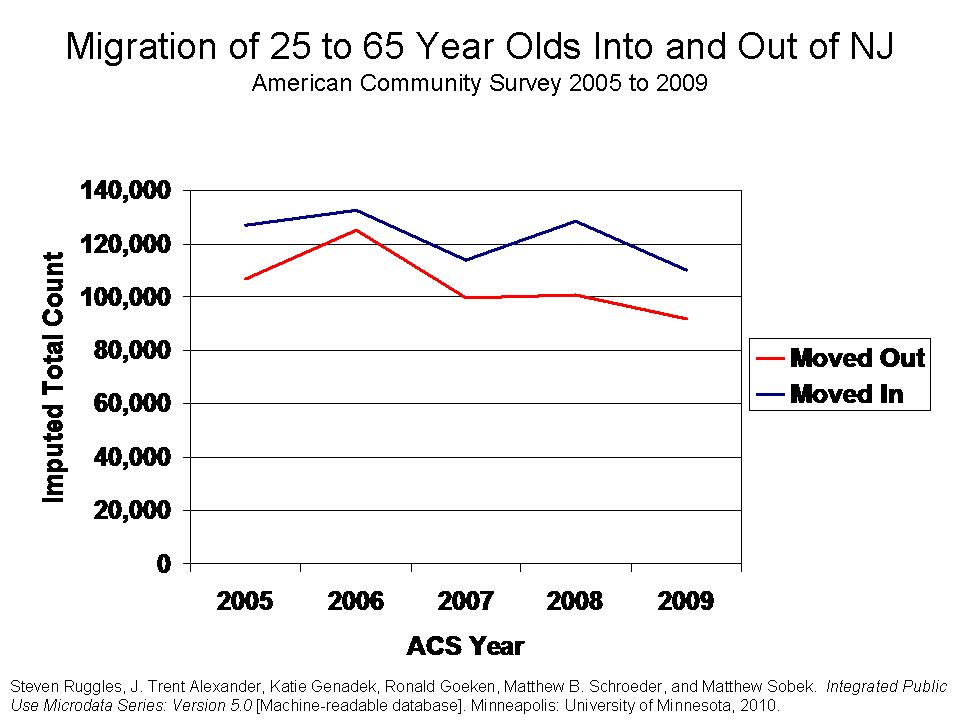

Remember, here’s the variance in % free or reduced lunch across Cleveland Schools. Not very useful, is it?

Is Cleveland just a huge outlier?

Well, in Texas, in 2007:

93% of Dallas elementary schools had over 80% free + reduced lunch

84% of Houston elementary schools had over 80% free + reduced lunch

100% of San Antonio elementary schools had over 80% free + reduced lunch

As such, any analysis which uses only this measure to capture variations in economic status of students across schools within these districts should be interpreted with caution.

Rule 2: Reporting of Relationships between Variance in Poverty and Outcome Measures

If using a single dummy variable to identify kids as qualifying for free or reduced lunch, report the relationship between that variable and student outcome measures. We know from various studies that gradients of poverty and household resources do have strong relationships with student outcome measures. If, at the classroom or school level, the percent of children who qualify for free or reduced lunch has only a modest to weak relationship with classroom or school level outcomes, chances are your poverty measure is junk. That is, it is more likely that this finding reflects a flaw in the poverty measure (lack of variance) than that you are evaluating a system where the poverty-outcome relationship has been completely disrupted. Further, to be confident of the latter, we have to fix the former.

In high poverty settings, your measure may be junk because the share of kids who qualify for free or reduced lunch only varies from about 70% or 80% up to 100%. That is, across nearly all classrooms, nearly all students are from families that fall below the 185% income level for poverty. Much of the remaining variation between 80% and 100% is just reporting noise or error.

Any legitimate measure of child poverty or family income status, when aggregated to the classroom or school level, will likely be significantly and systematically related to differences in student outcomes. Report it! If it’s not, the measure is likely insufficient. HAVE THE INTEGRITY TO POINT THAT OUT.

EXAMPLE

The following two graphs show us how important it can be to explore using alternative poverty thresholds, such as looking at numbers of children falling below the 130% income threshold versus the 185% threshold in a high poverty setting. The goal is to find the measure that a) works better for picking up variation across school settings or classrooms and b) as a result, picks up poverty variation that may explain differences in student outcomes.
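To make the threshold comparison concrete, here is a small sketch of how one might tabulate school-level shares under both cutoffs, if family income relative to the poverty line were available. Everything here is hypothetical: the school names, the income-to-poverty ratios, and the helper names are invented, and I’m assuming “at or below” for both cutoffs.

```python
FREE_CUTOFF = 1.30     # free lunch: income at or below 130% of the poverty level
REDUCED_CUTOFF = 1.85  # free or reduced lunch: income at or below 185%

def share_at_or_below(income_ratios, cutoff):
    """Share of students whose income-to-poverty ratio is at or below cutoff."""
    return sum(1 for r in income_ratios if r <= cutoff) / len(income_ratios)

def compare_thresholds(schools):
    """Compute both poverty shares for every school."""
    return {
        name: {
            "free_or_reduced": share_at_or_below(ratios, REDUCED_CUTOFF),
            "free_only": share_at_or_below(ratios, FREE_CUTOFF),
        }
        for name, ratios in schools.items()
    }

def spread(rows, key):
    """Range (max minus min) of one measure across schools."""
    values = [row[key] for row in rows.values()]
    return max(values) - min(values)

# Made-up income-to-poverty ratios for students in three invented schools
schools = {
    "School X": [0.5, 0.9, 1.2, 1.5, 1.7, 2.4],
    "School Y": [0.4, 0.6, 0.8, 1.0, 1.6, 1.8],
    "School Z": [1.1, 1.4, 1.6, 1.7, 1.8, 3.0],
}

rows = compare_thresholds(schools)
frl_spread = spread(rows, "free_or_reduced")
free_spread = spread(rows, "free_only")
print("spread, free or reduced:", round(frl_spread, 3))
print("spread, free only:", round(free_spread, 3))
```

In this toy setup the free-only measure separates the three schools far more than the free or reduced measure does, which is goal (a) above. Whether that extra spread also explains outcomes, goal (b), is a separate check.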

Figure 1 shows the relationship between school level % free OR reduced lunch and 8th grade math proficiency in Newark in 2009

While there appears to be a relationship, most schools fall above 80% free or reduced lunch and the relationship between this poverty measure and student outcomes seems surprisingly weak. We could draw the conclusion that all NPS schools are just so high in poverty that it really doesn’t matter (a ridiculous assertion, to say the least): that all of the kids are poor, that these high poverty levels affect their outcomes similarly, and that the remaining variations are all about good and bad teaching, and charter versus traditional public schools.

Figure 2 shows the relationship between school level % free lunch only and 8th grade math proficiency in Newark in 2009

When we use a more sensitive measure, we nearly double the amount of variation we explain in student outcomes, and we severely undermine those conclusions above. From 40% to 80% free lunch there exists a pretty darn strong relationship with student outcomes. Above that, it still erodes somewhat. But this too might be clarified by using an even stricter poverty threshold or a continuous measure of family income. CLEARLY, IT WOULD BE INSUFFICIENT TO USE THE FIRST MEASURE OF POVERTY – FREE + REDUCED – AS A CONTROL VARIABLE IN AN ANALYSIS OF NEWARK SCHOOLS, OR FOR THAT MATTER AN EVALUATION OF NEWARK TEACHERS.
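The comparison behind those two figures boils down to asking how much outcome variation each poverty measure explains. A rough sketch of that check follows. The numbers are invented for illustration and are NOT the Newark data, and `r_squared` is just a hand-rolled helper for a one-predictor linear fit.

```python
def r_squared(x, y):
    """Share of variance in y explained by a one-predictor linear fit on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return None  # no variation, so nothing can be explained
    return (sxy * sxy) / (sxx * syy)

# Made-up school-level values (NOT real data)
free_or_reduced = [0.85, 0.95, 0.90, 0.95, 0.90]  # bunched up near the ceiling
free_only = [0.40, 0.50, 0.60, 0.70, 0.80]        # spread over a wider range
proficiency = [70, 62, 55, 45, 40]                # percent proficient

r2_frl = r_squared(free_or_reduced, proficiency)
r2_free = r_squared(free_only, proficiency)
print("R-squared, free or reduced:", round(r2_frl, 3))
print("R-squared, free only:", round(r2_free, 3))
```

Reporting both numbers side by side lets readers judge for themselves whether the control variable in the model is doing any work.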

Rule 3: Reporting of Numbers/Shares of Cases Potentially Affected by Omitted Variables Bias (extent to which crude poverty measure compromises validity of model results)

Let’s say you or I have taken each of these first steps, but we decide to go ahead and conduct our analysis of charter school effectiveness, or ratings of individual teacher value added anyway, using the single student level dummy variable for “poorness” (based on free or reduced price lunch). After all, we’ve got to publish something? Now it is incumbent upon you (or I), the researcher, to appropriately represent the extent to which these data shortcomings may bias your (or my) analyses.

For example, in an analysis of teacher effects, it would be relevant to report the number and share of teachers with classrooms having 80% or more children who qualify. Why? Because you’ve chosen statistically to assume that every one of their classrooms full of children is statistically the same in terms of economic disadvantage – EVEN WHEN THEY ARE NOT! Those teachers with the lowest income children may be significantly disadvantaged by this “omitted variables” bias in the model.

Why not just report the overall correlation between effectiveness ratings and classroom level % free or reduced lunch? Yeah… You’re banking on getting that low correlation between teacher effectiveness ratings and % low-income, so you can say your ratings aren’t biased by poverty. Not so fast. You’re likely wrong in making that assertion, given the data. Instead, what you’re showing is that your really crappy poverty measure simply failed to pick up real differences in economic status across classrooms and thus failed to correct for differences in true economic status of students when determining teacher ratings. And then, your crappy poverty measure remained uncorrelated with the biased estimates it helped produce. Really helpful, eh?
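That last point is partly mechanical, and a toy example shows why. The sketch below is a deliberately oversimplified classroom-level stand-in for a value-added model, not anyone’s actual model. All numbers are invented, every teacher is assumed to be equally effective, and outcomes are assumed to depend only on true family income. Ratings are the residuals from regressing outcomes on % free or reduced lunch.

```python
def pearson(x, y):
    """Pearson correlation; returns None if either series has no variation."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return None
    return sxy / (sxx * syy) ** 0.5

def residualize(y, x):
    """Residuals from a one-predictor least-squares fit of y on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    slope = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / sxx
    return [(b - mean_y) - slope * (a - mean_x) for a, b in zip(x, y)]

# Made-up classrooms (NOT real data). All are 80%+ free or reduced lunch,
# but true average family income (as a ratio to the poverty level) differs a lot.
frl_share = [0.80, 0.85, 0.90, 0.95, 1.00, 0.90]
income_ratio = [0.6, 1.4, 0.9, 1.5, 0.7, 1.2]

# Assumption: identical teachers, so outcomes depend on income only
outcomes = [40 + 20 * r for r in income_ratio]

# "Ratings" after controlling only for the crude poverty measure
ratings = residualize(outcomes, frl_share)

print("ratings vs. % free or reduced:", round(pearson(ratings, frl_share), 6))
print("ratings vs. true income:", round(pearson(ratings, income_ratio), 3))
```

Least-squares residuals are uncorrelated with the regressor by construction, so the first correlation tells you nothing about bias. The second one, which you can’t compute without a better income measure, is where the problem shows up.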

Fess up to reality, and report the numbers of teachers across which your model does not effectively control for economic status differences among students – all teachers with classrooms that are, say, 80% or more free or reduced price lunch. HAVE THE INTEGRITY TO POINT THAT OUT.

Here are the factors in the NYC Value-added model. How many teachers have classrooms that are treated as statistically equivalent when they are not? Any teacher effectiveness model applied in a high poverty setting, like a large urban district, that relies solely on the single “low-income” dummy variable is likely entirely invalid for making comparisons across very large shares of teachers included in the model.

EXAMPLE

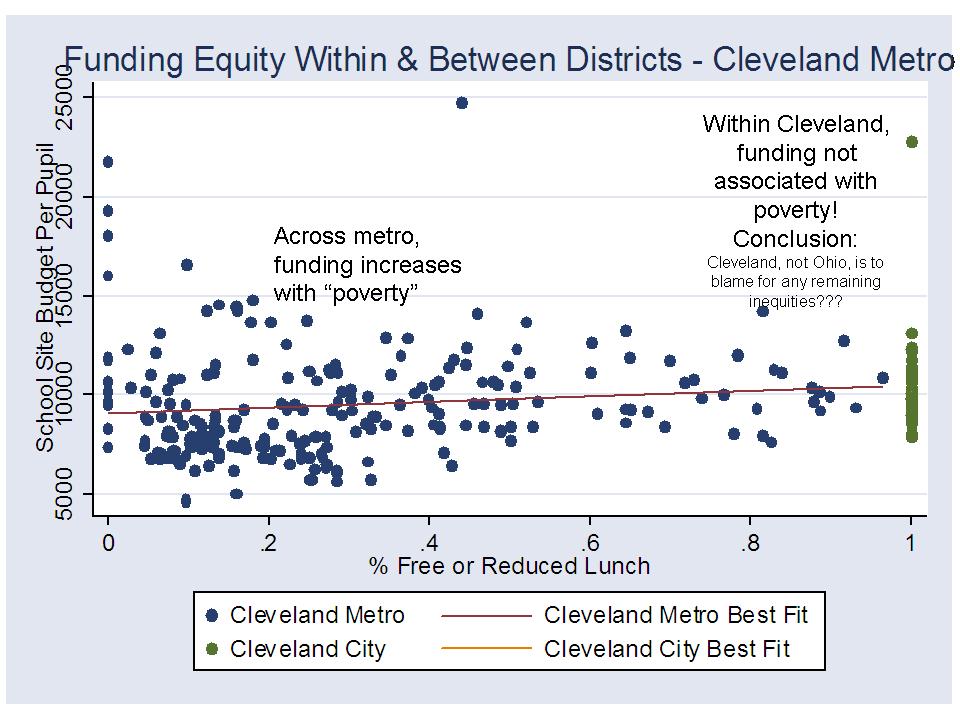

So, could we really draw wrongheaded conclusions by using insensitive poverty measurement, and by not checking and fully reporting on distributions? Here’s one example of how we might make stupid assertions, using data from 2007 on schools in the Cleveland metro and in the City of Cleveland.

Figure 3 shows the relationship across all elementary schools in the metro and in Cleveland city between % free or reduced lunch and percent passing state assessments

Now, let’s assume that we are trying to figure out if, for some reason, Cleveland has been unusually successful at disrupting the relationship between % free or reduced lunch and student outcomes, and we wish to compare the relationship within Cleveland to the relationship across all schools surrounding Cleveland. If we didn’t do the visual above, we might miss something huge (actually, given the Cleveland quirk, with 100% of schools at 100% free or reduced, we likely wouldn’t miss this, but in other less extreme cases we might). Here the pattern shows a very strong relationship between % free or reduced lunch and student outcomes across all schools, and absolutely no relationship between free or reduced lunch and outcomes in Cleveland – A freakin’ miracle! BUT IT’S ENTIRELY BECAUSE THERE’S NO FREAKIN’ VARIATION IN THE POVERTY MEASURE WITHIN CLEVELAND!
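The “miracle” is easy to reproduce with made-up numbers. In the sketch below, the metro and city values are invented and are NOT the Cleveland data. The `pearson` helper returns `None` when a series has no variation, since a correlation simply cannot be computed in that case.

```python
def pearson(x, y):
    """Pearson correlation; returns None if either series has no variation."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return None
    return sxy / (sxx * syy) ** 0.5

# (share free or reduced lunch, percent passing): made-up schools, NOT real data
metro = [(0.05, 92), (0.15, 88), (0.30, 80), (0.45, 71),
         (0.60, 65), (0.75, 55), (0.90, 48)]
city = [(1.0, 30), (1.0, 45), (1.0, 38), (1.0, 52), (1.0, 41)]

all_schools = metro + city
r_all = pearson([s for s, _ in all_schools], [p for _, p in all_schools])
r_city = pearson([s for s, _ in city], [p for _, p in city])

print("all schools:", round(r_all, 3))
print("city only:", r_city)  # None: the poverty measure does not vary at all
```

Outcomes inside the city still vary quite a bit. The poverty measure just has nothing to say about why, which is a data problem and not a policy success.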

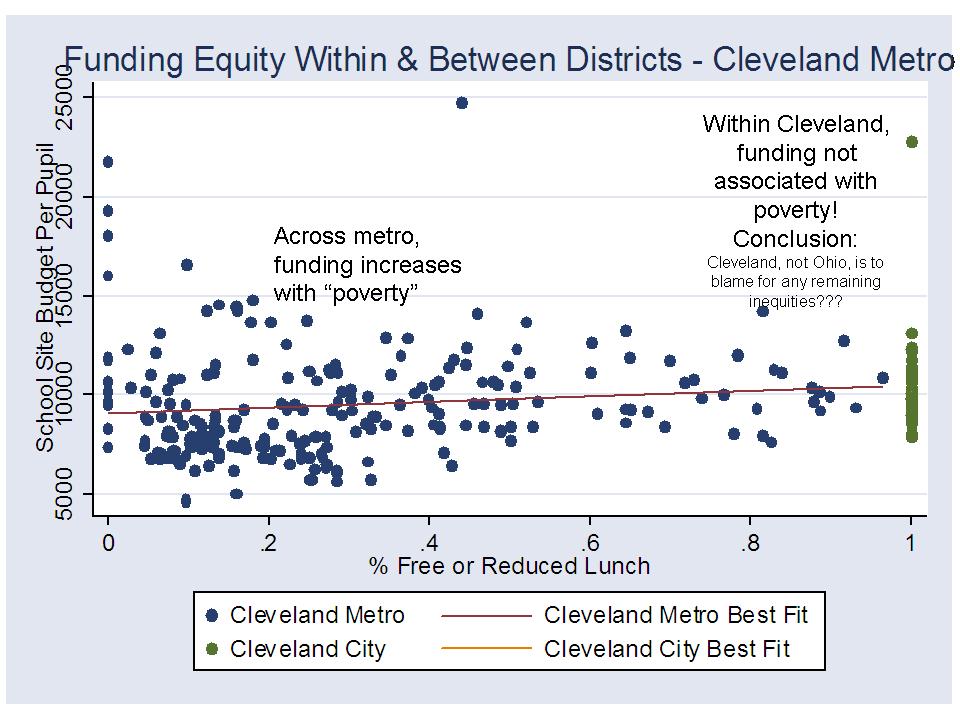

We can easily use this same pattern to our advantage to show that the state of Ohio has made progress on the distribution of funding by poverty across schools, but that Cleveland and other cities have not followed through, and are the real problem. That is, that funding per pupil is more tightly related to poverty between districts than across schools within districts. States have fixed the between district problem, but cities have not fixed the within district problem. This is a common Center for American Progress and Ed Trust claim (which is completely unfounded).

Figure 4 shows the estimation of the within and between district funding-poverty relationships for the Cleveland area, in a (completely bogus) way that supports the CAP and Ed Trust claim.

Yes, Cleveland provides an absurd extreme. But this same problem occurs when comparing any city where variation in the poverty measure across schools ranges from 80% to 100% and where variation in the poverty measure across districts ranges from 0% to 100% (see Newark example above).

No more excuses

The problem for researchers and evaluators is that states maintain multiple data systems that don’t always include the same gradients of data precision. We can find in STATE SCHOOL REPORTS – SCHOOL AGGREGATE DATA systems, information on the numbers and shares of school enrollment that are free lunch, reduced lunch, and sometimes other indicators such as homelessness. But, these data are not included in the STUDENT LEVEL DATA SYSTEM LINKED TO ASSESSMENT OUTCOMES. Instead, those data systems which must be used for value-added modeling or for measuring effectiveness of specific reforms, such as enrollment in charters, include only a handful of simple indicator variables about each student.

Therein lies the typical research excuse – one that I use as well. “It’s what we have! You can’t expect us to use something better if we don’t have it!” No, I can’t. No, we can’t expect you (or me) to use something better if we don’t have it. BUT WE CAN EXPECT AN HONEST REPRESENTATION OF THE SHORTCOMINGS OF THESE DATA. And those shortcomings are HUGE, and the stakes are HIGH, especially when we are using these data to compare teacher effectiveness and determine who should be fired, or when we are asserting that charter schools are more effective with low income students (if they aren’t actually serving the lower income students).

Readers: Please send along examples of recent prominent studies where the reported statistical model uses only a single indicator for free or reduced lunch to control for either or both a) differences across individual students and b) differences in peer groups, or classroom level effects.

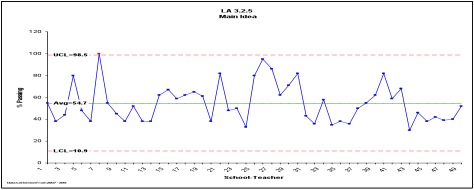

WHAT ABOUT LOS ANGELES, WHERE THE LA TIMES MODEL USED ONLY A SINGLE DUMMY VARIABLE ON FREE+REDUCED LUNCH (actually, the technical report refers ambiguously to students qualified for Title I, with no definition of the variable at all! http://www.latimes.com/media/acrobat/2010-08/55538493.pdf)?

Well, the vast majority of Los Angeles elementary schools have over 80% children qualifying for free + reduced lunch, suggesting that this measure simply won’t capture relevant variation across settings. The majority of LA schools (and classrooms within them) will be treated as statistically equivalent in terms of poverty in a model which only identifies poverty by free + reduced lunch. (data are from the NCES Common Core for 2008-09)

Simply adjusting the poverty threshold downward to the free lunch cutoff spreads the distribution, capturing considerably more variation across schools:

Still, the majority of LAUSD elementary schools are over 80% free lunch, indicating that even this measure is likely not sufficiently sensitive to underlying differences in poverty/economic status. Again, it is simply an irresponsible assertion to claim that these schools, which have over 80% of children who fall below the 130% or 185% income level for poverty, are pretty much the same. Using a statistical model that claims to correct for economic status, but uses only this measure to do so, depends on that irresponsible assertion! At the very least, this is an assertion that requires considerably more investigation.
