Here’s a quick data-driven post on Governors’ state aid cuts – or aid changes. So far, I’ve been able to compile data from a few states that make it relatively easy to access and download district-by-district runs of state aid (and one state that does not, but where I have good sources of assistance). Here, I compare changes in state aid to K-12 public school districts in Ohio, Pennsylvania and New York.

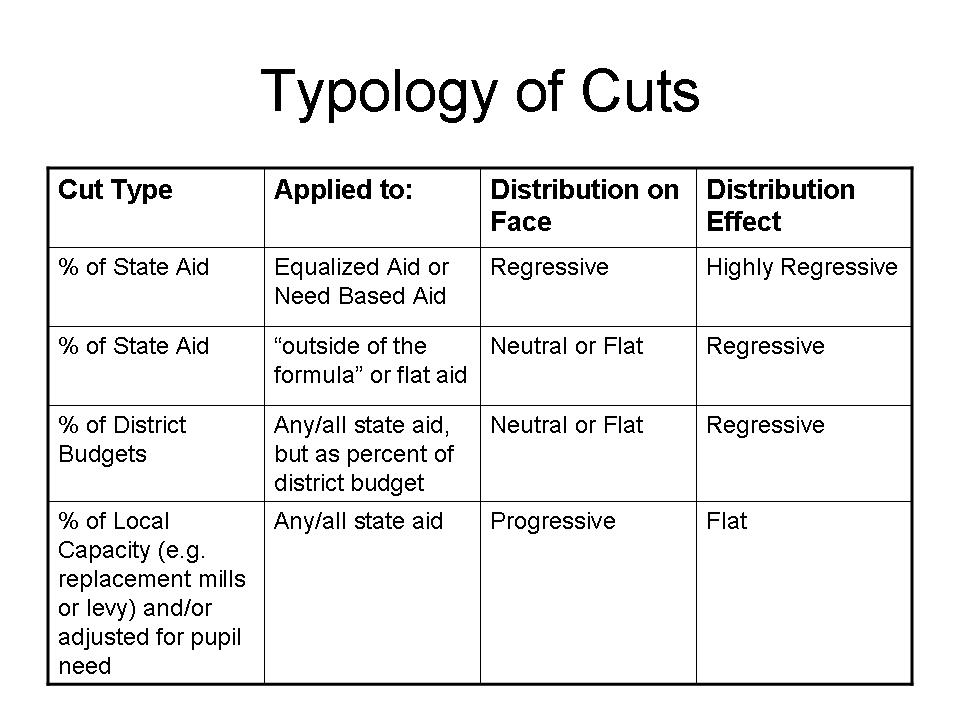

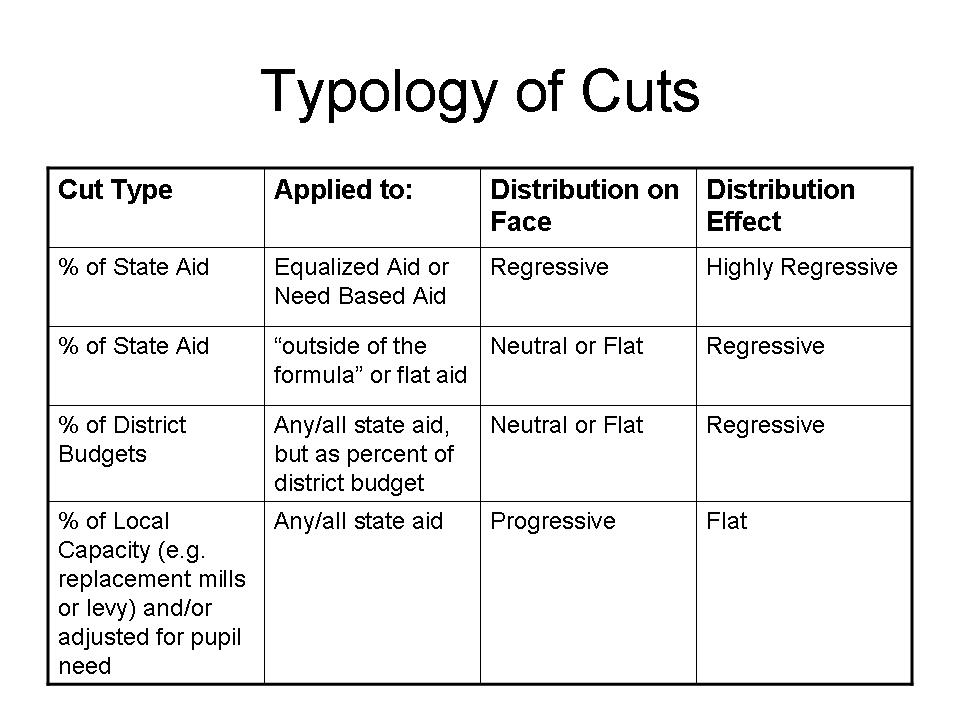

Let’s start with a review of types of cuts or distributions of cuts that might be applied:

First, cuts might be implemented as a percent of state aid, and those cuts might be applied across different aid programs. States typically have different clumps of state aid that go out to school districts, some of which are allocated progressively with respect to need and wealth and others which may be allocated flat across districts regardless of local capacity or wealth. And some, like New York State, actually still maintain very large aid programs that are distributed in greater amounts to wealthier districts (STAR aid). If one makes proportionate cuts to need-based aid, or equalized aid, that generally means making larger cuts to needier districts (on a per pupil basis). The cuts alone are regressive on their face, and because the cuts are larger for districts with less capacity to replace the state cuts locally, the effect tends to be highly regressive. Smaller cuts on wealthier districts are easily replaced with local source funds.
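To make the arithmetic concrete, here is a minimal sketch of a proportionate cut to equalized aid. The three districts and their per pupil aid amounts are invented for illustration and are not drawn from any state's data; the 5% cut rate is likewise an assumption.

```python
# Hypothetical districts: every figure here is invented for illustration.
districts = [
    {"name": "High need", "equalized_aid_pp": 8000.0},
    {"name": "Middle", "equalized_aid_pp": 4000.0},
    {"name": "Low need", "equalized_aid_pp": 1000.0},
]

CUT_RATE = 0.05  # assumed: the same percent cut applied to every district


def proportionate_cut(aid_per_pupil, cut_rate):
    """Dollar cut per pupil when aid is reduced by the same percent everywhere."""
    return aid_per_pupil * cut_rate


# Because equalized aid is larger in needier districts, the same percent
# cut removes more dollars per pupil from those districts.
cuts = {
    d["name"]: proportionate_cut(d["equalized_aid_pp"], CUT_RATE)
    for d in districts
}

for name, cut in cuts.items():
    print(f"{name}: ${cut:,.0f} cut per pupil")
```

The same percent produces very different dollar cuts per pupil, which is what makes this type of cut regressive on its face.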

Alternatively, a state might cut a flat percent of flatly allocated aid, or a state might distribute aid cuts as a flat percent of per pupil budgets. The distributional effects of these cuts, at face value, depend on how per pupil budgets are distributed across districts. If the overall system is progressive to begin with (higher need districts having larger per pupil budgets), then the cuts are larger on a per pupil basis in higher need districts. If the overall system is flat, or neutral, the proportionate cuts will be flat or neutral on their face. If applied to flatly allocated aid, the cuts are flat on their face. However, because wealthier districts can more easily replace the same size cut, the distribution effect will likely remain regressive – though not as absurdly regressive as the first option.

Most cuts fall into the two categories above (the first three in the table), but the possibility exists that a state would actually cut state aid in greater amounts to those districts that either have less need to begin with or can most easily replace that aid with local resources. These would, on their face, be progressively distributed cuts. But because the districts receiving the largest cuts would be the ones with the greatest capacity to bounce back on their own, the distribution effect would likely be flat.
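The difference between how a cut looks on its face and its likely distribution effect can be sketched as follows. All dollar amounts and the local replacement shares (10% for the high need district, 75% for the low need district) are hypothetical assumptions chosen only to illustrate the three cases described above.

```python
def net_change_per_pupil(state_cut_pp, local_replacement_share):
    """Per pupil budget change after a district backfills part of a state cut.

    local_replacement_share is a hypothetical 0-1 fraction of the cut that
    the district can raise from local sources.
    """
    return -state_cut_pp * (1.0 - local_replacement_share)


# Invented scenarios: (cut per pupil in high need district, in low need district)
scenarios = {
    "proportionate cut to equalized aid": (400.0, 50.0),
    "flat per pupil cut": (200.0, 200.0),
    "larger cuts to wealthier districts": (100.0, 400.0),
}

# Assumed capacity to replace lost state aid with local revenue.
HIGH_NEED_SHARE = 0.10
LOW_NEED_SHARE = 0.75

results = {}
for label, (high_cut, low_cut) in scenarios.items():
    high_net = net_change_per_pupil(high_cut, HIGH_NEED_SHARE)
    low_net = net_change_per_pupil(low_cut, LOW_NEED_SHARE)
    results[label] = (high_net, low_net)
    # A negative gap means the high need district ends up losing more.
    gap = high_net - low_net
    print(f"{label}: high need {high_net:+.1f}, low need {low_net:+.1f}, gap {gap:+.1f}")
```

Under these assumed numbers, the first scenario leaves a large gap against the high need district, the flat cut leaves a smaller one, and the cut that is progressive on its face ends up roughly flat.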

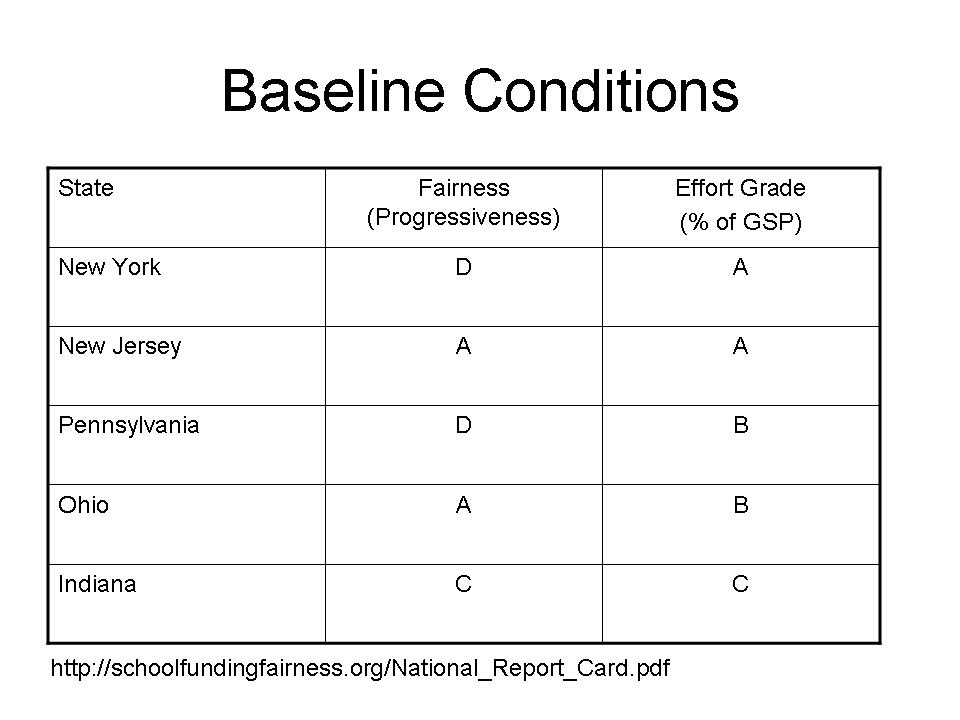

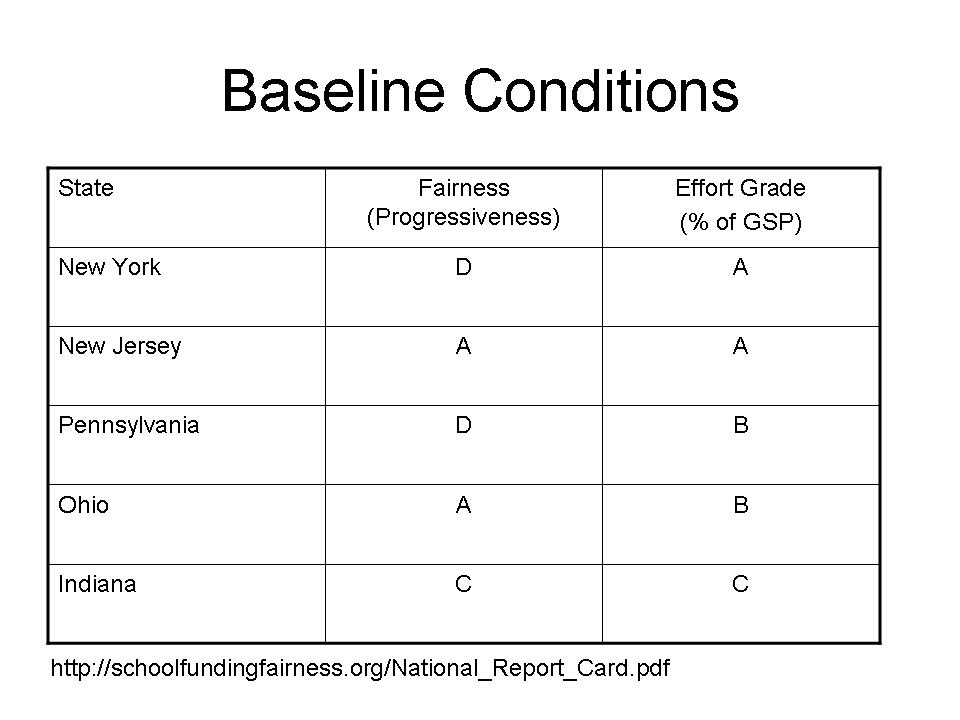

The baseline conditions in a state matter!

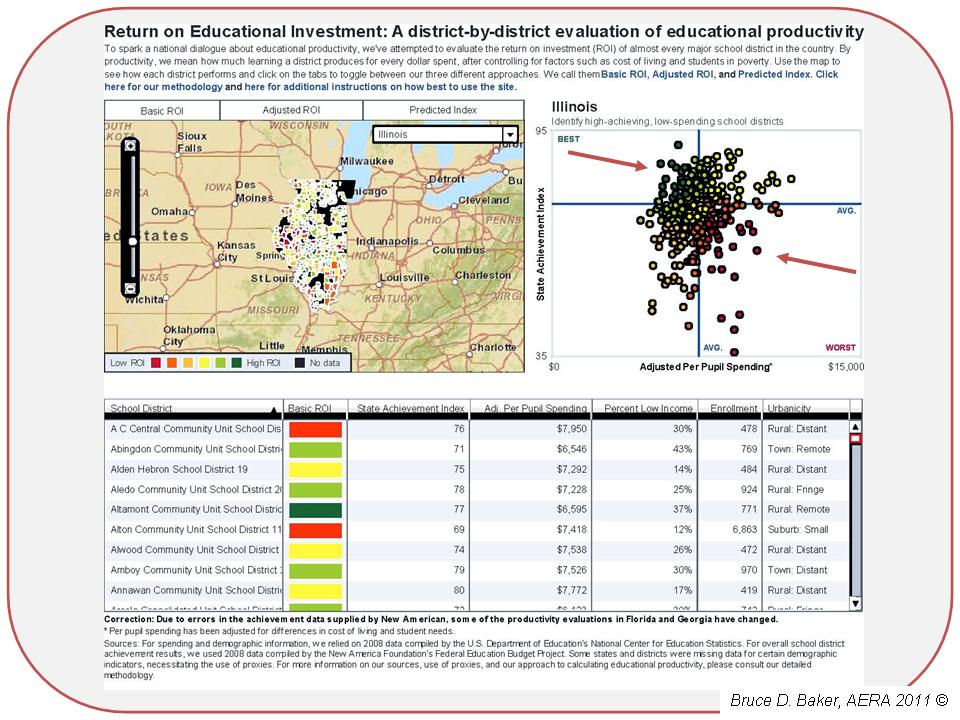

This table draws on the School Funding Fairness report I worked on and released last year, which characterizes the baseline conditions for states. It would be particularly problematic, for example, to make the first type of cuts on a state school finance system that is regressive to begin with. It would arguably also be quite offensive to make flat cuts on a regressive system. For more explanation regarding these baseline conditions, see http://www.schoolfundingfairness.org.

New York, while having high average spending per pupil, IS AMONG THE MOST REGRESSIVELY FUNDED STATE EDUCATION SYSTEMS IN THE NATION. In fact, funding in New York State is only as high as it is because of the very high spending of very affluent suburban districts – suburban districts that, by the way, continue to receive substantial state aid for property tax relief. New Jersey and Ohio are two of the only states which, in our report, showed systematic positive relationships between funding (state and local) and poverty, albeit Ohio’s funding was much less systematic than that of New Jersey and less progressive overall. Still, Ohio was far more progressive on funding distribution than many other states. Pennsylvania was right down there with New York, among the most regressive in the nation – but PA had begun to phase in a new basic education funding formula which would, if implemented, lead to improvements.

How do the Governors’ cuts play out? Who’s “best” and who’s “worst”?

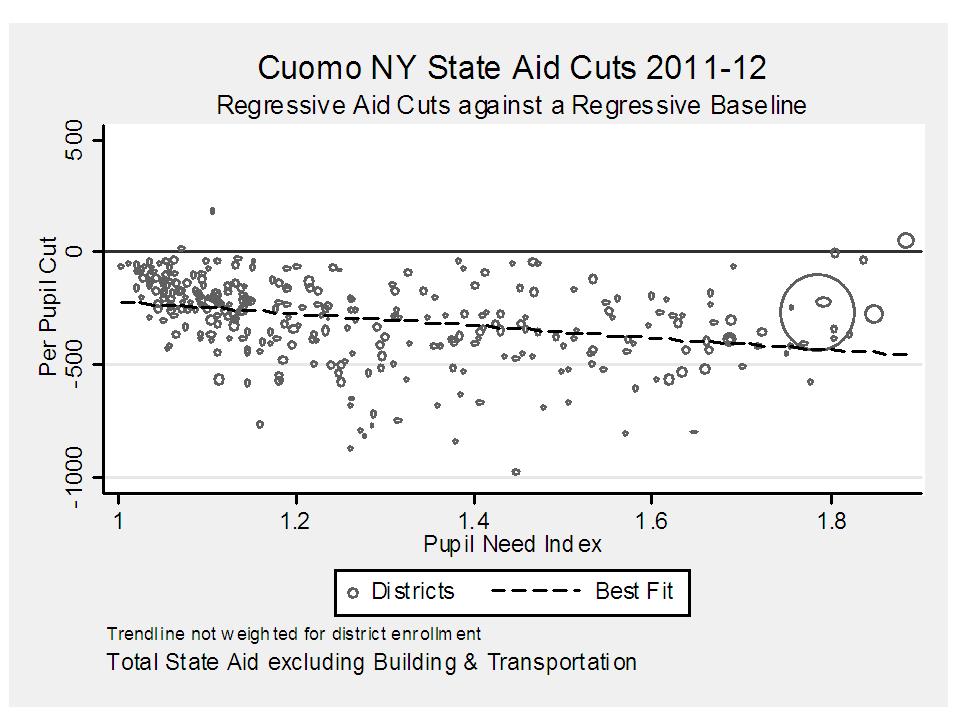

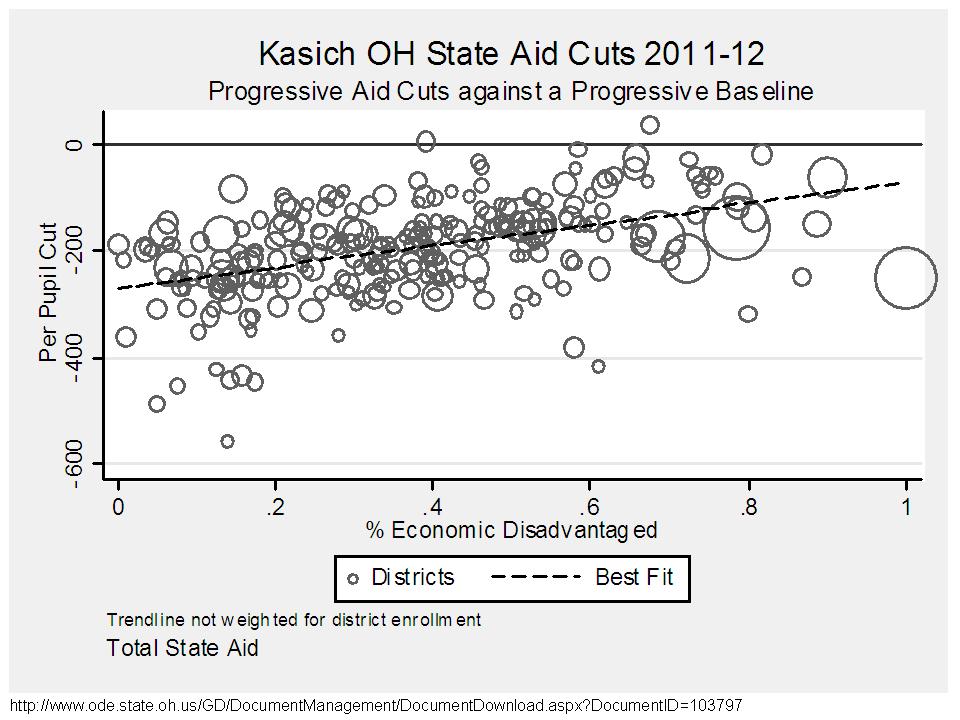

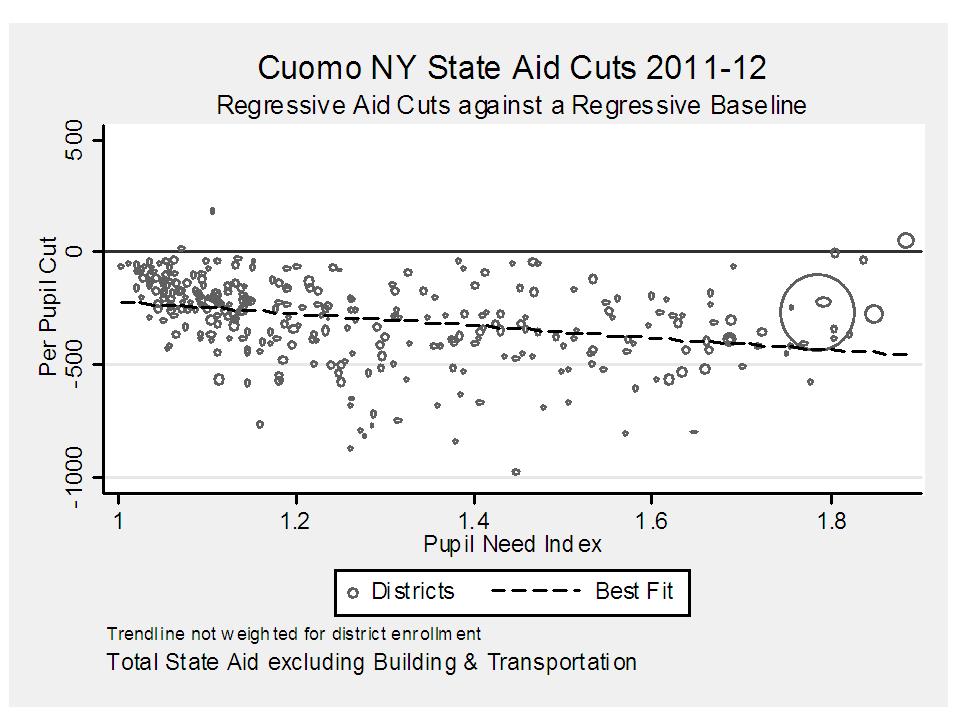

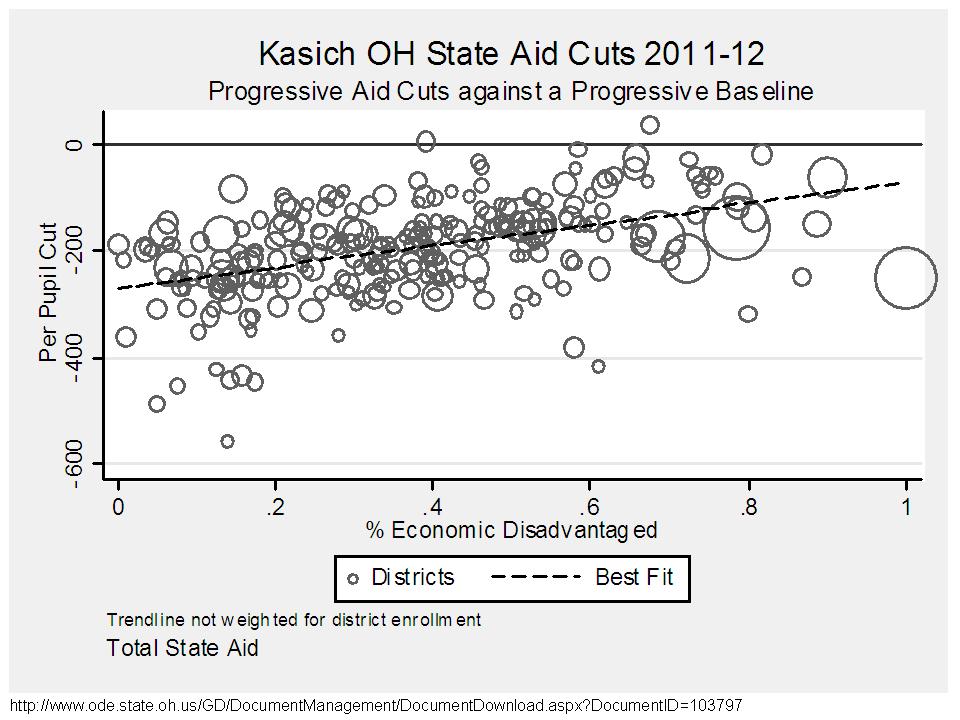

Below are the district by district distributions of per pupil aid changes with respect to student need measures, for Ohio, NY and PA.

In New York, the aid cuts per pupil ARE REGRESSIVE ON THEIR FACE, and fall into the first and worst category above. Higher need districts will have their aid cut nearly $500 per pupil, while many very low need districts see negligible cuts per pupil.
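One simple way to check whether cuts are regressive or progressive on their face is to look at the slope of per pupil aid change against a need measure. The sketch below uses a plain least-squares slope on invented data; the district values, the `classify` helper, and its tolerance are all hypothetical, and this is not necessarily how the graphs in this post were produced.

```python
def ols_slope(xs, ys):
    """Least-squares slope of ys on xs (simple bivariate regression)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def classify(slope, tolerance=1.0):
    """Label a distribution of aid changes; the tolerance is an arbitrary choice."""
    if slope < -tolerance:
        return "regressive on its face"  # needier districts lose more
    if slope > tolerance:
        return "progressive on its face"  # needier districts lose less
    return "flat on its face"


# Invented districts: poverty share (0-1) and per pupil aid change (negative = cut).
poverty_rate = [0.05, 0.20, 0.40, 0.60]
aid_change_pp = [-20.0, -150.0, -320.0, -480.0]

slope = ols_slope(poverty_rate, aid_change_pp)
label = classify(slope)
print(f"slope = {slope:.1f} dollars per unit of poverty share: {label}")
```

A negative slope means aid changes get more negative as poverty rises, which is the New York pattern described above; a positive slope would indicate cuts that shrink as need increases.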

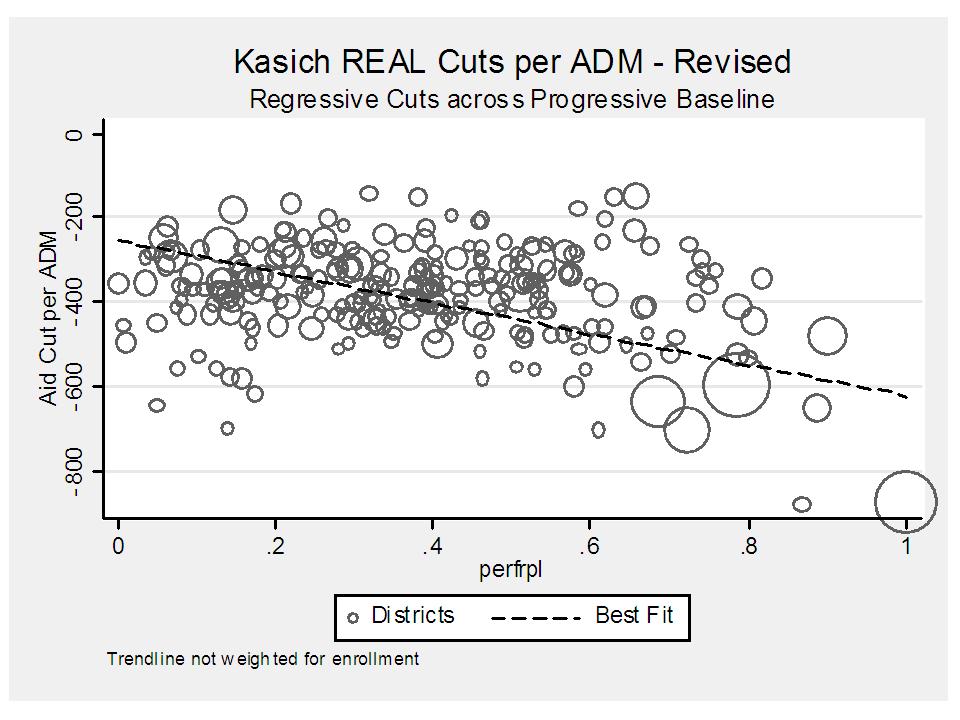

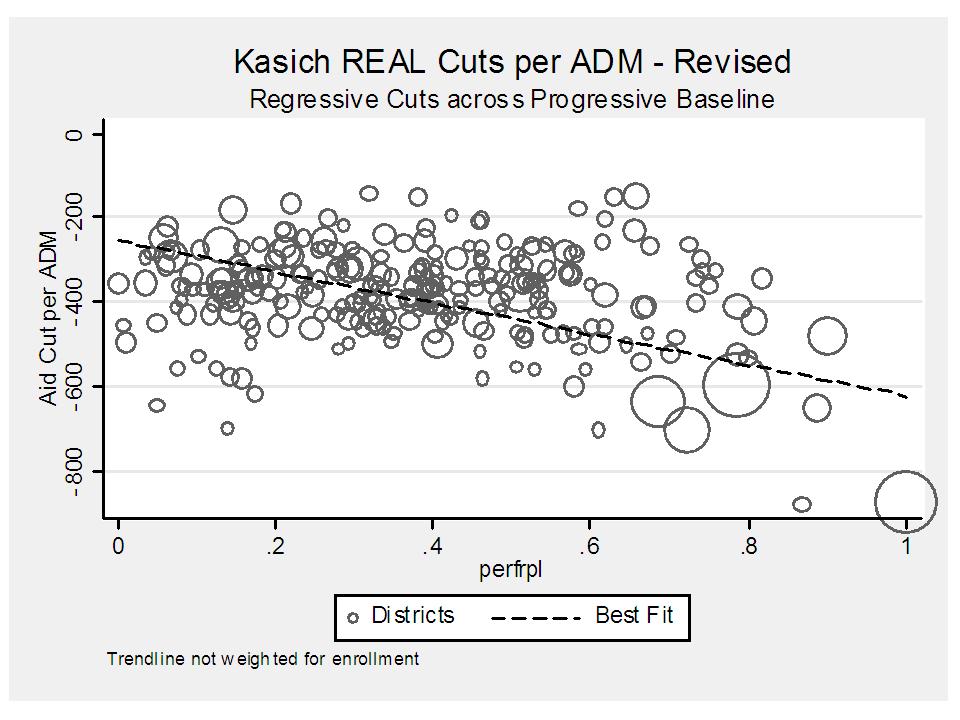

AND NOW FOR THE REAL KASICH CUTS. IF THE CORBETT CUTS WERE SUSPECT AS REPORTED IT ONLY MADE SENSE TO TAKE A SECOND LOOK AT THE KASICH GAME. AND THE PLAYBOOK IS THE SAME!

The playbook is to ignore the federal stabilization money that was intended to be replaced with state aid as it disappeared. Well, here are Kasich’s REAL regressive cuts when comparing 2012 to 2011, with 2011 including the stabilization money:

Ohio’s cuts are particularly interesting. On a per pupil basis, the cuts are systematically smaller in higher poverty districts. The cuts are actually larger in lower need and higher wealth districts (but for a few outliers). These cuts are, on their face, progressive, and will likely lead to a relatively flat distribution of overall per pupil budget changes. I’ve not yet run the second year of aid changes though.

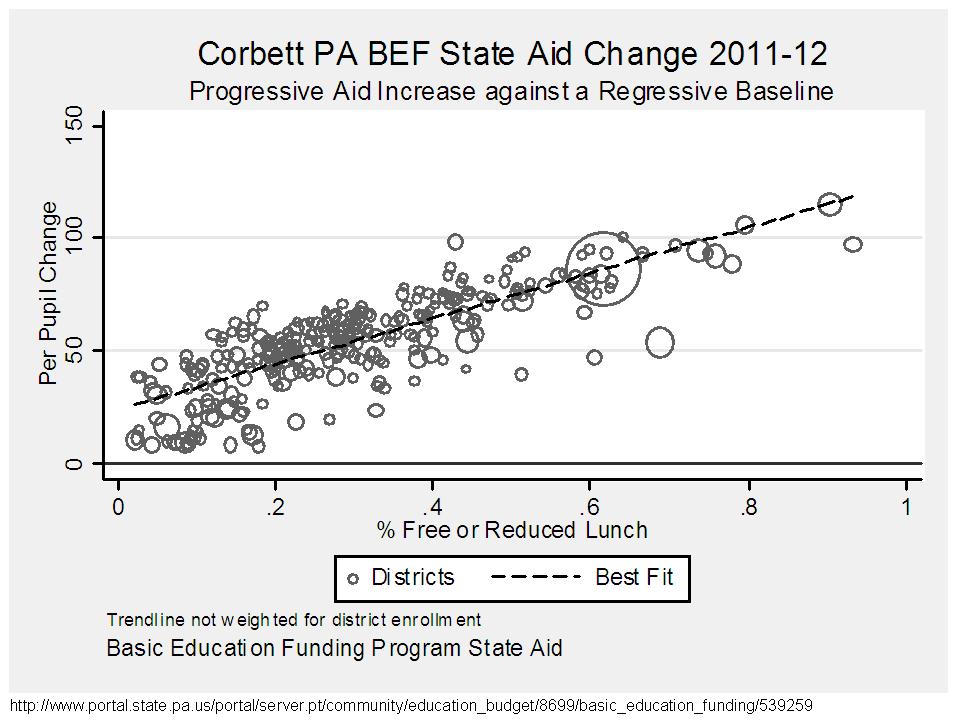

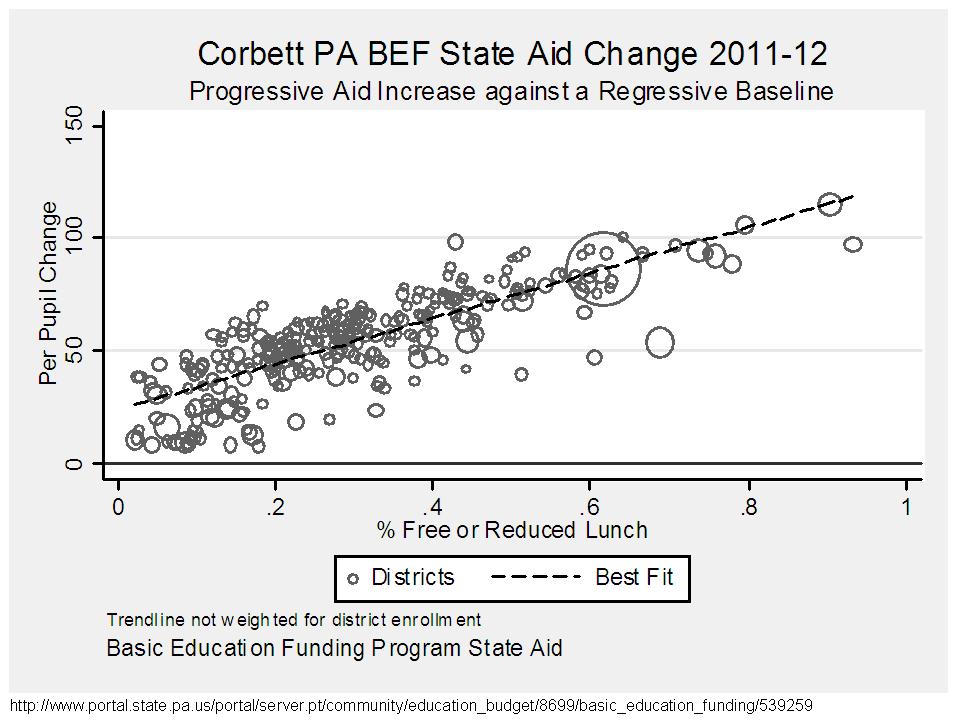

As reported on the PA state portal web site, Basic Education Funding is set to increase by about 2% across PA districts. I’ve certainly heard news of cuts, but the data and official documentation at this point do not show those cuts. The overall state budget data do show huge cuts to other areas of the budget. But BEF funding receives a small boost and SEF (special education funding) is frozen. Because the boost is proportionate to 2010-11 BEF funding, which is equalized, the boost is larger in higher need districts. Nonetheless, the boost is quite small.

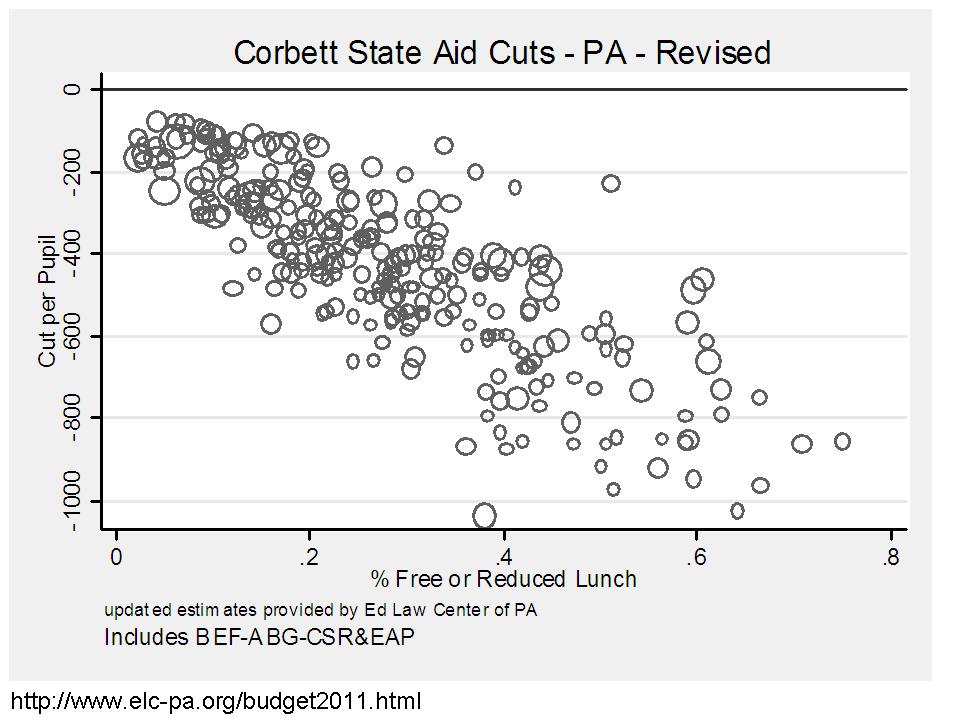

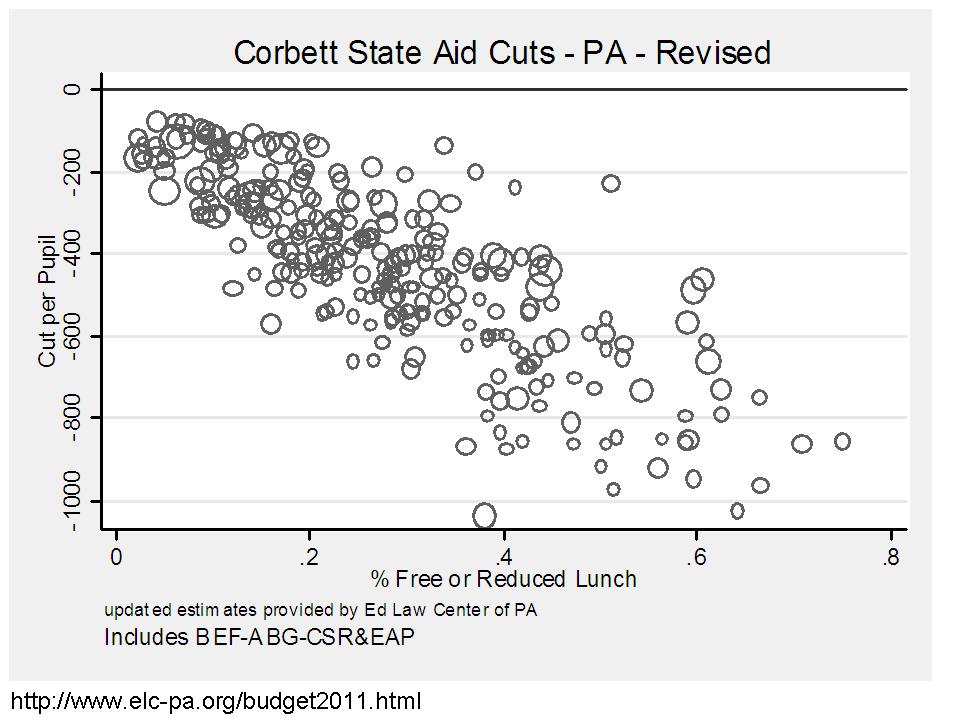

NOW FOR THE REAL PENNSYLVANIA CORBETT CUTS, COURTESY OF THE ED LAW CENTER OF PA:

Why the big difference? Well, I should have caught this one. Indeed, the first graph above, which shows a 2% increase over the prior year, is in fact a 2% increase over the prior year STATE + FED JOBS money portions of BEF. What they failed to mention is that they chose not to replace the FEDERAL STABILIZATION FUNDING. In 2010-11:

BEF = STATE AID + SFSF + JOBS

The idea was that as SFSF disappeared, state aid would be raised to replace that money, or else districts would face substantial budget holes. Corbett’s 2012 funding is:

Corbett BEF Aid = 1.02 x (STATE AID 2010-11 + JOBS 2010-11)

That leaves out the other $650 million or so that was also in BEF (from SFSF) in the prior year, and was distributed through the equalized formula.
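The two formulas above can be worked through for a single district to show how a reported 2% increase can be an actual cut. The per pupil amounts below are invented for illustration and do not correspond to any real PA district; only the structure of the two formulas comes from the text above.

```python
def bef_2010_11(state_aid, sfsf, jobs):
    """Prior-year BEF: state aid plus both federal pieces."""
    return state_aid + sfsf + jobs


def corbett_bef(state_aid, jobs, growth=0.02):
    """Proposed BEF: 2% growth on state aid plus JOBS money, with SFSF dropped."""
    return (1.0 + growth) * (state_aid + jobs)


# Hypothetical per pupil amounts for one district (not real data).
state_aid, sfsf, jobs = 3000.0, 400.0, 200.0

prior = bef_2010_11(state_aid, sfsf, jobs)
proposed = corbett_bef(state_aid, jobs)

# Change as reported: measured against a base that excludes SFSF.
reported_change = proposed / (state_aid + jobs) - 1.0
# Change as districts experience it: measured against the full prior-year BEF.
actual_change = proposed / prior - 1.0

print(f"prior BEF: {prior:.0f}, proposed BEF: {proposed:.0f}")
print(f"reported change: {reported_change:+.1%}, actual change: {actual_change:+.1%}")
```

Because SFSF went out through the equalized formula, districts that received more of it per pupil would see a larger gap between the reported and actual change.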

PA ELC Spreadsheet here!

So, the winner of the worst cuts award in ROUND 1 – the battle of Corbett, Kasich, Cuomo – is Cuomo. Cuomo’s cuts are large and Cuomo’s cuts are regressive on their face! That’s one heck of an accomplishment!

SO, AS IT TURNS OUT BOTH KASICH AND CORBETT ACTUALLY DO MARGINALLY WORSE THAN CUOMO.

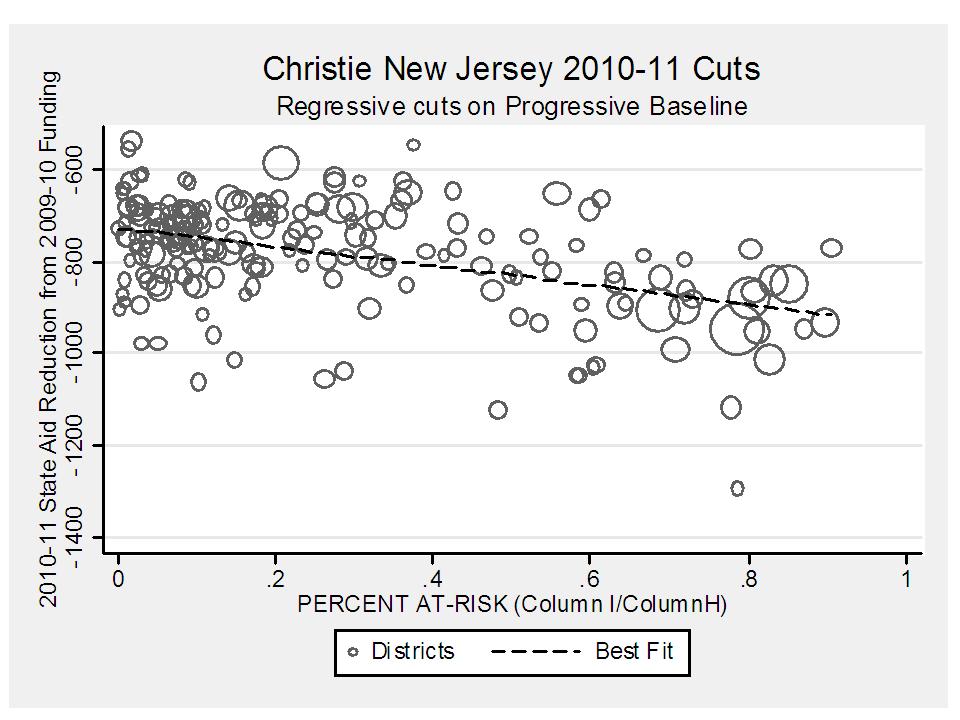

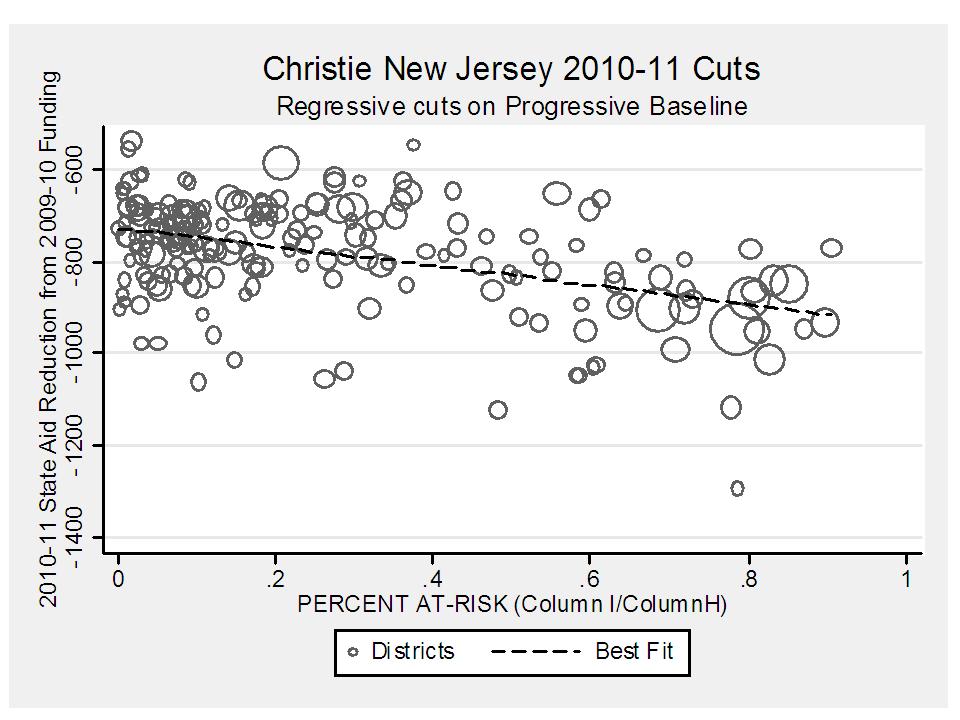

BONUS GRAPH – CHRISTIE’S Prior Year New Jersey Cuts