I’ve previously written about the growing number of rigorous peer-reviewed and other studies that tend to show positive effects of state school finance reforms. But what about all of those accounts to the contrary, the accounts that seem so dominant in policy conversations on the topic? What is that vast body of research suggesting that school finance reforms don’t matter? That it’s all money down the rat-hole? That, in fact, judicial orders to increase funding for schools actually hurt children?

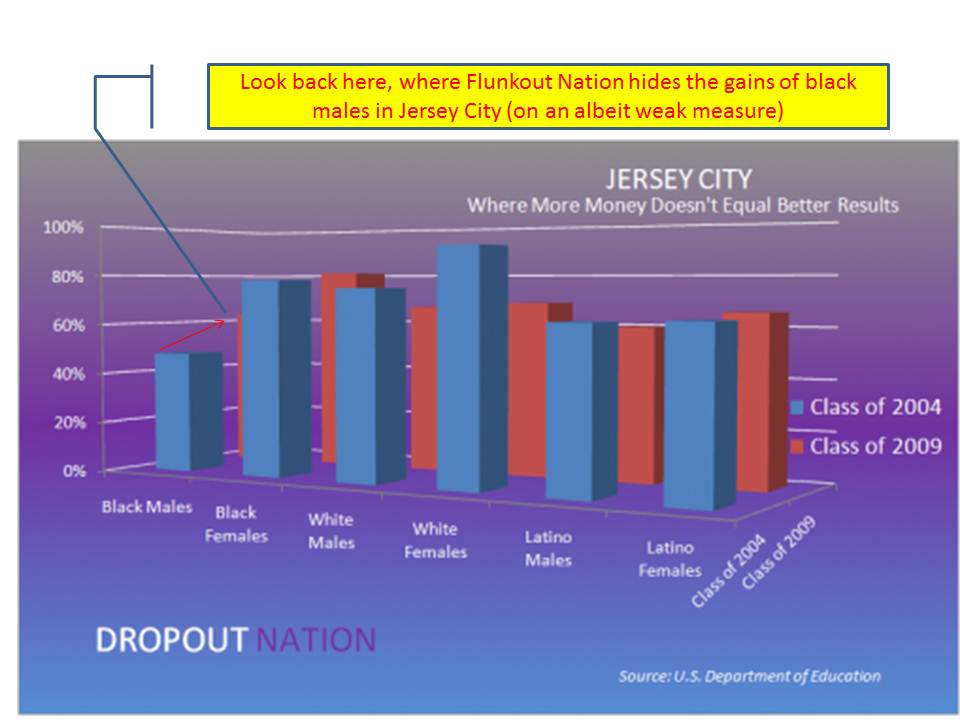

Beyond utterly absurd graphs and tables like Bill Gates’ “turn the curve upside down” graph, and Dropout Nation’s even more absurd graph, there have been a handful of recent studies and entire books dedicated to proving that court-ordered school finance reforms simply have no positive effect on children. Some do appear in peer-reviewed journals, despite egregious (and really obvious) methodological flaws. And yes, some really do go so far as to claim that court-ordered school finance reforms “harm our children.”[1]

The premise that additional funding for schools, often leveraged toward class size reduction, additional course offerings or increased teacher salaries, causes harm to children is, on its face, absurd. Further, no rigorous empirical study of which I am aware actually validates the claim that increased funding for schools, in general or targeted to specific populations, has led to any substantive, measured reduction in student outcomes or other “harm.”

But questions regarding measurement and validation of positive effects versus non-effects are complex. That said, while designing good research analyses can be quite complex, the flaws of bad analyses are often absurdly simple. As simple as asking three questions: a) whether the reform in question actually happened? b) when it happened and for how long? and c) who was to be affected by the reform?

- Whether: Many analyses purport to show that school funding reforms had no positive effects on outcomes, but fail to measure whether substantive school funding reforms were ever implemented or whether they were sustained. Studies of this type often simply look at student outcome data in the years following a school funding related ruling, creating crude classifications of who won or lost the ruling. Yet the question at hand is not whether a ruling in and of itself leads to changes in outcomes, but whether reforms implemented in response to a ruling do. One must, at the very least, measure whether reform actually happened!

- When: Many analyses simply pick two end points, or a handful of points of student achievement, to cast as a window, or envelope, around a supposed occurrence of school finance reform or court order, often combining this strategy with the first (never measuring the reform itself). For example, one might take NAEP scores from 1992 and 2007 for a handful of states, and indicate that sometime in that window, each state implemented a reform or had a court order. Then one might compare the changes in outcomes from 1992 to 2007 for those states to those of other states that supposedly did not implement reforms or have court orders. This, of course, provides no guarantee that states in the non-reform group (a non-controlled control group?) didn’t actually do something more substantive than the reform group. But, that aside, casting a large time window, and the same time window across states, ignores the fact that reforms may come and go within that window, or may be sufficiently scaled up only during the latter portion of the window. It makes little sense, for example, to evaluate the effects of New Jersey’s school finance reforms, which experienced their most significant scaling up between 1998 and 2003, by also including six years prior to any scaling up of reform. Similarly, some states that aggressively implemented reforms at the beginning of the window may have seen those reforms fade within the first few years. When matters!

- Who: Many analyses also address imprecisely the question of “who” is expected to benefit from the reforms. Back to the “whether” question, if there was no reform, then the answer to this question is no-one. No-one is expected to benefit from a reform that didn’t ever happen. Further, no-one is expected to benefit today from a reform that may happen tomorrow, nor is it likely that individuals will benefit twenty years from now from a reform that is implemented this year and gone within the next three years. Beyond these concerns, it is also relevant to consider whether the school finance reform in question, if and when it did happen, benefited specific school districts or specific children. Reforms that benefit poorly funded school districts may not also uniformly benefit low income children, who may be distributed, albeit unevenly, across well-funded and poorly-funded districts. Not all achievement data are organized for appropriate alignment with funding reform data. And if they are not, we cannot know whether we are measuring the outcomes of those we would actually expect to benefit.
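The “when” problem above is, at bottom, simple arithmetic. The sketch below uses made-up numbers (not actual NAEP data) and assumes, purely for illustration, that scores rise 2 points per year during a 1998 to 2003 scale-up and are flat otherwise. It compares the average annual gain measured over the reform years with the gain measured over the full 1992 to 2007 window.

```python
# Hypothetical illustration only: these are NOT actual NAEP scores.
def average_annual_gain(gains_by_year, start, end):
    """Mean yearly score gain over the inclusive span of years [start, end]."""
    years = range(start, end + 1)
    return sum(gains_by_year[y] for y in years) / len(years)

# Assumed pattern: +2 points in each year of the 1998-2003 scale-up, 0 otherwise.
# Each entry is the change from the prior year, so 1993 is the first gain after 1992.
gains = {year: (2.0 if 1998 <= year <= 2003 else 0.0) for year in range(1993, 2008)}

reform_window = average_annual_gain(gains, 1998, 2003)  # reform years only
wide_window = average_annual_gain(gains, 1993, 2007)    # full 1992-2007 window

print(f"Average annual gain, reform years: {reform_window:.1f}")
print(f"Average annual gain, wide window:  {wide_window:.1f}")
```

Under these assumed numbers, the same reform looks less than half as effective per year when the pre-reform and post-reform years are averaged in, even though nothing about the reform itself has changed.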

In 2011, Kevin G. Welner of the University of Colorado and I published an extensive review of the good, the bad and the ugly of research on the effectiveness of state school finance reforms.[2] In our article we identify several specific examples of empirical studies claiming to find (not just “find” but prove outright) that school funding reforms and judicial orders simply don’t matter. That is, they don’t have any positive effects on measured student outcomes. But, as noted above, many of those studies suffer from basic flaws of logic in their research design, which center on questions of whether, when and who.

As one example of a whether problem, consider an article published by Greene and Trivitt (2008). Greene and Trivitt claim to have found “no evidence that court ordered school spending improves student achievement” (p. 224). The problem is that the authors never actually measured “spending” and instead only measured whether there had been a court order. Kevin Welner and I explain:

The Greene and Trivitt article, published in a special issue of the Peabody Journal of Education, proclaimed that the authors had empirically estimated “the effect of judicial intervention on student achievement using standardized test scores and graduation rates in 48 states from 1992 to 2005” and had found “no evidence that court ordered school spending improves student achievement” (p. 224, emphasis added). The authors claim to have tested for a direct link between judicial orders regarding state school funding systems and any changes in the level or distribution of student outcomes that are statistically associated with those orders. That is, the authors asked whether a declaration of unconstitutionality (nominally on either equity or adequacy grounds) alone is sufficient to induce change in student outcomes. The study simply offers a rough indication of whether the court order itself, not “court-ordered school spending,” affects outcomes. It certainly includes no direct test of the effects of any spending reforms that might have been implemented in response to one or more of the court orders.

Kevin Welner and I also raise questions regarding “who” would have benefited from specific reforms and “when” specific reforms were implemented and/or faded out. In our article, much of our attention regarding who and when questions focused on Chapter 6, “The Effectiveness of Judicial Remedies,” of Eric Hanushek and Alfred Lindseth’s book Schoolhouses, Courthouses and Statehouses.[3] A downloadable version of the same graphs and arguments can be found here: http://edpro.stanford.edu/Hanushek/admin/pages/files/uploads/06_EduO_Hanushek_g.pdf. Specifically, Hanushek and Lindseth identify four states, Kentucky, Massachusetts, New Jersey and Wyoming, as states that have, by order of their court systems, (supposedly) infused large sums of money into school finance reforms over the past 20 years. Given this simple classification, Hanushek and Lindseth take the National Assessment of Educational Progress (NAEP) scores for these states, including scores for low income children and racial subgroups, and plot those scores against national averages from 1992 to 2007.

No statistical tests are performed, but graphs are presented to illustrate that there would appear to be no difference in growth of scores in these states relative to national averages. Of course, there is also no measure of whether and how funding changed in these states compared to others. Additionally, there is no consideration of the fact that in Wyoming, for example, per pupil spending increased largely as a function of enrollment decline and less as a function of infused resources (the denominator shrank more than the numerator grew).
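The denominator point can be made concrete with a small sketch. The dollar and enrollment figures below are invented for illustration and are not Wyoming’s actual figures; the only claim is the arithmetic: per pupil spending can rise sharply even when total spending barely moves, if enrollment falls.

```python
# Hypothetical figures for illustration; NOT actual Wyoming data.
def per_pupil(total_spending, enrollment):
    """Per pupil spending is simply total spending divided by enrollment."""
    return total_spending / enrollment

before = per_pupil(1_000_000_000, 100_000)  # $10,000 per pupil
after = per_pupil(1_050_000_000, 87_500)    # $12,000 per pupil

total_growth = 1_050_000_000 / 1_000_000_000 - 1  # growth in the numerator
enrollment_change = 87_500 / 100_000 - 1          # change in the denominator
per_pupil_growth = after / before - 1

print(f"Total spending growth:     {total_growth:.1%}")
print(f"Enrollment change:         {enrollment_change:.1%}")
print(f"Per pupil spending growth: {per_pupil_growth:.1%}")
```

In this made-up case a 5% increase in total dollars shows up as a 20% increase in per pupil spending, because enrollment dropped by 12.5%. A graph of per pupil spending alone would badly overstate how much new money was actually infused.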

Setting these other major concerns aside, which alone undermine entirely the thesis of Hanushek and Lindseth’s chapter, Kevin Welner and I explain the problem of using a wide time window to evaluate school finance reforms which may ebb and flow throughout that window:

As noted earlier, the appropriate outcome measure also depends on identifying the appropriate time frame for linking reforms to outcomes. For example, a researcher would be careless if he or she merely analyzed average gains for a group of states that implemented reforms over an arbitrary set of years. If a state included in a study looking at years 1992 and 2007 had implemented its most substantial reforms from 1998 to 2003, the overall average gains would be watered down by the six pre-reform years – even assuming that the reforms had immediate effects (showing up in 1998, in this example). And, as noted earlier, such an “open window” approach may be particularly problematic for evaluating litigation-induced reforms, given the inequitable and inadequate pre-reform conditions that likely led to the litigation and judicial decree.

There also exist logical, identifiable, time-lagged effects for specific reforms. For example, the post-1998 reforms in New Jersey included implementation of universal pre-school in plaintiff districts. Assuming the first relatively large cohorts of preschoolers passed through in the first few years of those reforms, a researcher could not expect to see resulting differences in 3rd or 4th grade assessment scores until four to five years later.
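The lag described above is easy to work out explicitly. The sketch below assumes a simplified grade progression (one year of pre-school at age 4, then kindergarten, then one grade per year with no retention); the function name and the example entry year are hypothetical.

```python
# Simplified, assumed progression: one year of pre-K, then K, then grades 1, 2, 3, ...
def years_until_grade(tested_grade):
    """School years between a cohort's pre-K year and its year in `tested_grade`."""
    # +1 for kindergarten, then one more year for each grade up to the tested one.
    return 1 + tested_grade

def school_year_in_grade(prek_start_year, tested_grade):
    """Fall start year of the school year in which the cohort reaches `tested_grade`."""
    return prek_start_year + years_until_grade(tested_grade)

# Example: a cohort assumed to enter pre-school in fall 1999.
for grade in (3, 4):
    print(f"Grade {grade}: {years_until_grade(grade)} years later, "
          f"school year starting {school_year_in_grade(1999, grade)}")
```

Under these assumptions, a pre-school expansion cannot show up in 3rd or 4th grade scores until four to five years after the first expanded cohort enrolls, so any outcome series that ends before then has no chance of detecting it.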

Further, as noted previously, simply disaggregating NAEP scores by race or low income status does not guarantee by any stretch that one has identified the population expected to benefit from specific reforms. That is, race and poverty subgroups in the NAEP sample are woefully imprecise proxies for students attending districts most likely to have received additional resources. Kevin Welner and I explain:

This need to disaggregate outcomes according to distributional effects of school funding reforms deserves particular emphasis since it severely limits the use of the National Assessment of Educational Progress – the approach used in the recent book by Hanushek and Lindseth. The limitation arises as a result of the matrix sampling design used for NAEP. While accurate when aggregated for all students across states or even large districts, NAEP scores can only be disaggregated by a constrained set of student characteristics, and those characteristics may not be well-aligned to the district-level distribution of the students of interest in a given study.

Consider, for example, New Jersey – one of the four states analyzed in the recent book. It might initially seem logical to use NAEP scores to evaluate the effectiveness of New Jersey’s Abbott litigation, to examine the average performance trends of economically disadvantaged children. However, only about half (54%) of New Jersey children who receive free or reduced-price lunch – a cutoff set at 185% of the poverty threshold – attend the Abbott districts. The other half do not, meaning that they were not direct beneficiaries of the Abbott remedies. While effects of the Abbott reforms might, and likely should, be seen for economically disadvantaged children given that sizeable shares are served in Abbott districts, the limited overlap between economic disadvantage and Abbott districts makes NAEP an exceptionally crude measurement instrument for the effects of the court-ordered reform.
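A rough way to see how much this imprecise overlap matters: if only a share of a subgroup is actually exposed to a reform, the subgroup-wide average effect is a weighted average of the effect on exposed and unexposed students. The sketch below uses the 54% figure quoted above; the 10-point effect is a made-up number, and the default of zero effect on students outside the Abbott districts is an assumption for illustration.

```python
def subgroup_average_effect(share_exposed, effect_on_exposed, effect_on_unexposed=0.0):
    """Average effect across a subgroup when only part of it is exposed to the reform."""
    return (share_exposed * effect_on_exposed
            + (1 - share_exposed) * effect_on_unexposed)

share_in_abbott = 0.54      # share of free/reduced-price lunch children in Abbott districts
hypothetical_effect = 10.0  # assumed score gain for exposed children (illustrative only)

observed = subgroup_average_effect(share_in_abbott, hypothetical_effect)
print(f"Effect on exposed children:       {hypothetical_effect:.1f} points")
print(f"Effect seen in statewide subgroup: {observed:.1f} points")
```

With these assumptions, a true 10-point gain for children in the affected districts would appear as a gain of roughly 5.4 points in the statewide low income subgroup, before accounting for sampling noise.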

Hanushek and Lindseth are not alone in making bold assertions based on insufficient analyses, though Chapter 6 of their recent book goes to new lengths in this regard. Kevin Welner and I address numerous comparably problematic studies with more subtle whether, who and when problems, including the Greene and Trivitt study noted above. Another example is a study by Florence Neymotin of Kansas State University, which purports to find that the substantial infusion of funding into Kansas school districts which supposedly occurred between 1997 and 2006 as a function of the Montoy rulings never led to substantive changes in student outcomes. I blogged about this study when it was first reported. But, the most relevant court orders in Montoy did not come until January of 2005, June of 2005 and eventually July of 2006. Remedy legislation may be argued to have begun as early as 2005-06, but primarily from 2006-07 on, before its dismantling from 2008 on. Regarding the Neymotin study, Kevin Welner and I explain:

A comparable weakness undermines a 2009 report written by a Kansas State University economics professor, which contends that judicially mandated school finance reform in Kansas failed to improve student outcomes from 1997 to 2006 (Neymotin, 2009). This report was particularly egregious in that it did not acknowledge that the key judicial mandate was issued in 2005 and thus had little or no effect on the level or distribution of resources across Kansas schools until 2007-08. In fact, funding for Kansas schools had fallen behind and become less equitable from 1997 through 2005. Consequently, an article purporting to measure the effects of a mandate for increased and more equitable spending was actually, in a very real way, measuring the opposite.[4]

Kevin Welner and I also review several studies applying more rigorous and appropriate methods for evaluating the influence of state school finance reforms. I have discussed those studies previously here. On balance, it is safe to say that a significant body of rigorous empirical literature, conscious of whether, who and when concerns, validates that state school finance reforms can have substantive positive effects on student outcomes including reduction of outcome disparities or increased overall outcome level.

Further, it is even safer to say that analyses provided in sources like the book chapter by Hanushek and Lindseth (2009), or the research articles by Neymotin (2009) and Greene and Trivitt (2008), provide no credible evidence to the contrary, due to significant methodological omissions. Finally, even the boldest, most negative publications regarding state school finance reforms provide no support for the contention that school finance reforms actually “harm our children,” as indicated in the title of a 2006 volume by Eric Hanushek.

Sometimes, even when a research report or article seems really complicated, relatively simple questions like when, whether and who allow the less geeky reader to quickly evaluate and possibly debunk the study entirely. Sometimes, the errors of reasoning regarding when, whether and who, are so absurd that it’s hard to believe that anyone would actually present such an absurd analysis. But these days, I’m rarely shocked. My personal favorite “when” error remains the Reason Foundation’s claim that numerous current reforms positively affected past results! http://nepc.colorado.edu/bunkum/2010/time-machine-award. It just never ends!

Further reading:

B. Baker, K.G. Welner (2011) Do School Finance Reforms Matter and How Can We Tell. Teachers College Record. http://www.tcrecord.org/content.asp?contentid=16106

Card, D., and Payne, A. A. (2002). School Finance Reform, the Distribution of School Spending, and the Distribution of Student Test Scores. Journal of Public Economics, 83(1), 49-82.

Roy, J. (2003). Impact of School Finance Reform on Resource Equalization and Academic Performance: Evidence from Michigan. Princeton University, Education Research Section Working Paper No. 8. Retrieved October 23, 2009 from http://papers.ssrn.com/sol3/papers.cfm?abstract_id=630121 (Forthcoming in Education Finance and Policy.)

Papke, L. (2005). The effects of spending on test pass rates: evidence from Michigan. Journal of Public Economics, 89(5-6). 821-839.

Downes, T. A., Zabel, J., and Ansel, D. (2009). Incomplete Grade: Massachusetts Education Reform at 15. Boston, MA. MassINC.

Guryan, J. (2003). Does Money Matter? Estimates from Education Finance Reform in Massachusetts. Working Paper No. 8269. Cambridge, MA: National Bureau of Economic Research.

Deke, J. (2003). A study of the impact of public school spending on postsecondary educational attainment using statewide school district refinancing in Kansas, Economics of Education Review, 22(3), 275-284.

Downes, T. A. (2004). School Finance Reform and School Quality: Lessons from Vermont. In Yinger, J. (ed), Helping Children Left Behind: State Aid and the Pursuit of Educational Equity. Cambridge, MA: MIT Press.

Resch, A. M. (2008). Three Essays on Resources in Education (dissertation). Ann Arbor: University of Michigan, Department of Economics. Retrieved October 28, 2009, from http://deepblue.lib.umich.edu/bitstream/2027.42/61592/1/aresch_1.pdf

Goertz, M., and Weiss, M. (2009). Assessing Success in School Finance Litigation: The Case of New Jersey. New York City: The Campaign for Educational Equity, Teachers College, Columbia University.

[1] See, for example: E.A. Hanushek (2006) Courting Failure: How School Finance Lawsuits Exploit Judges’ Good Intentions and Harm Our Children. Hoover Institution Press. Reviewed here: http://www.tcrecord.org/Content.asp?ContentId=13382

[2] Baker, B.D., Welner, K. (2011) School Finance and Courts: Does Reform Matter, and How Can We Tell? Teachers College Record 113 (11) p. –

[3] Hanushek, E. A., and Lindseth, A. (2009). Schoolhouses, Courthouses and Statehouses. Princeton, N.J.: Princeton University Press.

[4] B. Baker, K.G. Welner (2011) Do School Finance Reforms Matter and How Can We Tell. Teachers College Record. http://www.tcrecord.org/content.asp?contentid=16106