Any good study of the relative productivity and efficiency of charter schools compared to other schools (if such comparisons were worthwhile to begin with) would require precise estimates of comparable financial inputs and outcomes as well as the conditions under which those inputs are expected to yield outcomes.

The University of Arkansas Department of Education Reform has just produced a follow-up to their previous analysis, in which they proclaimed boldly that charter schools are desperately, uniformly, everywhere and anywhere deprived of thousands of dollars per pupil when compared with their bloated, overfunded public district counterparts (yes… that’s a bit of a mischaracterization of their claims… but closer than their bizarre characterization of my critique).

I wrote a critique of that report pointing out how they had made numerous bogus assumptions and ill-conceived, technically inept comparisons which in most cases dramatically overstated their predetermined, handsomely paid for, but shamelessly wrong claims.

That critique is here: http://nepc.colorado.edu/files/ttruarkcharterfunding.pdf

The previous report proclaiming dreadful underfunding of charter schools sets up the low-hanging-fruit opportunity to point out that if charter schools have close to the same test scores as district schools – and do so for sooooo much less money – they must therefore be far more efficient. And thus, the nifty new follow-up report on charter school productivity – or on how it’s plainly obvious that policymakers get far more for the buck from charters than from those bloated, inefficient public bureaucracies – district schools.

Of course, to be able to use the completely wrong estimates from their previous report without any thoughtful revision, they must first dispose of my critique of that report – or pretend to.

In their new report comparing the relative productivity and efficiency of charter schools, UARK researchers assert that my previous critique of their funding differentials was flawed. They characterize my critique as focusing specifically – and exclusively – on differences in percent free lunch population, providing the following rebuttal:

The main conclusion of our charter school revenue study was that, on average, charter schools nationally are provided with $3,814 less in revenue per-pupil than are traditional public schools. Critics of the report, including Gary Miron and Bruce D. Baker, claimed that the charter school funding gap we reported is largely due to charter schools enrolling fewer disadvantaged students than TPS.7 Miron stated that, “Special education and student support services explains most of the difference in funding.”8 Baker specifically claimed that charter schools enroll fewer students who qualify for free lunch and therefore suffer from deep poverty, compared to TPS.9

We have evidence with which to test these claims that the charter school funding gap is due to charters under-enrolling disadvantaged students, and that the gap would disappear if charters simply enrolled more special education students. To the first point, Table 1 includes aggregate data about the student populations served by the charter and TPS sectors for the 31 states in our revenue study. The states are sorted by the extent to which their charter sector enrolls a disproportionate percentage of free lunch students compared to their TPS sector. A majority of the states in our study (16 out of 31) have charter sectors that enroll a higher percentage of free lunch students than their TPS sector – directly contradicting Baker’s claim. Hawaii charters enroll the same percentage of free lunch students as do Hawaii TPS. For a minority of the states in our study (14 out of 31), their charter school sector enrolls a lower percentage of free lunch students than does their TPS sector.

Here’s the problem with this characterization. My critique was by no means centered on an assumption that charter schools serve fewer free lunch pupils than other schools statewide and that the gap would disappear if populations were more comparable.

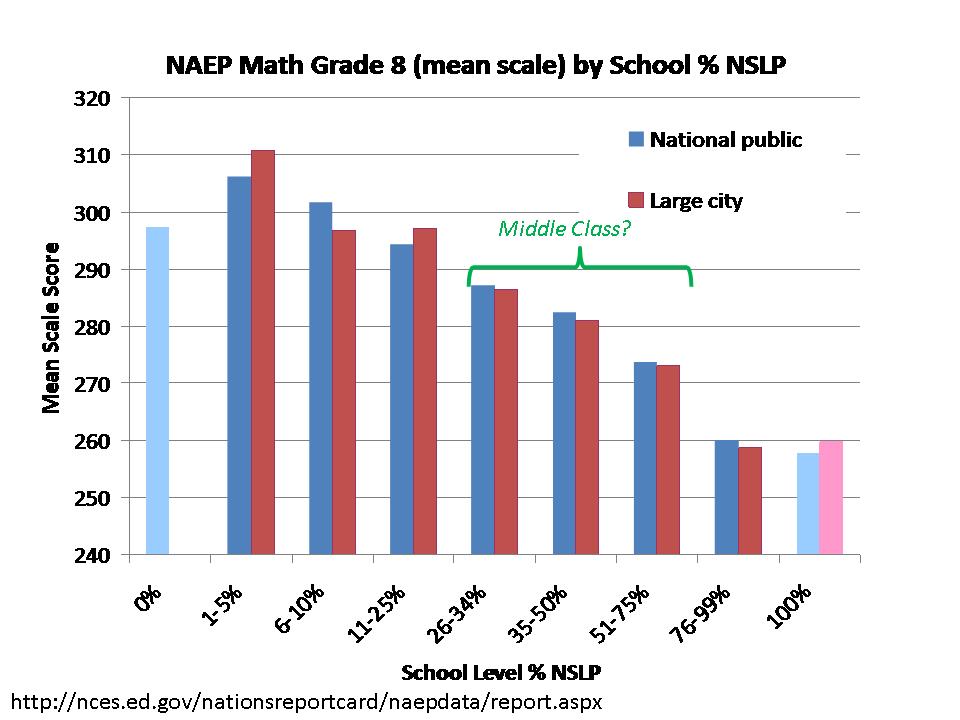

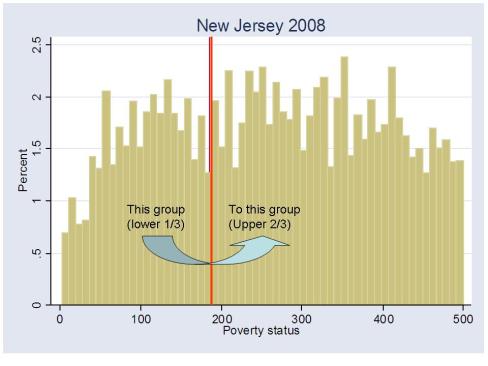

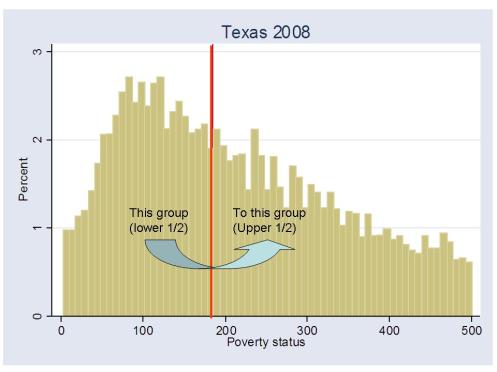

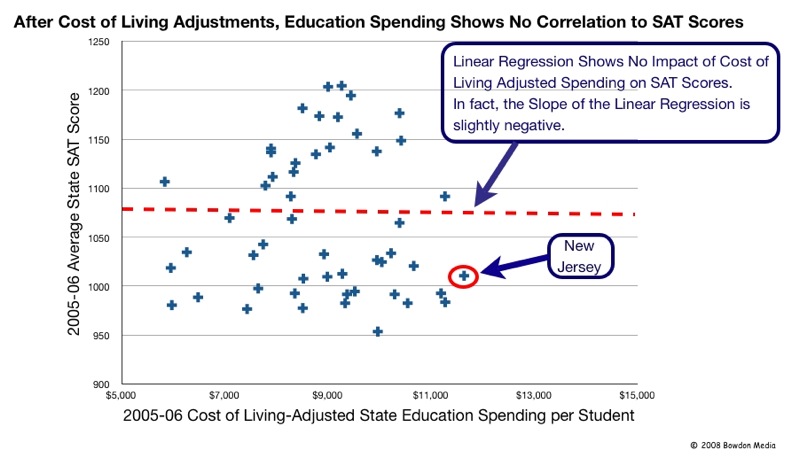

My critique pointed out, among other things, that making comparisons of charter schools to district schools statewide is misguided – deceitful in fact. As I explained in my critique, it is far more relevant to compare against district schools IN THE SAME SETTING. I make such comparisons for New Jersey, Connecticut, Texas and New York with far greater detail and documentation than is provided in this new UARK report. So no – they provide no legitimate refutation of my more accurate, precise and thoroughly documented claims.
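To see why the statewide comparison can mislead, here is a minimal sketch in Python. Every number in it is hypothetical and chosen only for illustration; none comes from the UARK report or from the critique. The `ENROLLMENT` table, the two settings and the helper names are all assumptions. It shows a made-up state where charters enroll a lower share of free lunch students than the district schools in each setting, yet a higher share in the statewide aggregate, simply because the charters are concentrated in the higher-poverty setting.

```python
# Hypothetical counts for illustration only: (free-lunch pupils, total pupils).
# None of these figures come from the UARK report or from the critique.
ENROLLMENT = {
    "urban":    {"district": (16_000, 20_000), "charter": (6_300, 9_000)},
    "suburban": {"district": (16_000, 80_000), "charter": (100, 1_000)},
}


def share(free_lunch, total):
    """Fraction of pupils who qualify for free lunch."""
    return free_lunch / total


def statewide_share(sector):
    """Pool every setting together, as a statewide comparison does."""
    free = sum(counts[sector][0] for counts in ENROLLMENT.values())
    total = sum(counts[sector][1] for counts in ENROLLMENT.values())
    return share(free, total)


def same_setting_shares(sector):
    """Compute the share separately within each setting."""
    return {
        setting: share(*counts[sector])
        for setting, counts in ENROLLMENT.items()
    }


if __name__ == "__main__":
    print("statewide charter: ", statewide_share("charter"))
    print("statewide district:", statewide_share("district"))
    print("by setting charter: ", same_setting_shares("charter"))
    print("by setting district:", same_setting_shares("district"))
```

With these invented counts the statewide charter share is 64% against 32% for district schools, while within each setting the charter share is ten percentage points lower than the district share. A state can therefore land on the "charters enroll more free lunch students" side of a statewide table without that saying anything about the schools charters actually sit next to.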

But that’s only a small part of the puzzle. To reiterate and summarize my major points of critique:

As explained in this review, the study has one overarching flaw that invalidates all of its findings and conclusions. But the shortcomings of the report and its analyses also include several smaller but notable issues. First, it suffers from alarmingly vague documentation regarding data sources and methodologies, and many of the values reported cannot be verified by publicly available or adequately documented measures of district or charter school revenue. Second, the report constructs entirely inappropriate comparisons of student population characteristics—comparing, for example, charter school students to students statewide (using a poorly documented weighting scheme) rather than comparing charter school students to students actually served in nearby districts or with other schools or districts with more similar demographics. Similar issues occur with revenue comparisons.

Yet these problems pale in comparison to the one overarching flaw: the report’s complete lack of understanding of intergovernmental fiscal relationships, which results in the blatantly erroneous assignment of “revenues” between charters and district schools. As noted, the report purports to compare “all revenues” received by “district schools” and by “charter schools,” asserting that comparing expenditures would be too complex. A significant problem with this logic is that one entity’s expenditure is another’s revenue. More specifically, a district’s expenditure can be a charter’s revenue. Charter funding is in most states and districts received by pass-through from district funding, and districts often retain responsibility for direct provision of services to charter school students —a reality that the report entirely ignores when applying its resource-comparison framework. In only a handful of states are the majority of charter schools ostensibly fully fiscally independent of local public districts.3 This core problem invalidates all findings and conclusions of the study, and if left unaddressed would invalidate any subsequent “return on investment” comparisons.
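The pass-through problem can be made concrete with a small worked example. This is a sketch under stated assumptions, not a reconstruction of either report's method: all dollar figures and enrollments are hypothetical, and the constant and function names are invented for illustration. It assumes a single district that passes some of its revenue through to charters and also pays directly for some services to charter pupils.

```python
# Hypothetical figures for illustration only; none come from either report.
DISTRICT_ENROLLMENT = 10_000             # pupils in district-run schools
CHARTER_ENROLLMENT = 1_000               # pupils in charters hosted by the district
DISTRICT_TOTAL_REVENUE = 160_000_000     # everything the district receives
PASS_THROUGH_TO_CHARTERS = 10_000_000    # district expenditure = charter revenue
SERVICES_FOR_CHARTER_PUPILS = 2_000_000  # district-paid services for charter pupils
CHARTER_OTHER_REVENUE = 1_000_000        # charter revenue not routed via the district


def naive_per_pupil():
    """Count all district revenue as district-school revenue (double counting)."""
    district = DISTRICT_TOTAL_REVENUE / DISTRICT_ENROLLMENT
    charter = (PASS_THROUGH_TO_CHARTERS + CHARTER_OTHER_REVENUE) / CHARTER_ENROLLMENT
    return district, charter


def adjusted_per_pupil():
    """Reassign money the district spends on charter pupils to the charter side."""
    spent_on_charter_pupils = PASS_THROUGH_TO_CHARTERS + SERVICES_FOR_CHARTER_PUPILS
    district = (DISTRICT_TOTAL_REVENUE - spent_on_charter_pupils) / DISTRICT_ENROLLMENT
    charter = (spent_on_charter_pupils + CHARTER_OTHER_REVENUE) / CHARTER_ENROLLMENT
    return district, charter


def gap(per_pupil):
    """District minus charter per-pupil resources."""
    district, charter = per_pupil
    return district - charter


if __name__ == "__main__":
    print("naive gap per pupil:   ", gap(naive_per_pupil()))
    print("adjusted gap per pupil:", gap(adjusted_per_pupil()))
```

In this invented case the naive comparison reports a gap of $5,000 per pupil, and the adjusted one reports $1,800. The size of the change depends entirely on the made-up inputs; the point is only that the direction of the error is predictable when pass-through money is left on the district's side of the ledger.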

So, back to my original point – any relative efficiency comparison must have comparable funding measures – and this new UARK study a) clearly does not have them and b) makes no real attempt whatsoever to correct or even respond to their previous egregious errors.

The acknowledgement of my critique, highly selective misrepresentation of my critique, and complete failure to respond to the major substantive points of that critique display a baffling degree of arrogance and complete disregard for legitimate research.

Yes – that’s right – either this is an egregious display of complete ignorance and methodological ineptitude, or this new report is a blatant and intentional misrepresentation of data. So which is it? I’m inclined to believe the latter, but I guess either is possible.

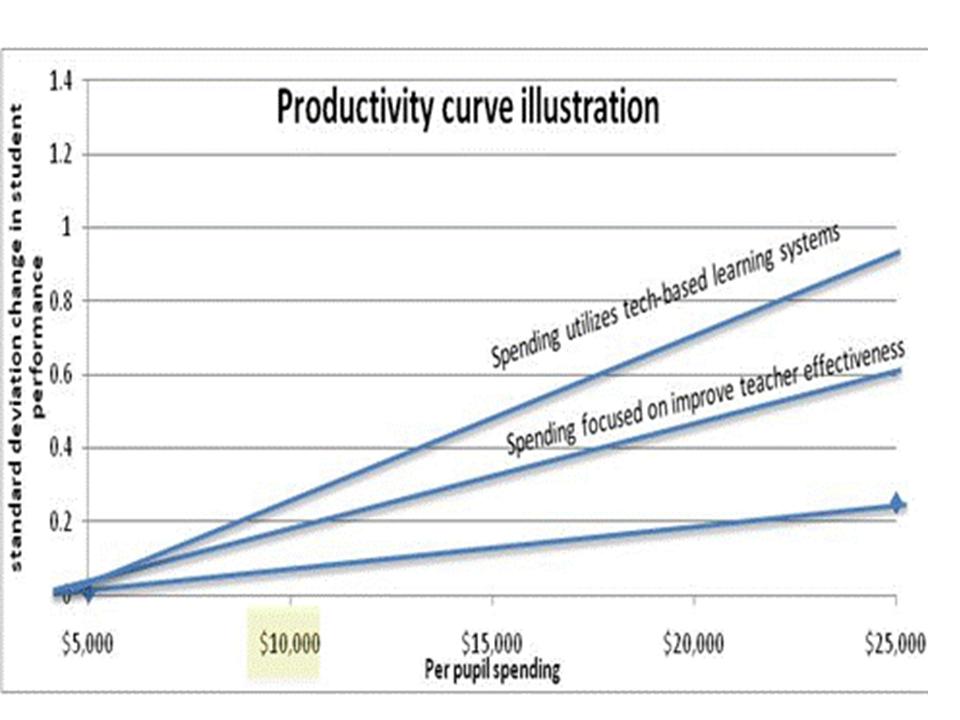

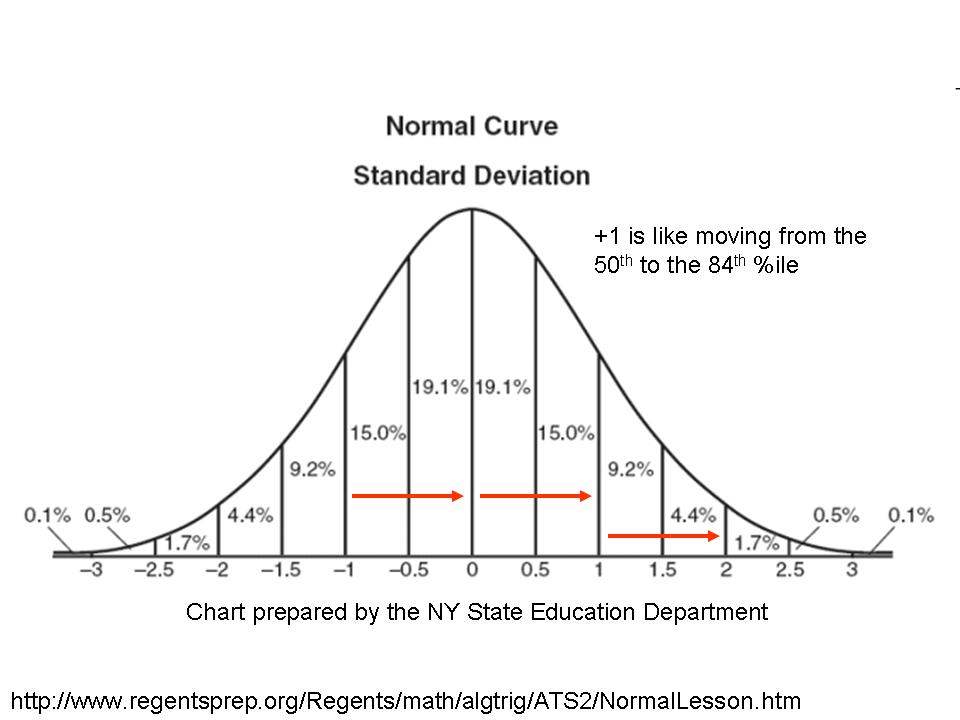

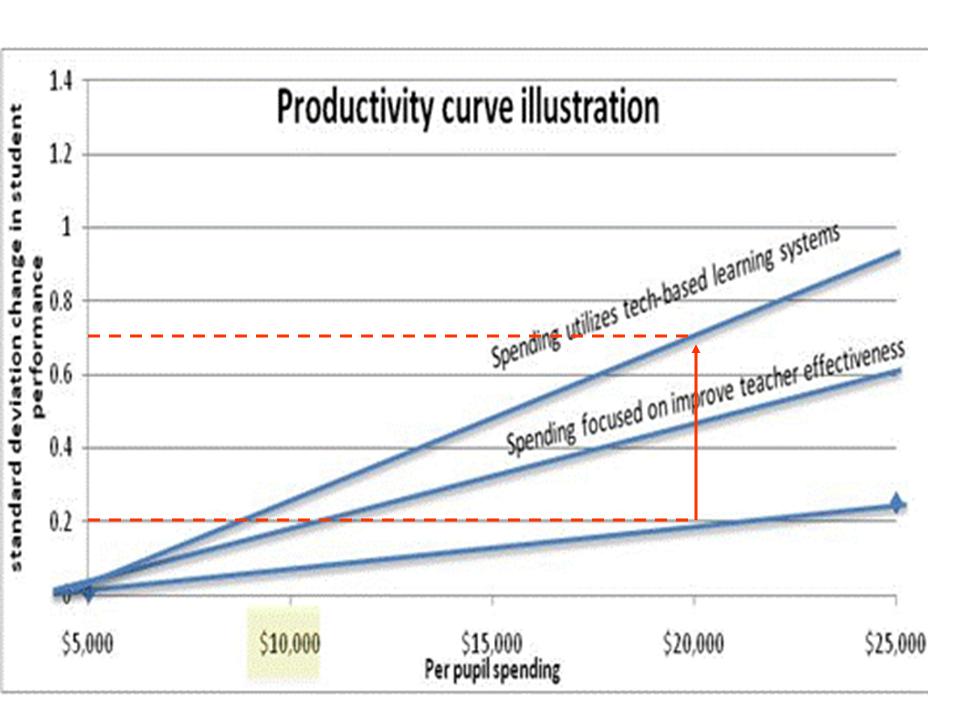

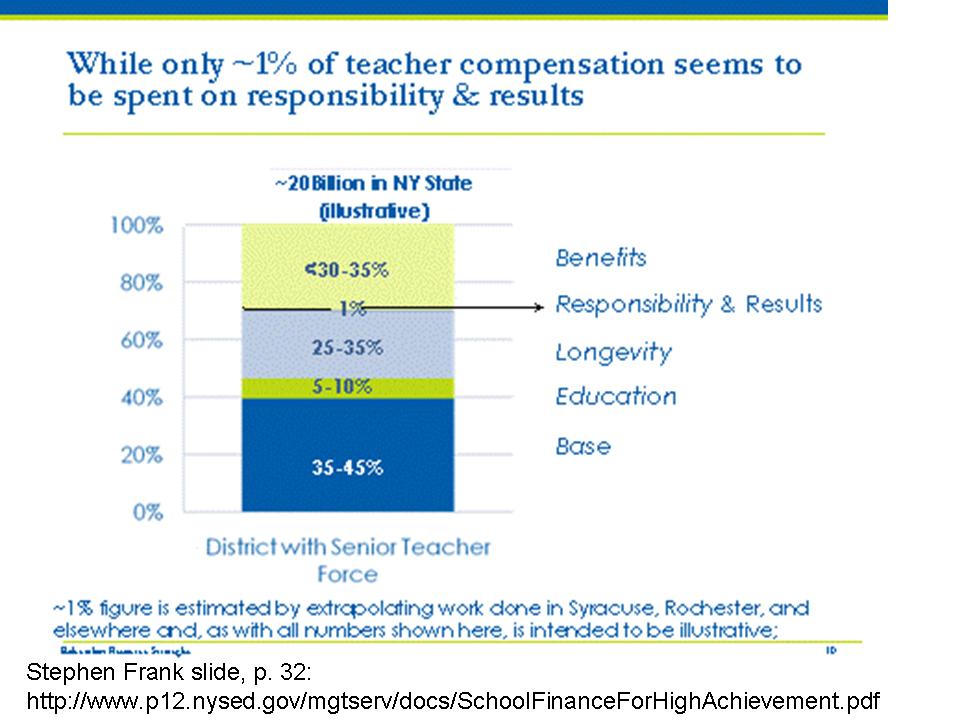

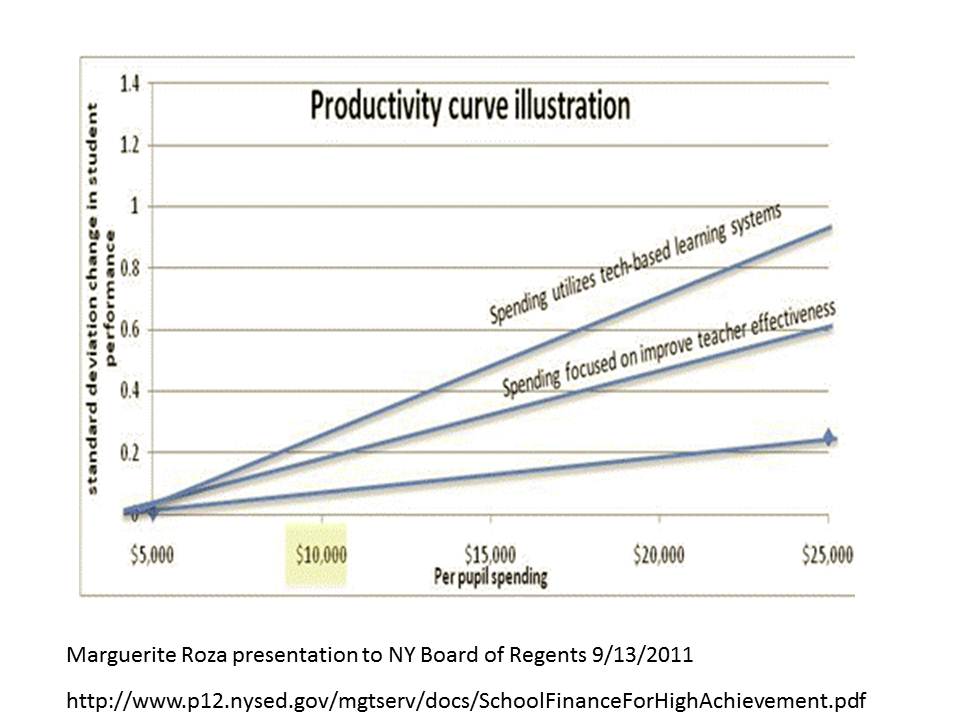

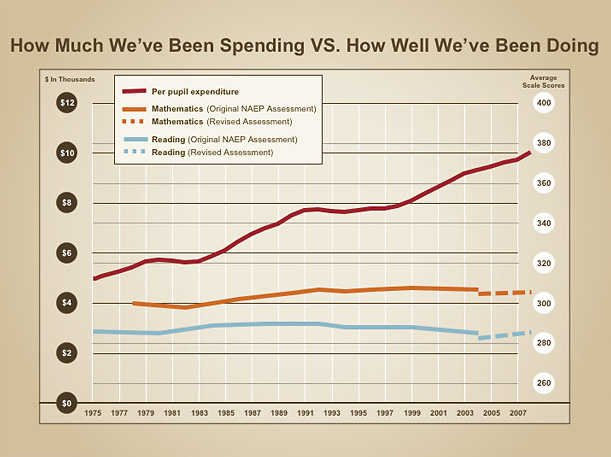

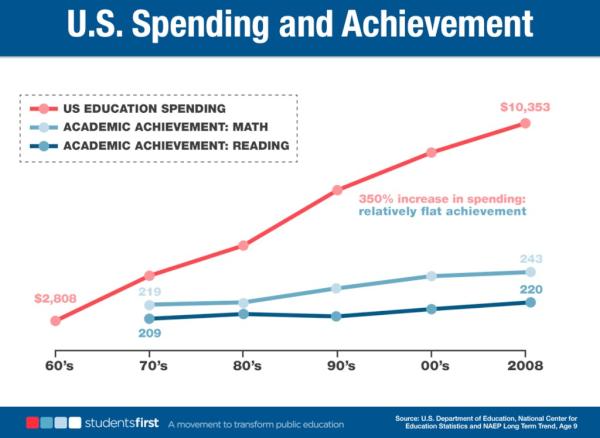

Oh… and separately, in this earlier report, Kevin Welner and I discuss appropriate methods for evaluating relative efficiency (the appropriate framework for such comparisons). And, to no surprise, the methods in this new UARK report regarding relative efficiency are also complete junk. Put simply, and perhaps I’ll get to more detail at a later point, neither a simple “dollars per NAEP score” comparison nor the silly ROI method used in their report is anywhere near sufficient (especially as some state aggregate endeavor???).

And it doesn’t take too much of a literature search to turn up the rather large body of literature on relative efficiency analysis in education – and on the methodological difficulties in estimating relative efficiency. So, even setting aside the fact that the spending measures in this study are complete junk, the cost-effectiveness and ROI approaches used are intellectually flaccid and methodologically ham-fisted.

But if the measures of inputs suck to begin with, then the methods applied to those measures really don’t matter so much.

To say this new UARK charter productivity study is built on a foundation of sand would be offensive… to sand.

And I like sand.