Alternative title: Why Hassel with research, data and facts?

I was called upon this past week to review a new policy brief on reforming Connecticut’s education funding system, the Education Cost Sharing (ECS) formula. The brief, titled Spend Smart: Fix Connecticut’s Broken School Funding System, seemed simple enough on its face, but as I looked deeper, it turned out to be among the most offensively shallow and poorly documented reports I have ever seen.

Further, some elements of the report, stated as fact but entirely unsubstantiated, would lead to funding policies that significantly disadvantage some of the state’s highest-need children. Even worse, the brief was accompanied by proposed legislation that included these ill-conceived policies.

But this post is only partly about this new brief, produced by ConnCan and put forth by an eclectic mix of authors in reformy manifesto style. Nearly every attempt to ground “facts” in the brief was tied to previous ConnCan briefs, which themselves included little or no substantiation.

The common denominator in this brief, the briefs on which it relies, and the accompanying legislation appears to be Bryan Hassel of Public Impact. Hassel has also played a role in previous haphazard, manifesto-like school funding reports, including Fund the Child. He has also been named as the outside expert advocating on behalf of ConnCan for school funding reform in Connecticut, including testifying in favor of the proposed legislation. See: http://blog.ctnews.com/kantrowitz/2009/12/03/1208/, or the ConnCan tweet:

Brian Hassel, co-dir. Public Impact: SB 1195 would “catapult Connecticut into a national model for schools” #edreform #getsmartct

http://twitter.com/#!/conncan/status/51061576467361792

Tangentially, Bryan Hassel and Public Impact were also involved in producing the deeply problematic analysis of charter school funding disparities released last year, which I critique, in part, in my recent work on New York City charter schools.

There comes a point where I encounter enough reports linked to a single organization and author, and those reports are so shockingly bad, that I simply can’t hold back anymore.

The following three examples, all connected back to Public Impact and Bryan Hassel, provide evidence of the utter methodological incompetence of this organization and their/his complete disregard for a) existing rigorous research, b) legitimate analytical methods and data, and perhaps most disturbingly, c) significant adverse consequences of performing shoddy analysis and making bold but haphazard policy recommendations.

Below are three of my related critiques of policy “research” (used as loosely as I can imagine) with ties to Public Impact and Bryan Hassel. I offer these critiques in particular to any policy makers who might believe it reasonable to rely on this junk, or the organization that produces it.

Example #1: Public Impact and ConnCan’s Funding Reform Proposals

http://nepc.colorado.edu/files/TTR-ConnCan-Baker-FINAL.pdf

Here are just a few examples from my review of Spend Smart. The Spend Smart brief essentially argues that the Connecticut finance system is broken (it may well be, and I think it is), and that it should be fixed with a simple school funding formula with a single weight on children qualified for free or reduced price lunch.

This particular brief stated a number of supposed “facts” about the status of the current system, few or none of which could be substantiated with the information provided, and some of which were clearly unchecked and simply wrong, with significant consequences.

Here are some quoted claims from the brief and a tracing of the factual basis for those claims:

Claim 2: “Moreover, our current system was designed to direct 33 percent more dollars to students in towns with high poverty, but actually provides only 11.5 percent more funding for these students.” (Page 2)

Claim 2 posits that the current ECS formula leads to an average of 11.5% additional funding per low-income child across Connecticut school districts. That claim is cited to a previous ConnCan report, The Tab, authored by Bryan Hassel of Public Impact (specifically page 18 of The Tab). Page 18 of The Tab supports this claim in Footnote 18 only with “Authors’ analysis using 2007-08 data from the State Department of Education. See Appendix for Details.” However, the appendix of the report provides no such justification and makes no further reference to the 11.5 percent figure. Rather, the appendix provides only a list of data sources supposedly used, with no explanation of how those sources might have been used.[i]
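For contrast, here is roughly what a documented version of such a figure could look like. This is a minimal sketch of one common approach, not the method The Tab used (the report never says what its method was): regress district per-pupil funding on the district's low-income share, then express the slope relative to the intercept as the implied extra funding per low-income child. The district numbers are invented for illustration and are not Connecticut data.

```python
def implicit_poverty_weight(frl_share, per_pupil):
    """Estimate the implied extra funding per low-income pupil, as a share of base.

    Fits per_pupil = a + b * frl_share by ordinary least squares. Under that
    linear model a non-poor pupil carries a dollars and a low-income pupil
    carries a + b, so b / a is the implied percentage premium.
    """
    n = len(frl_share)
    mean_x = sum(frl_share) / n
    mean_y = sum(per_pupil) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(frl_share, per_pupil))
    sxx = sum((x - mean_x) ** 2 for x in frl_share)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope / intercept


# Hypothetical districts (NOT real data): free/reduced lunch share and
# per-pupil funding, constructed as 12,000 * (1 + 0.2 * share).
shares = [0.05, 0.20, 0.40, 0.60, 0.80]
funding = [12_000 * (1 + 0.2 * s) for s in shares]

print(f"Implied poverty premium: {implicit_poverty_weight(shares, funding):.1%}")
```

Real district data would be far noisier, and a serious analysis would need to consider other cost factors; the point is only that a claim like "11.5 percent" should arrive with a derivation a reader can reproduce.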

Claim 5: “For example, students at Connecticut’s charter schools are funded at only 75 cents on the dollar compared with traditional public schools.” (page 3)

Claim 5 is perhaps the most perplexing and, like Claim 1, an example of the evidentiary black hole. The claim that Connecticut charter schools receive, on average, about 75% of state average funding is cited to a previous ConnCan report [not a Hassel/Public Impact product] titled Connecticut’s Charter School Law and Race to the Top.[ii] This ConnCan report was previously reviewed by Robert Bifulco for NEPC, who explained:[iii]

“The brief provides no indication of how it was determined that charter schools end up with only 75% of per-pupil funding that districts receive, or how, if at all, this comparison accounts for in-kind services or differences in service responsibilities.” [p. 3, Bifulco Critique]

And finally, for now:

Claim 6: “The formula could also hypothetically provide weights for other student needs, such as English Language Learner status. However, data shared by Connecticut State Department of Education with the State’s Ad Hoc Committee to Study Education Cost Sharing and School Choice show that the measure for free/reduced price lunch also captures most English language learners. In other words, there is a very strong correlation between English language learner concentration and poverty concentration in Connecticut. In addition, keeping the formula simple allows a more generous weight for students in poverty.” (p. 7, FN 12)

Claim 6 is particularly disconcerting, both because it includes a statistical finding which is never validated and because it is used to inform a policy solution which would produce substantial inequities harmful to a specific student population – children with limited English language skills. The authors claim outright that there is no need for additional adjustment for districts serving large shares of limited English proficient children because:

“there is a very strong correlation between English language learner concentration and poverty concentration in Connecticut.” (p. 7, FN 12)

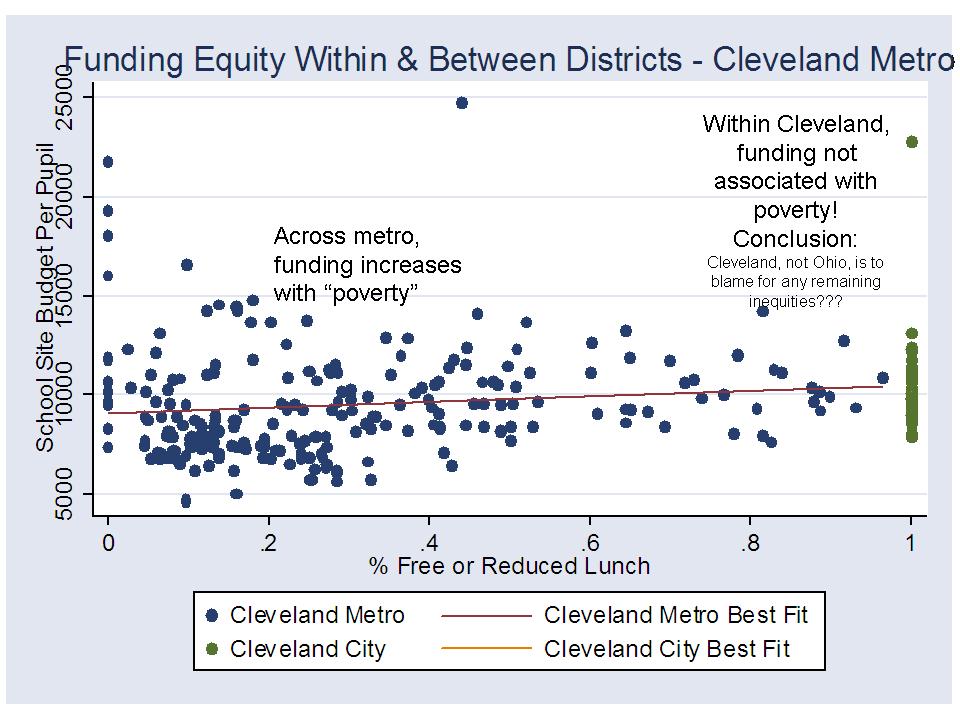

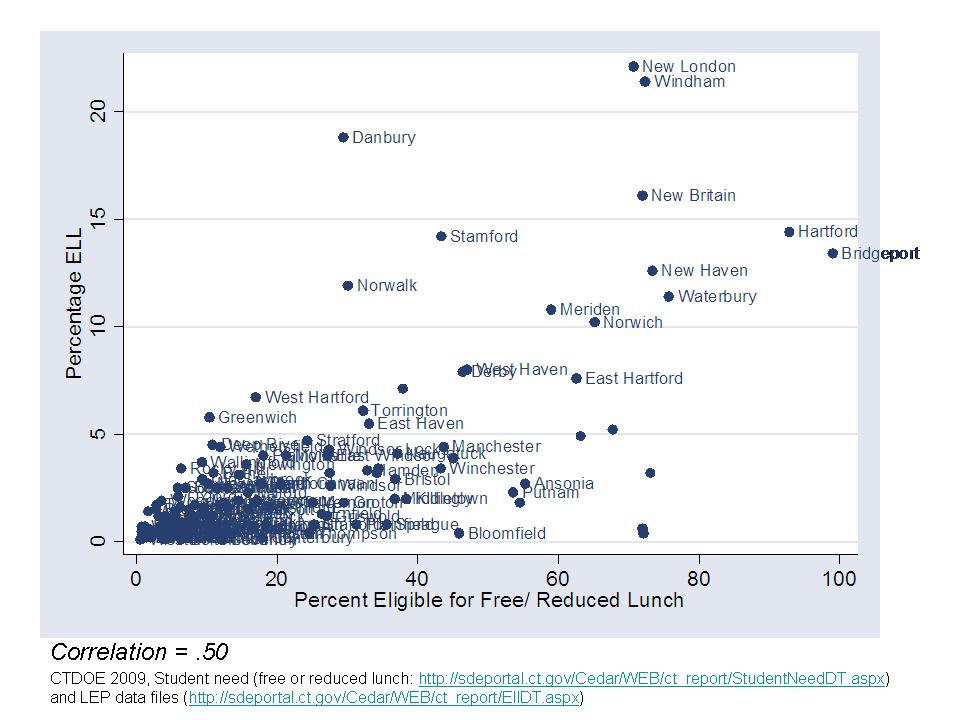

This finding is cited only ambiguously, in a footnote, to data shared by CTDOE. In some states, a strong relationship between the two measures might warrant collapsing supplemental aid for LEP and low-income children into one student need factor, with sufficient additional support to meet the combination and concentration of needs. However, a quick check of the Connecticut data, shown in Figure 1 (below), reveals that several districts have disproportionately high LEP concentrations relative to their low-income concentrations, specifically Norwalk, Danbury, New London, Windham and New Britain. These districts would be substantially disadvantaged by a formula with no additional weighting for LEP children, coupled with an arbitrary, small weighting for low-income status. In fact, the proposal to include only a relatively small weight for free or reduced-price lunch and to ignore the concentrated needs of these districts is most likely a back-door way to reduce the overall cost of the formula and to limit the extent to which the formula truly redistributes funding where it is needed.

Figure 1

Relationship between Subsidized Lunch Rates and ELL Concentrations 2009

Data source: CTDOE 2009, Student Need (free or reduced-price lunch) data file (http://sdeportal.ct.gov/Cedar/WEB/ct_report/StudentNeedDT.aspx) and LEP data file (http://sdeportal.ct.gov/Cedar/WEB/ct_report/EllDT.aspx)

Note: From 2005 to 2009, the r-squared for this relationship ranges from .25 to .62, and is generally around .5.

The bottom line: the authors clearly never checked. The authors clearly don’t know what they are talking about, even at the most basic level. Yet all who signed on to this brief, including Hassel, Hawley Miles and Paul Hill, are willing to go out on a limb and make these proclamations: proclamations and policy proposals that are simply bad, wrong, misguided and irresponsible.

Example #2: Public Impact and ConnCan’s The Tab

Much of the content of the Spend Smart brief seems to be grounded in, and some of it directly cited to, the previous ConnCan finance report titled The Tab, on which Bryan Hassel was listed as lead author.

I have written previously about The Tab, which is of equal quality to Spend Smart. Here’s a copy and paste of my previous post on The Tab.

https://schoolfinance101.wordpress.com/2009/11/23/why-is-it-ok-for-think-tanks-to-just-make-stuff-up/

==========Original Blog Post

This topic comes to mind today because ConnCan has just released a report (http://www.conncan.org/matriarch/documents/TheTab.pdf) on how to fix Connecticut school funding that provides classic examples of just makin’ stuff up (page 25). The report begins with a few random charts and graphs showing the differences in funding between wealthy and poor Connecticut school districts and their state and local shares of funding. These analyses, while reasonably descriptive, are relatively meaningless because they are not anchored to any well-conceived or articulated explanation of “what should be.” Such a conception might be located here or even here (Chapters 13, 14 & 15 are particularly on target)!

The height of making stuff up in the report is the recommended policy solution to a problem that is never clearly articulated. There are problems in CT, but The Tab certainly doesn’t identify them!

The supposed ideal policy solution involves a pupil-based funding formula in which each pupil receives at least $11,000 (made up), each child in poverty (no definition provided, just a few random ideas in a footnote) receives an additional $3,000 (also made up), and each child with limited English language proficiency receives an additional $400 (yep… totally made up). There is minimal attempt in the report (http://www.conncan.org/matriarch/documents/TheTab.pdf) to explain why these figures are reasonable. They’re simply made up.
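To make the structure concrete, here is a minimal sketch of the formula as The Tab describes it, treating the "at least $11,000" as the base amount. The district counts are hypothetical, and the function name is illustrative only; who counts as a child "in poverty" is left open here, just as it is in the report.

```python
# Dollar figures as described for The Tab's proposal.
BASE = 11_000          # per pupil
POVERTY_ADD_ON = 3_000 # per child in poverty (definition unspecified)
ELL_ADD_ON = 400       # per child with limited English proficiency


def tab_allocation(enrollment, poverty_count, ell_count):
    """Total district allocation under the proposed pupil-based formula."""
    return enrollment * BASE + poverty_count * POVERTY_ADD_ON + ell_count * ELL_ADD_ON


# Hypothetical district: 10,000 pupils, 6,000 in poverty, 2,000 ELL.
total = tab_allocation(10_000, 6_000, 2_000)
print(f"Total: ${total:,}; per pupil: ${total / 10_000:,.0f}")

# The add-ons expressed as weights relative to the base amount.
print(f"Poverty weight: {POVERTY_ADD_ON / BASE:.3f}; ELL weight: {ELL_ADD_ON / BASE:.3f}")
```

Expressed as weights, the add-ons come to roughly 0.27 for poverty and 0.036 for ELL status. The arithmetic is trivial; the hard part, which the report skips, is justifying why these ratios rather than any others reflect what it actually costs to serve these children.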

The authors do provide some back-of-the-napkin explanations for the numbers they made up – based on those numbers being larger than the amounts typically allocated (not necessarily true). They write off the possibility that better numbers might be derived by way of a general footnote reference to a chapter in the Handbook of Research on Education Finance and Policy by Bill Duncombe and John Yinger which actually explains methods for deriving such estimates.

The authors of The Tab conclude: “Combined with federal funding that flows on the basis of poverty and (in some cases) the English Language Learner weight of an additional $400, the $3,000 poverty weight would enable districts and schools to devote considerable resources to meeting the needs of disadvantaged students.” I’m glad they are so confident in their “made up” numbers! I, however, am less so!

It would be one thing if there were no conceptual or methodological basis for figuring out which children require more resources or how much more they might actually need. Then, I guess, you might have to make stuff up. Even then, it might be reasonable to make at least some thoughtful attempt to explain why you made up the numbers you… well… made up. But alas, such thinking seems beyond the grasp of at least some “think tanks.” Guess what? There actually are some pretty good articles out there that attempt to distill the additional costs associated with specific poverty measures… like this one, by Bill Duncombe and John Yinger: How much more does a disadvantaged student cost?

It’s not like the title of this article somehow conceals its contents, does it? Nor is the journal in which it was published (Economics of Education Review) somehow tangential to the point at hand. This paper, prepared for the National Research Council, provides further insight into the costs associated with poverty and methods for estimating those costs.

Rather than even attempt to argue that these figures are somehow founded in something, the authors of The Tab seem to push the point that it really doesn’t matter what these numbers are as long as the state allocates pupil-based funding. That’s the fix! That’s what matters… not how much funding or whether the right kids get the right amounts. In fact, the reverse is true. The potential effectiveness, equity and adequacy of any decentralized weighted funding system is highly contingent upon driving appropriate levels of funding and funding differentials across schools and districts!

Example #3: Public Impact Charter Disparity Analysis

Finally, there’s the report done by Public Impact with Ball State University on charter school funding disparities, which remains fresh in my mind because it keeps coming up again and again. And it is because of the connection between the shoddy methods of that report and the absurdly shoddy analysis in The Tab and Spend Smart that this post focuses on Bryan Hassel and Public Impact.

When digging deeper into financial differences between charter and non-charter schools in New York City, and looking at what the Public Impact/Ball State study had said about New York charter schools, my coauthor and I were shocked at how poorly the Public Impact/Ball State study had been conducted. Here’s a short section of our critique:

From: Baker, B.D. & Ferris, R. (2011). Adding Up the Spending: Fiscal Disparities and Philanthropy among New York City Charter Schools. Boulder, CO: National Education Policy Center. Retrieved [date] from http://nepc.colorado.edu/publication/NYC-charter-disparities.

This section returns to the issue of disparities in funding between non-charter and charter schools. As already noted, the Ball State/Public Impact study identified New York State as having large financial disparities between traditional public schools and charter schools. In contrast, the NYC Independent Budget Office (IBO) concluded that charters housed in Department of Education facilities had only negligibly fewer resources than non-charter public schools. One of these accounts is incorrect.

The Ball State/Public Impact study claims that NYC traditional public school per-pupil expenditures were $20,021 in 2006-07, and that charter school expenditures were $13,468, a 32.7% difference.[iv] However, the first figure appears to be inflated; the only figure that closely resembles $20,021 is the total expenditure, including capital outlay. This amounts to $19,198,[v] according to the 2006-07 NCES fiscal survey.[vi] This amount includes spending that is clearly not for traditional public schools: it includes not only transportation and textbooks allocated to charter schools, but also city expenditures on buildings used by some charter schools.[vii] In essence, this approach attributes spending on charters to the public schools they are being compared with, which is clearly a problematic measurement.
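The 32.7 percent figure is simple arithmetic on the two reported numbers. The sketch below reproduces it and shows how sensitive the headline gap is to the district-side figure chosen. Substituting the $19,198 NCES total is only a sensitivity illustration, not a corrected estimate, since that total still includes charter-related spending.

```python
def pct_gap(district_pp, charter_pp):
    """Charter per-pupil shortfall expressed as a share of the district figure."""
    return (district_pp - charter_pp) / district_pp


CHARTER_2006_07 = 13_468  # charter figure reported by the Ball State/Public Impact study

# District-side figures: the study's $20,021, and the $19,198 NCES total
# (which still includes spending on charters, so it is not a clean comparison either).
for label, district_pp in [("study figure", 20_021), ("NCES total", 19_198)]:
    print(f"{label}: {pct_gap(district_pp, CHARTER_2006_07):.1%}")
```

This prints 32.7% for the study's figure and 29.8% for the NCES total. A difference of a few thousand dollars in the denominator shifts the gap by several percentage points, before even addressing what belongs in the comparison.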

After offering these figures and the crude comparisons, the Ball State/Public Impact study argues that the purportedly severe funding differential is not explained by differences in need, because on average 43.5% of the students in public schools in New York State qualify for free or reduced-price lunch, while on average 73.3% of those in charter schools in New York State do. But, as was demonstrated earlier, there are three problems: (a) the focus on state rates, rather than NYC rates; (b) the inclusion of reduced-price lunch rates rather than just free-lunch rates as a measure of poverty (when focused on comparisons within NYC); and (c) the failure to compare only schools serving the same grade-levels. When these details are addressed, a different picture emerges. At the elementary level in NYC, for example, charter school free lunch rates were 57% and non-charter public school rates were 68%.

The NYC IBO report offers figures that are more in line with the data. For 2008-09, traditional public schools are found to have expenditures of $16,678, while charters that are provided with facilities are at nearly the same level ($16,373). Public expenditures on charters not provided facilities are found to be about $2,700 per pupil lower ($13,661). But even this comparison is not necessarily the most precise or accurate that might be made, because it does not attempt to compare schools that are (a) similar in grade level and grade range and (b) similar in student needs. The IBO analysis provides a useful, albeit limited, comparison of charter schools in their aggregate to district schools in their aggregate. Importantly, the IBO charter school funding figures do not include funds raised through private giving to schools or monies provided by their management organizations.

Once the cost differences associated with student populations are factored in, the IBO analysis changes significantly. In fact, the cost associated with student population differences is the same as the per-pupil cost associated with lack of a facility: $2,500. After adding the $2,500 low-need-population adjustment to charters, those not in BOE facilities can be seen to have funding nearly equal to that of non-charters ($16,171 vs $16,678) while those in BOE facilities have significantly more funding than non-charters (see Table 3).[viii]
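The need adjustment described above is a single addition per charter group. The sketch below applies the rounded $2,500 figure to the IBO numbers quoted earlier; the constant and function names are illustrative only.

```python
# IBO per-pupil figures for 2008-09, as quoted above.
TRADITIONAL = 16_678
CHARTERS = {
    "charter in BOE facility": 16_373,
    "charter not in BOE facility": 13_661,
}
NEED_ADJUSTMENT = 2_500  # rounded per-pupil cost of the student-population difference


def adjusted_gap(charter_pp, adjustment=NEED_ADJUSTMENT):
    """Need-adjusted charter funding minus traditional public school funding."""
    return charter_pp + adjustment - TRADITIONAL


for label, amount in CHARTERS.items():
    print(f"{label}: ${amount + NEED_ADJUSTMENT:,} ({adjusted_gap(amount):+,} vs. ${TRADITIONAL:,})")
```

With the rounded $2,500, the not-in-facility group lands at $16,161, about $10 off the $16,171 in the text (likely a rounding difference in the adjustment). Either way, it sits within roughly $500 of the non-charter figure, while the in-facility group ends up about $2,200 above it.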

One might try to argue that the problems we identify with the New York estimates, which render them entirely meaningless, are specific to New York, and that the other states are reasonably estimated. The reality is that when it comes to estimating these types of funding differentials, each state and each local district, depending on its charter funding formula, has its own peculiarities. If the crude method used by Hassel and colleagues completely missed the boat on New York, it is highly likely that comparable problems exist across many other settings. Without further, more detailed and appropriate analysis, it would be unwise to base any conclusions on the existing Ball State/Public Impact study.

[i] In the recent report Is School Funding Fair? (2007-08 update) (http://www.schoolfundingfairness.org/SFF_2008_Update.pdf), Baker, Farrie and Sciarra show that the differential between very high- and very low-poverty districts in Connecticut is about 15% (Table 1). However, it is important to understand that in Connecticut, these patterns are not systematic. Rather, as I show in Figure A3 of the appendix herein, there exist substantial irregularities in current spending per pupil with respect to poverty. Among high-need districts in particular, funding levels vary widely. Arguably, in this regard the system is indeed broken. But the ConnCan reports fail to provide any legitimate evidence to this effect.

[ii] http://www.conncan.org/sites/default/files/research/CTCharterLaw-RTTT2010-Web-2.pdf. Interestingly, the authors of the current brief, including Bryan Hassel, choose not to anchor this conclusion to other recent work co-authored by Hassel, which describes funding disparities between host districts (New Haven and Bridgeport) and charters in those cities as “severe.” However, Baker and Ferris (2011) explain substantial methodological flaws in the characterization of charter funding gaps by Hassel and colleagues, pertaining to their analysis of New York State and New York City charter schools. There is little reason to believe that Hassel and colleagues’ analyses of Connecticut are any more valid than those for New York. For the state and district summaries of charter disparities, see: Batdorff, M., Maloney, L., May, J., Doyle, D., & Hassel, B. (2010). Charter School Funding: Inequity Persists. Muncie, IN: Ball State University, pp. 10-11, Table 5. For a thorough critique of Hassel and colleagues’ missteps in this report when characterizing charter disparities in New York, see: Baker, B.D. & Ferris, R. (2011). Adding Up the Spending: Fiscal Disparities and Philanthropy among New York City Charter Schools. Boulder, CO: National Education Policy Center. Retrieved [date] from http://nepc.colorado.edu/publication/NYC-charter-disparities.

[iv] See: Batdorff, M., Maloney, L., May, J., Doyle, D., & Hassel, B. (2010). Charter School Funding: Inequity Persists. Muncie, IN: Ball State University, bottom of Table 5

[v] Depending on how one chooses to calculate this figure, the range is from 19,199 to about 20,162. The reported total expenditures for the district are $20,144,661,000 and enrollment figures range from 999,150 (as reported in the fiscal survey) to 1,049,273 (implied enrollment from current expenditure per pupil calculation in fiscal survey).

[vii] The New York State Education Department reports several versions of expenditure figures. Total expenditures per pupil for NYC in 2007-08 were $18,977, much lower than the total reported by Batdorff and colleagues. But the IBO correctly points out that some expenses would be appropriately excluded from this number. For instance, the NYC Department of Education provides facilities for about half the city’s charter schools as well as many other forms of support for some charter schools, including authorizer services, food service, transportation services, textbooks, and management services:

Pass-through Support for Charter Schools. Charter schools are eligible to receive goods such as textbooks and software, as well as services such as special education evaluations, health services, and student transportation, if needed and requested from the district. In NYC there is a long-established process for non-public schools to access these services, and charter schools have access to similar support from DOE. For these items, charter schools receive the goods or services rather than dollars to pay for them. Most of these non-cash allocations are managed centrally through DOE.

IBO report, 2010. Retrieved December 13, 2010, from http://schools.nyc.gov/community/planning/charters/ResourcesforSchools/default.htm.

It is simply wrong to compare the city aggregate spending per pupil to the school-site allotment for charters, as was done by Batdorff and colleagues (who also use the most inflated available figure for the city aggregate spending). In 2007-08 (a year earlier than the IBO comparison figure, but likely a reasonable substitute), NYSED estimates for the instructional/operating expenditures per pupil in NYC were $15,065 (this uses the instructional expenditure share, including expenditures on employee benefits [IE2%, Col. AP], times the total expenditures; retrieved December 13, 2010, from http://www.oms.nysed.gov/faru/Profiles/datacolumns1.htm). This figure may be far more relevant than that chosen by Batdorff and colleagues, but it is still potentially problematic.

[viii] Again, we are unable to adjust precisely for differences in special education populations, due to lack of sufficiently detailed data.