A Reply to Dunn and Derthick in Education Next

Anyone who has read my previous work knows I’m not generally a fan of tax and expenditure limits. A significant body of empirical research shows that strict tax and expenditure limits can cause significant damage to state school finance systems over the long haul. For example, David Figlio, in a study of Oregon’s Measure 5 (National Tax Journal Vol. 51 No. 1 (March 1998) pp. 55-70), finds that: Oregon student-teacher ratios have increased significantly as a result of the state’s tax limitation. David Figlio and Kim Rueben, in the Journal of Public Economics (April 2001, pp. 49-71), find: Using data from the National Center for Education Statistics we find that tax limits systematically reduce the average quality of education majors, as well as new public school teachers in states that have passed these limits. In a non-peer-reviewed but high-quality working paper, Thomas Downes and David Figlio “find compelling evidence that the imposition of tax or expenditure limits on local governments in a state results in a significant reduction in mean student performance on standardized tests of mathematics skills.” (http://ase.tufts.edu/econ/papers/9805.pdf)

Despite my general concerns over tax and expenditure limits, I have even greater concern over legal arguments like those posed by an affluent suburban school district in Kansas, summarized by Joshua Dunn and Martha Derthick in the Fall 2011 issue of Education Next. As Dunn and Derthick explain, beginning in the 1990s Kansas imposed limits on the amount of revenue local public school districts can raise above and beyond the revenue they are guaranteed through the state general fund aid formula. One affluent suburban district outside of Kansas City recently filed a legal challenge to those limits in Federal District Court, and that legal challenge was the subject of Dunn and Derthick’s recent column. Dunn and Derthick explain the legal arguments as follows:

Citing Supreme Court decisions in Meyer v. Nebraska (1923) and Pierce v. Society of Sisters (1925), which held that the liberty guaranteed in the Fourteenth Amendment’s Due Process Clause includes a right of parents to control the education of their children, the plaintiffs charged that the local cap infringes on that right. As well, by forbidding additional taxes it limits their right to use their property as they wish. Still more inventive, they invoked the First Amendment right of assembly, saying that the cap prevents voters from expressing their collective wishes at the ballot box. These violations together, they contended, constitute a denial of equal protection of the law.

http://educationnext.org/trouble-in-kansas/

So then, what’s wrong with recognizing an individual liberty to unlimited property taxation? If such liberties apply to campaign contributions or other forms of assembly, then why not to the choice to levy whatever property tax one sees fit? And what’s wrong with linking the notion of complete “local” control over property taxation to the notion of parental control over the education of one’s own children? Ah, if only it were so simple. But it’s not, and here’s a primer on why.

A Little Background on Tax and Expenditure Limits (TELs)

State imposed limitations on the taxing behavior of state recognized intermediate and local jurisdictions fall into a broad category of state fiscal management policies known as Tax and Expenditure Limits, or TELs. Tax and expenditure limits have been around for decades and exist in one form or another across nearly every state.

Arguably, the modern era of Tax and Expenditure Limits began with the adoption by statewide referendum of California’s Proposition 13 in 1978, which limited the taxable assessed values of properties, limited changes in those assessed values, and imposed an overall tax rate cap. Daniel R. Mullins and Bruce A. Wallin (2004) note that “Within two years of the passage of Proposition 13 (a California initiative), 43 states had implemented some kind of property tax limitation or relief.” [1] Mullins and Wallin indicate that, as of 2004, forty-six states had some form of constitutional or statutory statewide limitation on the fiscal behavior of their units of local government.

Statewide limitations on local property taxes exist in multiple forms across states.

Overall Property Tax Rate Limits: Mullins and Wallin note that limits on property tax rates are the most common form of Tax and Expenditure Limit. Overall property tax rate limits restrict the total (municipal, school and other) property tax rate which can be adopted by local jurisdictions. Overall property tax rate limits may but do not necessarily include an option for local override votes. That is, property tax rates are limited but may be exceeded by local voter approval, often including such restrictions as requiring a super-majority vote to achieve override. Mullins and Wallin note that 33 states have imposed property tax rate limits, with 31 limiting municipalities, 28 counties, 26 school districts and 23 all three types (p. 7).

Specific Property Tax Rate Limits: Specific property tax rate limits apply limitations to tax rates for one component of local public goods or services, for example a rate limit on municipal taxes only, a rate limit on property taxes for operating revenues for local public schools, or a rate limit on property taxes for capital outlay revenues for local public schools. Again, override options may or may not be included.

Property Tax Revenue Limit: Property tax revenue limits cap the revenue that may be derived from property taxes in a given year, regardless of the rate applied. Revenue limits may be applied either to the total revenue allowable (revenue level) or, more commonly, to the rate of increase in revenue allowable.

Assessment Increase Limit: Because property tax revenues collected and tax bills paid by property owners are a function of both the tax rate applied and the assessed value of properties, constraints placed on the allowable growth in assessed value also operate as property tax limitations.

General Revenue or Expenditure Limit: States also place caps on the total amount of revenue that can be raised from property taxes for specific purposes, or alternatively on the amount of property tax revenue that can be raised and expended in a given year. Like other limits, these may be placed on either the total level of revenue or expenditures or on the annual growth in revenue or expenditures, and may or may not be coupled with override options (where those override options are also specified in state laws).

Finally, many states combine the above property tax and expenditure limits in complex ways, such as imposing both a limit on the rate at which assessed property values may grow and a limit on the property tax levy.
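To make the interaction of combined limits concrete, here is a minimal sketch of a jurisdiction subject to both an assessment increase limit and a levy limit. The function name, parameters, and dollar figures are all hypothetical and are not drawn from any state's actual statute; the only fixed convention is that one mill equals $1 of tax per $1,000 of taxable assessed value.

```python
def capped_levy(prior_assessed, market_assessed, rate_mills,
                assessment_growth_cap, levy_cap):
    """Revenue under an assessment-growth cap combined with a levy cap.

    All parameters are illustrative assumptions, not any state's real rules.
    """
    # Assessment increase limit: taxable value may grow only so fast.
    max_assessed = prior_assessed * (1 + assessment_growth_cap)
    taxable = min(market_assessed, max_assessed)
    # One mill is $1 of tax per $1,000 of taxable assessed value.
    uncapped = taxable * rate_mills / 1000
    # Levy limit: total revenue may not exceed the cap.
    return min(uncapped, levy_cap)


# Hypothetical jurisdiction: values rise 10%, but assessments may grow only 2%,
# and the total levy is capped at $2 million.
revenue = capped_levy(
    prior_assessed=100_000_000,
    market_assessed=110_000_000,
    rate_mills=20,
    assessment_growth_cap=0.02,
    levy_cap=2_000_000,
)
print(f"${revenue:,.0f}")
```

In this made-up case both limits bind: the assessment cap shrinks the tax base first, and the levy cap then trims the revenue that base would otherwise produce at 20 mills.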

Property Taxation and TELs in Kansas

The above descriptions of tax and expenditure limits reveal some of the complexity of how these limits work. For example, state imposed limits on growth in property value assessments are a property tax limit to the same extent as limits on the tax rate that can be applied to those properties. Property taxes include multiple moving parts, or multiple policy levers, the vast majority of which in most states are created by and controlled within state constitutions and statutes. Below is a non-exhaustive list of the moving parts of the property tax revenue equation:

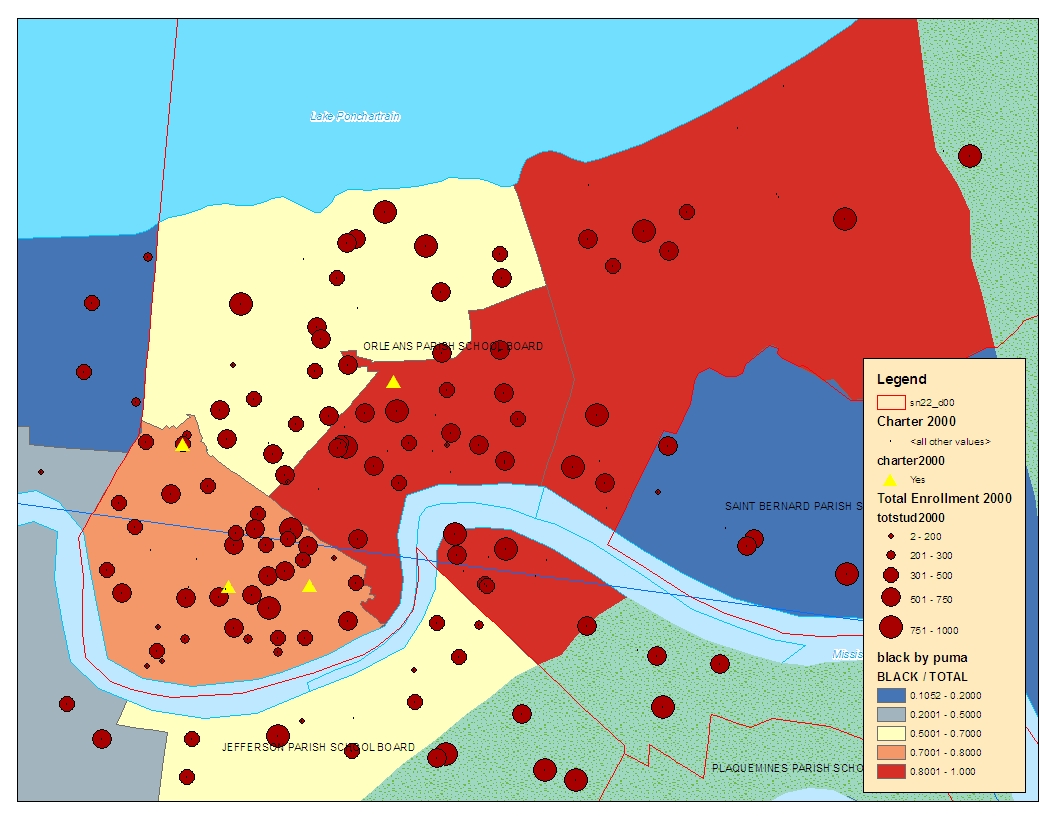

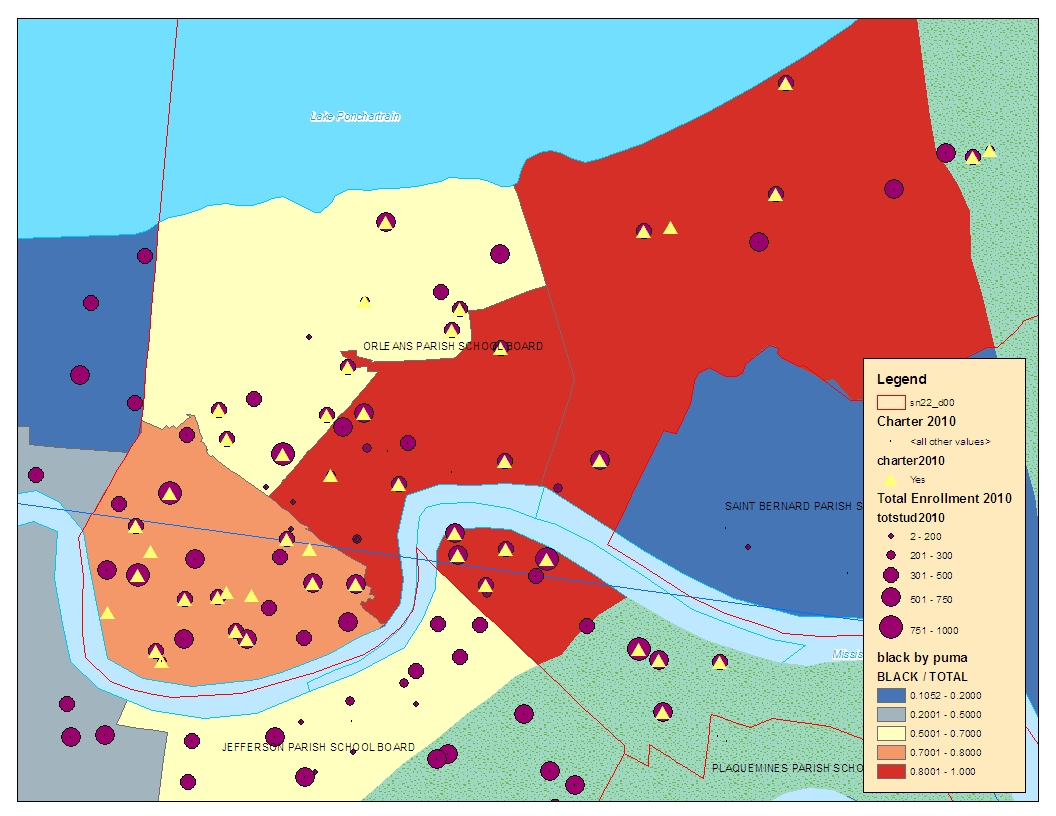

- The boundaries of taxing jurisdictions: Taxing jurisdictions are government subdivisions within states, defined in state statutes and/or constitutions. They are creations of the state, even if granted home rule or limited home rule. Taxing jurisdictions may or may not be as simple as “cities and towns” or “municipalities.” In some states, municipal taxing jurisdictions are reasonably aligned with local school taxing jurisdictions, but in others like Kansas, they are not. The lack of contiguity between local public school district boundaries and municipal boundaries in Kansas is largely a result of school district consolidations that occurred under state statutes adopted in the 1960s, concurrent with (shortly before) rewriting of the education article of the state constitution. In many states, the geographic spaces defined as taxing jurisdictions and enrollment areas for local public school districts continue to be redrawn, as in the case of the northeastern section of Kansas City Missouri School District which was recently annexed to Independence School District through a procedure created (specifically for that circumstance) under a recent Missouri statute. Further, school district boundary determinations (under state laws) are often linked to a long history (including recent history) of institutionalized and state sanctioned racial discrimination in housing markets.[2] The defined geographic boundaries of a taxing jurisdiction determine the properties that are included in or excluded from that jurisdiction. Those boundaries ultimately determine the total values of property within the bounded space, and in turn the amount of revenue that can or cannot be generated by applying any given tax rate to those properties.

Figure 1

School District (green) Boundaries and Cities and Towns in the Kansas City Metro

- Definitions of Property Types: Different types of property exist within any taxing jurisdiction, including residential properties, residential properties owned by non-residents (second homes), commercial properties, industrial properties, utilities and farm properties. In Kansas and elsewhere property types are defined in the State Constitution (Article 11). The definition of property types influences substantially the application of “local” property taxes because each defined jurisdiction contains a different mix of property types – some with more commercial property than others – some more residential – and others more farm property. And the different values applied to different types of properties become a significant factor influencing the local revenue raising capacity of communities. Note that in Kansas, as elsewhere, the highest aggregate property values per child enrolled in school are not those in school districts with the highest valued houses, but are those in communities like Burlington, Moscow and Rolla which each include non-residential properties of significant value.

- Valuation Procedures: Procedures for determining the taxable value of properties are also defined in state statutes and constitutions, and in Kansas, in Article 11 of the constitution. Those valuation procedures operate as a form of tax and expenditure limitation. Residential properties are defined to have a taxable value of 11.5% of fair market value, agricultural land 30%, vacant lots 12%. States adopt such structures out of state policy interest in creating certain types of incentives or controls, including incentives to either preserve or develop farm property or vacant lots, or to buffer commercial interests from escalating taxes. These differential assessment ratios are effectively limits on the revenue that can be raised from any applied tax rate.

- Property Tax Exemptions: States also control, typically via statute, the extent to which intermediate or local jurisdictions may grant exemptions to property taxes, including the duration over which an exemption may be granted or the types of properties that may be granted exemptions. States also may impose exemptions, such as exempting from property taxes a proportion of the value of residential properties owned by senior citizens, in the policy interest of protecting seniors on fixed incomes from escalating property taxes. As a tax equity measure, Kansas in the late 1990s adopted an exemption of the first $20,000 in taxable value of a residential property for property taxes applied to General Fund Revenues for schools (a statutory provision).

- Tax Rate Setting & Referendum Procedures: States also regulate the procedures by which local school district budgets are determined and/or tax rates are set. In some states with constitutional property tax limits which include override provisions, the referendum procedure for override is in the constitution, and may include a requirement of super-majority vote to achieve an override. Requirement of a super-majority is a limit. In other states, statutory provisions permit local authorities to raise taxes (or resulting revenues) to specific levels without voter approval and above those levels with voter approval. In some cases, those limits are absolute and cannot be exceeded.

- Debt Ratio Ceilings on Bonded Indebtedness: States also impose various limitations on the amount of debt “local” jurisdictions may accumulate toward the financing of capital projects. Kansas, like other states, imposes a limit – measured as a percentage of total taxable assessed valuation – on the amount of debt that can be accumulated through issuance of general obligation municipal bonds for the financing of new school construction or major renovations.

Each and every provision above and each and every element of the property tax system is controlled by and exists only as a function of state constitutional provisions and statutes. Further, each piece of the property tax puzzle imposes limitations – state controlled limitations – on the ability of state sanctioned local jurisdictions to raise revenues with property taxes.
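The way the pieces listed above multiply together can be sketched in a few lines. This is a simplified illustration, not the actual Kansas computation: the assessment ratios are the ones quoted above, but the parcel values, the flat per-parcel exemption, and the function name are hypothetical, and real exemptions vary by property type and fund.

```python
# Assessment ratios as quoted in the valuation discussion above.
ASSESSMENT_RATIO = {"residential": 0.115, "agricultural": 0.30, "vacant_lot": 0.12}


def levy_revenue(parcels, mill_rate, exemption=0.0):
    """Revenue from applying one mill rate to (property_type, market_value) parcels.

    `parcels` stands in for whatever falls inside the jurisdiction's boundary.
    `exemption` is a hypothetical flat amount removed from each parcel's
    taxable value before the rate is applied.
    """
    total = 0.0
    for prop_type, market_value in parcels:
        # Valuation procedure: only a fraction of market value is taxable.
        taxable = market_value * ASSESSMENT_RATIO[prop_type]
        # Exemption: remove part of the taxable value, never below zero.
        taxable = max(taxable - exemption, 0.0)
        # Rate setting: one mill is $1 per $1,000 of taxable value.
        total += taxable * mill_rate / 1000
    return total


# Hypothetical two-parcel jurisdiction taxed at 20 mills.
parcels = [("residential", 200_000), ("vacant_lot", 50_000)]
base = levy_revenue(parcels, mill_rate=20)
with_exemption = levy_revenue(parcels, mill_rate=20, exemption=20_000)
print(round(base, 2), round(with_exemption, 2))
```

Holding the mill rate fixed, changing the boundary (the parcel list), the assessment ratios, or the exemption changes the revenue raised, which is the sense in which each state-controlled piece acts as a limit.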

Extreme Implications of a constitutional protection for complete, unregulated local citizen control over property taxation

Taken at its extreme, the assumption that local residents of any geographic space in the State of Kansas possess a Constitutional right to unlimited control over property taxation for “their” local public schools means that those local residents would have control over each and every parameter above, as each parameter above is a critical determinant of the revenue generated for local public schools by adoption of a specific property tax levy (a rate multiplier). Set any parameter (or multiplier) to “0” and the whole equation shuts down. No one piece is more important than another in determining the amount of money that can be raised for “local” public schools.

Taken at its extreme, any group of citizen residents of the state of Kansas should be able to organize themselves, and define a geographic area that they consider to be their taxing jurisdiction. They would then have the authority to define the types of properties in their jurisdiction and the method for determining the taxable value of those properties. Further, they would have the right to decide whether a mere majority or super majority vote is required in order to adopt any particular tax rate to apply to those properties.

If local citizens control only the single parameter of tax rate setting (the “mill levy”), the state could simply alter rules for adopting rate increases, such as requiring a super-majority vote. Or the state could adopt legislation which effectively reduces the taxable value of properties or exempts certain types of properties for raising additional school revenue above current local option budget limits. For example, the state could exempt all commercial and industrial properties from additional taxation (much like the $20,000 exemption on residential properties for General Fund budgets). Such state controls, while not limiting the levies adopted, would limit the revenue that could be generated by those levies. Each of these rules presently exists only as a function of prior state, not local, actions.

Assuming that there exists only a constitutional right to adopt higher tax levies, but those levies are to be adopted within an otherwise completely state controlled policy framework, is illogical. If such constitutional freedoms do exist, then they must apply to each and every relevant parameter limiting revenue.

Clearly, however, assuming that local groups of citizens have unlimited rights to determine each and every parameter in the property tax revenue generating equation is absurd. It would moot numerous Kansas statutes, Article 11 of the Kansas Constitution, and similar constitutional and statutory provisions across nearly every other state.

The state interest in regulating taxes imposed on non-resident property owners

As school district boundaries are presently organized, especially in the Kansas City metropolitan area, school districts each consist of many types of properties. Implicit in the assumption that there exists a constitutionally protected individual right to raise additional funds, through property taxation, for the education of one’s own children is that there exists an overly simplistic 1 to 1 to 1 ratio between children to be educated, the parents of those children and homeowner taxpayers of the jurisdiction. That is, each taxpayer homeowner is also a parent with interest in the quality of education provided to his or her child at the collective expense. Such would be true if the group of parents organized to start a private school and used their private resources to finance the operations of that school to a level suitable to their own tastes.

This assumption crumbles when applied to local property taxation for public schools and when we consider the mix of property types, property owners and taxpayers that fall within any school taxing jurisdiction in Kansas. For example, owners of commercial and industrial properties within the jurisdiction may not be residents of the jurisdiction. Taxes paid by these individuals may be affected significantly by the decisions of a simple majority share of local residents of the district. The state has a legitimate interest in and may see fit to limit such impact. And one method for doing so is the maintenance of existing tax and expenditure limits.

It seems absurd to assume that a group of resident citizens of a jurisdiction have a constitutional right to unlimited taxation of someone else’s property without the option of state intervention.

The state interest in regulating taxes imposed on vulnerable minority voting blocs

Senior citizens who no longer have children attending local public schools and are living on fixed incomes may be outnumbered at the polls in some jurisdictions when school budget (levy referenda) votes are held. Many states have policies exempting portions of the value of properties owned by senior citizens in order to provide some protection against escalating taxes. Those exemptions are a state imposed limit to property taxation.

As noted above, if we accept the assumption of a constitutional right for a group of local residents in a taxing jurisdiction to levy unlimited taxes on the rest of the jurisdiction, we must also accept that those same residents have control over each and every parameter in the property tax revenue generating equation that might limit their revenue raising capacity. A simple majority of residents could then negate exemptions. The state has a legitimate interest in protecting the rights of local minority voter populations, such as senior citizens, through such policy mechanisms as property tax exemptions.

The state interest in maintaining school funding fairness

Finally, the state also has an interest in the maintenance of equity in the provision of public education and in access to equal educational opportunity, and one mechanism the state has adopted in order to maintain equity is the limitation to supplemental local spending through property taxation.

Why is it problematic from an equal educational opportunity perspective for local public school districts to have unlimited ability to raise their property taxes and spend as they see fit on their local public schools? How, for example, does it harm the children of Kansas City, Kansas if the parents in Shawnee Mission or Blue Valley School Districts choose to substantially outspend Kansas City over the next several years and provide far higher quality local public schools?

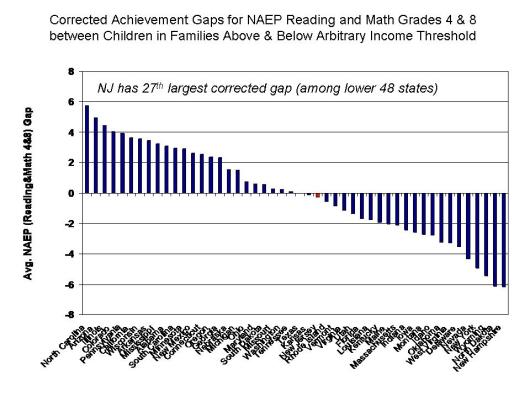

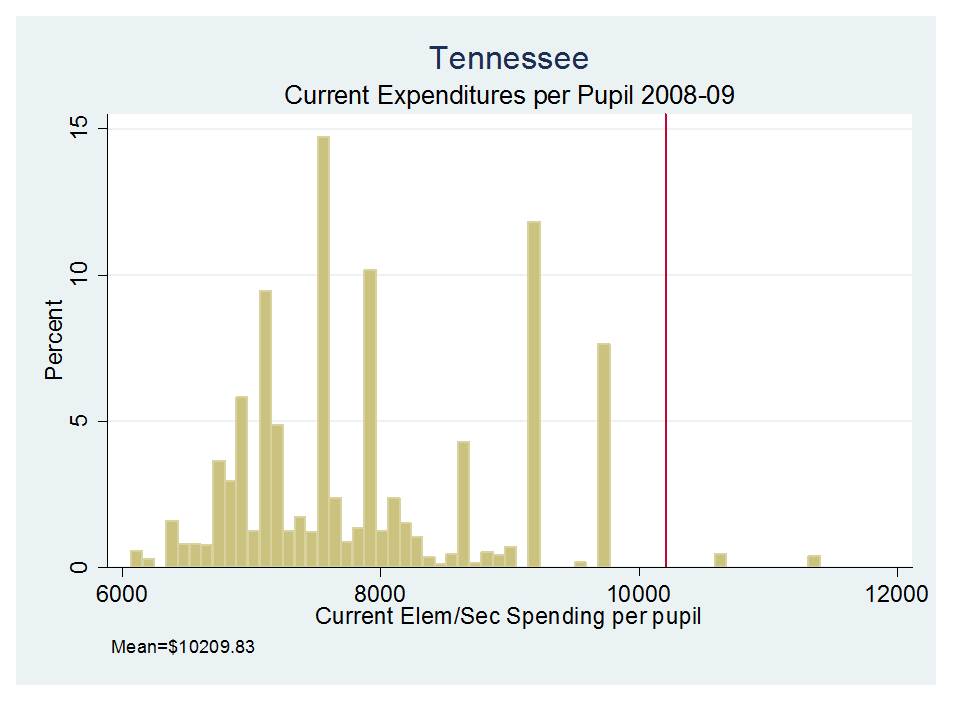

Given the vast student population differences across school districts in the Kansas City area and specifically between Kansas City and Shawnee Mission which are immediate neighbors, there exist very large differences in the actual cost of providing children with equal educational opportunity. Professor William Duncombe (Syracuse University), on behalf of the Kansas Legislative Division of Post Audit in 2006, estimated that if the cost of a specific quality of education for the state average district was set to 100 (100%), the cost of achieving equal opportunity for students in Kansas City would be about 35% higher than that average, and in Shawnee Mission would be about 12% lower than that average. Presently, the state school finance formula provides for much less difference in funding than would actually be needed to achieve more equal educational opportunity (See Table 1). In fact, when all state and local revenues are considered, Kansas is rated as having a regressive to flat state school finance system – one where higher poverty districts have systematically lower (or, at best, nearly comparable) resources per pupil than lower poverty districts – in a recent national report (as of 2007-08).[3]
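The arithmetic behind those cost indices can be sketched as follows. The indices are the approximate figures cited above (about 35% above and about 12% below the state-average district); the base per-pupil amount and the function name are hypothetical and chosen only to make the gap visible.

```python
# Approximate cost indices from the estimates cited above (state average = 100).
COST_INDEX = {"state_average": 100, "kansas_city": 135, "shawnee_mission": 88}


def equal_opportunity_funding(base_per_pupil, district):
    """Per-pupil funding needed to reach the same quality target in a district."""
    return base_per_pupil * COST_INDEX[district] / 100


base = 10_000  # hypothetical per-pupil cost in the state-average district
kc = equal_opportunity_funding(base, "kansas_city")
sm = equal_opportunity_funding(base, "shawnee_mission")
print(kc, sm, round(kc / sm, 2))
```

Under these indices, Kansas City would need roughly one and a half times Shawnee Mission's per-pupil funding to offer a comparable opportunity, whatever base amount is assumed.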

The differences in cost of equal educational opportunity estimated by William Duncombe are a function of many factors, most notably vast differences in the backgrounds and needs of children attending local public school districts (See Table 2). More needy students require a wider array of services, including more specialized personnel, smaller class sizes and specific educational and support programs. The state has both an interest and a constitutional obligation to provide equal educational opportunity.

There are at least two major reasons why states have an interest in the maintenance of equity and equal educational opportunity across local public school districts.

First, education is a positional good. Access to economic opportunity, including access to higher education for children in Kansas City, Kansas depends not only on the absolute level of educational expenditure in their own public schools but on the relative quality of education they receive compared to that of other children competing for the same slots in local public and private colleges and universities.

Second, the quality of schooling in any given location depends largely on the quality of the teacher workforce that can be attracted to teach there. It is well understood that in any given labor market, working conditions – most notably student population characteristics – substantially influence teacher job choice, most often to the disadvantage of the neediest students. It would take not merely equal but significantly higher wages to recruit and retain teachers of comparable qualification to teach in Kansas City than it would to recruit and retain similar teachers to teach in Shawnee Mission, Blue Valley or Olathe. The competitive wage for teachers of specific qualifications in any given area is driven by the wages paid by each district’s nearest neighboring competitors and by the differences in working conditions across districts.

At present, teacher salaries in Kansas City, Kansas are already much lower than those in Shawnee Mission and other Johnson County districts (Table 3). They are lower partly because the state already allows Johnson County districts to levy a special “cost of living” tax (see Table 4) which falsely assumes that teachers in districts with more expensive houses are therefore more expensive to hire. Providing further opportunity for Johnson County districts to widen the salary gap, by removing state imposed tax limits, would likely lead to even greater disparities in teacher qualifications across wealthy and poor districts serving lower and higher need student populations in the Kansas City metropolitan area.

If Shawnee Mission and other Johnson County parents have the right to raise their property taxes in order to recruit and retain better teachers, don’t Kansas City parents have the same right? While they might have a similar right, they do not have similar capacity. Granting this right does not require that the state adopt any measures to equalize the capacity to compete.

For every additional mill on the local tax levy, Shawnee Mission can raise an additional $117 per pupil, whereas Kansas City can raise only $38, more than a threefold difference (see Table 5). Even under present circumstances, with imposed limitations to the local option budget, Kansas City salaries lag behind those of Johnson County districts. Johnson County districts have also already been provided a local taxing opportunity to widen the gap, an option some have used.
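The capacity gap can be restated as the tax effort each district would need to raise the same amount. The per-mill yields are the figures cited above; the $1,000 per-pupil target and the function name are hypothetical.

```python
# Dollars raised per pupil by one additional mill, as cited above.
YIELD_PER_MILL = {"shawnee_mission": 117, "kansas_city": 38}


def mills_needed(target_per_pupil, district):
    """Mills a district must levy to raise a given amount per pupil."""
    return target_per_pupil / YIELD_PER_MILL[district]


target = 1_000  # hypothetical supplemental revenue per pupil
sm_mills = mills_needed(target, "shawnee_mission")
kc_mills = mills_needed(target, "kansas_city")
print(round(sm_mills, 1), round(kc_mills, 1), round(kc_mills / sm_mills, 2))
```

For any target, Kansas City taxpayers would have to accept a rate about three times as high as Shawnee Mission's to raise the same supplemental revenue per pupil.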

TABLES AVAILABLE IN PDF VERSION: Fast Response Brief on Individual Liberty and Tax Limits

[1] Daniel R. Mullins and Bruce A. Wallin (2004). Tax and Expenditure Limitations: Introduction and Overview. Public Budgeting and Finance (Winter), 2-15.

[2] See Kevin Fox Gotham (2000). Urban Space, Restrictive Covenants and the Origins of Racial Residential Segregation in a U.S. City, 1900 to 1950. International Journal of Urban and Regional Research 24 (3), 616-633.