Professionals, Pundits and Evidence Based Decision Making

In ed schools housed within research universities, and in educational leadership programs charged primarily with training school and district leaders, we constantly deliberate over how to balance the “practical stuff” (the “how to” of running a school or district, managing personnel, managing budgets and so on) against the “research stuff,” like understanding how to interpret rigorous research in education and related social sciences (increasingly, economic research). Finding the right balance among theory, research and practice is an ongoing struggle and often the subject of bitter debate in professional programs housed in research universities.

Over the past year, I’ve actually become more supportive of the notion that our future school and district leaders really do need to know the research, understand statistics and other methods of inquiry and be able to determine how it all intersects with their daily practice, even when it seems like it couldn’t possibly do so.

Unfortunately, a major reason it has become so important for school leaders to know their shit is that state agencies, including departments of education, which to some extent are supposed to play a “technical support role,” have drifted substantially away from technical support and toward political messaging. In many cases they now drive their policy agendas with shoddy fact sheets, manifestos and other shallow, intellectually vacuous but “easy to digest” think tank fodder.

In many cases, this intellectually vacuous, technically bankrupt think tank fodder is actually being trotted out by state education agencies as technical guidance to local school administrators.

Punditry in NY State

SchoolFinanceForHighAchievement

commissioner-nyscoss-presentation-092611

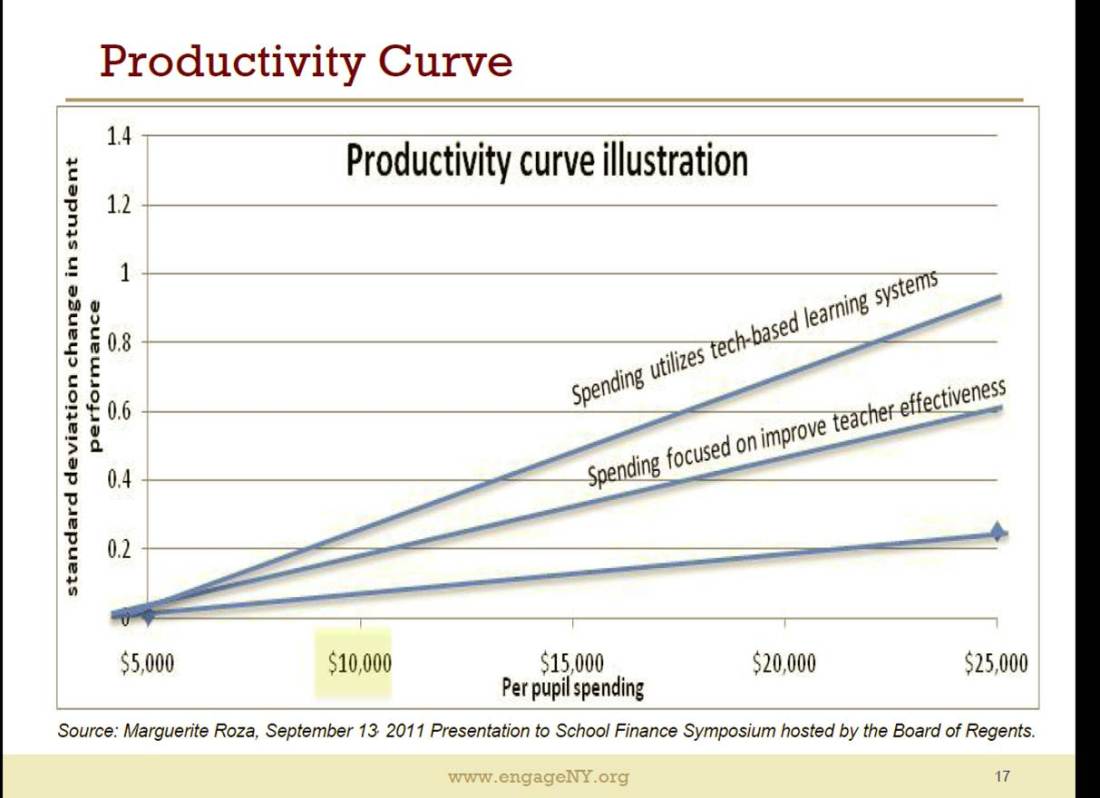

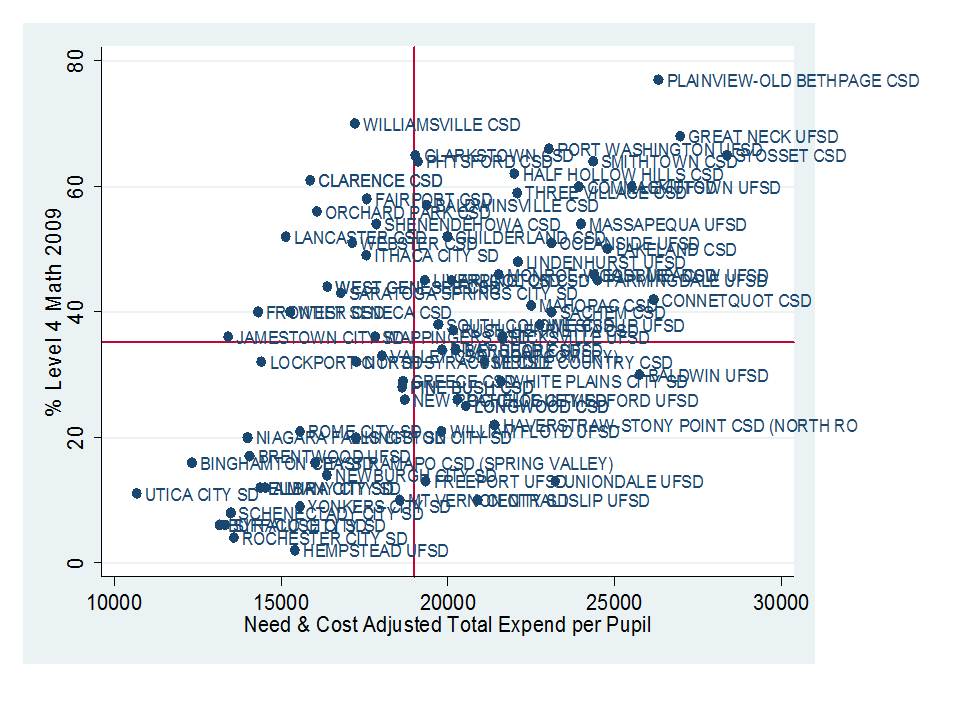

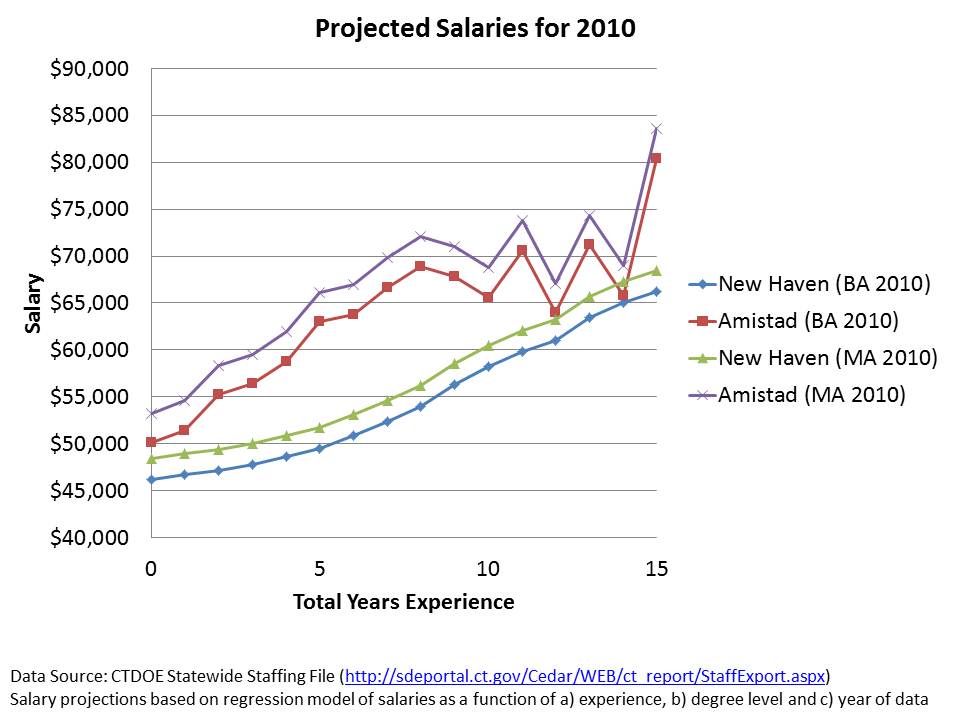

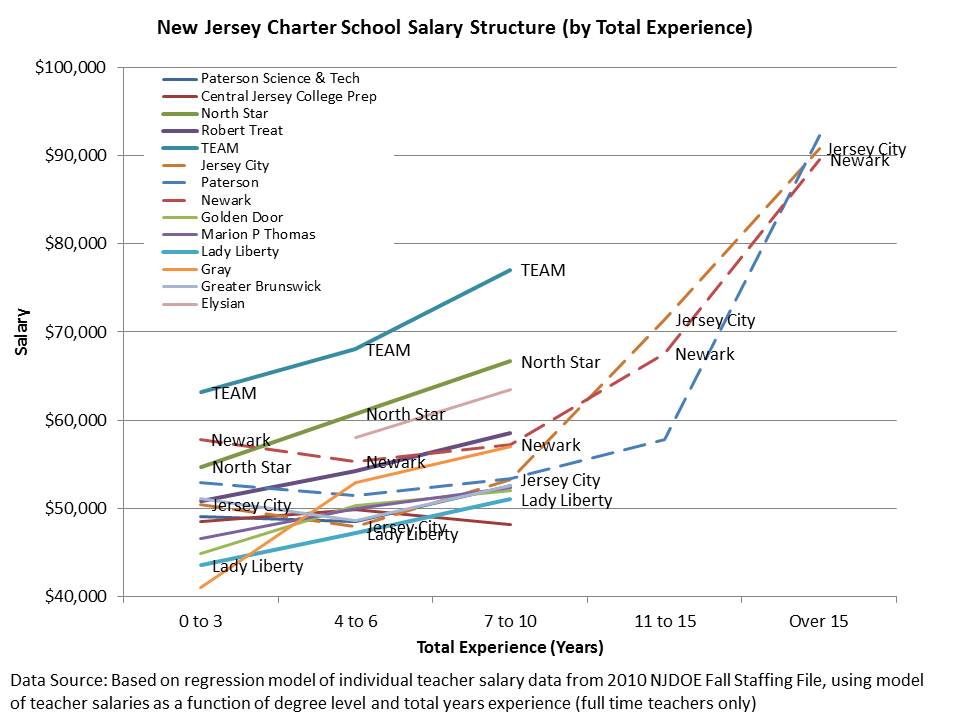

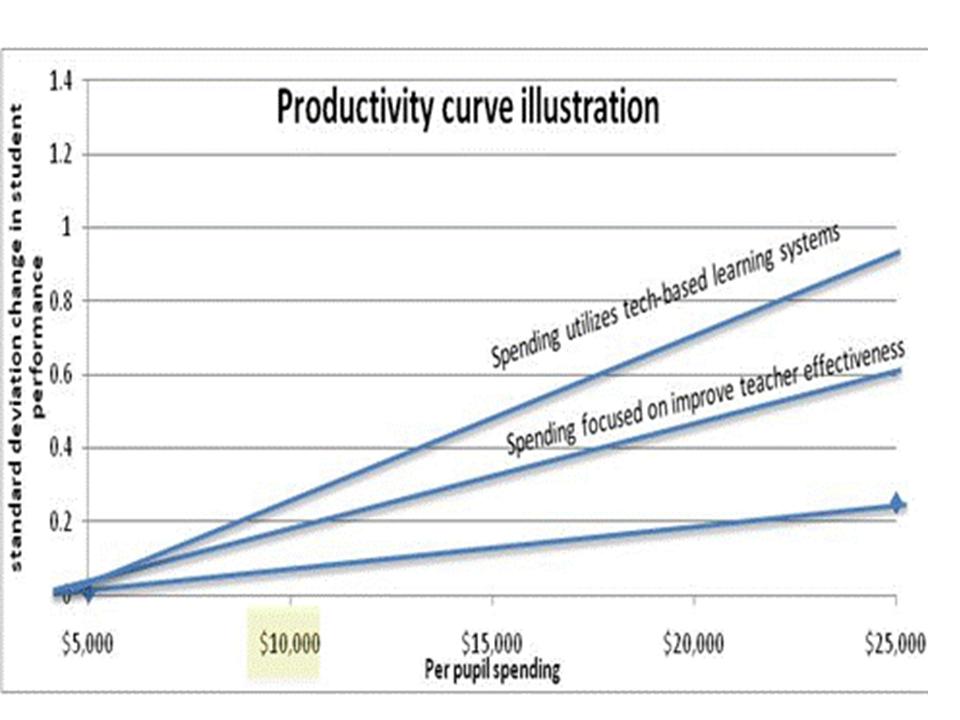

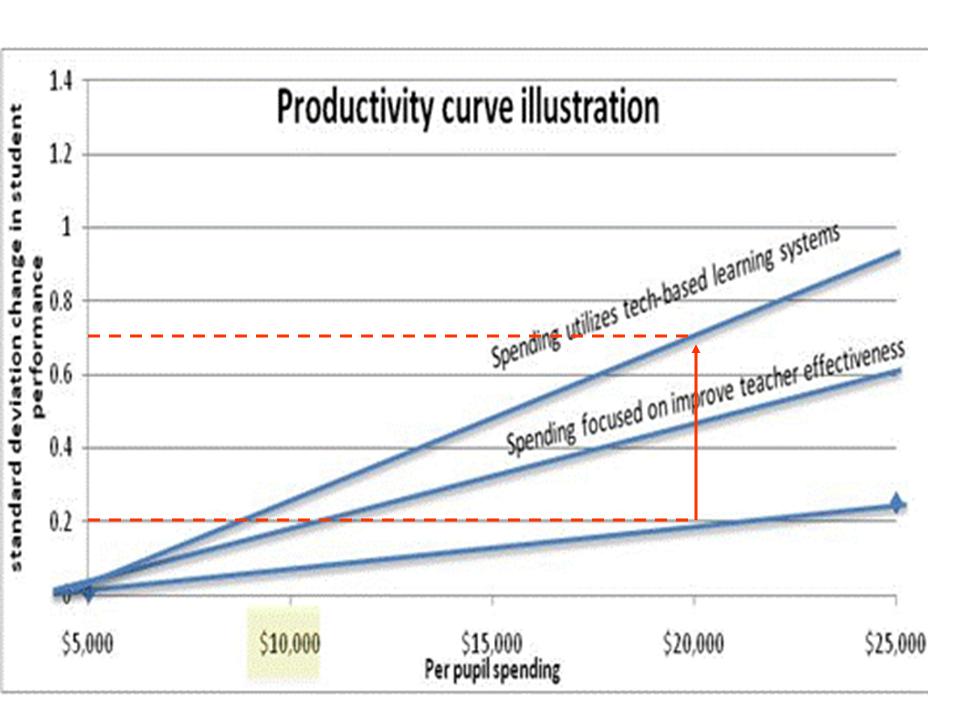

For example, I’ve mentioned these two graphs previously on this blog, which have now been repeatedly trotted out by New York State Education Commissioner John King in presentations to local school officials.

The first graph fabricates an argument that putting more funding into current practices in schools would necessarily be less efficient than putting more funding into either a) alternative compensation schemes which pay teachers based on performance (or at least not on experience and degree level) or b) tech-based solutions. While the latter is never even defined, neither has been shown to produce

Figure 1

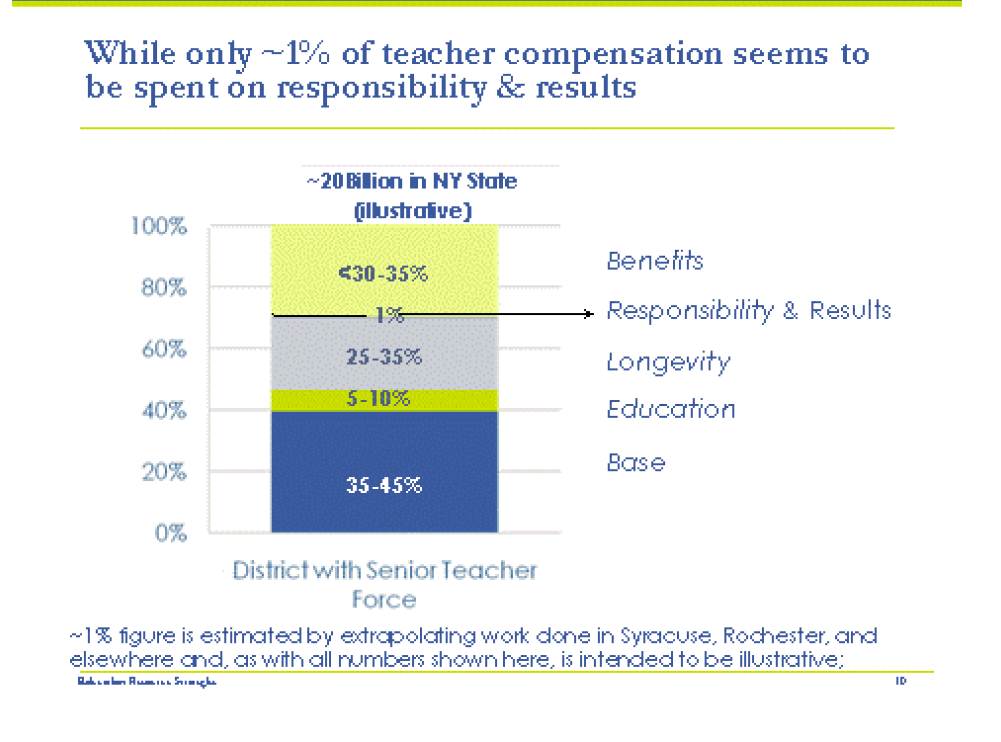

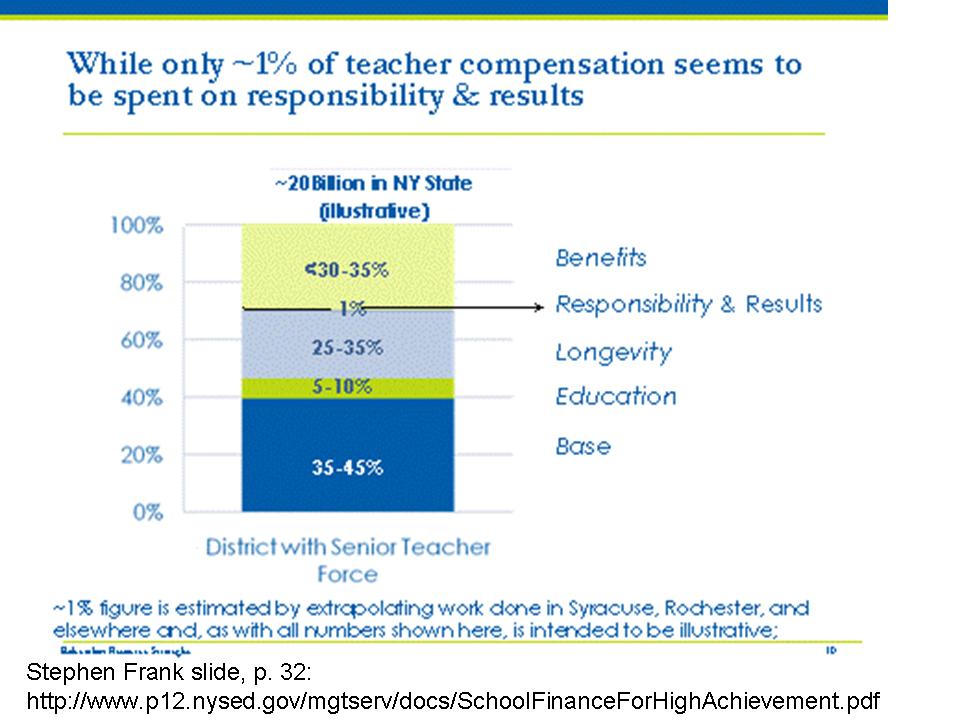

The second graph basically argues that most money currently in schools is simply wasted because it’s allocated to portions of compensation that aren’t directly tied to performance. It is more or less an extension of the first graph, by a different author.

Figure 2

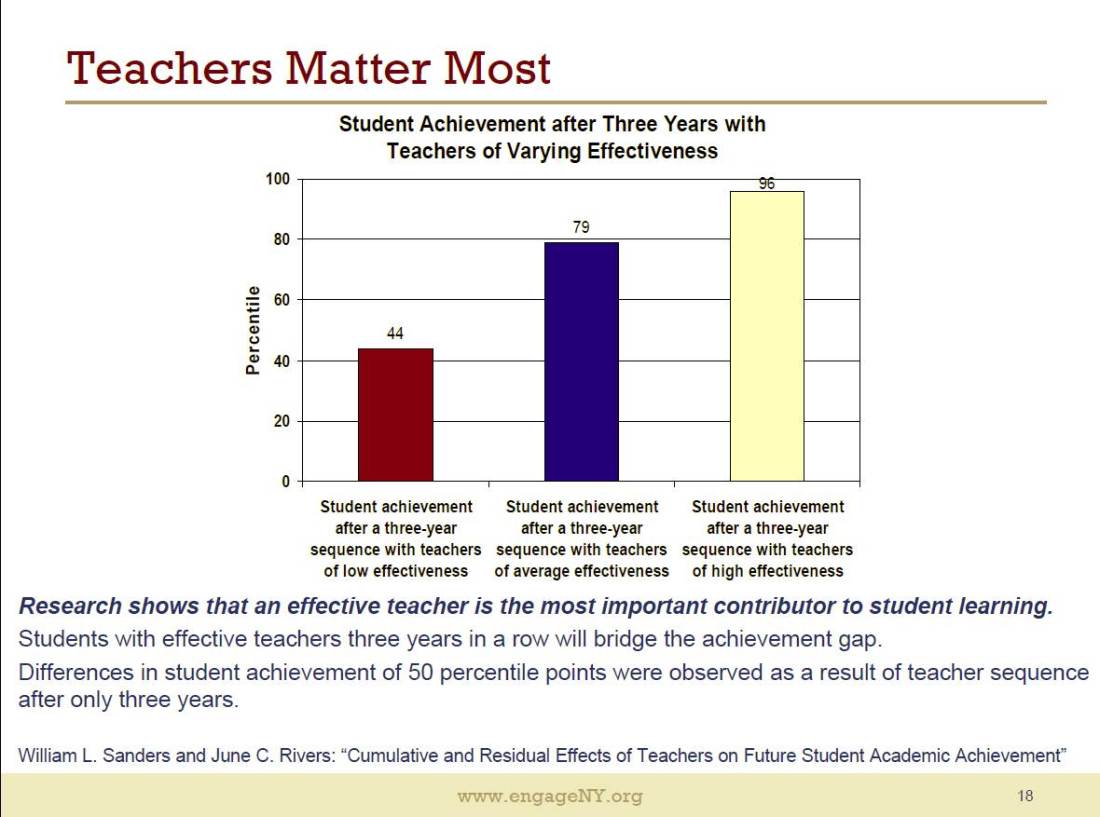

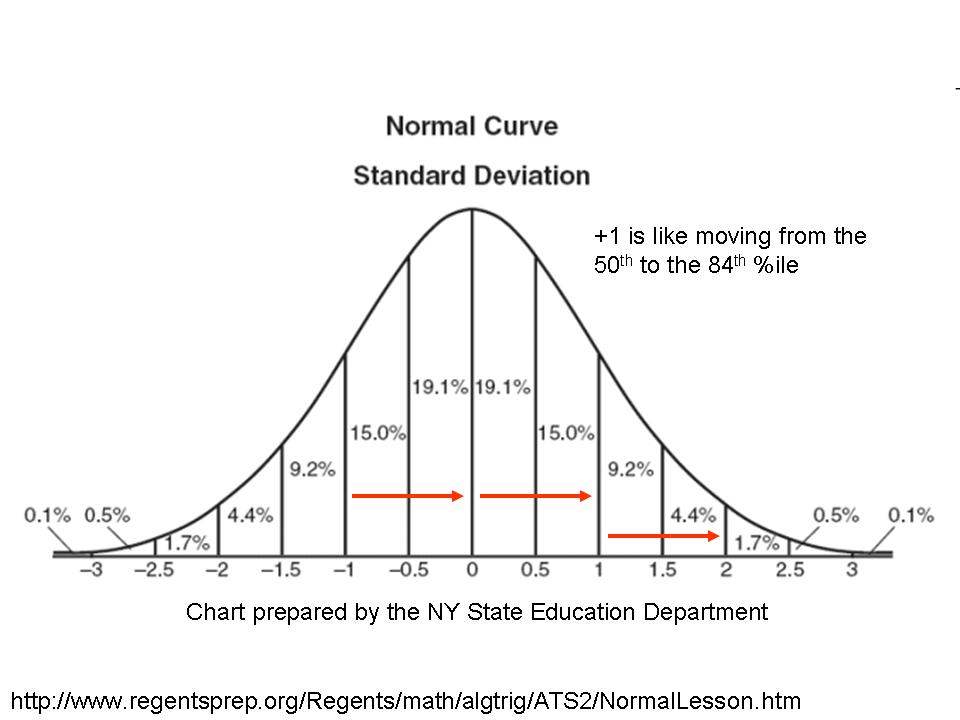

The latest version of the NYSED/King presentation also includes an exaggerated representation of what some refer to as the Three Great Teachers legend: the claim, based on estimates from a study in the 1990s, that having three great teachers in a row can close any and all achievement gaps. This is a seriously misguided overstatement of, and extrapolation from, that one study.

Figure 3

To put it bluntly, these various materials compiled and presented by the New York State Education Department are, well, in most cases, not research at all, and in the one case, a gross misrepresentation of a single piece of research on a topic where there are numerous related sources available.

NY Professionals Respond (albeit not directly to the information above, but concurrent with it)

Thankfully, a very large group of principals on Long Island have been doing their reading and making more legitimate attempts to understand and interpret research as it applies to their practice.

The principals were primarily concerned with the requirement under new state policies that they begin using student assessment data as a substantial component of teacher evaluation. The principals raised their concerns as follows:

Concern #1: Educational research and researchers strongly caution against teacher evaluation approaches like New York State’s APPR Legislation

A few days before the Regents approved the APPR regulations, ten prominent researchers of assessment, teaching and learning wrote an open letter that included some of the following concerns about using student test scores to evaluate educators1:

a) Value-added models (VAM) of teacher effectiveness do not produce stable ratings of teachers. For example, different statistical models (all based on reasonable assumptions) yield different effectiveness scores.2 Researchers have found that how a teacher is rated changes from class to class, from year to year, and even from test to test3.

b) There is no evidence that evaluation systems that incorporate student test scores produce gains in student achievement. In order to determine if there is a relationship, researchers recommend small-scale pilot testing of such systems. Student test scores have not been found to be a strong predictor of the quality of teaching as measured by other instruments or approaches4.

c) The Regents examinations and Grades 3-8 Assessments are designed to evaluate student learning, not teacher effectiveness, nor student learning growth5. Using them to measure the latter is akin to using a meter stick to weigh a person: you might be able to develop a formula that links height and weight, but there will be plenty of error in your calculations.

Citing:

- Baker, E. et al. (2011). Correspondence to the New York State Board of Regents. Retrieved October 16, 2011 from: http://www.washingtonpost.com/blogs/answer-sheet/post/the-letter-from-assessment-experts-the-ny-regentsignored/2011/05/21/AFJHIA9G_blog.html.

- Papay, J. (2011). Different tests, different answers: The stability of teacher value-added estimates across outcome measures. American Educational Research Journal 48 (1) pp 163-193.

- McCaffrey, D. et al. (2004). Evaluating value-added models of teacher accountability. Santa Monica, CA: RAND Corporation.

- See Burris, C. & Welner, K. (2011). Conversations with Arne Duncan: Offering advice on educator evaluations. Phi Delta Kappan 93 (2) pp 38-41.

- New York State Education Department (2011). Guide to the 2011 Grades 3-8 Testing Program in English Language Arts and Mathematics. Retrieved October 18, 2011 from http://www.p12.nysed.gov/apda/ei/ela-mathguide-11.pdf.

- Committee on Incentives and Test-Based Accountability in Education of the National Research Council. (2011). Incentives and Test-Based Accountability in Education. Washington, D.C.: National Academies Press.

- Baker, E. et al. (2010). Problems with the use of test scores to evaluate teachers. Washington, D.C.: Economic Policy Institute. Retrieved October 16, 2011 from: http://epi.3cdn.net/b9667271ee6c154195_t9m6iij8k.pdf; Newton, X. et al. (2010). Value-added modeling of teacher effectiveness: An exploration of stability across models and contexts. Education Policy Analysis Archives. Retrieved October 16, 2011 from http://epaa.asu.edu/ojs/article/view/810/858; Rothstein, J. (2009). Student sorting and bias in value-added estimation: Selection on observables and unobservables. Education Finance and Policy, 4(4), 537–571.

In short, the principals built their case against the punditry that’s been foisted upon them on a reasonable read of existing research. Thankfully, they had the capacity to do so, and the interest in seeking guidance from experts around the country in crafting their response. I urge you to read the remainder of their memo and compare the rigor of evidence behind their arguments to the type of content that has been presented to them in recent months.

New Jersey Punditry

The New York principals’ backlash was relatively high profile. A similar situation occurred last winter/spring in New Jersey, but went largely unnoticed, at least nationally. At that time, a Task Force established by the Governor released its report on how to reform teacher evaluation. The Task Force had been charged with developing an evaluation system based at least 50% on student assessment data. So, of course, they did. The Task Force included an odd array of individuals. It was not, as does occur in some cases, a true “citizen task force” of lay persons providing their lay perspectives. Rather, it was cast as a task force of interested and knowledgeable constituents.

Here is their report: NJ Teacher Effectiveness Task Force

The Task Force does include a bibliography in their report listing a number of potentially useful sources. Whether they actually read any of them or understood any of the content is highly questionable, given the content of the recommendations and the footnotes actually cited to validate them.

And here are the majority of the footnotes (those which actually cite some supposed source of support) from the teacher evaluation section (excluding the principal section) of their report, and the claims those footnotes are intended to support:

NJ Educator Effectiveness Task Force Report

Claim: And when used properly, a strong evaluation system will also help educators become more effective.2

Source: 2 For more on this subject, see the discussion in DC IMPACT: http://dc.gov/DCPS/Learn+About+Schools/School+Leadership/IMPACT+(Performance+Assessment)

Claim: The Task Force recommends that the new system have four summative categories: Highly Effective, Effective, Partially Effective, and Ineffective. The number of rating categories should be large enough to give teachers a clear picture of their performance, but small enough to allow for clear, consistent distinctions between each level and meaningful differentiation of teacher performance3

Source: 3 “Teacher Evaluation 2.0,” p. 7, The New Teacher Project, 2010.

Claim: The state review and approval of measurement tools and their protocols will assure that they are sufficiently rigorous, valid, and reliable while also providing districts flexibility to innovate and develop their own tools.4

Source: 4 The Bill and Melinda Gates Foundation in collaboration with many prominent research organizations are in the process of testing a wide array of measurement tools in the Measuring Effective Teaching project: http://metproject.org/

Claim: Studies have found that the results of student surveys can be tightly correlated with student achievement results. Persuasive evidence can be found in the Gates MET study, which uses a survey instrument called Tripod.5

Source: 5 Learning about Teaching: Initial Findings from the Measures of Effective Teaching Project, Bill and Melinda Gates Foundation, 2009

Claim: Growth scores are a fairer and more accurate means of measuring student performance and teachers’ contributions to student learning. In fact, over half of the states surveyed by the Council of Chief State School Officers (CCSSO)—24 out of 43—reported that they either already do or plan to use student growth in analyzing teacher effectiveness.7

Source: 7 State Growth Models for School Accountability: Progress on Development and Reporting Measures of Student Growth, 2010, by the Council of Chief State School Officers.

In short, most of these claims amount to either a) because The New Teacher Project said so, b) because Washington DC does it in the IMPACT evaluation model or c) because one preliminary-release study from the Gates Foundation included inferences to this effect.

NJ Professional Response

Like those pesky informed Long Island principals, a group of New Jersey educators responded, through an organization spearheaded by a local superintendent who has immersed himself in the relevant research, has maintained constant open communication with economists engaged in teacher evaluation studies and has attended many of their sessions. The New Jersey group also engaged researchers from the region to assist in the development of their report.

Here’s a portion of their report, which was drafted concurrently with the Task Force’s activities (and presented to the Task Force, apparently to no avail):

EQUATE REPORT: NJ EQuATE Report

Once again, the professionals have far outpaced the pundits in their intellectual rigor, use and interpretation of far more legitimate, primarily peer reviewed research.

Summing it all up…

I am so thankful these days that we have in our schools, professionals like these who a) are willing to speak out in the face of pure punditry, and b) are capable of making such a strong and well reasoned case for their own policy proposals or at the very least for why they should not be backed into the ill-conceived, poorly grounded policy proposals of their governing bodies.

I expect that many “reformy” types and the politicos they support are thinking that these necessarily dumb, highly paid bureaucrat local public school administrators should just sit down and shut up (as in this case) and adopt the policies that they are being told to adopt by those (often highly educated pundits) who simply know better. How pundits “know better” stumps me, because the quality of evidence behind their all-knowingness is persistently weak.

I might be more inclined to accept an argument for state policy preferences and technical capacity over local resistance if the contrast in the quality of information being presented by the pundits and the professionals weren’t so damn stark.

Regardless of political disposition (which is obviously an impossible hypothetical to achieve), if each of these sources was handed to me as a paper to grade in a graduate class (even in a school of education), differentiating among them would be quite easy.

The NYSED materials include completely fabricated information, ill-defined concepts, little basis in peer reviewed (or any “real”) research, and such utterly silly things as claiming that we can quadruple outcomes by moving to some undefined strategy. Yes, this stuff was presented to them by experts they hired. But rather than even attempt to think critically about any of it (and realize it was junk) they simply copied and pasted it into their report and took it on the road. This work fails on any level.

The NJ Task Force report argues that NJ should adopt a multi-category effectiveness classification system (without any understanding of the information lost in aggregation or the problems of aggregating around uncertain cut points) merely because TNTP said so, suggests that use of growth measures is “fair” by citation to a Council of Chief State School Officers report, and bases much of the rest of its recommendations on “what Washington DC did.” Yeah, I’ve read student papers like this. They fail too! Most of my students know full well not to hand me this kind of crap, even if they believe I’m sympathetic to their ultimate conclusion.
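For readers who want to see what “aggregating around uncertain cut points” means in practice, here is a minimal sketch, not anything taken from the Task Force report or from any actual rating system. It assumes each teacher has some “true” score, that the observed score is that true score plus random noise, and that three made-up cut points sort teachers into the four categories the Task Force recommends. The cut points, the noise levels and the function names are all hypothetical, chosen only for illustration.

```python
import random
from bisect import bisect_right

# The four summative categories named in the Task Force recommendation.
CATEGORIES = ["Ineffective", "Partially Effective", "Effective", "Highly Effective"]

# Hypothetical cut points (an assumption for illustration only),
# roughly the quartiles of a standard normal distribution.
CUT_POINTS = [-0.67, 0.0, 0.67]


def categorize(score, cut_points=CUT_POINTS):
    """Map a continuous score to one of the four category labels."""
    return CATEGORIES[bisect_right(cut_points, score)]


def reclassification_rate(noise_sd, n_teachers=1000, seed=0):
    """Share of simulated teachers whose category based on the noisy
    observed score differs from the category based on the true score."""
    rng = random.Random(seed)
    changed = 0
    for _ in range(n_teachers):
        true_score = rng.gauss(0.0, 1.0)                    # assumed "true" effectiveness
        observed = true_score + rng.gauss(0.0, noise_sd)    # assumed measurement noise
        if categorize(true_score) != categorize(observed):
            changed += 1
    return changed / n_teachers


# Compare a noise-free rating with increasingly noisy ones.
for sd in (0.0, 0.25, 0.5, 1.0):
    print(f"noise sd = {sd}: share placed in the wrong category = "
          f"{reclassification_rate(sd):.3f}")
```

The point of the sketch is only that once a continuous and noisy estimate is chopped into a handful of labels, teachers sitting near a cut point can land on either side of it by chance, and the label hides how close the call was. How large that problem is in any real system depends on the actual noise and the actual cut points, neither of which this toy simulation knows.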

But the memo prepared by the NY principals and the report by the NJ professionals are pretty darn good when viewed as a paper I might have to grade. They use real research, and for the most part, use it responsibly. Their recommendations and criticisms are generally well thought out. For that I applaud them.

That said, it is certainly discomforting that local practitioners have had to counter the pure punditry of the very agencies which arguably should be attempting to provide legitimate, well grounded technical support.