This note is in response to James O’Keefe’s attempt to discredit me on his Project Veritas web site (though I think his point was intended to be larger than this). I was lucky (?) enough to be part of one of his investigative setups earlier this fall. I wrote and held on to this post and all related e-mails.

His scheme was uncovered in this Huffington Post piece to which he refers in his most recent report:

http://www.huffingtonpost.com/mobileweb/2011/10/17/james-okeefe-economic-policy-institute_n_1015845.html

The story:

Back in September, I was contacted by this fictional Peter Harmon who characterized himself as working for the Ohio Education Association, but never made it absolutely clear that he was working for the state teachers’ union of Ohio. In my case, unlike the EPI case, Harmon didn’t (I don’t recall) indicate being a hedge fund guy or being backed by one, but rather that he had “funders.” He dropped me a phone message and an email which were pretty innocuous, so I agreed to talk by phone. That’s where I pick up in this string of e-mails:

===================================

EMAIL #2 – PHONE CALL SET UP

From: peter.harmon@ohioedassoc.org

Sent: Monday, September 19, 2011 10:14 PM

To: bruce.baker@gse.rutgers.edu; gse.rutgers.edu/bruce_baker@ohioedassoc.org

Subject: Meeting

Dr. Baker,

Thank you for getting back to me. We are eager to talk with you about this project. Would 3pm tomorrow work alright for you?

Sincerely,

Peter Harmon

614-468-3941

===================================

Then there was the strange phone call (which I’m quite sure in retrospect was recorded) where first, “Peter Harmon” wanted me to do a study showing that the collective bargaining legislation in Ohio would hurt children, to which I suggested that a) evaluating collective bargaining legislation is outside the realm of my expertise and b) that even if I agreed that it might, I’d have no clear, defensible way to analyze and argue that point.

From there I suggested things that I can and often do analyze and argue, in each case pointing out that the ability to make such an argument is contingent upon data to support that argument. For example, evaluating the competitiveness of teacher wages over time, or evaluating the distribution of state aid cuts. These are two issues on which I have already actually evaluated Ohio data. I pointed out that there are 3 basic types of products we might be talking about – a) critiques of policy reports or arguments by others (for a few thousand dollars), b) policy briefs/research brief reports (typically about ten thousand dollars) or c) full-scale research reports (thirty to fifty thousand dollars, with clarification that projects of this magnitude would have to go through RU and/or be done over the summer). I attempted repeatedly to shift his focus to answerable questions and topics within my expertise, and to topics or issues where I felt I could be helpful to him, on the assumption that he was advocating for the state teachers’ union.

It got strange when Peter Harmon laid down his requirement that if they were going to fund a study, they didn’t want it coming out finding the opposite of what they wanted. I did explain that if he had a topic he was interested in, that I would be willing to explore the data to see if the data actually support his position on the issue and that I would do so before agreeing to write a report for him. The phone call ended with no clear agreement on anything, including no agreement on even what the topic of interest was. In fact, my main point was repeatedly that he needed to figure out what the heck he even wanted to study, though I tried to keep it friendly and supportive. No reason to argue on a first phone call.

It was a strange and disturbing conversation, but I played along until I could get off the phone with the guy. Note that the playing along in a conversation like this also involves trying to figure out what the heck is up with the caller – whether he/she has a particular axe to grind – or other issues that would make any working relationship, well, not work out.

Sadly, as twisted as this phone call was, I’ve had similarly twisted conversations with real representatives of legitimate organizations. However, with most legitimate organizations, you can later identify a less sleazy contact person. My approach has generally been to humor them while on the phone… perhaps probe to see how twisted they really are… and when the phone conversation ends… let it pass. Move on.

Then came the follow up:

===================================

EMAIL #3 – HARMON FOLLOW-UP

From: peter.harmon@ohioedassoc.org [mailto:peter.harmon@ohioedassoc.org]

Sent: Friday, September 23, 2011 10:01 AM

To: bruce.baker@gse.rutgers.edu; gse.rutgers.edu/bruce_baker@ohioedassoc.org

Subject: Next Meeting

Dr. Baker,

I have good news, my colleagues are very interested in moving forward.

We are confident we can cover the expense of this potential study.

We have a few ideas we would like to run by you for this project.

When would be a good time to call you next?

Regards,

Peter Harmon

614-468-3941

===================================

So now, Harmon is basically suggesting that he can generate the $30 to $50k figure which I had given him for a bigger study, a figure I had basically given him to encourage him to think about doing something else – like contracting a few short policy briefs or critiques. But he still has no idea what he supposedly wants me to write about. Quite honestly, that’s really strange. So my response is simple – it’s essentially “get your act together and don’t bother me again until you do.” In other words, here are a few examples of the work I do and am proud of. Figure out your damn question and let me know when you do.

===================================

EMAIL #4 – BAKER REPLY

From: Bruce Baker [bruce.baker@gse.rutgers.edu]

Sent: Friday, September 23, 2011 10:06 AM

To: ‘peter.harmon@ohioedassoc.org’

Subject: RE: Next Meeting

Rather busy for next week or so. Would prefer if you could at least send an outline of potential topics & research questions of interest, so I can mull them over.

For examples of reviews/critiques of policy reports, see:

http://nepc.colorado.edu/thinktank/review-middle-class

http://nepc.colorado.edu/thinktank/review-spend-smart

For an example of a policy brief/research report, see:

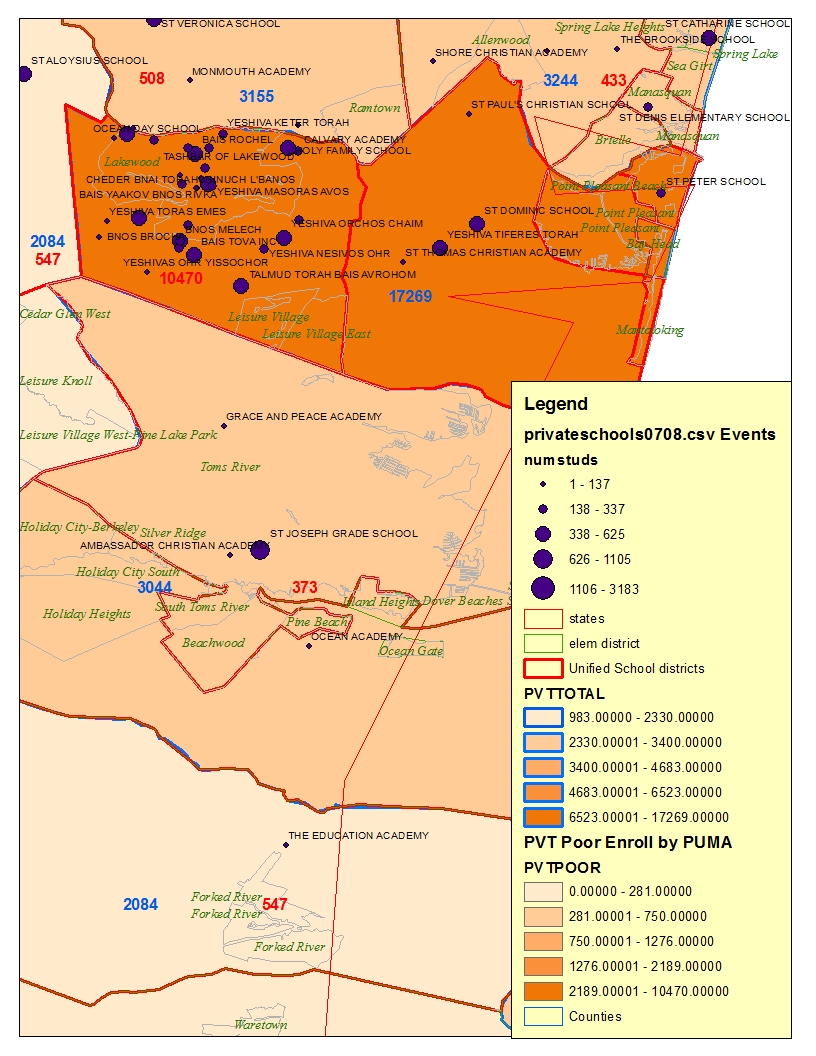

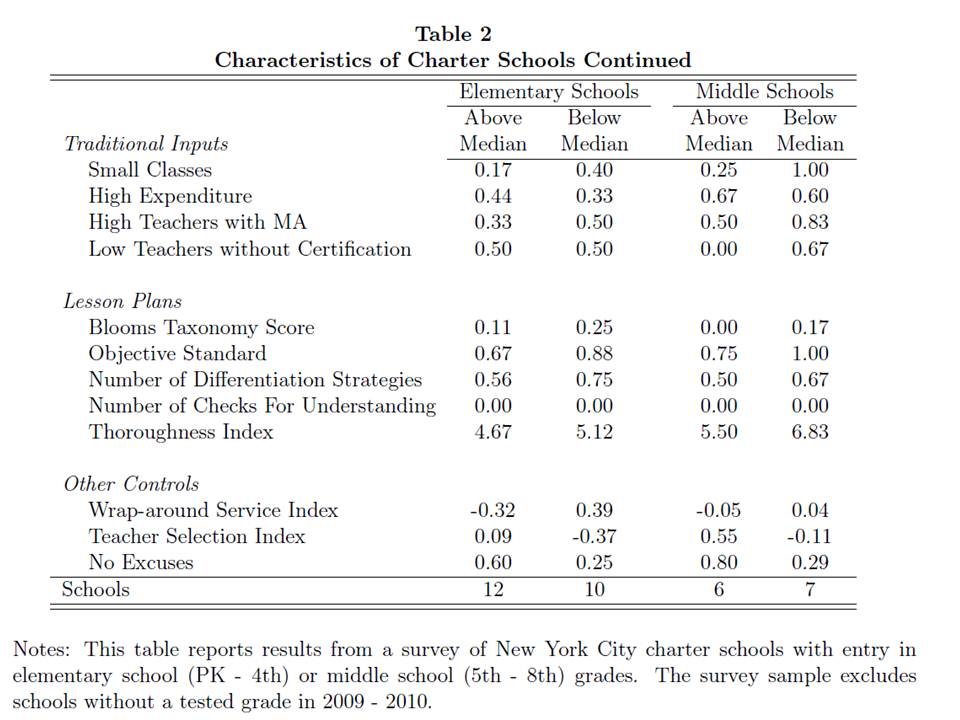

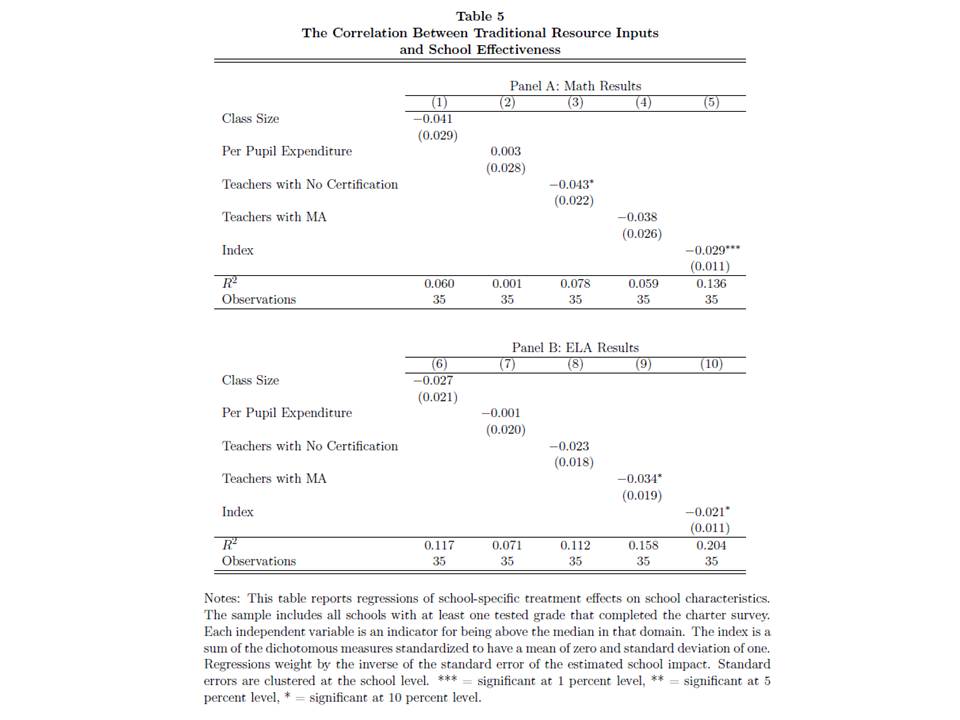

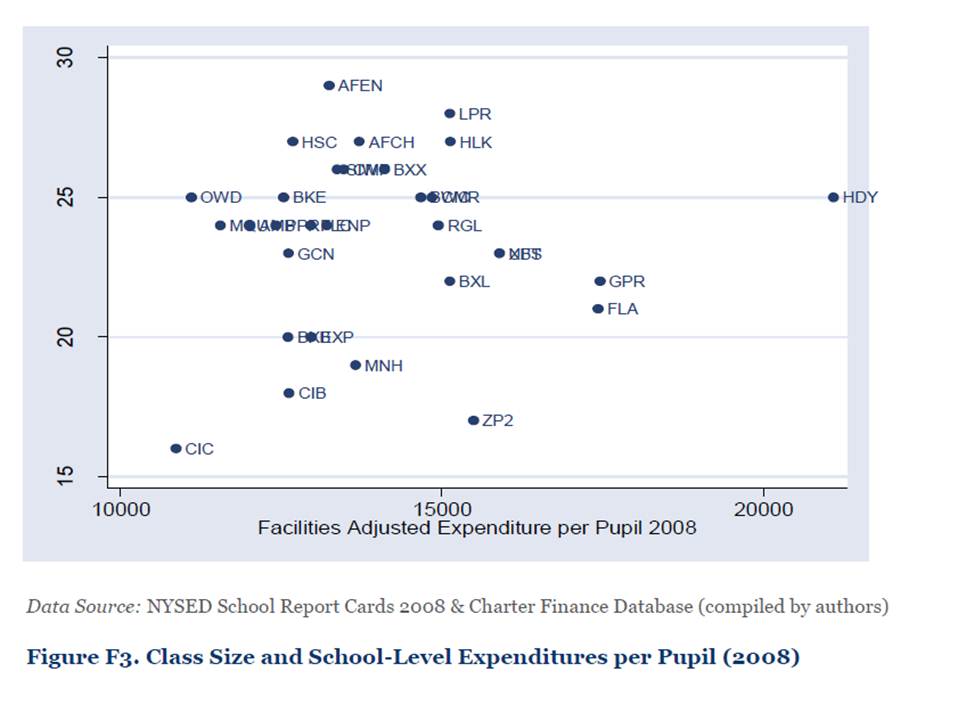

http://nepc.colorado.edu/publication/NYC-charter-disparities

http://nepc.colorado.edu/publication/private-schooling-US

Thanks.

Bruce Baker

===================================

Here’s Harmon’s attempt at figuring out his question:

===================================

EMAIL #5 – HARMON REPLY

Dr. Baker,

Thanks for getting back to us.

Once of the topics we want to pursue is research regarding spending.

Specifically and increase in spending having a good effect on children. If you need to limit the scope of your research to a specific county, district or other local geographic area. that’s OK.

I will take a closer look at the examples you sent on your last email to get a better idea of what you would like from our end. But,I hope this more specific goal better illustrates what we are looking for.

Let me know when would be good time to call, so I can clarify whatever questions you have about this.

Peter Harmon

614-468-3941

===================================

So, Peter Harmon wants me to explain, or more strangely to show that increasing spending is good for children. Okay. Anyone even modestly informed would know that’s an odd way to frame the question or issue. But clearly, given my body of work, I have argued on many occasions in writing and in court that having more funding available to schools can improve school quality, which is something I would certainly argue is good for children. Would I somehow use data on a specific district or county to do this? No…. uh… not sure? I’d probably start with an extensive review of what we already know from existing research on money and school quality.

At this point, I’m ready to drop the whole discussion, but then I receive an e-mail notice of a new Economic Policy Institute paper on public employee wages in Ohio. So, to save Mr. Harmon the money he would pay for a new study on this topic, I a) send him a link to that study, and b) explain that I’m already working on a paper related to his issues of concern.

===================================

EMAIL #6 – BAKER REPLY

From: Bruce Baker [bruce.baker@gse.rutgers.edu]

Sent: Thursday, October 06, 2011 10:44 AM

To: ‘peter.harmon@ohioedassoc.org’

Subject: FYI

From one of my Rutgers colleagues:

Briefing_Paper_329.pdf

Working on some related projects myself, which may be of use to you in near future. Will be back in touch as schedule frees up.

Bruce

===================================

And so it ended. And as I suspected by this point, it appears that this whole thing was a sham… and an attempt at a sting. Interestingly, this appears to be when Harmon moved on to go after EPI.

Quite honestly, O’Keefe’s concept for the investigation isn’t entirely unreasonable except that he and his colleagues didn’t seem to fully understand the fundamental difference between research projects per se, and policy analyses – between writing summaries and opinions based on data that already exist and research that’s already been done – versus exploring uncharted territory – where the data do not yet exist and where the answers cannot yet be known.

At this point, I think a few clarifications are in order about doing policy research, or more specifically writing policy briefs in a highly political context.

First, why would I ever vet the data on an issue before signing on to do work for someone? Well, this is actually common, or should be in certain cases. For example, let’s say the funder wants me to show that “teachers in Ohio are underpaid.” I don’t know that to be true. I’m not going to take his money to study an issue where he has a foregone conclusion and a political interest in that conclusion but where the data simply don’t support that conclusion. It is relatively straightforward for me to check to see if the data support the conclusion before I agree to write anything about it. This is an easy one to check. There is a standard set of databases to use, including statewide personnel data, census data and Bureau of Labor Statistics data, and there are standard, credible methods for comparing teacher wages. If the argument holds up under the most conservative analysis (the one most deferential to the “other side” of the argument), then it’s worth discussing how to present it or whether to move forward.

A different type of example which I’ve learned by experience is that it’s always worth taking a look at the data before engaging as an expert witness on a school funding related case. I often get asked to serve as an expert witness to testify about inequities or inadequacies of funding under state school finance systems. Sometimes, attorneys have already decided what their argument is based only on the complaints of their clients. It would be utterly foolish of me to sign on to represent those clients and accept payment from them without first checking the data to see if they actually have a case.

Then there’s the issue of doing work for partisan clients to begin with. That’s a different question than doing work for sleazy clients. But sometimes, if it’s a legitimate organization, there may be a sleazy contact person, but further checking reveals that the organization as a whole is credible – and not sleazy. But back to the point…

Quite honestly, the toughest kind of policy analysis to do is for partisan clients – clients with an axe to grind or a strong interest in viewing an issue in one particular way. That is usually the case in litigation and increasingly the case when it comes to writing policy briefs on contentious topics. What this means is that the analyses have to be “bullet-proof.” There are a few key elements to making an analysis “bullet-proof.”

First, the analysis must be conservative in its estimates and one must avoid at all costs overstating any claims favored by the client. In fact, the analysis needs to be deferential, perhaps even excessively, to the opposing view.

Second, the analysis must use standard, credible methods that are well known, well understood and well documented by others. Examples in my field would include comparable wage analysis, or wage models which typically include a clearly defined set of variables.

Third, the analysis must rely on publicly accessible data, with preference for “official” data sources, such as state and federal government agencies. This is because the analyses should be easy for any reader to replicate by reading through my methods and downloading or requesting the data.

So here are my final thoughts on this issue…

If this kind of stuff causes anyone to place greater scrutiny on my work or that of any others writing policy briefs on contentious topics, that’s fine. It’s not only fine, but desirable. I am fully confident that my work stands on its own. Unlike some, I don’t simply take a large commission to offer my opinion without ever having looked at any data. For example, Eric Hanushek of Stanford University took $50,000 from the State of Colorado to testify that more money wouldn’t help kids and that Colorado’s school funding system is just fine, without ever having looked at any data on Colorado’s school funding system. See:

http://www.edlawcenter.org/news/archives/school-funding/what-hanushek-shows-up-again.html?searched=hanushek&advsearch=oneword&highlight=ajaxSearch_highlight+ajaxSearch_highlight1

By contrast, I did indeed accept a payment of $15,000 for writing a nearly 100 page report filled with data and detailed analyses of Colorado’s school funding system raising serious questions about the equity and adequacy of that system (available on request). In fact, I had already come to the conclusions about the problems with Colorado’s school funding system long before I was engaged by the attorneys for the plaintiff districts (as one will find in many of my blog posts referring to Colorado).

My rule #1 is always to check the data first and to base my opinions on the data. So I welcome the scrutiny on my work and I especially welcome it directly. If you have a criticism of my work, write to me. The more scrutiny on my work the better.

=========

Note #1: for an example of the types of policy briefs and/or analyses to which I am referring here, see: NY Aid Policy Brief_Fall2011_DRAFT6

In my view, this is a solid, rigorous and very defensible analysis. It is a policy brief. It uses numerous sources of publicly available data. And, it was written on behalf of an organization which has self-interested concerns with the NY school finance formula.

Note #2: Indeed there were some poor word choices on my part in the phone conversation. “Play with data” is how I tend to refer to digging in and vetting the data to see what’s there. This blog is dedicated to what I would refer to as playing with data. Looking stuff up. Downloading large data files (IPUMS, NCES). Running statistical models. My friends and colleagues, as well as my students know full well that I take great joy in working with data and that I consider it play. But I’ll admit that it sure doesn’t sound too good when taken out of that context.

Note #3: A few people have asked about the portion of the conversation where I suggest that if I find results that do not support the funders’ views, I will not charge them for the work. Some have suggested that this is an example of burying an undesirable result, which would in my view be unethical. So, what’s the point of not charging them? Actually, it’s so that the result won’t get buried. If I do a bunch of preliminary data analyses only to find that the data do not support a funder’s claims/preferences, I’d rather not write up the report for the funder and charge him/her, because they then own the report and its findings, and have the control to bury it if they so choose. Now, I typically don’t permit gag-order type clauses in my consulting contracts anyway, but, it’s much easier just to avoid the eventual pissing match over the findings and any pressure to recast them, which I will not do. If I keep the results of my preliminary work for myself, then I have complete latitude to do with them as I see fit, regardless of the funder’s preferences. It’s my out clause. My freedom to convey the findings of any/all work I do.

I’ve come to this approach having had my results buried in the past on at least two occasions, one in particular where the funder clearly did not want the results published under their name due in part to pending litigation in which they were a defendant. Much to my dismay, the project coordinators (the agency that subcontracted me) capitulated to the funder. I was, and remain to this day, deeply offended by the project coordinators’ choice, under pressure from the funder, to edit the report and exclude vital content. Yeah… I got paid for the work. But the work got buried, even though the work was highly relevant. I’m unwilling to go down that road again.