I had the displeasure of waking up to this drivel in my in-box this morning:

“Those who can, do. Those who can’t, teach. And those who can’t teach, teach teaching.”

http://www.nytimes.com/2013/10/21/opinion/keller-an-industry-of-mediocrity.html?_r=0

yeah… and those completely lacking in critical thinking, basic research and data interpretation skills write op-eds for the Times.

I don’t really teach teachers myself, so I guess I shouldn’t take offense. But I do, mainly because the core argument advanced here is so ill-informed and poorly conceived.

Allow me to start by pointing out that I have actually published detailed, quantitative research in peer-reviewed journals on the very topic of who’s teaching the teachers. In fact, the article we wrote was done partly in response to the Arthur Levine report cited in the Times op-ed piece. And it’s not as if the article title really conceals its contents:

- Wolf‐Wendel, L., Baker, B. D., Twombly, S., Tollefson, N., & Mahlios, M. (2006). Who’s teaching the teachers? Evidence from the National Survey of Postsecondary Faculty and the Survey of Earned Doctorates. American Journal of Education, 112(2), 273-300.

My apologies for the fact that this article is paywalled. I really don’t expect all of my blog readers to go through the trouble of paying for it or finding an academic library that carries it. But any responsible journalist, pundit or author proclaiming a strong policy position on this issue ought to at least do some reading on the topic first. The above article is certainly not uncritical of teacher preparation. [UPDATE: Full version here, courtesy of the kind folks at AJE Baker2006]

And the issues of complexity and variation in teacher preparation I explore in the above research article are not the only massive omission or conflation put forth in the New York Times piece, which operates on the overly crude assumption of a uniform system of content-free instruction across any and all ed schools.

Let’s tackle the bigger and much simpler issue here – the broad notion advanced in this op-ed that Ed Schools are the problem! Ed Schools are the primary threat to the quality of our public schooling system as a whole and by extension Ed Schools are a threat to our national security. [yeah… he didn’t really say that… but somehow it often goes there] And further, that if we can just replace ed schools – with some other unknown thing – we’ll all be better off.

A kinder, gentler variant on this argument is that it’s just the bad ed schools that are a threat and that we can weed out those bad ed schools by looking at how the students of their graduates perform. I’ve addressed this issue in a few previous blog posts. First, I’ve addressed the question of whether “ed school” is really some static, monolithic entity. Second, I’ve addressed the feasibility of rating ed schools by twice removed outcome measures.

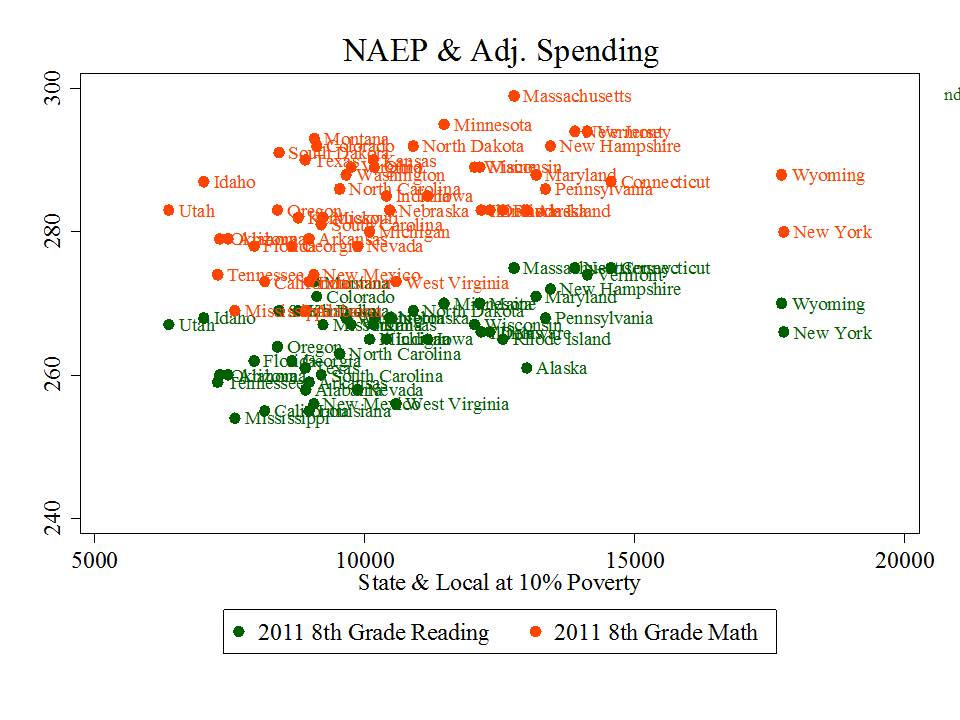

But there’s actually a simpler logical fallacy at play here which lies at the root of many reformy arguments regarding causes and consequences – failure to acknowledge that the U.S. has a wide range of elementary and secondary schools that are both high performing and low performing and that the defining features differentiating higher and lower performing schools are not found primarily in their teachers or the preparation programs they attended – or whether they attended any at all – but rather in the communities they serve, the resources available to them and the backgrounds, health and economic well-being of the children and families they serve.

This is not about the poverty as excuse argument. This is about the simple point that our highest performing public schools also employ teachers from traditional public college and university preparation programs and in many cases, teachers from the same – or substantively overlapping – college and university preparation programs as teachers in our lowest performing schools in the same region.

If that’s the case, then how is it possible that teacher preparation programs are the problem?

I know… the good reformer at this point is thinking – but there are no good U.S. public schools or districts. They all suck and that’s precisely why teacher preparation is the problem. Of course, if that was the case – that all K-12 public schools suck – it would be hard, by research design – with a dependent variable that doesn’t vary – to attribute that sucky-ness to a single cause. But the dependent variable does vary… even when we rely on reformy resources like the Global Report Card I wrote about here.

First, here’s a location where you, yourself can actually download the reformy report card, which in large part was designed to shake the confidence of America’s suburban parents by taking a few statistical leaps to show them their leafy suburban schools wouldn’t stack up so well if we transported them to Finland or Singapore.

http://globalreportcard.org/docs/Global-Report-Card-Data-11.14.12.xlsx

I’ll save that argument for another day, and just select two sets of districts from this report card, from Illinois and Kansas, because I have the data readily available. Let’s look at local public school districts that are

1) Better than the Average Finn and those that are…

2) Worse than 80% of beer-swillin’ Hockey Lovin’ Canadians.

That’s quite a contrast (even though both are high performing countries – on average – setting aside demographics, etc.).
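For anyone who wants to try this sort at home, here is a minimal sketch of the two cut points. The field names (`pct_vs_finland`, `pct_vs_canada`) and the three rows are placeholders I made up for illustration, not the actual column names or values in the Global Report Card spreadsheet, and I am assuming the report card expresses each district as a percentile relative to each country, with the 50th percentile standing in for "average."

```python
# Placeholder rows: field names and numbers are hypothetical, chosen only
# to illustrate the two thresholds. They are NOT real report card data.
districts = [
    {"district": "District A", "pct_vs_finland": 62, "pct_vs_canada": 58},
    {"district": "District B", "pct_vs_finland": 21, "pct_vs_canada": 17},
    {"district": "District C", "pct_vs_finland": 45, "pct_vs_canada": 40},
]


def classify(row):
    """Label a district using the two cut points described in the post."""
    # Above the 50th percentile relative to Finland (assumed to mean "average").
    if row["pct_vs_finland"] > 50:
        return "better than the average Finn"
    # Below the 20th percentile relative to Canada.
    if row["pct_vs_canada"] < 20:
        return "worse than 80% of Canadians"
    return "in between"


labels = {row["district"]: classify(row) for row in districts}

for name, label in labels.items():
    print(f"{name}: {label}")
```

To run it on the real file you would have to swap in whatever the spreadsheet actually calls its columns.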

Here are the lists:

So, we’ve got some school districts in each state that are better than the average Finnish school and some that get trampled by those syrup swillin’ hosers from the Great White North.

The only plausible explanation is that the teachers in the Better than Finland category are either from completely non-traditional ed schools or not ed schools at all while the teachers in the not-so-great schools all come from your typical state ed school.

Certainly, we know from large bodies of teacher labor market research that graduates of various preparation programs, colleges and universities and alternative route programs more broadly, sort themselves on the labor market, with those who possess stronger academic credentials often sorting into the “more desirable” jobs.

But that’s somewhat of an aside here. For the basic reformy premise of massive uniform ed school failure to be true – we would have to see little or no commonality in the ed school preparation of teachers across these settings – across totally awesome U.S. schools and totally sucky ones.

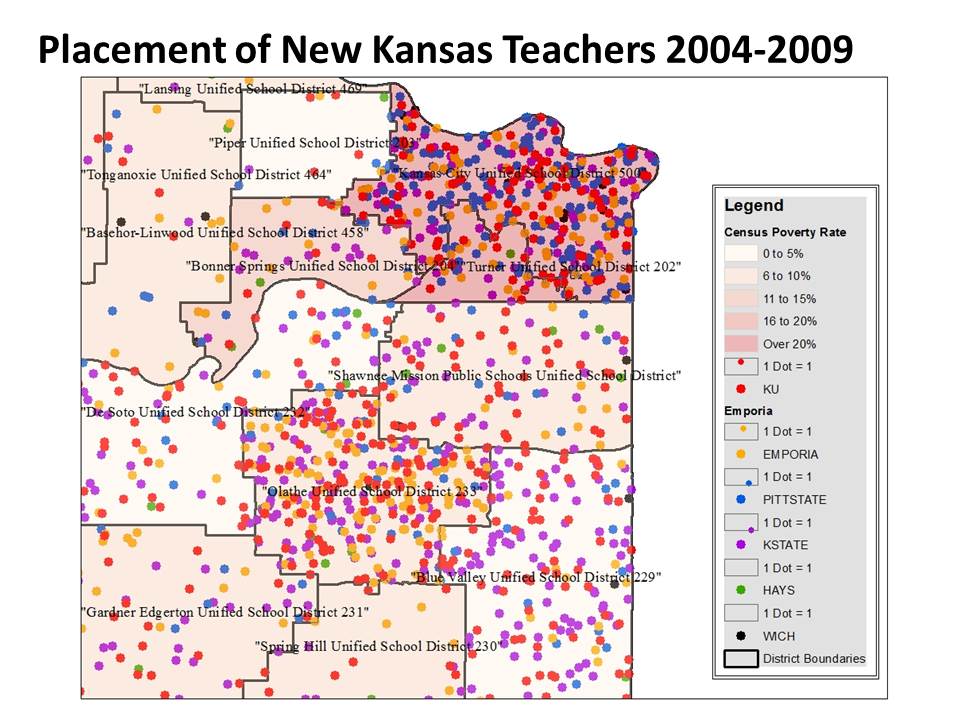

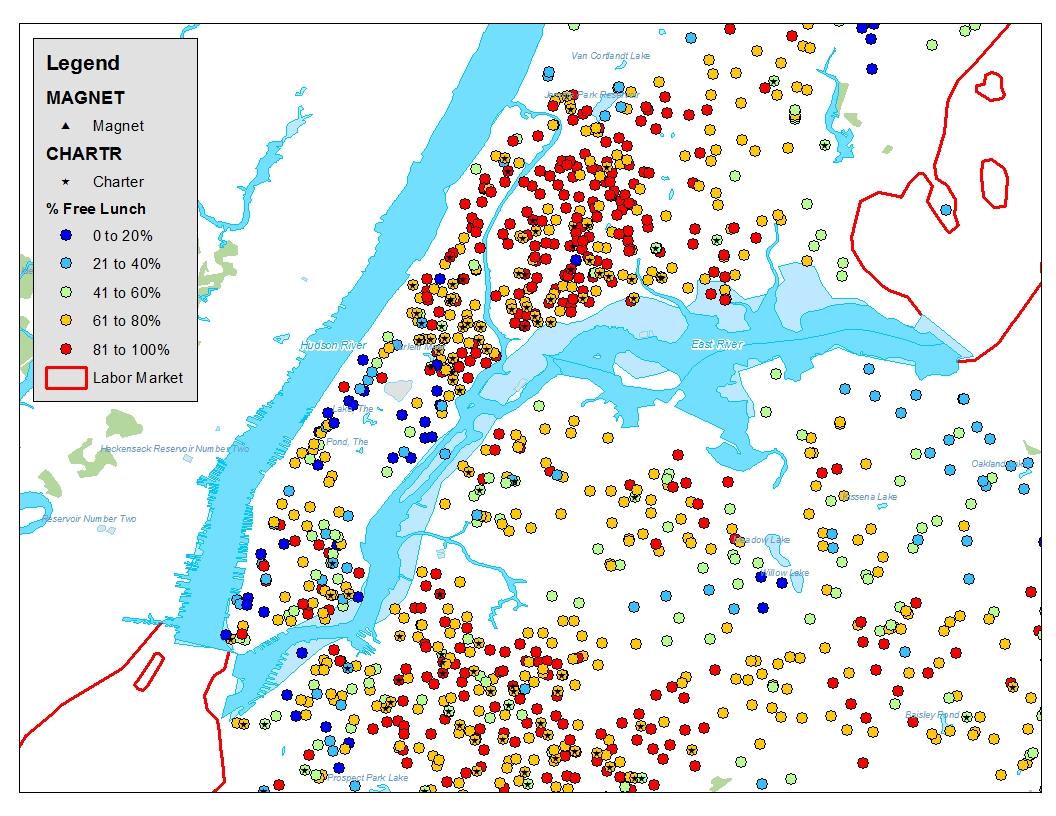

So, here’s the recent distribution of graduates of Kansas teacher preparation programs in the Kansas City metropolitan area, which includes the Blue Valley School District – better than the average Finn – and Kansas City, Kansas, which, well, gets its butt kicked by Canada!

Hmmm… you can’t possibly be telling me that both KCK and BVSD have teachers who graduated from the major state teacher preparation colleges, can you? If that’s the case, then their relative international rankings might not be determined by teacher preparation?

[ignore the poverty shading in the background…’cuz payin attention to poverty… well… just isn’t cool with the reformy crowd!]

There are some notable features to this map. One is that BVSD and Olathe to its west were still significantly growing districts during this period. So it makes sense that they hired a lot of new teachers during that time. It makes less sense that KCK, more stagnant (and declining) in population, hired so many new teachers – but for the relatively high turnover rate more common in such high poverty settings! There are also some distributional differences in the dots – which universities produce more teachers for which districts (or provide more credentials). Pittsburg State (blue dots), more prevalent in KCK, provides a local program that feeds to KCK. I’d be hard pressed, however, to lay blame on Pitt State for KCK’s Canadian butt-whoopin’ and I’d be equally hard pressed to credit K-State in producing more teachers for Blue Valley as the cause of Blue Valley’s competitive match up with Finland! The fact is that all of these Kansas districts draw heavily on teachers produced by the public teachers colleges of that state – and some do as well as Finland while others struggle.

As such, it’s pretty darn hard to lay blame on traditional teacher preparation in Kansas for these differences in outcomes.

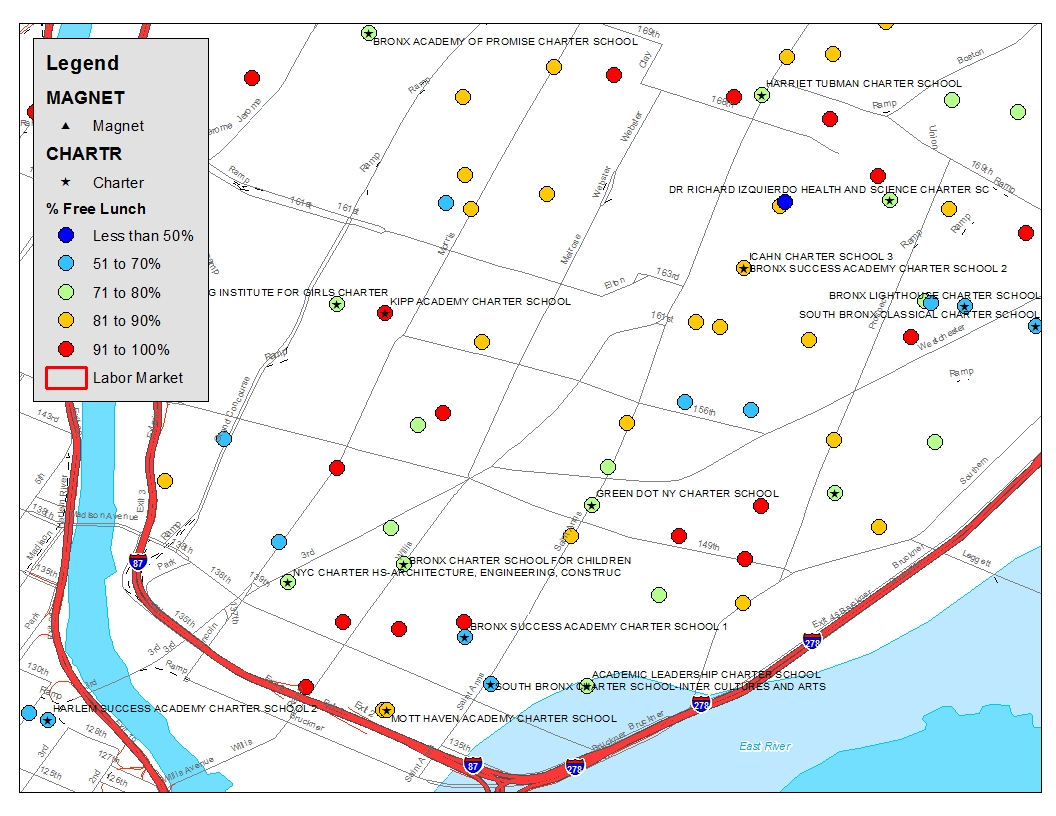

Now, let’s take a look at a few high performing and lower performing districts in Illinois.

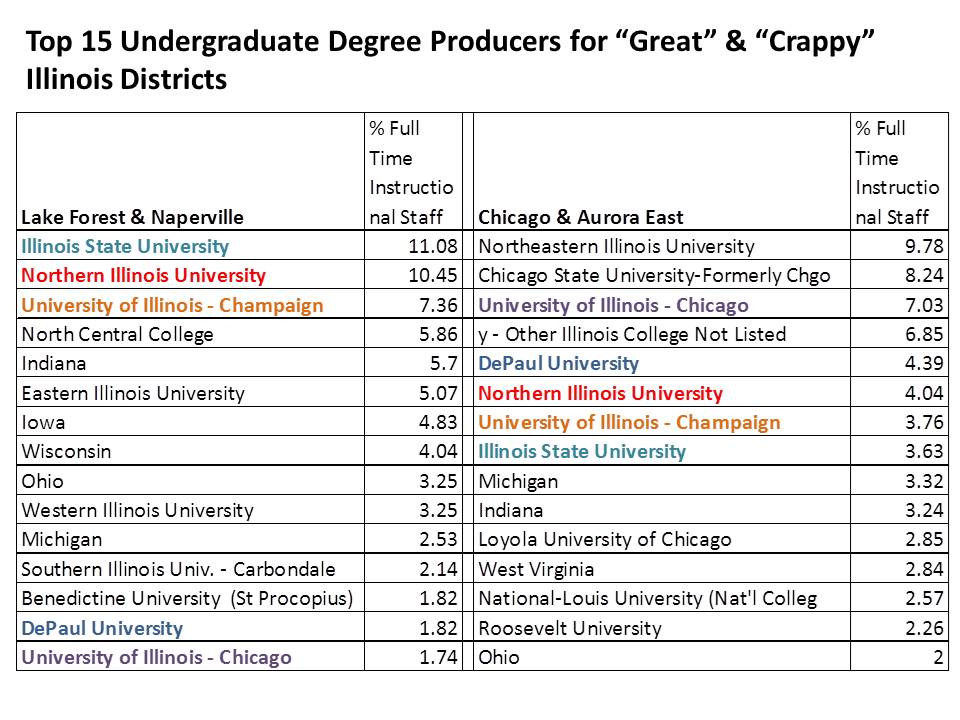

First, here are the top 15 undergraduate degree producers for Chicago and Aurora East and for Naperville and Lake Forest. Rather than taking the degree producers’ perspective, these data simply include all instructional staff in these districts, downloadable here: http://www.isbe.state.il.us/research/xls/2012-tsr-public-dataset-instr.xlsx

The data include where teachers got their undergraduate and advanced degrees.

Wow… there’s actually quite a bit of overlap in the institutions. Sure, there are differences. Where a state name is listed, the teacher received his/her undergraduate degree from an un-named institution in that state (such are the shortcomings of state administrative data). The City of Chicago does have larger shares from some Chicago based programs. But there’s also overlap and there’s significant overlap for the state’s major public teacher preparation institutions, like Illinois State University, Northern Illinois University and the University of Illinois main campus (Champaign). How can that be? How can there possibly be school districts that compete favorably with Finland while employing graduates of traditional teachers colleges?

While the percentages of teachers in these districts who attended any one preparation institution tend to be small, the shares who attended major public preparation institutions for their bachelor’s degrees appear marginally larger in the high performers (over 10% for both IL State and Northern).

That’s impossible! But… But… But… graduates of those same colleges are teaching in districts that got whooped by the Canadians? So how can we possibly place blame for systemic failure of American schools on teacher prep programs? I’m struggling with the logic here.
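If you want to check the overlap yourself rather than take my word for it, the tabulation is simple enough. Below is a rough sketch of the idea: count where each group’s teachers got their undergraduate degrees, keep the top producers, and see which institutions show up on both lists. The institution names (“College A” and so on) and the tiny lists are made-up placeholders, not values from the Illinois file, and `top_shares` is a helper name I invented for this illustration.

```python
from collections import Counter


def top_shares(institutions, n=15):
    """Return the n most common institutions with their share of all staff."""
    counts = Counter(institutions)
    total = len(institutions)
    return {name: count / total for name, count in counts.most_common(n)}


# Placeholder data: one entry per teacher, naming the (hypothetical)
# institution that granted the undergraduate degree.
high_performing = ["College A"] * 3 + ["College B"] * 2 + ["College C"]
low_performing = ["College D"] * 3 + ["College A"] * 2 + ["College B"]

# n=2 only because the toy lists are tiny; the post uses the top 15.
high_top = top_shares(high_performing, n=2)
low_top = top_shares(low_performing, n=2)

# Institutions that are top producers for BOTH groups of districts.
shared = sorted(set(high_top) & set(low_top))
print(shared)
```

With the real staff file you would build the two lists by filtering on district and pulling whichever column holds the bachelor’s degree institution. If the shared list comes back anything but empty, the “ed schools are the problem” story has some explaining to do.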

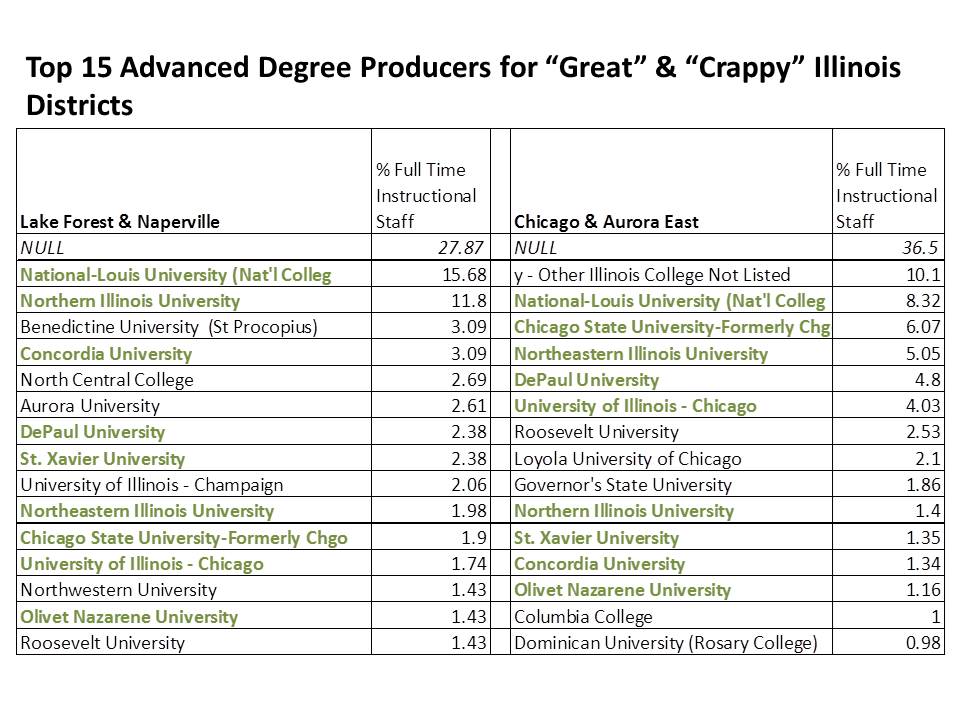

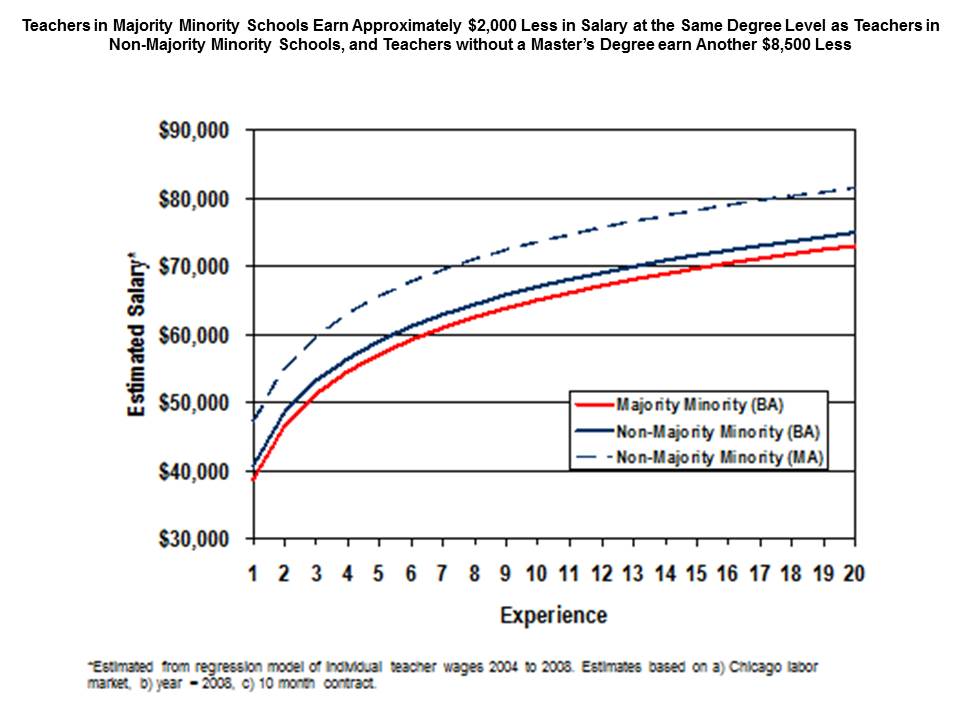

One more look… here are the advanced degree granting institutions for teachers in higher versus lower performing Chicago area districts. Note that “NULL” refers to those not holding (or reporting) advanced degrees and that the share holding only a bachelor’s degree is higher in the lower performing districts (poorer, minority districts).

Again, these degrees – which include both initial and additional certifications – are dominated by traditional credential granting institutions with substantial overlap across teachers between higher and lower performing schools.

This is a separable but related issue to the evaluation of ed schools by student outcome measures. I’ll continue digging more into that issue in future posts.

It is certainly hard to make a compelling case that traditional teacher preparation institutions are the primary cause of our supposed lagging national education system when our highest performing schools – those that compete favorably with Finland – also employ in large number, graduates of those preparation programs and in many cases employ significant numbers of graduates of the same programs that provide teachers for our supposed failing schools.