In several previous posts I have addressed the common argument among charter advocacy organizations (notably, not necessarily those out there doing the hard work of actually running a real charter school, but the pundits who claim to speak on their behalf) that charter schools do more with less while serving comparable student populations. This argument appears to be a central theme of current policy proposals in Connecticut, which, among other things, would substantially increase funding for urban charter schools while doing little to provide additional support for high-need traditional public school districts. For more on that point, see here.

I’ve posted some specific information on Connecticut charter schools in previous posts, but have not addressed them more broadly. Here, I provide a run-down of simple descriptive data, widely available through two major credible sources. It is easy enough to replicate any or all of these analyses on your own with the publicly available data:

Connecticut State Department of Education (CEDaR) reports

National Center for Education Statistics Common Core of Data

Since the common claim is that charters do more (outcomes) with less (funding) and while serving the same kids (demographics), it is relevant to walk through each of these prongs of the argument step by step.

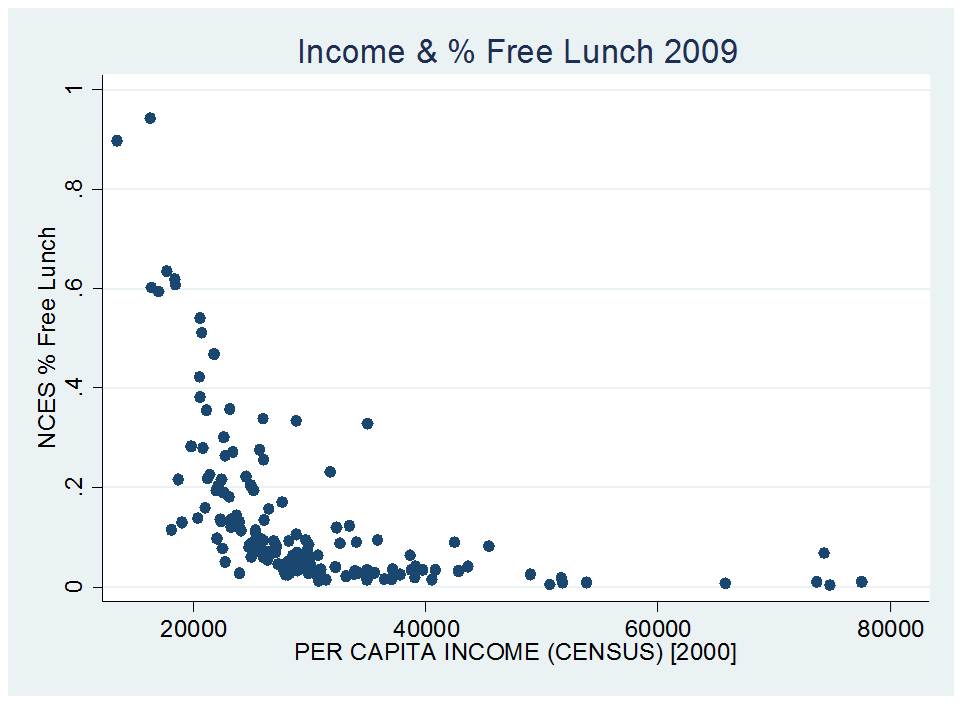

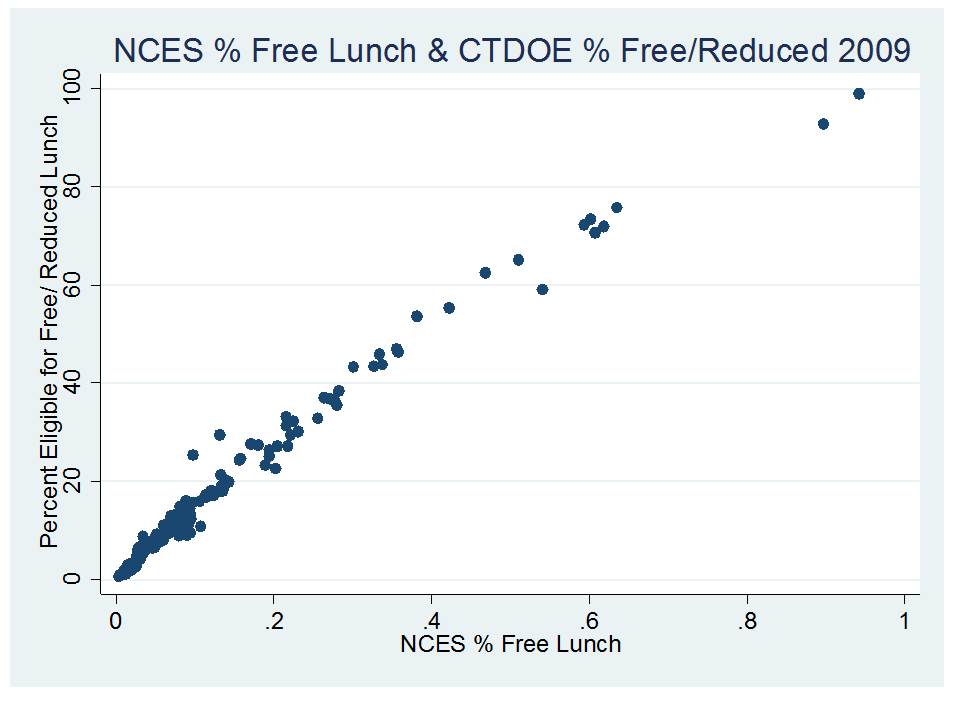

DEMOGRAPHIC COMPARISONS

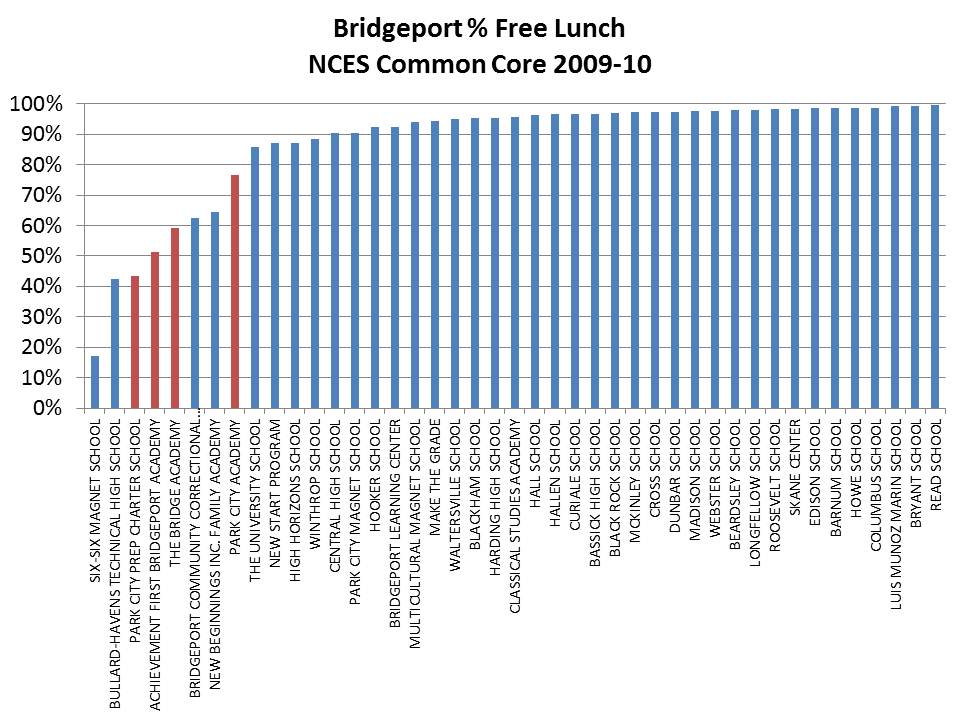

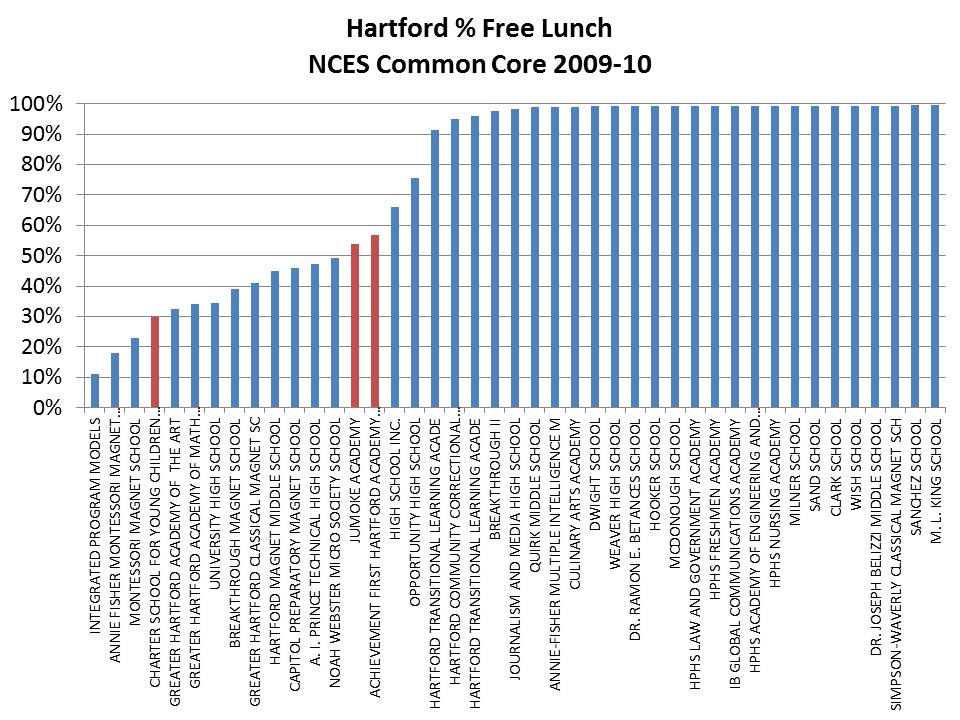

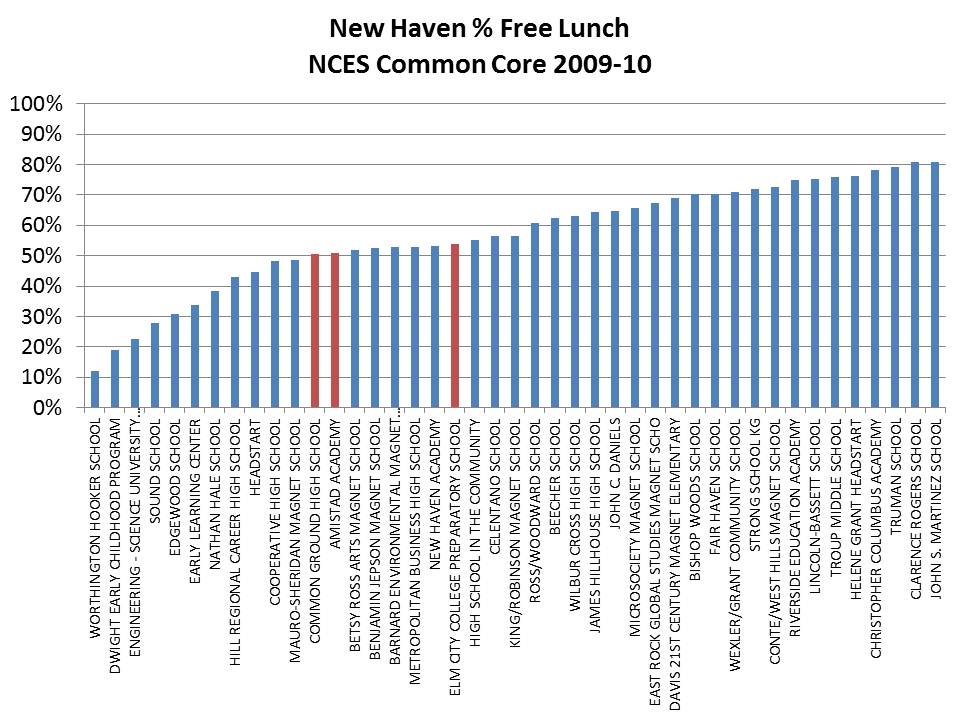

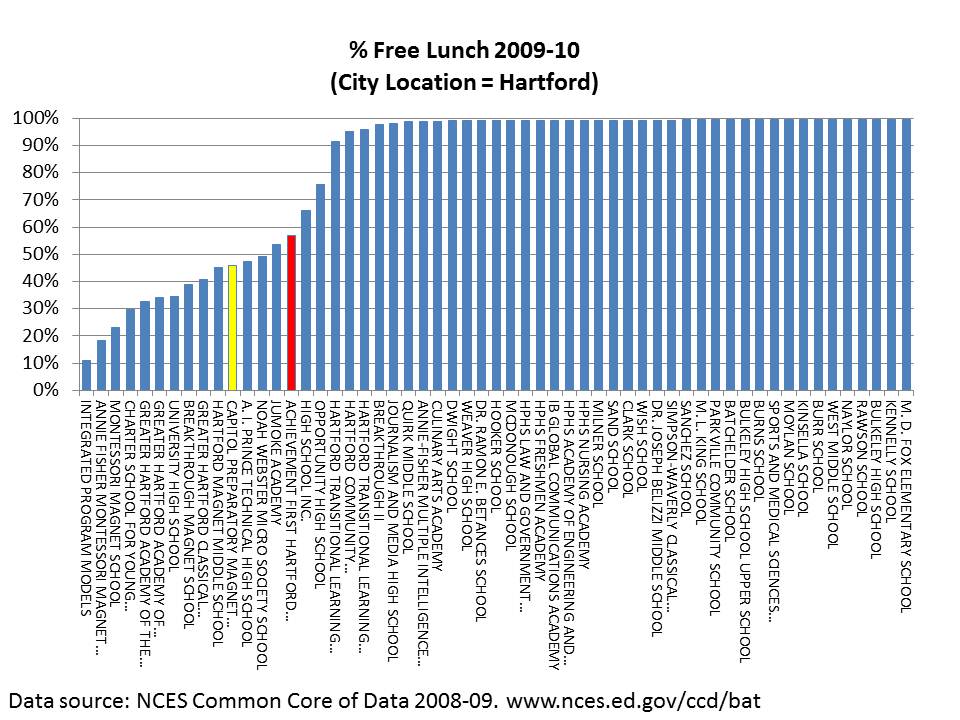

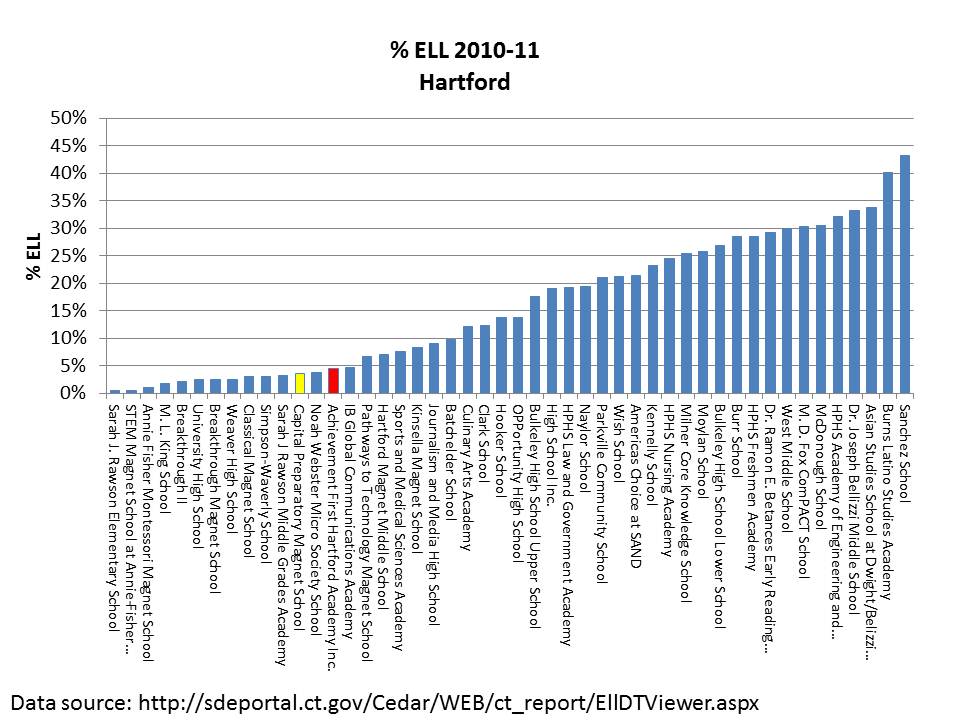

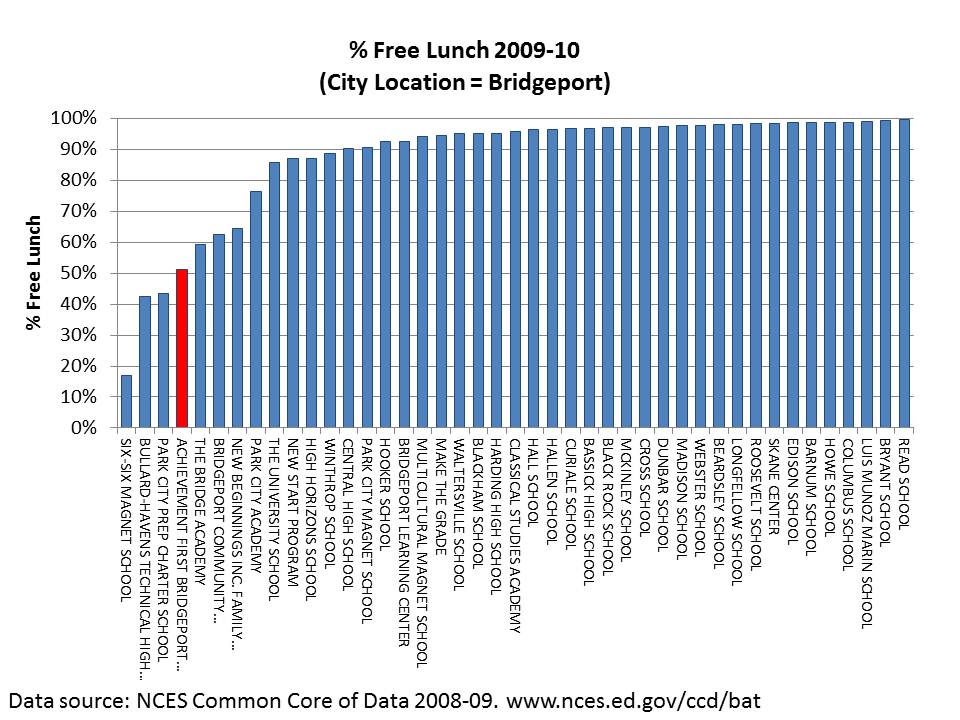

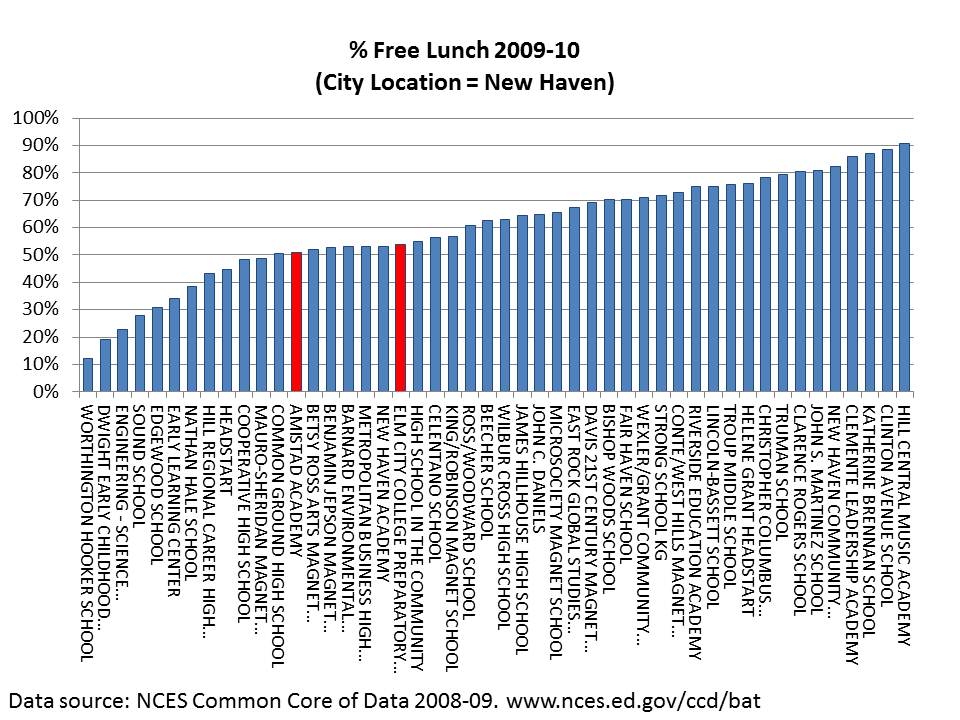

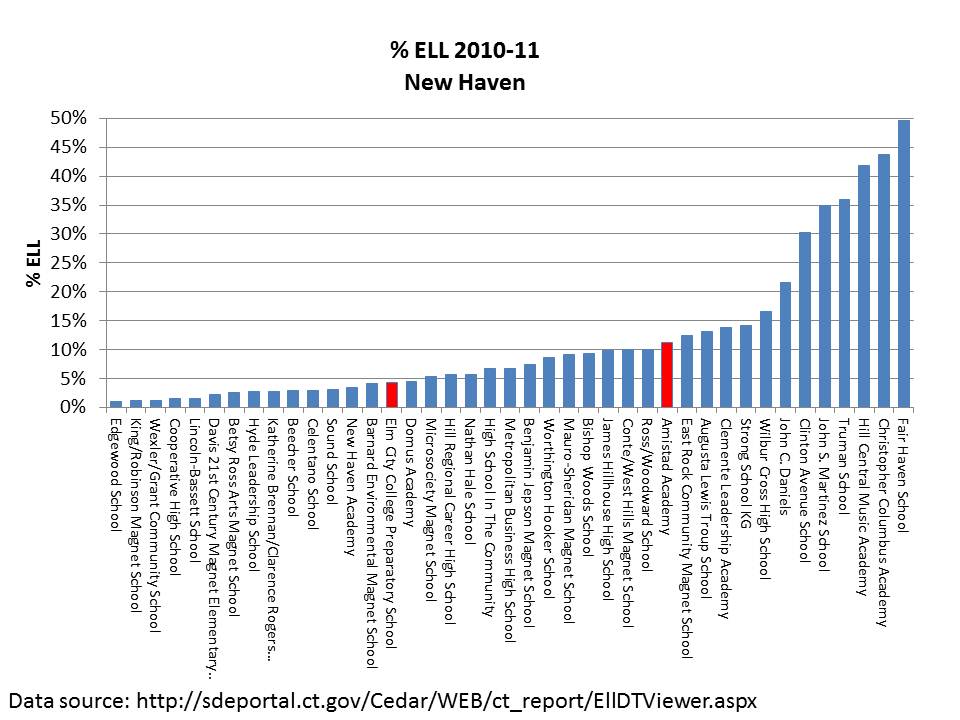

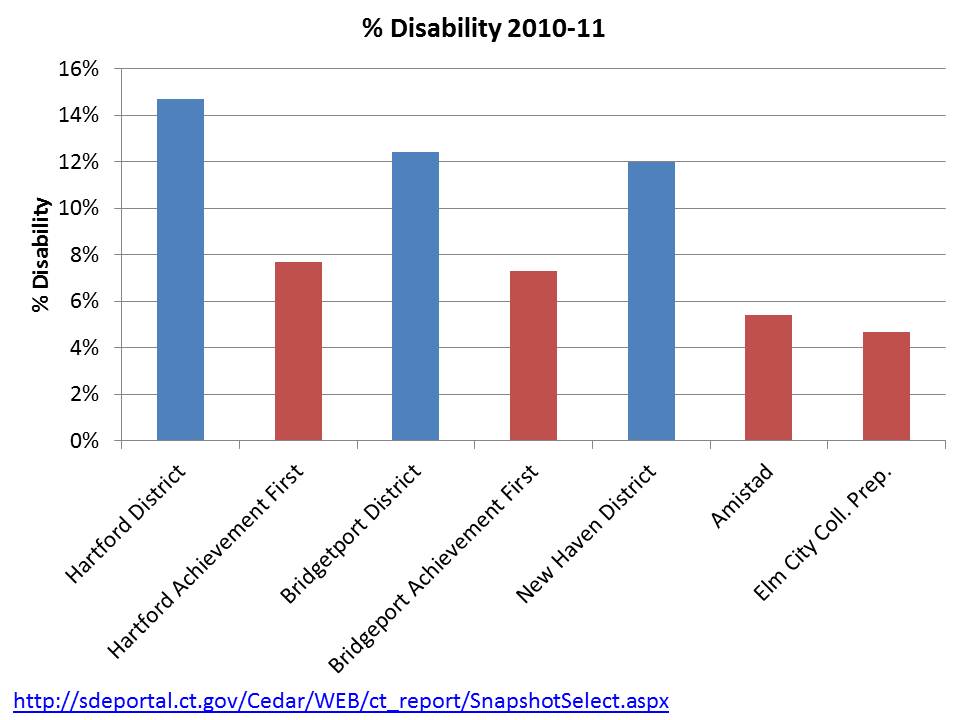

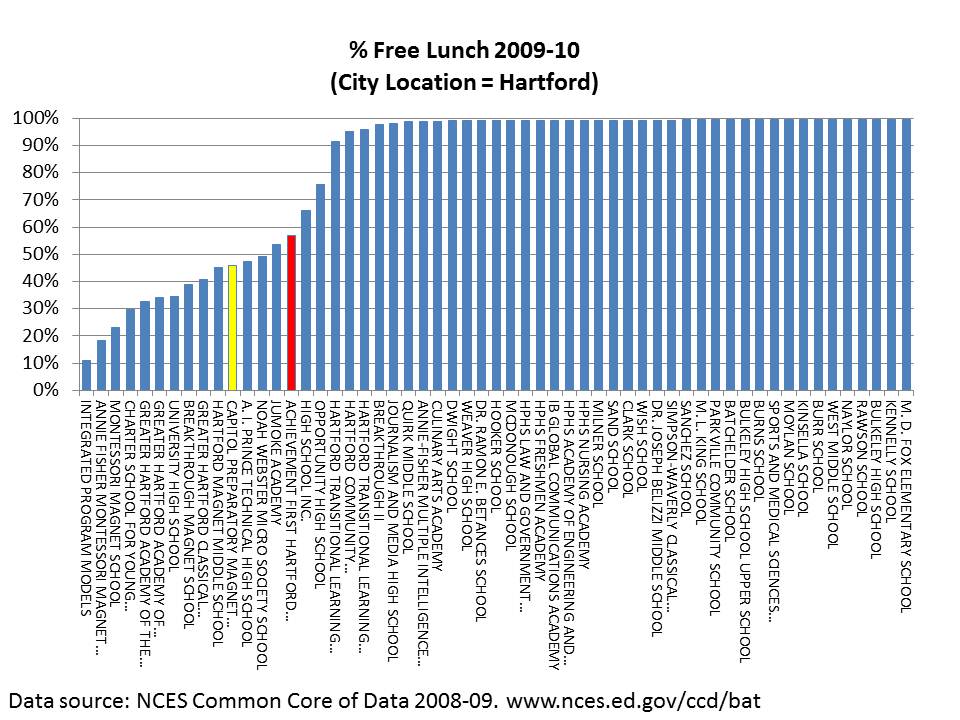

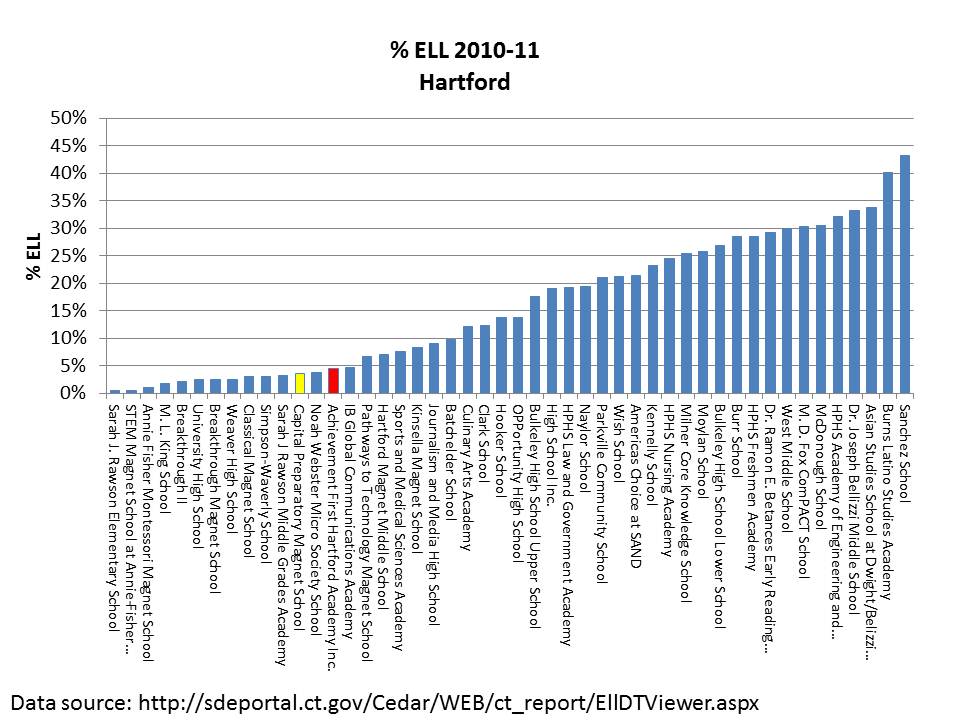

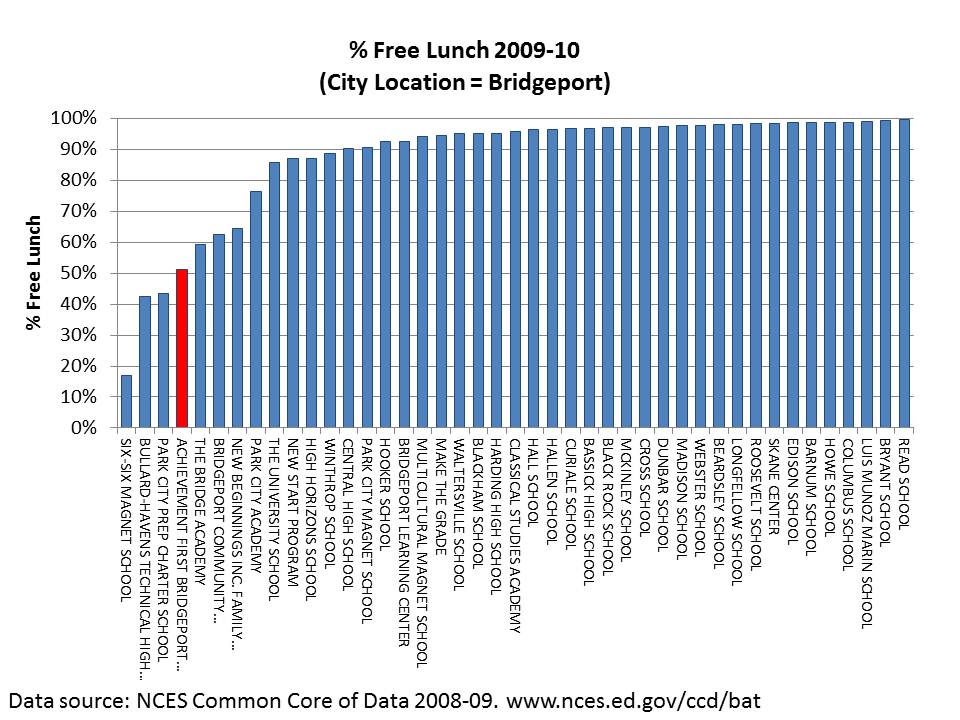

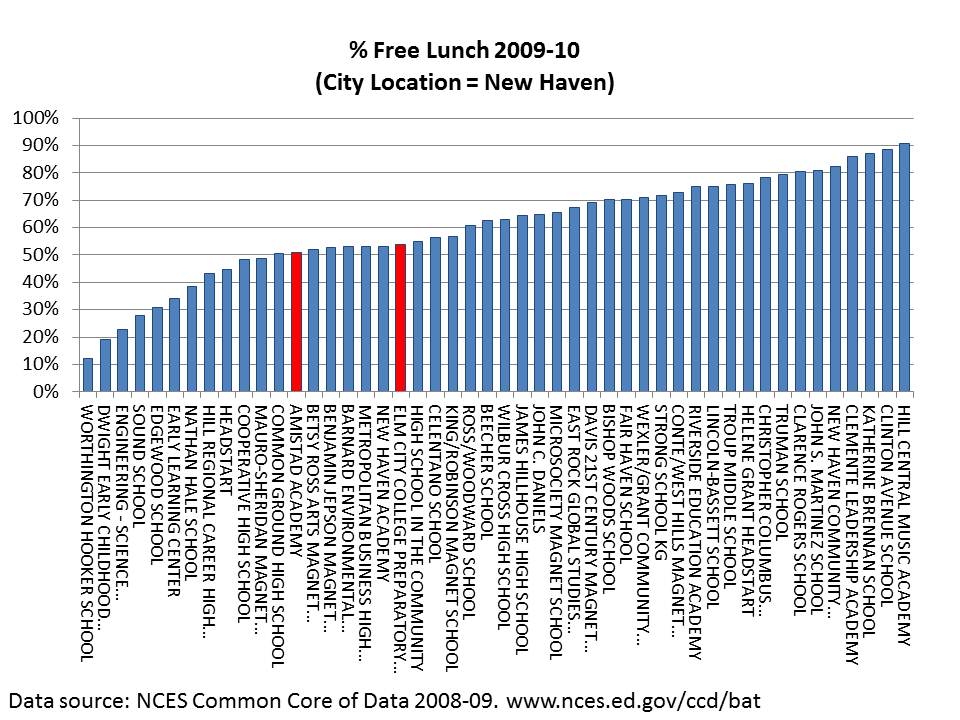

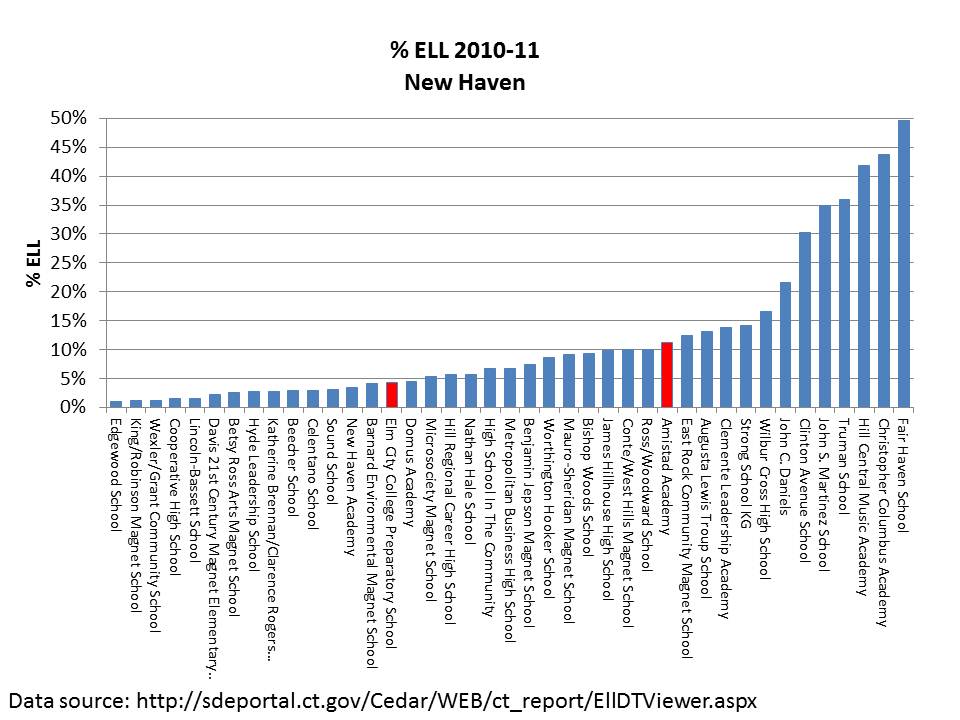

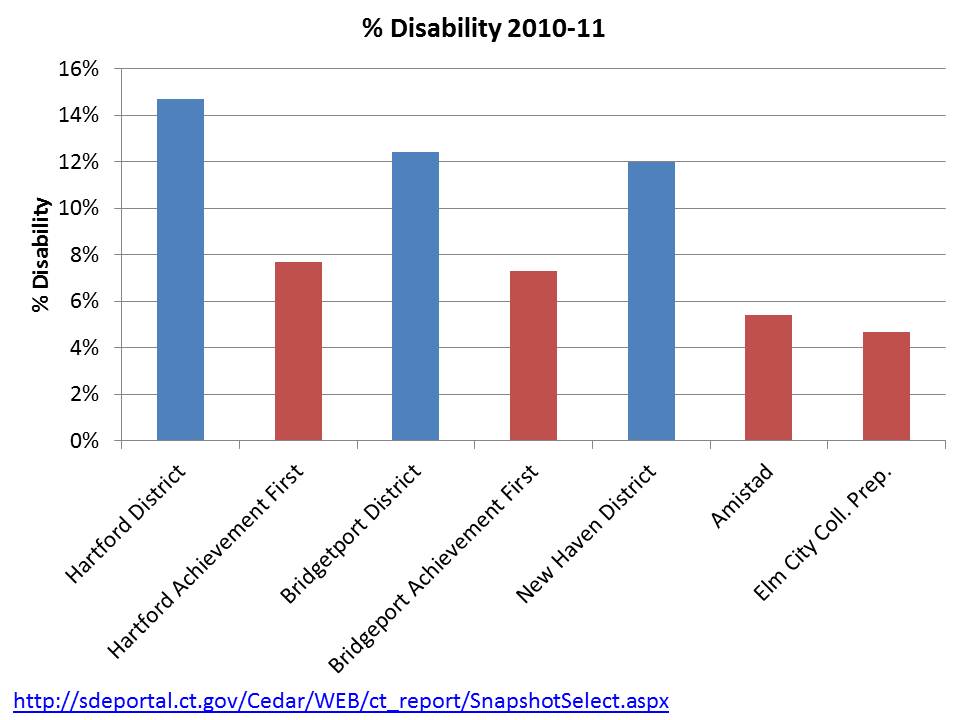

These graphs focus on Connecticut’s most acclaimed high-flying charter schools, those affiliated with Achievement First, and the graphs are relatively self-explanatory.

Note: % Free lunch information comes from the 2009-10 NCES Common Core of Data and includes all schools identified as being located within the city limits. % ELL data are from the 2010-11 CEDaR system and include Achievement First charters and district schools (leading to smaller numbers of total schools due to special school and other charter exclusions). Special education data are gathered from individual school snapshot reports (CEDaR).

For fun, in this one, I’ve also noted the position of Capital Prep – which is a magnet school, and it is well understood that the student populations at Hartford magnets are substantively different from Hartford regular public schools. But strangely, there is even substantial rhetoric out there about this school being an example of beating the odds!?!

Finally:

Put very simply – Achievement First Charter schools DO NOT SERVE STUDENT POPULATIONS COMPARABLE TO DISTRICT POPULATIONS.

I have explained previously how this is relevant to broader policy discussions. Specifically, it is relevant to the claim that these schools can serve as a model for expansion yielding similar outcomes for all children in New Haven, Bridgeport or Hartford. In very simple terms, there are not enough non-low income, non-disabled and non-ELL kids around in these settings to broadly replicate the outcomes that these schools may be achieving. Again, this public policy perspective contrasts with the parental choice perspective. While from a public policy perspective we are concerned that these outcomes may be merely a function of selective demography, from a personal/parental choice perspective within any one of these cities, the concern is only for the outcomes, and achieving those outcomes by having a desirable peer group is as desirable as achieving those outcomes by providing higher quality service.

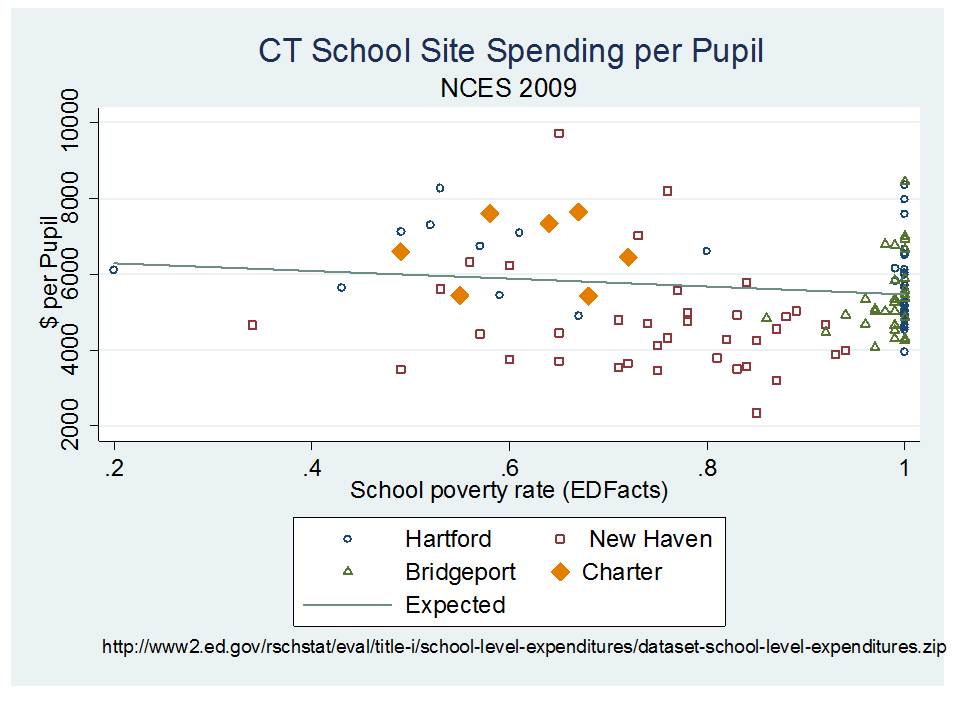

FINANCIAL & OTHER RESOURCE COMPARISONS

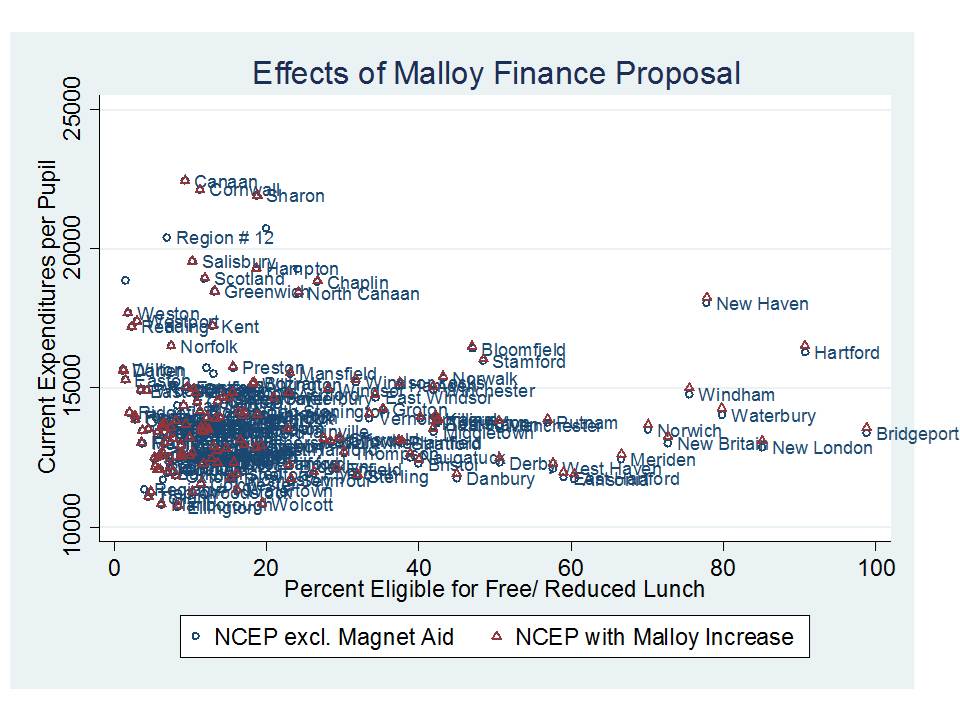

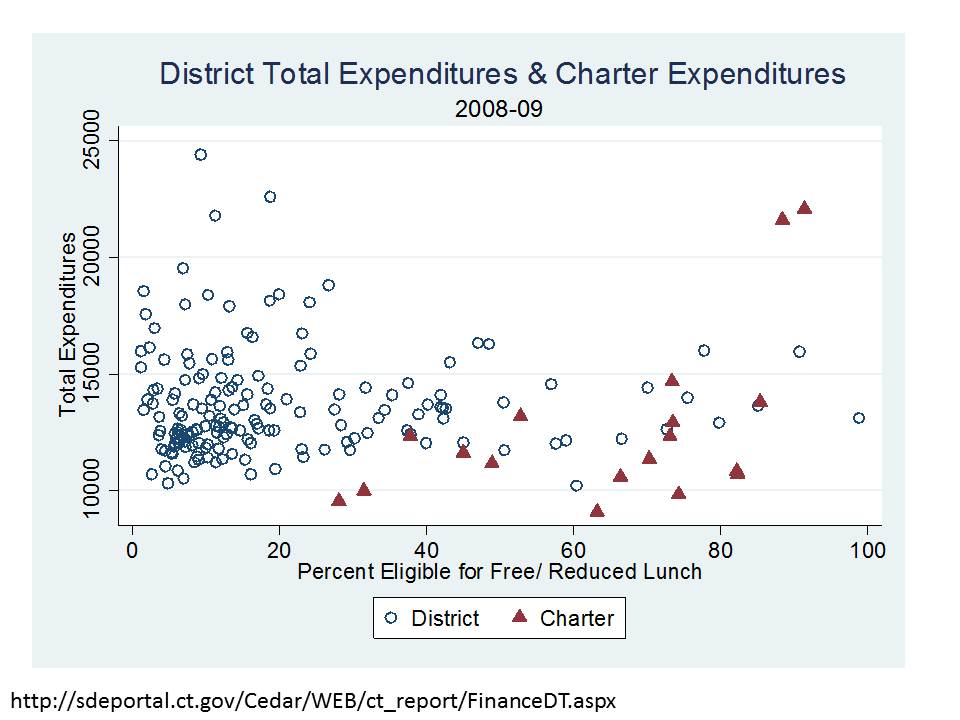

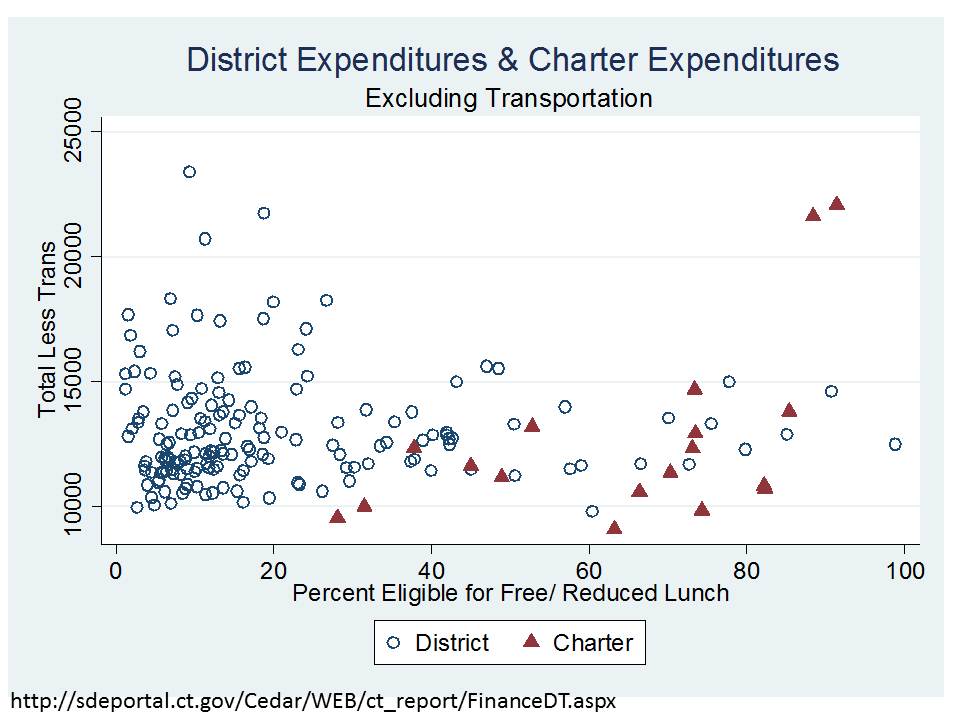

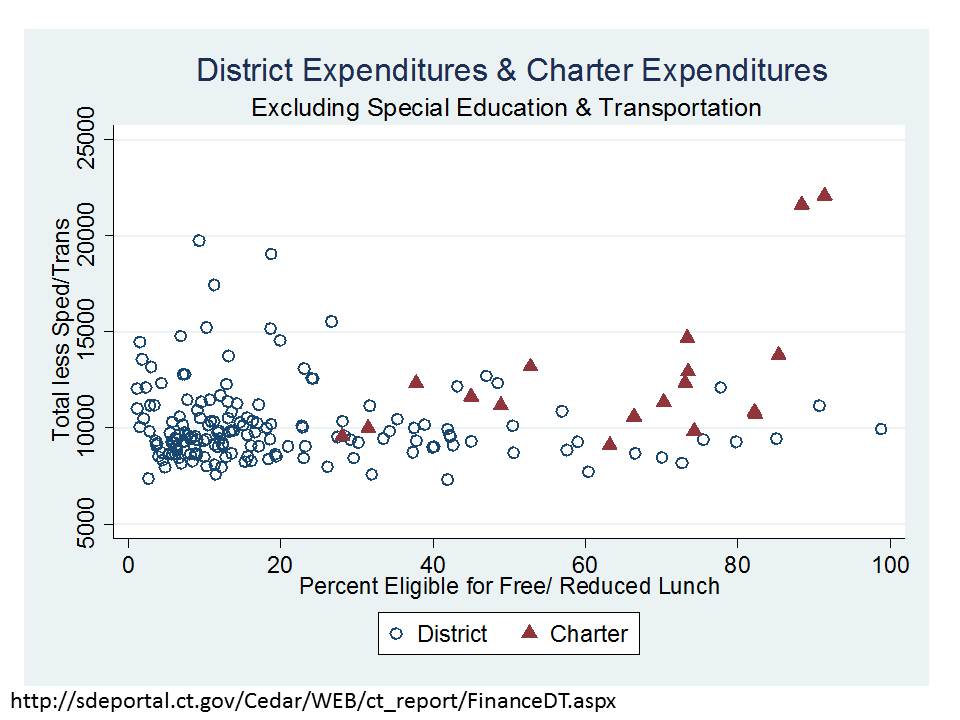

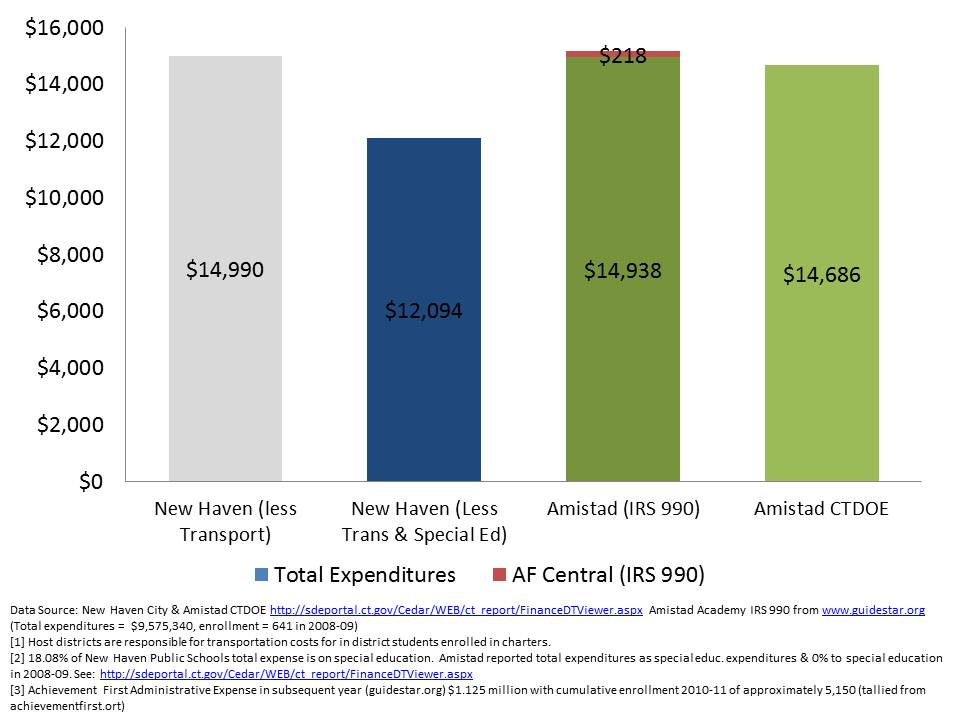

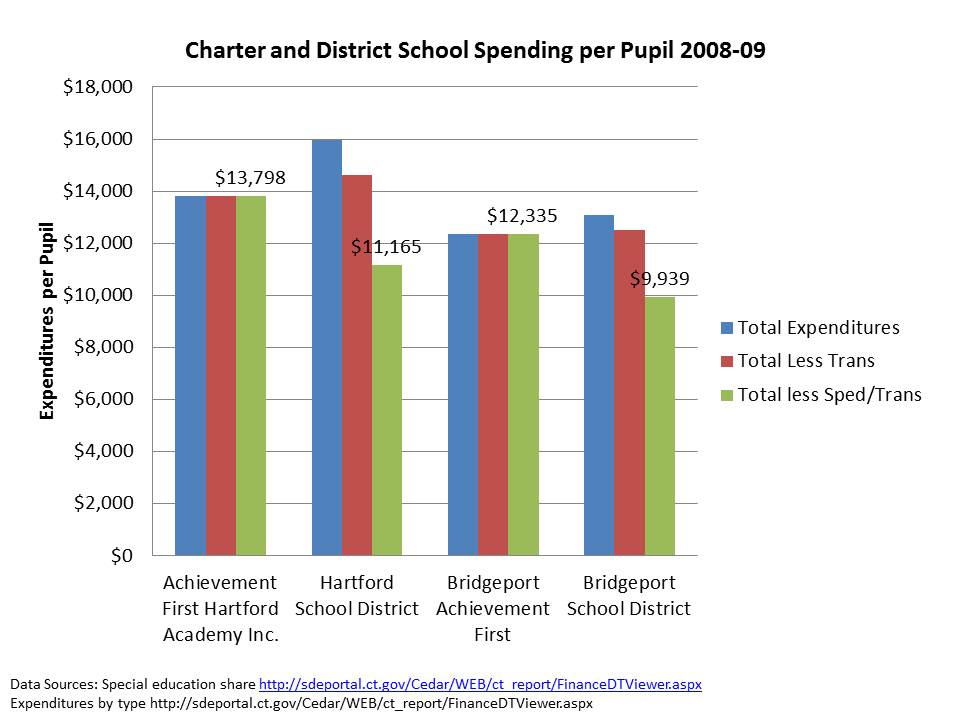

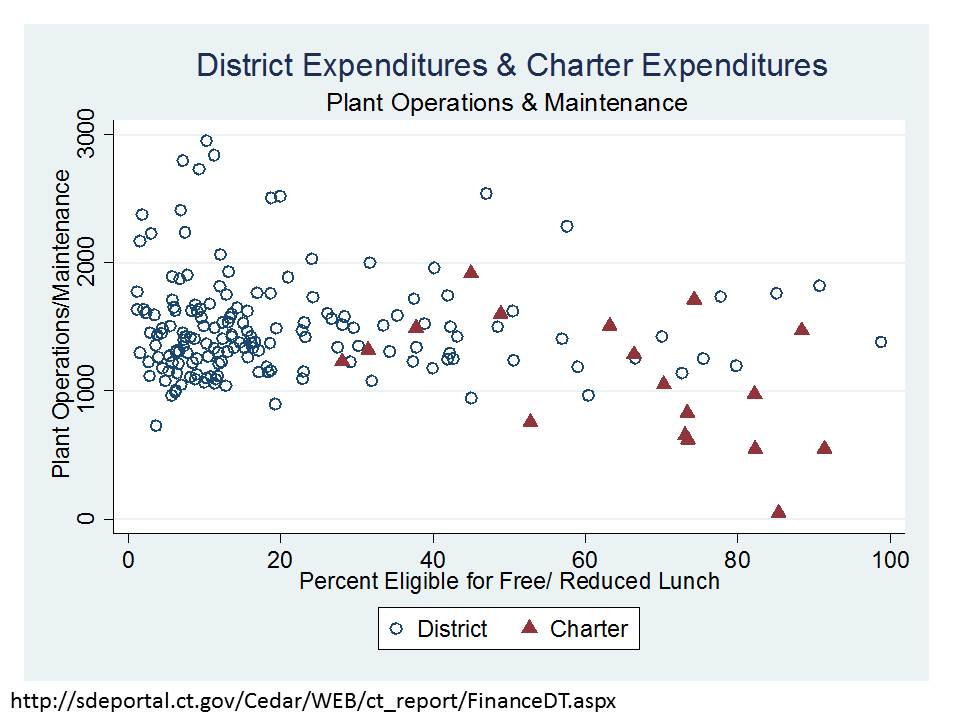

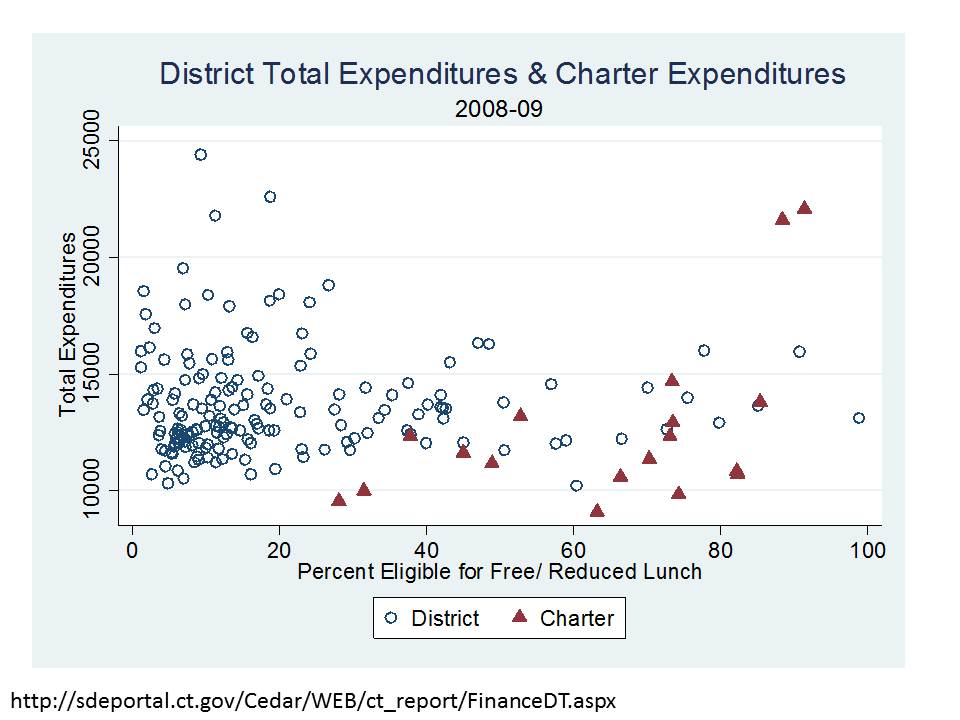

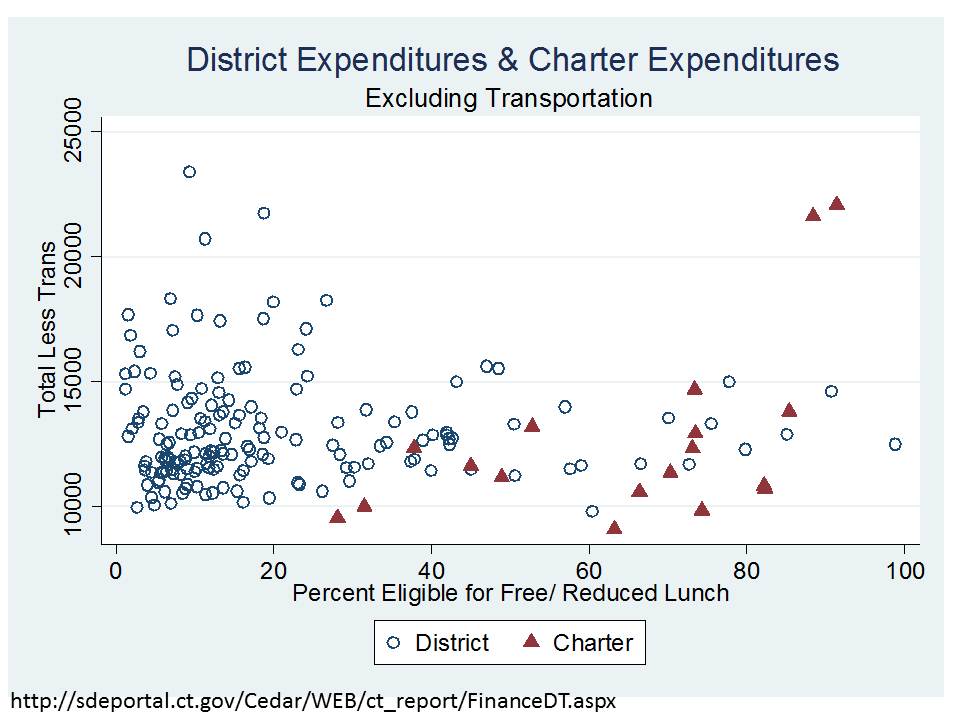

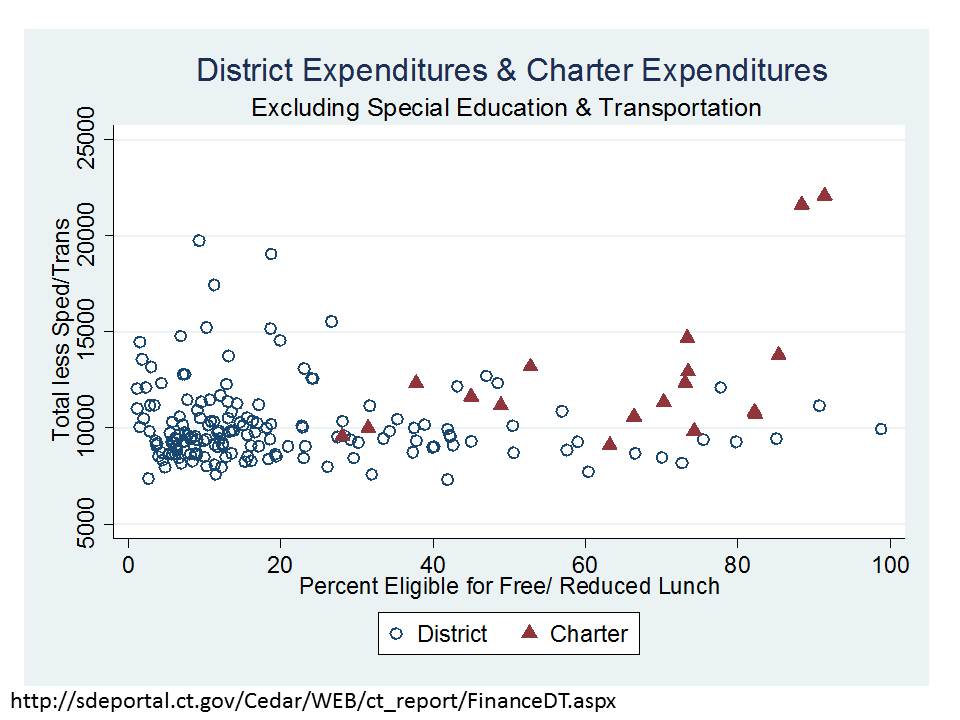

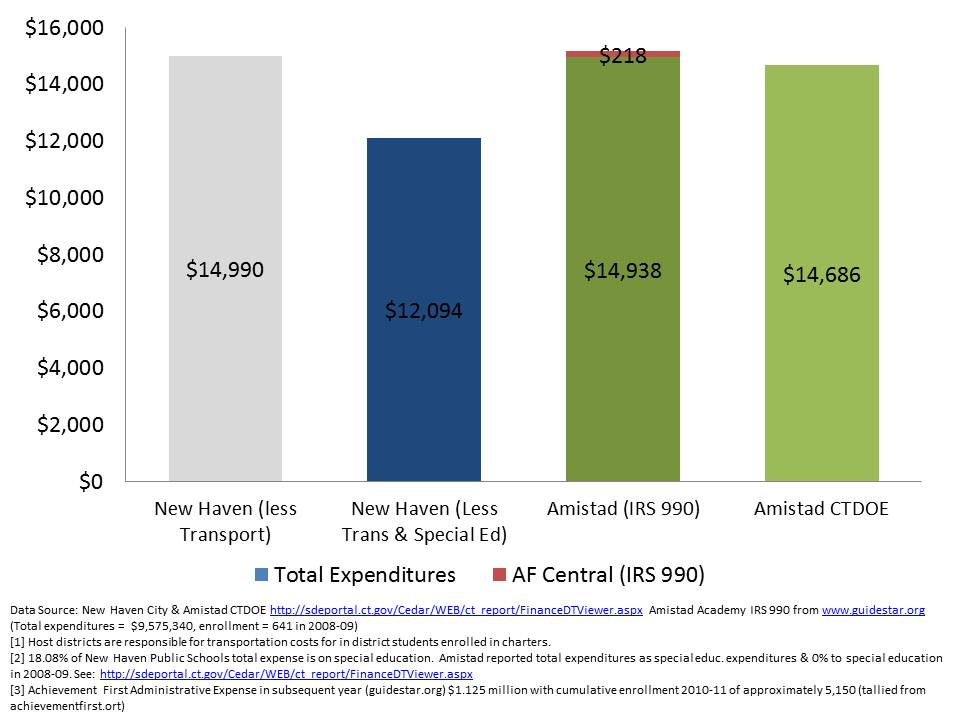

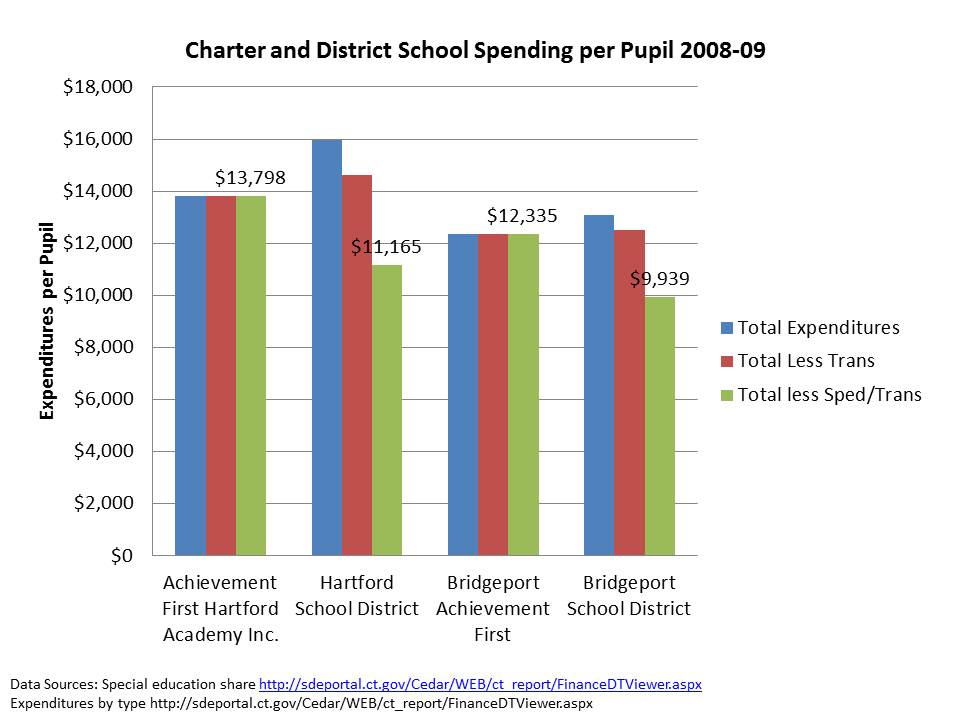

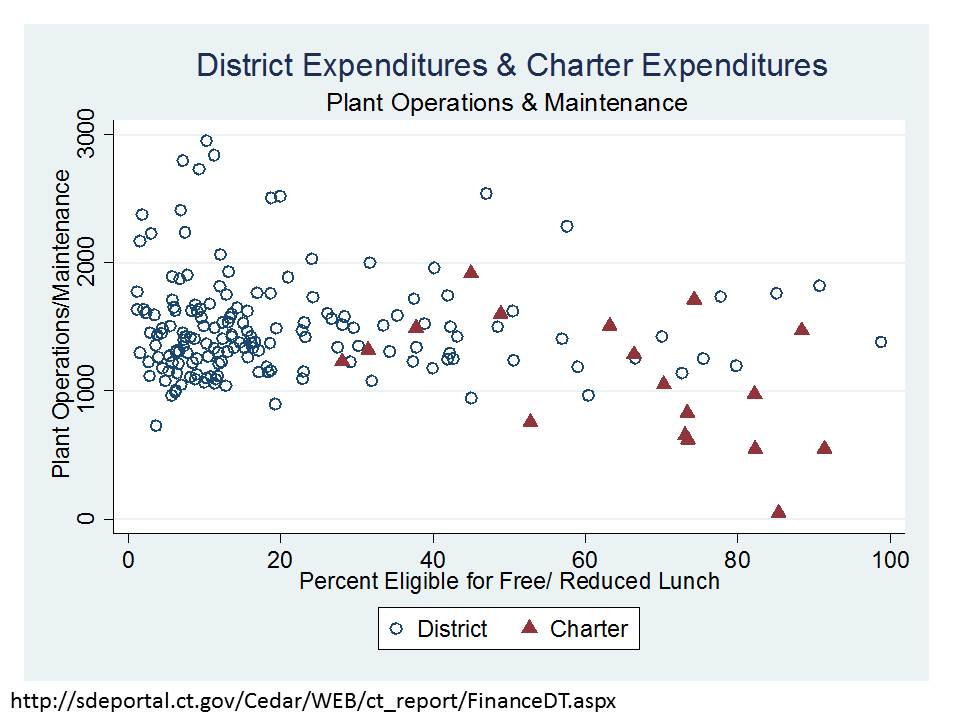

Below (at end of post) I provide an important explanation/discussion of issues in comparing charter school and traditional public district finances. First and foremost, it is important to understand, simply from the above comparisons, that these schools serve substantively different student populations; thus equal dollar inputs are, from the outset, an inappropriate fairness metric. But the complexities go beyond that. In CT and other locations, host districts retain responsibility for transportation and special education costs, even for students attending charters. Thus, it would be reasonable, as I did in a previous post, to subtract those expenditures from district budgets when comparing to charter spending. On the other side, charters often do have to lease facilities at their own expense, which in a state like CT would typically run about $1,500 to $2,000 per pupil (more in NYC, similar in NJ). But while charter advocates would have you believe that districts have $0 cost of facilities, that is not necessarily true. For CT public districts, plant operations expenses tend to be on the order of $1,000 to $2,000 per pupil, and large urban districts maintaining significant capital stock with significant deferred maintenance tend to be toward the high end. More discussion of the factors that cut each way is in the note at the end of the post. So, here’s a quick run-down on charter and district expenditures in CT, cut different ways (all expressed in per pupil terms, and with respect to district/charter % Free or Reduced price lunch shares):

So… after taking out special education and transportation, charters appear relatively well resourced.

EVEN IF WE ASSUME THAT THE NET DIFFERENCE IN FACILITIES COST IS ABOUT $1,000 PER PUPIL BETWEEN CHARTER AND DISTRICT SCHOOLS, CHARTERS ARE IN PRETTY GOOD SHAPE IN CT. (That assumption would pull $1,000 per pupil off the charter estimates above.) It would put facilities maintenance/operations/debt service in host districts at about $1,000 per pupil and lease/operations/maintenance for charters at about $2,000 per pupil.
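For readers who want to replicate the adjustments described above, here is a minimal sketch of the arithmetic in Python. The function names and all dollar figures in the example are hypothetical placeholders, not values from CEDaR or NCES; only the $2,000 and $1,000 facilities assumptions come from the text.

```python
def adjusted_district_per_pupil(total_spending, special_ed, transportation, enrollment):
    """District per-pupil spending after removing special education and
    transportation, which host districts cover even for charter students."""
    return (total_spending - special_ed - transportation) / enrollment


def adjusted_charter_per_pupil(charter_per_pupil,
                               charter_facilities=2000.0,
                               district_facilities=1000.0):
    """Charter per-pupil spending after removing the assumed NET facilities
    cost gap (charter lease/operations minus district plant/debt costs)."""
    net_gap = charter_facilities - district_facilities
    return charter_per_pupil - net_gap


# Hypothetical figures for illustration only.
district = adjusted_district_per_pupil(
    total_spending=300_000_000,
    special_ed=60_000_000,
    transportation=15_000_000,
    enrollment=20_000,
)
charter = adjusted_charter_per_pupil(charter_per_pupil=12_500.0)

print(f"District, adjusted: ${district:,.0f} per pupil")
print(f"Charter, adjusted:  ${charter:,.0f} per pupil")
```

With real data, the inputs would be the district and charter expenditure lines from the state reports, and the result should still be read alongside each school's % Free or Reduced price lunch share, not on its own.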

Here’s an alternative angle (from a previous post):

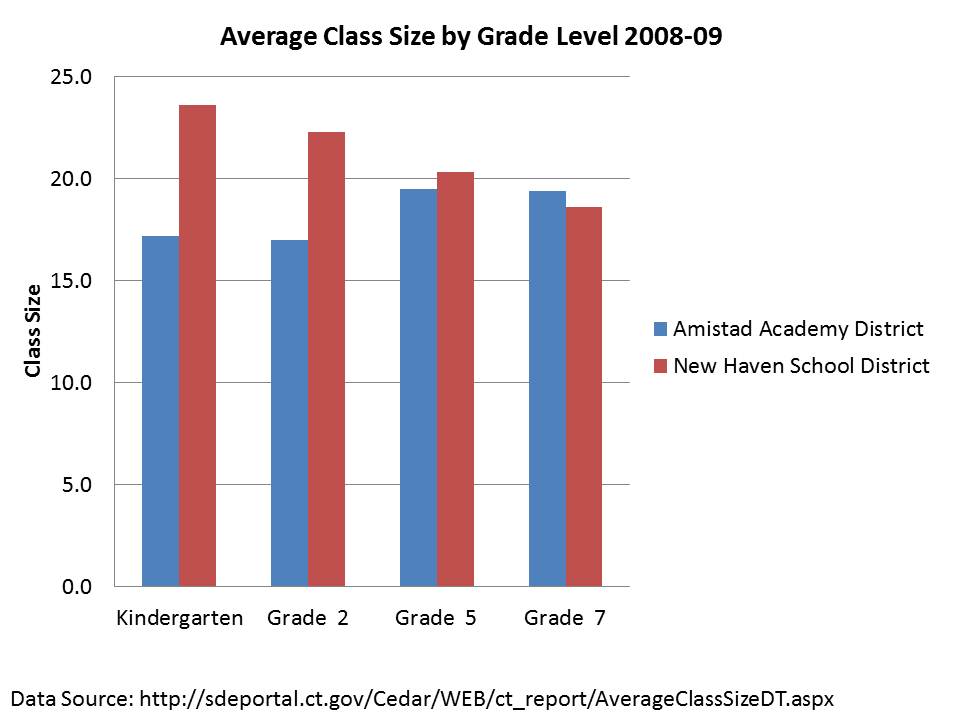

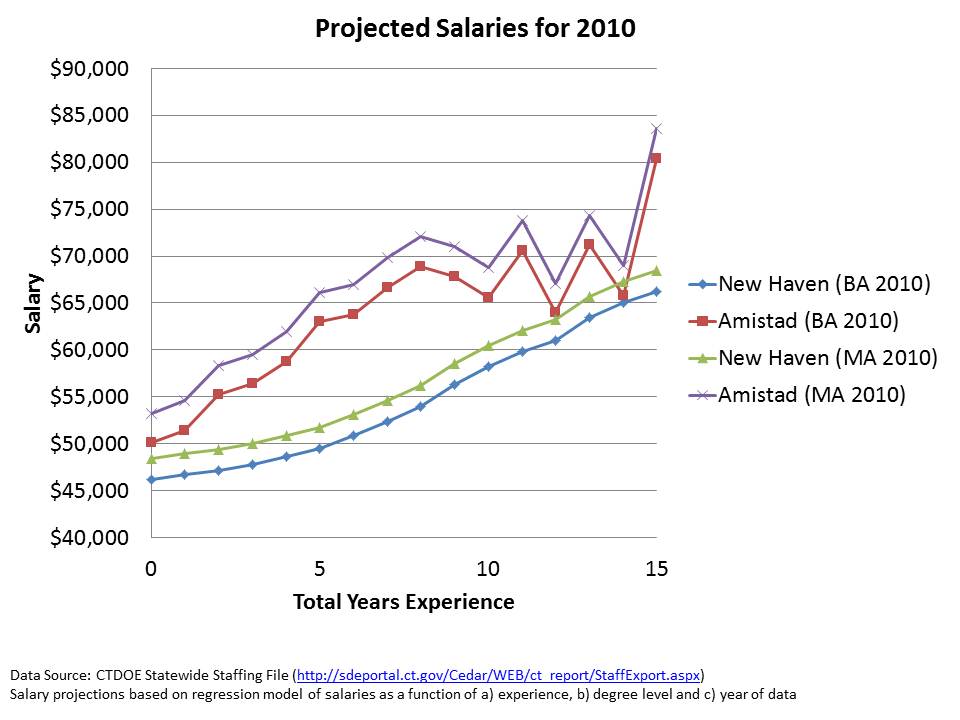

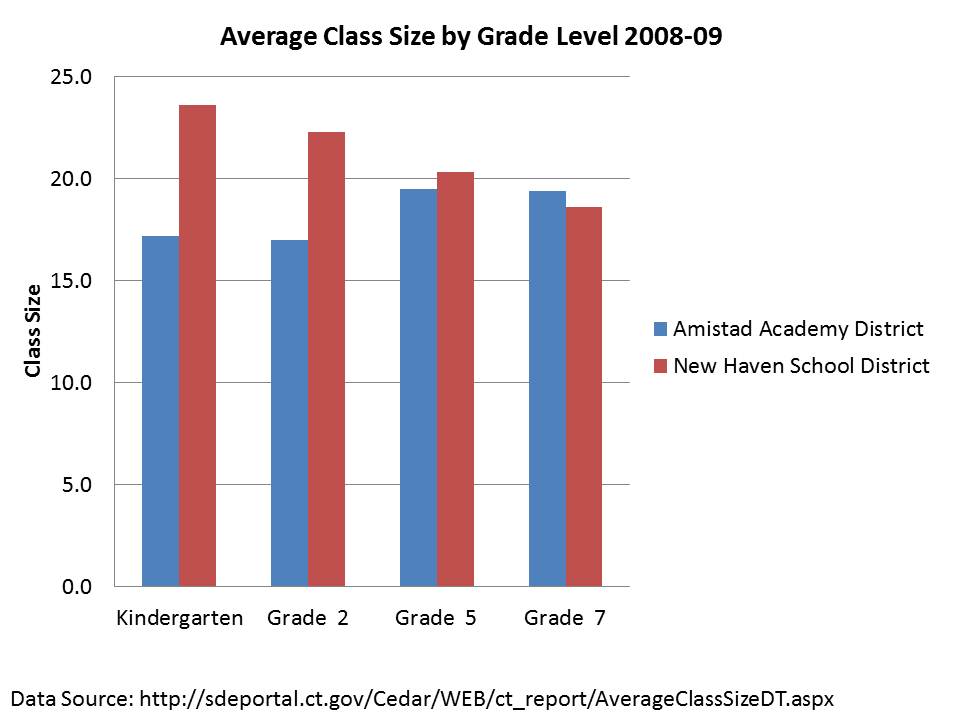

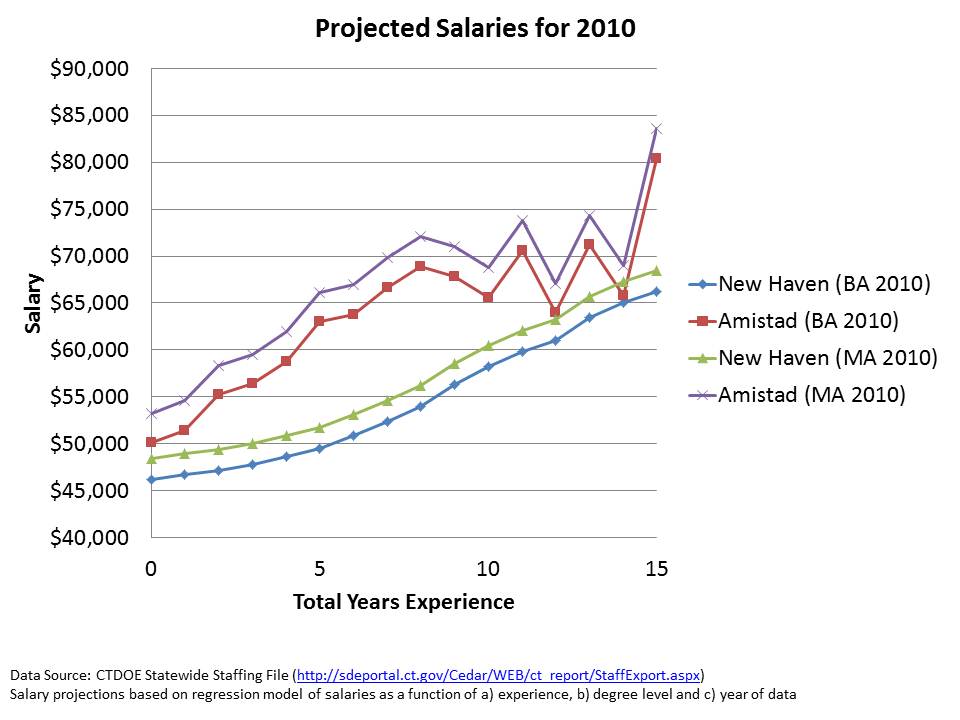

I also showed in a previous post that for Amistad, the funding difference translates to both a class size advantage and salary advantage:

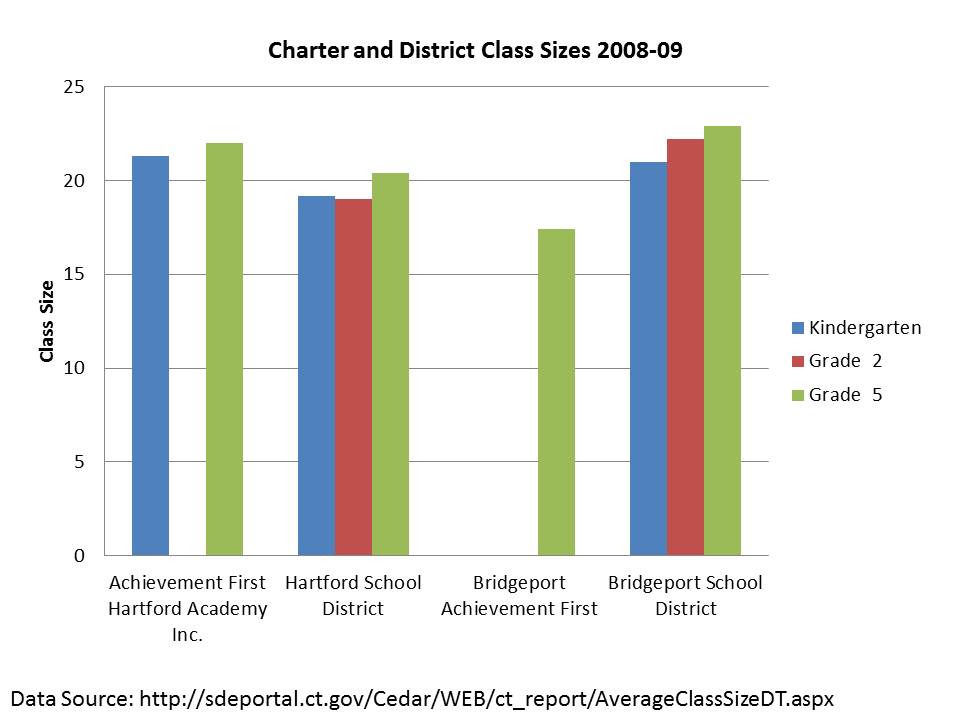

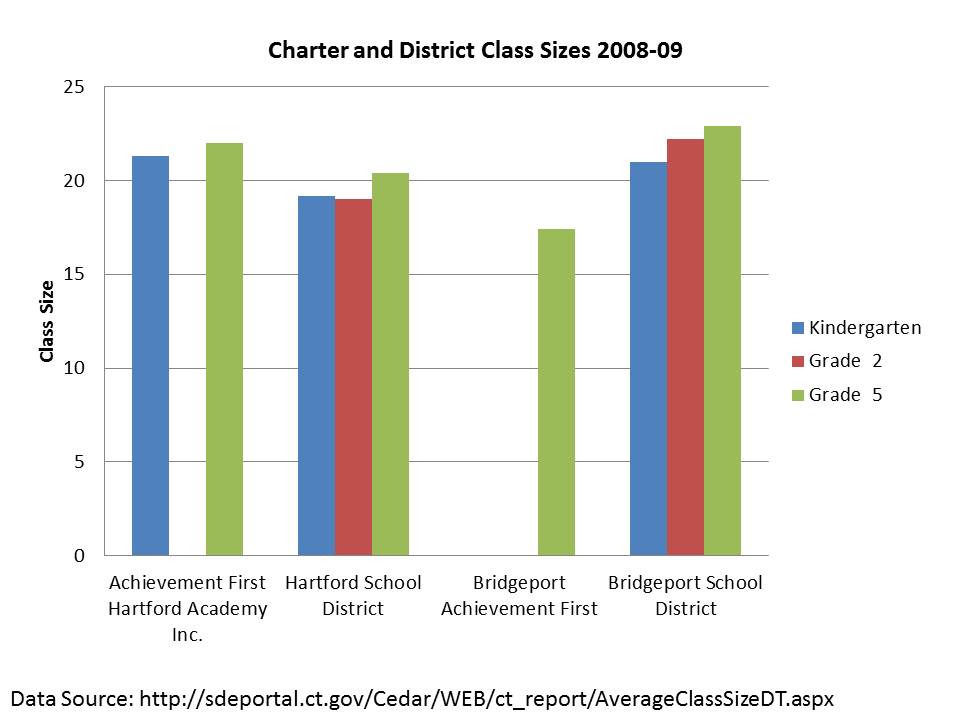

Class sizes are more mixed in Hartford, but in Bridgeport (the least well funded urban district), Achievement First offers much smaller class sizes:

OUTCOMES

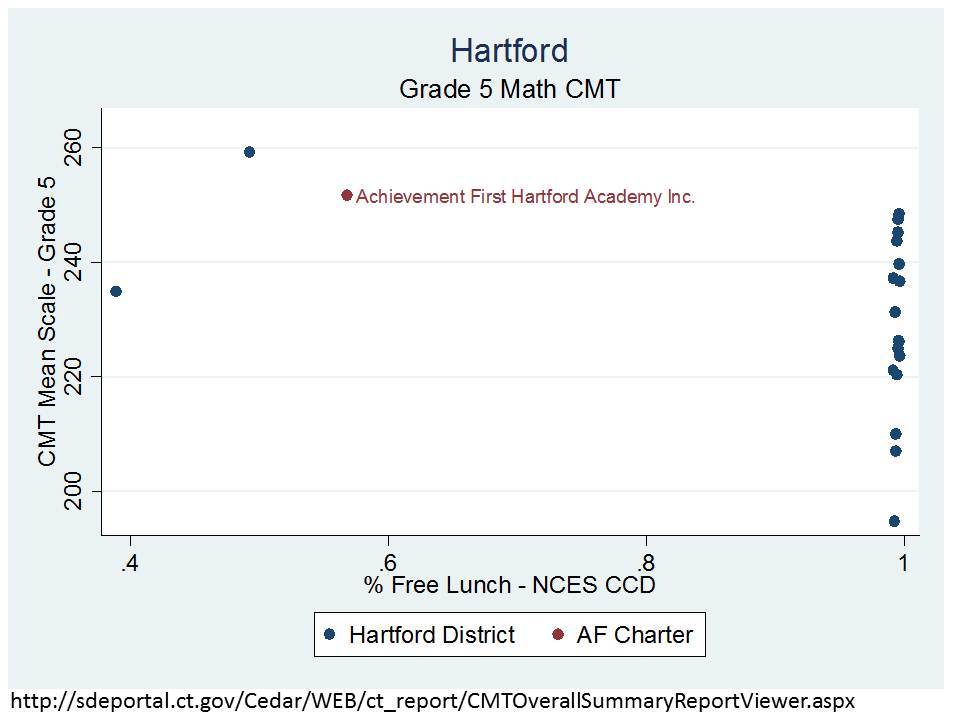

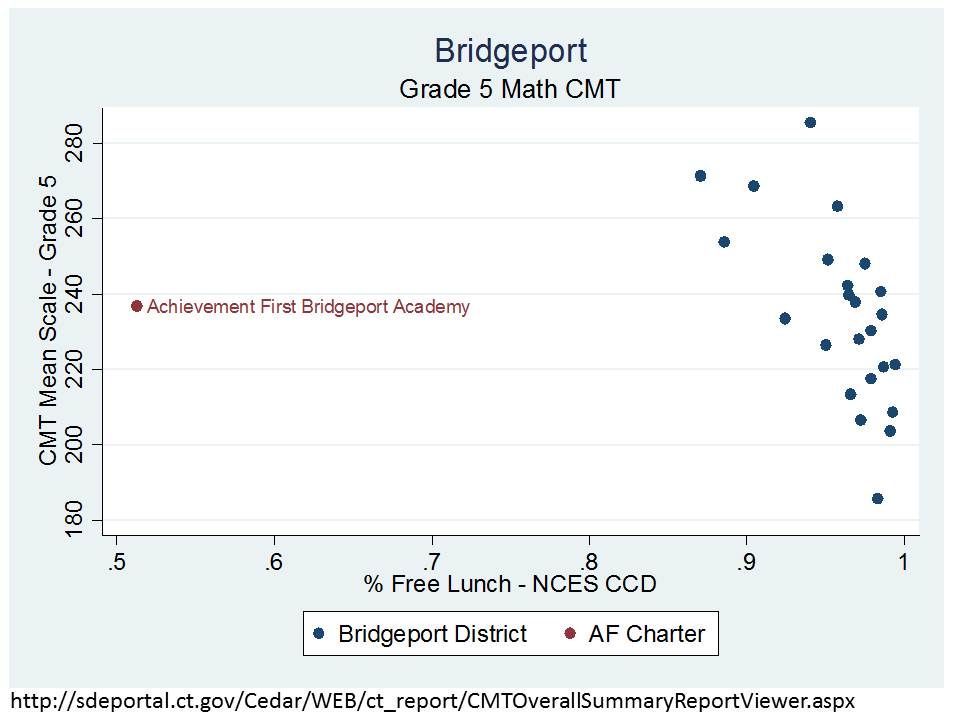

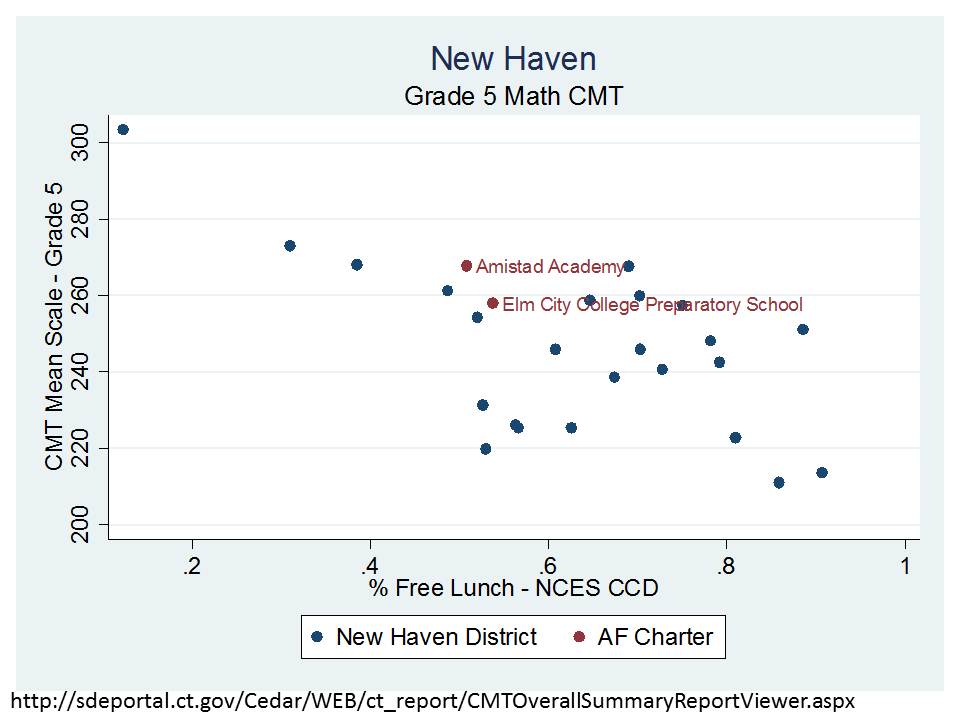

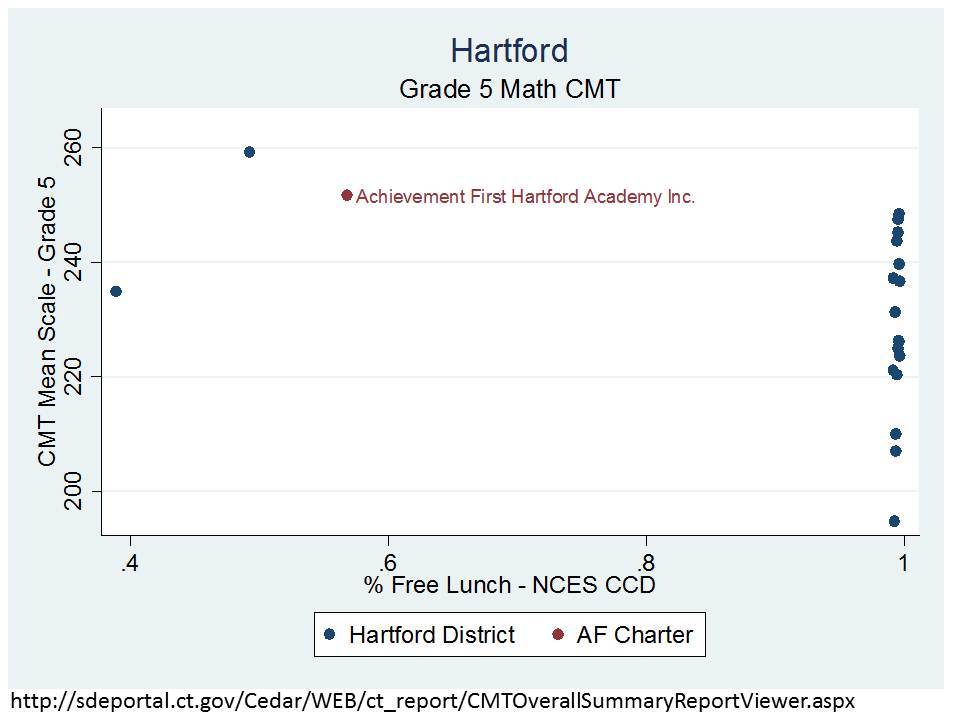

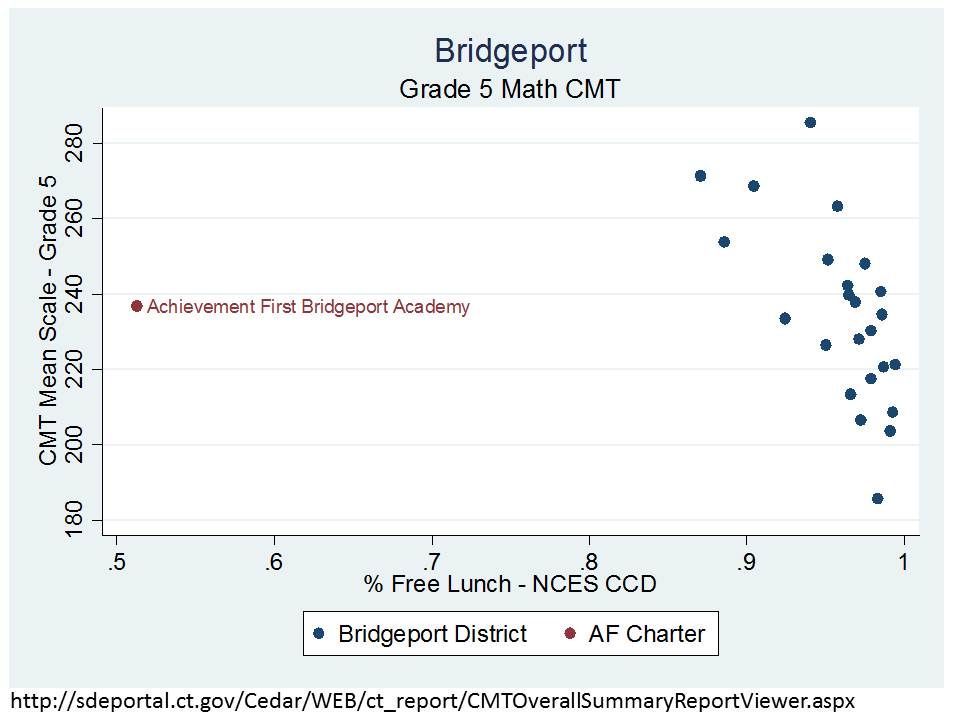

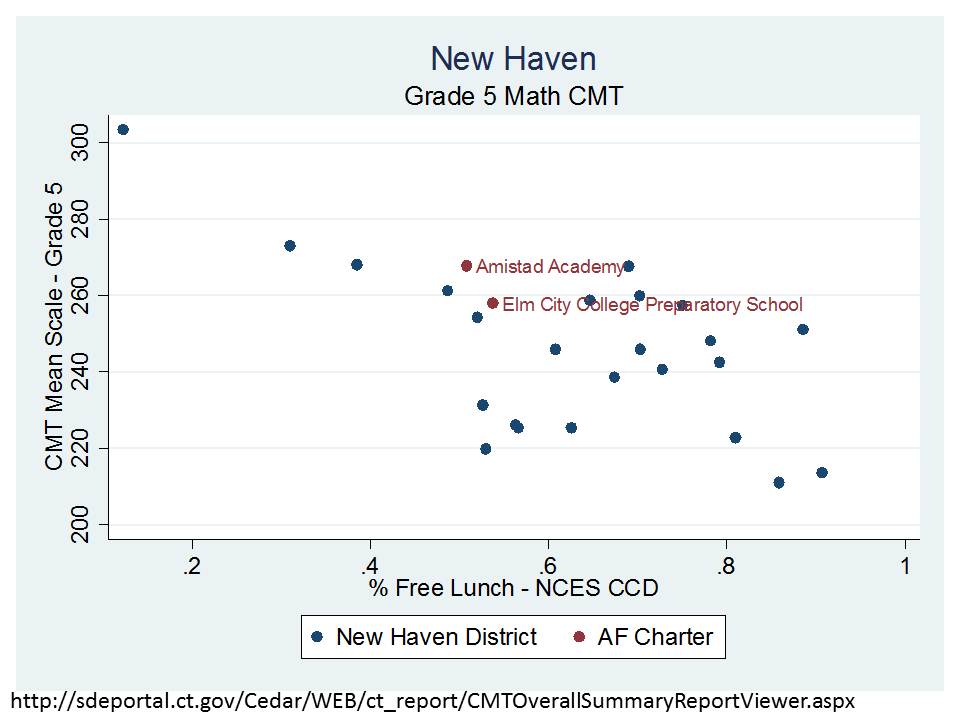

The final prong of the argument involves outcomes: the higher outcomes said to reflect beating the odds with the same kids and less money. Here are a few samples of the 5th grade math outcomes by district, focusing on the position of the Achievement First charter schools. I’ve graphed the school level 5th grade math 2010-11 Connecticut Mastery Test mean scale score by school level % Free Lunch (prior year). It’s important to understand that these charter schools not only have much lower % Free Lunch but also tend to have low ELL populations and much lower shares of enrollment with disabilities.

Here’s Hartford, where the Achievement First school looks so unlike nearly every Hartford public school reporting 5th grade math scores that it’s hard to even make a comparison. But, the two dots over near the Achievement First school do perform similarly.

Comparisons are comparably ridiculous in Bridgeport.

But more reasonable in New Haven! Even then, Amistad and Elm City Prep fall somewhat in line with New Haven schools serving similar % Free Lunch.

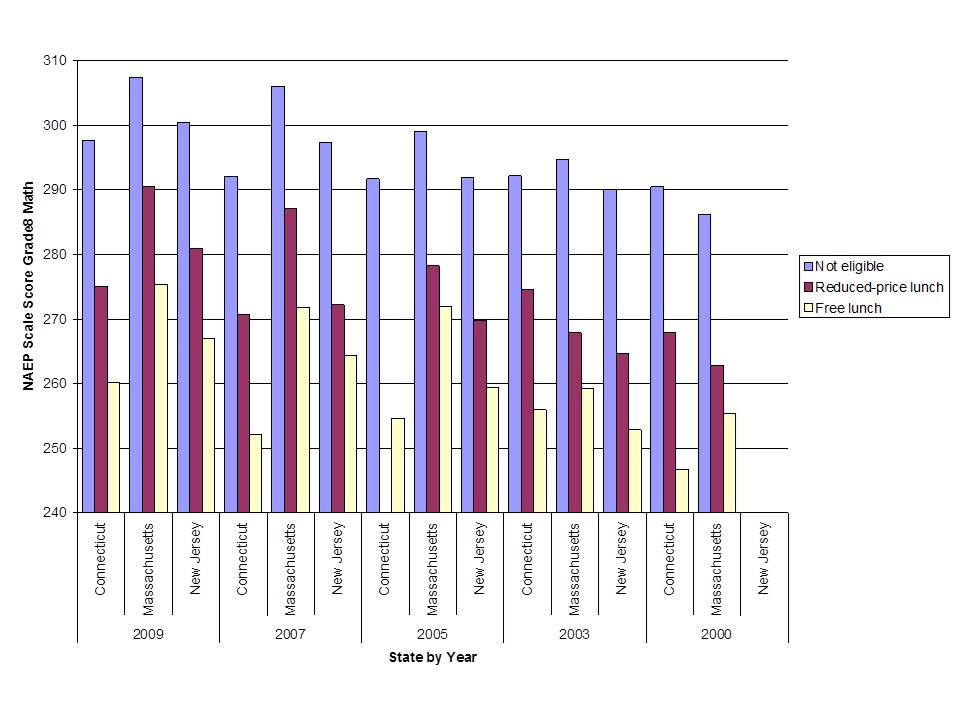

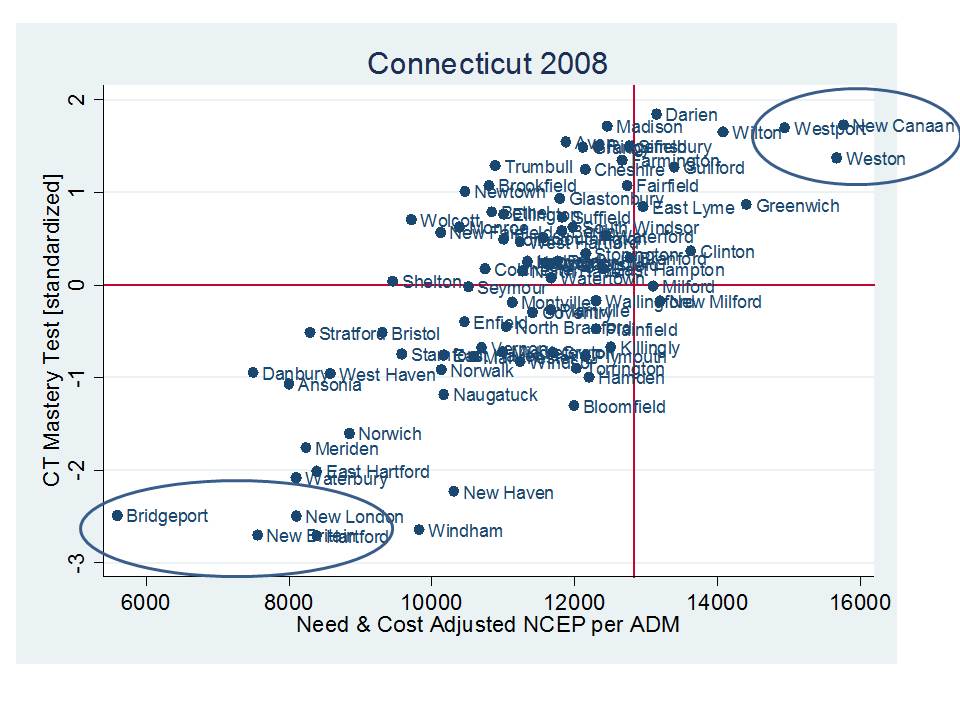

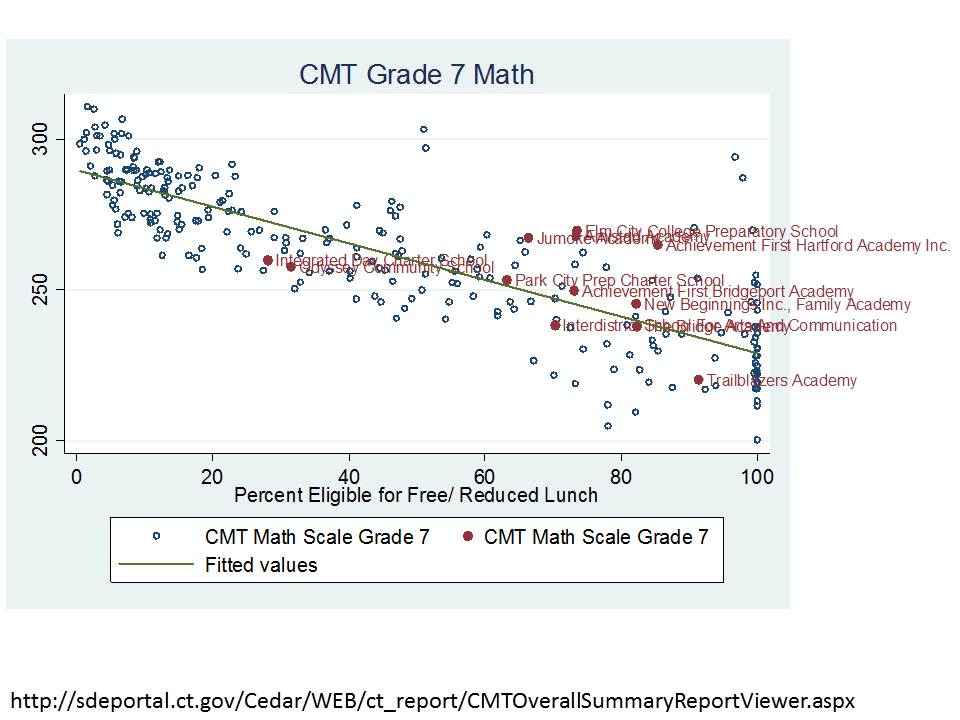

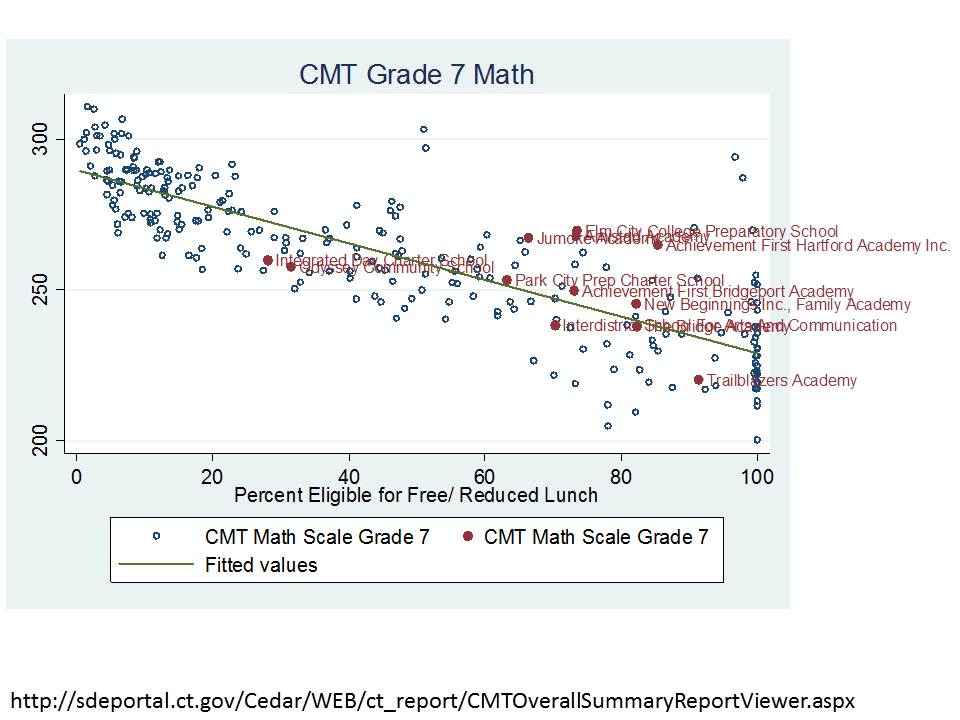

A statewide look at 7th grade math scores provides a better showing especially for Achievement First schools, but the analysis is hardly decisive. Note that this graph uses % Free or Reduced Lunch from CEDaR sources. Using such a high income threshold for low income status tends to mash schools in urban districts against the right hand side of the figure, removing some important variation. I’ll redo with % free if/when I get the chance. This graph includes schools statewide, including affluent suburban schools. Among the notable features of the graph is that low income status matters, whether for charter schools or for traditional district schools. Most fall along the trendline.

In this case, the Achievement First schools in particular have higher math mean scale scores than traditional public schools serving the same % Free Lunch, BUT… this DOES NOT ACCOUNT FOR THE ADDITIONAL DIFFERENCES IN ELL AND SPECIAL EDUCATION WHICH MAY (WILL) SUBSTANTIALLY INFLUENCE THESE COMPARISONS!

Perhaps most importantly, these scatterplots are essentially little more than descriptive comparisons of mean scale scores against schools similar on a single parameter (% Free Lunch). BUT, even this simple adjustment serves to undermine the current rhetoric in Connecticut, as I discussed in a previous post.
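The kind of single-parameter comparison behind these scatterplots can be sketched in a few lines of Python: fit a straight trendline of mean scale score on % Free Lunch, then look at how far each school sits above or below it. This is only an illustration under stated assumptions. The data points are made up, the function names are mine, and a one-variable line ignores the ELL and special education differences noted above.

```python
def fit_trendline(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def residuals(x, y, slope, intercept):
    """Actual score minus the score predicted by the trendline."""
    return [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]


# Hypothetical schools, for illustration only (not CMT or CEDaR data).
pct_free_lunch = [10.0, 30.0, 50.0, 70.0, 90.0]
mean_scale_score = [280.0, 265.0, 255.0, 240.0, 230.0]

slope, intercept = fit_trendline(pct_free_lunch, mean_scale_score)
resid = residuals(pct_free_lunch, mean_scale_score, slope, intercept)

for pct, r in zip(pct_free_lunch, resid):
    print(f"{pct:5.1f}% free lunch: {r:+.2f} points relative to trend")
```

A school with a positive residual scores above what its % Free Lunch alone would predict. That is a descriptive statement, not evidence of "beating the odds," since other student characteristics are left out of the fit.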

NOTE: Charter-District School Spending Comparisons & the Facilities Cost Issue

A study frequently cited by charter advocates, authored by researchers from Ball State University and Public Impact, compared the charter versus traditional public school funding deficits across states, rating states by the extent that they under-subsidize charter schools.[1] The authors identify no state or city where charter schools are fully, equitably funded. But simple direct comparisons between subsidies for charter schools and public districts can be misleading because public districts may still retain some responsibility for expenditures associated with charters that fall within their district boundaries or that serve students from their district. For example, under many state charter laws, host districts or sending districts retain responsibility for providing transportation services, subsidizing food services, or providing funding for special education services. Revenues provided to host districts to provide these services may show up on host district financial reports, and if the service is financed directly by the host district, the expenditure will also be incurred by the host, not the charter, even though the services are received by charter students. Drawing simple direct comparisons thus can result in a compounded error: Host districts are credited with an expense on children attending charter schools, but children attending charter schools are not credited to the district enrollment. In a per pupil spending calculation for the host districts, this may lead to inflating the numerator (district expenditures) while deflating the denominator (pupils served), thus significantly inflating the district’s per pupil spending. Concurrently, the charter expenditure is deflated.

Correct budgeting would reverse those two entries, essentially subtracting the expense from the budget calculated for the district, while adding the in-kind funding to the charter school calculation. Further, in districts like New York City, the city Department of Education incurs the expense for providing facilities to several charters. That is, the City’s budget, not the charter budgets, incurs another expense that serves only charter students. The Ball State/Public Impact study errs egregiously on all fronts, assuming in each and every case that the revenue reported by charter schools versus traditional public schools provides the same range of services and provides those services exclusively for the students in that sector (district or charter).
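To make the compounded error concrete, here is a small Python sketch that contrasts the naive calculation with the reallocation described above. All figures and names are hypothetical; the single `district_paid_for_charter_students` amount stands in for whatever transportation, food service, special education, or facilities spending a host district actually carries for charter students.

```python
def per_pupil(spending, enrollment):
    """Simple per-pupil spending."""
    return spending / enrollment


def corrected_per_pupil(district_spending, district_enrollment,
                        charter_spending, charter_enrollment,
                        district_paid_for_charter_students):
    """Move district-paid spending on charter students out of the district
    numerator and into the charter numerator, then divide by enrollment."""
    district = (district_spending - district_paid_for_charter_students) / district_enrollment
    charter = (charter_spending + district_paid_for_charter_students) / charter_enrollment
    return district, charter


# Hypothetical figures for illustration only.
district_spending = 100_000_000
district_enrollment = 8_000
charter_spending = 10_000_000
charter_enrollment = 1_000
district_paid_for_charter_students = 2_000_000

naive_district = per_pupil(district_spending, district_enrollment)
naive_charter = per_pupil(charter_spending, charter_enrollment)
corrected_district, corrected_charter = corrected_per_pupil(
    district_spending, district_enrollment,
    charter_spending, charter_enrollment,
    district_paid_for_charter_students,
)

print(f"Naive gap:     ${naive_district - naive_charter:,.0f} per pupil")
print(f"Corrected gap: ${corrected_district - corrected_charter:,.0f} per pupil")
```

In this made-up case the apparent district advantage shrinks from $2,500 to $250 per pupil once the spending is credited to the students who receive the services. The size of the shift in any real comparison depends entirely on the actual amounts involved.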

Charter advocates often argue that charters are most disadvantaged in financial comparisons because they must often pay facilities lease costs out of their annual operating budgets. Indeed it is true that charters are not afforded the ability to levy taxes to carry public debt to finance construction of facilities. But it is incorrect to assume when comparing expenditures that for traditional public schools, facilities are already paid for and have no associated costs, while charter schools must bear the burden of leasing at market rates: essentially an “all versus nothing” comparison. First, public districts do have ongoing maintenance and operations costs of facilities as well as payments on debt incurred for capital investment, including new construction and renovation. The average “capital outlay” expenditure of public school districts in 2008-09 was over $2,000 per pupil in New York State, nearly $2,000 per pupil in Texas and about $1,400 per pupil in Ohio. These figures are enrollment-weighted averages generated from the U.S. Census Bureau’s Fiscal Survey of Local Governments, Elementary and Secondary School Finances 2008-09 (variable tcapout): http://www2.census.gov/govs/school/elsec09t.xls
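For anyone replicating the state averages, an enrollment-weighted average simply lets large districts count in proportion to the number of pupils they serve. A minimal Python sketch follows, assuming per-pupil capital outlay has already been computed for each district. The two districts and their values are hypothetical, and the function name is mine.

```python
def enrollment_weighted_mean(per_pupil_values, enrollments):
    """Average of per-pupil values, weighting each district by enrollment."""
    total_enrollment = sum(enrollments)
    weighted_sum = sum(v * e for v, e in zip(per_pupil_values, enrollments))
    return weighted_sum / total_enrollment


# Hypothetical districts for illustration only (not Census data).
capital_outlay_per_pupil = [1000.0, 3000.0]
enrollment = [9_000, 1_000]

weighted = enrollment_weighted_mean(capital_outlay_per_pupil, enrollment)
unweighted = sum(capital_outlay_per_pupil) / len(capital_outlay_per_pupil)

print(f"Enrollment-weighted: ${weighted:,.0f} per pupil")
print(f"Simple average:      ${unweighted:,.0f} per pupil")
```

The weighted figure is the same as total capital outlay dollars divided by total enrollment across the districts, which is why it describes the average pupil and not the average district.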

Second, charter schools finance their facilities by a variety of mechanisms, with many in New York City operating in space provided by the city, many charters nationwide operating in space fully financed with private philanthropy, and many holding lease agreements for privately or publicly owned facilities. New York City is not alone in its choice to provide full facilities support for some charter school operators (http://www.thenotebook.org/blog/124517/district-cant-say-how-many-millions-its-spending-renaissance-charters). Thus, the common characterization that charter schools front 100% of facilities costs from operating budgets, with no public subsidy, while traditional public school facilities are “free” of any costs, is wrong in nearly every case, and in some cases there exists no facilities cost disadvantage whatsoever for charter operators.

Baker and Ferris (2011) point out that while the Ball State/Public Impact study claims that charter schools in New York State are severely underfunded, the New York City Independent Budget Office (IBO), in a more refined analysis focusing only on New York City charters (the majority of charters in the State), points out that charter schools housed within Board of Education facilities are comparably subsidized when compared with traditional public schools (2008-09). In revised analyses, the IBO found that co-located charters (in 2009-10) actually received more than city public schools, while charters housed in private space continued to receive less (after discounting occupancy costs).[1] That is, the funding picture around facilities is more nuanced than is often suggested.

Batdorff, M., Maloney, L., May, J., Doyle, D., & Hassel, B. (2010). Charter School Funding: Inequity Persists. Muncie, IN: Ball State University.

NYC Independent Budget Office (2010, February). Comparing the Level of Public Support: Charter Schools versus Traditional Public Schools. New York: Author, 1

NYC Independent Budget Office (2011) Charter Schools Housed in the City’s School Buildings get More Public Funding per Student than Traditional Public Schools. http://ibo.nyc.ny.us/cgi-park/?p=272

NYC Independent Budget Office (2011) Comparison of Funding Traditional Schools vs. Charter Schools: Supplement http://www.ibo.nyc.ny.us/iboreports/chartersupplement.pdf

Additional Figures

Administrative expenses in charters often include facilities lease agreements in addition to any recruitment/marketing expenses and growth/expansion.