Modern state school finance formulas – aid distribution formulas – typically strive (but fail) to achieve two simultaneous objectives: 1) accounting for differences in the costs of achieving equal educational opportunity across schools and districts, and 2) accounting for differences in the ability of local public school districts to cover those costs. Local district ability to raise revenues might be a function of local taxable property wealth, of the incomes of local property owners (and thus their ability to pay taxes on their properties), or of both.

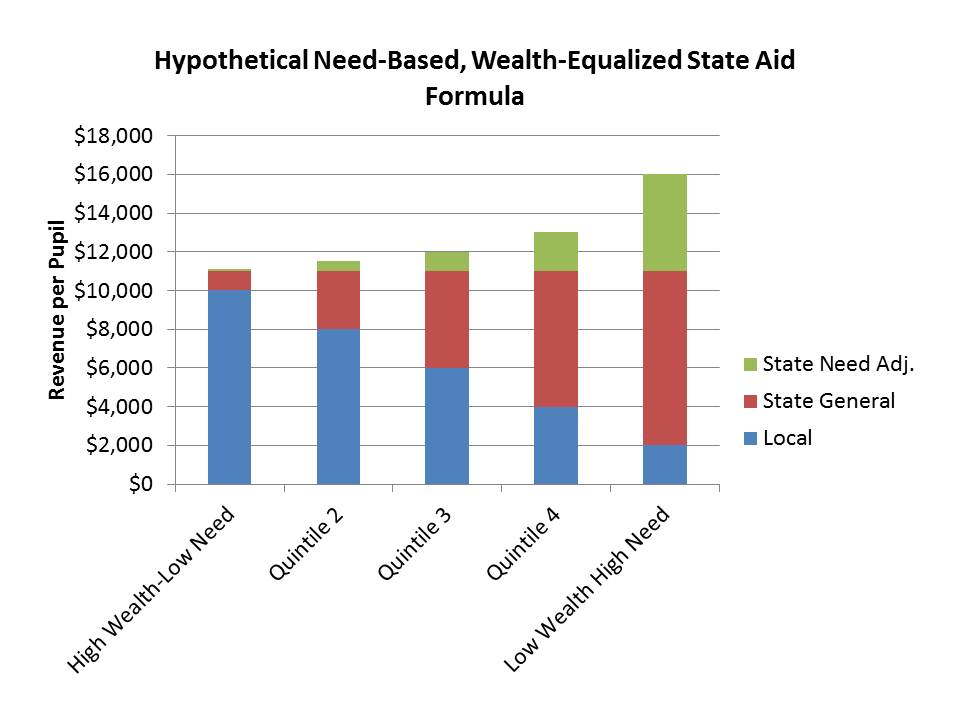

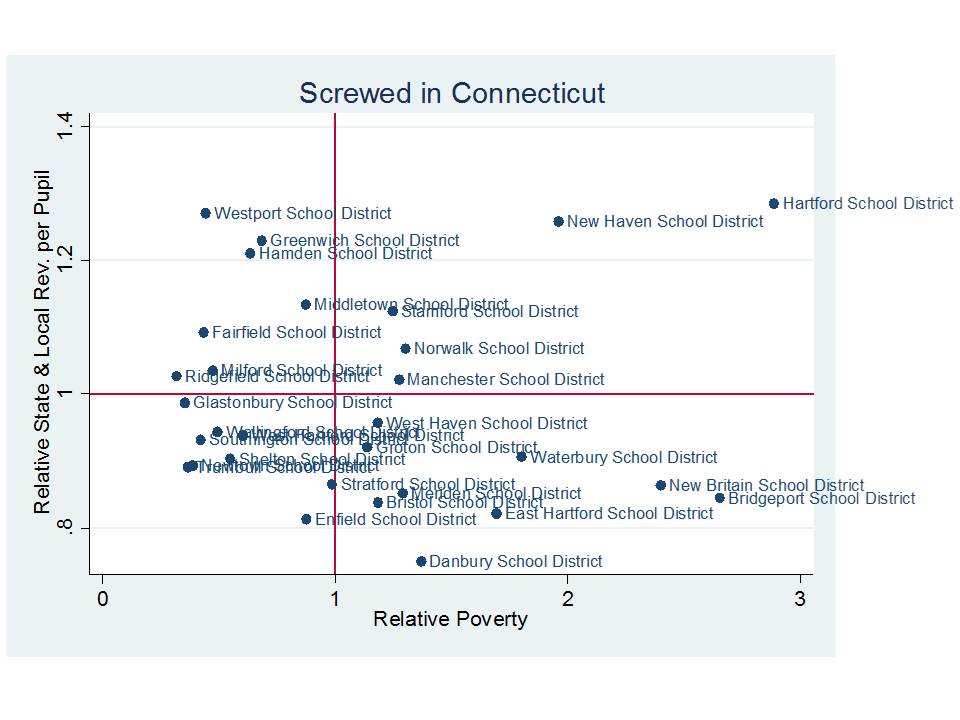

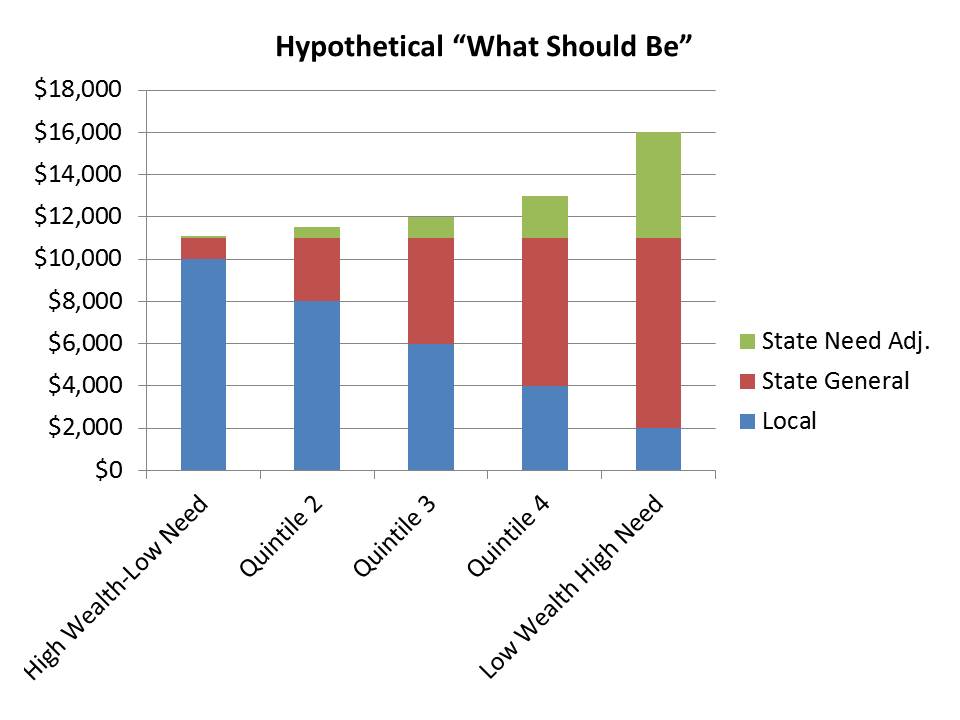

Figure 1 presents a hypothetical example of the distribution of state and local revenue per pupil across school districts, sorted by poverty concentration. The hypothetical relies on the simplified assumption that districts with weaker local revenue raising capacity also tend to be higher in poverty concentration. While that’s not uniformly true, there is often at least some correlation between the two, and the assumption serves to make this hypothetical a bit more straightforward. Accepting this oversimplified characterization, Figure 1 shows that the typical low poverty and high local fiscal capacity district would likely raise the vast majority of the cost of providing its children with equal educational opportunity through local tax dollars. There may be some small share of state general aid, assuming that the total cost of providing equal educational opportunity exceeds the local resources raised with a fair tax rate.

Figure 1

This pattern is usually arrived at (if it is arrived at) through some overly complicated formula requiring multiple inefficiently and illogically laid out spreadsheets of calculations and based on measures for which each state chooses its own, completely distinct and unrecognizable nomenclature. A short version might go as follows:

Step 1 – determine target funding level (need & cost adjusted foundation level) per pupil for each district

Target Funding per Pupil = Foundation Level x Student Need Adjustments x Geographic Cost Adjustments

Here, the foundation level is some specified per pupil dollar amount. Student need adjustments include adjustments for individual student educational needs, as for children with limited English language proficiency and children with one or more disabilities, and for collective characteristics of the student population such as poverty, homelessness and/or mobility/transiency rates. Geographic cost adjustments reflect geographic variations in competitive wages, and factors such as economies of scale and population sparsity.

Step 2 – determine the share of target funding to be raised by local communities

State Aid per Pupil = Target Funding per Pupil – Local Fair Share

Yep. That’s it. Student needs and costs are accommodated in Step 1, and differences in local wealth and/or capacity to pay are accommodated in Step 2! Now convert that into about 2,000+ separate calculations and create incomprehensible names for each measure (like calling a weight on “low income students” a “student success factor”) and you’ve got a state school finance formula.
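The two steps above can be sketched in a few lines of Python. This is only an illustrative sketch, not any state's actual formula. All names and dollar figures are made up for the example. Two pieces are assumptions that the text does not spell out: the local fair share is computed as a fair tax rate applied to taxable property wealth per pupil, and state aid is floored at zero.

```python
from dataclasses import dataclass


@dataclass
class District:
    """A hypothetical district; every field here is illustrative."""
    name: str
    need_adjustment: float           # student need index, 1.0 = average need
    cost_adjustment: float           # geographic cost index, 1.0 = average cost
    taxable_wealth_per_pupil: float  # local taxable property wealth per pupil


def target_funding_per_pupil(foundation_level: float, d: District) -> float:
    # Step 1: foundation level x student need adjustments x geographic cost adjustments
    return foundation_level * d.need_adjustment * d.cost_adjustment


def local_fair_share_per_pupil(fair_tax_rate: float, d: District) -> float:
    # Assumption: local fair share = fair tax rate x taxable wealth per pupil.
    # Real formulas may also factor in local income.
    return fair_tax_rate * d.taxable_wealth_per_pupil


def state_aid_per_pupil(foundation_level: float, fair_tax_rate: float, d: District) -> float:
    # Step 2: state aid = target funding - local fair share.
    # Assumption: aid is floored at zero rather than going negative.
    target = target_funding_per_pupil(foundation_level, d)
    local = local_fair_share_per_pupil(fair_tax_rate, d)
    return max(0.0, target - local)


if __name__ == "__main__":
    foundation = 10_000.0  # hypothetical per pupil foundation level
    fair_rate = 0.01       # hypothetical fair tax rate (1% of taxable wealth)
    districts = [
        District("Low poverty, high wealth", 1.0, 1.0, 900_000.0),
        District("High poverty, low wealth", 1.4, 1.0, 200_000.0),
    ]
    for d in districts:
        target = target_funding_per_pupil(foundation, d)
        aid = state_aid_per_pupil(foundation, fair_rate, d)
        print(f"{d.name}: target ${target:,.0f}, state aid ${aid:,.0f}")
```

With these made-up inputs, the low poverty district covers most of its target locally and draws only a small amount of state aid, while the high poverty district has a higher target and receives most of it from the state, which is the pattern described for Figure 1.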

But I digress.

Implicit in the design of state school finance systems is that money may be leveraged for improving both the measured and unmeasured outcomes of children. That is, money matters to the quality of schooling that can be provided in general, and money matters toward the provision of special services for children with greater educational needs. In short, money can be an equalizer of educational opportunity.

In a typical foundation aid formula, it is implied that a foundation level of “X” should be sufficient for producing a given level of student outcomes in an average school district. It is then assumed that if one wishes to produce a higher level of outcomes, the foundation level should be increased. In short, it costs more to achieve higher outcomes[1] and the foundation level in a state school finance formula is the tool used for determining the overall level of support to be provided.

Further, it is assumed that resource levels may be adjusted in order to permit districts in different parts of the state to recruit and retain teachers of comparable quality. That is, the wages paid to teachers affect who will be willing to work in any given school. In other words, teacher wages affect teacher quality and in turn they affect school quality and student outcomes. This is plain common sense, and this teacher wage effect operates at two levels. First, in general, teacher wages must be sufficiently competitive with other career opportunities for similarly educated individuals. The overall competitiveness of teacher wages affects the overall academic quality of those who choose to enter teaching.[2] Second, the relative wages for teachers across local public school districts determine the distribution of teaching quality.[3] Districts with more favorable working conditions (more desirable facilities, fewer low income and minority students) can pay a lower wage and attract the same teacher. Wages matter; therefore, money matters.

Finally, those student need adjustments in state school finance formulas assume that the additional resources can be leveraged to improve outcomes for low income students, or students with limited English language proficiency. First, note that some share of the additional resources is needed in higher poverty settings simply to provide for “real resource” equity – or to pay the wage premium for doing the more complicated job. Second, resource intensive strategies such as reduced class sizes in the early grades, high quality (using qualified teaching staff)[4] early childhood programs, intensive tutoring and extended learning time programs may significantly improve outcomes of low income students. And these strategies all come with significant additional costs (even when adopted under the veil of “no excuses charterdom”).

But, because providing more money to support public schools often means raising more tax dollars and because providing supplemental resources to children whose own communities may lack local revenue raising capacity often means more aggressive redistribution of state tax revenues, whether and how money matters in education is often hotly politically contested.

School finance is a political minefield, which is arguably why so many pundits have tried to distract from school finance issues by advancing ludicrous arguments: that education equity and overall quality can be improved by altering teacher labor markets via statistical deselection without ever addressing funding deficiencies and wage disparities, or by expanding charter schooling while ignoring the role of philanthropic contributions (and counting on them). Unfortunately for those political pundits, school finance is a minefield they must eventually walk through if they ever expect to make real progress in resolving quality or equity concerns.

In a recent report titled Revisiting the Age Old Question: Does Money Matter in Education?[5] I review the controversy over whether, how and why money matters in education, evaluating the current political rhetoric in light of decades of empirical research. I ask three questions, and summarize the response to those questions as follows:

Does money matter? Yes. On average, aggregate measures of per pupil spending are positively associated with improved or higher student outcomes. In some studies, the size of this effect is larger than in others and, in some cases, additional funding appears to matter more for some students than others. Clearly, there are other factors that may moderate the influence of funding on student outcomes, such as how that money is spent – in other words, money must be spent wisely to yield benefits. But, on balance, in direct tests of the relationship between financial resources and student outcomes, money matters.

Do schooling resources that cost money matter? Yes. Schooling resources which cost money, including class size reduction or higher teacher salaries, are positively associated with student outcomes. Again, in some cases, those effects are larger than others and there is also variation by student population and other contextual variables. On the whole, however, the things that cost money benefit students, and there is scarce evidence that there are more cost-effective alternatives.

Do state school finance reforms matter? Yes. Sustained improvements to the level and distribution of funding across local public school districts can lead to improvements in the level and distribution of student outcomes. While money alone may not be the answer, more equitable and adequate allocation of financial inputs to schooling provides a necessary underlying condition for improving the equity and adequacy of outcomes. The available evidence suggests that appropriate combinations of more adequate funding with more accountability for its use may be most promising.

While there may in fact be better and more efficient ways to leverage the education dollar toward improved student outcomes, we do know the following:

- Many of the ways in which schools currently spend money do improve student outcomes.

- When schools have more money, they have greater opportunity to spend productively. When they don’t, they can’t.

- Arguments that across-the-board budget cuts will not hurt outcomes are completely unfounded.

In short, money matters, resources that cost money matter and more equitable distribution of school funding can improve outcomes. Policymakers would be well-advised to rely on high-quality research to guide the critical choices they make regarding school finance.

Regarding the politicized rhetoric around money and schools, which has become only more bombastic and less accurate in recent years, I explain the following:

Given the preponderance of evidence that resources do matter and that state school finance reforms can effect changes in student outcomes, it seems somewhat surprising that not only has doubt persisted, but the rhetoric of doubt seems to have escalated. In many cases, there is no longer just doubt, but rather direct assertions that: schools can do more than they are currently doing with less than they presently spend; the suggestion that money is not a necessary underlying condition for school improvement; and, in the most extreme cases, that cuts to funding might actually stimulate improvements that past funding increases have failed to accomplish.

To be blunt, money does matter. Schools and districts with more money clearly have greater ability to provide higher-quality, broader, and deeper educational opportunities to the children they serve. Furthermore, in the absence of money, or in the aftermath of deep cuts to existing funding, schools are unable to do many of the things they need to do in order to maintain quality educational opportunities. Without funding, efficiency tradeoffs and innovations being broadly endorsed are suspect. One cannot trade off spending money on class size reductions against increasing teacher salaries to improve teacher quality if funding is not there for either – if class sizes are already large and teacher salaries non-competitive. While these are not the conditions faced by all districts, they are faced by many.

It is certainly reasonable to acknowledge that money, by itself, is not a comprehensive solution for improving school quality. Clearly, money can be spent poorly and have limited influence on school quality. Or, money can be spent well and have substantive positive influence. But money that’s not there can’t do either. The available evidence leaves little doubt: Sufficient financial resources are a necessary underlying condition for providing quality education.

There certainly exists no evidence that equitable and adequate outcomes are more easily attainable where funding is neither equitable nor adequate. There exists no evidence that more adequate outcomes will be attained with less adequate funding. Both of these contentions are unfounded and, quite honestly, completely absurd.

[1] Duncombe, W., Yinger, J.M. (1999) Performance Standards and Education Cost Indexes: You Can’t Have One Without the Other. In H.F. Ladd, R. Chalk, and J.S. Hansen (Eds.), Equity and Adequacy in Education Finance: Issues and Perspectives (pp. 260-297). Washington, DC: National Academy Press.

[2] Allegretto, S.A., Corcoran, S.P., Mishel, L.R. (2008) The Teaching Penalty: Teacher Pay Losing Ground. Washington, DC: Economic Policy Institute. Murnane, R.J., Olsen, R. (1989) The Effects of Salaries and Opportunity Costs on Length of Stay in Teaching: Evidence from Michigan. Review of Economics and Statistics 71 (2) 347-352. Figlio, D.N. (2002) Can Public Schools Buy Better-Qualified Teachers? Industrial and Labor Relations Review 55, 686-699. Figlio, D.N. (1997) Teacher Salaries and Teacher Quality. Economics Letters 55, 267-271. Ferguson, R. (1991) Paying for Public Education: New Evidence on How and Why Money Matters. Harvard Journal on Legislation 28 (2) 465-498. Loeb, S., Page, M. (2000) Examining the Link Between Teacher Wages and Student Outcomes: The Importance of Alternative Labor Market Opportunities and Non-Pecuniary Variation. Review of Economics and Statistics 82 (3) 393-408. Figlio, D.N., Rueben, K. (2001) Tax Limits and the Qualifications of New Teachers. Journal of Public Economics, April, 49-71.

[3] Ondrich, J., Pas, E., Yinger, J. (2008) The Determinants of Teacher Attrition in Upstate New York. Public Finance Review 36 (1) 112-144. Lankford, H., Loeb, S., Wyckoff, J. (2002) Teacher Sorting and the Plight of Urban Schools. Educational Evaluation and Policy Analysis 24 (1) 37-62. Clotfelter, C., Ladd, H.F., Vigdor, J. (2011) Teacher Mobility, School Segregation and Pay Based Policies to Level the Playing Field. Education Finance and Policy 6 (3) 399-438. Clotfelter, C.T., Glennie, E., Ladd, H.F., Vigdor, J.L. (2008) Would Higher Salaries Keep Teachers in High-Poverty Schools? Evidence from a Policy Intervention in North Carolina. Journal of Public Economics 92, 1352-1370.

[5] Baker, B.D. (2012) Revisiting the Age Old Question: Does Money Matter in Education? Shanker Institute. http://www.shankerinstitute.org/images/doesmoneymatter_final.pdf