While I fully understand that state education agencies are fast becoming propaganda machines, I’m increasingly concerned with how far this will go. Yes, under NCLB, state education agencies concocted completely wrongheaded school classification schemes that had little or nothing to do with actual school quality, and in rare cases, used those policies to enforce substantive sanctions on schools. But, I don’t recall many state officials going to great lengths to prove the worth – argue the validity – of these systems. Yeah… there were sales-pitchy materials alongside technical manuals for state report cards, but I don’t recall such a strong push to advance completely false characterizations of the measures. Perhaps I’m wrong. But either way, this brings me to today’s post.

I am increasingly concerned with at least some state officials’ misguided rhetoric promoting policy initiatives built on information that is either knowingly suspect, or simply conceptually wrong/inappropriate.

Specifically, the rhetoric around adoption of measures of teacher effectiveness has become driven largely by soundbites that in many cases are simply factually WRONG.

As I’ve explained before…

- With value added modeling, which does attempt to parse statistically the relationship between a student being assigned to teacher X and that student’s achievement growth, controlling for various characteristics of the student and the student’s peer group, there still exists a substantial possibility of random-error based mis-classification of the teacher or remaining bias in the teacher’s classification (something the model didn’t catch affected that teacher’s estimate). And there’s little way of knowing what’s what.

- With student growth percentiles, there is no attempt to parse statistically the relationship between a student being assigned a particular teacher and the teacher’s supposed responsibility for that student’s change among her peers in test score percentile rank.
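To make the second point concrete, here is a minimal sketch of what a growth percentile calculation amounts to. It is a deliberate simplification: operational systems estimate percentiles with quantile regression on prior scores, whereas this toy version simply ranks each student among peers with an identical prior score. The function names and the ten made-up students are illustrative assumptions, not any state’s actual method or data.

```python
from collections import defaultdict
from statistics import median

def growth_percentile(student, everyone):
    """Percentile rank of a student's current score among students
    who started from the same prior score (toy stand-in for an SGP)."""
    same_start = [s["current"] for s in everyone if s["prior"] == student["prior"]]
    below = sum(1 for c in same_start if c < student["current"])
    ties = sum(1 for c in same_start if c == student["current"])
    return 100 * (below + 0.5 * ties) / len(same_start)

def teacher_mgps(everyone):
    """Median growth percentile of the students attached to each teacher."""
    by_teacher = defaultdict(list)
    for s in everyone:
        by_teacher[s["teacher"]].append(growth_percentile(s, everyone))
    return {t: median(v) for t, v in by_teacher.items()}

# Hypothetical students: two starting points, two teachers.
toy_students = [
    {"teacher": "A", "prior": 200, "current": 205},
    {"teacher": "A", "prior": 200, "current": 210},
    {"teacher": "A", "prior": 200, "current": 215},
    {"teacher": "B", "prior": 200, "current": 220},
    {"teacher": "B", "prior": 200, "current": 225},
    {"teacher": "A", "prior": 300, "current": 295},
    {"teacher": "A", "prior": 300, "current": 300},
    {"teacher": "B", "prior": 300, "current": 305},
    {"teacher": "B", "prior": 300, "current": 310},
    {"teacher": "B", "prior": 300, "current": 315},
]

toy_mgps = teacher_mgps(toy_students)
print(toy_mgps)
```

Notice what is absent. Nothing in the calculation asks why a student landed where she did among her peers. The teacher label is used only to decide whose median to take.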

This article explains these issues in great detail.

And this video may also be helpful.

Matt Di Carlo has written extensively about the question of whether and how well value-added models actually accomplish their goal of fully controlling for student backgrounds.

Sound Bites don’t Validate Bad or Wrong Measures!

So, let’s take a look at some of the rhetoric that’s flying around out there and why and how it’s WRONG.

New Jersey has recently released its new regulations for implementing teacher evaluation policies, with heavy reliance on student growth percentile scores, ultimately aggregated to the teacher level as median growth percentiles. When challenged about whether those growth percentile scores will accurately represent teacher effectiveness, specifically for teachers serving kids from different backgrounds, NJ Commissioner Christopher Cerf explains:

“You are looking at the progress students make and that fully takes into account socio-economic status,” Cerf said. “By focusing on the starting point, it equalizes for things like special education and poverty and so on.” (emphasis added)

Here’s the thing about that statement. Well, two things. First, the comparisons of individual students don’t actually explain what happens when a group of students is aggregated to their teacher and the teacher is assigned the median student’s growth score to represent his/her effectiveness, where teachers don’t all have an evenly distributed mix of kids who started at similar points (to other teachers). So, in one sense, this statement doesn’t even address the issue.

More importantly, however, this statement is simply WRONG!

There’s little or no research to back this up, save for early claims by William Sanders and colleagues in the 1990s, in early applications of value added modeling which excluded covariates. Likely, those cases where covariates have been found to have only small effects are cases in which those effects are drowned out by noise or other bias resulting from underlying test scaling (or re-scaling) issues – or alternatively, crappy measurement of the covariates. Here’s an example of the stepwise effects of adding covariates on teacher ratings.

Consider that one year’s assessment is given in April. The school year ends in late June. The next year’s test is given the next April. First, and tangential (to the covariate issue… but still important), there are approximately two months of instruction given by the prior year’s teacher that are attributed to the current year’s teacher. Beyond that, there are a multitude of things that go on outside of the few hours a day where the teacher has contact with a child, that influence any given child’s “gains” over the year, and those things that go on outside of school vary widely by children’s economic status. Further, children with certain life experiences on a continued daily/weekly/monthly basis are more likely to be clustered with each other in schools and classrooms.

With annual test scores, differences in summer experiences (slide 20), which vary by student economic background, matter. Differences in home settings and access to home resources matter. Differences in access to outside-of-school tutoring and other family-subsidized supports may matter, and depend on family resources. Variations in kids’ daily lives more generally matter (neighborhood violence, etc.) and many of those variations exist as a function of socio-economic status.

Variations in the peer group with whom children attend school matter too. Those variations also depend on neighborhood structure and conditions, and on the socio-economic status of not just the individual child, but the group of children. (citations and examples available in this slide set)

In short, it is patently false to suggest that using the same starting point “fully takes into account socio-economic status.”

It’s certainly false to make such a statement about aggregated group comparisons – especially while never actually conducting or producing publicly any analysis to back such a ridiculous claim.
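The logic can be checked with a small simulation. What follows is a sketch under stated assumptions, not an analysis of New Jersey data: every number in it (score means, the size of the poverty-related drag on gains, classroom composition) is invented for illustration. Both classrooms get an identical teacher contribution by construction, and students are compared only with peers in the same prior-score band, which is the “same starting point” idea.

```python
import random
from bisect import bisect_left, bisect_right
from collections import defaultdict
from statistics import median

def simulate(n_per_class=1000, seed=0):
    """Synthetic students in two classrooms with IDENTICAL teacher effects."""
    rng = random.Random(seed)
    students = []
    for classroom, share_poor in (("high_poverty", 0.8), ("low_poverty", 0.2)):
        for _ in range(n_per_class):
            poor = rng.random() < share_poor
            prior = rng.gauss(45 if poor else 50, 10)
            # Assumption: out-of-school factors cost poor students 5 points
            # of annual gain; the teacher adds 10 points in both classrooms.
            gain = 10 + (-5 if poor else 0) + rng.gauss(0, 5)
            students.append({"class": classroom, "prior": prior,
                             "current": prior + gain})
    return students

def median_growth_percentiles(students, band_width=5):
    """Rank each student within a prior-score band, then take class medians."""
    bands = defaultdict(list)
    for s in students:
        bands[round(s["prior"] / band_width)].append(s["current"])
    for scores in bands.values():
        scores.sort()
    by_class = defaultdict(list)
    for s in students:
        scores = bands[round(s["prior"] / band_width)]
        below = bisect_left(scores, s["current"])
        ties = bisect_right(scores, s["current"]) - below
        by_class[s["class"]].append(100 * (below + 0.5 * ties) / len(scores))
    return {c: median(v) for c, v in by_class.items()}

mgp = median_growth_percentiles(simulate())
print(mgp)
```

Under these assumptions, the low-poverty classroom comes out with the higher median growth percentile even though the two teachers are, by design, exactly equally effective. Matching on starting point does nothing about what happens to kids between tests.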

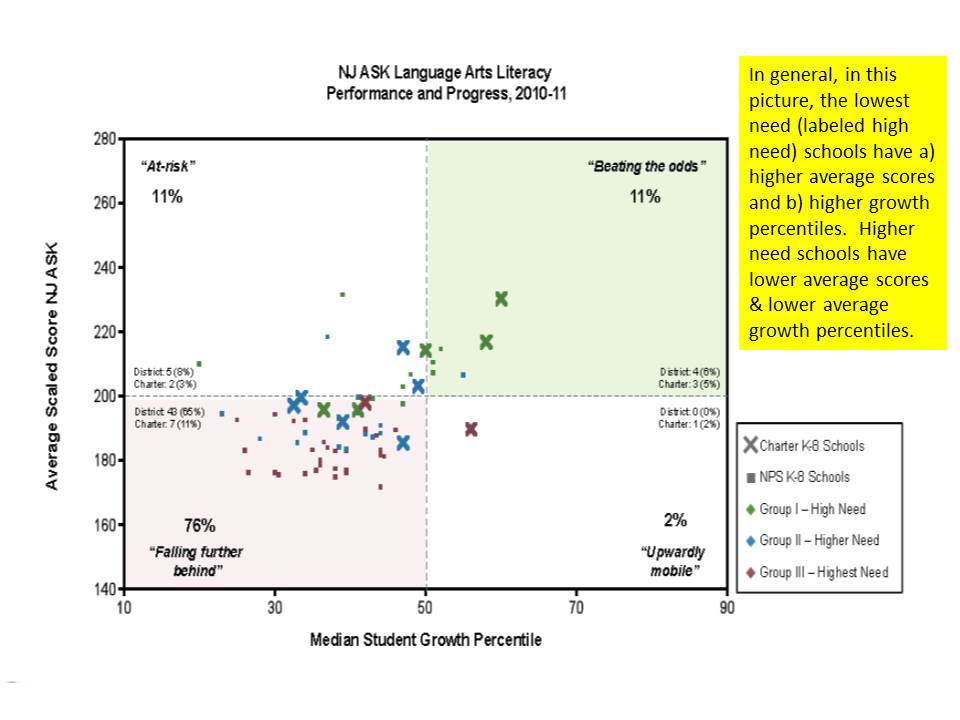

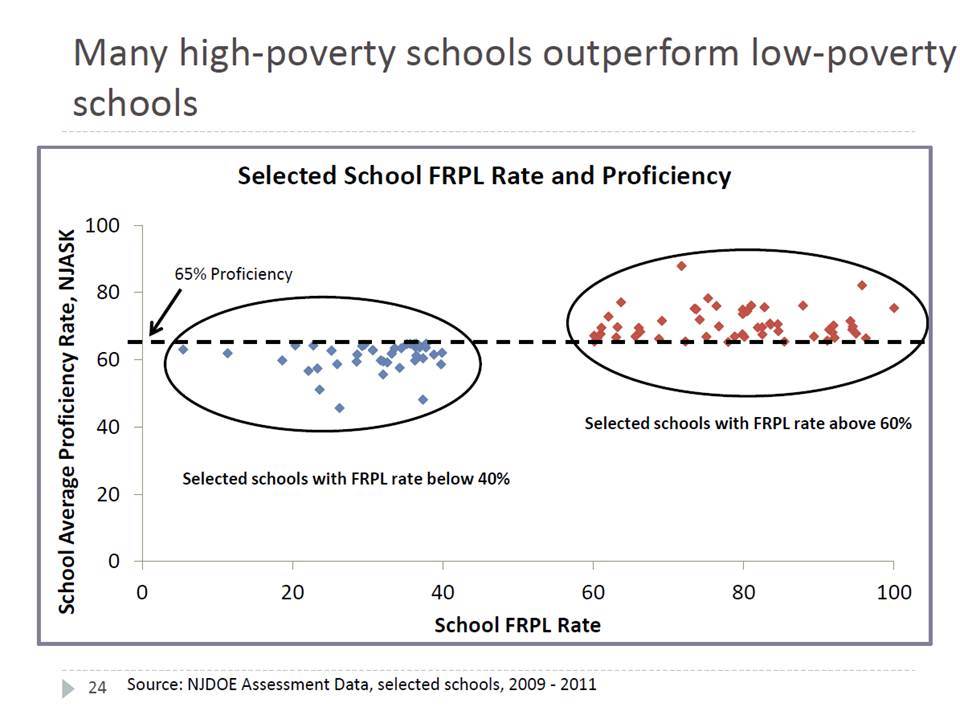

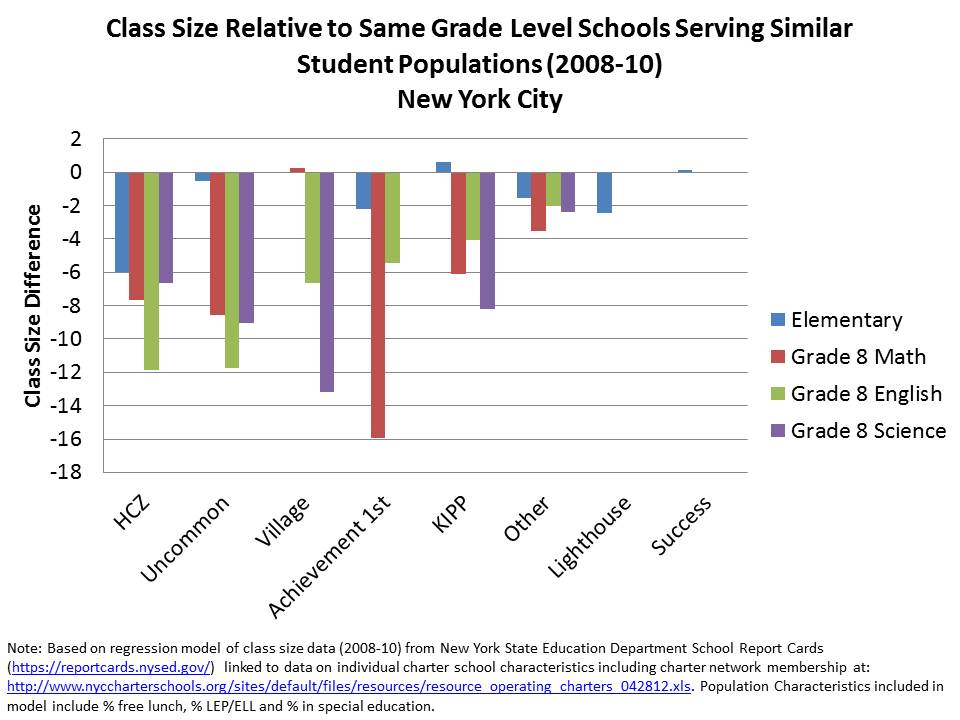

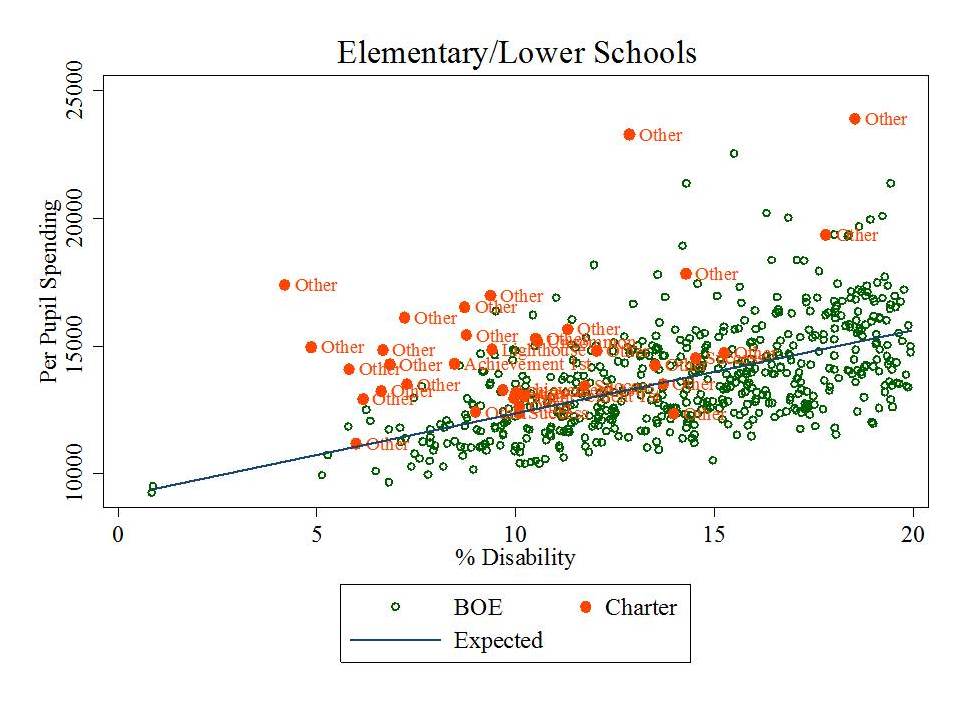

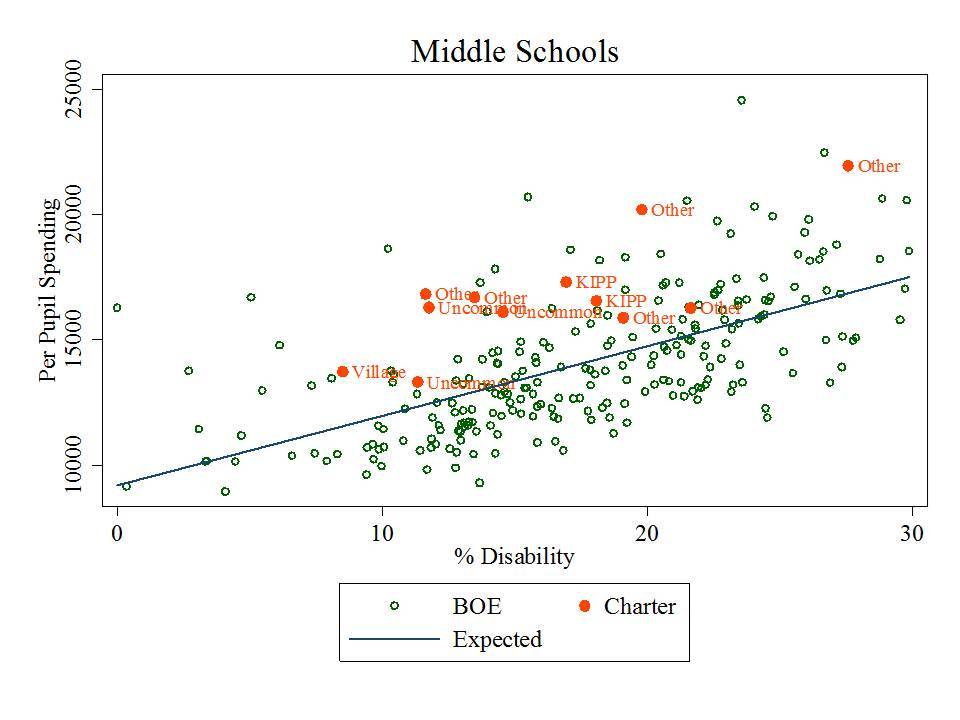

For lack of any larger available analysis of aggregated (teacher or school level) NJ growth percentile data, I stumbled across this graph from a Newark Public Schools presentation a short while back.

http://www.njspotlight.com/assets/12/1212/2110

Interestingly, what this graph shows is that the average score level in schools is somewhat positively associated with the median growth percentile, even within Newark where variation is relatively limited. In other words, schools with higher average scores appear to achieve higher gains. Peer group effect? Maybe. Underlying test scaling effect? Maybe. Don’t know. Can’t know.
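If school-level (or teacher-level) files were ever released, the first check is trivial: correlate the average score level with the median growth percentile. A minimal sketch follows. The two short lists are made-up placeholder values that exist only to show the call; they are not Newark’s numbers.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Plain Pearson correlation between two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Hypothetical placeholder values, one entry per school.
toy_avg_score = [190, 195, 200, 205, 210]
toy_mgp = [40, 45, 43, 52, 55]

toy_r = pearson_r(toy_avg_score, toy_mgp)
print(round(toy_r, 2))
```

A correlation near zero is what a growth measure that truly “equalizes” for starting point and background should produce. A clearly positive one calls for explanation before anyone’s job depends on the number.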

The graph provides another dimension that is also helpful. It identifies lower and higher need schools – where the schools labeled “high need” are actually the lowest need in the mix. They have the highest average scores, and the highest growth percentiles. And this is on the English/language arts assessment; math assessments tend to reveal even stronger correlations of this kind.

Now, state officials might counter that this pattern actually occurs because of the distribution of teaching talent… and has nothing to do with model failure to capture differences in student backgrounds. All of the great teachers are in those lower need, higher average performing schools! Thus, fire the others, and they’ll be awesome too! There is no basis for such a claim given that the model makes no attempt beyond prior score to capture student background.

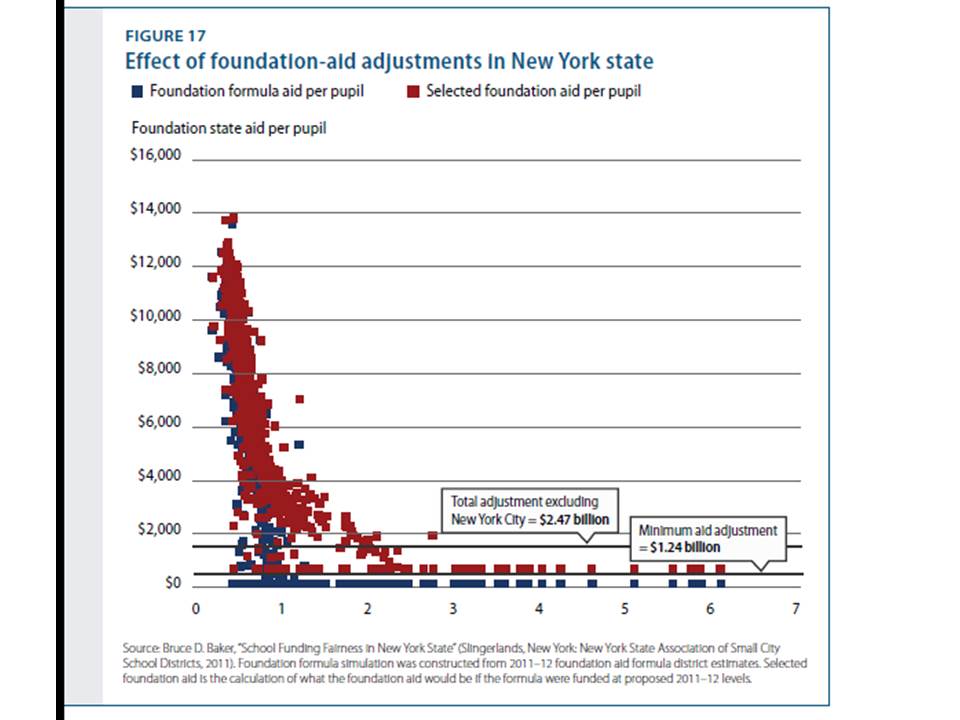

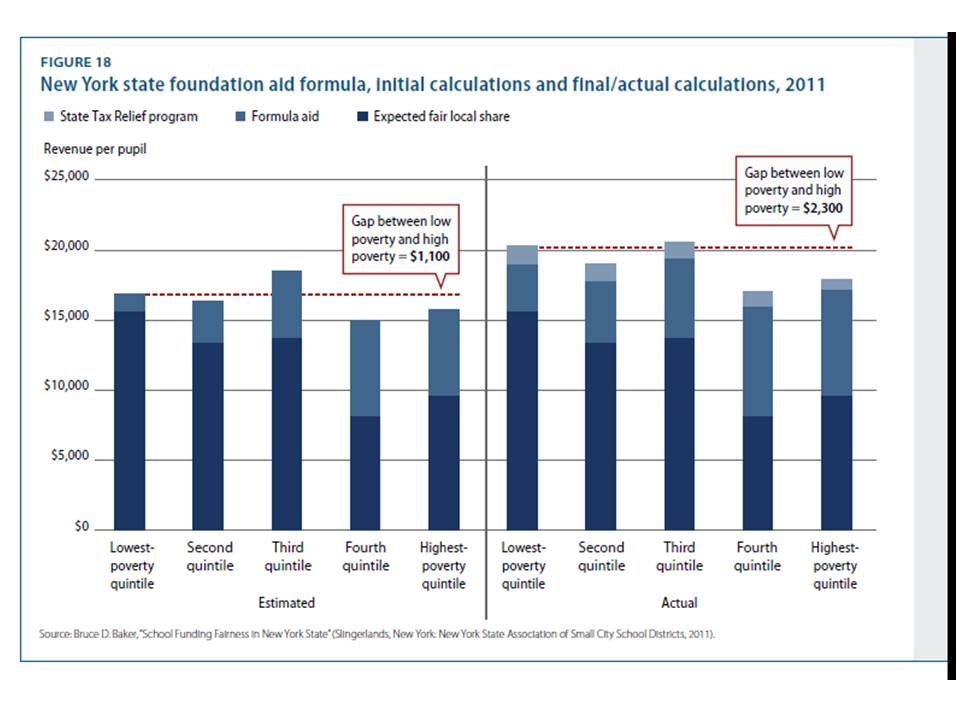

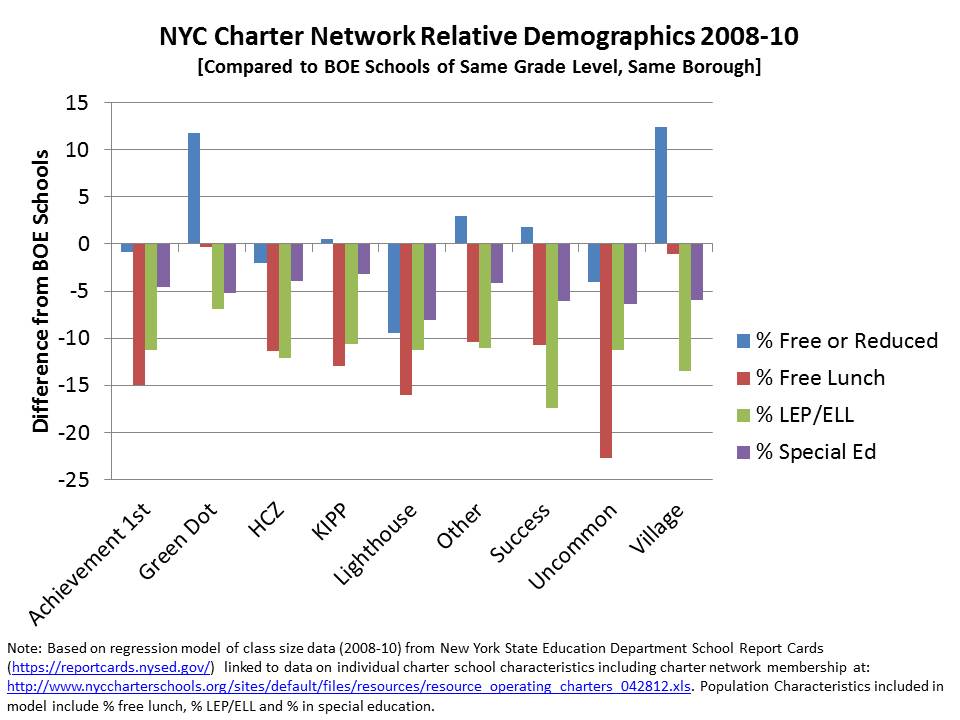

Then there’s New York State, where similar rhetoric has been pervasive in the state’s push to get local public school districts to adopt state-compliant teacher evaluation provisions in contracts, and to base those evaluations largely on state-provided growth percentile measures. Notably, New York State, unlike New Jersey, actually realized that the growth percentile data required adjustment for student characteristics. So they tried to produce adjusted measures. It just didn’t work.

In a New York Post op-ed, the Chancellor of the Board of Regents opined:

The student-growth scores provided by the state for teacher evaluations are adjusted for factors such as students who are English Language Learners, students with disabilities and students living in poverty. When used right, growth data from student assessments provide an objective measurement of student achievement and, by extension, teacher performance. http://www.nypost.com/p/news/opinion/opedcolumnists/for_nyc_students_move_on_evaluations_EZVY4h9ddpxQSGz3oBWf0M

So, what’s wrong with that? Well… mainly… that it’s… WRONG!

First, as I elaborate below, the state’s own technical report on their measures found that they were in fact not an unbiased measure of teacher or principal performance:

Despite the model conditioning on prior year test scores, schools and teachers with students who had higher prior year test scores, on average, had higher MGPs. Teachers of classes with higher percentages of economically disadvantaged students had lower MGPs. (p. 1) https://schoolfinance101.com/wp-content/uploads/2012/11/growth-model-11-12-air-technical-report.pdf

That said, the Chancellor has cleverly chosen her words. Yes, it’s adjusted… but the adjustment doesn’t work. Yes, they are an objective measure. But they are still wrong. They are a measure of student achievement. But not a very good one.

But they are not by any stretch of the imagination, by extension, a measure of teacher performance. You can call them that. You can declare them that in regulations. But they are not.

To ice this reformy cake in New York, the Commissioner of Education has declared in letters to individual school districts regarding their evaluation plans, that any other measure they choose to add alongside the state growth percentiles must be acceptably correlated with the growth percentiles:

The department will be analyzing data supplied by districts, BOCES and/or schools and may order a corrective action plan if there are unacceptably low correlation results between the student growth subcomponent and any other measure of teacher and principal effectiveness… https://schoolfinance101.wordpress.com/2012/12/05/its-time-to-just-say-no-more-thoughts-on-the-ny-state-tchr-eval-system/

Because, of course, the growth percentile data are plainly and obviously a fair, balanced objective measure of teacher effectiveness.

WRONG!

But it’s better than the Status Quo!

The standard retort is that marginally flawed or not, these measures are much better than the status quo. ‘Cuz of course, we all know our schools suck. Teachers really suck. Principals enable their suckiness. And pretty much anything we might do… must suck less.

WRONG – it is absolutely not better than the status quo to take a knowingly flawed measure, or a measure that does not even attempt to isolate teacher effectiveness, and use it to label teachers as good or bad at their jobs. It is even worse to then mandate that the measure be used to take employment action against the employee.

It’s not good for teachers AND it’s not good for kids. (noting the stupidity of the reformy argument that anything that’s bad for teachers must be good for kids, and vice versa)

On the one hand, these ridiculous, rigid, ill-conceived, statistically and legally inept and morally bankrupt policies will most certainly lead to increased, not decreased, litigation over teacher dismissal.

On the other hand… The “anything is better than the status quo” argument is getting a bit stale and was pretty ridiculous to begin with. Jay Mathews of the Washington Post acknowledged his preference for a return toward the status quo (suggesting different improvements) in a recent blog post, explaining:

We would be better off rating teachers the old-fashioned way. Let principals do it in the normal course of watching and working with their staff. But be much more careful than we have been in the past about who gets to be principal, and provide much more training.

In closing, the ham-fisted logic of the anti-status quo argument, as applied to teacher evaluation, is easily summarized as follows:

Anything > Status Quo

Where the “greater than” symbol implies “really freakin’ better than… if not totally awesome… wicked awesome in fact,” but since it’s all relative, it would have to be “wicked awesomer.”

Because student growth measures exist and purport to measure student achievement growth, which is supposed to be a teacher’s primary responsibility, they therefore count as “something,” which is a subclass of “anything,” and therefore they are better than the “status quo.” That is:

Student Growth Measures = “something”

Something ⊆ Anything (something is a subset of anything)

Something > Status Quo

Student Growth Measures > Current Teacher Evaluation

Again, where “>” means “awesomer” even though we know that current teacher evaluation is anything but awesome.

It’s just that simple!

And this is the basis for modern education policymaking?