While I fully understand that state education agencies are fast becoming propaganda machines, I’m increasingly concerned with how far this will go. Yes, under NCLB, state education agencies concocted completely wrongheaded school classification schemes that had little or nothing to do with actual school quality, and in rare cases, used those policies to enforce substantive sanctions on schools. But, I don’t recall many state officials going to great lengths to prove the worth – argue the validity – of these systems. Yeah… there were sales-pitchy materials alongside technical manuals for state report cards, but I don’t recall such a strong push to advance completely false characterizations of the measures. Perhaps I’m wrong. But either way, this brings me to today’s post.

I am increasingly concerned with at least some state officials’ misguided rhetoric promoting policy initiatives built on information that is either knowingly suspect, or simply conceptually wrong/inappropriate.

Specifically, the rhetoric around adoption of measures of teacher effectiveness has become driven largely by soundbites that in many cases are simply factually WRONG.

As I’ve explained before…

- With value added modeling, which does attempt to parse statistically the relationship between a student being assigned to teacher X and that student’s achievement growth, controlling for various characteristics of the student and the student’s peer group, there still exists a substantial possibility of random-error based mis-classification of the teacher or remaining bias in the teacher’s classification (something we didn’t catch in the model affected that teacher’s estimate). And there’s little way of knowing what’s what.

- With student growth percentiles, there is no attempt to parse statistically the relationship between a student being assigned a particular teacher and the teacher’s supposed responsibility for that student’s change among her peers in test score percentile rank.

This article explains these issues in great detail.

And this video may also be helpful.

Matt Di Carlo has written extensively about the question of whether and how well value-added models actually accomplish their goal of fully controlling for student backgrounds.

Sound Bites don’t Validate Bad or Wrong Measures!

So, let’s take a look at some of the rhetoric that’s flying around out there and why and how it’s WRONG.

New Jersey has recently released its new regulations for implementing teacher evaluation policies, with heavy reliance on student growth percentile scores, ultimately aggregated to the teacher level as median growth percentiles. When challenged about whether those growth percentile scores will accurately represent teacher effectiveness, specifically for teachers serving kids from different backgrounds, NJ Commissioner Christopher Cerf explains:

“You are looking at the progress students make and that fully takes into account socio-economic status,” Cerf said. “By focusing on the starting point, it equalizes for things like special education and poverty and so on.” (emphasis added)

Here’s the thing about that statement. Well, two things. First, the comparisons of individual students don’t actually explain what happens when a group of students is aggregated to their teacher and the teacher is assigned the median student’s growth score to represent his/her effectiveness, where teachers don’t all have an evenly distributed mix of kids who started at similar points (to other teachers). So, in one sense, this statement doesn’t even address the issue.
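To make the aggregation step concrete, here is a minimal sketch of how a teacher-level median growth percentile (MGP) gets built from student-level growth percentiles. Everything in it is an assumption for illustration: the records are made up, and "academic peers" are approximated by a crude prior-score band, whereas operational growth percentile models condition on prior scores with quantile regression. The point is only the mechanics: each student is ranked against peers with a similar starting point, and the teacher is then handed the median of those ranks.

```python
from collections import defaultdict
from statistics import median

# Hypothetical records: (teacher, prior-score band, current score).
students = [
    ("A", "low", 200), ("A", "low", 210), ("A", "low", 220),
    ("B", "low", 230), ("B", "high", 300), ("B", "high", 310),
    ("C", "high", 320), ("C", "high", 330), ("C", "high", 340),
]

def growth_percentile(score, peer_scores):
    """Percent of academic peers (same prior band) scoring at or below `score`."""
    at_or_below = sum(1 for s in peer_scores if s <= score)
    return 100.0 * at_or_below / len(peer_scores)

# Group current scores by prior-score band to define each student's peers.
peers = defaultdict(list)
for _, band, score in students:
    peers[band].append(score)

# Collect each teacher's student growth percentiles.
by_teacher = defaultdict(list)
for teacher, band, score in students:
    by_teacher[teacher].append(growth_percentile(score, peers[band]))

# The teacher's rating is simply the median of those percentiles.
mgp = {teacher: median(values) for teacher, values in by_teacher.items()}
print(mgp)
```

Nothing in this calculation asks why a student landed where she did among her peers. The teacher label is attached only at the last step, when the percentiles are grouped and the median is taken.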

More importantly, however, this statement is simply WRONG!

There’s little or no research to back this up, but for early claims of William Sanders and colleagues in the 1990s in early applications of value added modeling which excluded covariates. Likely, those cases where covariates have been found to have only small effects are cases in which those effects are drowned out by noise or other bias resulting from underlying test scaling (or re-scaling) issues – or alternatively, crappy measurement of the covariates. Here’s an example of the stepwise effects of adding covariates on teacher ratings.

Consider that one year’s assessment is given in April. The school year ends in late June. The next year’s test is given the next April. First, and tangential (to the covariate issue… but still important) there are approximately two months of instruction given by the prior year’s teacher that are assigned to the current year’s teacher. Beyond that, there are a multitude of things that go on outside of the few hours a day where the teacher has contact with a child, that influence any given child’s “gains” over the year, and those things that go on outside of school vary widely by children’s economic status. Further, children with certain life experiences on a continued daily/weekly/monthly basis are more likely to be clustered with each other in schools and classrooms.

With annual test scores – differences in summer experiences (slide 20) which vary by student economic background matter – differences in home settings and access to home resources matter – differences in access to outside of school tutoring and other family subsidized supports may matter and depend on family resources. Variations in kids’ daily lives more generally matter (neighborhood violence, etc.) and many of those variations exist as a function of socio-economic status.

Variations in the peer group with whom children attend school matter too, and those vary by neighborhood structure and conditions, and by the socioeconomic status of not just the individual child, but the group of children. (citations and examples available in this slide set)

In short, it is patently false to suggest that using the same starting point “fully takes into account socio-economic status.”

It’s certainly false to make such a statement about aggregated group comparisons – especially while never actually conducting or producing publicly any analysis to back such a ridiculous claim.
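For readers who want to see the mechanism rather than take my word for it, here is a small simulation sketch. It is not an analysis of New Jersey data, and every parameter in it is an assumption: the size of the out-of-school drag on growth (`POVERTY_PENALTY`), the 80%/20% classroom poverty shares, and the use of prior-score deciles as a stand-in for "academic peers." Every simulated teacher has exactly the same (zero) effect on growth, so any gap in median growth percentiles between classroom types comes entirely from student background that the starting point did not absorb.

```python
import random
from bisect import bisect_right
from statistics import mean, median

random.seed(0)

N_TEACHERS = 100        # first half: high-poverty classrooms; second half: low-poverty
CLASS_SIZE = 25
POVERTY_PENALTY = 0.6   # ASSUMED out-of-school drag on annual growth, in score SDs
N_BINS = 10             # prior-score deciles stand in for "academic peers"

# Every simulated teacher has the SAME (zero) true effect on growth.
students = []  # (teacher_id, prior_score, current_score)
for t in range(N_TEACHERS):
    share_poor = 0.8 if t < N_TEACHERS // 2 else 0.2
    for _ in range(CLASS_SIZE):
        poor = random.random() < share_poor
        prior = random.gauss(-0.5 if poor else 0.0, 1.0)
        growth = random.gauss(0.0, 1.0) - (POVERTY_PENALTY if poor else 0.0)
        students.append((t, prior, prior + growth))

# Assign each student to a prior-score decile ("same starting point").
order = sorted(range(len(students)), key=lambda i: students[i][1])
bin_of = {i: rank * N_BINS // len(students) for rank, i in enumerate(order)}

# Sorted current scores within each decile, for percentile lookups.
bin_scores = {b: [] for b in range(N_BINS)}
for i, (_, _, current) in enumerate(students):
    bin_scores[bin_of[i]].append(current)
for scores in bin_scores.values():
    scores.sort()

def growth_percentile(i):
    """Percentile rank of student i's current score among same-decile peers."""
    scores = bin_scores[bin_of[i]]
    return 100.0 * bisect_right(scores, students[i][2]) / len(scores)

sgp_by_teacher = {}
for i, (t, _, _) in enumerate(students):
    sgp_by_teacher.setdefault(t, []).append(growth_percentile(i))

mgp = {t: median(v) for t, v in sgp_by_teacher.items()}
high = mean(mgp[t] for t in range(N_TEACHERS // 2))
low = mean(mgp[t] for t in range(N_TEACHERS // 2, N_TEACHERS))
print(f"mean MGP, high-poverty classrooms: {high:.1f}")
print(f"mean MGP, low-poverty classrooms:  {low:.1f}")
```

Under these assumptions, teachers of high-poverty classrooms come out with lower average MGPs even though no teacher is any better or worse than another. How large the gap is depends entirely on the assumed penalty, which is precisely the thing one would need real data and real analysis to establish.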

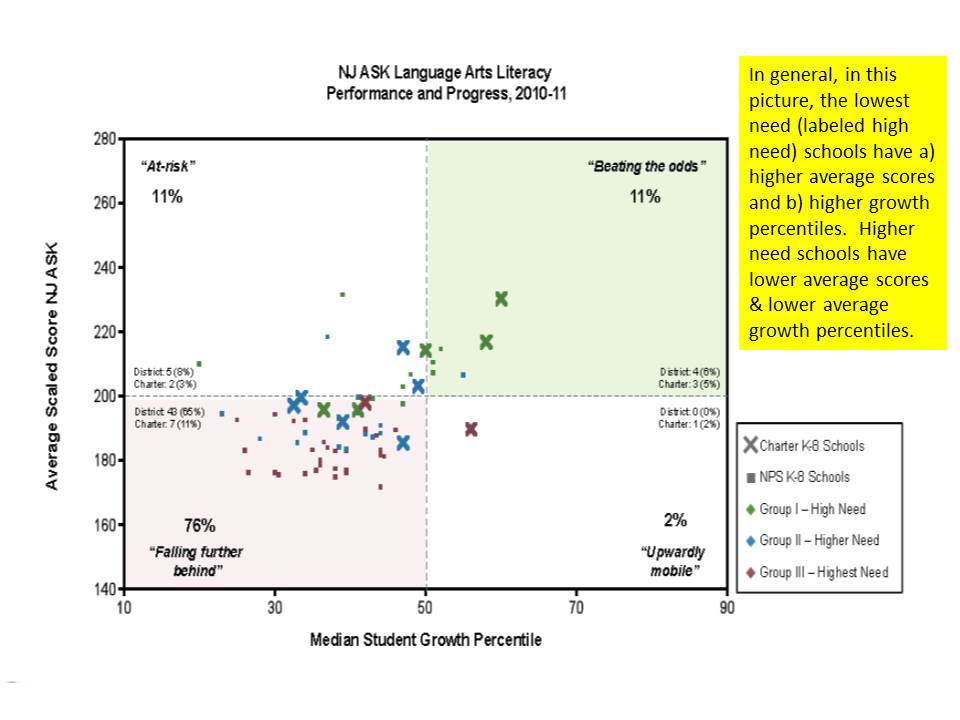

For lack of any larger available analysis of aggregated (teacher or school level) NJ growth percentile data, I stumbled across this graph from a Newark Public Schools presentation a short while back.

http://www.njspotlight.com/assets/12/1212/2110

Interestingly, what this graph shows is that the average score level in schools is somewhat positively associated with the median growth percentile, even within Newark where variation is relatively limited. In other words, schools with higher average scores appear to achieve higher gains. Peer group effect? Maybe. Underlying test scaling effect? Maybe. Don’t know. Can’t know.

The graph provides another dimension that is also helpful. It identifies lower and higher need schools – where “high need” are the lowest need in the mix. They have the highest average scores, and highest growth percentiles. And this is on the English/language arts assessment, where Math assessments tend to reveal stronger such correlations.

Now, state officials might counter that this pattern actually occurs because of the distribution of teaching talent… and has nothing to do with model failure to capture differences in student backgrounds. All of the great teachers are in those lower need, higher average performing schools! Thus, fire the others, and they’ll be awesome too! There is no basis for such a claim given that the model makes no attempt beyond prior score to capture student background.

Then there’s New York State, where similar rhetoric has been pervasive in the state’s push to get local public school districts to adopt state compliant teacher evaluation provisions in contracts, and to base those evaluations largely on state provided growth percentile measures. Notably, New York State unlike New Jersey actually realized that the growth percentile data required adjustment for student characteristics. So they tried to produce adjusted measures. It just didn’t work.

In a New York Post op-ed, the Chancellor of the Board of Regents opined:

The student-growth scores provided by the state for teacher evaluations are adjusted for factors such as students who are English Language Learners, students with disabilities and students living in poverty. When used right, growth data from student assessments provide an objective measurement of student achievement and, by extension, teacher performance. http://www.nypost.com/p/news/opinion/opedcolumnists/for_nyc_students_move_on_evaluations_EZVY4h9ddpxQSGz3oBWf0M

So, what’s wrong with that? Well… mainly… that it’s… WRONG!

First, as I elaborate below, the state’s own technical report on their measures found that they were in fact not an unbiased measure of teacher or principal performance:

Despite the model conditioning on prior year test scores, schools and teachers with students who had higher prior year test scores, on average, had higher MGPs. Teachers of classes with higher percentages of economically disadvantaged students had lower MGPs. (p. 1) https://schoolfinance101.com/wp-content/uploads/2012/11/growth-model-11-12-air-technical-report.pdf

That said, the Chancellor has cleverly chosen her words. Yes, it’s adjusted… but the adjustment doesn’t work. Yes, they are an objective measure. But they are still wrong. They are a measure of student achievement. But not a very good one.

But they are not by any stretch of the imagination, by extension, a measure of teacher performance. You can call them that. You can declare them that in regulations. But they are not.

To ice this reformy cake in New York, the Commissioner of Education has declared in letters to individual school districts regarding their evaluation plans, that any other measure they choose to add alongside the state growth percentiles must be acceptably correlated with the growth percentiles:

The department will be analyzing data supplied by districts, BOCES and/or schools and may order a corrective action plan if there are unacceptably low correlation results between the student growth subcomponent and any other measure of teacher and principal effectiveness… https://schoolfinance101.wordpress.com/2012/12/05/its-time-to-just-say-no-more-thoughts-on-the-ny-state-tchr-eval-system/

Because, of course, the growth percentile data are plainly and obviously a fair, balanced objective measure of teacher effectiveness.

WRONG!

But it’s better than the Status Quo!

The standard retort is that marginally flawed or not, these measures are much better than the status quo. ‘Cuz of course, we all know our schools suck. Teachers really suck. Principals enable their suckiness. And pretty much anything we might do… must suck less.

WRONG – it is absolutely not better than the status quo to take a knowingly flawed measure, or a measure that does not even attempt to isolate teacher effectiveness, and use it to label teachers as good or bad at their jobs. It is even worse to then mandate that the measure be used to take employment action against the employee.

It’s not good for teachers AND it’s not good for kids. (noting the stupidity of the reformy argument that anything that’s bad for teachers must be good for kids, and vice versa)

On the one hand, these ridiculously rigid, ill-conceived, statistically and legally inept and morally bankrupt policies will most certainly lead to increased, not decreased litigation over teacher dismissal.

On the other hand… The “anything is better than the status quo” argument is getting a bit stale and was pretty ridiculous to begin with. Jay Mathews of the Washington Post acknowledged his preference for a return toward the status quo (suggesting different improvements) in a recent blog post, explaining:

We would be better off rating teachers the old-fashioned way. Let principals do it in the normal course of watching and working with their staff. But be much more careful than we have been in the past about who gets to be principal, and provide much more training.

In closing, the ham-fisted logic of the anti-status quo argument, as applied to teacher evaluation, is easily summarized as follows:

Anything > Status Quo

Where the “greater than” symbol implies “really freakin’ better than… if not totally awesome… wicked awesome in fact,” but since it’s all relative, it would have to be “wicked awesomer.”

Because student growth measures exist and purport to measure student achievement growth which is supposed to be a teacher’s primary responsibility, it therefore counts as “something,” which is a subclass of “anything” and therefore it is better than the “status quo.” That is:

Student Growth Measures = “something”

Something ⊆ Anything (something is a subset of anything)

Something > Status Quo

Student Growth Measures > Current Teacher Evaluation

Again, where “>” means “awesomer” even though we know that current teacher evaluation is anything but awesome.

It’s just that simple!

And this is the basis for modern education policymaking?

No… I really do think it’s better than status quo. If you saw, first hand, school districts refusing to address failing schools… Any kick in the pants is a good one. But I agree that we need to bring on relevant discussion on what accountability should look like.

There might be something… many things that are better than the status quo. I agree with that. But this is not. And unfortunately, excessive emphasis on this, keeps us from discussing alternatives that might, in fact, be better.

One way in which this is not better, is that it will certainly not ease the ability of high need schools to find “better” replacements for teachers they might be wrongly required to dismiss. The increased risk/unpredictability… disproportionately assigned to low income schools… is likely to reduce average teacher “quality” in those schools over time and induce an unproductive instability.

That’s sort of a chicken or egg thing, isn’t it? Test scores are bad in failing schools because failing schools tend to be where less-than-exemplary teachers are shipped to retire. And since they’re less-than-exemplary, they tend to not have the tools to help hard-to-teach kids. So how does a measure — or lack thereof — make a difference?

What I do know… Is that I came out of a school district that was once touted as one of the most coveted teaching assignments in the state… back in the ’70s…. Women who could have been anything else were teaching back then, and most considered my district a coup. When the high schools turned from white to brown, most of my ’80s era teachers stayed. So I wasn’t surprised when my high school could still carry acceptable scores and be a Blue Ribbon School and all that.

Now most of those women are retired. So we have to turn to charters to save us. Too bad. We should have worked on recruiting and maintaining a proper work force.

It is perplexing that educational policy-makers and pundits are perfectly happy to applaud bad assessment measures for teachers but shy away from proclaiming the glory of anything similar when it comes to students.

As you can tell by my tone in this post, I’m just disgusted with the current behavior of certain state officials – to a large extent from a technical standpoint – but also in terms of their willingness to totally disregard the well-being of the people involved.

I’m in Texas, and I think our state officials are ready to do better… But we have people who want to trash our system, rather than fix it. Most of them are thinking about themselves. Few of them are thinking about the kids who are stuck in our failing schools.

I’m tired of schools that get away with ignoring kids. I think we need data. I think we need to use it diagnostically. I think we need to create tests that produce useful information for teachers and especially parents. I think we need to arm parents with good information so they can demand better performance of their teachers and their schools, and do it in specific ways. I think we need to consider how accountability should differ for student, teacher and school. But, most of all, I think the days of a school telling you “It’s all okay, there’s nothing behind the curtain” need to be over. Because we need to start figuring out how to serve the growing number of black and brown kids who are being failed by our school system.

I totally agree on the need for data. And if you read that article I linked, the conclusions address this. see also: https://schoolfinance101.wordpress.com/2012/12/07/friday-thoughts-on-data-assessment-informed-decision-making-in-schools/

But current policies in some states are getting in the way of reasonable use of better data.

Separately, for years, Texas has really been ahead of the curve on the production of higher quality data… and arguably in many cases… the more reasonable/responsible use of those data. TEA has maintained much stronger technical capacity than NJDOE or NYSED and has had much stronger relationships with capable academic researchers. I’ve always enjoyed my times working on Texas policy issues w/Texas policymakers and my academic colleagues down there – even when I’ve been on the opposite side of some issues with them (heck, it was one of my own coauthors on several projects that consulted with the state to challenge my expert reports on behalf of districts in the school funding case – but that made it more fun/interesting/useful… because the debate was substantive).

We collect the data, but we don’t bother to use it properly. No wonder parents have no buy-in to our system! I do know individual school districts often pass it along to teachers. Sometimes. But we’re rolling out a student dashboard this year, and we managed to do what? Get rid of the data. Why not take the stakes off and use the data? (And I’m open to the discussion of how valid the tests are, etc…) We launched education research centers… then the last administration killed them off. Sadly. (Not to discount your work… ) Those ERCs should have produced the research to inform our policy decisions.

I wonder… failed by our school systems? Or failed by our society? If we see that the correlation is NOT to skin color but to poverty, then it becomes not an academic issue, but an economic one. Perhaps we need to stop blaming schools because numbers on paper and pencil tests are low, but start blaming politicians because the unemployment rate is so high. Or let’s collect data on those students who are scoring low and see what is REALLY causing the problem – how many of them haven’t seen their mom for a week because she’s in jail? Or don’t know who their dad is? Or are living with grandma because both parents walked out on them? Or who walked through gang territory on the way to school and are lucky to have made it into the building unscathed? Or who didn’t have breakfast? The fact is, look at the data we DO have – the students that our “schools” are failing are ALL in urban, inner-city schools with high poverty rates. The rest of our schools are doing quite well, and it’s NOT because all of the bad teachers go to the inner city. The issue is POVERTY and the problems that inner city poverty creates. Take care of that, and I’m willing to bet that the scores go up and we will conclude that the schools are not failing our children.

What gets in the way of reasonable uses of data are the forces that want to profitize every element of education. Their sole goal is to perpetuate the myth that almost all public schools are failing so they can replace them with private charter operators, on-line or digital providers, and voucher schemes. The use of accountability policies backed by inappropriate applications of data is a major piece of their plans. There is big money to be made and therefore there is big money behind their efforts.

Hear, hear…

Jay, I think pulling out the conspiracy theories just weakens the moral high ground that belongs to the school community. How many times did I hear this session in Texas that Pearson was the profiteer? Pearson makes $100M a year off Texas. Revising our tests will cut $25M from that contract. If the tests are gone, it’s $100M/year. People pointed to it like it was a smoking gun. Are you kidding me? I went off and said, “Seriously, people, Pearson is about to get a huge cut of the Common Core testing contracts. Do you REALLY think they’re worried about Texas?” If so, they would have the same slew of lobbyists that AT&T, etc, carry every session. They didn’t.

It isn’t theory, it is fact. It isn’t just Pearson. Look at K12 Inc., which is one of the top online providers. Look at the big CMO and EMO outfits.

The research has proven that charters, whether brick and mortar or virtual, are not a big difference maker. You can reference many of Dr. Baker’s posts on this matter. Yet the dollars continue to be siphoned off to their operators.

In the wake, students’ and educators’ rights are trampled and violated.

While I’ve asserted in many posts that there is gaming of this system and preferential treatment by policymakers (unleveling of playing field), I’ve not necessarily framed it as a profit driven conspiracy theory.

https://schoolfinance101.wordpress.com/2013/02/16/from-portfolios-to-parasites-the-unfortunate-path-of-u-s-charter-school-policy/

https://schoolfinance101.wordpress.com/2013/04/08/the-disturbing-language-and-shallow-logic-of-ed-reform-comments-on-relinquishment-sector-agnosticism/

We can talk urban land deals, public school closing/takeover and use of new market tax credits another day.

Jay, data isn’t the problem. It’s a public education community that plays the victim. Data is not the problem. It’s educators who toss stone after stone at others — and it may be perfectly valid! — but still provide no solution to fix their own poor performance. (Sorry for the generality. You may have great performance) What the public wants is a solution to improve performance, not a discussion of how rights are trampled.

That said, I still think the public needs to be aware of the rights tradeoffs as part of the equation. Many have no idea until their own rights have been trampled. And then they do care as much about that if not more than they do about whether test scores went up a point or two.

Here’s the question*: Is the traditional model of teacher evaluation inescapably wrong-headed and flawed, or is it just implemented incredibly poorly?

* OK. Not THE THE question, but one of the THE questions.

I think we all agree that teacher evaluation needs to be improved. What we do not agree on, and have not discussed explicitly, is whether expert evaluation based upon observed practice is just not workable. Do people think that ANYTHING would be better because the current system is based upon a bad model? Or because the results we get are so bad?

I’ve put a fuller expression of this on my blog, More Thoughtful. http://morethoughtful.blogspot.com/2013/04/how-broken-is-teacher-evaluation.html

I think you and Bruce are thinking of specific local or state situation where testing is a huge measure of teacher accountability. Where is this common?

I would certainly argue that in the NY example, where to begin with the testing must be a sizable share and where other measures are required to be correlated with it, that testing becomes the driver of decisions. I also explain in my linked research paper that even in systems where VAM or SGP measures are, say 30 to 50%… they will more often than not serve as the tipping point… and the variation that may tip the decision may either be a) true effect variation, b) statistical bias (something else affected the rating) or c) noise. Which is why we shouldn’t be using this information in these parallel, weighted decision schemes. All discussed in research paper linked above – as are the policies that mandate, what is, in effect misuse of the data/misinterpretation.
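A toy illustration of the tipping-point problem, with entirely made-up numbers: suppose observation ratings carry 60% of the weight and the growth measure 40% (a hypothetical split within the 30 to 50% range mentioned above), and suppose, as is common, that observation ratings cluster tightly while growth scores are spread across the whole scale.

```python
# Hypothetical teachers: (observation rating 0-100, growth score 0-100).
# Observation ratings are tightly clustered; growth scores vary widely.
teachers = {
    "T1": (82, 35),
    "T2": (80, 70),
    "T3": (78, 50),
    "T4": (81, 20),
    "T5": (79, 90),
}
W_OBS, W_GROWTH = 0.6, 0.4  # assumed weights, for illustration only

# Weighted composite rating for each teacher.
composite = {t: W_OBS * obs + W_GROWTH * growth
             for t, (obs, growth) in teachers.items()}

rank_by_composite = sorted(teachers, key=lambda t: composite[t], reverse=True)
rank_by_growth = sorted(teachers, key=lambda t: teachers[t][1], reverse=True)
rank_by_obs = sorted(teachers, key=lambda t: teachers[t][0], reverse=True)

print("composite:  ", rank_by_composite)
print("growth only:", rank_by_growth)
print("observation:", rank_by_obs)
```

In this example the composite ranking is identical to the growth-only ranking, even though growth nominally counts for less than half. The component that varies the most drives the result, whether that variation is true effect, bias, or noise.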

We have bills floating around this session to refuse linking teacher pay to test scores… Which makes Texas, of course, something other than the normal curve. I’d prefer to see a robust discussion of what sort of measurements (VAM or otherwise) are useful.

It’s not just about what measures are useful, but how they are useful – in what type of process?

https://schoolfinance101.wordpress.com/2012/12/07/friday-thoughts-on-data-assessment-informed-decision-making-in-schools/

This is not just about teacher pay. It is about any number of consequences of evaluation. These could include:

* Firing for cause

* Layoffs

* Tenure decisions

* Promotion

* Qualifying for steps on a career ladder

* Pay

* Tenure

(Yeah, there’s some redundancy in there.)

It also links to PD. Who needs PD, and how does a school allocate PD resources?

It goes on and on. And it’s not limited to NY.

A little CS Lewis may help the “relinquisher” who seeks to implement anything because it must be better than the failing public schools that are currently status quo.

“Progress means getting nearer to the place you want to be. And if you have taken a wrong turning, then to go forward does not get you any nearer. If you are on the wrong road, progress means doing an about-turn and walking back to the right road; and in that case the man who turns back soonest is the most progressive man.”

Current practice is not bringing about educational equity for the educationally disadvantaged which was the intended purpose of these reforms back to Goals 2000. It is time to turn around and try a different route to progress the system for those very students. That is, of course, assuming that the place we want to be as a nation is where some semblance of equity is present for our children.

But all too often I think that our problem is that the place the “relinquishers” want to be and the place I want to be are diametrically opposite. Maybe the corporate reformers are getting where they want to be with public employees outsourced to private industry so that benefits packages afforded to those public servants are removed from the tax rolls, thus lowering the responsibility of the 1% to the good of the common and preserving the gap between us and them.

Quite possibly, those of us who believe in an equitable education for our children and those of us who believe in the “relinquisher” educational agenda have simply two different “places we want to be.” We want to be in a place where a child in poverty has just as much of a chance for upward mobility as a child in wealth has for downward mobility. They want to be in a place where their children assume their natural place as the “Brahmins” of America. Maybe I want to turn around and they want to keep moving forward because we have antipodal goals.

Actually… In Texas, we did move to close gaps with the current accountability system. We have huge increases in black and brown kids applying to college. The problem is hitting the wall with those gains and a need to do it better. We implemented tougher requirements and, guess what? The kids rose to the challenge.

But thanks for the CS Lewis. 🙂 It’s appreciated. Failure is failure… until you see progress. Then you ought to fight tooth and nail to keep it.


Professor Baker, without defending the idea of using standardized tests to assess the quality of each individual teacher, can you cite an example of an advocate for improving schools who wrote, as you asserted, that there is a “reformy argument that anything that’s bad for teachers must be good for kids, and vice versa”?

Thank you.

That quote is indeed a stylized reference to the notion that somehow teachers’ interests and kids’ interests are diametrically opposed, which many have discussed. No, they’ve not framed it as absurdly as I do here… but again, that was a stylized framing.

I will admit that there are some bad teachers. There are probably whole school buildings that are bad. And I will also admit that we in public education are very slow to change and that our practice is very slow to mirror what we know is best practice. Having said all of that, there is simply NO WAY to use Value Added measures for teacher evaluation in an equitable, fair manner. The numbers are influenced by way too many uncontrollable factors and are just as likely to punish excellent teachers and reward poor teachers. I have worked with dozens of districts to analyze their value added data and I’ve seen this over and over again. The main reason what we’re doing is worse than the status quo is that this unhealthy emphasis on numbers and test score is causing good teachers to suck and causing us to lose an entire generation of students to the boredom of test prep when they NEED to move, create, collaborate, DO, BE, and LEARN to think. We have taken the arts out of schools. We have spent billions on assessments and number crunchers to come up with the numbers, all of which takes money away from classrooms and students. We are pressured to teach to poorly designed tests. We are hurting students and teachers and I think it’s going to take a LONG time to undo the damage we have done.

What makes a school failing? All this time we have heard: Test Scores, Test Scores, Test Scores. But here is a reality check: Over the past ten years, our school system (across the USA) has had an amazing impact on society: (all of these facts are readily available to the public) Index Crimes are down (rape, robbery, murder, gta, and assault.) All have decreased across the US. (fbi.gov)

A more telling way of looking at it is this: Until ten years ago, it is conceivable to see that the young black men from our inner cities (and the schools) were more likely to be incarcerated than go to college. Economics in our cities have not changed, yet as of this writing the number of young black men enrolled in college far surpasses the number of young black men in jail. In fact, the number of young black men incarcerated has dropped by 30,000 people. That is a small city!!! And every single year, the difference grows in favor of college. Sounds like the schools are doing their job. That is reality, testing is not.

Dr. Baker, will you be able to write to the NJ State Board of Ed.? It is so important that they hear this.

Having led the re-design of a Virginia school division’s principal and teacher evaluation for the last five years, I am intrigued by some of the posters who don’t seem to have a grasp of contemporary evaluation models exclusive of VAMs and SGMs. Virginia, like everybody else, has had to deal with USED’s requirements for NCLB waivers and we’ve had to include student progress as 40% of the evaluation for teachers, principals and superintendents. Qualitative data gathered through a meaningful evaluation process should provide a better and fairer picture of a teacher’s performance in the classroom, given the right training for the evaluator.

Dr. Baker is right that VAMs or SGMs are not good measures, and will skew performance ratings GREATLY. Our staff disassembled the actual tool used by Virginia and others to calculate SGM and found internal flaws that squeezed outputs to the middle; that is, the measure cannot be used to exclude high performing or low performing teachers from the norm (or students for that matter). The problem gets worse as the division’s demographic becomes more homogeneous.