I recently posted a critique of the technical report on New York State median growth percentiles to be used in that state’s teacher and principal evaluation system.

Today, I read this piece in the NY Post – an editorial by NY State Board of Regents Chancellor Merryl Tisch, and well, MY HEAD ALMOST EXPLODED!

The point of the editorial is to encourage NY City’s teachers and DOE to agree to a teacher evaluation system based on supposedly objective measures – where “objective measures” seems largely to be code language for estimates of teacher effectiveness derived from student assessment data.

First, I have written several previous posts on the usefulness of NYC’s value-added model for determining teacher effectiveness.

- the NYC VAM model retains some persistent biases

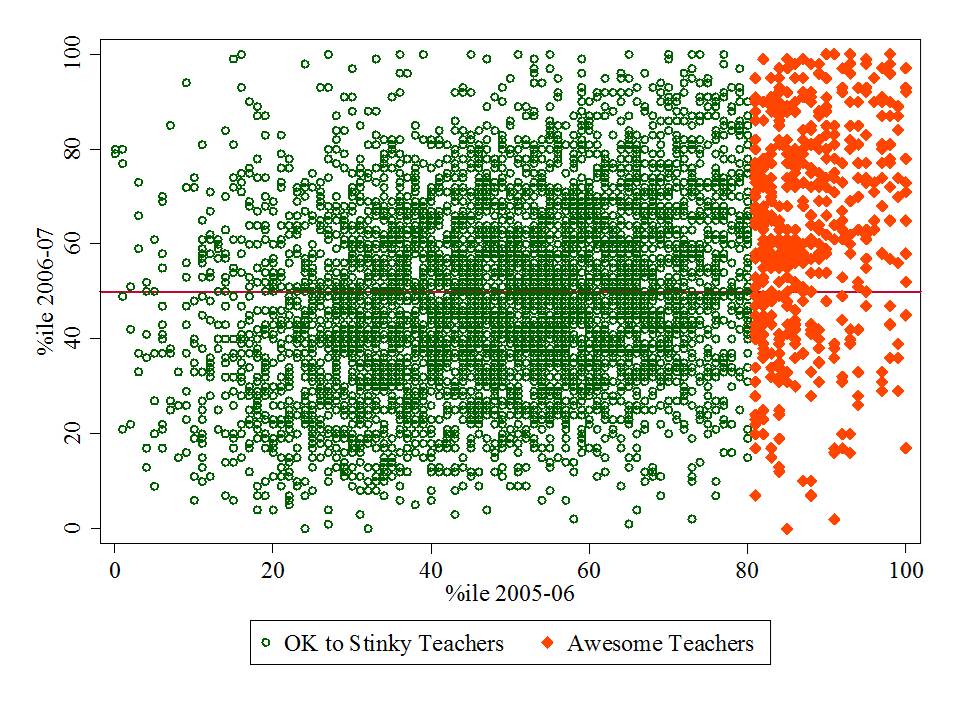

- the NYC VAM model is highly unstable from year to year

- the NYC VAM results capture only a handful of teachers per school and their results tend to jump all over the place

- adopting the NCTQ irreplaceables logic, the NYC VAM data are so noisy that few if any teachers are persistently irreplaceable

- for various reasons, it is unlikely that these are just early glitches in the system that will get better with time

Setting aside this long list of concerns about the NYC VAM results, I now turn to the NYSED – state median growth percentile data (which actually seem inferior to the NYC VAM model/estimates). In her editorial, Chancellor Tisch proclaims:

The student-growth scores provided by the state for teacher evaluations are adjusted for factors such as students who are English Language Learners, students with disabilities and students living in poverty. When used right, growth data from student assessments provide an objective measurement of student achievement and, by extension, teacher performance.

Let me be blunt here. CHANCELLOR TISCH – YOU ARE WRONG! FLAT OUT WRONG! IRRESPONSIBLY & PERHAPS NEGLIGENTLY WRONG!

[now, one might quibble that Chancellor Tisch has merely stated that the measures are “adjusted for” certain factors and she has not claimed that those adjustments actually work to eliminate bias. Further, she has merely declared that the measures are “objective” and not that they are accurate or precise. Personally, I don’t find this deceptive language at all comforting!]

Indeed, the measures attempt, but fail, to sufficiently adjust for key factors. They retain substantial biases as identified in the state’s own technical report. And they are subject to many of the same error concerns as the NYC VAM model. Given the findings of the state’s own technical report, it is irresponsible to suggest that these measures can and should be immediately considered for making personnel and compensation decisions.

Finally, as I laid out in my previous blog post, to suggest that “growth data from student assessments provide an objective measure of student achievement, and, by extension, teacher performance” IS A HUGE UNWARRANTED STRETCH!

While I might concur with the follow-up statement from Chancellor Tisch that “We should never judge an educator solely by test scores, but we shouldn’t completely disregard student performance and growth either,” I would argue that school leaders/peer teachers/personnel managers should absolutely have the option to completely disregard data that have high potential to be sending false signals, either as a function of persistent bias or error. Requiring action based on biased and error-prone data (rather than permitting those data to be reasonably mined to the extent they may, OR MAY NOT, be useful) is a toxic formula for public schooling quality.

The one thing I can’t quite figure out here is which is the misinformation and which is the disinformation. In any case, both are wrong!

The rest of what I have to say, I’ve already said. But, so readers don’t have to click the link below to access the previous post, I’ve pasted the entire thing below. Enjoy!

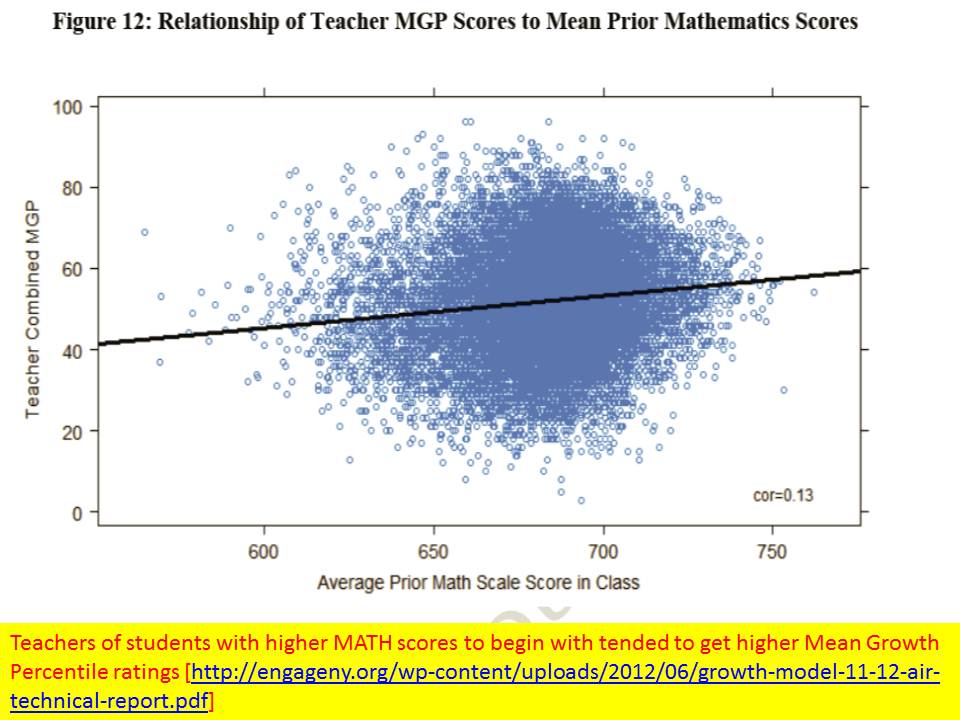

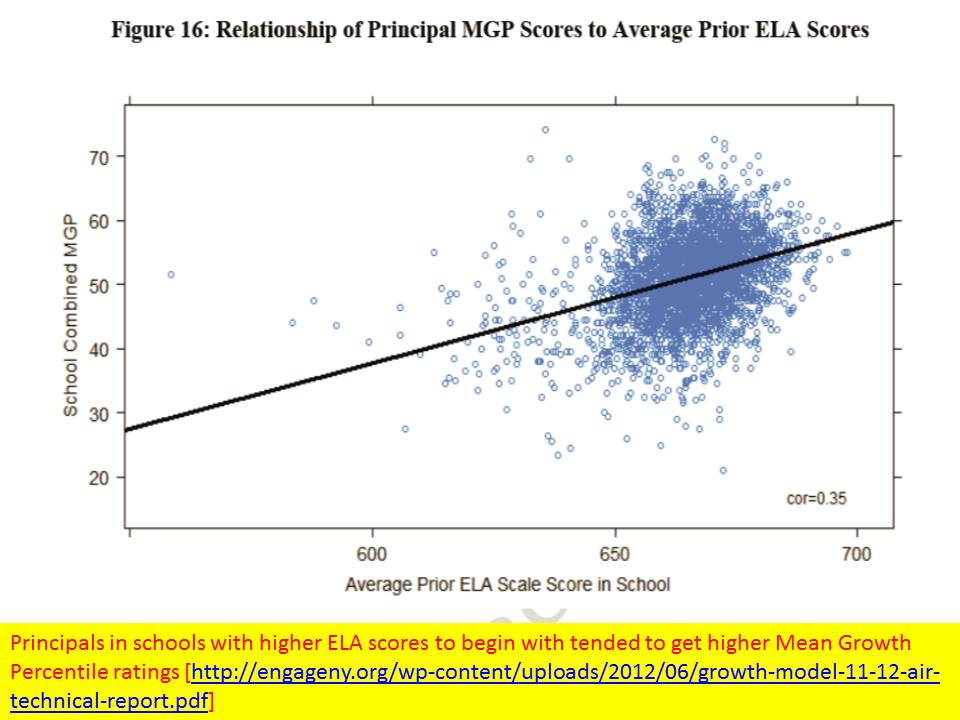

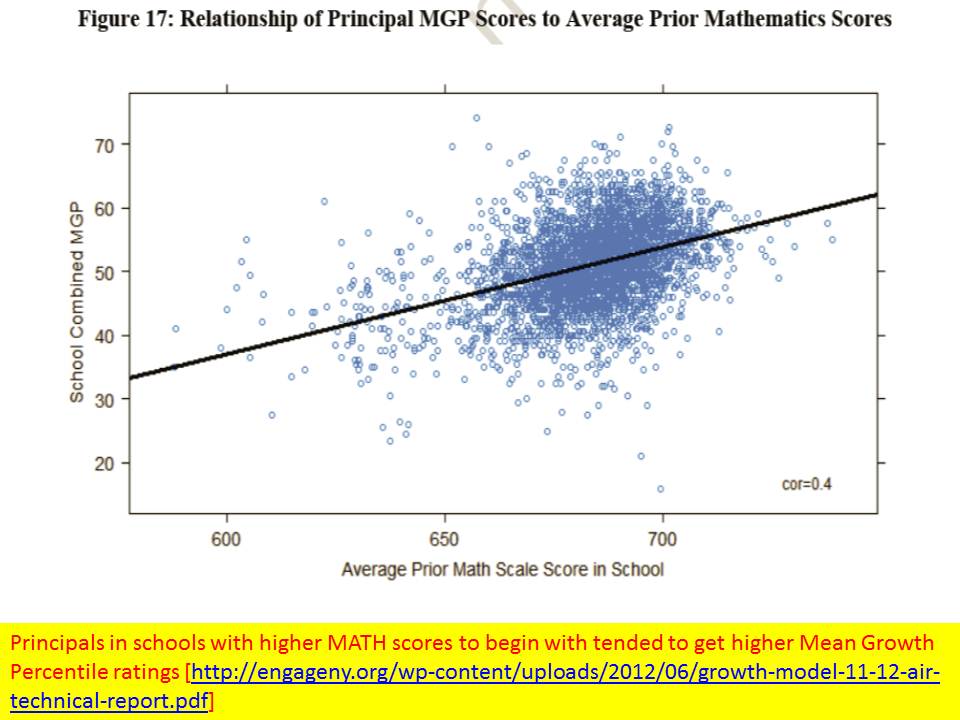

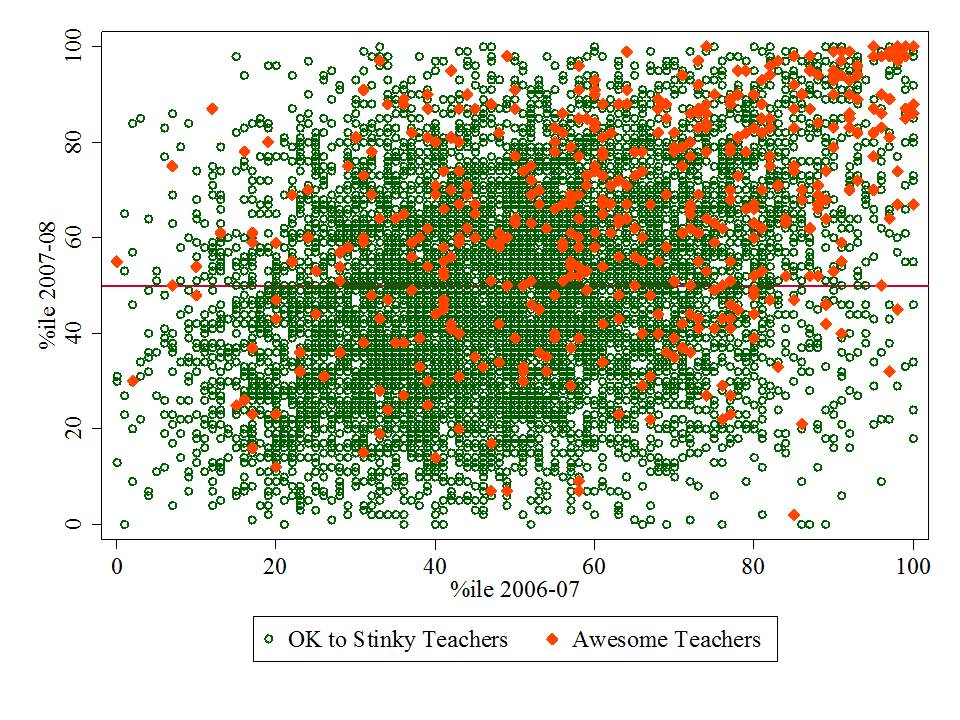

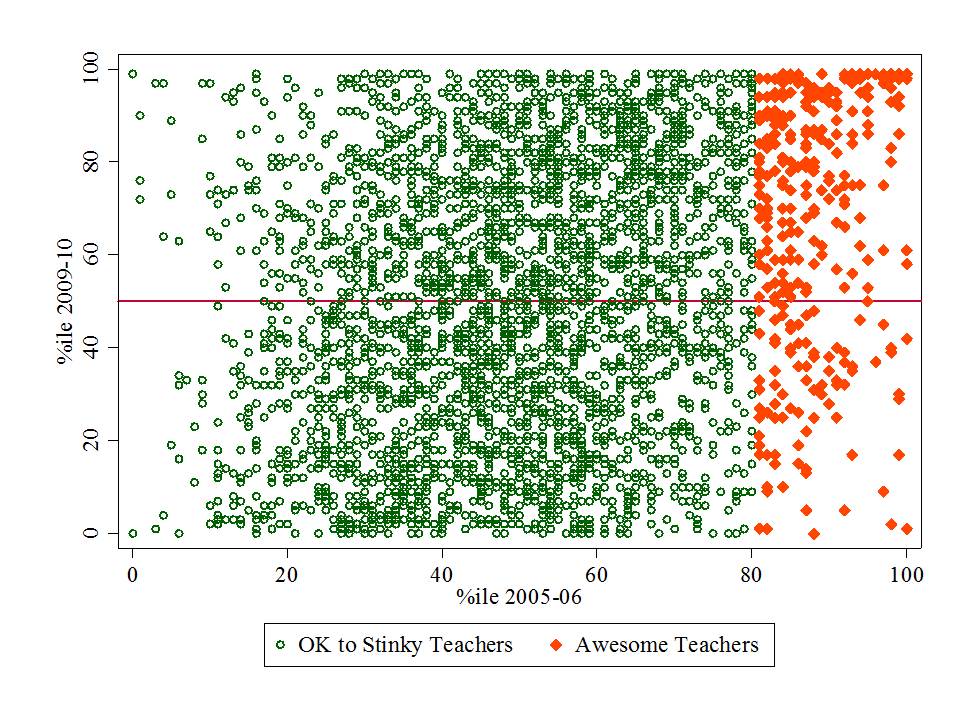

I was immediately intrigued the other day when a friend passed along a link to the recent technical report on the New York State growth model, the results of which are expected/required to be integrated into district level teacher and principal evaluation systems under that state’s new teacher evaluation regulations. I did as I often do and went straight for the pictures – in this case, the scatterplots of the relationships between various “other” measures and the teacher and principal “effect” measures. There was plenty of interesting stuff there, some of which I’ll discuss below.

But then I went to the written language of the report – specifically the report’s (albeit in DRAFT form) conclusions. The conclusions were only two short paragraphs long, despite much to ponder being provided in the body of the report. The authors’ main conclusion was as follows:

The model selected to estimate growth scores for New York State provides a fair and accurate method for estimating individual teacher and principal effectiveness based on specific regulatory requirements for a “growth model” in the 2011-2012 school year. p. 40

http://engageny.org/wp-content/uploads/2012/06/growth-model-11-12-air-technical-report.pdf

13-Nov-2012 20:54

Updated Final Report: http://engageny.org/sites/default/files/resource/attachments/growth-model-11-12-air-technical-report_0.pdf

Local copy of original DRAFT report: growth-model-11-12-air-technical-report

Local copy of FINAL report: growth-model-11-12-air-technical-report_FINAL

Unfortunately, the multitude of graphs that immediately preceded this conclusion undermines it entirely. But first, allow me to address the egregious conceptual problems with the framing of this conclusion.

First Conceptually

Let’s start with the low hanging fruit here. First and foremost, nowhere in the technical report, nowhere in their data analyses, do the authors actually measure “individual teacher and principal effectiveness.” And quite honestly, I don’t give a crap if the “specific regulatory requirements” refer to such measures in these terms. If that’s what the author is referring to in this language, that’s a pathetic copout. Indeed it may have been their charge to “measure individual teacher and principal effectiveness based on requirements stated in XYZ.” That’s how contracts for such work are often stated. But that does not obligate the author to conclude that this is actually what has been statistically accomplished. And I’m just getting started.

So, what is being measured and reported? At best, what we have are:

- An estimate of student relative test score change on one assessment each for ELA and Math (scaled to growth percentile) for students who happen to be clustered in certain classrooms.

THIS IS NOT TO BE CONFLATED WITH “TEACHER EFFECTIVENESS”

Rather, it is merely a classroom aggregate statistical association based on data points pertaining to two subjects being addressed by teachers in those classrooms, for a group of children who happen to spend a minority share of their day and year in those classrooms.

- An estimate of student relative test score change on one assessment each for ELA and Math (scaled to growth percentile) for students who happen to be clustered in certain schools.

THIS IS NOT TO BE CONFLATED WITH “PRINCIPAL EFFECTIVENESS”

Rather, it is merely a school aggregate statistical association based on data points pertaining to two subjects being addressed by teachers in classrooms that are housed in a given school under the leadership of perhaps one or more principals, vps, etc., for a group of children who happen to spend a minority share of their day and year in those classrooms.

Now Statistically

Following are a series of charts presented in the technical report, immediately preceding the above conclusion.

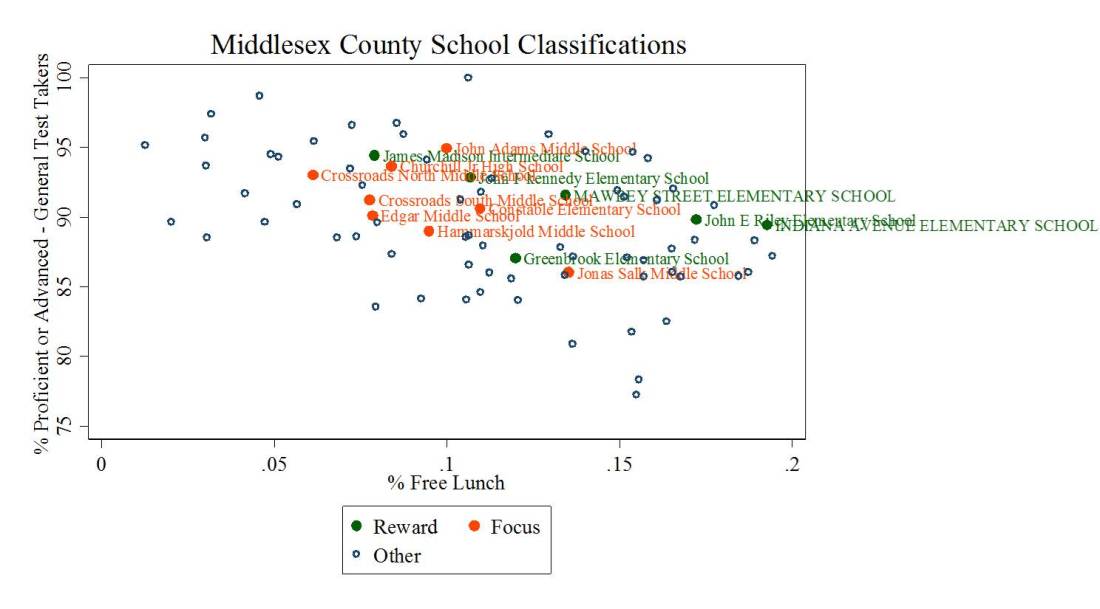

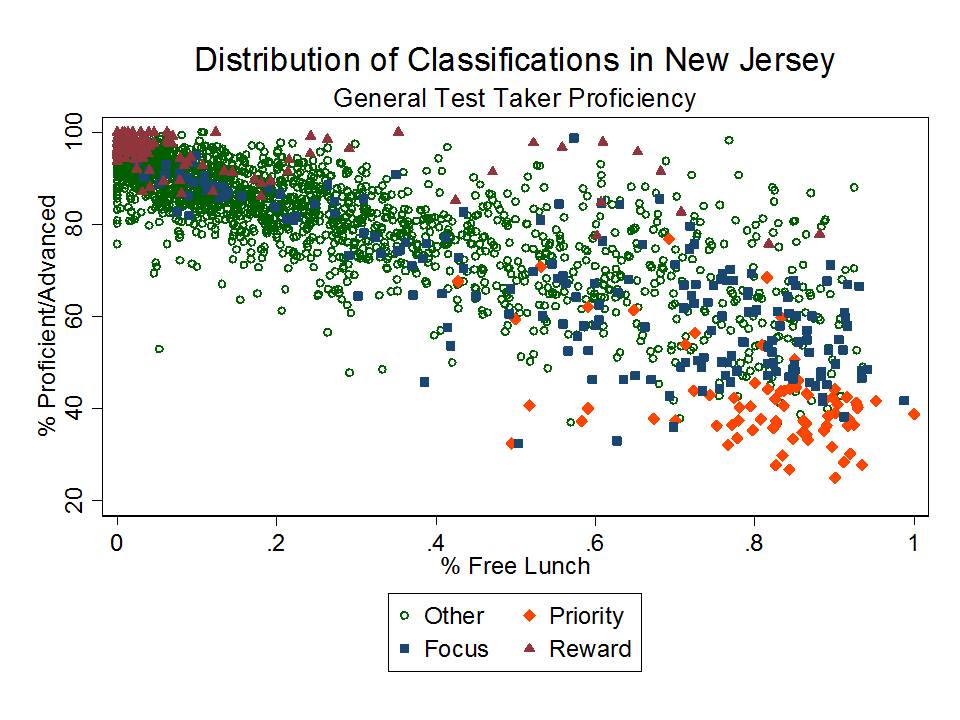

Classroom Level Rating Bias

School Level Rating Bias

And there are many more figures displaying more subtle biases, but biases that for clusters of teachers may be quite significant and consequential.

Based on the figures above, there certainly appears to be, both at the teacher, excuse me – classroom, and principal – I mean school level, substantial bias in the Mean Growth Percentile ratings with respect to initial performance levels on both math and reading. Teachers with students who had higher starting scores and principals in schools with higher starting scores tended to have higher Mean Growth Percentiles.

This might occur for several reasons. First, it might just be that the tests used to generate the MGPs are scaled such that it’s just easier to achieve growth in the upper ranges of scores. I came to a similar finding of bias in the NYC value added model, where schools having higher starting math scores showed higher value added. So perhaps something is going on here. It might also be that students clustered among higher performing peers tend to do better. And, it’s at least conceivable that students who previously had strong teachers and remain clustered together from year to year, continue to show strong growth. What is less likely is that many of the actual “better” teachers just so happen to be teaching the kids who had better scores to begin with.
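The pattern described above (higher starting scores going along with higher Mean Growth Percentiles) is the kind of thing one can check directly with a correlation and a simple regression slope. What follows is only a minimal sketch: the classroom records are made-up numbers for illustration, not values from the state's report, and the function names are my own. If the growth measure were unrelated to starting performance, both numbers would sit near zero.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of equal length >= 2")
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def ols_slope(xs, ys):
    """Slope from a one-variable least-squares fit of ys on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


# HYPOTHETICAL data: (classroom mean prior-year scale score, classroom MGP).
classrooms = [(640, 42), (655, 47), (660, 45), (672, 52), (685, 55), (690, 58)]
prior = [p for p, _ in classrooms]
mgp = [g for _, g in classrooms]

r = pearson_r(prior, mgp)       # strength of the association
slope = ols_slope(prior, mgp)   # MGP points per scale-score point
print(f"correlation = {r:.2f}, slope = {slope:.2f}")
```

A clearly positive slope in real data would not say why the association exists (test scaling, peer effects, prior teachers), only that the ratings are not independent of where students started.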

That the systemic bias appears greater in the school level estimates than in the teacher level estimates suggests that the teacher level estimates may actually be even more biased than they appear. The aggregation of otherwise less biased estimates should not reveal more bias.
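One way to see why noisy teacher level estimates can hide bias that shows up once they are averaged to the school level is a small simulation. This is a sketch under assumptions I am making up for illustration (normally distributed scores, a fixed bias of 0.3 rating units per unit of prior score, 10 teachers per school); it is not the report's model or data. The same underlying bias is built into every teacher's rating, yet the visible correlation with prior scores is much stronger for school averages because averaging strips out noise.

```python
import random
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def simulate(n_schools=200, teachers_per_school=10, bias=0.3, seed=0):
    """Return (teacher-level r, school-level r) between prior scores and ratings.

    All parameters are illustrative assumptions, not estimates from any report.
    """
    rng = random.Random(seed)
    t_prior, t_rating, s_prior, s_rating = [], [], [], []
    for _ in range(n_schools):
        school_level = rng.gauss(0, 1)  # shared starting level of the school
        priors, ratings = [], []
        for _ in range(teachers_per_school):
            p = school_level + rng.gauss(0, 0.5)  # classroom prior score
            r = bias * p + rng.gauss(0, 1)        # biased, noisy rating
            priors.append(p)
            ratings.append(r)
        t_prior.extend(priors)
        t_rating.extend(ratings)
        s_prior.append(sum(priors) / len(priors))
        s_rating.append(sum(ratings) / len(ratings))
    return pearson_r(t_prior, t_rating), pearson_r(s_prior, s_rating)


teacher_r, school_r = simulate()
print(f"teacher-level r = {teacher_r:.2f}, school-level r = {school_r:.2f}")
```

In this toy setup the bias per teacher is identical at both levels; only the noise differs. A weak-looking teacher level scatterplot is therefore not evidence that the teacher level ratings are less biased.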

Further, as I’ve mentioned several times on this blog previously, even if there weren’t such glaringly apparent overall patterns of bias, there still might be underlying biased clusters. That is, groups of teachers serving certain types of students might have ratings that are substantially WRONG, either in relation to observed characteristics of the students they serve or their settings, or of unobserved characteristics.
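A simple way to look for the clustered bias described above is to compare average ratings across groups of teachers defined by who they serve. The sketch below uses invented ratings and invented group labels (`general`, `high_ell`) purely for illustration; real checks would use actual student and setting characteristics, and unobserved characteristics would not show up this way at all.

```python
from collections import defaultdict


def group_gaps(records):
    """Map each group to (group mean rating - overall mean rating).

    `records` is a list of (group_label, rating) pairs.
    """
    overall = sum(rating for _, rating in records) / len(records)
    by_group = defaultdict(list)
    for group, rating in records:
        by_group[group].append(rating)
    return {g: sum(v) / len(v) - overall for g, v in by_group.items()}


# HYPOTHETICAL teacher ratings, labeled by the type of classroom served.
records = [
    ("general", 50), ("general", 52), ("general", 48),
    ("general", 51), ("general", 49),
    ("high_ell", 41), ("high_ell", 39),
]

gaps = group_gaps(records)
for group, gap in sorted(gaps.items()):
    print(f"{group}: {gap:+.1f} points relative to the overall mean")
```

A large gap for a small cluster can be invisible in a statewide scatterplot and still be consequential for every teacher in that cluster.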

Closing Thoughts

To be blunt – the measures are neither conceptually nor statistically accurate. They suffer significant bias, as shown and then completely ignored by the authors. And inaccurate measures can’t be fair. Characterizing them as such is irresponsible.

I’ve now written 2 articles and numerous blog posts in which I have raised concerns about the likely overly rigid use of these very types of metrics when making high stakes personnel decisions. I have pointed out that misuse of this information may raise significant legal concerns. That is, when district administrators do start making teacher or principal dismissal decisions based on these data, some very interesting litigation will likely follow over whether this information really is sufficient for upholding due process (depending largely on how it is applied in the process).

I have pointed out that the originators of the SGP approach have stated in numerous technical documents and academic papers that SGPs are intended to be a descriptive tool and are not for making causal assertions (they are not for “attribution of responsibility”) regarding teacher effects on student outcomes. Yet, the authors persist in encouraging states and local districts to do just that. I certainly expect to see them called to the witness stand the first time SGP information is misused to attribute student failure to a teacher.

But the case of the NY-AIR technical report is somewhat more disconcerting. Here, we have a technically proficient author working for a highly respected organization – American Institutes for Research – ignoring all of the statistical red flags (after waving them), and seemingly oblivious to gaping conceptual holes (commonly understood limitations) between the actual statistical analyses presented and the concluding statements made (and language used throughout).

The conclusions are WRONG – statistically and conceptually. And the author needs to recognize that being so damn bluntly wrong may be consequential for the livelihoods of thousands of individual teachers and principals! Yes, it is indeed another leap for a local school administrator to use their state approved evaluation framework, coupled with these measures, to actually decide to adversely affect the livelihood and potential career of some wrongly classified teacher or principal – but the author of this report has given them the tool and provided his blessing. And that’s inexcusable.