This is just a quick note with a few pictures in response to the TNTP “Irreplaceables” report that came out a few weeks back – a report that is utterly ridiculous at many levels (especially this graph!)… but due to the storm I just didn’t get a chance to address it. But let’s just entertain for the moment the premise that teachers who achieve a value-added rating in the top 20% in a given year are… just plain freakin’ awesome…. and that districts should take whatever steps they can to focus on retaining this specific momentary slice of teachers. At the same time, districts might not want to concern themselves with all of those other teachers that range only from okay… all the way down to those that simply stink!

The TNTP report focuses on teachers who were in the top 14% in Washington DC based on aggregate IMPACT ratings, which do include more than value-added alone, but are certainly driven by the value-added metric. TNTP compares DC to other districts, and explains that teachers in the top 20% by value-added are assumed to be higher performers.

For the other four districts we studied, we used teacher value-added scores or student academic growth measures to identify high- and low-performing teachers—those whose students made much more or much less academic progress than expected. These data provided us with a common yardstick for teacher performance. Teachers scoring in approximately the top 20 percent were identified as Irreplaceables. While teachers of this caliber earn high ratings in student surveys and have been shown to have a positive impact that extends far beyond test scores, we acknowledge that such measures are limited to certain grades and subjects and should not be the only ones used in real-world teacher evaluations. http://tntp.org/assets/documents/TNTP_DCIrreplaceables_2012.pdf

Let’s take a stab at this with the NYC Teacher Value-added Percentiles which I played around with in some previous posts.

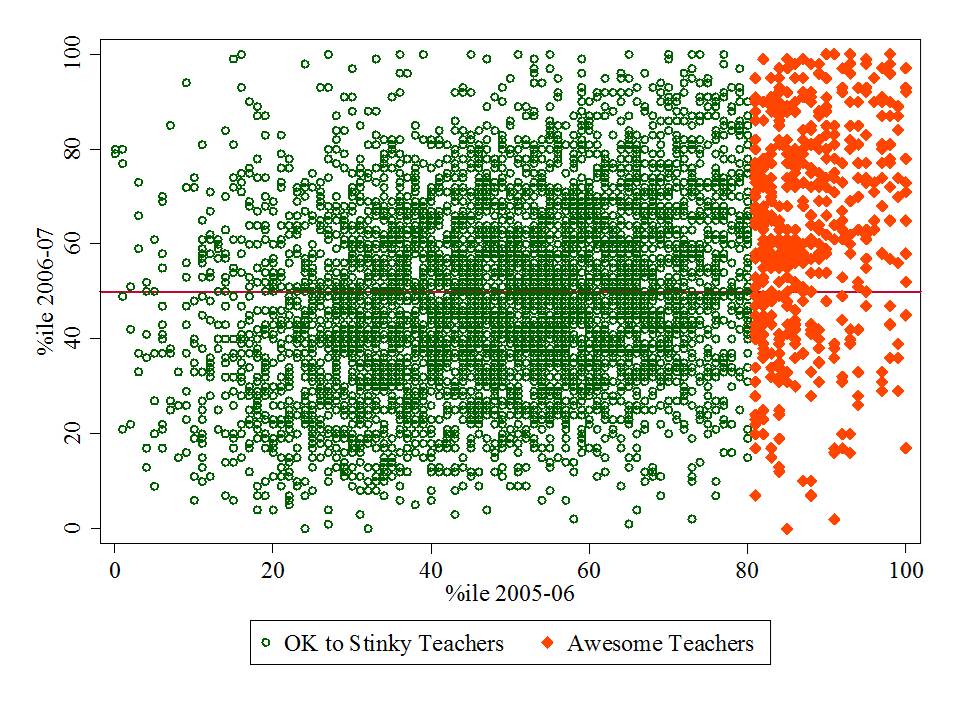

The following graphs play out the premise of “irreplaceables” with NYC value-added percentile data. I start by identifying those teachers that are in the top 20% in 2005-06 and then see where they land in each subsequent year through 2009-10.
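For readers who want to try the same exercise, here is a minimal sketch of the selection step. It assumes a hypothetical layout (a dictionary of percentile ranks keyed by teacher and year) and treats "top 20%" as a percentile of 80 or higher; neither the layout nor the made-up numbers come from the NYC release.

```python
def track_top_group(records, base_year, cutoff=80):
    """Pick teachers at or above `cutoff` in `base_year`, then collect
    their percentiles in every other year for which they have a rating."""
    top = [t for t, by_year in records.items()
           if by_year.get(base_year, -1) >= cutoff]
    later = {}
    for t in top:
        for year, pct in records[t].items():
            if year != base_year:
                later.setdefault(year, []).append(pct)
    return top, later


# Made-up percentiles for illustration only (not real teacher data).
records = {
    "A": {"2005-06": 92, "2006-07": 35, "2007-08": 88},
    "B": {"2005-06": 85, "2006-07": 90},
    "C": {"2005-06": 40, "2006-07": 95},
}
top, later = track_top_group(records, "2005-06")
print(top)    # teachers flagged as "irreplaceable" in the base year
print(later)  # where those same teachers land in later years
```

Plotting the base-year percentile against each list in `later` gives scatterplots of the kind shown in the figures.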

NOTE: IT’S REALLY NOT A GREAT IDEA TO MAKE SCATTERPLOTS OF THE RELATIONSHIP BETWEEN PERCENTILE RANKS – BETTER TO USE THE ACTUAL VAM SCORES. BUT THIS IS ILLUSTRATIVE… THE POINT BEING TO SEE WHETHER ALL OF THOSE DOTS THAT ARE “IRREPLACEABLE” IN YEAR 1 (2005-06) STAY THAT WAY YEAR AFTER YEAR!

I’ve chosen to focus on the MATHEMATICS ratings here… which were actually the more stable ratings from year to year (but were stable potentially because they were biased!)

Figure 1 – Who is irreplaceable in 2006-07 after being irreplaceable in 2005-06?

Figure 1 shows that there are certainly more “irreplaceables” (awesome teachers) that remain above the median the following year than fall below it… but there sure are one heck of a lot of those irreplaceables that are below the median the next year… and a few that are near the 0%ile! This is not, by any stretch, meant to condemn those individuals for being falsely rated as irreplaceable but actually sucking. Rather, this is to point out that there is a comparable likelihood that these teachers were wrongly classified each year (potentially like nearly every other teacher in the mix).

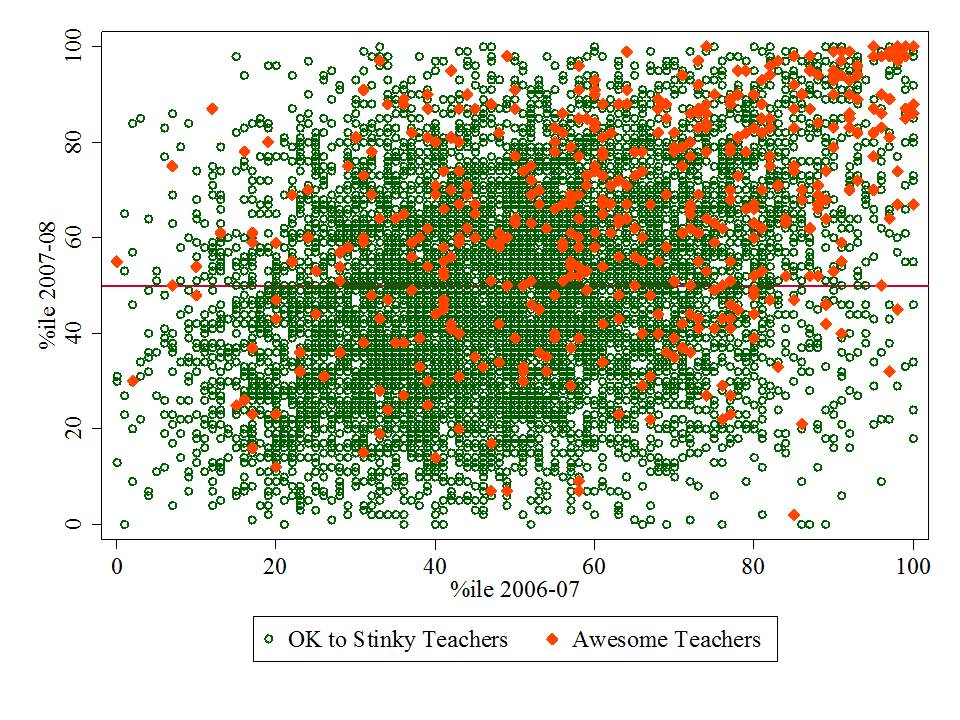

Figure 2 – Among those 2005-06 Irreplaceables, how do they reshuffle between 2006-07 & 2007-08?

Hmm… now they’re moving all over the place. A small cluster do appear to stay in the upper right. But, we are dealing with a dramatically diminishing pool of the persistently awesome here. And I’m not even pointing out the number of cases in the data set that are simply disappearing from year to year. Another post – another day.

I provide an analysis along these lines here: https://schoolfinance101.wordpress.com/2012/03/01/about-those-dice-ready-set-roll-on-the-vam-ification-of-tenure/

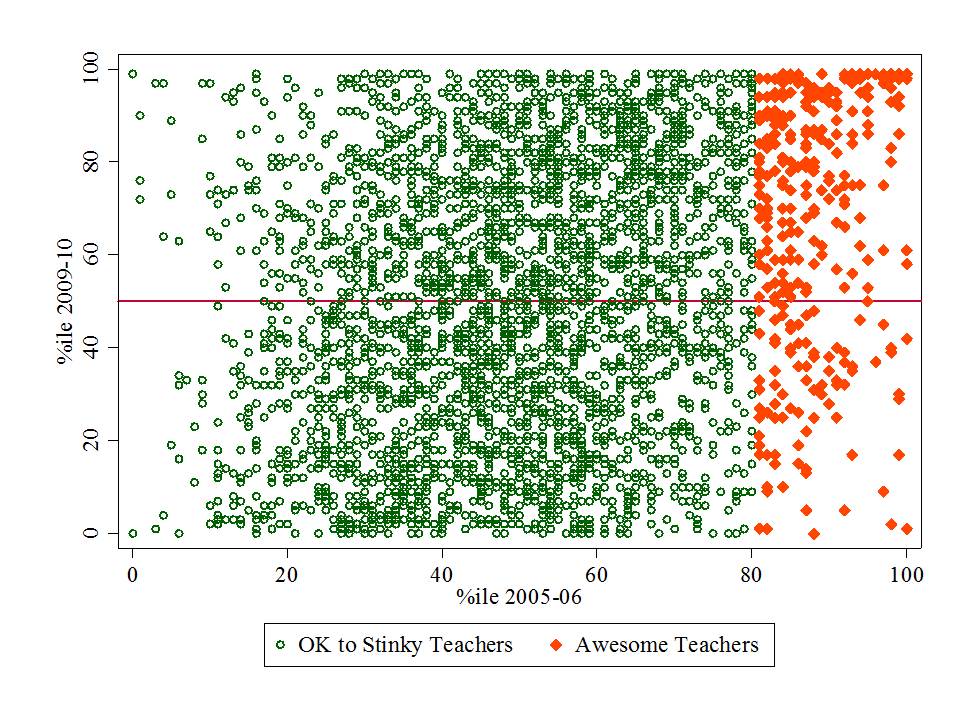

Figure 3 – How many of those teachers who were totally awesome in 2005-06 were still totally awesome in 2009-10?

The relationship between ratings from year to year is even weaker when one looks at the endpoints of the data set, comparing 2005-06 ratings to 2009-10 ones. Again, we’ve got teachers who were supposedly “irreplaceable” in 2005-06 who are at the bottom of the heap in 2009-10.

Yes, there is still a cluster of teachers who had a top 20% rating in 2005-06 and have one again in 2009-10. BUT… many… uh… most of these had a much lower rating for at least one of the in-between years!

Of the thousands of teachers for whom ratings exist for each year, there are 14 in math and 5 in ELA that stay in the top 20% for each year! Sure hope they don’t leave!
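A count like this can be reproduced with a few lines of code. The sketch below is only illustrative: the data layout, the cutoff of 80 and the percentiles are assumptions, and, as in the count above, only teachers with a rating in every year are considered.

```python
def years_in_top(records, years, cutoff=80):
    """For teachers rated in every listed year, count the years in which
    their percentile was at or above `cutoff`."""
    counts = {}
    for teacher, by_year in records.items():
        if all(y in by_year for y in years):
            counts[teacher] = sum(by_year[y] >= cutoff for y in years)
    return counts


# Made-up percentiles for illustration only (not real teacher data).
years = ["2005-06", "2006-07", "2007-08"]
records = {
    "A": {"2005-06": 92, "2006-07": 35, "2007-08": 88},
    "B": {"2005-06": 85, "2006-07": 90, "2007-08": 81},
    "C": {"2005-06": 40, "2006-07": 95},  # missing a year, so excluded
}
counts = years_in_top(records, years)
always_top = [t for t, c in counts.items() if c == len(years)]
print(counts)
print(always_top)
```

The same `counts` dictionary also shows how many teachers land in the top group in only some of the years.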

====

Note: Because the NYC teacher data release did not provide unique identifiers for matching teachers from year to year, for my previous analyses I had constructed a matching identifier based on teacher name, subject and grade level within school. So, my year to year comparisons include only those teachers who are teaching the same subject and grade level in the same school from one year to the next. Arguably, this matching approach might lead to greater stability than might be expected if I included teachers who moved to different schools serving different students and/or changed subject areas or levels.
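As a rough sketch of what such a matching identifier might look like (this is not the exact procedure used for the posts, and the field names `name`, `subject`, `grade`, `school` and `pct` are hypothetical):

```python
def make_key(name, subject, grade, school):
    """Build a matching key from name, subject and grade within school.
    Lower-casing and whitespace cleanup are assumptions added here."""
    def norm(value):
        return " ".join(str(value).lower().split())
    return (norm(name), norm(subject), norm(grade), norm(school))


def match_years(year_a, year_b):
    """Pair up percentile ratings for records whose keys match in both years."""
    index_b = {make_key(r["name"], r["subject"], r["grade"], r["school"]): r
               for r in year_b}
    pairs = []
    for r in year_a:
        key = make_key(r["name"], r["subject"], r["grade"], r["school"])
        if key in index_b:
            pairs.append((r["pct"], index_b[key]["pct"]))
    return pairs


# Made-up records for illustration only.
y1 = [{"name": "Smith, J", "subject": "Math", "grade": 5, "school": "X1", "pct": 91},
      {"name": "Lee, K", "subject": "Math", "grade": 4, "school": "X1", "pct": 60}]
y2 = [{"name": "smith,  j", "subject": "math", "grade": 5, "school": "X1", "pct": 44},
      {"name": "Lee, K", "subject": "Math", "grade": 6, "school": "X1", "pct": 70}]
pairs = match_years(y1, y2)
print(pairs)
```

Note how the second teacher drops out of the comparison after changing grade level, which is exactly the restriction described above.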

Astounding, Bruce! If the unions continue to “cooperate” with this kind of quackery evaluation crap, they deserve to go out of business. Keep up your wonderful work! Dianne

Thanks… yeah… I’ve had it.

I’m interested to know how many teachers cycle through the “Irreplaceable” category at least once over the entire period. The VAM may actually label nearly all teachers as irreplaceable given enough rounds of evaluation.

good point.. I’ll check if/when I get a chance! likely most.

Bruce, could you do (have you done?) the same analysis for the school report cards (a big part of the principal’s evaluation)? And specifically, aren’t schools (or teachers) near the top of the rating in one year more likely to go down than up (merely as a result of ceiling and regression-to-the-mean effects)? Secondly, have you done similar kinds of stability analysis for the school perception surveys (parent surveys) and how they respond to the previous year’s school report card, i.e. are swings in the report card associated with swings in parent perception surveys the next year, like predator-prey cycles (the analogy is not intended)? The potential for cyclical patterns of parents responding to unstable school report card indexes is, I presume, great, since it’s a potential feedback loop….

School report card measures tend to be even more correlated with student population characteristics, and are thus hugely problematic for rating principals or teachers. That’s what the VAM advocates like to hang their hats on – that these VAM or MGP measures are less biased (they argue that they are unbiased) and therefore good measures. Being simply “less bad” is no basis for good evaluation!

The researchers at TNTP may have overlooked this chart from the DCPS Office of Human Chattel (January 2012). On page 15, “Teacher Retention,” the box for staff listings reads: “Team to be hired in coming months.”

Click to access fy11_12_agencyperformance_dcps_officeofhumancapital_orgchart.pdf

I’m curious to know what these plots might look like if the likelihood of being “totally awesome” were in fact almost totally random. Would you still get 5 ELA teachers who were “totally awesome” five years in a row? I remember when I stayed home sick from school one day (I was about ten) and my stepfather, a math professor, took me with him to his Statistics class. In his lecture that day, he considered Reggie Jackson’s feat of hitting four homers in a World Series game (1978?). My stepfather calculated that it was highly likely that SOME journeyman slugger would accomplish this feat, even if the probability of his hitting a home run was exactly the same (say 30/500) on every at bat. This kind of calculation seems relevant here…
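A back-of-the-envelope version of that calculation, assuming (unrealistically) that landing in the top 20% is an independent 1-in-5 draw each year. The teacher count of 3,000 is a made-up placeholder, since the post only says "thousands".

```python
def expected_always_top(n_teachers, p_top=0.2, n_years=5):
    """Expected number of teachers in the top group every single year
    if each year's result were an independent draw."""
    return n_teachers * p_top ** n_years


def prob_at_least_one(n_teachers, p_top=0.2, n_years=5):
    """Chance that at least one teacher stays in the top group every year
    under the same independence assumption."""
    return 1 - (1 - p_top ** n_years) ** n_teachers


n = 3000  # hypothetical count of teachers rated in all five years
print(expected_always_top(n))
print(prob_at_least_one(n))
```

Under these assumptions, pure chance yields about one "five years in a row" teacher per 3,000, so a handful of persistent top scorers in a pool of thousands is not far from what dice alone would produce.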

Much of the pattern may be due to regression to the mean, and the year-to-year correlation seems to be about 0.7 or so. That would be easy to calculate. School and class size would be a huge factor. The top and bottom teachers (and most variable teachers) would be associated with small classes and small schools. The school size would affect the number of teachers, and it would be much easier to be in the top (or bottom) 20% in a small school. If the VA ratings were across the entire system, then class size would still be important but school size less important.
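To see how much reshuffling regression to the mean alone would produce, here is a small simulation sketch. It assumes ratings in two years are bivariate normal with correlation `rho`; the 0.7 is the guess above, not an estimate from the NYC data, and the sample size and seed are arbitrary.

```python
import random


def share_of_top_below_median(rho, n=20000, seed=0):
    """Simulate two years of scores with correlation `rho` and return the
    share of year-1 top-20% teachers who fall below the year-2 median."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(n):
        x = rng.gauss(0, 1)
        # Year-2 score: shared component plus independent noise.
        y = rho * x + (1 - rho ** 2) ** 0.5 * rng.gauss(0, 1)
        pairs.append((x, y))
    xs = sorted(p[0] for p in pairs)
    ys = sorted(p[1] for p in pairs)
    top_cut = xs[int(0.8 * n)]   # 80th percentile of year-1 scores
    median_y = ys[n // 2]        # median of year-2 scores
    top = [y for x, y in pairs if x >= top_cut]
    return sum(y < median_y for y in top) / len(top)


print(share_of_top_below_median(0.7))
print(share_of_top_below_median(0.0))  # pure noise, for comparison
```

With `rho` set to 0 about half of the year-1 top group lands below the median the next year, and a higher correlation shrinks that share without eliminating it.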