Here’s an excerpt from a forthcoming article on whether school finance reforms have made any difference for students. The article is partly in response to claims by Eric Hanushek and Alfred Lindseth that school finance reforms have resulted in massive increases in funding to public schools which have not helped and may have in fact harmed children. My forthcoming work on this topic is co-authored with Kevin Welner of U. of Colorado.

=====

In terms of quality and scope, the most useful single study of judicially induced state finance reform was published by Card and Payne in 2002. They found that court declarations of unconstitutionality in the 1980s increased the relative funding provided to low-income districts. And they found that these school finance reforms had, in turn, significant equity effects on academic outcomes:

Using micro samples of SAT scores from this same period, we then test whether changes in spending inequality affect the gap in achievement between different family background groups. We find evidence that equalization of spending leads to a narrowing of test score outcomes across family background groups. (p. 49)

To evaluate distributional changes in school finance, Card and Payne estimated the partial correlations between current expenditures per pupil and median family income, conditional on other factors influencing demand for public schooling across districts within states and over time. Card and Payne then measured the differences in the change in income-associated spending distribution between states where school funding systems had been overturned, upheld, or where no court decision had been rendered. Importantly, they also evaluated whether structural changes to funding formulas (that is, the actual reforms) were associated with changes to the income-spending relationship, conditional on the presence of court rulings.
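
To make the idea of an income-spending relationship concrete, here is a minimal sketch in Python. It is not Card and Payne's specification: it fits a simple bivariate slope with no controls, and the district incomes and spending figures are invented purely for illustration. The helper name `ols_slope` is ours.

```python
# Illustrative only: every district figure below is hypothetical.

def ols_slope(x, y):
    """Return the least-squares slope of y regressed on x (no controls)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    var_x = sum((xi - mean_x) ** 2 for xi in x)
    return cov_xy / var_x

# Median family income by district, in thousands of dollars (made up).
income = [30, 40, 50, 60, 70]
# Current spending per pupil, in thousands of dollars (made up).
spending_before = [5.0, 5.5, 6.0, 6.5, 7.0]
spending_after = [6.4, 6.6, 6.8, 7.0, 7.2]

slope_before = ols_slope(income, spending_before)
slope_after = ols_slope(income, spending_after)

print(f"slope before reform: {slope_before:.3f}")
print(f"slope after reform:  {slope_after:.3f}")
print(f"change in slope:     {slope_after - slope_before:.3f}")
```

In this toy case the slope flattens after the reform, meaning spending is less tightly tied to district income. A comparison in the spirit of the study would then ask whether that flattening differs across states by court outcome.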

To make the final link between income-spending relationships and outcome gaps, Card and Payne evaluated changes in gaps in SAT scores among individual SAT test-takers categorized by family background characteristics.[1] Put in terms of our Figure 1, Card and Payne (2002) appear to have taken the greatest care in a multi-year, cross-state study, to establish appropriate linkages between litigation, reforms by type, changes in the distribution of funding, and related changes in the distribution of outcomes.

Notwithstanding the generally acknowledged importance of this study,[2] Hanushek and Lindseth (2009) never mention it in their book, including the chapter in which they conclude that school finance reforms have no positive effects.

This omission – as well as the other omissions noted below – points to a larger issue. The development of, and reliance upon, a research base should depend on relatively objective criteria. Readers depend on authors of literature reviews to come forward with the best and most applicable research bearing on the issues under consideration. While Hanushek and Lindseth might argue that this particular omission is because Card and Payne (2002) are speaking to equity (not adequacy) litigation, we have already described how the line between equity and adequacy is not so clear. Moreover, the research that Hanushek and Lindseth do choose to include goes far beyond that directly focused on adequacy – including the Cato study of a Kansas City desegregation order discussed below.

Another key study not mentioned by Hanushek and Lindseth (2009) concerned the effects of reforms implemented under the Kansas court’s pre-ruling in 1992 (Deke, 2003). The reforms leveled up funding in low-property-wealth school districts, and Deke found as follows:

Using panel models that, if biased, are likely biased downward, I have a conservative estimate of the impact of a 20% increase in spending on the probability of going on to postsecondary education. The regression results show that such a spending increase raises that probability by approximately 5% (p. 275).

The Kansas reforms addressed by Deke (2003) came as a result of a judicial pre-order, advising the legislature that if the pending suit made it to trial, the judge would declare the school finance system unconstitutional (Baker and Green, 2006).

Hanushek and Lindseth (2009) also omitted from their discussion two additional studies, both peer-reviewed, that explore the effects of Michigan’s school finance reforms, known as “Proposal A,” implemented in the mid-1990s. Michigan’s reforms were implemented without a court ruling or a high level of litigation threat, but the reforms were nonetheless comparable in many ways to reforms implemented following judicial rulings[3] (see Leuven et al., 2007; and Papke, 2001). In the first study, Papke (2001) finds:

Focusing on pass rates for fourth-grade and seventh grade math tests (the most complete and consistent data available for Michigan), I find that increases in spending have nontrivial, statistically significant effects on math test pass rates, and the effects are largest for schools with initially poor performance. (Papke, 2001, p. 821.)

In the second, Leuven and colleagues (2007) find no positive effects of two specific increases in funding targeted to schools with elevated at-risk populations, a conclusion that would have been convenient for Hanushek and Lindseth to include.

A third Michigan study (available online since 2003 as a working paper from Princeton University, and now accepted for publication in Education Finance and Policy, a peer-reviewed journal) directly estimates the relationship between implemented reforms and subsequent outcomes (Roy, 2003). Roy, whose work was not cited by Hanushek and Lindseth, finds:

Proposal A was quite successful in reducing inter-district spending disparities. There were also significant gains in achievement in the poorest districts, as measured by success in state tests. However, as yet these improvements do not show up in nationwide tests like NAEP and ACT. (Roy, 2003, p. 1.)

Most recently, a study by Choudhary (2009) “estimate[s] the causal effect of increased spending on 4th and 7th grade math scores for two test measures—a scale score and a percent satisfactory measure” (p. 1). She “find[s] positive effects of increased spending on 4th grade test scores. A 60% percent increase in spending increases the percent satisfactory score by one standard deviation” (p. 1).

Perhaps because there was no judicial order involved in Michigan, researchers were able to avoid the tendency to focus on or classify the judicial order. Moreover, single-state studies generally avoid such problems because there is little statistical purpose in classifying litigation. Importantly, each of these studies focuses instead on measures of the changing distribution and level of spending (characteristics of the reforms themselves) and resulting changes in the distribution and level of outcomes. Each takes a different approach but attempts to align its measures of spending change and outcome change appropriately, adhering to the principles laid out in our Figure 1.

Other high-quality but non-peer reviewed empirical estimates of the effects of specific school finance reforms linked to court orders have been published for Vermont and Massachusetts. For example, Downes (2004), in an evaluation of Vermont school finance reforms that were ordered in 1997 and implemented in 1998, found as follows:

All of the evidence cited in this paper supports the conclusion that Act 60 has dramatically reduced dispersion in education spending and has done this by weakening the link between spending and property wealth. Further, the regressions presented in this paper offer some evidence that student performance has become more equal in the post–Act 60 period. And no results support the conclusion that Act 60 has contributed to increased dispersion in performance. (p. 312)

Hanushek and Lindseth (2009) never acknowledge this positive finding (although they do briefly cite the Downes evaluation, for a different point). Again, one might attribute this omission to the argument that the Vermont reforms were equity reforms, not adequacy reforms. However, similar to the 1992 Kansas reforms, the overall effect of the Vermont Act 60 reforms was to level up low-wealth districts and increase state school spending dramatically, thus addressing both adequacy and equity.

For Massachusetts, two independent sets of authors (in addition to Hanushek and Lindseth) have found positive reform effects. Most recently — after the Hanushek and Lindseth book was written — Downes, Zabel and Ansel (2009) found:

The achievement gap notwithstanding, this research provides new evidence that the state’s investment has had a clear and significant impact. Specifically, some of the research findings show how education reform has been successful in raising the achievement of students in the previously low-spending districts. Quite simply, this comprehensive analysis documents that without Ed Reform the achievement gap would be larger than it is today. (p. 5)

Previously, Guryan (2003) found:

Using state aid formulas as instruments, I find that increases in per-pupil spending led to significant increases in math, reading, science, and social studies test scores for 4th- and 8th-grade students. The magnitudes imply a $1,000 increase in per-pupil spending leads to about a third to a half of a standard-deviation increase in average test scores. It is noted that the state aid driving the estimates is targeted to under-funded school districts, which may have atypical returns to additional expenditures. (p. 1)

Although Hanushek and Lindseth concede that Massachusetts reforms appear successful,[4] they failed to cite Guryan’s NBER working paper, the inclusion of which would have (like most other omitted studies) weakened their overall conclusions about the non-impact of these reforms.

Turning to New Jersey, two recent (though not yet peer-reviewed) studies find positive effects of that state’s finance reforms. Alexandra Resch (2008), in a study published as a dissertation for the economics department at the University of Michigan, found evidence suggesting that New Jersey Abbott districts “directed the added resources largely to instructional personnel” (p. 1) such as additional teachers and support staff. She also concluded that this increase in funding and spending improved the achievement of students in the affected school districts. Looking at the statewide 11th grade assessment (“the only test that spans the policy change”), she found “that the policy improves test scores for minority students in the affected districts by one-fifth to one-quarter of a standard deviation” (p. 1).

The second recent study was originally presented at a 2007 conference at Columbia University, and a revised, peer-reviewed version was recently published by the Campaign for Educational Equity at Teachers College, Columbia University (Goertz and Weiss, 2009). This paper offered descriptive evidence that reveals some positive test results of recent New Jersey school finance reforms:

State Assessments: In 1999 the gap between the Abbott districts and all other districts in the state was over 30 points. By 2007 the gap was down to 19 points, a reduction of 11 points or 0.39 standard deviation units. The gap between the Abbott districts and the high-wealth districts fell from 35 to 22 points. Meanwhile performance in the low-, middle-, and high-wealth districts essentially remained parallel during this eight-year period (Figure 3, p. 23).

NAEP: The NAEP results confirm the changes we saw using state assessment data. NAEP scores in fourth-grade reading and mathematics in central cities rose 21 and 22 points, respectively between the mid-1990s and 2007, a rate that was faster than the urban fringe in both subjects and the state as a whole in reading (p. 26).
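
The standard-deviation figure in the state assessment passage lets us back out the scale the authors appear to be using. The sketch below does that arithmetic in Python. Its only inputs are the numbers quoted above; applying the same standard deviation to the gap between Abbott and high-wealth districts is our assumption, not something Goertz and Weiss report.

```python
# Figures quoted from Goertz and Weiss (2009), state assessments.
gap_reduction_points = 11    # reduction in the Abbott vs. all-other-districts gap
gap_reduction_sd = 0.39      # the same reduction, in standard deviation units

# Standard deviation (in scale points) implied by those two figures.
implied_sd = gap_reduction_points / gap_reduction_sd

# Abbott vs. high-wealth gap: 35 points in 1999, 22 points in 2007.
high_wealth_reduction_points = 35 - 22

# Assumption: the same implied standard deviation applies to this comparison.
high_wealth_reduction_sd = high_wealth_reduction_points / implied_sd

print(f"implied SD: {implied_sd:.1f} points")
print(f"Abbott vs. high-wealth reduction: {high_wealth_reduction_sd:.2f} SD")
```

Under that assumption, the implied standard deviation is roughly 28 scale points, and the 13-point narrowing against the high-wealth districts works out to about 0.46 standard deviation units.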

The Goertz and Weiss paper (which was, as designed and intended by the paper’s authors, the statistically least rigorous analysis of the ones presented here) does receive mention from Hanushek and Lindseth multiple times, but only in an effort to discredit and minimize its findings.

Card, D. and Payne, A. A. (2002). School Finance Reform, the Distribution of School Spending, and the Distribution of Student Test Scores. Journal of Public Economics, 83(1), 49-82.

Choudhary, L. (2009). Education Inputs, Student Performance and School Finance Reform in Michigan. Economics of Education Review, 28(1), 90-98.

Deke, J. (2003). A study of the impact of public school spending on postsecondary educational attainment using statewide school district refinancing in Kansas. Economics of Education Review, 22(3), 275-284.

Downes, T. A. (2004). School Finance Reform and School Quality: Lessons from Vermont. In Yinger, J. (ed.), Helping Children Left Behind: State Aid and the Pursuit of Educational Equity. Cambridge, MA: MIT Press.

Downes, T. A., Zabel, J., and Ansel, D. (2009). Incomplete Grade: Massachusetts Education Reform at 15. Boston, MA: MassINC.

Goertz, M., and Weiss, M. (2009). Assessing Success in School Finance Litigation: The Case of New Jersey. New York City: The Campaign for Educational Equity, Teachers College, Columbia University.

Guryan, J. (2003). Does Money Matter? Estimates from Education Finance Reform in Massachusetts. Working Paper No. 8269. Cambridge, MA: National Bureau of Economic Research.

Leuven, E., Lindahl, M., Oosterbeek, H., and Webbink, D. (2007). The Effect of Extra Funding for Disadvantaged Pupils on Achievement. The Review of Economics and Statistics, 89(4), 721-736.

Resch, A. M. (2008). Three Essays on Resources in Education (dissertation). Ann Arbor: University of Michigan, Department of Economics. Retrieved October 28, 2009, from http://deepblue.lib.umich.edu/bitstream/2027.42/61592/1/aresch_1.pdf

Roy, J. (2003). Impact of School Finance Reform on Resource Equalization and Academic Performance: Evidence from Michigan. Princeton University, Education Research Section Working Paper No. 8. Retrieved October 23, 2009 from http://papers.ssrn.com/sol3/papers.cfm?abstract_id=630121 (Forthcoming in Education Finance and Policy.)

[1] Card and Payne provide substantial detail on their methodological attempts to negate the usual role of selection bias in SAT test-taking patterns. They also explain that their preference was to measure more directly the effects of income-related changes in current spending per pupil on income-related changes in SAT performance, but that the income measures in their SAT database were unreliable and could not be corroborated by other sources. As such, Card and Payne used combinations of parent education levels to proxy for income and socio-economic differences between SAT test takers.

[2] As one indication of its prominence among researchers, as of the writing of this article, Google Scholar identified 153 citations to this article.

[3] There is little reason to assume that the presence of judicial order would necessarily make otherwise similar reforms less (or more) effective, though constraints surrounding judicial remedies may.

[4] Hanushek and Lindseth attribute the success of the Massachusetts reforms not to spending, but to the fact that the “remedial steps passed by the legislature also included a vigorous regime of academic standards, a high-stakes graduation test, and strict accountability measures of a kind that have run into resistance in other states, particularly from teachers unions” (p. 169). That is, it was not the funding that mattered in Massachusetts, but rather it was the accountability reforms that accompanied the funding.

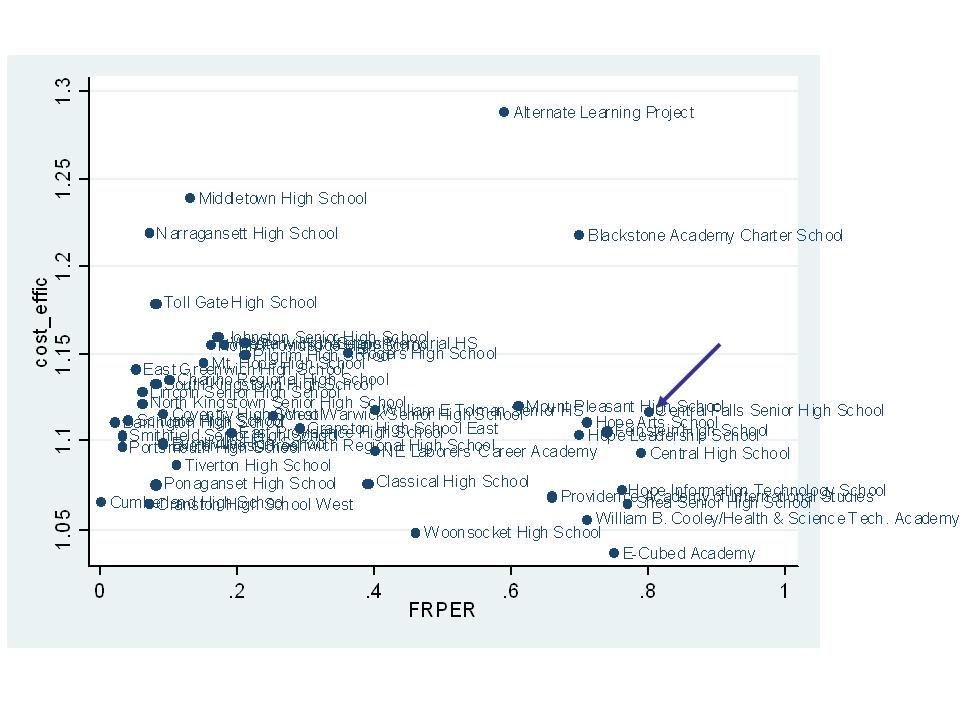

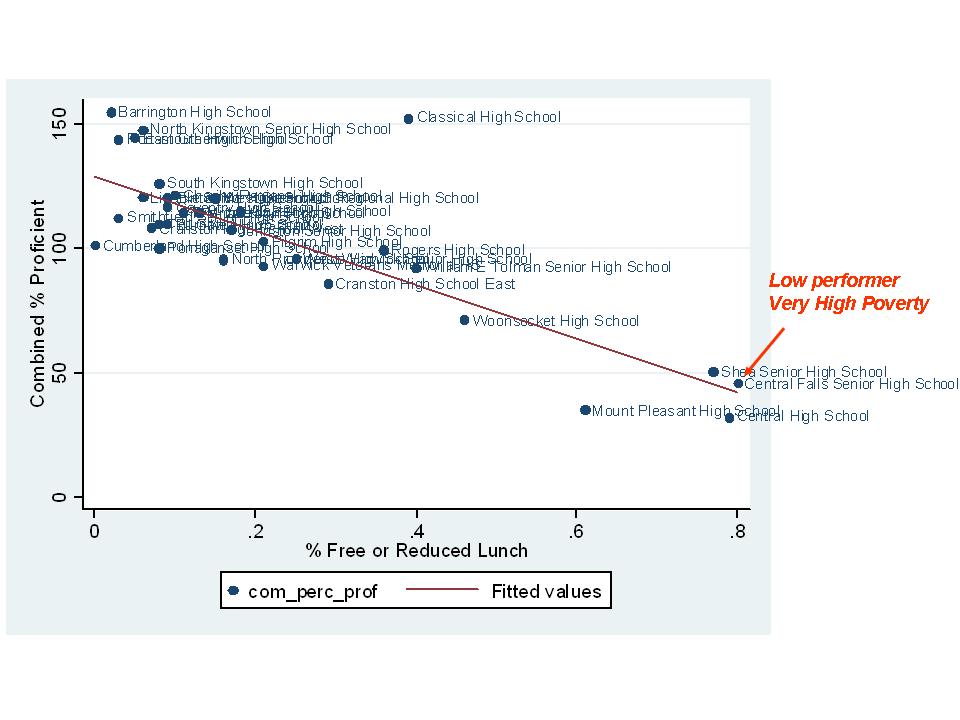

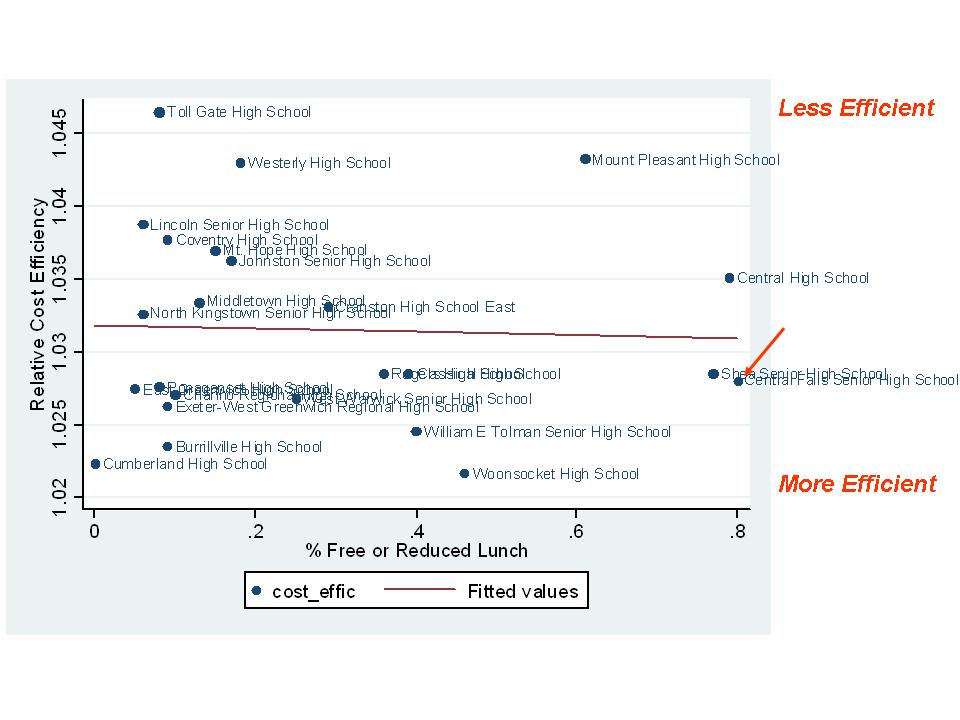

Yes, Central Falls is in tough shape – very high poverty and relatively low performing. But, not really off the trendline (above it, if anything) for performance given its poverty level and better than other high schools of similar poverty.

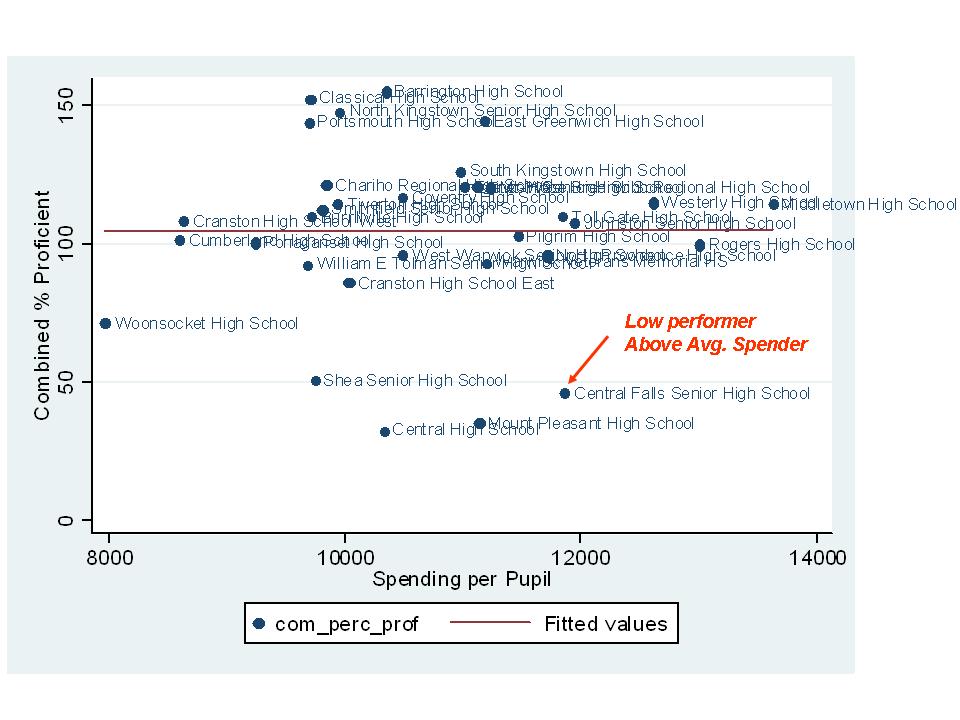

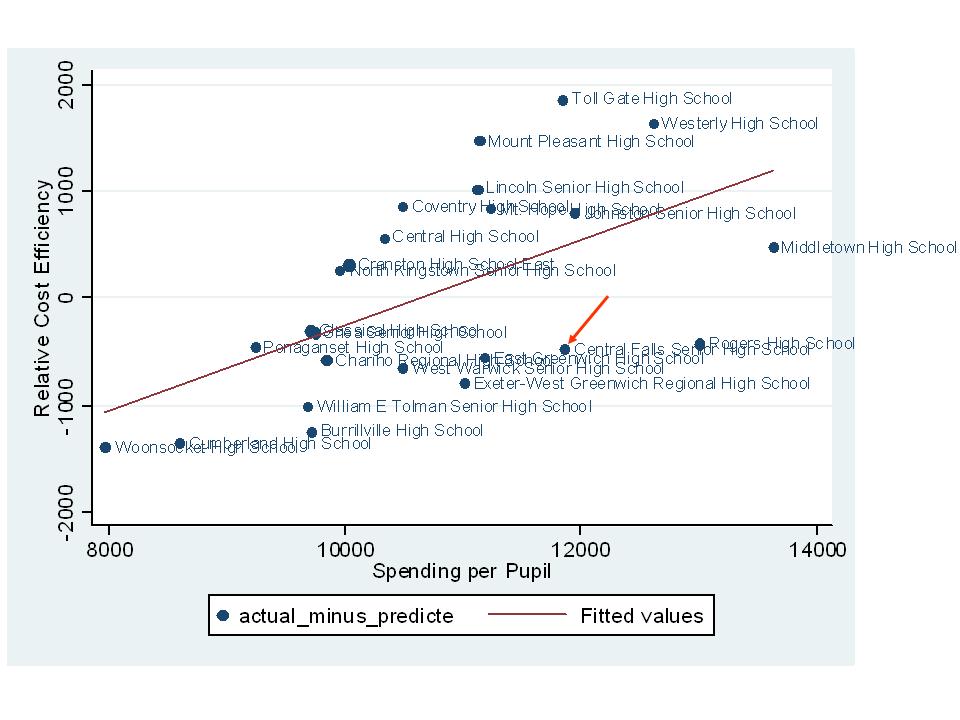

Central Falls spending is somewhat above average. But again, its student needs are far greater than average – in fact, they are on the outer edge of the entire distribution. So, it is unlikely that “somewhat above average” per pupil spending is going to fully compensate for their high needs.

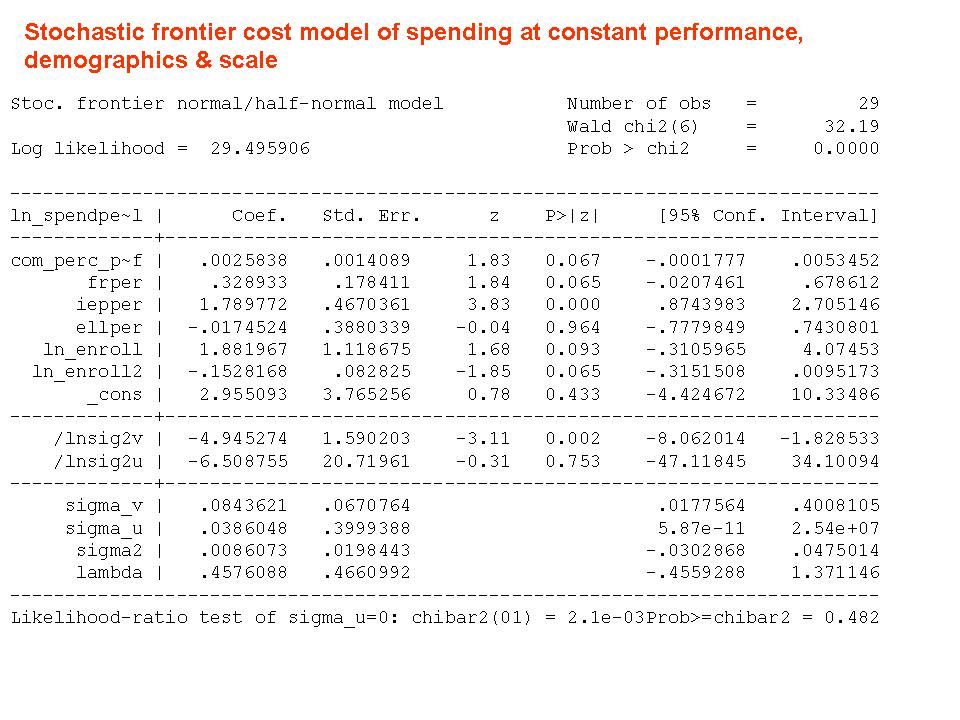

As it turns out, the relative efficiency of Central Falls HS stacks up pretty well with other Rhode Island High Schools. That is, the actual spending per pupil in Central Falls is not far off from the predicted amount to achieve their current outcomes, with their current population.

This seems like a fairer comparison than simply casting stones at Central Falls teachers for their miserable test scores.
