This post comes from a work in progress… and addresses games states play to validate their choices to spend less than might actually be needed in order to achieve desired outcome standards. This post will be followed by another which reviews three major smokescreens commonly used to argue that none of this matters anyway.

=====

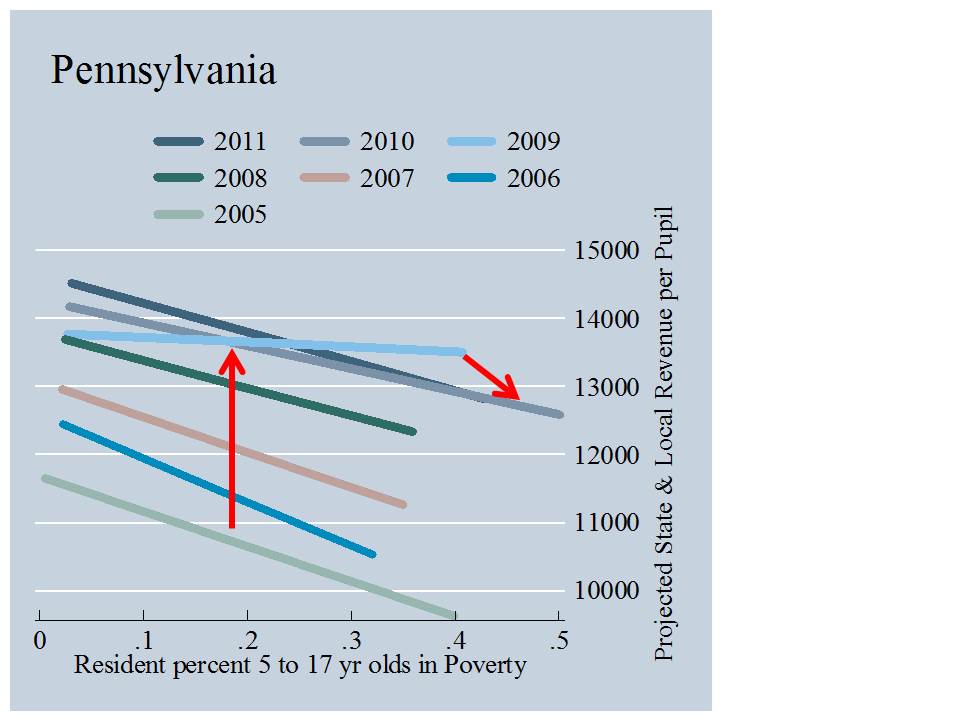

Over the past two decades in particular, states and advocacy groups have attempted with increasing frequency to define the amount of funding necessary for achieving adequate educational outcomes. One might characterize the period as the rise of empiricism in school finance, which coincided with a shift in litigation strategies from an emphasis on funding equity to an emphasis on funding adequacy – specifically, whether funding was adequate either to provide specific programs and services or to achieve specific measured educational outcomes. In some cases, states have adopted their empirical strategy in response to judicial orders that the legislature comply with a state constitutional mandate for the provision of an adequate education. In other cases, states have proactively set out to validate spending targets they know they can already meet (or have already met), in order to claim a political victory on school finance reform.

Prior to this new “empirical era,” total state budgets were set based on the political preferences of governors and legislators regarding state tax policy and the revenues the state tax system was expected to produce. Revenue projections, based on politically palatable tax policy, divided by the number of children to be served, generated the average per pupil amount of available aid. Then the tug of war over shifting distributions toward one constituency, and thus away from another, ensued. The biggest difference between this approach and current approaches, if any, is that state policymakers are now more likely to argue that the amount backed into via the same steps is in fact an empirically valid estimate of the funding needed for children to achieve adequate outcomes.

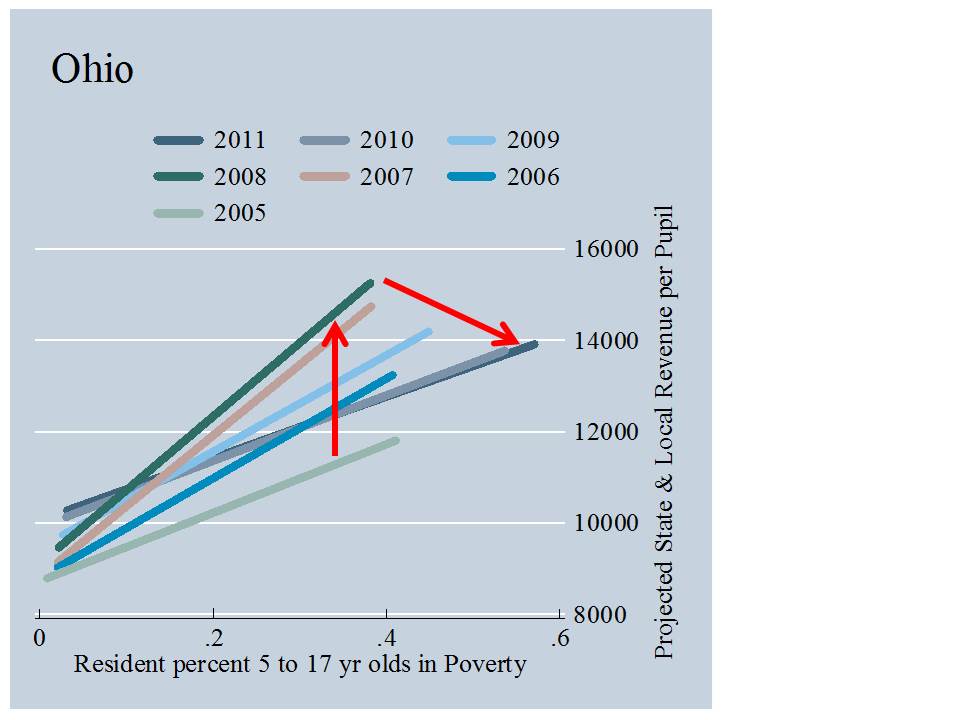

Baker, Taylor and Vedlitz (2005) provide an explanation of early gaming of estimates of the costs of providing an adequate education in Illinois and Ohio in the 1990s.

Augenblick and Colleagues provide multiple cost estimates for Illinois based on different outcome standards, using single or multiple years of data and including some or all outcome standards. The higher of the two figures in Table 5 represents the average expenditures of Illinois school districts which, using 1999-2000 data, had 83% of students meeting or exceeding the standard for improvement over time. The lower of the two figures is based on the average expenditure of districts which, using 2000 data only, had 67% of pupils meet or exceed the standards, and 50% meeting standards on all tests.

Similar issues exist in a series of successful schools cost estimates produced in Ohio a year earlier. In Ohio, however, estimates were derived and proposed amidst the political process, with various constituents picking and choosing their data years and outcome measures to yield the desired result. Two Ohio estimates are provided in the table, but multiple estimates were actually prepared based on different subsets of districts meeting different outcome standards. The Governor’s office chose 43 districts meeting 20 of 27 1999 standards, the Senate selected 122 districts meeting 17 of 18 1996 standards, the House chose 45 districts meeting all 18 original standards in 1999, and the House again in an amended bill used 127 districts meeting 17 of 18 1996 standards in 1996 and 20 of 27 standards in 1999. (Baker, Taylor, Vedlitz, 2005, p. 15)

Put simply, legislators in Ohio backed into outcome standards to identify that subset of school districts that on average were spending what the state was willing to spend within its current budget.
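To make the mechanics concrete, here is a minimal sketch of how one can back into an outcome standard from a budget. The district names, spending figures and standards counts are invented for illustration and are not Ohio data. The helper names (`successful_average`, `back_into_standard`) are likewise hypothetical. The sketch computes a "successful schools" average under several candidate standards, then picks whichever standard yields the average closest to what the state is already willing to spend.

```python
from statistics import mean

# Hypothetical districts: (name, per-pupil spending, standards met out of 18).
# These figures are invented for illustration and are NOT Ohio data.
DISTRICTS = [
    ("A", 5200, 18),
    ("B", 6100, 18),
    ("C", 4800, 17),
    ("D", 5000, 17),
    ("E", 4500, 16),
    ("F", 7000, 18),
    ("G", 4300, 15),
]


def successful_average(districts, min_standards_met):
    """Average spending of districts meeting at least `min_standards_met` standards."""
    spending = [spend for _, spend, met in districts if met >= min_standards_met]
    return mean(spending) if spending else None


def back_into_standard(districts, budget_per_pupil, candidate_thresholds):
    """Return the threshold whose 'successful' average is closest to the budget,
    along with the estimate produced by every usable threshold."""
    estimates = {t: successful_average(districts, t) for t in candidate_thresholds}
    usable = {t: e for t, e in estimates.items() if e is not None}
    chosen = min(usable, key=lambda t: abs(usable[t] - budget_per_pupil))
    return chosen, usable


# Assumed budget of 5,000 per pupil (hypothetical).
chosen, estimates = back_into_standard(
    DISTRICTS, budget_per_pupil=5000, candidate_thresholds=[15, 16, 17, 18]
)
for threshold in sorted(estimates):
    print(threshold, round(estimates[threshold], 2))
print("standard chosen to fit the budget:", chosen)
```

In this toy data, loosening the standard pulls lower-spending districts into the "successful" group and lowers the estimate, so the budget, not the outcome goal, ends up determining the standard.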

New York’s Numbers Game

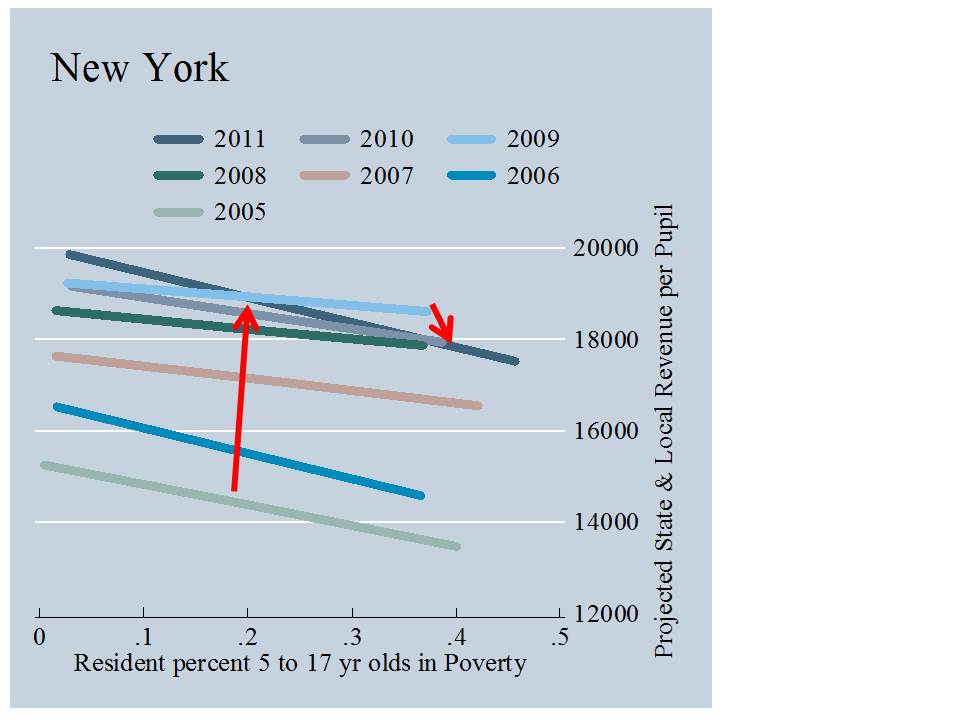

More recent school finance reforms in New York State reveal that similar games persist. In response to a court order in Campaign for Fiscal Equity v. State, the legislature adopted a foundation aid formula to be phased in from 2007 to 2011, in which the basic funding level would be set as follows:

The Foundation Amount is the cost of providing general education services. It is measured by determining instructional costs of districts that are performing well. (NYSED, Primer on State Aid, 2011-12)

The state defined “performing well” as a standard of 80% of children scoring proficient or higher on state assessments, a performance level marginally lower than the statewide mean at the time.

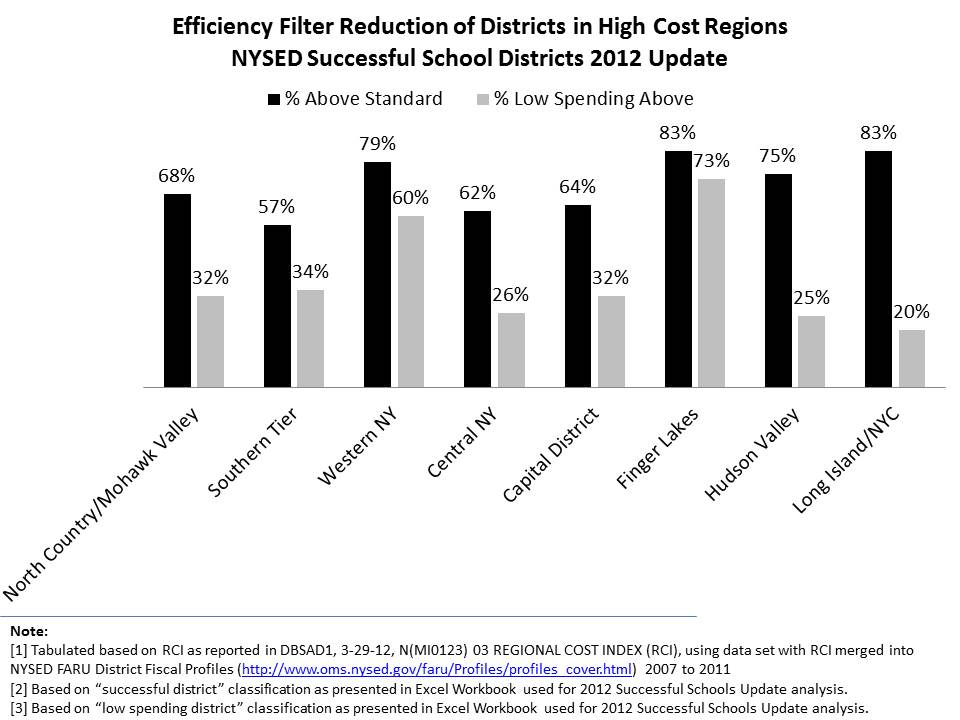

In constructing their baseline cost estimates, state officials adopted a handful of additional steps to ensure a politically palatable, low basic cost estimate. First, state officials chose to consider only the average spending of those districts that were both “performing well” and in the lower half of spending among those performing well. By taking this step, nearly all districts in the higher cost regions of the state are excluded and thus have limited effect on the basic cost estimate. Figure 1 shows that across regions, about 60 to 80% of districts meet the “successful” standard. In Western New York and the Finger Lakes region, about 73% of districts are both “successful” and low spending. But while 75 to 83% of Hudson Valley and Long Island districts are “successful,” only 20 to 25% are in the lower half of spending (even after applying the state’s regional cost adjustment, which is clearly inadequate).

Thus, basic costs for districts statewide are measured largely against the average spending of districts lying somewhere in the triangle between Ithaca, Buffalo and Syracuse. Spending behavior of these districts has little relevance to costs of providing adequate education in and around New York City.
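The filtering step described above can be sketched in a few lines. This is an illustration under stated assumptions, not the state's actual calculation: the district records are invented, the region labels are simplified to "upstate" and "downstate", the function names are hypothetical, and the state's regional cost adjustment is omitted entirely. The only figure taken from the text is the 80% proficiency bar.

```python
from statistics import mean, median

# Hypothetical districts: (region, per-pupil spending, percent proficient).
# Invented values for illustration only; NOT NYSED data.
DISTRICTS = [
    ("upstate", 9000, 85),
    ("upstate", 9500, 82),
    ("upstate", 10000, 78),
    ("upstate", 9800, 81),
    ("downstate", 14000, 88),
    ("downstate", 15000, 84),
    ("downstate", 13500, 79),
    ("downstate", 9700, 83),
]

PROFICIENCY_BAR = 80  # the 80% "performing well" standard described in the text


def successful(districts, bar=PROFICIENCY_BAR):
    """Keep districts at or above the proficiency bar."""
    return [d for d in districts if d[2] >= bar]


def lower_half_by_spending(districts):
    """Keep districts at or below the median spending of the group passed in."""
    cutoff = median(d[1] for d in districts)
    return [d for d in districts if d[1] <= cutoff]


def region_count(districts, region):
    """Count districts belonging to the given region."""
    return sum(1 for d in districts if d[0] == region)


performing_well = successful(DISTRICTS)
filtered = lower_half_by_spending(performing_well)

all_successful_avg = mean(d[1] for d in performing_well)
filtered_avg = mean(d[1] for d in filtered)

print("average, all successful districts:", round(all_successful_avg, 2))
print("average, lower-spending half only:", round(filtered_avg, 2))
print("downstate districts surviving the filter:",
      region_count(filtered, "downstate"), "of",
      region_count(performing_well, "downstate"))
```

With these made-up numbers, most high-spending "successful" districts drop out at the second filter, so the resulting average mostly reflects spending in the lower cost region.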

Figure 1

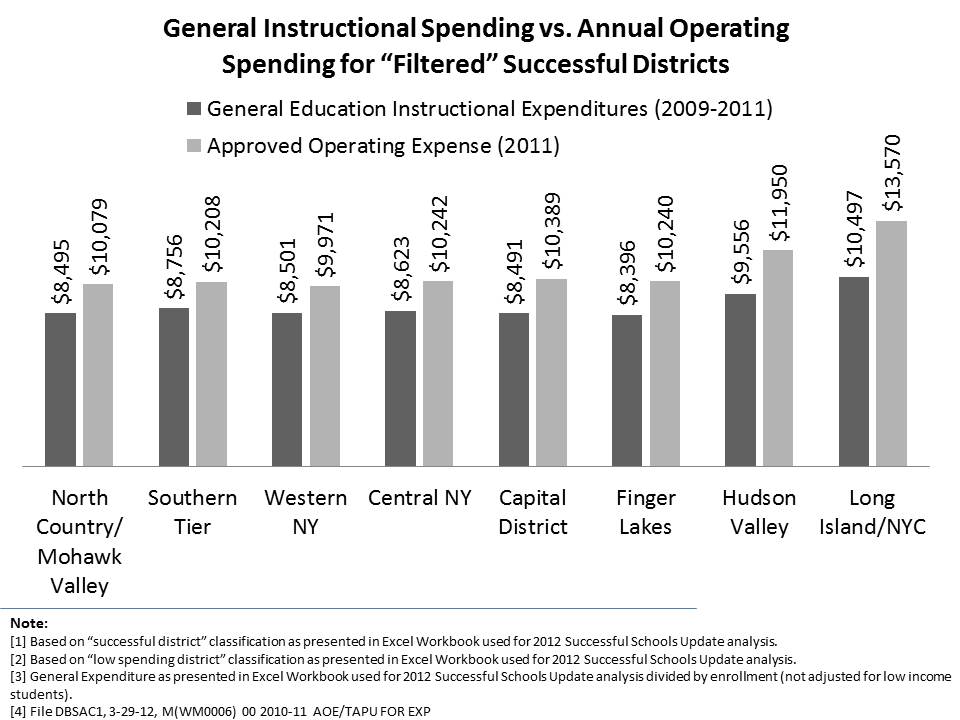

Another step in the process further deflates basic cost estimates. Instead of adopting a comprehensive measure of annual operating expenditures, the state chose a pruned-down “general instructional spending” figure. This pruned figure is substantially lower than the state’s approved operating expense figure, particularly for downstate districts, as shown in Figure 2.

Figure 2

The combination of a) setting a low outcome bar, b) filtering out districts in higher cost regions of the state and c) selecting a partial spending figure rather than a more comprehensive one guarantees a more politically palatable minimum cost estimate, while still providing a veneer of empirical validity.

Despite taking such care to generate a low estimate of adequate spending to undergird the state foundation aid formula, the state has in recent years failed to come even close to funding the targets established by the formula, providing less than half of the target levels of aid required for many of the state’s highest need districts.

Rhode Island’s Numbers Game

Perhaps most ludicrous of all is Rhode Island public officials’ attempt to validate empirically their selected spending levels for recent school finance reforms. Rhode Island’s school finance reforms gained significant attention among policy think tanks as a model of proactive political collaboration leading to progressive, empirically based but elegantly simple reform (Wong, 2013). As described in official documents, the basic funding level for the Rhode Island formula is set as follows:

(1) The core instruction amount shall be an amount equal to a statewide per pupil core instruction amount as established by the department of elementary and secondary education, derived from the average of northeast regional expenditure data for the states of Rhode Island, Massachusetts, Connecticut, and New Hampshire from the National Center for Education Statistics (NCES) that will adequately fund the student instructional needs as described in the basic education program and multiplied by the district average daily membership as defined in section 16-7-22. (RIDE, 2010)

As articulated by State Education Commissioner Deborah Gist:

“Our core instructional amount was based on national research, using data from the NCES, is sufficient to fund the requirements of the Rhode Island Basic Education Program, and it in no way focused on states with low per-pupil expenditures. In fact, we looked particularly carefully at our neighboring states, which have some of the highest per-pupil expenditures in the nation, and we included only those states that have an organizational structure and staffing patterns similar to ours.” (Gist, 2010)

Several points here are worthy of note.

- Like New York officials, Rhode Island officials chose to focus on a reduced spending figure – core instructional spending – rather than a complete current operating spending figure.

- Average core spending of other states can hardly be considered “national research,” and average spending in other states, drawn from national data sources, is hardly indicative of what might be required to achieve Rhode Island’s required outcomes unless the state’s outcomes are also contingent on standards set in other states.

- The data used to set funding targets for school year 2010-11 and beyond come from several years prior.

- New Hampshire is not a neighboring state of Rhode Island.

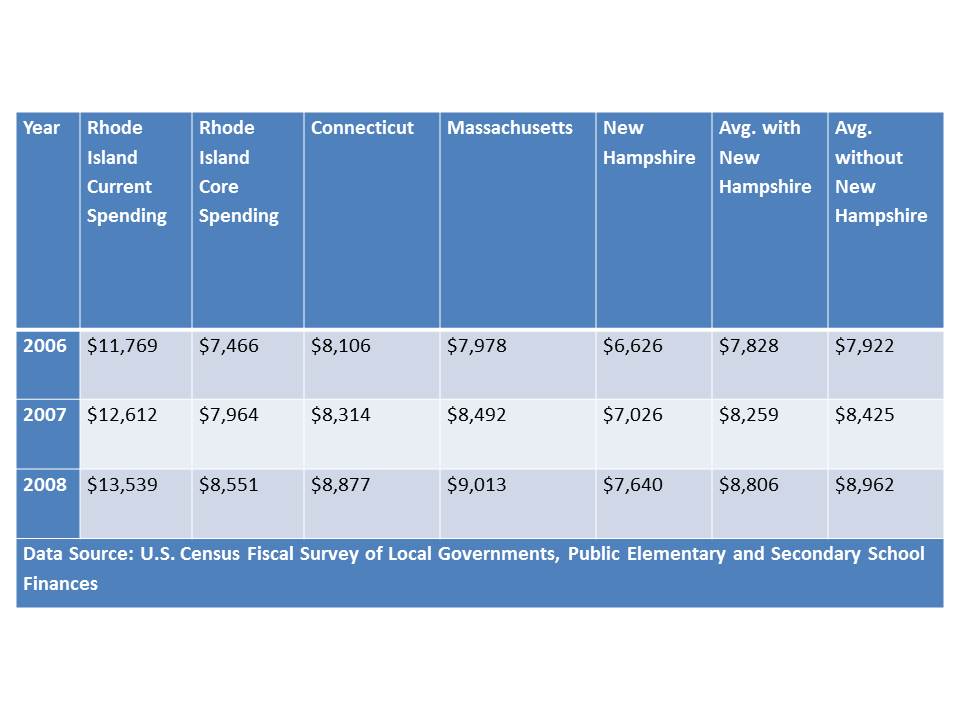

Table 1 shows the effect of including New Hampshire among Rhode Island’s “neighbors” when calculating the basic spending levels. Spending in New Hampshire is substantially lower than in Massachusetts or Connecticut, and thus brings down the average. Notably, spending in Vermont, which is much higher than in New Hampshire, is not included.

Table 1

Eventually, in accordance with their “analyses,” Rhode Island officials proposed a foundation level for 2010-11 and beyond to be set at $8,295 (RIDE, 2010; Wong, 2013). Notably, however, the average spending in Connecticut, Massachusetts and New Hampshire which most closely approximates that figure comes from 2006-07. Further, the 2007-08 Rhode Island average core instructional spending per pupil was already over $8,500, and a more comprehensive measure of current operating spending exceeded $13,000 per pupil.
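The effect of choosing which states count as "neighbors" is simple arithmetic, sketched below. The per pupil values are placeholders invented for this example and should not be read as the NCES figures reported in Table 1. They are chosen only to preserve the ordering described above, with New Hampshire lower than Massachusetts and Connecticut, and Vermont higher than New Hampshire. The function name `comparison_average` is hypothetical.

```python
from statistics import mean

# Placeholder per-pupil core instructional spending by state.
# Invented values for illustration; NOT the NCES figures in Table 1.
CORE_SPENDING = {
    "Rhode Island": 8500,
    "Massachusetts": 8700,
    "Connecticut": 9100,
    "New Hampshire": 7500,
    "Vermont": 8900,
}


def comparison_average(spending, states):
    """Unweighted average of per-pupil spending across the chosen states."""
    return mean([spending[state] for state in states])


# The four states named in the Rhode Island statute.
statutory_group = ["Rhode Island", "Massachusetts", "Connecticut", "New Hampshire"]
# The same group without New Hampshire.
without_nh = ["Rhode Island", "Massachusetts", "Connecticut"]
# The same group with Vermont substituted for New Hampshire.
with_vt = ["Rhode Island", "Massachusetts", "Connecticut", "Vermont"]

avg_statutory = comparison_average(CORE_SPENDING, statutory_group)
avg_without_nh = comparison_average(CORE_SPENDING, without_nh)
avg_with_vt = comparison_average(CORE_SPENDING, with_vt)

print("statutory group:", avg_statutory)
print("without New Hampshire:", round(avg_without_nh, 2))
print("Vermont instead of New Hampshire:", avg_with_vt)
```

Under these assumed values, the choice of comparison group alone moves the target by a few hundred dollars per pupil, before any question about which year of data is used.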

References

Baker, B. D. (2012). Revisiting the Age-Old Question: Does Money Matter in Education?. Albert Shanker Institute.

Baker, B. D. (2009). Private schooling in the US: Expenditures, supply, and policy implications. Boulder and Tempe: Education and the Public Interest Center & Education Policy Research Unit.

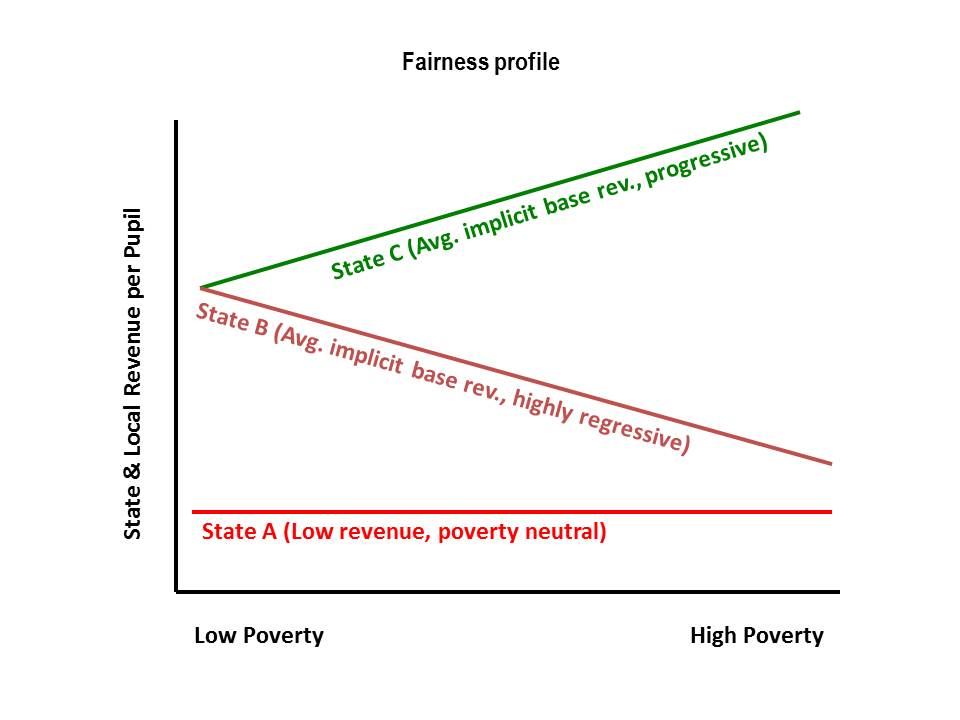

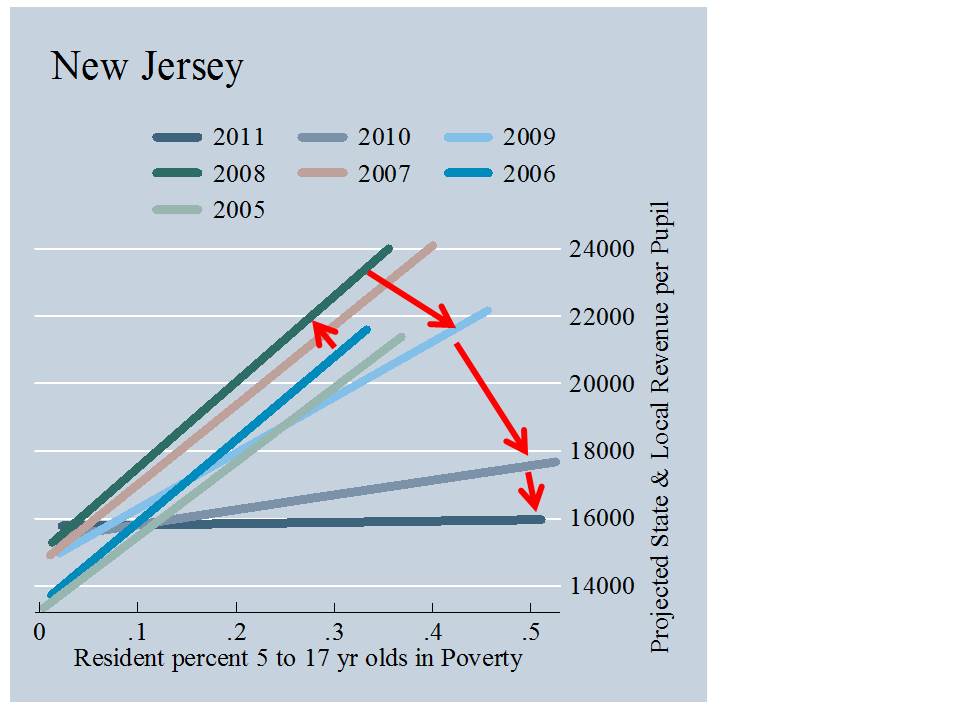

Baker, B. D., & Corcoran, S. P. (2012). The Stealth Inequities of School Funding: How State and Local School Finance Systems Perpetuate Inequitable Student Spending. Center for American Progress.

Baker, B., & Green, P. (2008). Conceptions of equity and adequacy in school finance. Handbook of research in education finance and policy, 203-221.

Baker, B. D., Libby, K., & Wiley, K. (2012). Spending by the Major Charter Management Organizations: Comparing Charter School and Local Public District Financial Resources in New York, Ohio, and Texas. National Education Policy Center.

Baker, B. D., Sciarra, D. G., & Farrie, D. (2012). Is School Funding Fair?: A National Report Card. Education Law Center. http://schoolfundingfairness.org/National_Report_Card_2012.pdf

Baker, B. D., Sciarra, D. G., & Farrie, D. (2010). Is School Funding Fair?: A National Report Card. Education Law Center. http://schoolfundingfairness.org/National_Report_Card.pdf

Baker, B. D., Taylor, L., & Vedlitz, A. (2005). Measuring educational adequacy in public schools (Report prepared for the Texas Legislature Joint Committee on Public School Finance, The Texas School Finance Project).

Baker, B., & Welner, K. G. (2012). Evidence and Rigor: Scrutinizing the Rhetorical Embrace of Evidence-Based Decision Making. Educational Researcher, 41(3), 98-101.

Baker, B.D. & Welner, K.G. (2011a). Productivity Research, the U.S. Department of Education, and High-Quality Evidence. Boulder, CO: National Education Policy Center. Retrieved [date] from http://nepc.colorado.edu/publication/productivity-research.

Baker, B. D., & Welner, K. G. (2011b). School Finance and Courts: Does Reform Matter, and How Can We Tell?. Teachers College Record, 113(11), 2374-2414.

Baker, B., & Welner, K. G. (2010). Premature celebrations: The persistence of inter-district funding disparities. Education Policy Analysis Archives, 18, 9.

Bifulco, R. (2005) District-Level Black-White Funding Disparities in the United States 1987 to 2002. Journal of Education Finance 31 (2) 172-194.

Buras, K. L. (2011). Race, charter schools, and conscious capitalism: On the spatial politics of whiteness as property (and the unconscionable assault on black New Orleans). Harvard Educational Review, 81(2), 296-331.

Clune, W. H. (1994). The shift from equity to adequacy in school finance. Educational Policy, 8(4), 376-394.

Cuomo, A (2011) State of the State. Albany, NY. http://www.governor.ny.gov/sl2/stateofthestate2011transcript

Deslatte, A. (2011) Scott: Anthropology and journalism don’t pay, and neither do capes. Orlando, FL: Orlando Sentinel. October 11, 2011

Downes, T. A. (2004). School Finance Reform and School Quality: Lessons from Vermont. In Yinger, J. (ed), Helping Children Left Behind: State Aid and the Pursuit of Educational Equity. Cambridge, MA: MIT Press.

Duncan, A. (November 17, 2010) The New Normal: Doing More with Less — Secretary Arne Duncan’s Remarks at the American Enterprise Institute. Washington, DC:

Duncombe, W.D., and Johnston, J. (2004). Helping Children Left Behind: State Aid and the Pursuit of Educational Equity. Cambridge, MA: MIT Press.

Freeman, J. (2011) New Jersey’s ‘Failed Experiment’ The new governor is on a mission to make his state competitive again in attracting people and capital. New York, Wall Street Journal. http://online.wsj.com/article/SB10001424052702303348504575184120546772244.html

Gates, W. (2011) Flip the Curve: Student Achievement vs. School Budgets. Huffington Post. http://www.huffingtonpost.com/bill-gates/bill-gates-school-performance_b_829771.html

Gist, D. (2010) National Journal. R.I. Formula Funds Children, Not Systems. http://education.nationaljournal.com/2010/06/a-funding-formula-for-success.php

Imazeki, J., and Reschovsky, A. (2004). Helping Children Left Behind: State Aid and the Pursuit of Educational Equity. Cambridge, MA: MIT Press.

McClure, P., Wiener, R., Roza, M., and Hill, M. (2008). Ensuring equal opportunity in public education: How local school district funding policies hurt disadvantaged students and what federal policy can do about it. Washington, DC: Center for American Progress. Retrieved December 20, 2009 from http://www.americanprogress.org/issues/2008/06/pdf/comparability.pdf

Public Impact; The University of Dayton, School of Education and Allied Professions; and Thomas B. Fordham Institute. (2008, March). Fund the Child: Bringing Equity, Autonomy and Portability to Ohio School Finance How sound an investment? Washington, DC: Thomas B. Fordham Institute. Retrieved December 20, 2009 from http://www.edexcellence.net/doc/fund_the_child_ohio_031208.pdf

New York State Education Department (2011). Fiscal Analysis & Research Unit. Primer on State Aid 2011-2012. http://www.oms.nysed.gov/faru/PDFDocuments/Primer11-12D.pdf

New York State Education Department (2011). Fiscal Analysis & Research Unit. Successful Schools Analysis Technical Report. http://www.oms.nysed.gov/faru/documents/technical_final.doc

Oliff, P., Mai, C., Leachman, M. (2012) New School Year Brings More Cuts in State Funding for Schools. Washington, DC: Center on Budget and Policy Priorities. http://www.cbpp.org/cms/?fa=view&id=3825 Accessed July 23, 2013

RIDE (Rhode Island Department of Education) Division of School Finance (2010) http://www.ride.ri.gov/Finance/Funding/FundingFormula/Docs/H8094Aaa_FINAL_6_10_10.pdf

Roza, M. (2006) “How Districts Short Change Low Income and Minority Students,” in Funding Gaps 2006. Washington, DC: The Education Trust.

Rubenstein, R., Schwartz, A. E., Stiefel, L., and Bel Hadj Amor, H. (2007). From districts to schools: The distribution of resources across schools in big city school districts. Economics of Education Review, 26(5), 532-545.

Stiefel, L., Rubenstein, R., and Berne, R. (1998). Intra-District Equity in Four Large Cities: Data, Methods and Results. Journal of Education Finance, 23(4), 447-467.

U.S. Department of Education, For Each and Every Child—A Strategy for Education Equity and Excellence, Washington, D.C., 2013. http://www2.ed.gov/about/bdscomm/list/eec/equity-excellence-commission-report.pdf

Wong, K. K. (2013). The Design of the Rhode Island School Funding Formula: Developing New Strategies on Equity and Accountability. Peabody Journal of Education, 88(1), 37-47.