The other day, the Center on Reinventing Public Education (CRPE) at the University of Washington released a bold new study claiming that Washington school districts underpay math and science teachers relative to other teachers – which is clearly an abomination in a state that is home to high-tech employers like Boeing and Microsoft.

The study consisted of looking at the average salaries of math and science teachers and other teachers in several large Washington State school districts and showing that in most, the average for math and science teachers is lower than for other teachers. As it turns out, the average experience of math and science teachers is lower and far more of them are in their first five years. So, it’s mainly about the experience differential. The authors infer from this that turnover of math and science teachers must be higher, but never actually test this assumption. They next infer that this turnover must be a function of having less competitive salaries – relative to what they could earn outside of teaching.

The study never calculates the relative turnover of math and science teachers versus other teachers. Rather, it implies that lower average experience levels must be indicative of higher turnover. The only follow-up analysis on this point shows that math and science teachers, in addition to being less experienced, are also younger. Wow! That doesn’t validate the turnover claim, though. The claim may well be true, but nothing in the study tests it.
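
For what it’s worth, checking turnover directly is not hard when you have teacher-level records across years. Here is a minimal sketch of the kind of calculation I have in mind. The record layout, the group labels and the toy data are all made up for illustration, and disappearing from the file is only a rough proxy for leaving teaching.

```python
from collections import defaultdict


def turnover_rate(records, year):
    """Share of teachers present in `year` who are absent in `year + 1`, by group.

    `records` is an iterable of (teacher_id, year, group) tuples. This layout is
    a hypothetical one chosen for illustration, not the state's actual file format.
    """
    present = defaultdict(set)
    for teacher_id, yr, group in records:
        present[yr].add((teacher_id, group))

    next_year_ids = {teacher_id for teacher_id, _ in present[year + 1]}

    counts = defaultdict(lambda: [0, 0])  # group -> [leavers, total]
    for teacher_id, group in present[year]:
        counts[group][1] += 1
        if teacher_id not in next_year_ids:
            counts[group][0] += 1

    return {group: leavers / total for group, (leavers, total) in counts.items()}


# Toy data (made up): teacher "b" does not appear in 2006.
toy_records = [
    ("a", 2005, "math_sci"), ("a", 2006, "math_sci"),
    ("b", 2005, "math_sci"),
    ("c", 2005, "other"), ("c", 2006, "other"),
    ("d", 2005, "other"), ("d", 2006, "other"),
]

rates = turnover_rate(toy_records, 2005)
print(rates)
```

Comparing these rates across fields, year by year, is the test the study skips when it reads turnover off of average experience.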

This is a silly study to begin with, but check out the not-so-subtle difference between the press release and the study itself.

The Press Release

http://www.crpe.org/cs/crpe/view/news/111

The analysis finds that in twenty-five of the thirty largest districts, math and science teachers had fewer years of teaching experience due to higher turnover—an indication that labor market forces do indeed vary with subject matter expertise. The subject-neutral salary schedule works to ignore these differences.

The Study

http://www.crpe.org/cs/crpe/download/csr_files/rr_crpe_STEM_Aug10.pdf

That said, the lower teacher experience levels are indicative of greater turnover among the math and science teaching ranks, lending support to the hypothesis that math and science teachers may have access to more compelling non-teaching opportunities than do their peers. (p. 5)

Both are a stretch, given the thin analysis, but the press release declares outright that turnover is the issue, while the study merely infers without ever testing or validating.

The study goes on to indict paying teachers more for years of experience (because we all know that experience doesn’t matter?) and argues that differential pay by teaching field is the answer. This is an absurd false dichotomy. Even if it is reasonable to differentiate pay by teaching field, that does not mean it is unreasonable to differentiate by experience, or that taking dollars away from experience-based pay is the only way to differentiate by field.

I happen to agree that there exist significant problems with Washington’s statewide teacher salary schedule, and that among other things, math and science teachers in Washington State are disadvantaged on the broader labor market. But the CRPE study does nothing to advance this argument.

Previous work by Lori Taylor of Texas A&M does:

Report on Taylor Study:

http://www.wsipp.wa.gov/rptfiles/08-12-2201.pdf

Taylor Study:

http://www.leg.wa.gov/JointCommittees/BEF/Documents/Mtg11-10_11-08/WAWagesDraftRpt.pdf

The CRPE study goes further to say that the findings indicate that school districts haven’t taken seriously a state policy initiative to increase investment in math and science teaching. So let’s say that the bill to which the CRPE press release refers – House Bill 2621 – really did stimulate districts to step up their efforts to hire more math and science teachers. What would likely happen to math and science teacher average salaries? Well, many new math and science teachers would enter the system. That would alter the experience distribution of math and science teachers – they would likely become less experienced on average – and hence their average salaries would decline and be lower than average salaries in other fields not stimulated by similar initiatives.
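
To make the arithmetic concrete, here is a small sketch with invented numbers. The two-step salary schedule and the headcounts are hypothetical and not drawn from any Washington district. Both fields are paid on the same schedule, and the only thing that changes is a wave of new math and science hires.

```python
def average_salary(headcount_by_band, salary_by_band):
    """Headcount-weighted average salary across experience bands."""
    total_pay = sum(n * salary_by_band[band] for band, n in headcount_by_band.items())
    total_staff = sum(headcount_by_band.values())
    return total_pay / total_staff


# Hypothetical subject-neutral schedule: pay depends only on experience.
schedule = {"novice": 40_000, "veteran": 60_000}

# Hypothetical headcounts. Both fields start out with the same experience mix.
other_fields = {"novice": 20, "veteran": 80}
math_sci_before = {"novice": 20, "veteran": 80}
# A hiring push adds 40 novice math/science teachers; nobody's pay is cut.
math_sci_after = {"novice": 60, "veteran": 80}

avg_other = average_salary(other_fields, schedule)
avg_before = average_salary(math_sci_before, schedule)
avg_after = average_salary(math_sci_after, schedule)

print(avg_other)         # 56000.0
print(avg_before)        # 56000.0
print(round(avg_after))  # 51429
```

In this toy case no individual teacher is paid less than a peer with the same experience, yet the math and science average drops well below the average for other fields simply because the district hired more of them.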

When I get a chance, I’ll try to play around with my Washington teacher data set and post some follow-up analyses.

Kevin Welner and I point to similar misrepresentations of findings in several reports from this same center in this article on within- and between-district financial disparities:

Baker, B. D., & Welner, K. G. (2010). “Premature celebrations: The persistence of interdistrict funding disparities.” Education Policy Analysis Archives, 18(9). Retrieved [date] from http://epaa.asu.edu/ojs/article/view/718

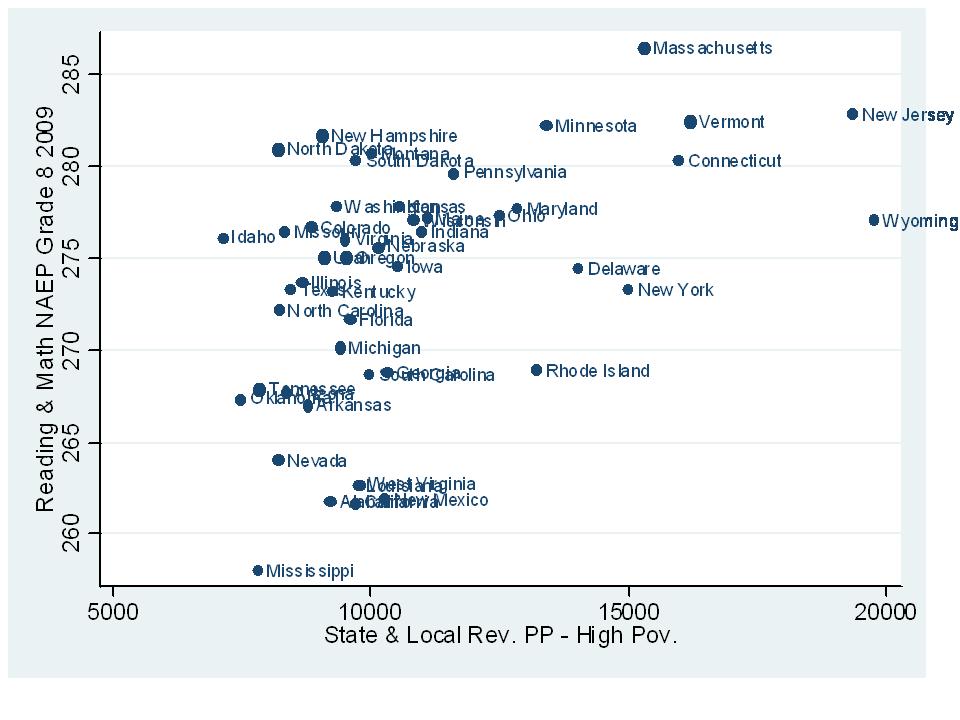

And now, for some fun follow-up figures:

These figures use individual teacher-level data from the State of Washington. I include all teachers holding “secondary” assignments and identify teachers certified to teach biology, chemistry, physics, general science and math (and all subcategories) using the certification record files on the same teachers. Note that some teachers in the data set hold multiple assignments, so the total number of cases in these graphs is not an exact match for the total number of individual teachers. I haven’t asked for Washington teacher data for a few years, so these only go up to 2006-07. Unlike the CRPE report, which cherry-picks 30 districts, I use the whole state. If I get a chance, I’ll play with some other cuts at the data. These data don’t coincide at all with the CRPE “findings.”

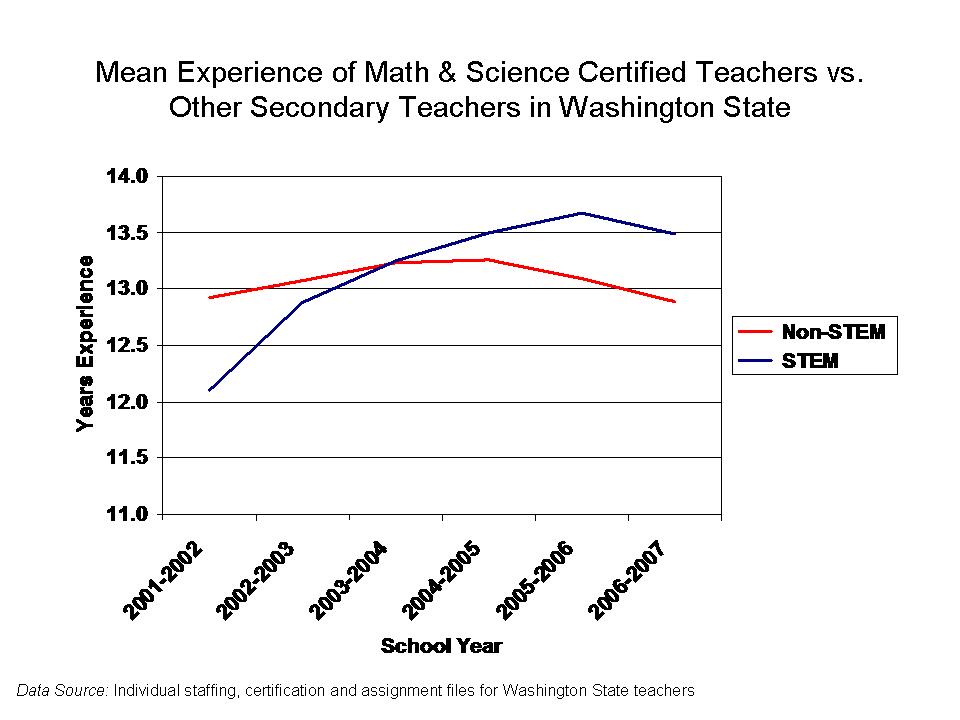

Here are the experience differences:

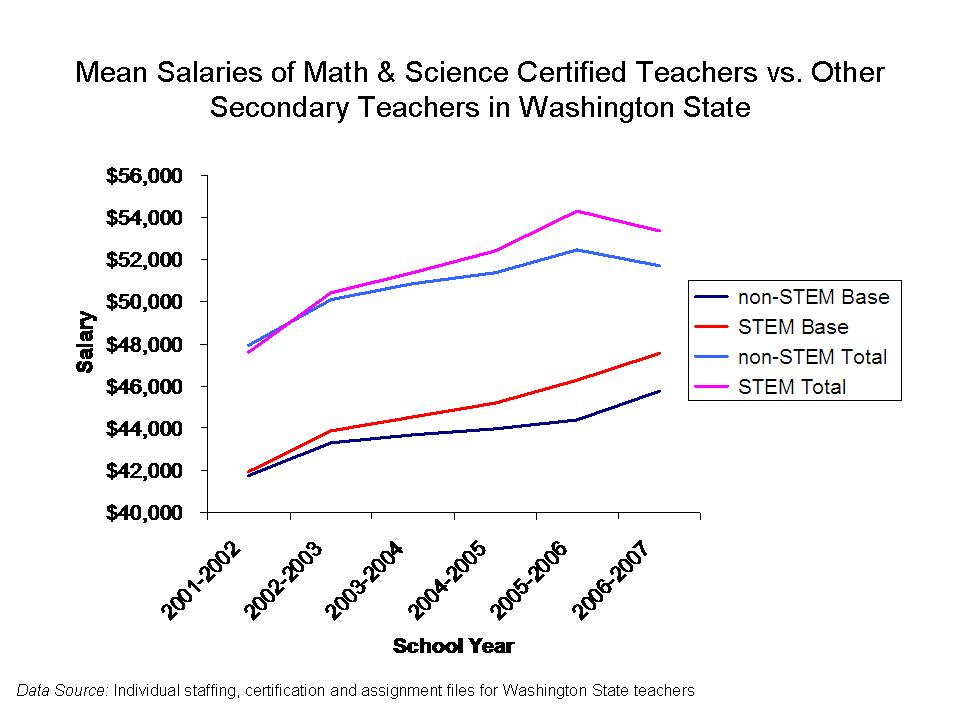

Here are the salary differences, on average, which coincide with the experience differences:

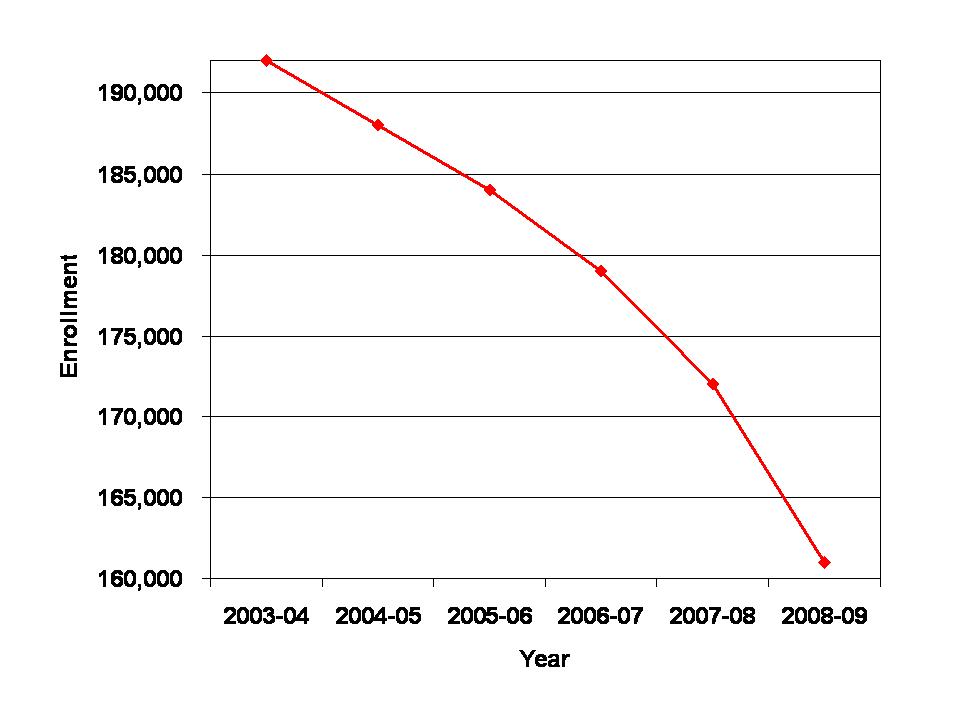

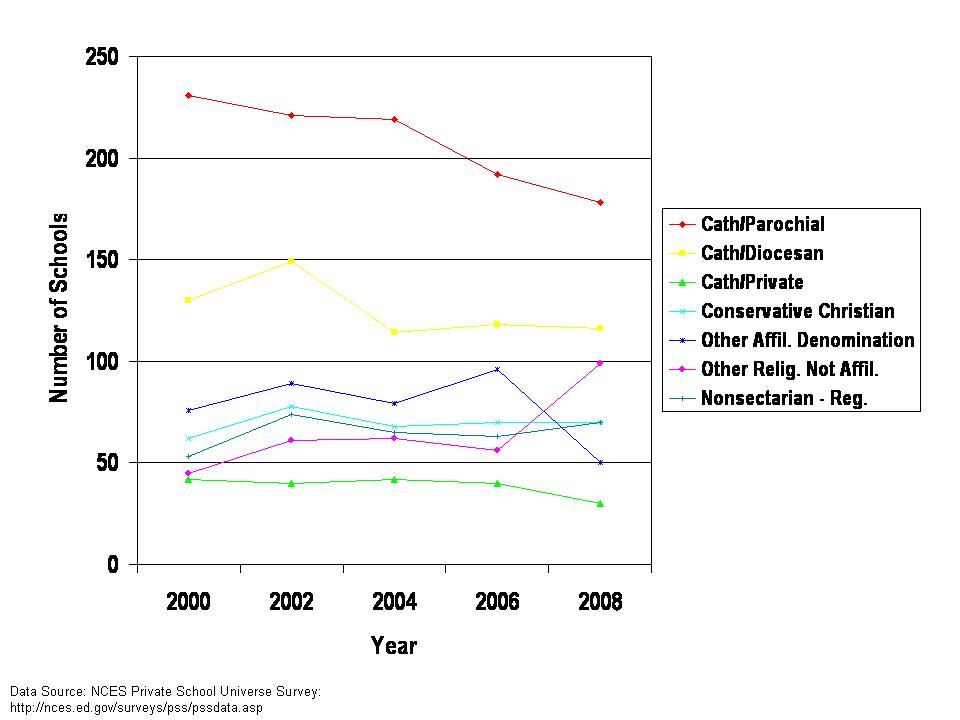

Now, here are the total numbers of teachers, and the apparent decline in the share that are math/science certified over this time period. The number of math/science teachers stayed relatively flat, while the number of other teachers grew.

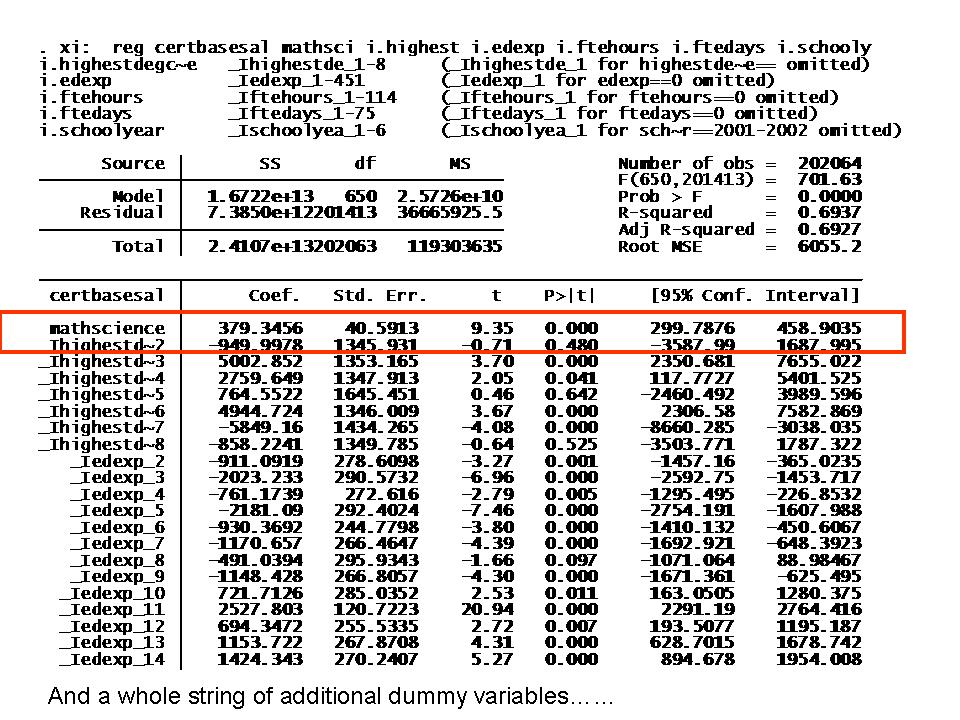

Finally, here’s a portion of the regression model of certified base salaries, where I control for degree level, experience, year, hours per day and days per year, all of which influence salaries. Interestingly, this regression shows that math and science teachers, holding all that other stuff constant, made about $380 more than non-math/science teachers, even under the fixed salary schedule.
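
For readers who want to see why the raw averages and the regression can point in opposite directions, here is a stripped-down sketch. It is not my actual model: it controls only for experience, the eight teachers are invented, and the $380 premium is planted in the toy salaries rather than estimated from real records. The small least-squares helper is written out by hand only to keep the example self-contained.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def ols(rows, y):
    """Least-squares coefficients from the normal equations (X'X) b = X'y."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def mean(values):
    return sum(values) / len(values)


# Invented teachers: (years of experience, 1 if math/science certified else 0).
teachers = [(1, 1), (2, 1), (3, 1), (10, 1), (5, 0), (10, 0), (15, 0), (20, 0)]

# Hypothetical schedule: $35,000 base, $1,000 per year, plus a planted $380 premium.
salaries = [35_000 + 1_000 * exp + 380 * ms for exp, ms in teachers]

# Raw difference in average salary, ignoring experience.
raw_gap = (mean([s for s, (_, ms) in zip(salaries, teachers) if ms == 1])
           - mean([s for s, (_, ms) in zip(salaries, teachers) if ms == 0]))

# Regression with an intercept, experience, and the math/science indicator.
design = [[1.0, float(exp), float(ms)] for exp, ms in teachers]
intercept, per_year, math_sci_premium = ols(design, salaries)

print(round(raw_gap))           # negative: the less experienced group earns less on average
print(round(math_sci_premium))  # recovers the planted premium once experience is held constant
```

The raw gap is large and negative because the invented math and science teachers are much less experienced, while the coefficient on the math/science indicator comes back positive once experience is in the model. That is the same pattern as in the real data, just in miniature.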