This post is partly in response to the Brookings Institution report released this week, which urged that value-added measures be considered in teacher evaluation:

http://www.brookings.edu/~/media/Files/rc/reports/2010/1117_evaluating_teachers/1117_evaluating_teachers.pdf

However, this post is more targeted at the punditry that has followed the Brookings report – the punditry that now latches onto this report as a significant endorsement of using value-added ratings as a major component in high-stakes personnel decisions. Personally, I didn’t read it that way. Nowhere did I see this report arguing strongly for a substantial emphasis on value-added measures. That said, I felt that the report based its rather modest conclusions on three deeply flawed arguments.

Argument 1 – Other methods of teacher evaluation are ineffective at determining good versus bad teachers because those methods are only weakly correlated with value-added measures.

Or, in other words, current value-added measures, while only weak predictors of future value-added, are still stronger predictors of future value-added (using the same measures and models) than other indirect measures of teacher quality such as experience or principal evaluations.

This logic undergirds the quality-based dismissal example in the recent Brookings report, which is based on an earlier Calder Center paper (www.caldercenter.org). That paper showed that if one dismisses teachers based on VAM, future predicted student gains are higher than if one dismisses teachers based on experience (or seniority). The authors point out that less experienced teachers are scattered across the full range of effectiveness (as measured by VAM), and therefore dismissing teachers on the basis of experience leads to dismissal of both good and bad teachers, again as measured by VAM. By contrast, teachers with low value-added are invariably low value-added – BY DEFINITION. Therefore, dismissing on the basis of low value-added leaves more high value-added teachers in the system, including more teachers who show high value-added in later years (current value-added is more correlated with future value-added than experience is).

It is assumed in this simulation that VAM (based on a specific set of assessments and model specification) produces the true measure of teacher quality, both as the basis for current teacher dismissals and as the basis for judging whether dismissing on VAM beats dismissing on experience.

The authors similarly write off principal evaluations of teachers as ineffective because they too are less correlated with value-added measures than value-added measures are with themselves.

Might I argue the opposite? That value-added measures are flawed because they only weakly predict which teachers we know, by observation, are good and which ones we know are bad? A specious argument, but no more specious than its inverse.

The circular logic here is, well, problematic. Of course, if we measure the effectiveness of the policy decision in terms of VAM, making the policy decision based on VAM (using the same model and assessments) will produce the more highly correlated outcome – correlated with VAM, that is.

However, it is quite likely that if we simply used different assessment data or a different VAM model specification to evaluate the results of the alternative dismissal policies, we might find neither VAM-based dismissal nor experience-based dismissal better or worse than the other.

For example, Corcoran, Jennings, and Beveridge analyzed the same teachers on two different tests in Houston, Texas, finding:

…among those who ranked in the top category (5) on the TAKS reading test, more than 17 percent ranked among the lowest two categories on the Stanford test. Similarly, more than 15 percent of the lowest value-added teachers on the TAKS were in the highest two categories on the Stanford.

- Corcoran, Sean P., Jennifer L. Jennings, and Andrew A. Beveridge. 2010. “Teacher Effectiveness on High- and Low-Stakes Tests.” Paper presented at the Institute for Research on Poverty summer workshop, Madison, WI.

So, what would happen if we ran a simulation of “quality-based” layoffs versus experience-based layoffs using the Houston data, where the quality-based layoffs were based on a VAM model using the Texas assessment (TAKS), but we then evaluated the effectiveness of the layoff alternatives using a value-added model of Stanford Achievement Test data? Arguably the odds would still be stacked in favor of VAM predicting VAM, even with a different VAM measure (and perhaps a different model specification). But I suspect the results would be much less compelling than the original simulation.

The results under this alternative approach may, however, be reduced entirely to noise, meaning that the VAM-based layoffs would be the equivalent of random firings: names drawn from a hat, poorly if at all correlated with the outcome measure estimated by a different VAM. Neither VAM-based nor experience-based firing would then be a much better predictor of future value-added. But for all its flaws, I’d take the experience-based dismissal policy over the roll-of-the-dice, randomized firing policy any day.
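To make the circular-logic point concrete, here is a minimal Python sketch of the thought experiment above. It is not a reproduction of the Calder Center analysis and uses no Houston data. It assumes, purely for illustration, that each value-added score equals latent teacher quality plus a persistent component specific to the test plus yearly noise, and that experience is unrelated to quality. The function name `simulate_dismissal` and every variance parameter are hypothetical. With the defaults, the same-test year-to-year correlation is 0.3 and the cross-test correlation is 0.1.

```python
import random
from statistics import fmean

def simulate_dismissal(n=100_000, share=0.10, var_true=0.1,
                       var_test=0.2, var_noise=0.7, seed=2010):
    """Compare VAM-based and experience-based dismissal in a HYPOTHETICAL model:
    score on test X in year t = true quality + test-X component + yearly noise.
    Defaults give corr(same test, next year) = 0.3 and corr(test A, test B) = 0.1.
    """
    rng = random.Random(seed)
    sd_true, sd_test, sd_noise = var_true ** 0.5, var_test ** 0.5, var_noise ** 0.5
    teachers = []
    for _ in range(n):
        q = rng.gauss(0, sd_true)   # latent "true" teacher quality (assumed)
        a = rng.gauss(0, sd_test)   # persistent component specific to test A
        b = rng.gauss(0, sd_test)   # persistent component specific to test B
        teachers.append((
            q + a + rng.gauss(0, sd_noise),  # 0: test-A VAM, year 1 (used to dismiss)
            q + a + rng.gauss(0, sd_noise),  # 1: test-A VAM, year 2 (same test)
            q + b + rng.gauss(0, sd_noise),  # 2: test-B VAM, year 2 (different test)
            rng.uniform(0, 30),              # 3: experience, assumed unrelated to quality
        ))
    k = int(n * share)  # number dismissed under either policy

    def retained(col):
        # Dismiss the k teachers with the lowest value in column `col`.
        return sorted(teachers, key=lambda t: t[col])[k:]

    by_vam, by_experience = retained(0), retained(3)

    def advantage(col):
        # How much higher the retained pool scores on outcome `col` under
        # VAM-based dismissal than under experience-based dismissal.
        return (fmean(t[col] for t in by_vam)
                - fmean(t[col] for t in by_experience))

    return {"same_test": advantage(1), "different_test": advantage(2)}

result = simulate_dismissal()
print(f"advantage judged by the same test:    {result['same_test']:.3f}")
print(f"advantage judged by a different test: {result['different_test']:.3f}")
```

Under these normality assumptions the retained pool's advantage on an outcome is proportional to that outcome's correlation with the dismissal measure, so the advantage judged on the same test should come out roughly three times the advantage judged on the other test. Calling `simulate_dismissal(var_true=0.0, var_test=0.3)`, where all persistent signal is test-specific, pushes the cross-test advantage toward zero: the "drawn from a hat" case.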

Argument 2 – We should be unconcerned about high misclassification errors (falsely identifying good teachers as bad, and thereby doing random harm to teachers); rather, we should be concerned that current methods falsely identify bad teachers as good, doing lifelong harm to kids.

The Brookings report argues:

Much of the concern and cautions about the use of value-added have focused on the frequency of occurrence of false negatives, i.e., effective teachers who are identified as ineffective. But framing the problem in terms of false negatives places the focus almost entirely on the interests of the individual who is being evaluated rather than the students who are being served. It is easy to identify with the good teacher who wants to avoid dismissal for being incorrectly labeled a bad teacher. From that individual’s perspective, no rate of misclassification is acceptable. However, an evaluation system that results in tenure and advancement for almost every teacher and thus has a very low rate of false negatives generates a high rate of false positives, i.e., teachers identified as effective who are not. These teachers drag down the performance of schools and do not serve students as well as more effective teachers.

Again, the claim that current evaluations misidentify teachers rests on the assumption that the value-added measure is a true measure of teacher quality. That is, we know current evaluations are bad because many teachers get tenure but have bad value-added ratings. And we know value-added ratings are good because some teachers who had good value-added ratings at one point, under one type of value-added model applied to one type of assessment, also have good value-added ratings later using the same model specification applied to similar or identical testing data.

Setting that circular logic issue aside, we are faced with the moral dilemma I posed in an earlier post. This argument is all about the “adults vs. kids” issue, and the assumption that if it’s really about the kids, it can’t be at all about the adults in the system, and vice versa. The reality, however, is that a system that is a great workplace for adults can translate to a better educational setting for children, and a system that creates a divisive, negative workplace for the adults is unlikely to translate to a better educational setting for the kids. It’s more likely a both/and than an either/or situation.

I explained previously:

I guess that one could try to dismiss those moral, ethical and legal concerns regarding wrongly dismissing teachers by arguing that if it’s better for the kids in the end, then wrongly firing 1 in 4 average teachers along the way is the price we have to pay. I suspect that’s what the pundits would argue – since it’s about fairness to the kids, not fairness to the teachers, right? Still, this seems like a heavy toll to pay, an unnecessary toll, and quite honestly, one that’s not even that likely to work even in the best of engineered circumstances.

Too often overlooked in these analyses is the question of who will really want to teach in an education system where the chance of having one’s career cut short by random statistical error is quite high. Who will be waiting in line? What kind of workplace will that create? And can we really expect average teaching quality to improve as a result?
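The two kinds of error can be sketched under an explicitly hypothetical model: a measured score equals true quality plus independent noise, scaled so that the correlation between measured and true quality equals a chosen `reliability`. The sketch dismisses the lowest 10% by measured score and reports two rates: the share of dismissed teachers who are actually above the median (the false negatives the Brookings passage sets aside) and the share of the truly weakest 10% who are retained (its false positives). The function name and parameter values are illustrative assumptions, not estimates from any study. Under this model, two years of scores that correlate at r would imply a reliability of about the square root of r.

```python
import random

def dismissal_errors(reliability, share=0.10, n=100_000, seed=11):
    """HYPOTHETICAL model: measured = true quality + noise, with the noise scaled
    so that corr(measured, true) == reliability (0 < reliability <= 1).
    Dismiss the lowest `share` by measured score and return
    (share of dismissed who are above the true median,
     share of the truly lowest `share` who are retained)."""
    rng = random.Random(seed)
    true = [rng.gauss(0, 1) for _ in range(n)]
    # corr(T, T + e) = 1 / sqrt(1 + var(e)), so var(e) = 1 / reliability**2 - 1.
    noise_sd = (1 / reliability ** 2 - 1) ** 0.5
    measured = [t + noise_sd * rng.gauss(0, 1) for t in true]

    k = int(n * share)
    dismissed = set(sorted(range(n), key=lambda i: measured[i])[:k])
    truly_lowest = set(sorted(range(n), key=lambda i: true[i])[:k])
    true_median = sorted(true)[n // 2]

    # "False negatives": dismissed teachers whose true quality is above the median.
    above_median_dismissed = sum(true[i] > true_median for i in dismissed) / k
    # "False positives": truly lowest-quality teachers who were kept.
    lowest_retained = len(truly_lowest - dismissed) / k
    return above_median_dismissed, lowest_retained

for rho in (0.5, 0.7, 0.9):
    fired, kept = dismissal_errors(rho)
    print(f"reliability {rho}: {fired:.1%} of dismissed are above the median; "
          f"{kept:.1%} of the truly lowest 10% are retained")
```

Both error rates fall as reliability rises. At the low end of the range, a nontrivial share of the teachers dismissed are better than the median teacher, which is the "random statistical error" the paragraph above worries about.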

Argument 3 – Other professions use “weak” indicators or signals of performance, like using the SAT for college admission or using patient mortality rates to evaluate hospital quality.

The Brookings report argues:

It is instructive to look at other sectors of the economy as a gauge for judging the stability of value-added measures. The use of imprecise measures to make high stakes decisions that place societal or institutional interests above those of individuals is wide-spread and accepted in fields outside of teaching.

Examples from Brookings:

In health care, patient volume and patient mortality rates for surgeons and hospitals are publicly reported on an annual basis by private organizations and federal agencies and have been formally approved as quality measures by national organizations.

The correlation of the college admission test scores of college applicants with measures of college success is modest (r = .35 for SAT combined verbal + math and freshman GPA[9]). Nevertheless nearly all selective colleges use SAT or ACT scores as a heavily weighted component of their admission decisions even though that produces substantial false negative rates (students who could have succeeded but are denied entry).

On its face, the argument that other professions’ use of weak indicators is reason for public education to do the same is absurd. Moreover, this argument presents as a given, with very weak justification and a handful of cherry-picked citations, that these weak signals play a significant role in high-stakes decision-making. A more thorough review of the healthcare policy literature in particular raises many of the same concerns we hear in the education debate over institutional and individual performance measures: precision, accuracy and incentives. There is also a similar divide in perspective between healthcare policy wonks and management organizations on one side and physicians on the other regarding the accuracy and usefulness of the indicators and the incentives created by specific measures.

Many pundits out there tweeting and blogging about this new Brookings report are the same pundits who continue to argue that value-added ratings should constitute as much as 50% of teacher evaluation – and that somehow this new Brookings report validates their claim. I don’t see where the Brookings report goes anywhere near that far.

To those viewing the Brookings report in that light: implicit in the “other sectors do it” argument is that the SAT and mortality rates are considered major factors in admitting students or in evaluating hospital quality. Are they really? In an era when more and more colleges are making the SAT optional, how many are using it as 50% of their admissions criteria? Yes, most highly selective colleges still require the SAT, and it no doubt serves as a tipping factor in admissions decisions (largely out of convenience when taking the first cut at a large applicant pool). But several have abandoned the SAT altogether (http://www.fairtest.org/university/optional), perhaps because it is perceived to be such a weak signal, or because of all the perverse incentives and inequities associated with it. Would anyone seriously consider counting patient mortality rates alone as 50% of a hospital quality rating used to decide hospital closures?

Then there’s the batting average comparison. The authors argue that past batting average is only a weak predictor of future batting average, but is clearly still important in player personnel decisions. But what percentage does batting average count for? Does a baseball GM consider which pitchers the batter went up against that season? Would batting average count for, say, 50% of the decision: an absolute, fixed share, a deal breaker? It may be important, but it’s one of many, many statistics, most of which are likely considered in context, in a very flexible decision framework (more art than science?).

And then there’s the issue of the incentives created by emphasizing a specific measure. What may be fine in baseball may not be in healthcare or education!

Let’s say we put this much emphasis on batting average. What is a player to do? How can the player improve his worth? By getting more hits, or by getting traded to a team in a division with poor pitching… to get more hits? Neither is a deeply problematic incentive. There’s not much downside to improving one’s batting average (setting aside the role of performance-enhancing drugs and all that). Besides, it’s a freakin’ game! A game that involves gaming the system, and one based largely on an obsession with statistics and on trying to figure out which ones are and aren’t meaningful.

The SAT is different. Much has been made of the perverse incentives, including the increased classification of students from higher-income families for accommodations that let them take an untimed SAT (http://www.slate.com/id/2141820/), and the entire industries that have emerged around the emphasis on SAT scores for college admission, reinforcing socio-economic disparities in SAT performance. Even if the SAT could be a reasonable indicator, its usefulness has been distorted and significantly compromised by these incentives and the resulting behaviors. Hence the decision by many colleges to make the test optional.

What about hospitals trying to reduce mortality rates? Turning away the sickest, highest risk patients and most complex cases is one option. Unlike the batting average measure, there is a significant downside to this one. Those with the greatest needs don’t get served!
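A small worked example, with made-up numbers, shows why a raw mortality rate rewards turning patients away. Both hypothetical hospitals below have identical death rates within each risk group (2% for low-risk and 20% for high-risk patients); only the case mix differs. The function name and all figures are illustrative assumptions.

```python
from fractions import Fraction

def mortality_rate(groups):
    """Overall mortality for a list of (patients, deaths) pairs, one per risk group."""
    patients = sum(p for p, _ in groups)
    deaths = sum(d for _, d in groups)
    return Fraction(deaths, patients)

# Hypothetical case mix; same within-group death rates at both hospitals.
serves_everyone = [(800, 16), (200, 40)]   # (low-risk), (high-risk)
turns_away_sickest = [(800, 16)]           # high-risk patients sent elsewhere

print(float(mortality_rate(serves_everyone)))     # 0.056
print(float(mortality_rate(turns_away_sickest)))  # 0.02
```

The second hospital's rate looks almost three times better even though no patient received better care; the only change is who was admitted.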

To argue that these supposedly analogous measures are widely accepted in healthcare as a basis for high-stakes decisions is a foolish stretch. The Brookings report’s citations to sources that report healthcare indicators (such as http://www.hospitalcompare.hhs.gov/) point to something more analogous to the school report cards that already exist on state department of education websites (with some survey responses added), not to the publication of individual teacher value-added ratings as done earlier this year by the LA Times. Comparable information, and comparably useless information, is already widely available on public schools through both government sources and a plethora of private vendors. For that matter, consumer ratings of individual teachers are also available through sources like www.ratemyteacher.com.

To wrap this up…

Do I think value-added measures are useless? No! Do I think they should be used to evaluate and compare individual teachers and should be used as a significant factor in employment decisions? No! But, there is likely still much that can be learned from studying different approaches to value-added modeling, developing more useful assessment instruments, using these instruments and models to better understand what works in schools and what doesn’t. Clearly we need to learn how to use data more thoughtfully to inform school improvement efforts. Quite honestly, I believe many already are.

Follow-up response to Chad Aldeman’s critique of my argument:

You (Chad) seem to have missed the point, which I perhaps did not make clear enough. Indeed, if we could measure precisely what we wanted to measure, and that measure predicted itself, we’d be in great shape. The problem is that we can’t measure precisely what we think we want to measure.

Given the same assessment instrument and value-added model parameters, we get a .2 to .3 correlation from year to year (possibly partly a function of non-random assignment of students to teachers).
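What does a year-to-year correlation in the .2 to .3 range mean for individual rankings? A minimal sketch, assuming (for illustration only) that two years of scores are bivariate normal with correlation `r`, estimates the share of teachers in the top quintile one year who land in the bottom two quintiles the next. With `r = 0` the answer is 40% by pure chance. The function name and simulation settings are hypothetical.

```python
import random

def top_to_bottom_share(r, n=100_000, seed=7):
    """Share of year-1 top-quintile teachers who fall into the bottom two
    quintiles in year 2, when the two years' scores are ASSUMED bivariate
    normal with correlation r (illustrative, not real VAM data)."""
    rng = random.Random(seed)
    y1 = [rng.gauss(0, 1) for _ in range(n)]
    # Year-2 score = r * year-1 score + independent noise, so corr(y1, y2) = r.
    y2 = [r * x + (1 - r * r) ** 0.5 * rng.gauss(0, 1) for x in y1]
    top_cut = sorted(y1)[int(0.8 * n)]      # 80th percentile of year 1
    bottom_cut = sorted(y2)[int(0.4 * n)]   # 40th percentile of year 2
    top = [b for a, b in zip(y1, y2) if a >= top_cut]
    return sum(b < bottom_cut for b in top) / len(top)

for r in (0.0, 0.2, 0.3):
    print(f"r = {r:.1f}: {top_to_bottom_share(r):.1%} of top-quintile teachers "
          f"fall to the bottom two quintiles")
```

Under these assumptions, a correlation of .2 to .3 lowers that share only part of the way from the 40% chance level; it does not come close to zero.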

Given different assessments in the same year, Sean Corcoran found:

…among those who ranked in the top category (5) on the TAKS reading test, more than 17 percent ranked among the lowest two categories on the Stanford test. Similarly, more than 15 percent of the lowest value-added teachers on the TAKS were in the highest two categories on the Stanford.

- Corcoran, Sean P., Jennifer L. Jennings, and Andrew A. Beveridge. 2010. “Teacher Effectiveness on High- and Low-Stakes Tests.” Paper presented at the Institute for Research on Poverty summer workshop, Madison, WI.

So… what this calls into question is whether we really are measuring what we want to measure. Are we able to precisely determine that a math teacher is good at teaching math regardless of the students they have, the year they have them, the test we use to measure it, or even the scaling of the test?

When we measure points per game, we know what we are measuring. I’m curious now about the correlation between points per game and win/loss, or even a championship season… or annual revenue, since that’s the end goal… but I digress.

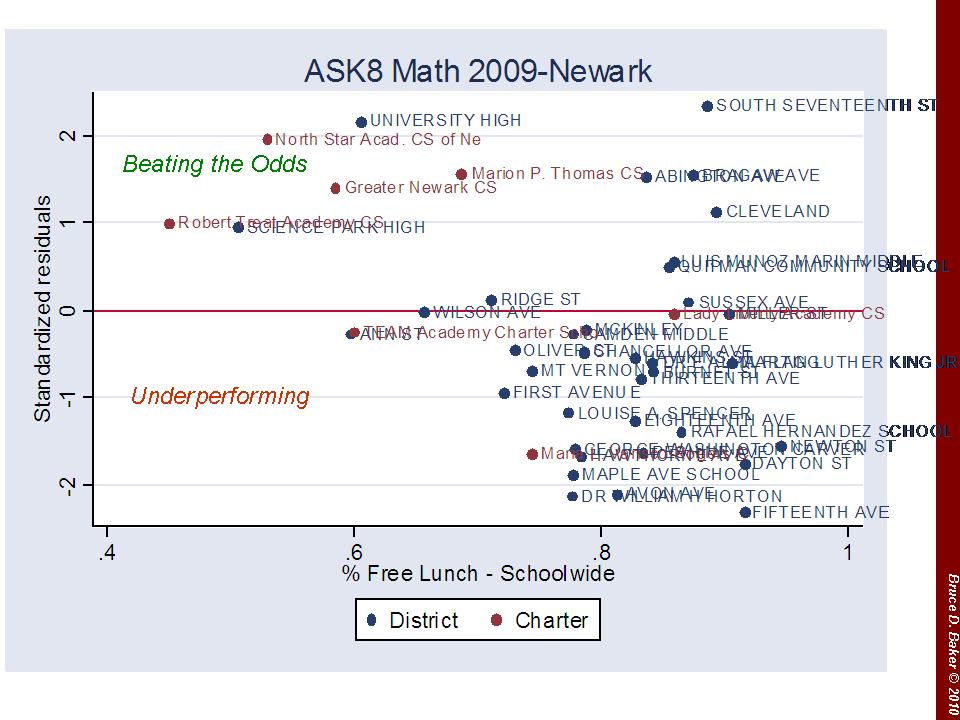

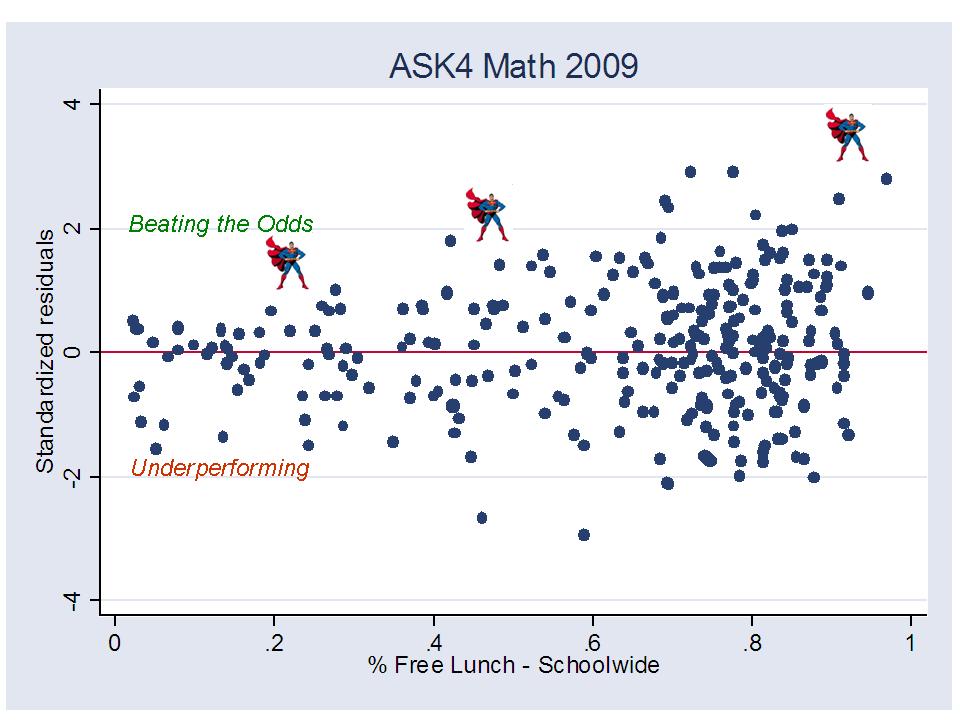

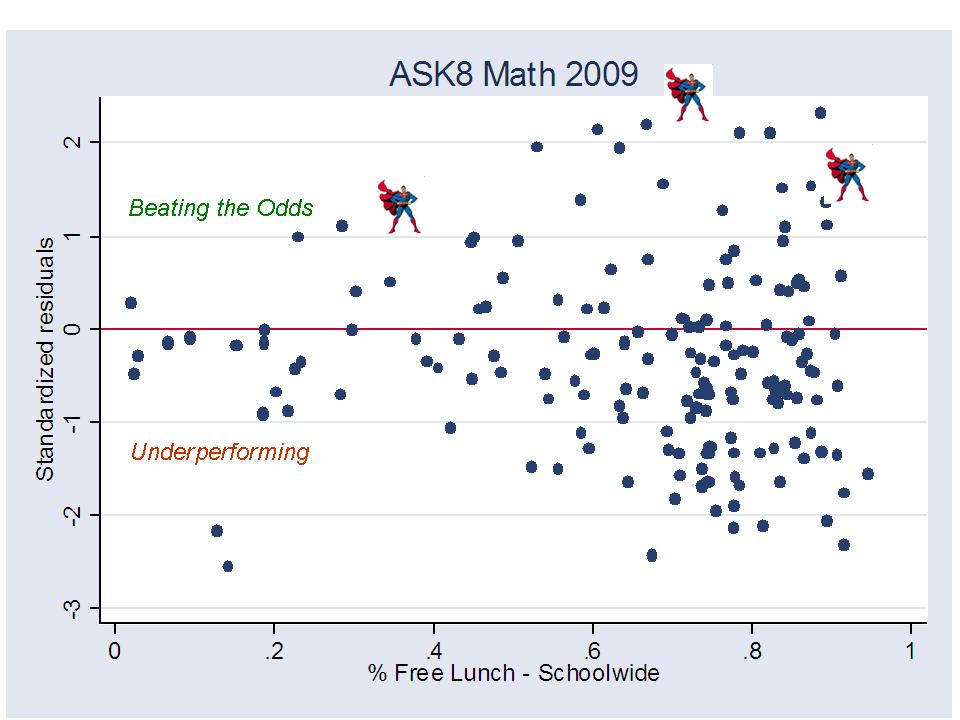

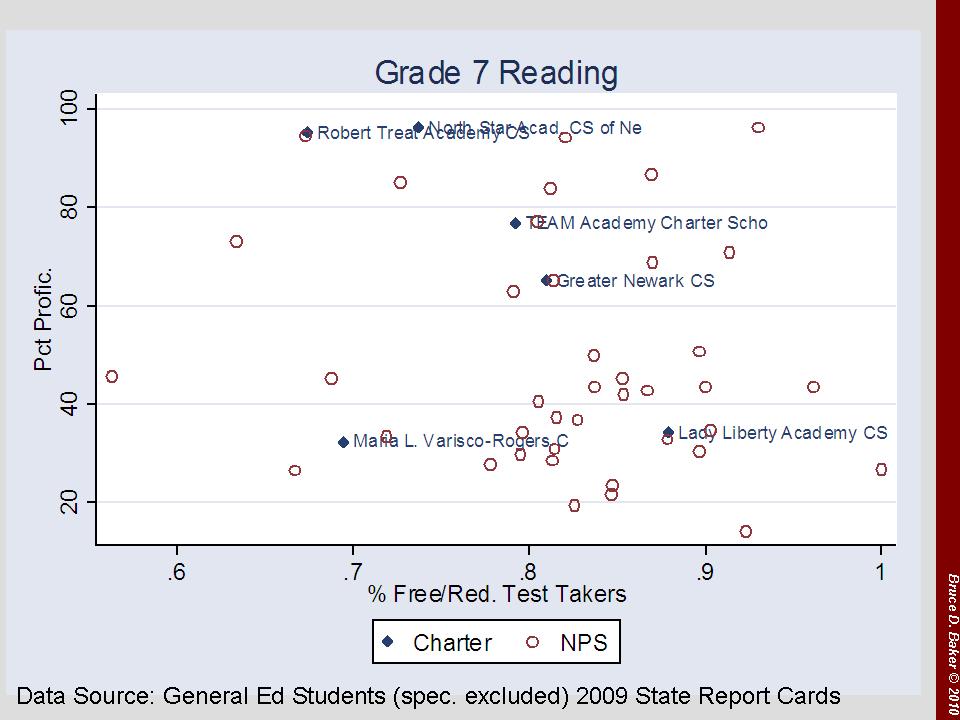

Now, here’s the part that sets North Star and a few others apart – at first in a seemingly good way…

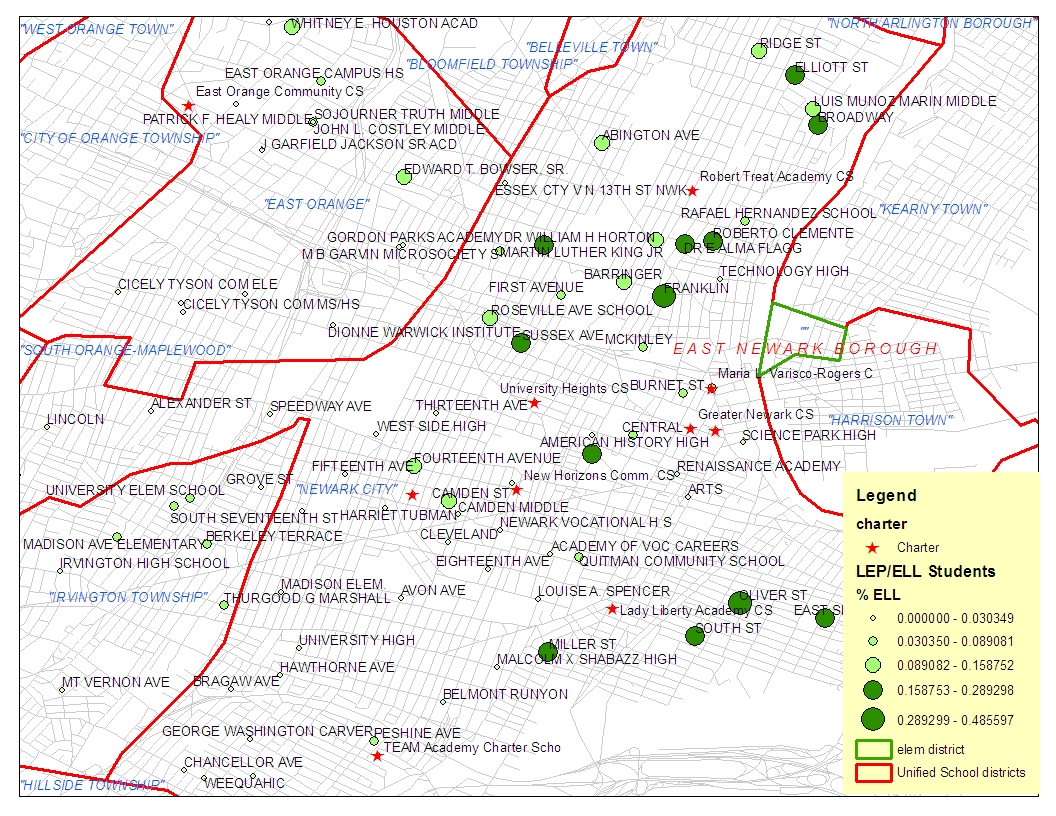

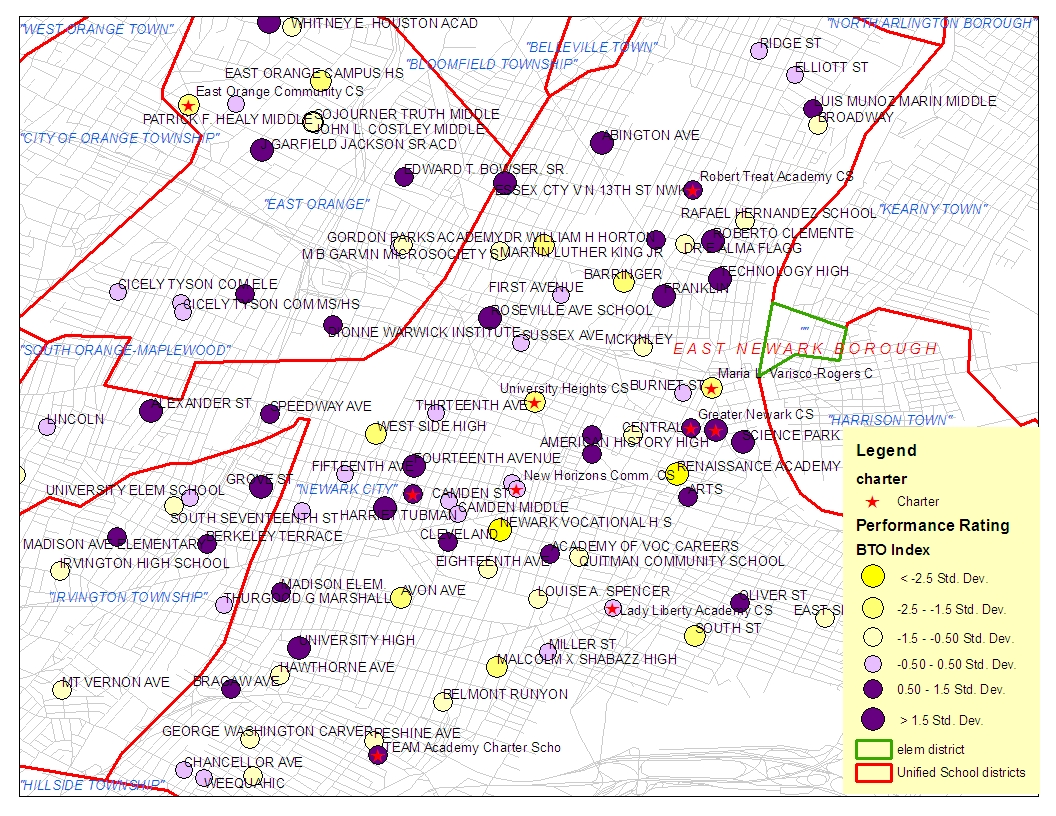

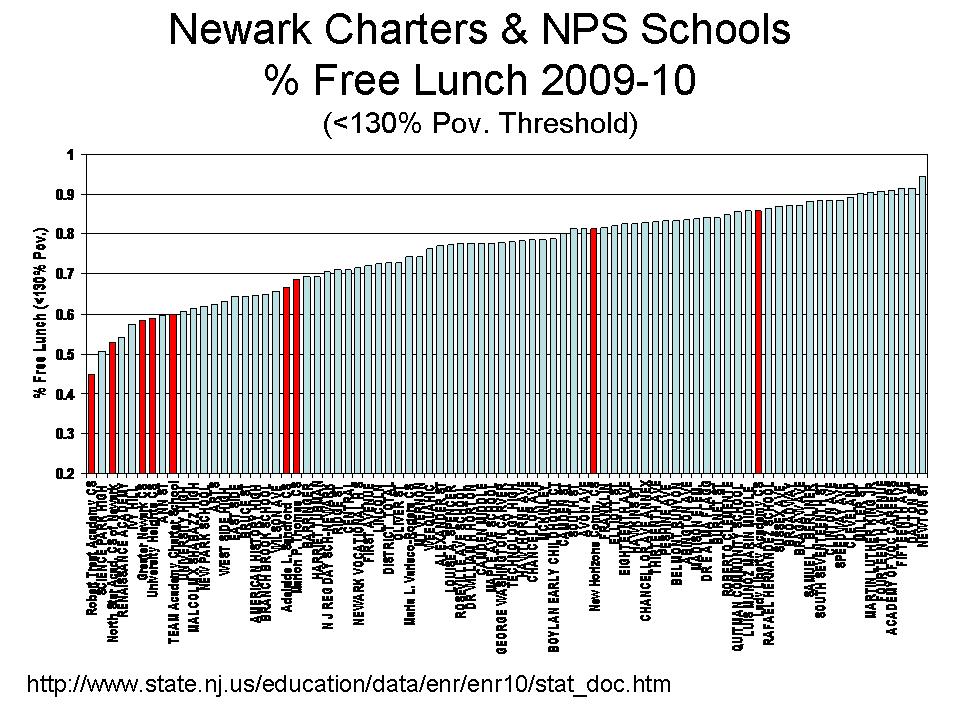

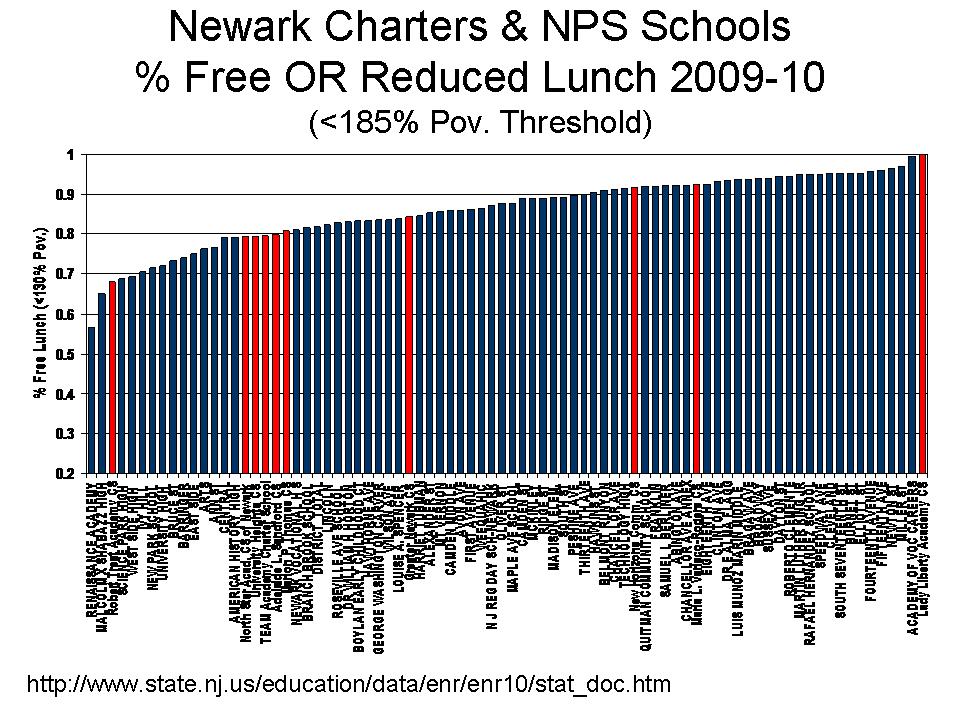

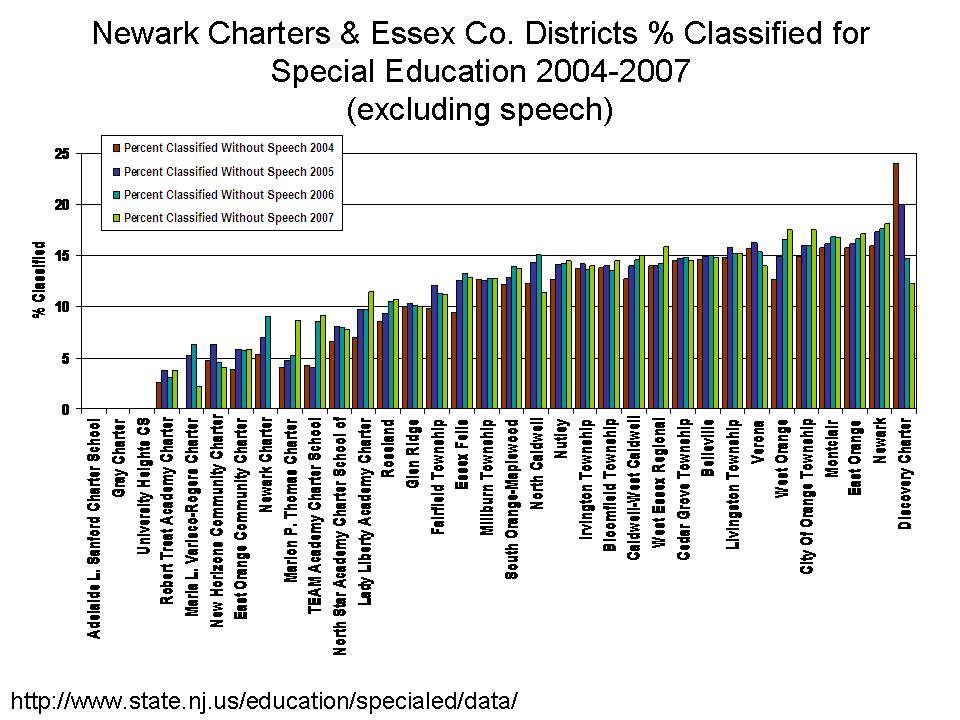

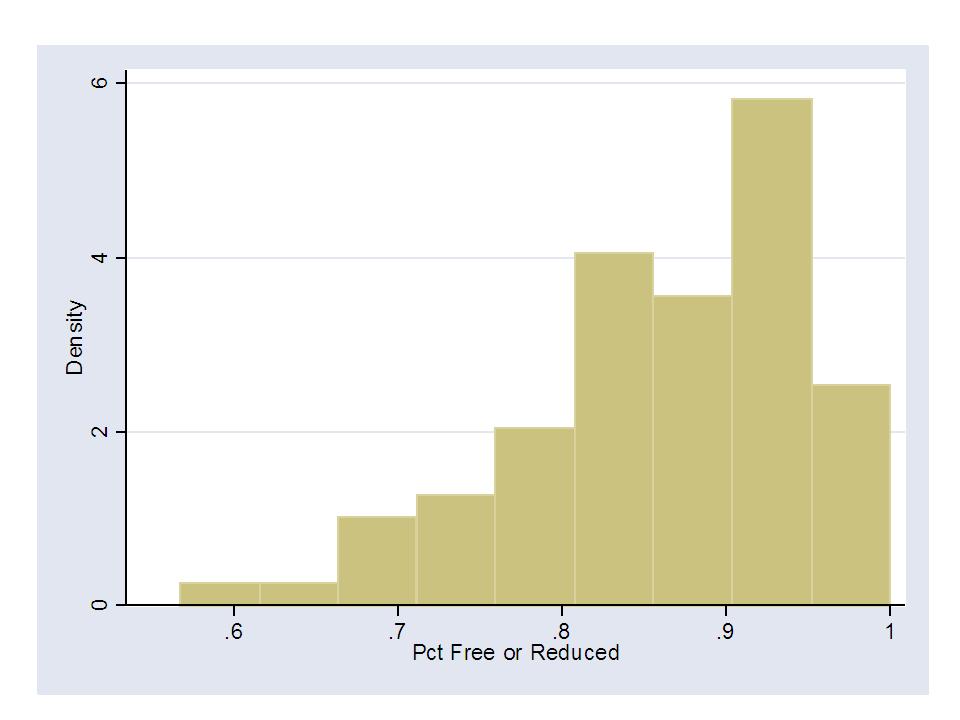

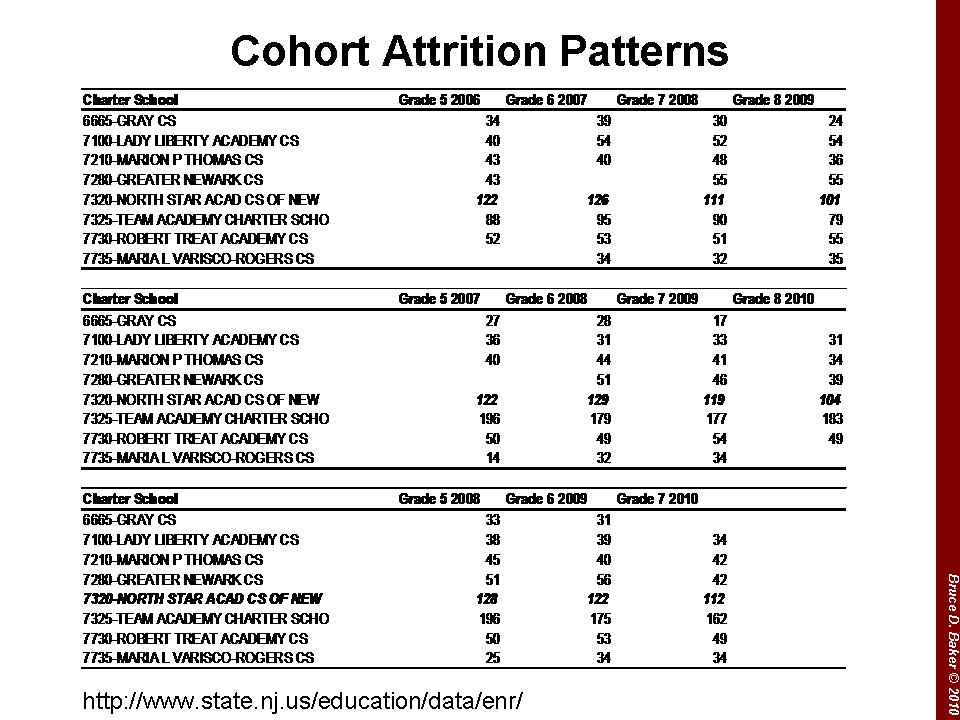

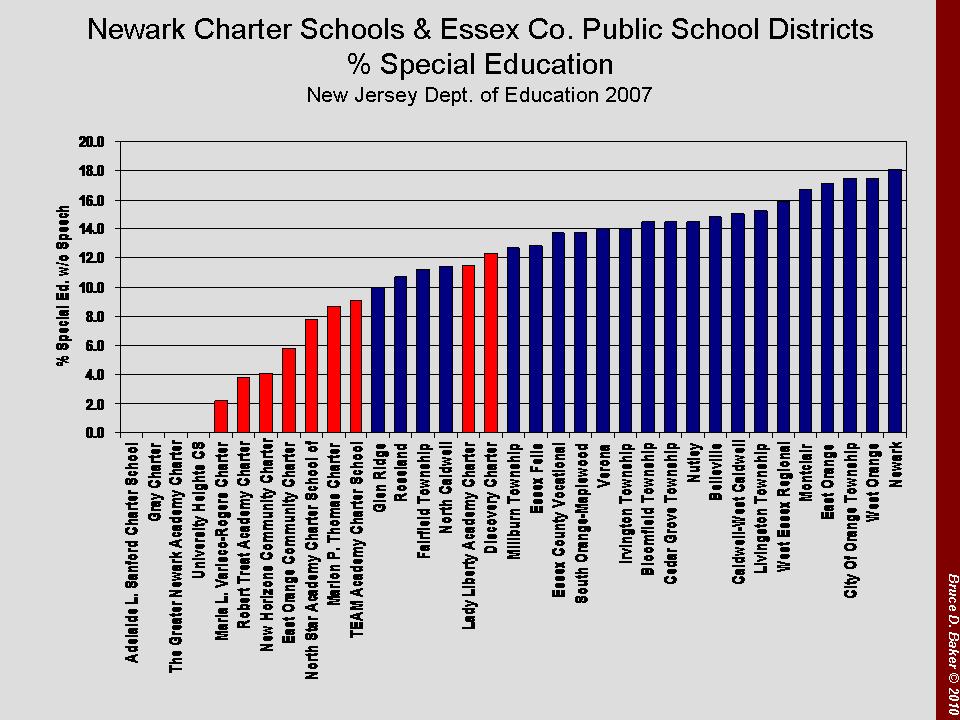

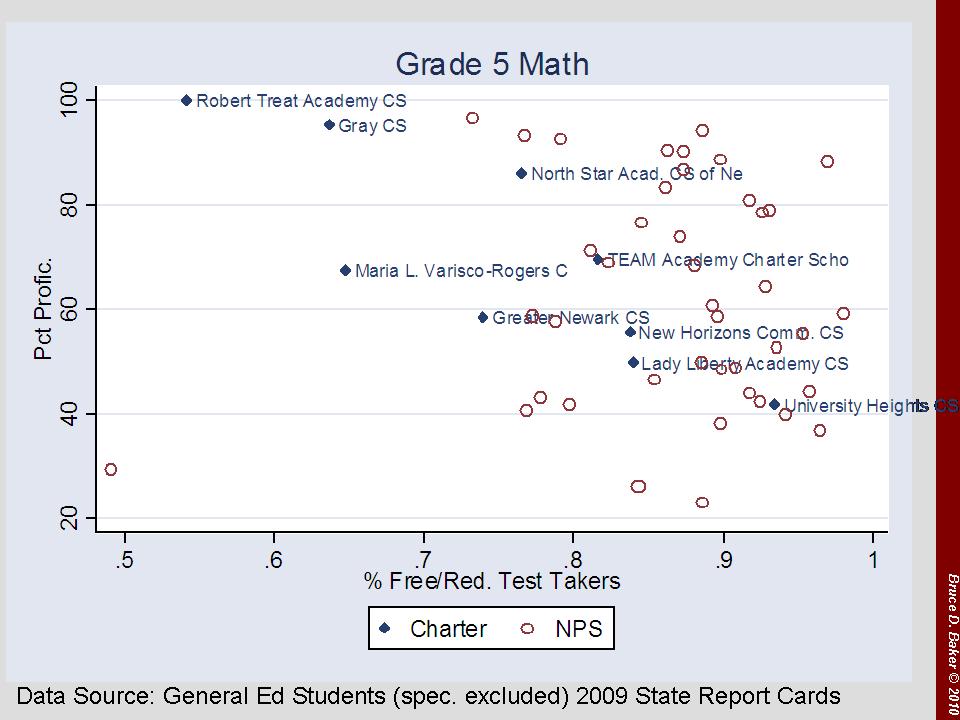

Here, I take two 8th-grade cohorts and trace them backwards. I focus on General Test Takers only and use the ASK Math assessment data in this case. A quick note about those data: scores across all schools tend to drop in 7th grade because of cut-score placement (not because kids get dumber in 7th grade and wise up again in 8th). The top section of the table looks at the failure rates and number of test takers for 6th grade in 2005-06, 7th in 2006-07 and 8th in 2007-08. Over this time period, North Star drops 38% of its general test takers and cuts its already low failure rate from nearly 12% to 0%. Greater Newark also drops over 30% of the test takers in the cohort, and reaps significant reductions in failures (partially proficient) in the process.
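The mechanism is easy to see with a toy cohort. The numbers below are hypothetical, chosen only to echo the 38% attrition and the roughly 12% starting failure rate described above; they are not the actual counts in the table. If the students who leave are a random slice of the cohort, the failure rate stays put; if the leavers are disproportionately the failing students, the rate can fall to zero without any remaining student's score changing.

```python
from fractions import Fraction

def failure_rate_after_attrition(test_takers, failing, leavers, failing_leavers):
    """Failure rate among students still testing after `leavers` depart,
    `failing_leavers` of whom had been failing. Assumes no remaining
    student's score changes."""
    return Fraction(failing - failing_leavers) / (test_takers - leavers)

# Hypothetical cohort: 100 general test takers, 12 (12%) failing in 6th grade,
# and 38 (38%) of them gone by 8th grade.
test_takers, failing, leavers = 100, 12, 38

# Case 1: leavers are a random slice of the cohort, so failures leave in proportion.
proportional = Fraction(failing * leavers, test_takers)
print(float(failure_rate_after_attrition(test_takers, failing, leavers, proportional)))  # 0.12

# Case 2: every failing student is among the leavers.
print(float(failure_rate_after_attrition(test_takers, failing, leavers, failing)))  # 0.0
```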
