Okay. You all know that I like to call out dumb graphs. And I’ve addressed a few on this blog previously.

Here are a few from the past: https://schoolfinance101.wordpress.com/2011/04/08/dumbest-real-reformy-graphs/

Now, each of the graphs in this previous post and numerous others I’ve addressed, like this one (From RiShawn Biddle) had something over the graph I’m going to address in this post. Each of the graphs I’ve addressed previously at the very least used some “real” data. They all used it badly. Some used it in ways that should be considered illegal. Others… well… just dumb.

But this new graph, sent to me from a colleague who had to suffer through this presentation, really takes the cake. This new graph comes to us from Marguerite Roza, from a presentation to the New York Board of Regents in September. And this one rises above all of these previous graphs because IT IS ENTIRELY FABRICATED. IT IS BASED ON NOTHING.

Perhaps even worse than that, the fabricated information on this illustrative graph suggests that its author does not have even the slightest grip on a) statistics, b) graphing, c) how one might measure effects of school reforms (and how large or small they might be) or d) basic economics.

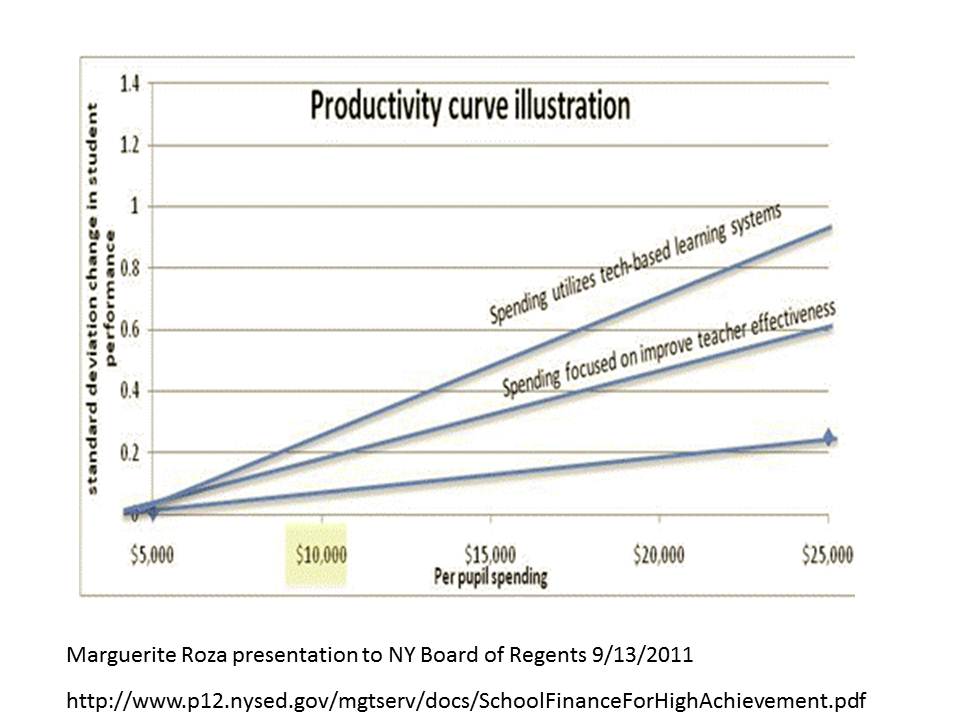

Here’s the graph:

http://www.p12.nysed.gov/mgtserv/docs/SchoolFinanceForHighAchievement.pdf

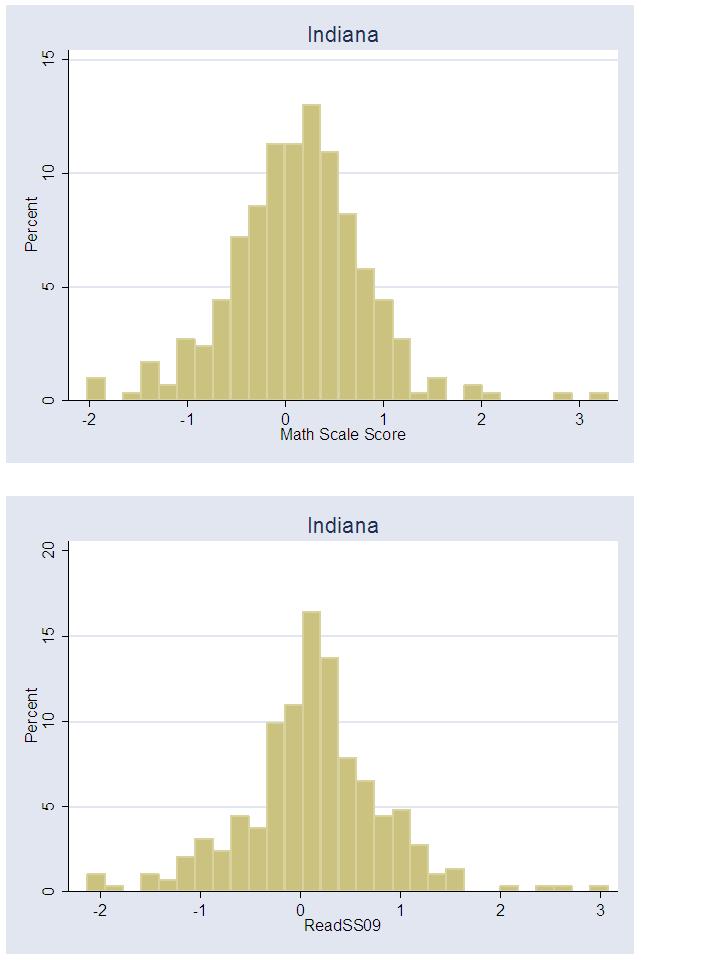

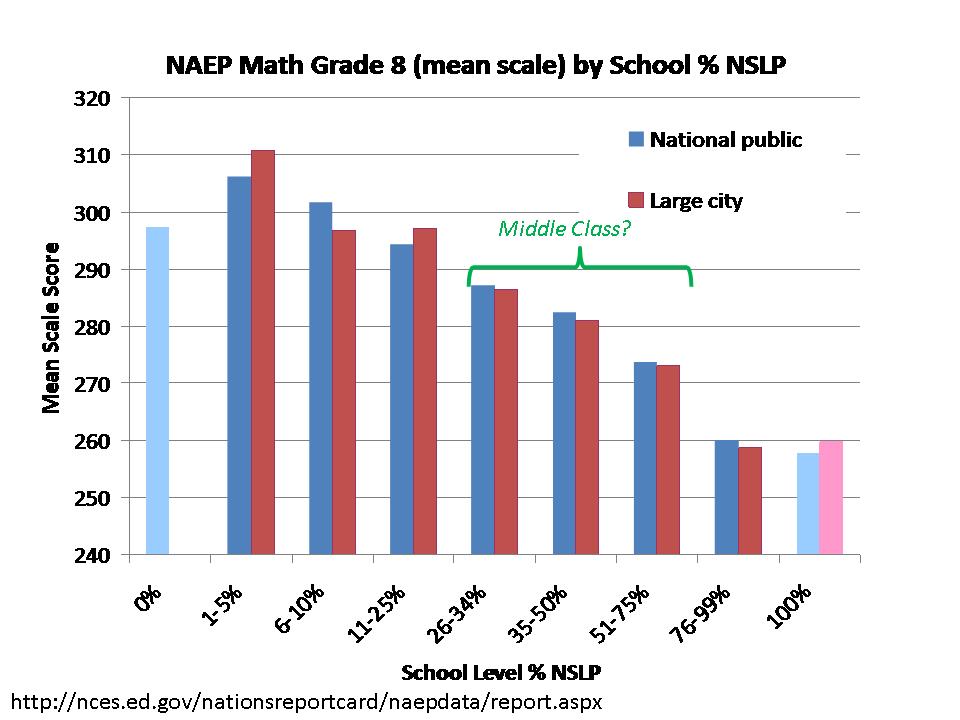

Now, here’s what the graph is supposed to be saying. Along the horizontal axis is per pupil spending and on the vertical axis are measured student outcomes. It’s intended to be a graph of the rate of return to additional dollars spent. The bottom diagonal line on this graph – the lowest angled blue line – is intended to show the rate of return in student outcomes for each additional dollar spent given the current ways in which schools are run. Go from $5,000 to $25,000 in spending and you raise student achievement by, oh about .2 standard deviations.

Note, no diminishing returns (perhaps those returns diminish well outside the range of this graph?). It’s linear all the way – keep spending and you keep gaining… to infinity and beyond. But I digress (that’s the basic economics bit above). And that doesn’t really matter – because this line isn’t based on a damn thing anyway. While I concur that there is a return to additional dollars spent, even I would be hard pressed to identify a single estimate of the rate of return for moving from $5k to $25k in per pupil spending.

Where the graph gets fun is in the addition of the other two lines. Note that the presentation linked above includes a graph with only the lower line first, then includes this graph which adds the upper two lines. And what are those lines? Those lines are what we supposedly can get as a return for additional dollars spent if we either a) spend with a focus on improving teacher effectiveness or b) spend “utilizing tech-based learning systems” (note that I hate utilizing the word utilizing when USE is sufficient!). I have it on good authority that the definitions of either provided during the presentation were, well, unsatisfactory.

But most importantly, even if there were a clear definition of either, THERE IS ABSOLUTELY NO EVIDENCE TO BACK THIS UP. IT IS ENTIRELY FABRICATED. Now, I’ve previously picked on Marguerite Roza for her work with Mike Petrilli on the Stretching the School Dollar policy brief. Specifically, I raised significant concern that Petrilli and Roza provide all sorts of recommendations for how to stretch the school dollar but PROVIDE NO ACTUAL COST/EFFECTIVENESS ANALYSIS.

In this graph, it would appear that Marguerite Roza has tried to make up for that by COMPLETELY FABRICATING RATE OF RETURN ANALYSIS for her preferred reforms.

Now let’s dig a little deeper into this graph. If you look closely at the graph, Roza is asserting that if we spend $5,000 per pupil either a) traditionally, b) focused on teacher effectiveness or c) on tech-based systems, we are at the same starting point. Not sure how that makes sense… since the traditional approach is necessarily least productive/efficient in the reformy world… but… yeah… okay. Let’s assume it’s all relative to the starting point for each… which would zero out the imaginary advantages of the two reformy alternatives… which really doesn’t make sense when you’re pitching the reformy alternatives.

Most interesting is the fact that Roza is asserting here that if you add another $20,000 per pupil into tech-based solutions – YOU CAN RAISE STUDENT OUTCOMES BY A FULL STANDARD DEVIATION. WOBEGON HERE WE COME!!!!! Crap, we’ll leave Wobegon in the dust at that rate. KIPP… pshaw… Harlem-Scarsdale achievement gap… been there done that! We’re talking a full standard deviation of student outcome improvement! Never seen anything like that – certainly not anything based on… say… evidence?

To be clear, even a moderately informed presenter fully intending to present fabricated but still realistic information on student achievement would likely present something a little closer to reality than this.

Indeed, this graph is intended to be illustrative… not real… but the really big problem is that it is NOT EVEN ILLUSTRATIVE OF ANYTHING REMOTELY REAL.

Now for the part that’s really not funny. As much as I’m making a big joke about this graph, it was presented to policymakers as entirely serious. How or whether they interpreted it as serious, who knows. But, it was presented to policymakers in New York State and has likely been presented to policymakers elsewhere with the serious intent of suggesting to those policymakers that if they just adopt reformy strategies for teacher compensation or buy some mythical software tools, they can actually improve their education systems at the same time as slashing school aid across the board. Put into context, this graph isn’t funny at all. It’s offensive. And it’s damned irresponsible! It’s reprehensible!

Let’s be clear. We have absolutely no evidence that the rate of return to the education dollar would be TRIPLED (or improved at all) if we spent each additional dollar on things such as test score based merit pay or other “teacher quality” initiatives such as eliminating seniority based pay or increments for advanced degrees. In fact, we’ve generally found the effect of performance pay reforms to be no different from “0.” And we have absolutely no evidence on record that the rate of return to the education dollar could be increased 5X if we moved dollars into “tech-based” learning systems.
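For anyone who wants to check the arithmetic behind those multipliers, here is a rough sketch in Python. The 0.2 and 1.0 standard deviation gains are the ones described earlier in this post for the move from $5,000 to $25,000 per pupil. The 0.6 figure for the teacher effectiveness line is an assumption I am backing out of the "tripled" multiplier, not a value stated anywhere, and the function and variable names are just illustrative.

```python
# Gains in standard deviations implied by each line on the graph when
# spending rises from $5,000 to $25,000 per pupil, as described in this post.
SPENDING_INCREASE = 25_000 - 5_000  # dollars per pupil

implied_gain_sd = {
    "current practice": 0.2,       # read off the lowest line
    "teacher effectiveness": 0.6,  # ASSUMPTION: 3x the baseline ("tripled")
    "tech-based systems": 1.0,     # a full standard deviation
}


def slope_per_1000(gain_sd, increase=SPENDING_INCREASE):
    """Return the implied gain in SD per additional $1,000 per pupil."""
    return gain_sd / (increase / 1000)


baseline = slope_per_1000(implied_gain_sd["current practice"])

# Ratio of each line's slope to the baseline slope.
multipliers = {
    name: round(slope_per_1000(gain) / baseline, 2)
    for name, gain in implied_gain_sd.items()
}

for name, gain in implied_gain_sd.items():
    print(f"{name}: {slope_per_1000(gain):.3f} SD per $1,000, "
          f"{multipliers[name]:.0f}x baseline")
```

None of this makes the lines any more real. It only shows that the 3X and 5X claims are straight ratios of slopes on a made-up chart.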

The information in this graph is… COMPLETELY FABRICATED.

And that’s why this graph makes my whole new category of DUMBEST COMPLETELY FABRICATED GRAPHS EVER!