Contrary to reformy wisdom regarding spending bubbles… the harmlessness… oh wait… the benefits of spending cuts… and what we all know as reformy fact, that we’ve already dumped plenty of money into our high-need districts nationwide – it turns out that there actually are still some school districts out there that appear somewhat disadvantaged when it comes to funding.

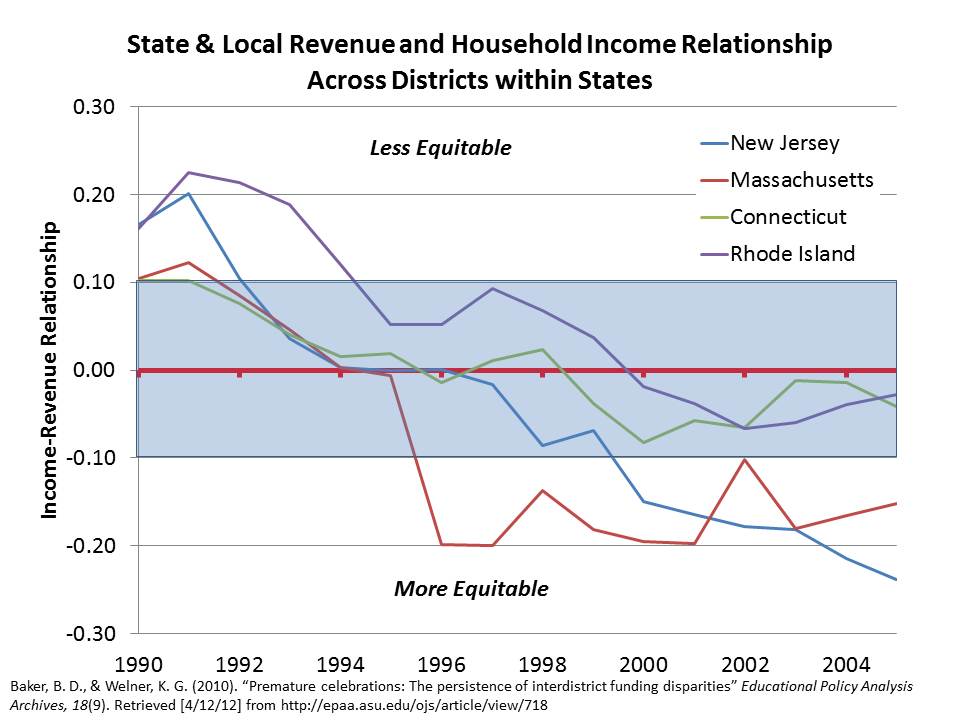

Soon, we will be releasing our annual update of our report on school funding fairness. In that report, we emphasize that school funding fairness is an issue primarily governed by and primarily a responsibility of the states. And school funding fairness varies widely across states. First, the overall level of funding varies significantly from state to state. Second, the extent to which states provide additional resources to districts with higher concentrations of children in poverty varies widely across states. In fact, several large, diverse states still maintain state school finance systems where the highest need districts receive substantially less state and local revenue per pupil than the lowest need districts. These states include Illinois, New York, Pennsylvania and Texas among others.

It’s important to understand that the value of any given level of education funding, in any given location, is relative. That is, it doesn’t simply matter that a district has or spends $10,000 per pupil, or $20,000 per pupil. What matters is how that funding compares to other districts operating in the same labor market, and for that matter, how that money relates to other conditions in the region/labor market. Why? Well, schooling is labor intensive. And the quality of schooling depends largely on the ability of schools or districts to recruit and retain quality employees. And yes… despite reformy arguments to the contrary – competitive wages for teachers matter! The largest share of school district annual operating budgets is tied up in the salaries and wages of teachers and other school workers. The ability to recruit and retain teachers in a school district in any given labor market depends on the wage a district can pay to teachers a) relative to other surrounding schools/districts and b) relative to non-teaching alternatives in the same labor market.

In our funding fairness report, we present statewide profiles of disparities in funding with respect to poverty. But, I thought it would be fun (albeit rather depressing) here to try to identify some of the least well-funded districts in the country. Now, keep in mind that there are still over 15,000 districts nationwide. I’m focusing here on large and mid-sized cities using a Census Bureau Locale classification.

Following are two lists. In each case, I have selected districts where:

- The combined state and local revenue per pupil is less than the average for districts in the same labor market (core based statistical area);

- The U.S. Census Poverty rate for the district is more than 50% higher than the average for districts in the same labor market.
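
The two selection criteria above can be sketched in a few lines of Python. This is only a minimal illustration, not the actual analysis code: the district records are made up, the function name `flag_underfunded` is hypothetical, and it assumes a simple unweighted average across all districts in a labor market (including the district itself), which the real analysis may or may not use.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records, NOT real data:
# (district, labor market id, state + local revenue per pupil, poverty rate)
districts = [
    ("City A", "cbsa_1", 8000.0, 0.30),
    ("Suburb B", "cbsa_1", 11000.0, 0.10),
    ("Suburb C", "cbsa_1", 11000.0, 0.08),
    ("City D", "cbsa_2", 12000.0, 0.25),
    ("Suburb E", "cbsa_2", 10000.0, 0.15),
]


def flag_underfunded(records, poverty_threshold=1.5):
    """Return (district, revenue_ratio, poverty_ratio) for districts with
    below-average revenue and poverty more than 50% above the average
    for their labor market."""
    # Group districts by labor market (core based statistical area).
    by_market = defaultdict(list)
    for rec in records:
        by_market[rec[1]].append(rec)

    flagged = []
    for recs in by_market.values():
        # Assumption: unweighted mean over districts in the labor market.
        avg_revenue = mean(r[2] for r in recs)
        avg_poverty = mean(r[3] for r in recs)
        for name, _, revenue, poverty in recs:
            revenue_ratio = revenue / avg_revenue
            poverty_ratio = poverty / avg_poverty
            if revenue_ratio < 1.0 and poverty_ratio > poverty_threshold:
                flagged.append((name, revenue_ratio, poverty_ratio))

    # Lowest revenue ratio first, mirroring the ordering of the tables.
    return sorted(flagged, key=lambda row: row[1])


for name, revenue_ratio, poverty_ratio in flag_underfunded(districts):
    print(f"{name}: {revenue_ratio:.0%} of average revenue, "
          f"poverty ratio {poverty_ratio:.2f}")
```

In this toy data only "City A" is flagged: it has less revenue per pupil than its labor-market average and well over 1.5 times the average poverty rate. "Suburb E" also has below-average revenue, but its poverty rate is below average, so it does not meet the second criterion.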

Put very simply, districts with higher student needs than surrounding districts in the same labor market don’t just require the same total revenue per pupil to get the job done. They require more. Higher-need districts require more money simply to recruit and retain similar quantities (per pupil) of similar quality teachers. That is, they need to be able to pay a wage premium. In addition, higher-need districts need to be able to provide the additional program/service supports necessary for helping kids from disadvantaged backgrounds (including smaller classes in early grades) while still maintaining advanced and enriched course options.

The districts in these tables don’t just lack the “same” total state and local revenue per pupil as surrounding districts. They have less, and in some cases a lot less! In many cases their child poverty rate is more than twice that of the surrounding districts that continue to have more resources.

Among the least well funded cities are Chicago, Philadelphia and Bridgeport, CT. All have much higher poverty than their surroundings.

Table 1. Least fairly funded large, midsize and small cities [Preliminary single year analysis]

| District | State | State & Local Revenue per Pupil (% of labor-market average) | Poverty Ratio (district rate ÷ labor-market average) |

| West Fresno Elementary School District | California | 71% | 1.97 |

| Roosevelt Elementary District | Arizona | 74% | 1.87 |

| Alhambra Elementary District | Arizona | 75% | 1.85 |

| Reading School District | Pennsylvania | 78% | 2.50 |

| Allentown City School District | Pennsylvania | 78% | 2.48 |

| Franklin-McKinley Elementary School District | California | 79% | 1.92 |

| Chicago Public School District 299 | Illinois | 80% | 1.67 |

| Alum Rock Union Elementary School District | California | 82% | 1.52 |

| Isaac Elementary District | Arizona | 83% | 1.91 |

| Sunnyside Unified District | Arizona | 85% | 1.70 |

| Creighton Elementary District | Arizona | 87% | 1.96 |

| North Forest Independent School District | Texas | 87% | 2.13 |

| Manchester School District | New Hampshire | 87% | 1.77 |

| East Hartford School District | Connecticut | 87% | 1.60 |

| Murphy Elementary District | Arizona | 87% | 2.88 |

| Schenectady City School District | New York | 88% | 2.53 |

| Lansingburgh Central School District | New York | 89% | 1.94 |

| Pontiac City School District | Michigan | 90% | 3.04 |

| Kankakee School District 111 | Illinois | 91% | 1.69 |

| Utica City School District | New York | 91% | 1.98 |

| National Elementary School District | California | 91% | 1.74 |

| San Antonio Independent School District | Texas | 91% | 1.66 |

| Bloomington School District 87 | Illinois | 91% | 1.73 |

| Godfrey-Lee Public Schools | Michigan | 92% | 1.81 |

| Hueneme Elementary School District | California | 92% | 1.72 |

| Dallas Independent School District | Texas | 92% | 1.83 |

| Balsz Elementary District | Arizona | 92% | 1.66 |

| Adams-Arapahoe School District 28J | Colorado | 93% | 1.77 |

| Binghamton City School District | New York | 93% | 1.91 |

| Fort Worth Independent School District | Texas | 93% | 1.70 |

| Norfolk City Public Schools | Virginia | 93% | 1.77 |

| Magnolia Elementary School District | California | 93% | 1.65 |

| Parkrose School District 3 | Oregon | 93% | 1.69 |

| Godwin Heights Public Schools | Michigan | 94% | 1.57 |

| Philadelphia City School District | Pennsylvania | 94% | 2.12 |

| Alief Independent School District | Texas | 94% | 1.69 |

| David Douglas School District 40 | Oregon | 96% | 2.00 |

| South San Antonio Independent School District | Texas | 96% | 1.61 |

| Lansing Public School District | Michigan | 96% | 2.00 |

| Clarenceville School District | Michigan | 96% | 1.65 |

| Harrison School District 2 | Colorado | 96% | 1.81 |

| Holland City School District | Michigan | 96% | 1.71 |

| Lebanon School District | Pennsylvania | 96% | 2.08 |

| Bridgeport School District | Connecticut | 98% | 2.63 |

| Edgewood Independent School District | Texas | 98% | 1.71 |

| Turner Unified School District 202 | Kansas | 98% | 1.62 |

| Biddeford | Maine | 98% | 1.84 |

| Saginaw City School District | Michigan | 98% | 1.73 |

| North Little Rock School District | Arkansas | 98% | 1.63 |

| Burlington School District | Vermont | 98% | 1.90 |

| Milwaukee School District | Wisconsin | 98% | 2.09 |

| Omaha Public Schools | Nebraska | 98% | 1.72 |

| Santa Ana Unified School District | California | 99% | 1.63 |

| Birmingham City School District | Alabama | 99% | 1.77 |

| Erie City School District | Pennsylvania | 99% | 1.70 |

| Crooked Oak Public Schools | Oklahoma | 99% | 1.73 |

| Lancaster School District | Pennsylvania | 99% | 2.11 |

| Lima City School District | Ohio | 99% | 2.24 |

| Gainesville City School District | Georgia | 99% | 1.78 |

| Oakland Unified School District | California | 99% | 1.84 |

Data Sources: Based on Census Fiscal Survey (f33) 2008-09 [http://www.census.gov/govs/school/] and Census Small Area Income and Poverty Estimates

Table 2. Least fairly funded fringe districts of large, midsize and small cities [Preliminary single year analysis]

| District | State | State & Local Revenue per Pupil (% of labor-market average) | Poverty Ratio (district rate ÷ labor-market average) |

| Clearview Local School District | Ohio | 67% | 1.57 |

| Cicero School District 99 | Illinois | 67% | 1.60 |

| Waukegan Community Unit School District 60 | Illinois | 68% | 1.97 |

| Posen-Robbins Elementary School District 143-5 | Illinois | 69% | 1.74 |

| Lincoln Elementary School District 156 | Illinois | 71% | 1.76 |

| Maywood-Melrose Park-Broadview School District 89 | Illinois | 72% | 1.52 |

| Kannapolis City Schools | North Carolina | 72% | 1.53 |

| Round Lake Community Unit School District 116 | Illinois | 72% | 1.72 |

| Ravenswood City Elementary School District | California | 73% | 1.82 |

| Zion Elementary School District 6 | Illinois | 73% | 1.99 |

| Community Consolidated School District 168 | Illinois | 75% | 1.79 |

| Inkster City School District | Michigan | 75% | 1.55 |

| Woonsocket School District | Rhode Island | 76% | 1.78 |

| Dayton Independent School District | Kentucky | 76% | 1.82 |

| Port Huron Area School District | Michigan | 77% | 1.93 |

| Highland Park City Schools | Michigan | 78% | 2.03 |

| Harvey School District 152 | Illinois | 79% | 1.76 |

| Pawtucket School District | Rhode Island | 80% | 1.56 |

| Clintondale Community Schools | Michigan | 80% | 1.68 |

| Bessemer City School District | Alabama | 80% | 1.86 |

| New Miami Local School District | Ohio | 80% | 1.78 |

| Hamtramck Public Schools | Michigan | 80% | 2.13 |

| Chicago Heights School District 170 | Illinois | 80% | 1.84 |

| Kenosha School District | Wisconsin | 81% | 1.63 |

| Blackstone-Millville School District | Massachusetts | 81% | 1.63 |

| North Chicago School District 187 | Illinois | 82% | 2.06 |

| Waterbury School District | Connecticut | 82% | 1.94 |

| Ludlow Independent School District | Kentucky | 82% | 1.52 |

| Revere School District | Massachusetts | 83% | 1.82 |

| Chicago Ridge School District 127-5 | Illinois | 83% | 1.67 |

| Laurel Highlands School District | Pennsylvania | 83% | 1.62 |

| Brentwood Union Free School District | New York | 84% | 2.17 |

| Glendale Elementary District | Arizona | 84% | 1.57 |

| Pleasant Hill School District 69 | Illinois | 84% | 2.08 |

| Lennox Elementary School District | California | 85% | 1.53 |

| Rochester School District | New Hampshire | 86% | 1.65 |

| Spalding County School District | Georgia | 86% | 1.64 |

| Campbell City School District | Ohio | 86% | 1.61 |

| Castleberry Independent School District | Texas | 86% | 1.55 |

| Connellsville Area School District | Pennsylvania | 86% | 1.65 |

| Fredericksburg City Public Schools | Virginia | 87% | 2.81 |

| Alta Vista Elementary School District | California | 87% | 1.58 |

| Paulsboro Borough School District | New Jersey | 87% | 2.58 |

| Chelsea School District | Massachusetts | 87% | 2.17 |

| Uniontown Area School District | Pennsylvania | 87% | 1.86 |

| Pleasant Valley School District 62 | Illinois | 88% | 2.07 |

| Everett School District | Massachusetts | 88% | 2.52 |

| Carbon Cliff-Barstow School District 36 | Illinois | 89% | 2.14 |

| Madison Public Schools | Michigan | 89% | 2.02 |

| Freehold Borough School District | New Jersey | 90% | 2.44 |

| Caldwell School District 132 | Idaho | 90% | 1.85 |

| Twin Lakes No. 4 School District | Wisconsin | 90% | 1.67 |

| Edinburgh Community School Corporation | Indiana | 90% | 1.70 |

| Riverview Gardens School District | Missouri | 90% | 1.79 |

| Independence Public Schools | Missouri | 91% | 1.61 |

| Hazel Park City School District | Michigan | 91% | 1.88 |

| Winooski Incorporated School District | Vermont | 91% | 2.19 |

| Carteret Borough School District | New Jersey | 91% | 1.79 |

| Penns Grove-Carneys Point Regional School District | New Jersey | 92% | 1.51 |

| Speedway School Town | Indiana | 92% | 1.54 |

| Hopewell City Public Schools | Virginia | 92% | 2.00 |

| Bound Brook Borough School District | New Jersey | 92% | 1.73 |

| New Britain School District | Connecticut | 92% | 2.46 |

| Somersworth School District | New Hampshire | 92% | 1.62 |

| Watervliet City School District | New York | 92% | 1.57 |

| Centennial School District 28J | Oregon | 92% | 1.59 |

| William Floyd Union Free School District | New York | 93% | 1.92 |

| Fountain School District 8 | Colorado | 93% | 1.65 |

| Lowell School District | Massachusetts | 93% | 2.55 |

| Lorain City School District | Ohio | 93% | 1.95 |

| St. Bernard Parish School District | Louisiana | 93% | 1.64 |

| Cahokia Community Unit School District 187 | Illinois | 93% | 2.79 |

| Northridge Local School District | Ohio | 93% | 2.20 |

| Hudson Falls Central School District | New York | 94% | 1.62 |

| Reynolds School District 7 | Oregon | 94% | 1.84 |

| Woodbury City School District | New Jersey | 94% | 2.00 |

| Aldine Independent School District | Texas | 94% | 1.63 |

| Bartonville School District 66 | Illinois | 94% | 1.65 |

| Westwood Heights Schools | Michigan | 95% | 1.81 |

| Hazel Crest School District 152-5 | Illinois | 95% | 1.81 |

| New Kensington-Arnold School District | Pennsylvania | 95% | 1.59 |

| Cascade Union Elementary School District | California | 95% | 1.63 |

| Malden School District | Massachusetts | 95% | 2.29 |

| Seabrook School District | New Hampshire | 96% | 1.64 |

| Lynn School District | Massachusetts | 96% | 1.87 |

| Newport Independent School District | Kentucky | 96% | 1.91 |

| River Forest Community School Corporation | Indiana | 96% | 1.60 |

| Willow Run Community Schools | Michigan | 96% | 2.19 |

| Big Beaver Falls Area School District | Pennsylvania | 96% | 1.70 |

| Norwood City School District | Ohio | 97% | 1.69 |

| Beecher Community School District | Michigan | 97% | 2.31 |

| Jennings School District | Missouri | 97% | 2.06 |

| Hammond School City | Indiana | 97% | 1.55 |

| Freeport Union Free School District | New York | 97% | 2.17 |

| Monessen City School District | Pennsylvania | 97% | 1.83 |

| Copiague Union Free School District | New York | 97% | 1.87 |

| McKeesport Area School District | Pennsylvania | 98% | 2.07 |

| Lawrence School District | Massachusetts | 98% | 2.41 |

| Covington Independent School District | Kentucky | 98% | 2.37 |

| Clinton School District | Massachusetts | 98% | 2.26 |

| Adams County School District 14 | Colorado | 98% | 1.82 |

| Beloit School District | Wisconsin | 99% | 1.71 |

| Brooklawn Borough School District | New Jersey | 99% | 1.51 |

| Oak Park City School District | Michigan | 99% | 2.21 |

| Lindenwold Borough School District | New Jersey | 99% | 2.08 |

| Bay Shore Union Free School District | New York | 99% | 1.88 |

Data Sources: Based on Census Fiscal Survey (f33) 2008-09 [http://www.census.gov/govs/school/] and Census Small Area Income and Poverty Estimates

Now, it’s one thing for reformy pundits to be making the absurd arguments I laid out in the introduction above. They simply don’t know crap about any of this stuff. I’m convinced of that. They simply don’t know what districts spend, how it compares to other districts – or even that school finance is primarily a state by state issue. Invariably, when speaking on issues of school funding, they make statements that are patently false – and most often passed down through the reformy bad graph archive.

What concerns me more is when local representatives of children attending these districts, including the superintendents of many of these school districts, simply don’t stand up for their own constituents. Somehow, the solution for Philadelphia public schools is to close more of them? To shift more control to additional private managers? But to ignore entirely that Pennsylvania continues to maintain one of the least equitable state school finance systems in the country? The same applies to Chicago. Do we hear the City of Chicago’s leaders condemning the fact that Illinois also maintains one of the nation’s least fair funding systems? One of the nation’s most racially disparate state school finance systems?

I also don’t expect to see Governors of these states continue to point the finger of shame at these districts – and state departments of education continue to set up ill-conceived and unfair accountability systems and unfunded intervention strategies through new powers awarded to them under NCLB waivers. When they do – if, for example, NY’s Governor Cuomo chooses to point the finger of shame at Utica (purely hypothetical), I sure as hell hope that Utica points right back! And I hope others including Schenectady and Binghamton stand by their side. Likewise for Reading and Allentown, PA! These districts have been persistently slammed by their state school funding system. We are talking about districts that a) have 2.5 times the poverty rate of their surroundings and b) have less than 80% of the state and local revenue.

And likewise for Bridgeport, CT along with New Britain and Waterbury! And what about Waukegan, IL… which by these measures has only about 68% of the average state and local revenue of its surroundings and nearly double the poverty rate!

Leaders in these cities should be outraged by their treatment under state school finance systems. We should be hearing it, and hearing it loudly. We shouldn’t just be hearing about how their incompetent and greedy teachers and administrators are to blame and how we need to simply shut down more of their schools and turn them over to someone else. Fairness in funding is a critical first step. It is a prerequisite condition. And without it, we can expect continued difficulties in these districts – difficulties that will certainly not be remedied by current slash/burn & blame policies.

Note: The analysis presented here is a preliminary run using a single year of national school finance data (but built on a 3-year panel). In several of these cases, however, especially those that I call out individually, I have conducted numerous additional analyses which are consistent with those above. I can say with confidence that the Illinois, Pennsylvania, Connecticut and New York State disparities represented above are entirely consistent with analyses of multiple years of state data and federal data. Cities like Utica, NY; Bridgeport, Waterbury and New Britain, CT; and Allentown and Reading, PA are consistently among the worst funded districts relative to their state as a whole and their specific labor market surroundings. Riverview Gardens and other poor inner urban fringe St. Louis districts are also among the most disadvantaged, similar to low income, high-minority concentration Chicago suburbs. Texas and Colorado findings are also consistent. Others may be as well, but I’ve not yet had the chance to reconcile the findings for each city/state with state data systems.