I recall several years ago attending an initial organizing meeting for a special interest group on Charter Schools at the American Educational Research Association. Note to outsiders – AERA has several special interest groups, some research oriented, some advocacy oriented… many somewhere in between. These are member organized groups and many are very small. If I recall correctly, there were a handful of us at that meeting, including Gary Miron, Katy Bulkley and a few others. If memory serves me, I think Rick Hess may have paid a visit to the meeting to argue that this new group should really just be a part of the school choice special interest group. All of that aside, I and others attended this meeting out of our interest in studying this relatively new concept of charter schools. Most of us were intrigued by the possibilities of alternative governance structures that might provide opportunity for innovation (what might now be referred to as disruptive innovation).

I didn’t spend a whole lot of time researching charters in my first few years after that, but eventually I did start to explore charter schooling and teacher labor markets – specifically the recruitment/retention of teachers from different academic backgrounds (college selectivity in particular). My perspective was that creative, energetic leadership (which might now be referred to as cage-busting leadership) of the kind associated with a mission-driven start-up school, coupled with an ounce or two of deregulation, and applied in the right context, might provide opportunities to recruit an academically talented pool of teachers. Our research largely supported these assertions.

- Baker, B. D., & Dickerson, J. L. (2006). Charter Schools, Teacher Labor Market Deregulation, and Teacher Quality: Evidence From the Schools and Staffing Survey. Educational Policy, 20(5), 752-778.

In recent years, however, my perception is that this whole movement has gotten way out of control – it has morphed dramatically – especially the punditry and resultant public policy surrounding charter schooling. Sadly, I’m reaching a point where I now believe that the end result is causing more harm than good. In my view, many charter schools, and certainly the political movement of charter schooling, are no longer operating in the public interest. In fact, they have all the incentive in the world to do just the opposite, and there is little or no sign of this turning around any time soon.

We’ve shifted dramatically, and rather quickly, from what some might refer to as a portfolio model to what I would now characterize as a parasitic one.

Overarching Incentives & Chartery Miracles

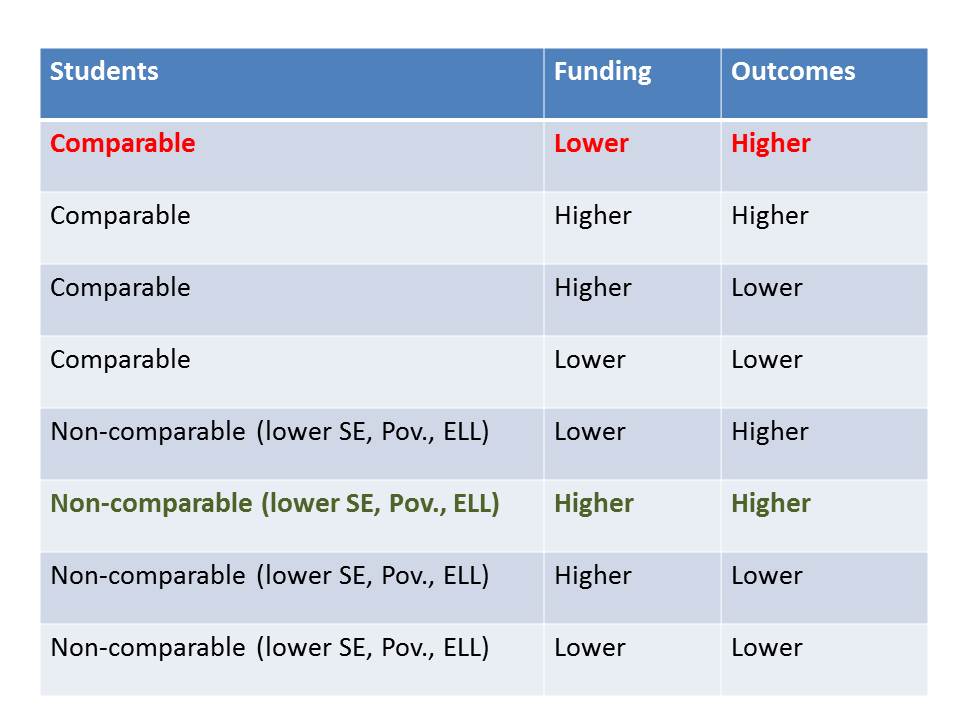

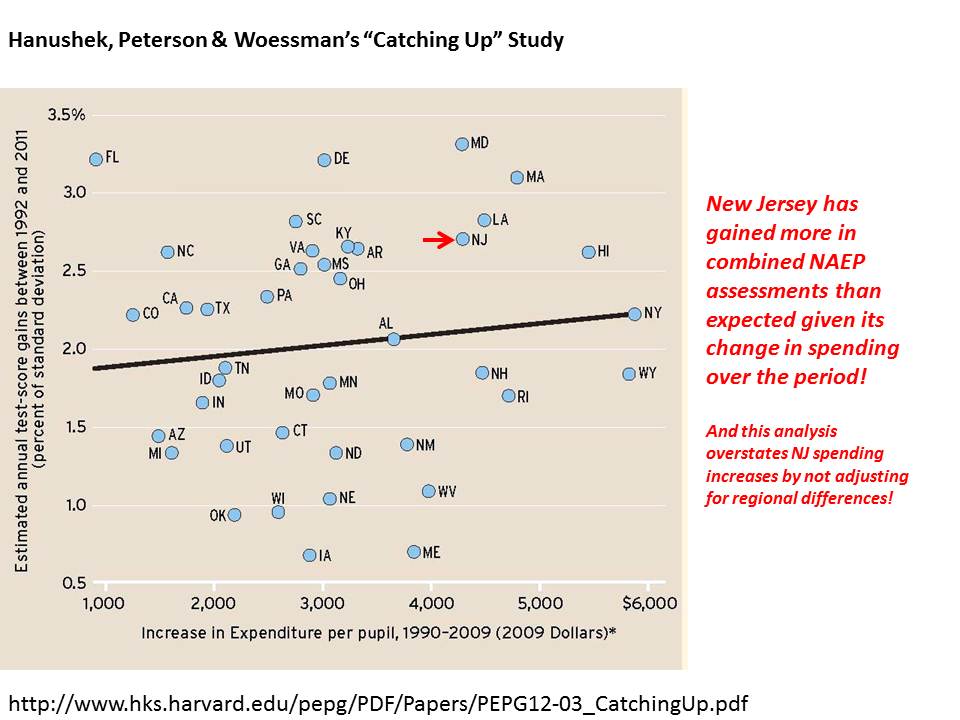

Since the early phases of significant national charter expansion which coincided (somewhat) with early implementation of NCLB, chartery success has been reduced to a definition reminiscent of the cult of efficiency. Chartery success (accompanied by headlines, news magazine segments and visits from politicians) is largely defined as A) getting higher test scores or greater test score growth, B) for less money, and C) with the “same” kids. Because this is the supposed definition of success, punditry around charter schooling – and research designed to endorse this punditry – makes every effort to validate A, while obfuscating or completely misrepresenting B and/or C.

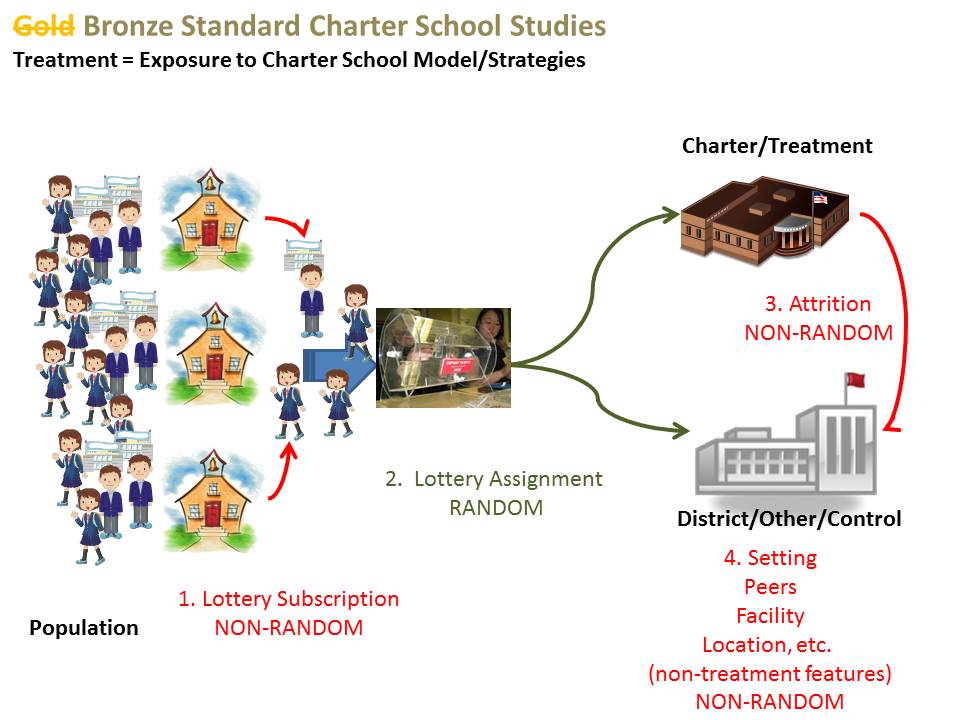

Figure 1. Chartery Miracle Success Framework

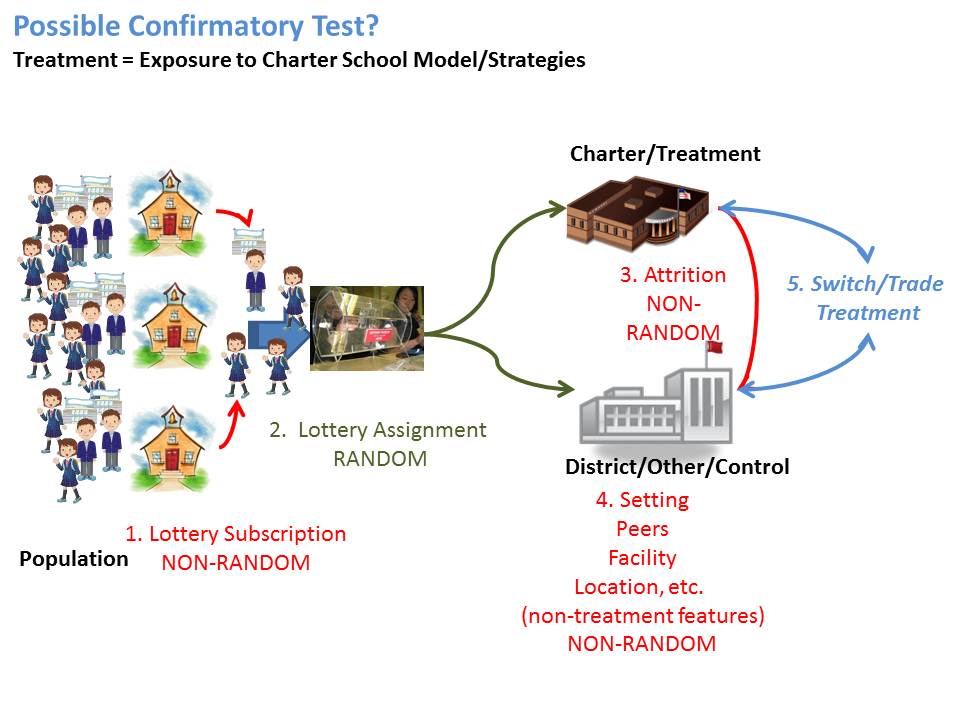

The central objective in Chartery Miracle Punditry is to prove, by way of average scores and otherwise methodologically weak policy analyses, that charter students outperform their traditional public school counterparts.

These studies rarely if ever include any accurate measure of the resources used by charters, more often than not citing bogus, irrelevant studies or providing flimsy back of the napkin analysis.

These studies often use entirely insufficient measures for declaring students as being “matched” with peers between district and charter schools, fail to consider fully the role of peer effects as one of the largest school factors, or the intersection of selective attrition and peer effects.

In part, because it is increasingly well understood that this is the way the game is played, charter school operators have all the incentive in the world to play the game this way (even if they were otherwise predisposed not to). And apparently far too many charter operators are responsive to these incentives.

Competition for Demographic Advantage

This recent Reuters article by Stephanie Simon explains practices actually used by many charter operators, arguably in response to current incentives.

http://www.reuters.com/article/2013/02/15/us-usa-charters-admissions-idUSBRE91E0HF20130215

In short, charter schools are applying a variety of creative strategies to screen out those students they feel won’t help their numbers. In some/many cases, children will be screened out on the basis of otherwise unobservable characteristics. Two low income children wish to apply… but only one is sufficiently motivated to complete the 15 page entry essay. They are labeled as similar as one returns to the district school and one matriculates to the charter, but clearly there is at least some difference between them which may influence their future performance. But these mechanisms also serve to sort out poorer children from more disrupted households, more mobile families, and non-English speaking families. And clearly they send a signal to parents of children with disabilities that this may not be the school for you.

In many parts of the country, especially in areas where charter schools serve a larger share of total enrollment, charter schools do seem to serve more lower income students. And in states where there exists an incentive to serve children with disabilities, charters often do so (boutique special education charters). But these incentives get out of hand as well.

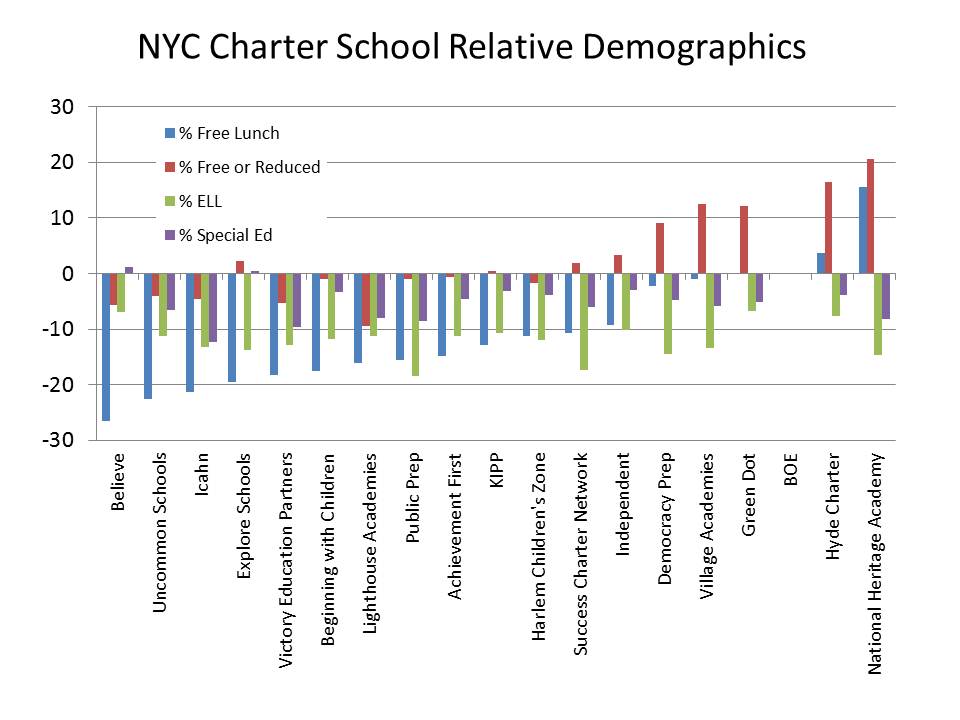

In affluent, economically diverse states like New Jersey, New York and Connecticut, as I commented in the Reuters article, my research (& related posts) shows substantial cream-skimming among charters. Many of these findings are validated by others, as I explain in my reports/publications. Here are a few figures on demographics of New York and Connecticut charter schools.

This figure on New York City Charter schools draws on data from a forthcoming article (related to a recent report). In this analysis, I use three years of data from 2008-10, and I estimate a regression equation for each demographic measure, comparing schools that serve the same grade level in the same borough of the city. The graph shows how much lower (or higher) the population share is in each charter school chain, relative to NYC district schools.
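For readers who want to see the mechanics, here is a minimal sketch of that kind of comparison, not the actual model behind the figure. It assumes a single charter indicator rather than one per chain, a single pooled year, and made-up field names and numbers. It computes the regression coefficient on the charter indicator with borough-by-grade-level fixed effects by demeaning within each cell.

```python
from collections import defaultdict


def charter_gap(schools):
    """Coefficient on a charter indicator with borough-by-grade fixed effects.

    Demeaning the outcome and the indicator within each cell and then
    regressing one on the other gives the same slope as OLS with a dummy
    for every cell (the Frisch-Waugh-Lovell result).
    """
    cells = defaultdict(list)
    for s in schools:
        cells[(s["borough"], s["grade_level"])].append(s)

    numerator = 0.0
    denominator = 0.0
    for members in cells.values():
        n = len(members)
        mean_share = sum(m["share"] for m in members) / n
        mean_charter = sum(m["charter"] for m in members) / n
        for m in members:
            d = m["charter"] - mean_charter
            y = m["share"] - mean_share
            numerator += d * y
            denominator += d * d

    if denominator == 0:
        raise ValueError("no cell contains both charter and district schools")
    return numerator / denominator


# Hypothetical schools; "share" stands in for any demographic measure,
# e.g. the free lunch share of enrollment.
schools = [
    {"borough": "A", "grade_level": "elementary", "charter": 0, "share": 0.80},
    {"borough": "A", "grade_level": "elementary", "charter": 0, "share": 0.90},
    {"borough": "A", "grade_level": "elementary", "charter": 1, "share": 0.60},
    {"borough": "B", "grade_level": "middle", "charter": 0, "share": 0.50},
    {"borough": "B", "grade_level": "middle", "charter": 1, "share": 0.40},
    {"borough": "B", "grade_level": "middle", "charter": 1, "share": 0.30},
]

gap = charter_gap(schools)
print(f"charter minus district share, same borough and grade level: {gap:.3f}")
```

A negative value means charters enroll a smaller share of the group than district schools serving the same grade level in the same borough. Extending this to one indicator per charter chain would require a full multiple regression.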

Figure 2. New York City Relative Demographics

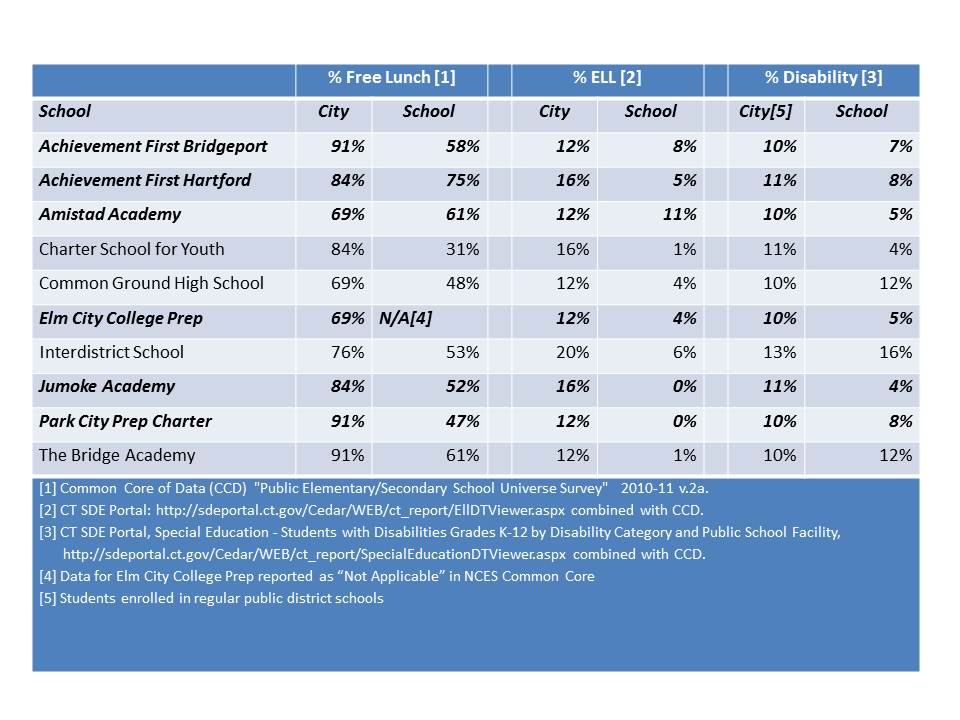

This next figure shows the demographics of Connecticut Charter Schools that are in high poverty cities. To construct this comparison, I combine CTDOE data with data from NCES Common Core. I sum the total number of public & charter school enrolled children by City (school location in CCD) and the total numbers of free lunch, ELL and special education enrolled children. Note that the special education concentrations are for only regular district (& charter) schools. Overall district rates of children with disabilities are marginally higher (because some are in special &/or private placements).
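The aggregation itself is simple enough to sketch. The snippet below is an illustration under assumed field names and invented numbers, not the CTDOE or CCD file layout: it sums enrollment and subgroup counts by city and sector, then divides to get population shares.

```python
from collections import defaultdict

COUNT_FIELDS = ("enrollment", "free_lunch", "ell", "special_ed")
SHARE_FIELDS = ("free_lunch", "ell", "special_ed")


def shares_by_city_and_sector(schools):
    """Sum school-level counts by (city, sector), then convert to shares."""
    totals = defaultdict(lambda: dict.fromkeys(COUNT_FIELDS, 0))
    for school in schools:
        key = (school["city"], school["sector"])
        for field in COUNT_FIELDS:
            totals[key][field] += school[field]

    shares = {}
    for key, counts in totals.items():
        if counts["enrollment"] == 0:
            continue  # nothing to divide by
        shares[key] = {
            field: counts[field] / counts["enrollment"] for field in SHARE_FIELDS
        }
    return shares


# Hypothetical school records for one made-up city.
schools = [
    {"city": "Exampleville", "sector": "district", "enrollment": 500,
     "free_lunch": 400, "ell": 100, "special_ed": 60},
    {"city": "Exampleville", "sector": "district", "enrollment": 300,
     "free_lunch": 210, "ell": 50, "special_ed": 30},
    {"city": "Exampleville", "sector": "charter", "enrollment": 200,
     "free_lunch": 120, "ell": 10, "special_ed": 10},
]

for (city, sector), s in sorted(shares_by_city_and_sector(schools).items()):
    print(city, sector, {k: round(v, 3) for k, v in s.items()})
```

Summing counts before dividing weights each school by its enrollment, which is what a city-level share should do. Averaging school-level percentages would not.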

Table 1. Connecticut Charter Schools in High Poverty (>50% Free Lunch) Cities

Let me make this absolutely clear. In a heterogeneous urban schooling environment, the more individual schools or groups of schools engage in behavior that cream skims off children who are less poor, less likely to face language barriers, far less likely to have a disability to begin with, and unlikely at all to have a severe disability, the higher the concentration of poorer, higher-need children left behind in district schools (see for example: https://schoolfinance101.wordpress.com/2012/08/06/effects-of-charter-enrollment-on-newark-district-enrollment/).

Indeed, as I’ve pointed out previously, districts create some similar (or even more extreme) segregation on their own through magnet schools, but under these circumstances, districts can (and should) regulate the extent of segregation – and specifically the extent to which high need children are left behind clustered in certain district schools. Certainly some urban districts do a very poor job at managing this balance.

But with independent charter expansion, districts lose the ability to even try to manage the balance. Sadly, what may initially have been conceived of as a symbiotic relationship between charter and district schools is increasingly becoming parasitic!

In a “competitive marketplace” of schooling within a geographic space, under this incentive structure, the goal is to be that school which most effectively cream skims – without regard for who you are leaving behind for district schools or other charters to serve – while best concealing the cream-skimming – and while ensuring lack of financial transparency for making legitimate resource comparisons.

This is precisely why the idea of entirely replacing urban public school systems with a portfolio of charters competing against one another with minimal centralized oversight is a massively stupid [from a public policy perspective] idea. That is, unless the overarching incentive structure were to change entirely. But I have little hope of that happening, and there seems to be little incentive for advocates of the extreme extension of charter madness to support altering the incentives.

There does seem to be some increased media and public awareness that many charter schools are indeed attempting to game their enrollments. Some charter (and chartering) advocates, including Mike Petrilli, have conceded this point, but have suggested that this isn’t necessarily a bad thing. I might agree that in moderation, under the right controls, which require some centralized governance/management, this may be partly true. But under current circumstances, it’s not.

[sidebar – one need only look at the geographic distribution of charters in New Orleans or Kansas City with respect to neighborhood income to see how such a system, under the current incentive structure, will fail to serve the neediest children]

Competition for Resources

The last frontier of deception in the charter debates seems to be over comparability of resources. Few if any studies which praise charter successes make any legitimate attempt to measure resources. Ken Libby, Katy Wiley and I did our best to tease out resource comparability in NYC, Texas and Ohio. The fact that our report has so darn many pages (over 20) of appendices, footnotes, caveats and explanations regarding those comparisons is testament to the fact that policymakers (and the charter industry influencing them) seem to have little interest in improving transparency or comparability of charter school finances.

Lack of clear reporting, transparency and comparability permits the most vocal charter pundits to continue advancing utterly ridiculous arguments about their supposed massive, persistent resource disadvantage.

Thus, they (charter pundits) perpetuate the myth that charters everywhere and always are disadvantaged in terms of resource access – and specifically by the design of state funding systems. Some indeed are, but others clearly are not. Thus, they position themselves to lobby fiercely for their supposed “fair share” of public resources. These arguments are most often anchored to the completely bogus Ball State/Public Impact study of charter school funding (see explanation of Bogosity here! [1])

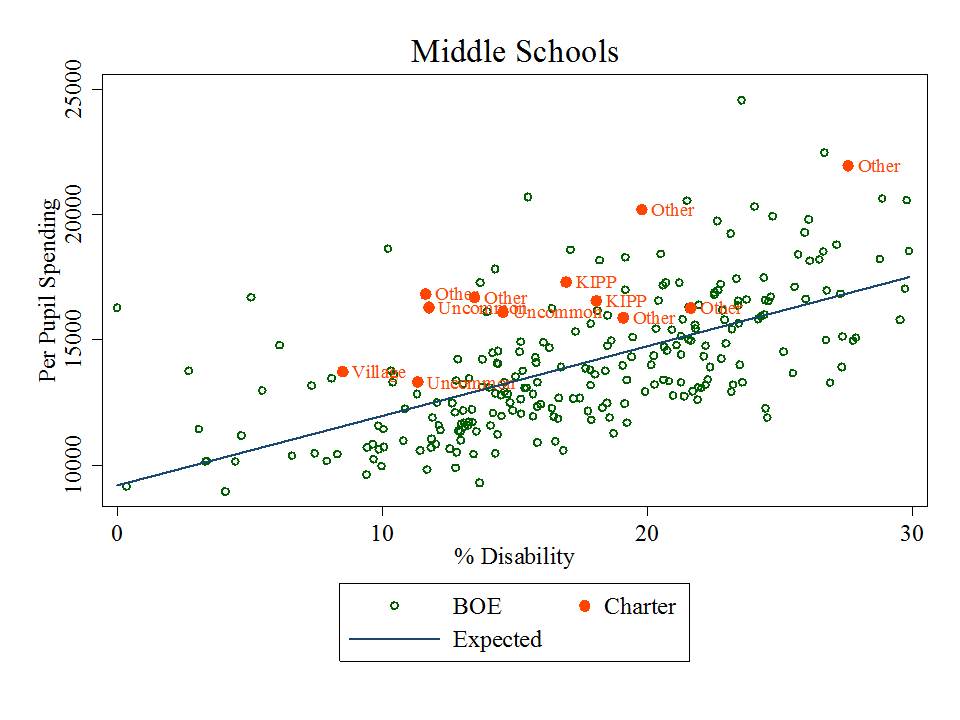

My recent report, and forthcoming article with expanded analyses, on New York City charter schools shows that most substantially outspend NYC BOE schools serving similar student populations and the same grade levels. Figure 3 shows the scatterplot of middle schools by special education population share (where special education population is the strongest predictor of school site spending differences for NYC BOE schools).

Figure 3. Site Based Spending and % Special Education in NYC Middle Schools

Figure 4 shows the elementary schools.

Figure 4. Site Based Spending and % Special Education in NYC Elementary Schools

Figure 5 shows the total expenditures per pupil for Connecticut district and Charter schools. It would appear from Figure 5 that charter schools are getting the short end of the stick? Right? Especially those high flying charters like Amistad and Achievement First in Bridgeport? The problem with this comparison is that it is the host districts that are responsible for financing transportation costs, and ultimately responsible for serving children with disabilities (including/especially severe disabilities) and the expenditures for transportation and special education (including transportation of charter students) are reported on district expenditures.

Figure 5. Total Expenditures per Pupil for Connecticut District & Charter Schools

When we pull out transportation and special education spending the picture changes quite substantially, as shown in Figure 6. The charter schools are doing reasonably well in comparable expenditures per pupil – setting aside lengthy discussion of chartery misrepresentations of comparisons of facilities costs (the classic charter reactionary argument being that charters in a state like CT spend about $1700 per pupil on facilities, whereas district facilities are supposedly “free.” Even if that were the case, many CT charters would still be ahead. But district facilities also come with maintenance costs and long term debt payments [which yes, are expenditures] that, while not equaling charter lease payments as a share of operating expense, do close the supposed gap quite substantially – see lengthy note below).

Figure 6. Comparable Expenditures per Pupil for Connecticut District & Charter Schools

Collateral Damage of the Parasitic Chartering Model

In previous posts I showed how the population cream-skimming effect necessarily leads to an increasingly disadvantaged student population left behind in district schools. High need, urban districts that are hosts to increasing shares of cream-skimming charters become increasingly disadvantaged over time in terms of the students they must serve.

It would be one thing if state policies were in some way trying to intervene to scale up district resources to mitigate this damage. It would be one thing if we could count on charter advocates/pundits to support public policy that would help local districts deal with these (intended) consequences.

But again, the overarching incentives do not favor such advocacy. Resources are finite, and in the never ending quest to “win” the chartery success wars, it is in the interest of charter advocates to do whatever they can to get the largest share of the resources, and not care so much whether district schools get anything. In fact, it’s easier to win if they don’t.

I was not initially so cynical as to believe that charter advocates would seemingly endorse persistent deprivation of needy traditional districts in their own effort to garner more resources, and “win”. But, increasingly, it seems they are. At the very least, they want what they perceive to be their share, regardless of consequences for district schools. We see this in the persistent drive for access to facilities in New York City, subtle shifts in charter vs. district subsidy rates that appear to advantage the charters (see IBO reports) and the continued flood of philanthropy.

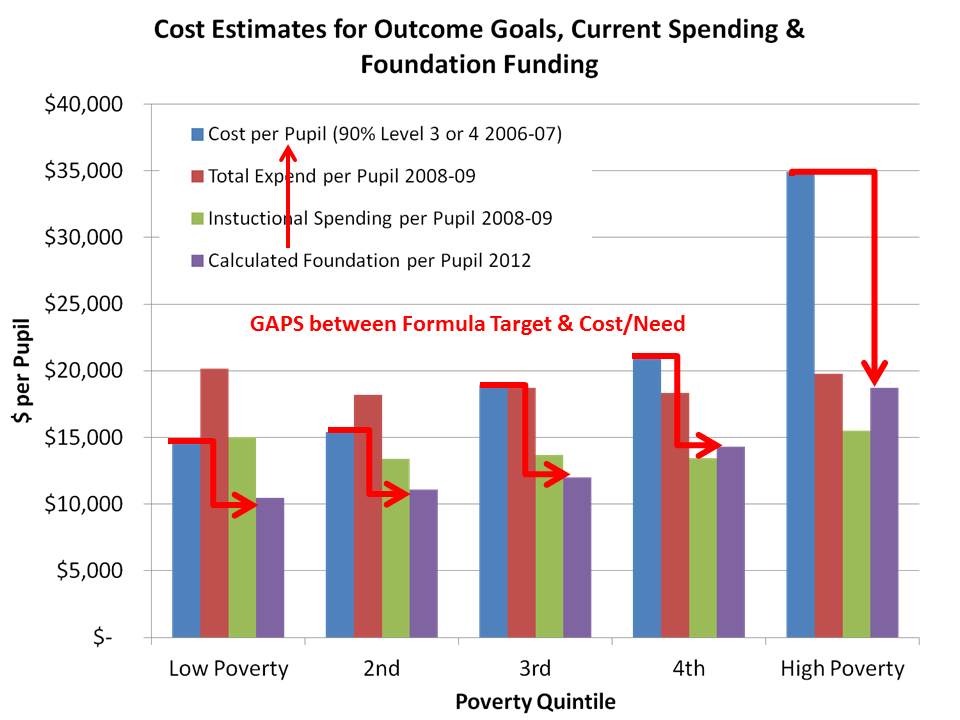

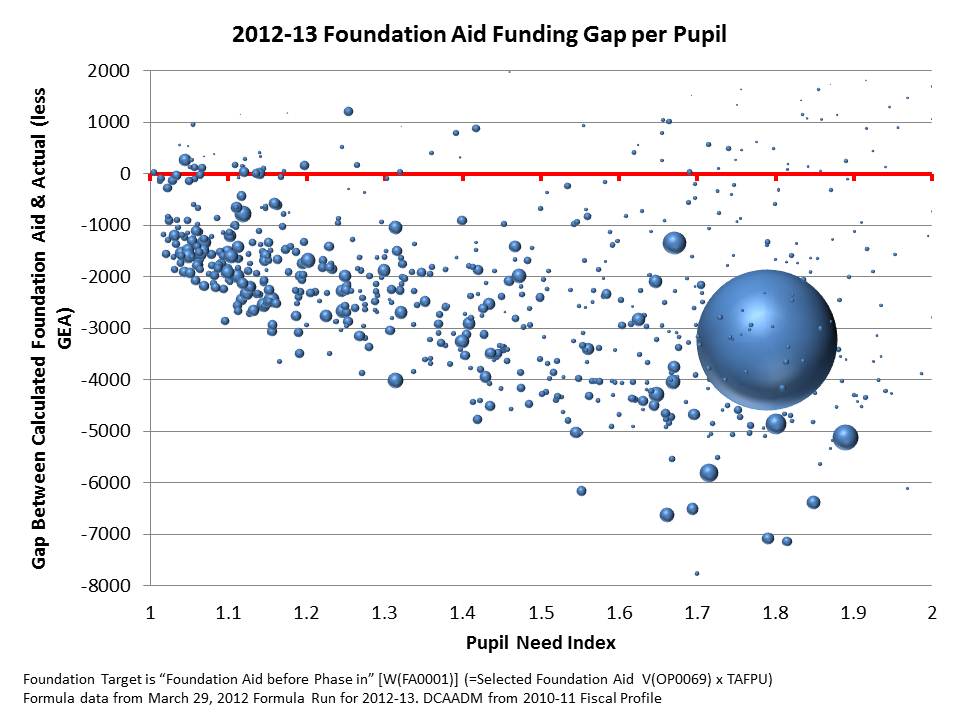

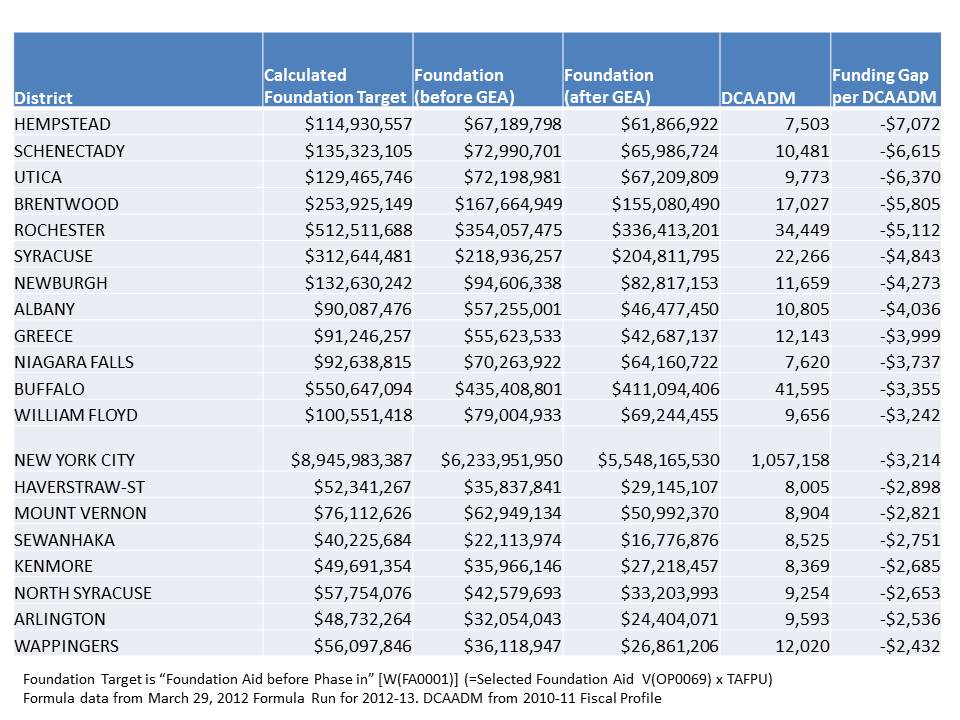

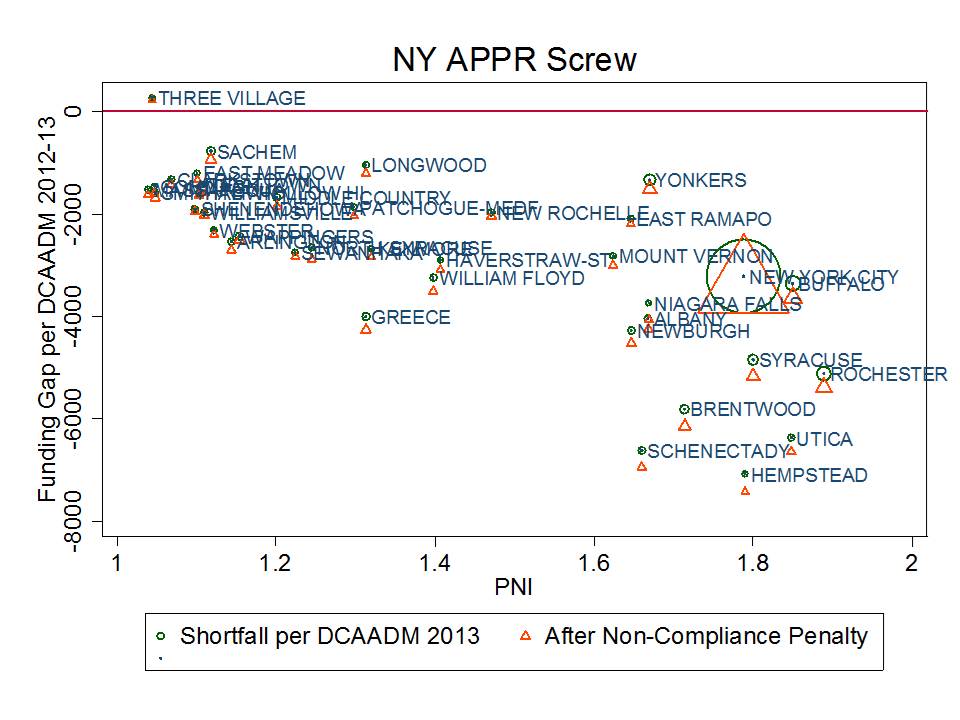

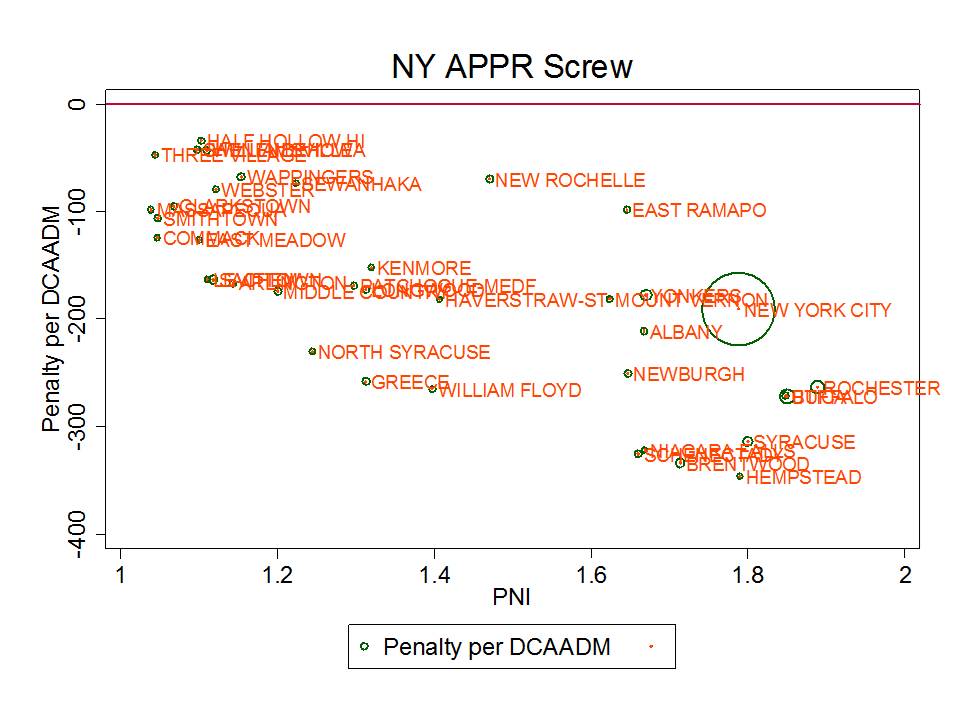

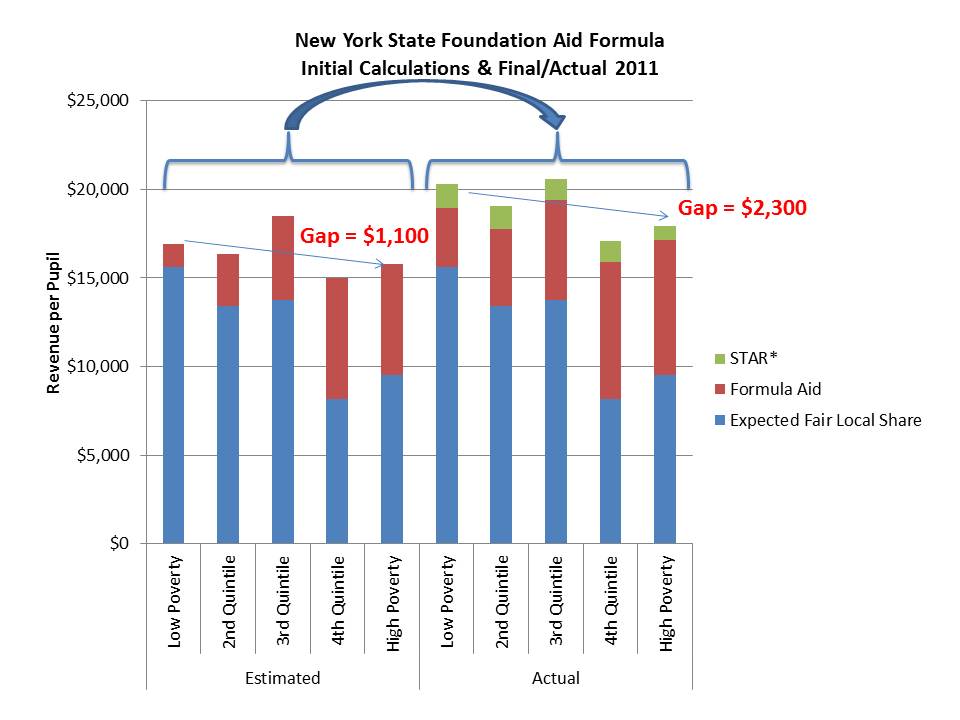

Meanwhile, what is the status of funding for high need districts in New York State? Well, Table 2 summarizes the current degrees of underfunding of New York State’s school finance formula.

Several high need districts are “underfunded” on the state’s own formula by thousands per pupil, including New York City. And where is the outcry from charter advocates that their hosts are being underfunded?

Table 2. Underfunding of New York State’s foundation formula

Districts are starting to get fed up. But they still seem to lack the sex appeal (or bank accounts) and media access of leading charter advocates.

Yet, we don’t hear the cry from charter advocates to support the formula. Doing so might actually increase the pass through funds to charters. But, well endowed charters can offset whatever losses they might face by an underfunded formula… and be that much more likely to “win!” Is that really in the public interest? When is the last time you heard a charter advocate argue for fully funding the state aid formula (as opposed to mandating specifically an increase to their allotment of it)?

Connecticut provides a similar case of collateral damage. Figure 7 shows the per pupil increases in the Education Cost Sharing formula adopted for the current year, over prior year spending levels. In short, it ain’t much! Okay… it’s actually next to nothing. Persistent inequities exist between higher and lower need districts, and for that matter, among higher need districts (notably, Hartford and New Haven spending in this graph are distorted by magnet school aid, some of which is spent on kids from other districts).

In the same year, the CT legislature did manage to more significantly increase charter school funding (on the order of $2k per pupil), despite the fact that many charter schools were both serving lower need student populations and already spending more per pupil on a comparative basis than their host districts. Why? Well, first of all, it’s a lot cheaper – takes much less total funding increase – to increase funding for just charter kids. Second, that’s where the current punditry is – with charter advocates successfully conveying their (false) message of severe fiscal disadvantage. Pauvre, Pauvre Charter Schools?

Meanwhile, charters like Achievement First in Bridgeport seem more than happy to take their windfall and allow their “competition” (Bridgeport Public Schools) to languish. It is indeed easier to win that way. And that seems to be what it’s all about.

Figure 7. 2012-13 increases to District Funding in Connecticut

Closing Thoughts

It’s quite sad that we’ve reached this stage. As I envisioned it from the outset (or early on, around the late 1990s), it wasn’t supposed to turn out this way. It would, in theory, be possible to establish an avenue for creative experimentation, increased flexibility – for appropriately moderated disruptive innovation and cage-busting leadership. It might even all fit into a portfolio model. Yeah… we could use all of the reformy language to describe what might have been a far more reasonable, thoughtful extension of chartering.

But alas, the potential for charters to contribute positively to the public good, in my view, has been severely compromised in part by the ill conceived incentive framework policymakers and pundits have wrapped around the concept of chartering. Unfortunately, for the foreseeable future it is all too convenient for them to perpetuate this faulty incentive system. Yeah… the public is catching on, and eventually this too shall pass. The only question is just how much damage will have been done before we turn the corner.

[final sidebar: Among the damages not discussed herein, but discussed in a previous post, are the increasing shares of students, primarily in urban districts serving low income children and minorities, who will be forced to forgo constitutional rights and statutory protections that would be available to them in true public schools, in order to gain access to the only available charter schools. Sadly, many charters have chosen, as one method to improve their chance of winning, discipline policies & requirements that would be impermissible in “public” schools (in legalese, “state actors”)].

Notes:

[1] Footnote #22 from: http://nepc.colorado.edu/files/rb-charterspending_0.pdf

A study frequently cited by charter advocates, authored by researchers from Ball State University and Public Impact, compared the charter versus traditional public school funding deficits across states, rating states by the extent that they under-subsidize charter schools. The authors identify no state or city where charter schools are fully, equitably funded.

But simple direct comparisons between subsidies for charter schools and public districts can be misleading because public districts may still retain some responsibility for expenditures associated with charters that fall within their district boundaries or that serve students from their district. For example, under many state charter laws, host districts or sending districts retain responsibility for providing transportation services, subsidizing food services, or providing funding for special education services. Revenues provided to host districts to provide these services may show up on host district financial reports, and if the service is financed directly by the host district, the expenditure will also be incurred by the host, not the charter, even though the services are received by charter students.

Drawing simple direct comparisons thus can result in a compounded error: Host districts are credited with an expense on children attending charter schools, but children attending charter schools are not credited to the district enrollment. In a per-pupil spending calculation for the host districts, this may lead to inflating the numerator (district expenditures) while deflating the denominator (pupils served), thus significantly inflating the district’s per pupil spending. Concurrently, the charter expenditure is deflated.

Correct budgeting would reverse those two entries, essentially subtracting the expense from the budget calculated for the district, while adding the in-kind funding to the charter school calculation. Further, in districts like New York City, the city Department of Education incurs the expense for providing facilities to several charters. That is, the City’s budget, not the charter budgets, incur another expense that serves only charter students. The Ball State/Public Impact study errs egregiously on all fronts, assuming in each and every case that the revenue reported by charter schools versus traditional public schools provides the same range of services and provides those services exclusively for the students in that sector (district or charter).
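The reversal described above can be illustrated with a little arithmetic. This is a sketch with invented dollar figures and hypothetical function and parameter names, meant only to show which way each number moves once district-paid spending on charter students is credited to the charter side.

```python
def per_pupil(expenditures, enrollment):
    """Spending per pupil; enrollment must be positive."""
    if enrollment <= 0:
        raise ValueError("enrollment must be positive")
    return expenditures / enrollment


def adjusted_per_pupil(district_exp, district_enroll,
                       charter_exp, charter_enroll,
                       district_paid_for_charter_students):
    """Move district-paid charter services from the district to the charter.

    The last argument is the amount reported on district books for
    transportation, special education, facilities and the like that is
    actually received by charter students (an assumed, known quantity here).
    """
    district = per_pupil(district_exp - district_paid_for_charter_students,
                         district_enroll)
    charter = per_pupil(charter_exp + district_paid_for_charter_students,
                        charter_enroll)
    return district, charter


# Invented figures, for illustration only.
district_exp, district_enroll = 150_000_000, 10_000
charter_exp, charter_enroll = 12_000_000, 1_000
in_kind = 2_000_000

naive = (per_pupil(district_exp, district_enroll),
         per_pupil(charter_exp, charter_enroll))
adjusted = adjusted_per_pupil(district_exp, district_enroll,
                              charter_exp, charter_enroll, in_kind)

print("naive    district, charter:", naive)
print("adjusted district, charter:", adjusted)
```

With these made-up numbers the apparent gap shrinks from $3,000 to $800 per pupil. Real comparisons also need to account for differences in the students served, which this sketch ignores.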

Charter advocates often argue that charters are most disadvantaged in financial comparisons because charters must often cover, from their annual operating budgets, the expenses associated with leasing facilities space. Indeed it is true that charters are not afforded the ability to levy taxes to carry public debt to finance construction of facilities. But it is incorrect to assume when comparing expenditures that for traditional public schools, facilities are already paid for and have no associated costs, while charter schools must bear the burden of leasing at market rates – essentially an “all versus nothing” comparison. First, public districts do have ongoing maintenance and operations costs of facilities as well as payments on debt incurred for capital investment, including new construction and renovation. Second, charter schools finance their facilities by a variety of mechanisms, with many in New York City operating in space provided by the city, many charters nationwide operating in space fully financed with private philanthropy, and many holding lease agreements for privately or publicly owned facilities.

New York City is not alone in its choice to provide full facilities support for some charter school operators (http://www.thenotebook.org/blog/124517/district-cant-say-how-many-millions-its-spending-renaissance-charters). Thus, the common characterization that charter schools front 100% of facilities costs from operating budgets, with no public subsidy, and traditional public school facilities are “free” of any costs, is wrong in nearly every case, and in some cases there exists no facilities cost disadvantage whatsoever for charter operators. Baker and Ferris (2011) point out that while the Ball State/Public Impact Study claims that charter schools in New York State are severely underfunded, the New York City Independent Budget Office (IBO), in more refined analysis focusing only on New York City charters (the majority of charters in the State), points out that charter schools housed within Board of Education facilities are comparably subsidized when compared with traditional public schools (2008-09). In revised analyses, the IBO found that co-located charters (in 2009-10) actually received more than city public schools, while charters housed in private space continued to receive less (after discounting occupancy costs). That is, the funding picture around facilities is more nuanced than is often suggested.

Batdorff, M., Maloney, L., May, J., Doyle, D., & Hassel, B. (2010). Charter School Funding: Inequity Persists. Muncie, IN: Ball State University.

NYC Independent Budget Office (2010, February). Comparing the Level of Public Support: Charter Schools versus Traditional Public Schools. New York: Author, 1.

NYC Independent Budget Office (2011). Charter Schools Housed in the City’s School Buildings get More Public Funding per Student than Traditional Public Schools. New York: Author. Retrieved April 24, 2012, from http://ibo.nyc.ny.us/cgi-park/?p=272.

NYC Independent Budget Office (2011). Comparison of Funding Traditional Schools vs. Charter Schools: Supplement. New York: Author. Retrieved April 24, 2012, from http://www.ibo.nyc.ny.us/iboreports/chartersupplement.pdf.

Note: The average “capital outlay” expenditure of public school districts in 2008-09 was over $2,000 per pupil in New York State, nearly $2,000 per pupil in Texas and about $1,400 per pupil in Ohio. Based on enrollment weighted averages generated from the U.S. Census Bureau’s Fiscal Survey of Local Governments, Elementary and Secondary School Finances 2008-09 (variable tcapout): http://www2.census.gov/govs/school/elsec09t.xls