It’s been a while since I’ve written anything about New Jersey charter schools, so I figured I’d throw a few new graphs and tables out there. In the not too distant past, I’ve explained:

- That Newark charter schools in particular persist in having an overall cream-skimming effect in Newark, creating a demographic advantage for themselves, ultimately to the detriment of the district.

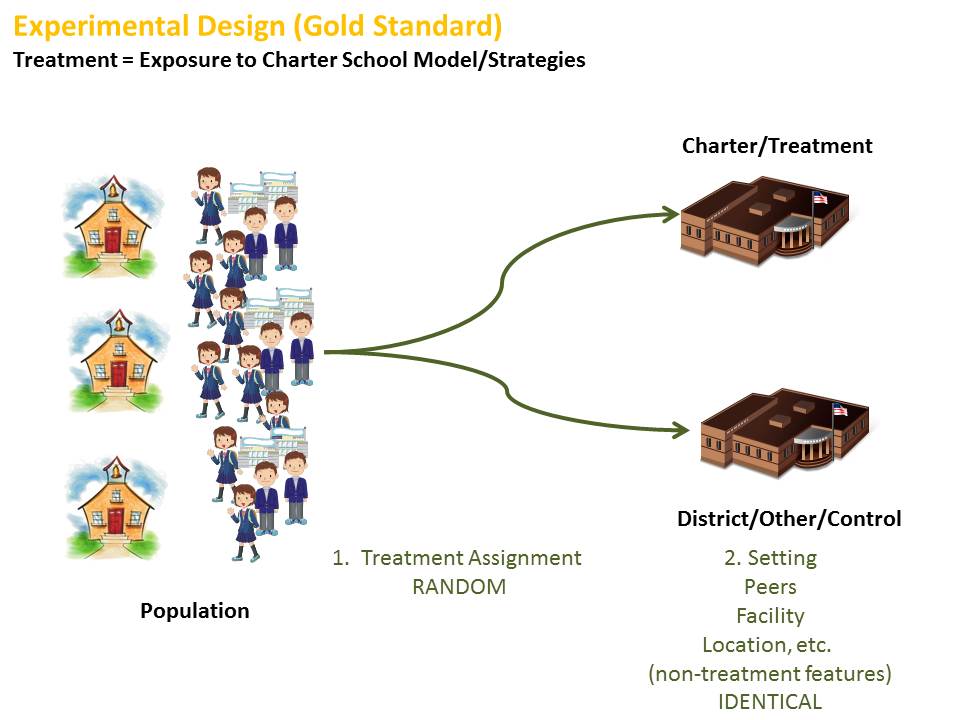

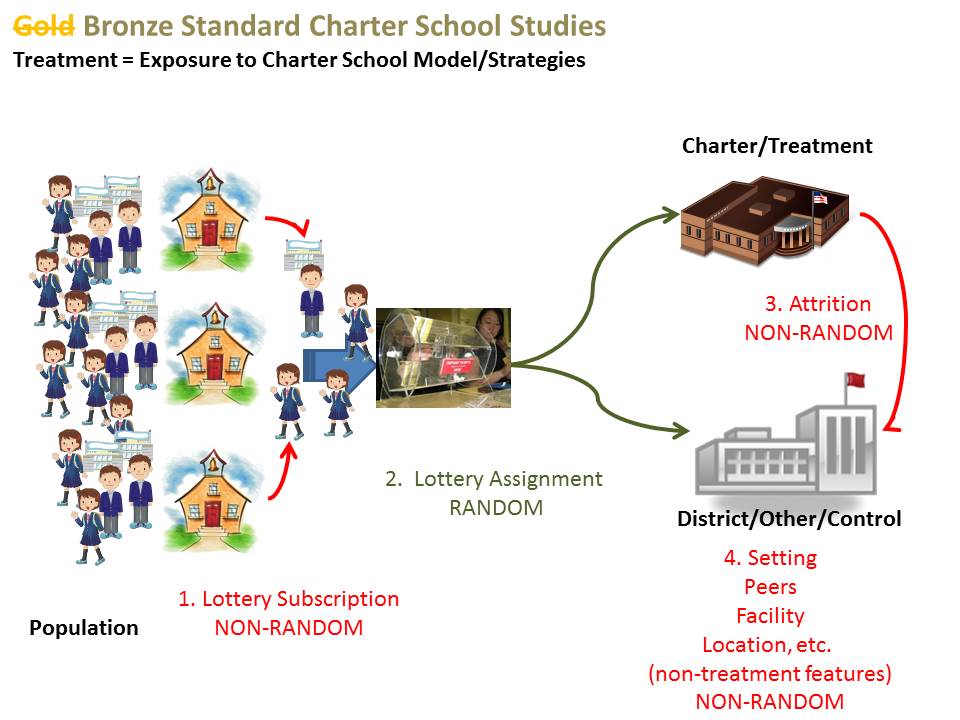

- That while the NJ CREDO charter school effect study showed positive effects of charter enrollment on student outcomes specifically (and only) in Newark, the unique features of student sorting (read: skimming) in Newark make it difficult to draw any reasonable conclusions about the effectiveness of the actual practices of Newark charters. Note that in my most recent post, I re-explain the problem with asserting school effects when a sizable component of the school effect may be a function of the children (peer group) served.

- In many earlier posts, I evaluated the extent to which average performance levels of Newark (and other NJ) charter schools were higher or lower than those of demographically similar schools, finding that charters were/are pretty much scattered.

- And I’ve raised questions about other data – including attrition rates – for some high flying NJ charters.

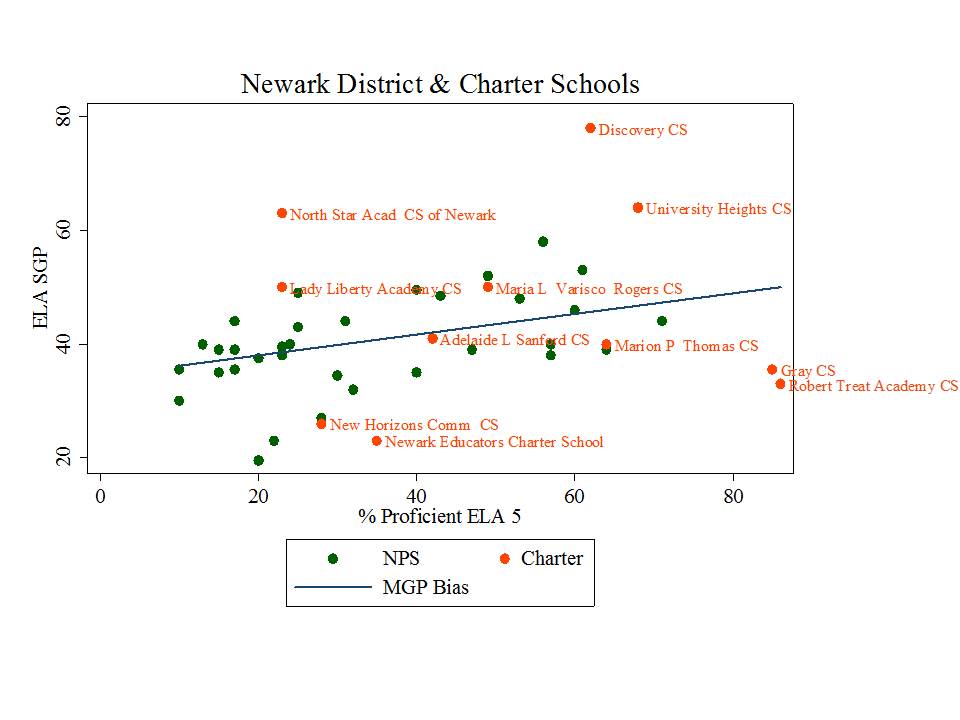

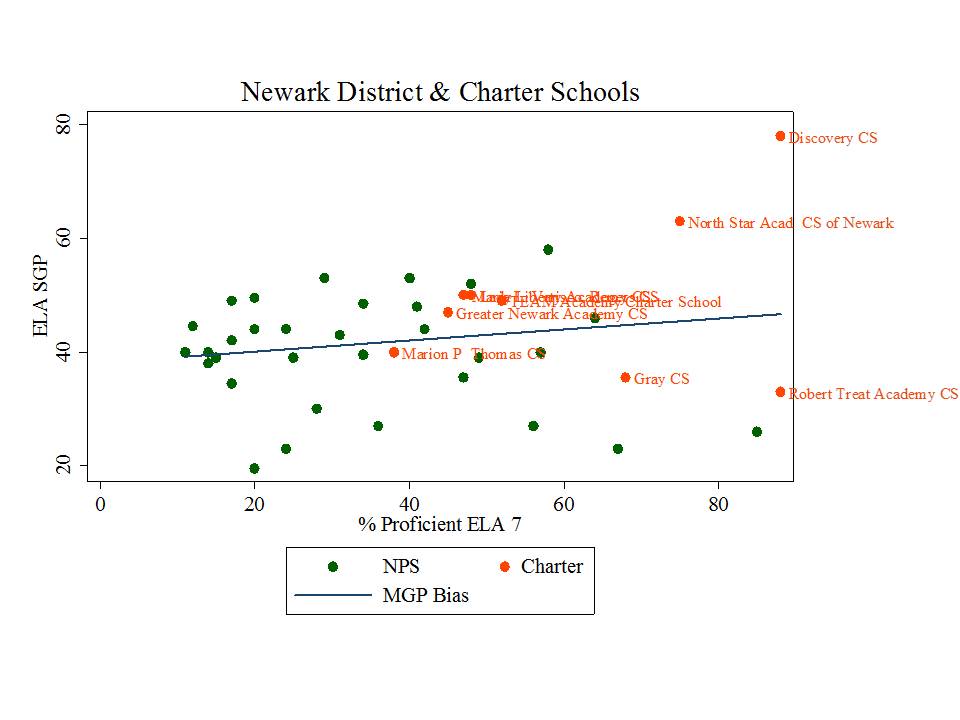

As an update, since past posts have only looked at NJ charter performance in terms of “levels” (shares of kids proficient, or not), let’s take a look at how Newark district and charter schools compare on the state’s new school level growth percentile measures. In theory, these measures should provide us a more reasonable measure of how much the schools contribute to year over year changes in student test scores. Of course, remember, that school effect is conflated with peer effect and with every other attribute of the yearly in and out of school lives of the kids attending each school.

And bear in mind that I’ve previously shown, in great detail, that New Jersey’s growth percentile scores appear to do a particularly crappy job of removing biases associated with student demographics, or with average performance levels of kids in a cohort. To summarize prior findings:

- school average growth percentiles tend to be lower in schools with higher average rates of proficiency to begin with.

- school average growth percentiles tend to be lower in schools with higher shares of low income children.

- school average growth percentiles tend to be lower in schools with more non-proficient scoring special education students.

And each of these relationships was disturbingly strong. So, any analysis of the growth percentile data must be taken with a grain of salt.

So, pretending for a moment that the growth percentile data aren’t complete garbage, let’s take a look at the growth percentile data for Newark charter schools, alongside district schools.

Let’s start with a statewide look at charter school growth percentiles compared to district schools. In this figure, I’ve graphed the 7th grade ELA growth percentiles with respect to average school level proficiency rates, since the growth percentile data seem so heavily biased in this regard. As such, it seems most reasonable to try to account for this bias by comparing schools against those with the most similar current average proficiency rates.

Figure 1. Statewide Language Arts Growth with Respect to Average Proficiency (Grade 7)
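For anyone who wants to try the "compare against schools with similar proficiency" idea on their own data, here’s a rough sketch: fit a straight trendline of growth percentile on proficiency rate, then look at how far each school sits above or below that line. The school names and numbers are made up purely for illustration, the function names are my own hypothetical ones, and a straight line is an assumption. This is not necessarily how the figures here were drawn.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def residuals_from_trend(schools):
    """Map each school name to (actual growth - growth predicted from proficiency)."""
    xs = [s["proficiency"] for s in schools]
    ys = [s["growth"] for s in schools]
    intercept, slope = fit_line(xs, ys)
    return {
        s["name"]: s["growth"] - (intercept + slope * s["proficiency"])
        for s in schools
    }


# Invented example data: proficiency rate (%) and median growth percentile.
schools = [
    {"name": "School A", "proficiency": 30.0, "growth": 40.0},
    {"name": "School B", "proficiency": 50.0, "growth": 50.0},
    {"name": "School C", "proficiency": 70.0, "growth": 45.0},
    {"name": "School D", "proficiency": 90.0, "growth": 65.0},
]

for name, gap in residuals_from_trend(schools).items():
    # Positive gap = above the trendline; negative gap = below it.
    print(f"{name}: {gap:+.1f}")
```

In this toy example, School C has fairly high proficiency but sits well below the trendline, which is the kind of pattern worth a second look in the real figures.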

Now, if we buy these growth percentiles as reasonable, then one of our conclusions might be that Robert Treat Academy is one of the worst schools in the state, if not the worst – at least in terms of its ability to contribute to test score gains. By contrast, Discovery Charter school totally rocks.

Other charters to be explored in greater depth below, like TEAM Academy in Newark, fall in the “somewhat better than average” category (marginally above the trendline), and frequently cited standouts like North Star Academy land somewhat higher (though still in the cloud, statewide).

So, let’s focus on Newark in particular.

Figure 2. Newark Language Arts Growth with Respect to Average Proficiency (Grade 5)

Figure 3. Newark Language Arts Growth with Respect to Average Proficiency (Grade 6)

Figure 4. Newark Language Arts Growth with Respect to Average Proficiency (Grade 7)

Figure 5. Newark Language Arts Growth with Respect to Average Proficiency (Grade 8)

In my earlier posts, it was typically schools like Treat, North Star, Gray and Greater Newark that rose to the top, with TEAM posting more average results, but all of these results heavily mediated by demographic differences, with Treat and North Star hardly resembling district schools at all, and TEAM coming closer but still holding a demographic edge over district schools.

In these updated graphs, using the growth measures, one must begin to question the Robert Treat miracle especially. Yeah… they start high… and stay high on proficiency… but they appear to contribute little to achievement gains. Again, that is, if these measures really have any value at all. Gray is also hardly a standout… or actually it is a standout… but not in a good way.

TEAM continues to post solidly above average, but still in the non-superman (mere mortal) mix of district & charter schooling in Newark.

Remember, school gains are a function of all that goes on in the lives of kids assigned to each school, including in school and out of school stuff, including peer effect.

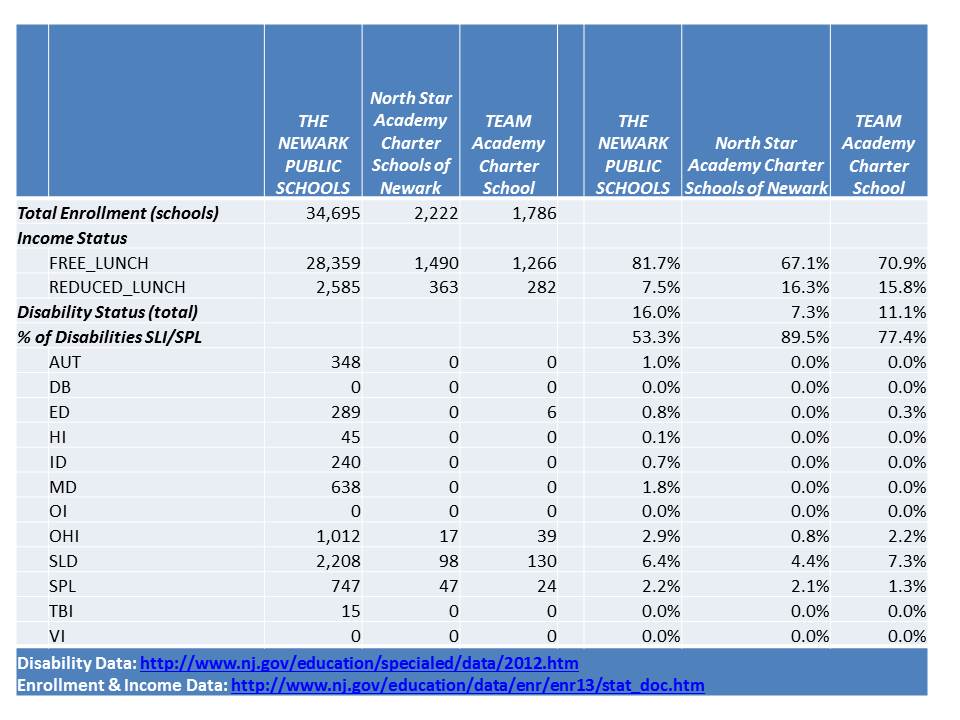

Let’s focus in on the contrast between TEAM and North Star for a bit. These are the two big ones in Newark now, and they’ve evolved over time toward providing K-12 programs. Here’s the most recent demographic data comparing income status and special education populations by classification, for NPS, TEAM and North Star.

Figure 6. Demographic data for NPS, TEAM and North Star (2012-13 enrollments & 2011-12 special education)

North Star especially continues to serve far fewer of the lowest income children. And, North Star continues to serve very few children with disabilities, and next to none with more severe disabilities. Similarly, in TEAM, most children with disabilities have only mild specific learning disabilities or speech/language impairment.

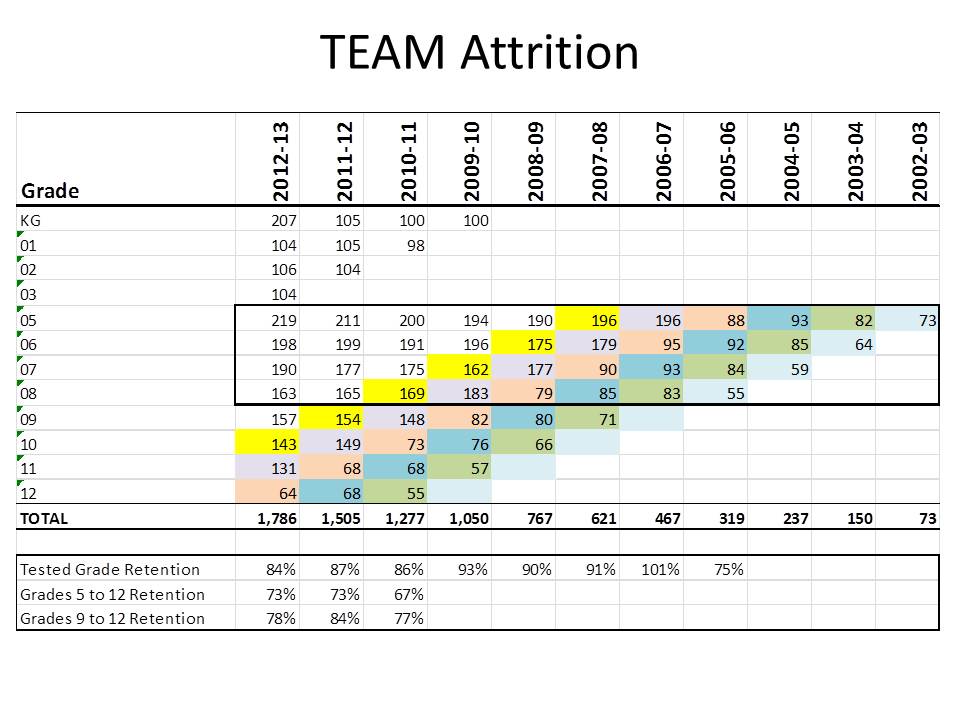

But this next piece remains the most interesting to me. I’ve not revisited attrition rates for some time, and now these schools are bigger and have a longer track record, so it’s hard to argue that the patterns we see over several cohorts, including the most recent several years, for schools serving over 1,000 children, are anomalies. At this point, these data are becoming sufficiently stable and predictable to represent patterns of practice.

The next two tables map the changes in cohort size over time for cohorts of students attending TEAM and North Star. The major caveat of these tables is that if there are 80 5th graders one year and 80 6th graders the next, we don’t necessarily know that they are the same 80 kids. 5 may have left and been replaced by 5 new students. But, taking on new students does pose some “risk” in terms of expected test scores, so some charters engage in less “backfilling” than others, and fewer backfill enrollments in upper grades.
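To be clear about the arithmetic behind tables like these, here’s a minimal sketch of a cohort retention ratio computed from grade-by-year headcounts. The enrollment numbers are invented and the function name is hypothetical. As noted above, the ratio only compares headcounts, so it can’t distinguish kids who stayed from kids who were backfilled.

```python
def cohort_retention(enrollment, start_year, start_grade, end_grade):
    """Headcount at end_grade divided by headcount at start_grade, same cohort.

    `enrollment` maps (fall_year, grade) -> headcount. A cohort moves up one
    grade per year, so we follow the diagonal of the grade-by-year table.
    This compares headcounts only; it does not track individual students.
    """
    end_year = start_year + (end_grade - start_grade)
    return enrollment[(end_year, end_grade)] / enrollment[(start_year, start_grade)]


# Invented headcounts for a single cohort that starts 5th grade in fall 2005.
enrollment = {
    (2005, 5): 100, (2006, 6): 95, (2007, 7): 90, (2008, 8): 88,
    (2009, 9): 80, (2010, 10): 75, (2011, 11): 72, (2012, 12): 70,
}

print(cohort_retention(enrollment, 2005, 5, 8))    # 88 / 100 = 0.88
print(cohort_retention(enrollment, 2005, 5, 12))   # 70 / 100 = 0.7
print(cohort_retention(enrollment, 2009, 9, 12))   # 70 / 80  = 0.875
```

Attrition is just one minus the retention ratio.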

Since tests that influence SGPs are given in grades 5 – 8 (well, 3 – 8, but 5-8 is most relevant here), the extent to which kids drop off between grade 5 & 6, 6 & 7, and who drops off between those grades can, of course, affect the median measured gain (if kids who were more likely to show low gains leave, and thus aren’t around for the next year of testing, and those more likely to show high gains stay, then median gains will shift upward from what they might have otherwise been).
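A toy illustration of that mechanism, under the loud assumption that the leavers are exactly the kids who would have posted the lowest growth percentiles (something we can’t verify without student level data). The ten student values are invented and the function name is hypothetical.

```python
from statistics import median


def median_if_lowest_leave(expected_sgps, n_leavers):
    """Median growth percentile among stayers, ASSUMING the n_leavers students
    with the lowest expected growth percentiles are the ones who leave."""
    stayers = sorted(expected_sgps)[n_leavers:]
    return median(stayers)


# Invented growth percentiles ten students would post if they all stayed.
expected_sgps = [20, 30, 35, 45, 50, 55, 60, 70, 75, 80]

print(median(expected_sgps))                     # everyone tested: 52.5
print(median_if_lowest_leave(expected_sgps, 2))  # two lowest gone: 57.5
```

Nothing about instruction changed between those two lines. The school median moved five points simply because of who was still around to be tested.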

First, let’s look at TEAM.

Figure 7. TEAM Cohort Attrition Rates

Among tested grade ranges, with the exception of the most recent cohort, TEAM retains somewhere from the upper 80s to low 90s percent of its 5th graders through 8th grade (with potential replacement involved). Any annual attrition may bias growth percentiles, as noted above, if potentially lower gain students are more likely to leave. But without student level data, that’s a bit hard to tell.

TEAM’s grade 5 to 12 attrition is greater, dropping over 25% of kids per cohort. From 9 to 12, about 20% disappear.

But these figures are far more striking for North Star.

Figure 8. North Star Cohort Attrition Rates

Within tested grades, North Star matches TEAM in the most recent year, but for previous years, North Star loses marginally more kids from grades 5 to 8, with retention hanging mainly in the lower to mid 80s. So, if there is bias in who is leaving (if weaker, slower-gain students are more likely to leave), that may partially explain North Star’s greater gains seen above. Further, as weaker students leave, the peer group composition changes, also having potential positive effects on growth for those who remain.

Now… the other portion of attrition here doesn’t presently affect the growth percentile scores, but it is indeed striking, and raises serious policy concerns about the larger role of a school like North Star in the Newark community.

From grade 5 to 12, North Star persistently finishes less than half the number who started! As noted above, this is no anomaly at this point. It’s a pattern and a persistent one, over the four cohorts that have gone this far. I may choose to track this back further, but going back further brings us to smaller starting cohorts, increasing volatility.

Even from Grade 9 to 12, only about 65% persist.

Parsing these data a step further, let’s look specifically at attrition for Black boys at North Star.

Figure 9. Cohort Decline for Black Boys

I’ve flipped the direction of the years here…to be moving forward in the logical left to right direction. So, reorient yourself! For grade 5 to 12, North Star had only one cohort that approached retaining 50% (well… actually, 42%). In other years, grade 5 to 12 attrition was around 75% or greater for black boys. Grade 9 to 12 attrition was about 40% in the most recent two years, and much more than that previously for black boys. Of the 50 or so annual entrants at 5th grade to North Star prior to recent doubling, only a handful would ever make it to 12th grade.

The concern here, of course, is what is happening to the rest of those students who leave, and what is the effect of this churn on surrounding schools – perhaps both charter and district schools who are absorbing these students who are so rapidly shed. [to the extent, if any, that exceptional middle school preparation at a school like North Star leads students to scholarship opportunities at elite private schools, or acceptance to highly selective magnet schools, this attrition may be less ugly than it looks]

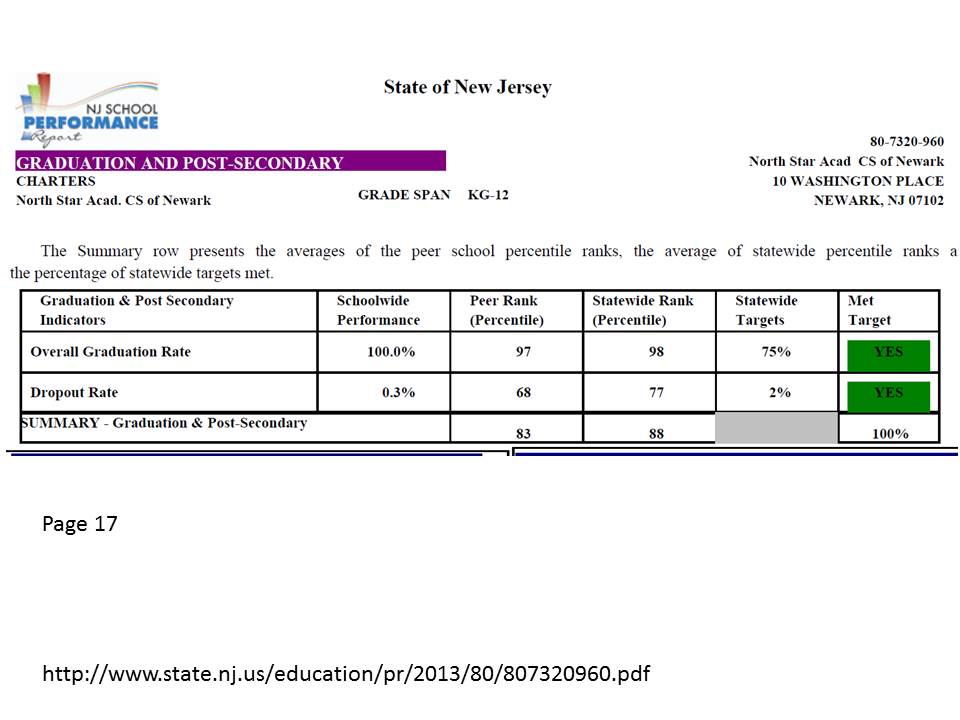

Of course, this does lead one to question how North Star is able to report to the state a 100% graduation rate and a .3% dropout rate? Seems a bit suspect, eh?

Figure 10. What North Star reports as its dropout and graduation rates

Notably absent HERE, as well, is any mention of the fact that only a handful of kids actually stick around through grade 12?

So, is this data driven leadership, or little more than drive by data? Seems that they’ve missed a really, really critical issue. [if you lose more than half of your kids between grades 5 and 12, and even more than that for one of your target populations – black boys – that kind of diminishes the value of the outcomes created for the handful who stay, doesn’t it? Not for the stayers individually, but certainly for the school as a whole.]

A few closing thoughts…

As I’ve mentioned on many previous occasions, it is issues such as this as well as the demographic effects of charters, magnets and other schools that induce student sorting in the district, that must be carefully tracked and appropriately managed. Neither an actual public school, nor a school chartered to serve the public interest (with public resources) should be shielded from scrutiny.

If we are really serious about promoting a system of great schools (as opposed to a school system) which productively integrates charter and district schools, then we can no longer sit by and permit behavior by some that is, more likely than not, damaging to others (in that same system). That’s simply not how a “system of great schools” works, or how any well-functioning system, biological, ecological, economic, social or otherwise, works.

But sadly, those who most vociferously favor charter expansion as a key element of supposed “portfolio” models of schooling appear entirely uninterested in mitigating parasitic activity (that which achieves the parasite’s goal at the expense of the host, i.e. parasitic rather than symbiotic). Rather, they fallaciously argue that an organism consisting entirely of potential parasites is, itself, the optimal form. That the good host is one that relinquishes? (WTF?) As if somehow, the damaging effects of skimming and selective attrition might be lessened or cease to exist if the entirety of cities such as Newark were served only by charter schools. Such an assertion is not merely suspect, it’s absurd.

So then, imagine if you will, an entire district of North Stars? Or an entire district of those who strive to achieve the same public accolades as North Star? That would sure work well from a public policy standpoint. They’d be in constant bitter battle over who could get by with the fewest of the lowest income kids. Anyone who couldn’t “cut it” in 5th or 6th grade, along with each and every child with a disability other than speech impairment, would be dumped out on the streets of Newark. Even after the rather significant front end sorting, we’d be looking at 45% citywide graduation rates – actually – likely much lower than that because some of the aspiring North Stars would have to take students even less likely to complete under their preferred model.

Yes, there would probably eventually be some “market segmentation” (a hearty mix of segregation, tracking & warehousing of kids with disabilities) – special schools for the kids brushed off to begin with – and special schools for those shed later on. But, under current accountability policies, those “special schools” would be closed and reconstituted every few years or so since they won’t be able to post the requisite gains. Sounds like one hell of a “system of great schools,” doesn’t it?

To the extent we avoid changing the incentive structure & accountability system, the tendency to act parasitically rather than symbiotically will dominate. The current system is driven by the need to post good numbers – good “reported” numbers. NJ has created a reporting system that allows North Star to post a 100% grad rate and .3% dropout rate despite completing less than 50% of their 5th graders.

What do they get for this? Broad awards, accolades from NJDOE… & the opportunity to run their own graduate school to train teachers in their stellar methods (that result in nearly every black boy leaving before graduation).

A major problem here is that the incentive structure, the accountability measures, and the system as it stands all favor taking the parasitic path to results.

That said, in my view, it takes morally compromised leadership to rationalize taking this to the extent that North Star has. TEAM, for example, exists under the very same accountability structures. And while TEAM does its own share of skimming and shedding, it’s no North Star.

But I digress.

More to come – perhaps.