On a daily basis, I continue to be befuddled by the ignorant bluster, intellectual laziness and mathematical and financial ineptitude of those who most loudly opine on how to fix America’s supposedly dreadful public education system. Common examples that irk me include taking numbers out of context to make them seem shocking, like this Newark example (some additional context), or the repeated misrepresentation of per pupil spending in New York State.

And then there are those times when a loudmouthed pundit simply chooses to ignore reality altogether – and frames the problem as it exists only in their own cloistered world or their own head. That brings me to this tweet:

Philly’s district as financially distressed & low-performing as I’ve seen. Stop propping it up. Bring it to an end. http://t.co/6QttBnjwzl

— Andy Smarick (@smarick) June 17, 2013

Perhaps I’m misinterpreting, but it appears that Andy Smarick in this tweet is placing blame for the financial distress of Philadelphia schools squarely if not entirely on the city school district itself. In fact, he suggests that someone has been “propping up” the district. And because the district fails – as all “urban” districts do – it must be replaced by an assortment of private providers. See this post for more insights into Smarick’s “solution” to this “problem” that Philly Schools has clearly created on its own.

To callously assert that the problems faced by Philly schools are primarily if not entirely a function of local mismanagement – and that someone somewhere has actually been trying to “prop” the district up – displays a baffling degree of willful ignorance. Save for another day a discussion of the fact that over the past 10 years, the city has in fact adopted many of the strategies that Smarick himself endorses (privatized management, charter expansion, etc.).

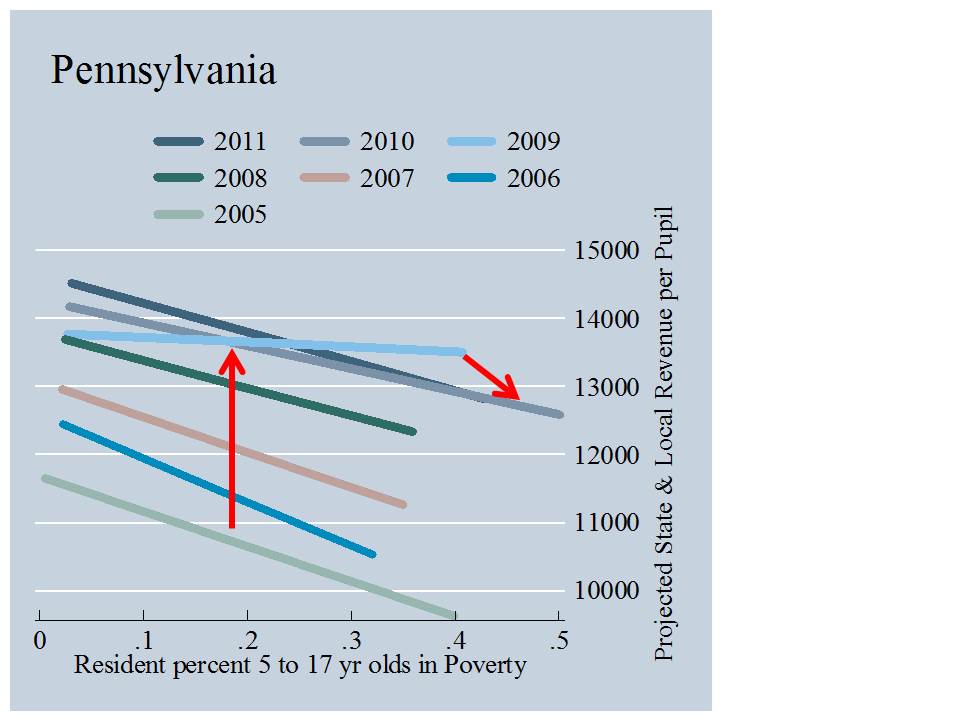

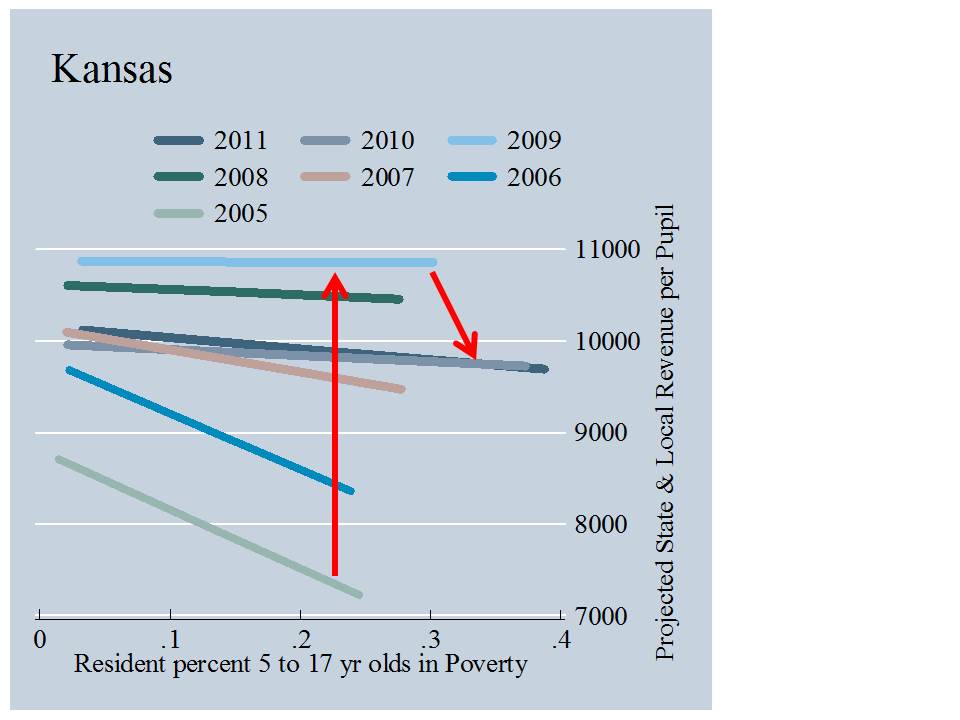

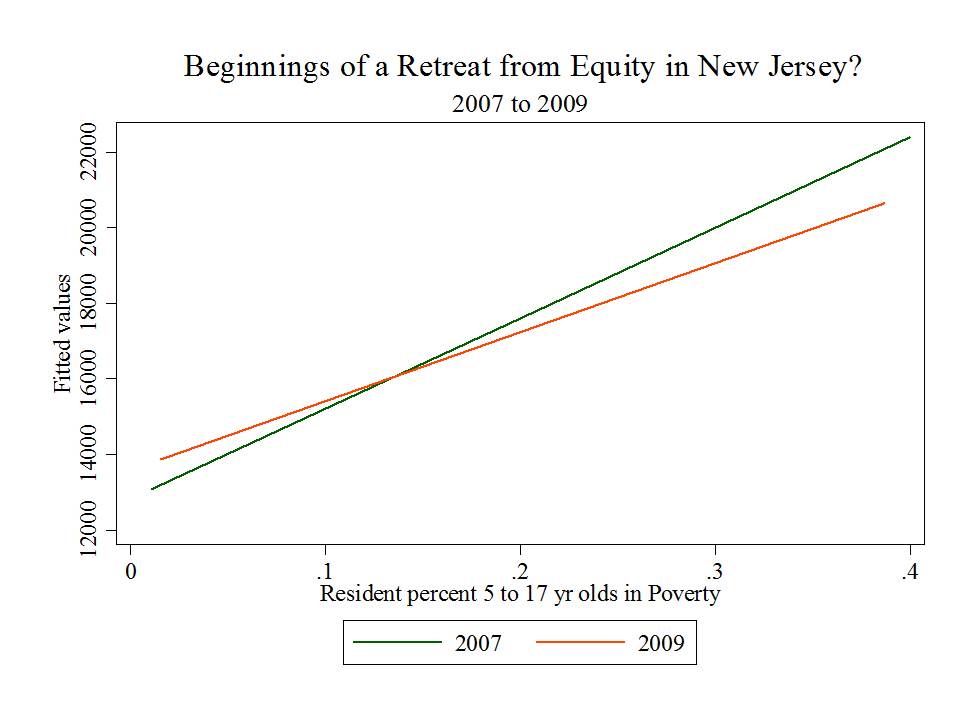

One might argue that to a significant extent, through the state’s dysfunctional and inequitable approach to providing financial support for local public districts, Pennsylvania has for some time (but for a brief period of temporary reforms) actually been trying to put an end to Philly schools. And it appears that they may be achieving their goals. To summarize:

- Pennsylvania has among the least equitable state school finance systems in the country, and Philly bears the brunt of that system.

- Pennsylvania’s school finance system is actually designed in ways that divert needed funding away from higher need districts like Philadelphia.

- And Pennsylvania’s school finance system has created numerous perverse incentives regarding charter school funding, also to Philly’s disadvantage. (see here also)

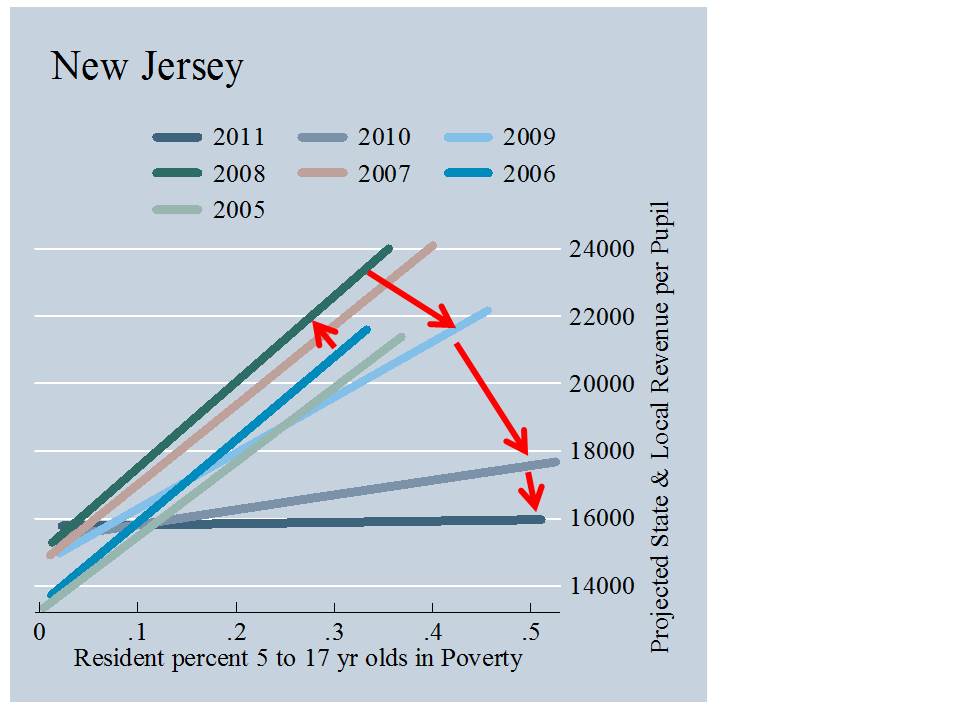

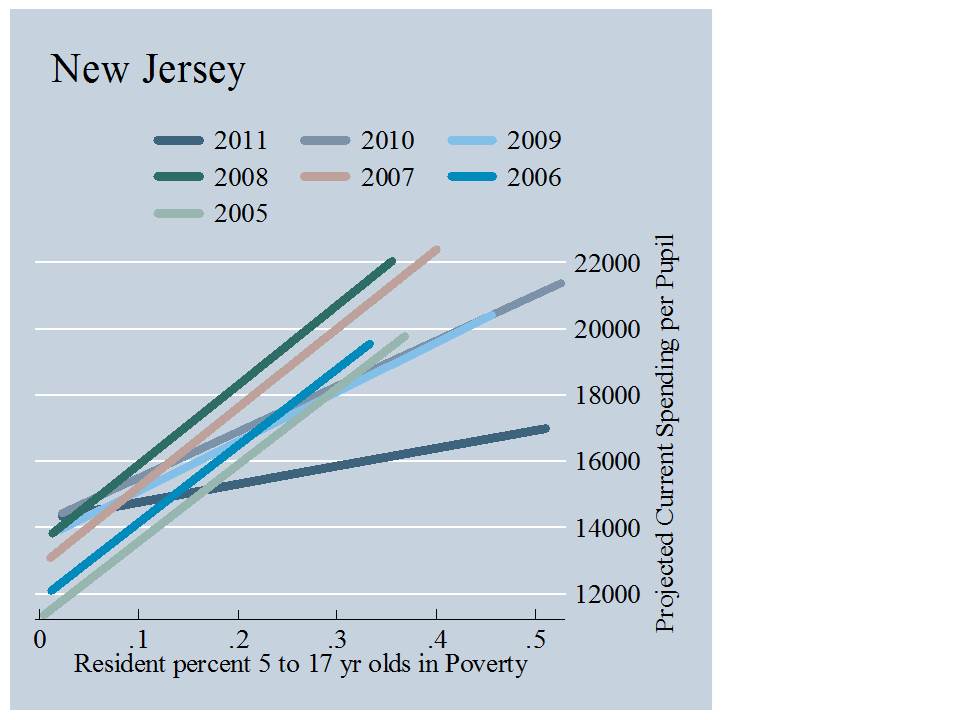

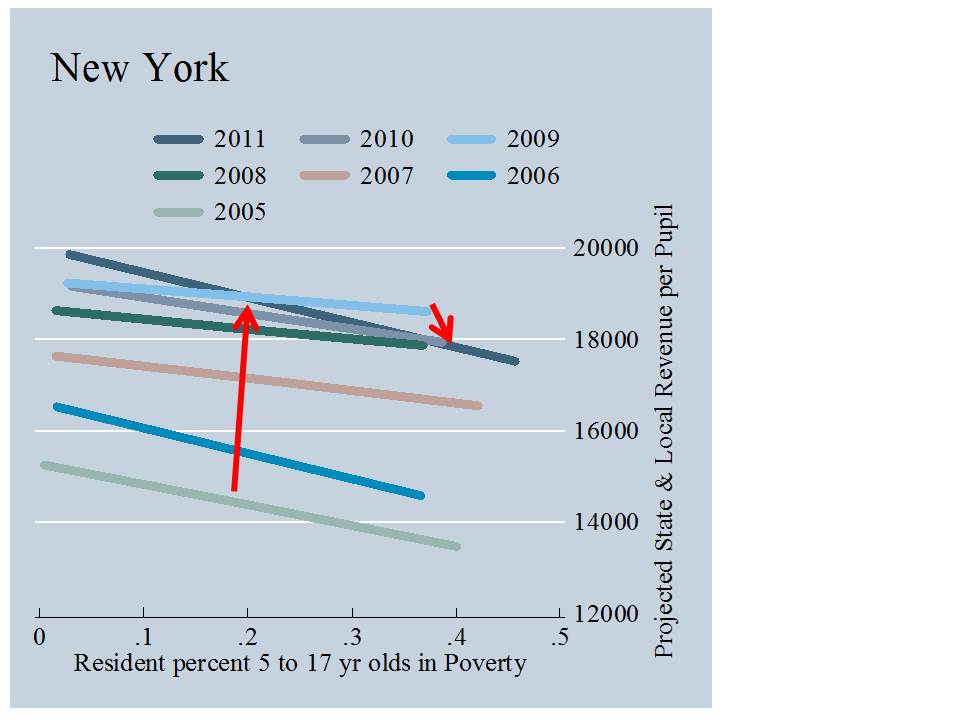

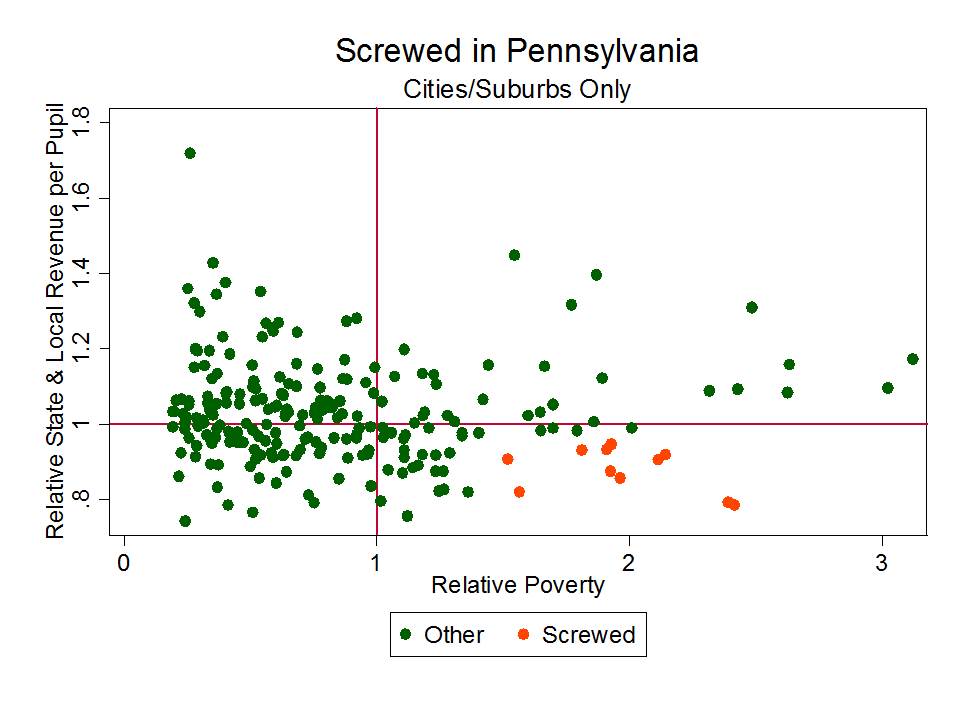

I would be remiss if I didn’t actually include data or a graph in this post, beyond the citations to sources above that include plenty. So here it is – the distribution of state and local revenues for districts in the Philly metro area from 2005 to 2011, with respect to child poverty.

A district with average state and local revenue for the metro area would fall on the 1.0 line. The sizes of the shapes represent the size of the districts in terms of enrollment. Circles are for 2005, triangles for 2007 and so on (see key). The vertical position of larger shapes is measured from their center. Notably, Philly hangs marginally above 80% of metro average funding. Yes… following the Rendell formula reforms Philly’s position started to improve slightly but has since fallen back, and never really made sufficient progress. Way up in that upper left hand corner is Lower Merion School District, perhaps the most affluent suburb of Philly. They’re doin’ just fine!

What we also notice here is that Philly’s indicator is, year after year, moving to the right in our picture. Some of this is a poverty measurement issue, but some of it is real (to be parsed more carefully at a later point). Philly school aged children are getting poorer. They were never compensated with sufficient additional resources to begin with and those resources are now in decline.
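For readers who want to see how a "relative to metro average" position like the one plotted here can be computed, below is a minimal sketch. It is not the code behind the graph. The `District` class, the `relative_revenue` function and the two example districts are hypothetical, the dollar figures are made-up placeholders rather than Pennsylvania data, and the choice to weight the metro average by enrollment is an assumption (a simple unweighted average would be an equally plausible reading).

```python
from dataclasses import dataclass


@dataclass
class District:
    """Hypothetical record for one district in one year."""
    name: str
    enrollment: int
    revenue_per_pupil: float  # state + local revenue per pupil, in dollars
    poverty_rate: float       # share of school-aged children in poverty


def relative_revenue(districts):
    """Return each district's revenue per pupil as a ratio to the metro average.

    Assumption: the metro average is enrollment-weighted, i.e. total
    revenue across all districts divided by total enrollment.
    A district funded exactly at the metro average gets a ratio of 1.0.
    """
    total_enrollment = sum(d.enrollment for d in districts)
    total_revenue = sum(d.revenue_per_pupil * d.enrollment for d in districts)
    metro_average = total_revenue / total_enrollment
    return {d.name: d.revenue_per_pupil / metro_average for d in districts}


# Made-up placeholder numbers, for illustration only.
metro = [
    District("District A", enrollment=1000, revenue_per_pupil=20000.0, poverty_rate=0.05),
    District("District B", enrollment=3000, revenue_per_pupil=10000.0, poverty_rate=0.35),
]

ratios = relative_revenue(metro)
for d in metro:
    print(f"{d.name}: poverty {d.poverty_rate:.0%}, relative revenue {ratios[d.name]:.2f}")
```

In this toy metro, the large high-poverty district lands below the 1.0 line and the small low-poverty district lands well above it, which is the pattern the graph shows for Philly and its more affluent suburbs.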

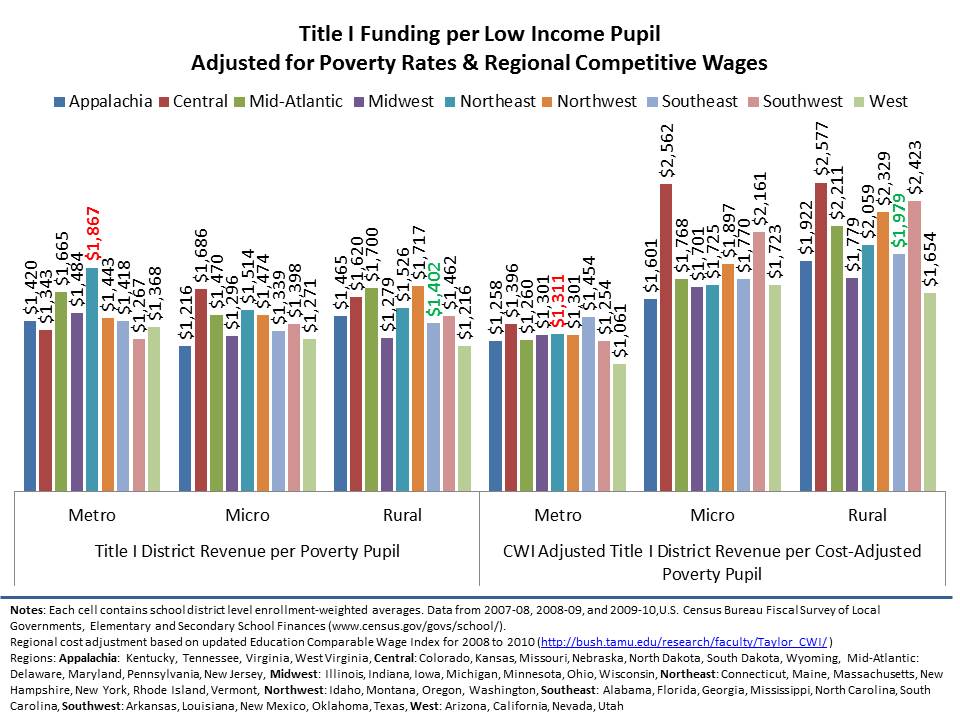

I’ve explained previously that cost pressures in education are primarily local/regional. Education is a labor intensive industry. Salaries must be competitive on the local/regional labor market to recruit and retain quality teachers. And for children to have access to higher education, they must be able to compete with peers in their region.

And within any region, children with greater needs and schools serving higher concentrations of children with greater needs require more resources – more resources to recruit and retain even comparable numbers of comparable teachers – and more resources to provide smaller class sizes and more individual attention.

Put simply – Philly needs far more than its surrounding districts but has, year after year, had far less.

More information on how and why money matters can be found here:

http://www.shankerinstitute.org/images/doesmoneymatter_final.pdf

As far back as I’ve been running the numbers with both national and state data sources, Philly has been among the most screwed urban public districts in the nation. Philly has never been “propped up.”

End the district? Because it’s clearly the right thing to do for these kids? Because we’ve propped them up year after year… and they just keep blowing it – acting inefficiently – in the interest of adults not kids – as all “urban” districts do? Are you freakin’ kidding me? Wake the hell up. Look at some damned data and evaluate the problem a little more carefully before you make such absurd declarations.

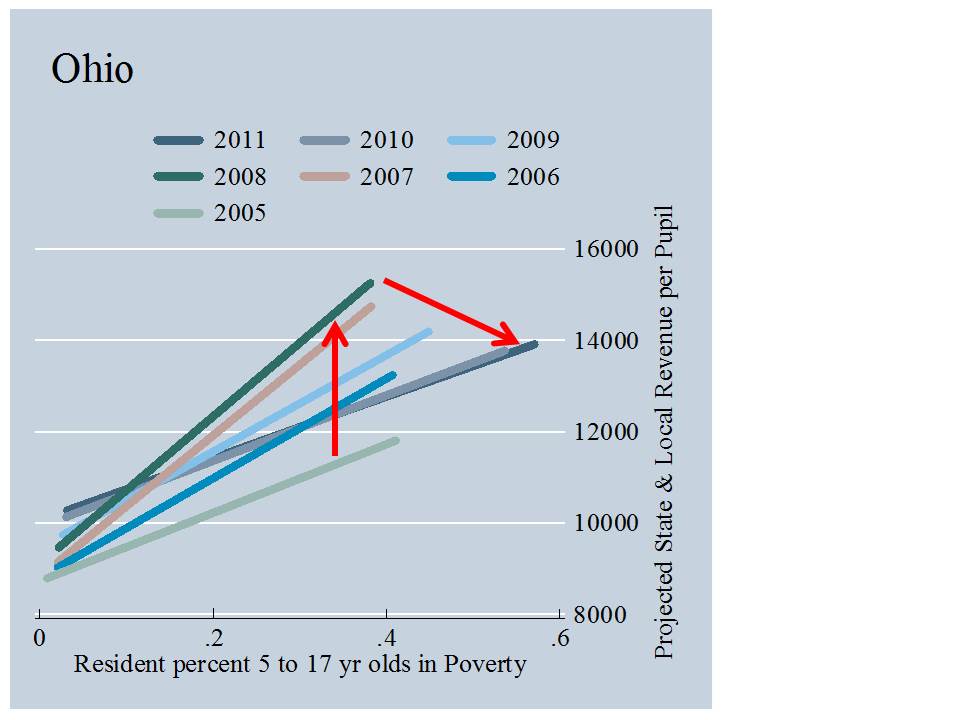

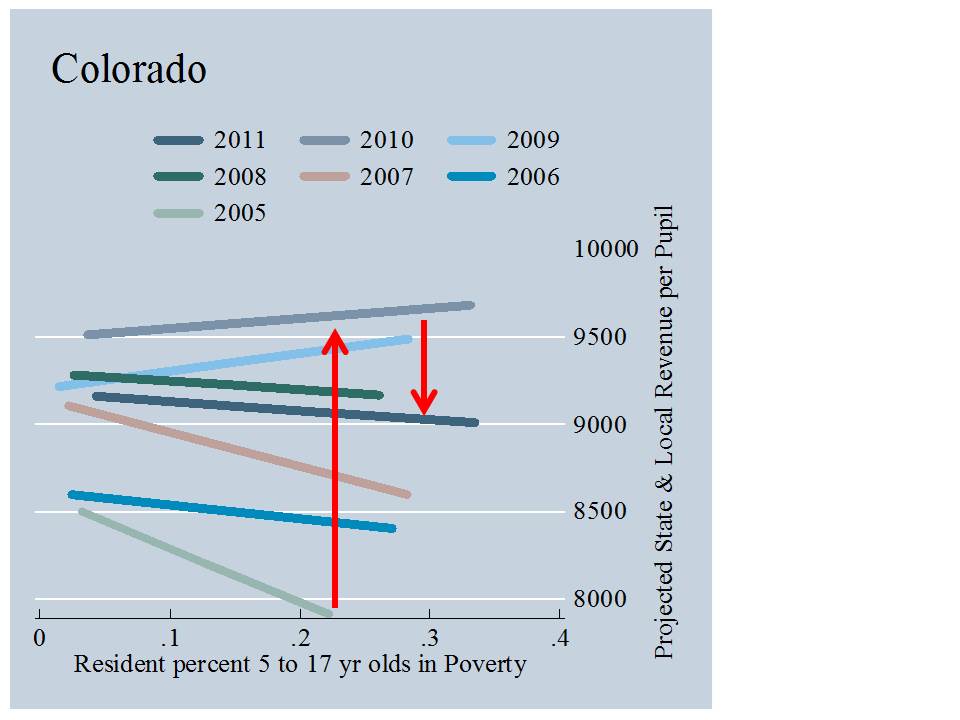

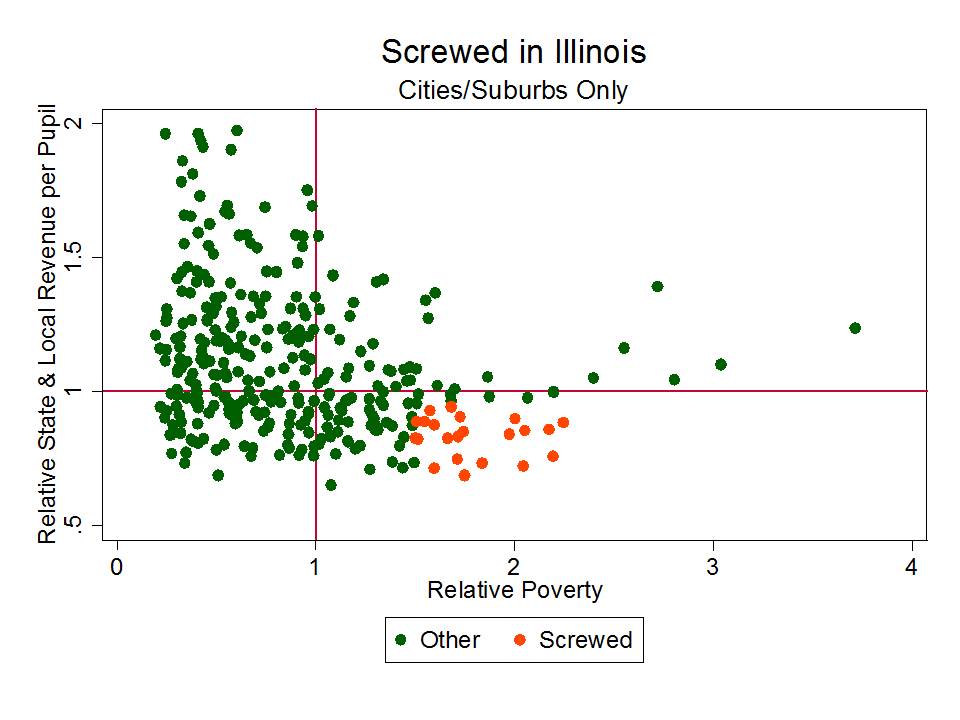

For those who wish to levy similar accusations against Chicago….

Those BIG shapes there… which, like Philly, fall below the “average” line and have much higher child poverty than other districts in their metro? Yeah… that’s Chicago. As I’ve noted in numerous previous posts on this blog (just search for “Chicago” or “Illinois”), Chicago and Philly are consistently among the most screwed major urban districts – operating in states with the least equitable state school finance systems. The links above to reports slamming PA (first two bullets) provide similar tales of inequity in Illinois.

UPDATE:

Clearly, Andy Smarick cares little that he lacks even the most basic understanding of the financial plight of Philadelphia public schools. The tweets keep coming… and remain as wrong as ever… simply … factually… wrong! There is just no excuse for this kind of BS.

As for the presumptive solution here… that the “failed urban” district should/can be replaced with a portfolio of charter operators that will necessarily be more effective, consider again that Philly has been dabbling for over a decade with resource free attempts at portfolio-izing the district. Consider also that even where charters – at small market share (http://shankerblog.org/?p=8609) – do appear relatively effective, there remain substantive differences in their student populations, and in many cases substantive differences in their access to resources.

There are no miracles, regardless of the type of provider. Here’s one particularly relevant post on the non-reformy lessons of KIPP: https://schoolfinance101.wordpress.com/2013/03/01/the-non-reformy-lessons-of-kipp/ & here’s a more cynical post regarding NJ charters, and Uncommon schools in particular:

https://schoolfinance101.wordpress.com/2013/07/14/newark-charter-update-a-few-new-graphs-musings/

In other words, if the urban school district has proven, with unlimited resources, that it cannot succeed, and if charters have largely proven a break even endeavor in their urban contexts, then they too are equal failures. Only in Smarick’s wild imagination is the solution so simple and clear, yet so potentially dangerous if blindly accepted as public policy.

This level of fact-free schlock and feeble minded policy advocacy must stop. Civil discourse? Sorry. I just can’t. This stuff is just too dumb for words! It’s irresponsible, ill-informed, reckless and more.

Philly’s district Exhibit A for ending urban district. Outrageously low performing, budget crisis, demanding more $, no real reform @tussotf

— Andy Smarick (@smarick) August 11, 2013

@stopthefreezeNJ The failed urban district has done wrong by millions of kids. Those boys and girls desperately need something better.

— Andy Smarick (@smarick) August 11, 2013

@kombiz No entity can fix Philly district or any urban district for that matter. Urban district is broken, can’t be fixed, must be replaced.

— Andy Smarick (@smarick) August 11, 2013

@kreed328 @stopthefreezeNJ Urban districts have been failing low-income kids for half a century. No more. Replace the failed urban district.

— Andy Smarick (@smarick) August 11, 2013

@kombiz Philly’s district = terrible for decades, families left, as a result it’s bankrupt. Gotten huge state funding for yrs to prop it up.

— Andy Smarick (@smarick) August 11, 2013

@kombiz I know Philly gets among (if not THE) highest levels of funding from the state. I also know it’s been losing thousands of students.

— Andy Smarick (@smarick) August 11, 2013

@kombiz And I know the state just bailed it out again. And now the district is asking for more money. Again.

— Andy Smarick (@smarick) August 11, 2013

@kombiz You honestly believe if Philly’s district got all the money it wanted, it would become high-performing? Hasn’t worked anywhere in US

— Andy Smarick (@smarick) August 11, 2013