This is an issue I’ve written much about over time – the persistent failure of New York State to fund its highest need public schools, and more recently, the audacity of state officials to place blame for their own egregious failures on the teachers and administrators in the state’s least well-funded school districts. Here’s a recap of previous posts:

- On how New York State crafted a low-ball estimate of what districts needed to achieve adequate outcomes and then still completely failed to fund it.

- On how New York State maintains one of the least equitable state school finance systems in the nation.

- On how New York State’s systemic, persistent underfunding of high need districts has led to significant increases in the numbers of children attending school with excessively large class sizes.

- On how New York State officials crafted a completely bogus, racially and economically disparate school classification scheme in order to justify intervening in the very schools they have most deprived over time.

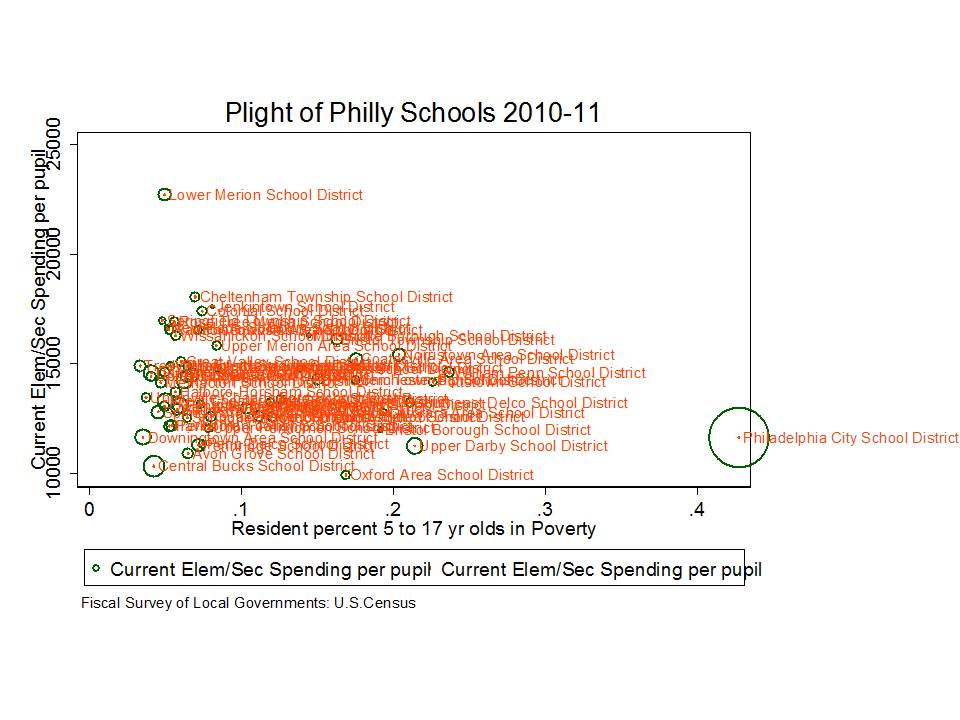

Much like my recent posts regarding the completely misinformed bluster of pundits like Andy Smarick regarding Philadelphia, when I read stuff like this from Joe Williams of Dems of Ed Reform – I get a little irked!

This column from Joe Williams of DFER goes on the attack against those who would criticize NY Governor Cuomo’s call to impose the “death penalty” on failing schools. Williams asserts that any opposition to Cuomo’s statements can be rooted in nothing other than union/teacher self-interests, and that there is clearly no possible case, on behalf of parents and/or children, for opposing Cuomo’s death penalty option. It’s just the right thing, and the only thing, to do on behalf of suffering parents and children.

In New York, to cite yet another example, the state teachers union wasn’t happy that Gov. Andrew Cuomo had the audacity to suggest that the public – including the state Government – shouldn’t tolerate schools which persistently fail to educate children. The union’s flacks quickly seized on Cuomo’s descriptive use of the term “Death Penalty” for failing schools. We can only assume they we’re pretending to be outraged on behalf of homicidal maniacs on Death Row or something clever like that.

Thankfully, Cuomo wasn’t distracted by the manufactured outrage at NYSUT headquarters. Yesterday, he stuck to his guns, telling the Buffalo News that he was going to stand with parents, students, teachers, and taxpayers in fighting often-decades-long failure. Amen.

So, let’s take a look at some actual data on how well Governor Cuomo has been looking out for the interests of those disadvantaged children and families trapped in low performing schools and districts around New York State.

To review my previous posts, New York State has a funding formula that bases the amount of funding each district theoretically needs in order to achieve desired outcomes on the average spending of districts that do achieve those outcomes… and then attaches weights to account for additional student needs and regional costs. After setting this target funding figure, the state determines the amount that should be paid for with local tax revenue sources and the amount that should be paid with state aid.

The state then sets a state aid target for each district, meant to help that district reach its adequate spending target.
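For readers who want the two targets spelled out, here is a minimal sketch of the arithmetic described above. It is not the state's actual formula: the multiplicative weighting, the field names, and every number in the example district are assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class District:
    # All fields are hypothetical, per-pupil dollar figures unless noted.
    name: str
    need_index: float        # assumed pupil-need weight (1.0 = average need)
    regional_cost: float     # assumed regional cost index (1.0 = average cost)
    local_share: float       # amount the state expects from local taxes
    actual_state_aid: float  # state aid actually paid
    actual_spending: float   # what the district actually spends


def spending_target(base: float, d: District) -> float:
    """Adequate spending target: base cost scaled by need and regional cost."""
    return base * d.need_index * d.regional_cost


def state_aid_target(base: float, d: District) -> float:
    """State aid target: the part of the spending target not covered locally."""
    return max(spending_target(base, d) - d.local_share, 0.0)


def gaps(base: float, d: District) -> tuple[float, float]:
    """Return (state aid gap, spending gap); positive means underfunded."""
    aid_gap = state_aid_target(base, d) - d.actual_state_aid
    spend_gap = spending_target(base, d) - d.actual_spending
    return aid_gap, spend_gap


# Made-up high need district; the base is the assumed average spending
# of districts that do achieve the desired outcomes.
BASE = 10_000.0
example = District("Hypothetical City", 1.5, 1.0, 3_000.0, 8_000.0, 11_500.0)
aid_gap, spend_gap = gaps(BASE, example)
print(f"state aid gap: ${aid_gap:,.0f}, spending gap: ${spend_gap:,.0f}")
```

The point of separating the two functions is that a district can face a state aid gap and still have no spending gap, if it has the local capacity to make up the difference.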

I’ll set aside entirely the really big and important question of whether these targets set by the state are actually adequate.

So, we’ve got two targets here and as I’ve shown previously, the state under the leadership of Governor Cuomo has missed both targets by a long shot – and has especially missed those targets for districts serving the children with the greatest needs. Since I’ve beaten this issue to death in several previous posts, I’ll provide only a short review here.

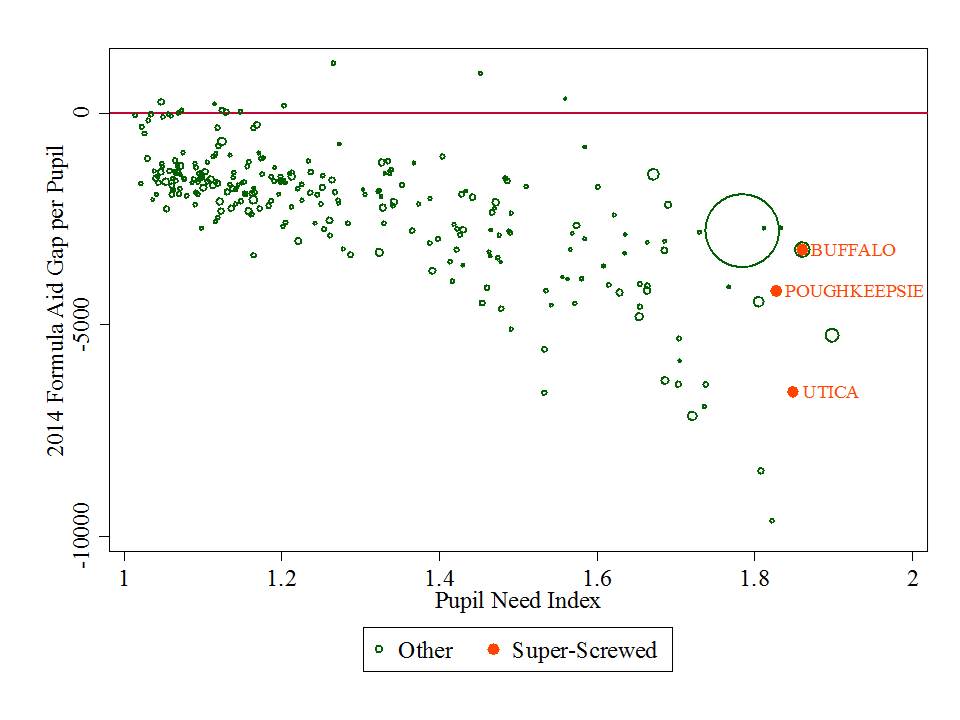

This first figure shows the size of the average STATE AID TARGET GAPS – or amount per pupil that the good Gov’ Cuomo has deprived these children of – for the 2013-14 school year. Notably, the state aid shortfalls – the amount by which the state underfunds its own formula – grow bigger and bigger as the state’s own pupil need index goes higher and higher. The huge bowling ball here is New York City. I’ve noted a few standouts from past posts, including Utica and Poughkeepsie, and I’ve included Buffalo here because it seemed to have been the target of the death penalty comments.

Figure 1.

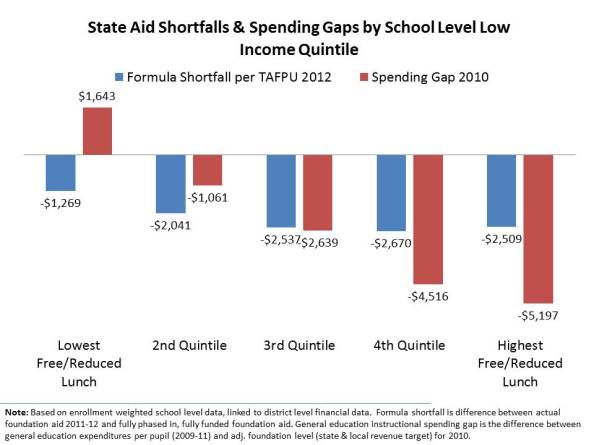

This second figure shows the average gaps of both types described above, by the average shares of children qualified for free or reduced-price lunch in school districts facing those gaps. Notably, the lowest poverty districts do spend, on average, more than they need to achieve adequate outcomes, even though they are not receiving their full state aid allotment. They simply have the local capacity to offset these losses – a capacity that higher poverty districts don’t have – and under the Governor’s tax limit policies – couldn’t even use if they did!

Figure 2.

Yeah… that’s right, the good Gov’ who is clearly the only one trying to do right by kids and parents here (according to the bloviating Williams) a) has deprived districts in some cases of over $6,000 per pupil in state aid they are supposed to get, and b) has imposed local tax limits that prohibit those districts from even partially closing the gap the state – the Governor – has created for them.

But hey… it’s all for the kids, right? At least he’s not a union lackey just lookin’ out for himself.

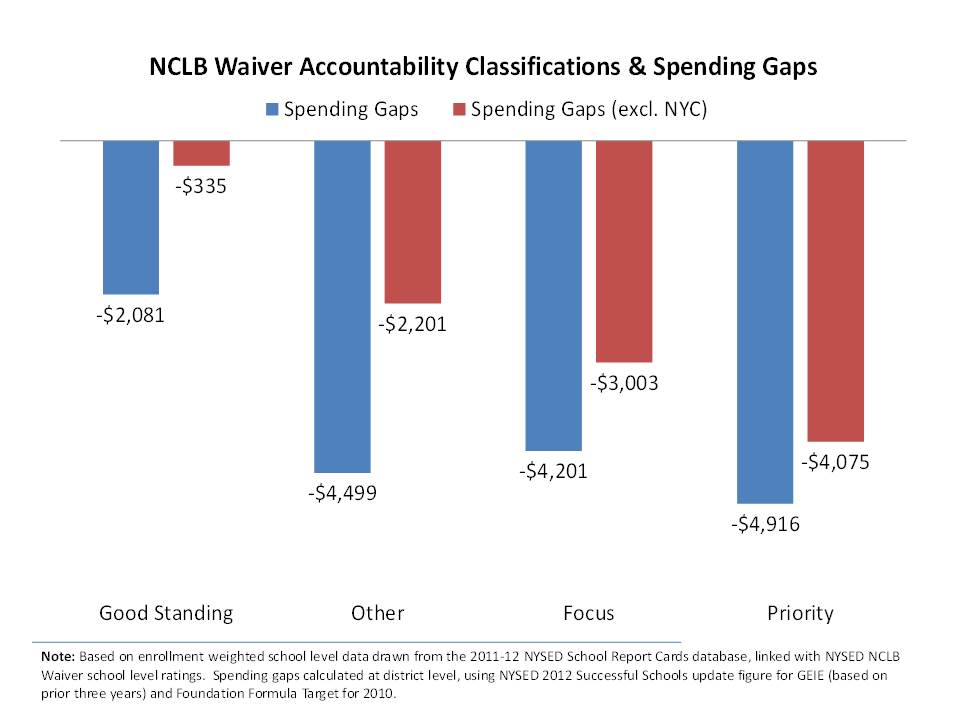

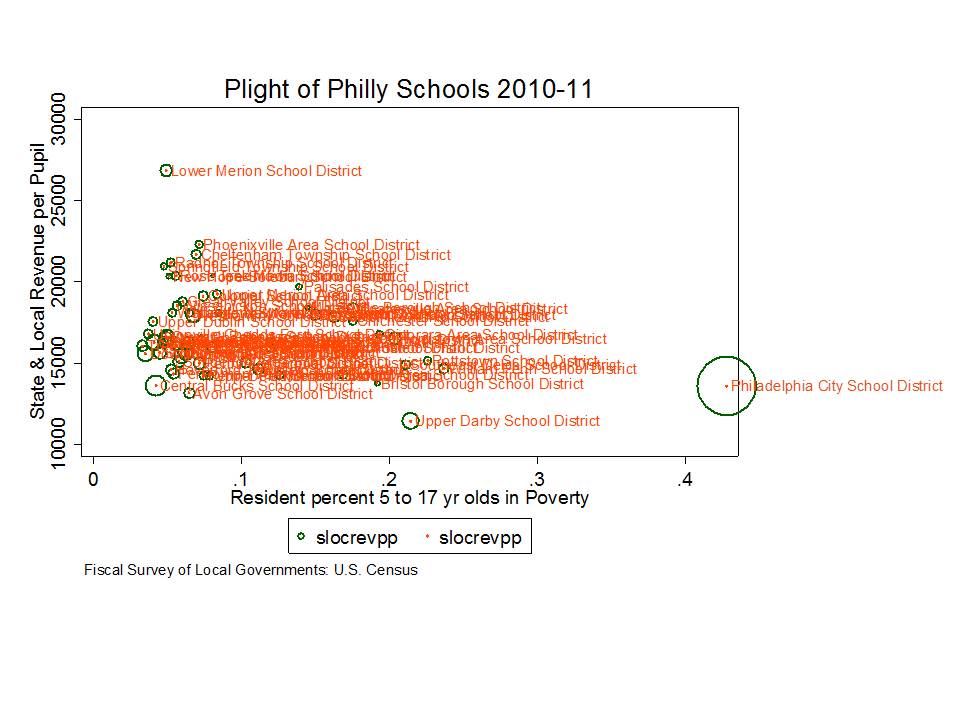

Let’s hit a few more figures here, linking the Governor’s death penalty claims with the funding shortfalls he persistently endorses. This graph shows the average spending gaps of districts with schools falling in each of the state’s accountability classifications – where presumably, those Priority Schools are the ones on death row.

Figure 3.

Like other states with approved NCLB waivers, New York has adopted a modified performance classification scheme to identify those schools and districts subject to the most immediate interventions.

Using 2010-11 school year results, NYSED will identify as Priority Schools the lowest achieving district and public charter schools in the state based on combined ELA and math assessment results or graduation rate for the “all students” group, if these schools are not demonstrating progress in improving student results. The Department will identify any district with at least one Priority School as a Focus District. If a district is among those with the lowest achieving subgroups in ELA and mathematics combined or for graduation rate and is not showing improvement, the district will also be identified as a Focus District. These districts in turn will be required to identify, at a minimum, a specified number of schools as Focus Schools.[1]

Under this model, the state assumes no blame for a district’s or school’s “failure” to achieve measured outcome goals, but grants itself additional authority to impose significant structural, programmatic and staffing changes. By design of this system, the fault lies with district and school management and operations and the quality of teachers delivering the curriculum. Schools identified as priority schools and districts identified as focus districts are unlikely to receive substantive additional financial resources from the state but will face additional accountability and potential restructuring requirements.

Though unlikely to be a successful strategy with the state as arbiter, districts so severely underfunded by the state and serving high need student populations should push back against the state on the following basis:

Districts with schools that have been preliminarily identified as Priority Schools, as well as preliminarily identified charter schools, that believe that there are extenuating or extraordinary circumstances that should cause the school to not be so identified may petition the Commissioner to have a school removed from Priority status. These petitions will be due two weeks from the date of notification that a school has been preliminarily identified as a Priority School. (p. 6) [2]

That is, it might be a logical strategy to use the state’s own dramatic underfunding of the state’s own estimate of adequate funding as basis for arguing extenuating circumstances. Until the state at the very least meets its own minimum funding obligation, the state should have little authority to force additional requirements or structural changes on these districts. The state must accept at least partial blame for current conditions, if not the lion’s share.

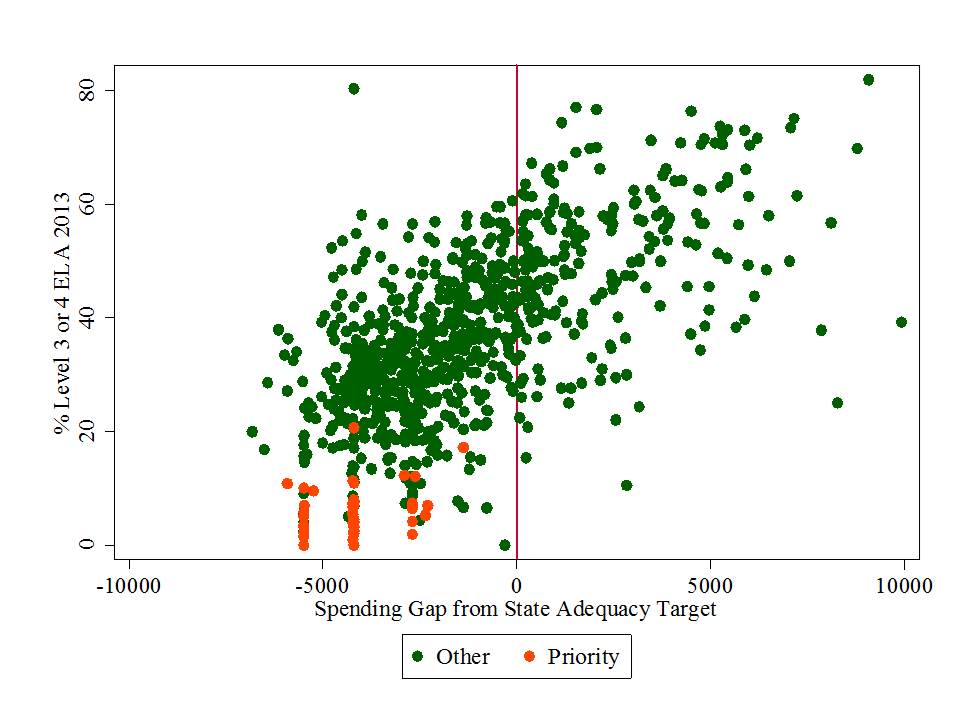

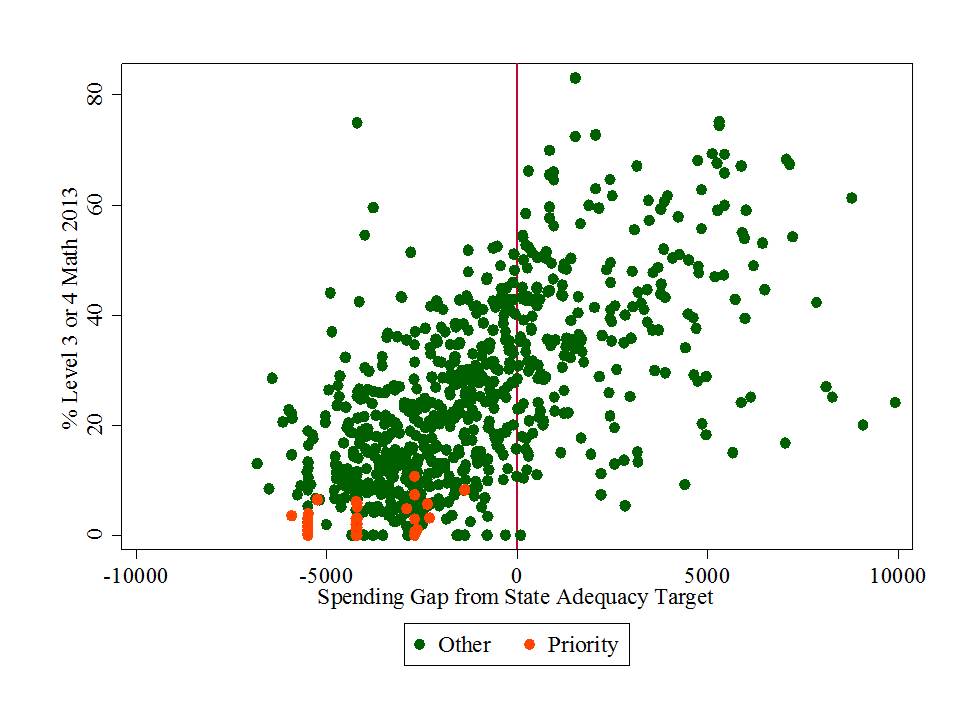

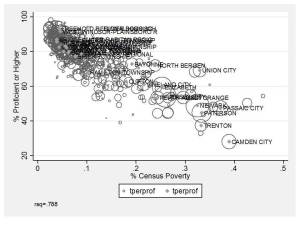

Okay… just a few more to reinforce my point here. These next few graphs compare school level 2013 8th grade outcomes with district level spending gaps – leaving out New York City. Priority schools are indicated in orange. Indeed, Priority schools are very low performing, especially on the new state assessments. But notably, all of the priority schools in these figures are also in districts that spend $2,000 to $6,000 less per pupil – as a direct function of state aid deprivation – than the state itself estimates that they need in order to achieve desired outcomes.

For the stat geeks, the r-squared for each of these is around .50 – that is, spending gaps alone explain 50% of the variance in the outcomes. Below, I provide the multiple regression output.

Figure 4.

Figure 5.
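For anyone who wants to see what an r-squared like the one quoted above actually measures, here is a minimal sketch in plain Python. With a single predictor, r-squared is just the squared correlation between the spending gap and the outcome. The function name and the tiny data set are made up for illustration; they are not the data behind the figures.

```python
def r_squared(xs: list[float], ys: list[float]) -> float:
    """Share of variance in ys explained by a straight-line fit on xs.

    For one predictor this equals the squared Pearson correlation.
    """
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    if sxx == 0 or syy == 0:
        raise ValueError("xs and ys must each vary")
    return (sxy * sxy) / (sxx * syy)


# Hypothetical schools: spending gap in $1,000s per pupil vs. a proficiency rate.
toy_gaps = [0.5, 1.0, 2.0, 3.0, 4.5, 6.0]
toy_rates = [48.0, 35.0, 40.0, 22.0, 27.0, 12.0]
print(round(r_squared(toy_gaps, toy_rates), 2))
```

An r-squared of .50 would mean that half of the school-to-school variation in the outcome lines up with the spending gap alone, before accounting for anything else.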

So, before making calls to impose the death penalty on failing New York State schools, I would argue that the Governor should take a hard look at his own policies of recent years, before placing blame – vilifying teachers and other school officials – and assuming easily attainable revenue-neutral solutions.

Put simply, what the New York public should NOT tolerate, is a Governor and Legislature who refuse to provide sufficient resources to high need schools and then turn around and blame the schools and communities for their own failures. (all the while, protecting billions of dollars in separate aid programs that drive funds to wealthy districts).

Appendix

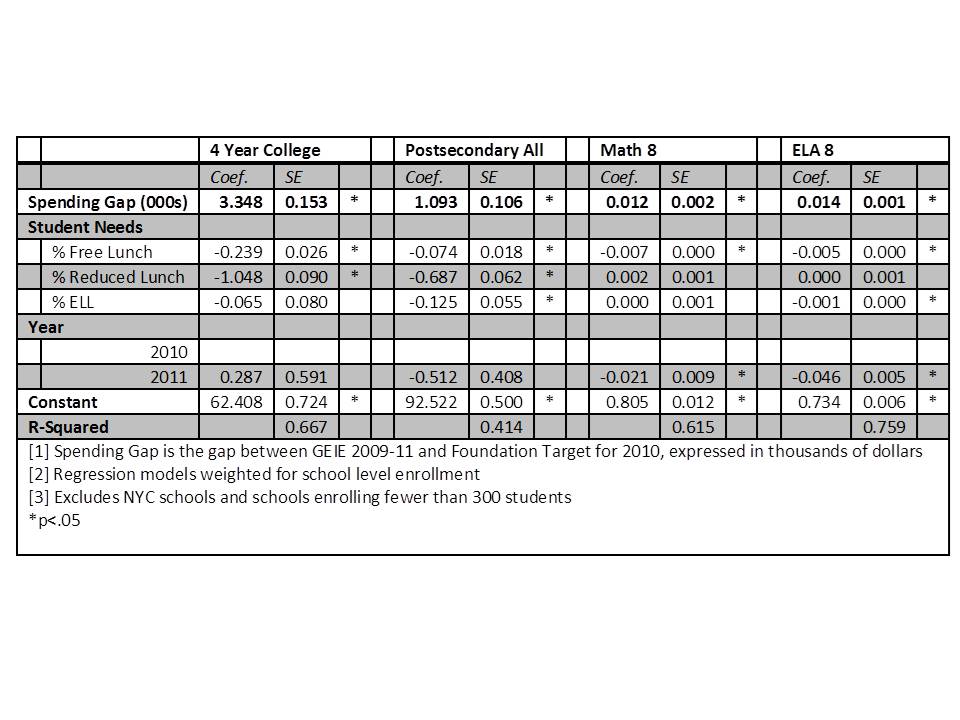

Table 1 provides a multiple regression analysis which asks the question – to what extent are spending gaps associated with outcomes, among schools with similar percentages of low income or non-English speaking children, in the same year? In other words, are the spending gap to outcome relationships displayed in previous figures merely a function of the relationships between outcome gaps and student population characteristics, and spending gaps and student characteristics? Table 1 shows that in each case, for each outcome measure, outcome gaps are associated with spending gaps, even among districts with similar student needs. A $1,000 reduction in spending gap is associated with a 3.3% increase in 4yr college attendance, 1.1% increase in postsecondary attendance, 1.2% increase in 8th grade math scores and 1.4% increase in 8th grade ELA scores.

Table 1.
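To make "among schools with similar percentages of low income children" concrete, here is a rough sketch of what controlling for a student characteristic does to a slope. It is not the model behind Table 1: it uses a single control, plain Python, and synthetic data that I constructed so the true gap effect is exactly -1.2 points per $1,000. All names and numbers are hypothetical.

```python
def _mean(v):
    return sum(v) / len(v)


def slope_intercept(x, y):
    """Ordinary least squares fit of y on a single x."""
    mx, my = _mean(x), _mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def residuals(x, y):
    """What is left of y after removing its straight-line relation to x."""
    slope, intercept = slope_intercept(x, y)
    return [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]


def partial_slope(gap, control, outcome):
    """Slope of outcome on gap, holding the control constant.

    Strips the control out of both variables, then regresses what remains.
    This matches the gap coefficient from a regression on gap and control.
    """
    gap_resid = residuals(control, gap)
    outcome_resid = residuals(control, outcome)
    return slope_intercept(gap_resid, outcome_resid)[0]


# Synthetic data: gap in $1,000s per pupil, poverty as percent low income.
gap = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
poverty = [10.0, 30.0, 20.0, 50.0, 40.0, 70.0]
outcome = [50.0 - 1.2 * g - 0.3 * p for g, p in zip(gap, poverty)]

naive_slope = slope_intercept(gap, outcome)[0]
controlled_slope = partial_slope(gap, poverty, outcome)
print(round(naive_slope, 2), round(controlled_slope, 2))
```

In this made-up example the naive slope overstates the gap effect, because poverty and spending gaps rise together. The controlled slope recovers the effect that was built into the data, which is the same question the regression in Table 1 is asking of the real data.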