Below is an excerpt from a recent series of policy briefs on NY State school funding.

Statewide Policy Brief with NYC Supplement: BBaker.NYPolicyBrief_NYC

50 Biggest Funding Gaps Supplement: 50 Biggest Aid Gaps 2013-14_15_FINAL

Note: The above briefs received financial support from the New York State Association for Small City School Districts. All opinions are my own.

The 2007 New York State Foundation Aid formula was adopted specifically to achieve compliance with the high court’s 2006 order in the Campaign for Fiscal Equity case. The State argued that this new formula was built on sound empirical analysis of the spending behavior of efficient districts that achieved adequate outcomes on State assessments. The State argued that the Foundation Aid formula applied this evidence, coupled with additional evidence-based adjustments to address student needs and regional cost variation, in order to identify a specific target level of per pupil spending for each district statewide which would provide comparable opportunities to achieve adequate educational outcomes. The State determined the share of that target spending to be raised through local tax revenues and estimated the amount to be paid by the State toward achieving each district’s sound basic spending target.

Then, the State simply failed to fund the formula.

When the formula was enacted, the State committed to phasing it in from 2007 to 2010-2011. The data behind the base spending calculation had been drawn from 2003-2005, and included general education instructional spending of school districts that a) achieved 80% proficiency rates on state assessments, and b) were in the lower-spending half of the districts that achieved those outcomes. The formula for transitioning these figures to spending targets combines an inflation adjustment with a phase-in percent, to bring the dated estimates up to date and to project the annual increases needed to hit the adequate spending target in future years – four years out in the case of the original proposed remedy.
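The selection rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the district records are hypothetical, and treating "lower half" as "at or below the median spending of the successful districts" is my reading of the rule, not the State's documented procedure.

```python
from statistics import mean, median

def base_spending_estimate(districts, proficiency_threshold=0.80):
    """Average spending of the lower-spending half of districts meeting the outcome standard."""
    # Step a) keep only districts at or above the proficiency threshold.
    successful = [d for d in districts if d["proficiency"] >= proficiency_threshold]
    # Step b) keep the lower-spending half (assumption: at or below the median).
    cutoff = median(d["spending"] for d in successful)
    lower_half = [d for d in successful if d["spending"] <= cutoff]
    return mean(d["spending"] for d in lower_half)

# Hypothetical districts, for illustration only.
sample_districts = [
    {"name": "A", "proficiency": 0.85, "spending": 9000},
    {"name": "B", "proficiency": 0.90, "spending": 7000},
    {"name": "C", "proficiency": 0.82, "spending": 6000},
    {"name": "D", "proficiency": 0.95, "spending": 12000},
    {"name": "E", "proficiency": 0.60, "spending": 5000},  # excluded: below 80%
]

print(base_spending_estimate(sample_districts))
```

In this made-up sample, district E drops out for missing the outcome standard, and only B and C fall in the lower-spending half of the remaining four, so the base estimate is the average of their spending.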

The current Foundation Aid formula may be described as follows.

District Foundation Aid per Pupil = [Foundation Amount X Pupil Need Index X Regional Cost Index] – Expected Minimum Local Contribution

Under this formula, the State determines the need and cost adjusted target spending for each district by taking the foundation funding level and multiplying it by the pupil need adjustment index (PNI) and then by the regional labor cost adjustment index (RCI). This approach is reasonable only to the extent that the target level of funding generated for each district by the formula represents what the State determines is necessary for districts to provide a meaningful high school education, the constitutional standard established in the CFE rulings.

In 2012-13, the inflation adjusted foundation level of funding [for aid calculation purposes] was set to $6,580[1], a value which on its face is far lower than existing spending levels in nearly every New York State public school district or charter school. The pupil need index combines measures of poverty (U.S. Census Poverty and Free or Reduced Lunch), shares of children with limited English language proficiency, and district population sparsity. Finally, the Regional Cost Index is intended to recognize “regional variations in purchasing power around the State, based on wages of non-school professionals.”

Once a district’s target level of funding is calculated, the State then determines the share of that target that will be paid for by the local district and the share that will be picked up by the State through Foundation Aid. The State share of aid, or total Foundation Aid is determined as follows:

Total Foundation Aid = Selected Foundation Aid X Selected Total Aidable Foundation Pupil Units (TAFPU). Selected Foundation Aid is the district’s Foundation Aid per pupil, but no less than $500. [2]

It is important to note that, under this formula, the State provides every district at least $500 per pupil in Foundation Aid, without regard to whether the district has the ability to raise enough local revenue to meet or exceed its spending target on its own, without State aid. In this calculation, Total Aidable Foundation Pupil Units (TAFPU) include additional weighted adjustments for children with disabilities (not addressed in the PNI), pupils in summer school, and half versus full day kindergarten.
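To make the mechanics concrete, here is a minimal Python sketch of the two formulas above. The function names and the two example districts are hypothetical; only the $6,580 foundation amount and the $500 floor come from the text.

```python
MIN_AID_PER_PUPIL = 500  # per-pupil floor stated in the text

def foundation_aid_per_pupil(foundation_amount, pni, rci, expected_local_contribution):
    """[Foundation Amount x PNI x RCI] - Expected Minimum Local Contribution."""
    target_per_pupil = foundation_amount * pni * rci
    return target_per_pupil - expected_local_contribution

def total_foundation_aid(aid_per_pupil, tafpu):
    """Selected Foundation Aid (never below the floor) x Selected TAFPU."""
    selected_aid = max(aid_per_pupil, MIN_AID_PER_PUPIL)
    return selected_aid * tafpu

# Hypothetical high-need district: PNI 1.5, RCI 1.0, $3,000 local share, 1,000 TAFPU.
high_need = foundation_aid_per_pupil(6580, 1.5, 1.0, 3000)
print(total_foundation_aid(high_need, 1000))

# Hypothetical wealthy district: its local share exceeds its target, so the floor applies.
wealthy = foundation_aid_per_pupil(6580, 1.0, 1.0, 7000)
print(total_foundation_aid(wealthy, 1000))
```

The second district shows the point made above: its formula aid is negative, yet it still receives $500 per pupil.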

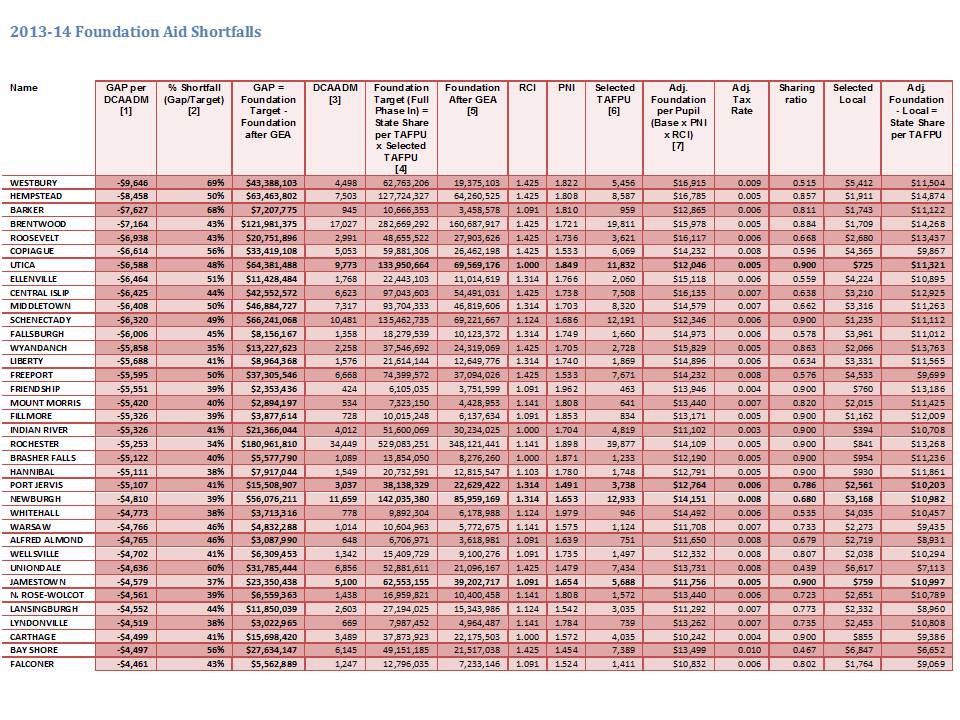

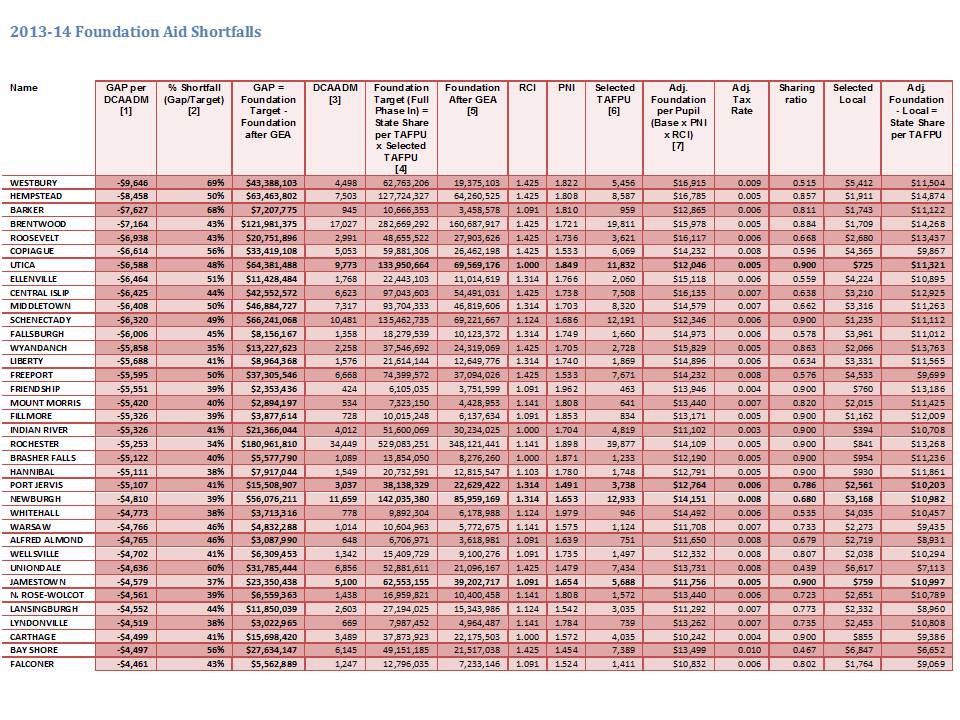

The following table lists those districts with the largest per pupil gaps in State Foundation Aid in 2013-14. In other words, these are the districts with the largest differences between the Foundation Aid they should have received had the State actually funded the Foundation Aid formula and the Foundation Aid they actually receive after the State’s aid freeze and cuts are applied. Detailed documentation of the calculations in the table is presented in the appendix.

Top 50 2013-14 Foundation Aid Shortfalls

[1] Shortfall per DCAADM = (Foundation Aid before Phase In – Foundation After GEA) / DCAADM

[2] Shortfall Percent = (Foundation Aid before Phase In – Foundation After GEA) / Foundation Aid before Phase In

[3] NYSED FARU District Fiscal Profiles (http://www.oms.nysed.gov/faru/Profiles/profiles_cover.html) 2010-11

[4] File DBSAD1 W(FA0001) 00 FOUNDATION AID BEFORE PHASE-IN 03/26/13

[5] (Foundation Aid [DBSAA1, 03/26/13, E(FA0197) 00 2013-14 FOUNDATION AID] + GEA [AA(FA0186) 00 2012-13 GAP ELIMINATION ADJUSTMENT (SA1213)] + GEA Partial Restoration [AB(FA0187) 00 2013-14 GEA RESTORATION])

[6] File DBSAD1 M(OP0088) 00 SELECTED TAFPU 03/26/13

[7] File DBSAD1 P(OP0002) 02 ADJUSTED FOUNDATION AMT/PUPIL 03/26/13

Governor’s 2014-15 Budget Shortfalls

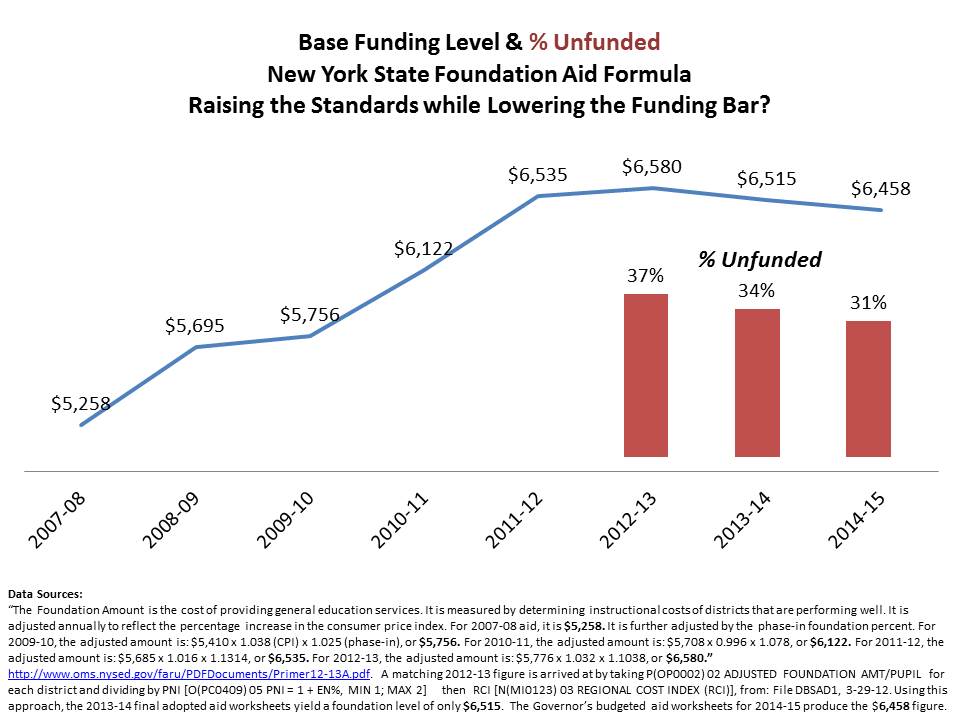

On January 17, 2014, district-by-district data became available for Governor Cuomo’s budget proposal for the 2014-15 school year. As discussed in the appendix, the Adequacy Target Funding per Pupil, which is the “Adjusted Foundation Aid per TAFPU” [Total Aidable Foundation Pupil Units], is arrived at by taking a base funding figure times the pupil needs index (PNI) times the regional cost index (RCI). That base funding figure is intended to be based on average spending of the lower half of local public school districts meeting prescribed outcome standards, as discussed in the policy brief released concurrent with these analyses. Inexplicably, the state has chosen over the past few years to lower that base funding amount, despite increasing outcome standards.

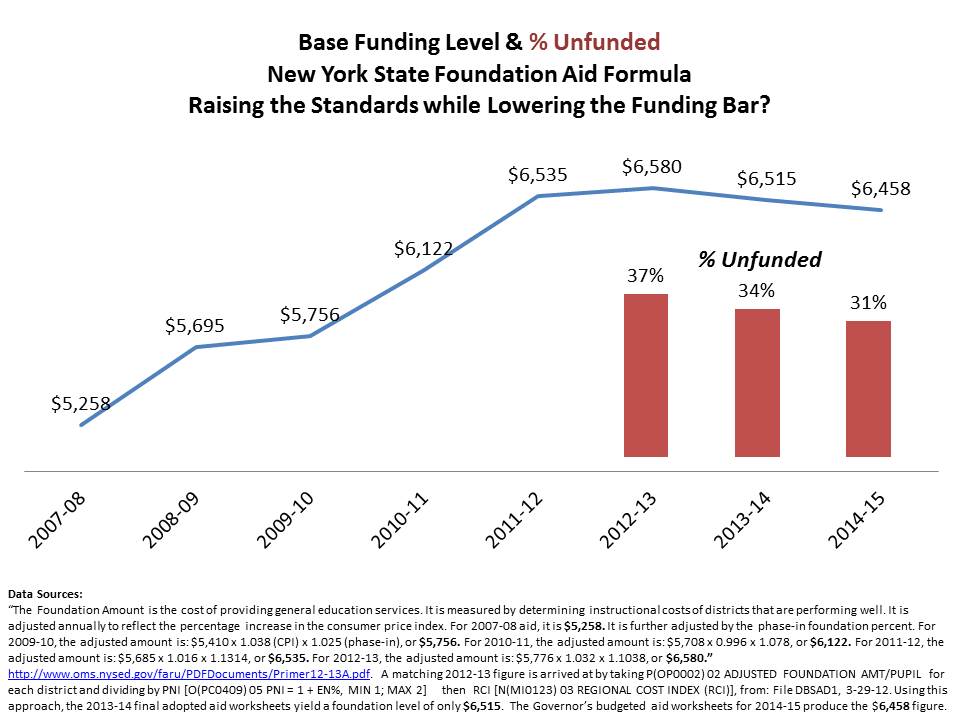

Using the state’s own spreadsheets for aid allotments, one can also back these figures out of the state aid worksheets by taking each district’s “Adjusted Foundation per Pupil” and dividing by its PNI and RCI. For 2012-13, that figure rounds to $6,580 for each district. The 2013-14 aid worksheets yield a foundation level of only $6,515, or a cut to the foundation level of $65. Backing this figure out of the 2014-15 budget proposal yields $6,458, another cut to the base funding level. This means that the gaps in funding for the past few years are further understated in these tables. Yet, these gaps are still huge. The table below summarizes those gaps for the 50 districts with the largest per pupil funding gaps.
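The back-out step is simple division, sketched below in Python. The base amounts by year are the ones reported above; the example district (PNI 1.5, RCI 1.13, and the adjusted amount built from them) is hypothetical and is there only to show the calculation.

```python
def back_out_base(adjusted_foundation_per_pupil, pni, rci):
    """Recover the base foundation amount from a district's adjusted amount."""
    return adjusted_foundation_per_pupil / pni / rci

# Hypothetical district: an adjusted amount of $11,153.10 with PNI 1.5 and RCI 1.13.
print(round(back_out_base(11153.10, 1.5, 1.13)))

# Base amounts reported in the text, by aid year.
base_by_year = {"2012-13": 6580, "2013-14": 6515, "2014-15": 6458}
years = list(base_by_year)
changes = {
    later: base_by_year[later] - base_by_year[earlier]
    for earlier, later in zip(years, years[1:])
}
print(changes)  # {'2013-14': -65, '2014-15': -57}
```

Taken together, the two cuts lower the base by $122 per pupil relative to 2012-13, before any need or cost adjustment is applied.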

Comparing the Governor’s budget for 2014-15 to prior year gaps gives the appearance that the Governor’s budget substantially closes the gaps for some districts, like Utica. This is a false impression, however, created by lowering the adequacy target to offset the increase in pupil needs.

Top 50 2014-15 Budgeted Shortfalls

[data run as of 1/17/14]

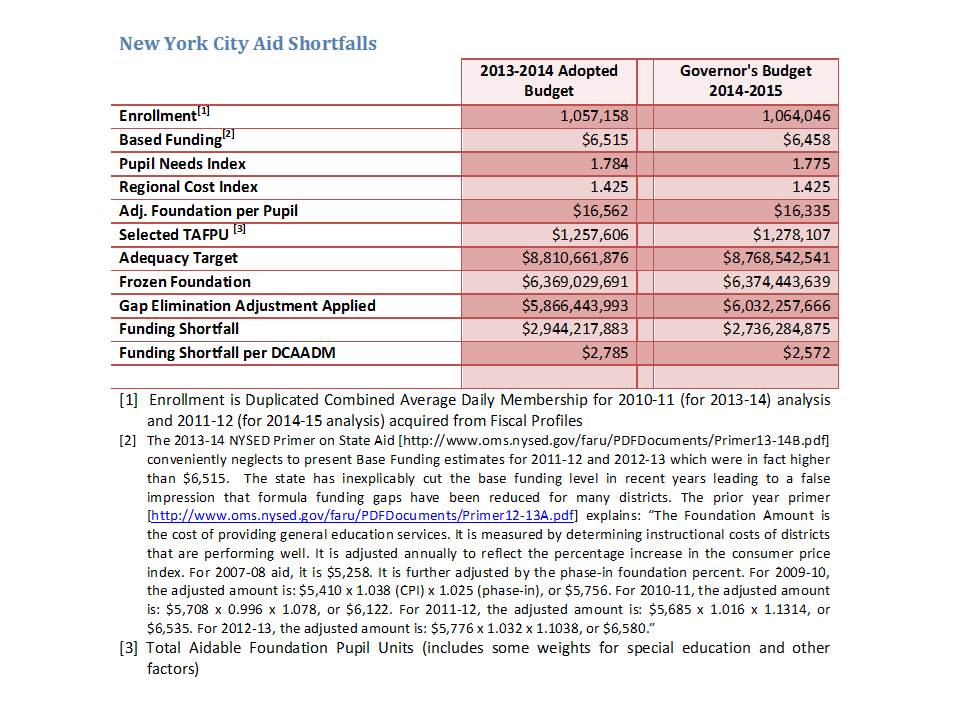

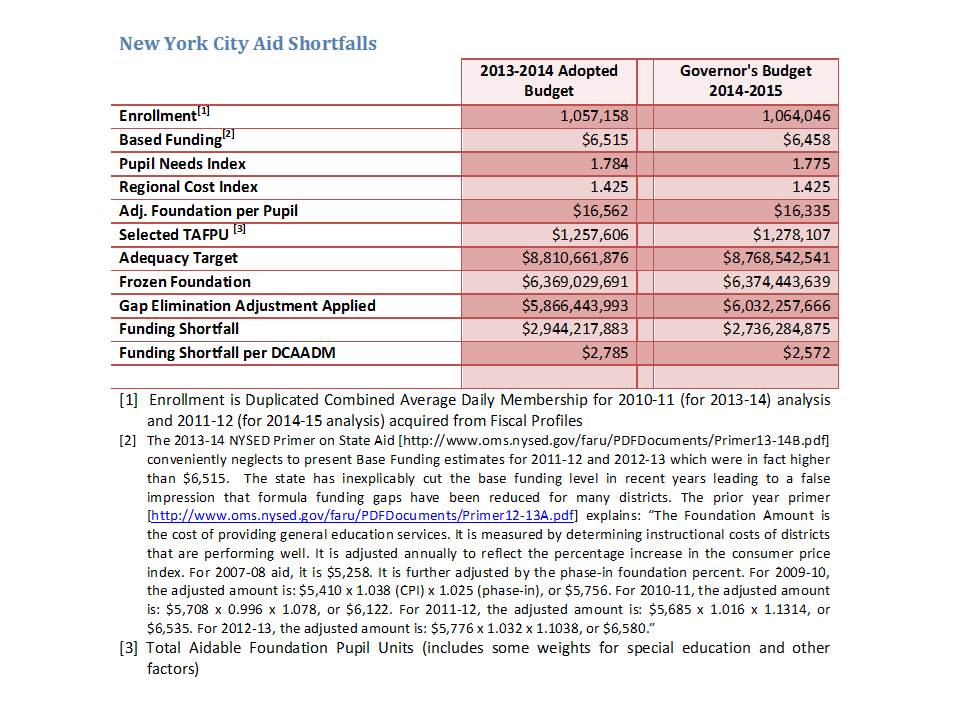

What about New York City?

The following table shows the current year, and Governor’s budgeted shortfalls for New York City:

These shortfalls remain over $2,500 per pupil for a total approaching $3 billion.


Cutting Basic Funding while Increasing Standards

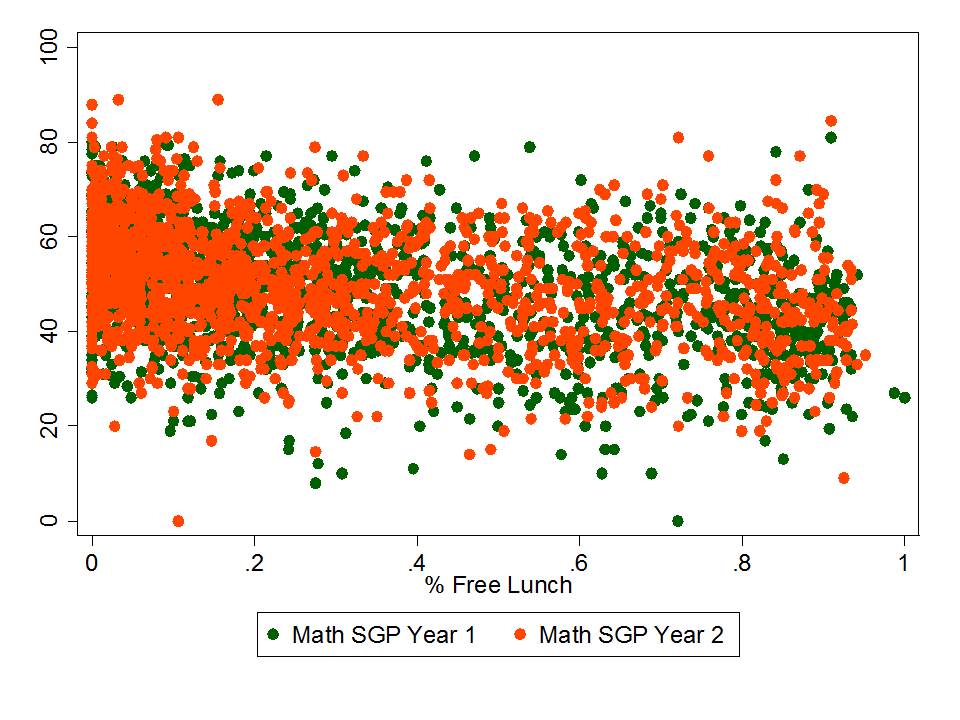

Put simply, higher student outcome standards cost more to achieve, not less. As explained above, the New York State school finance formula is built on an underlying basic cost estimate of what it would take for a low need (no additional student needs) district to achieve adequate educational outcomes as measured on state assessments. The current formula is built on average spending estimates dating back several years now and based on prior outcome standards, tied to a goal of achieving 80% proficient or higher. More than once in the past several years, the state has substantively increased the measured outcome standards.

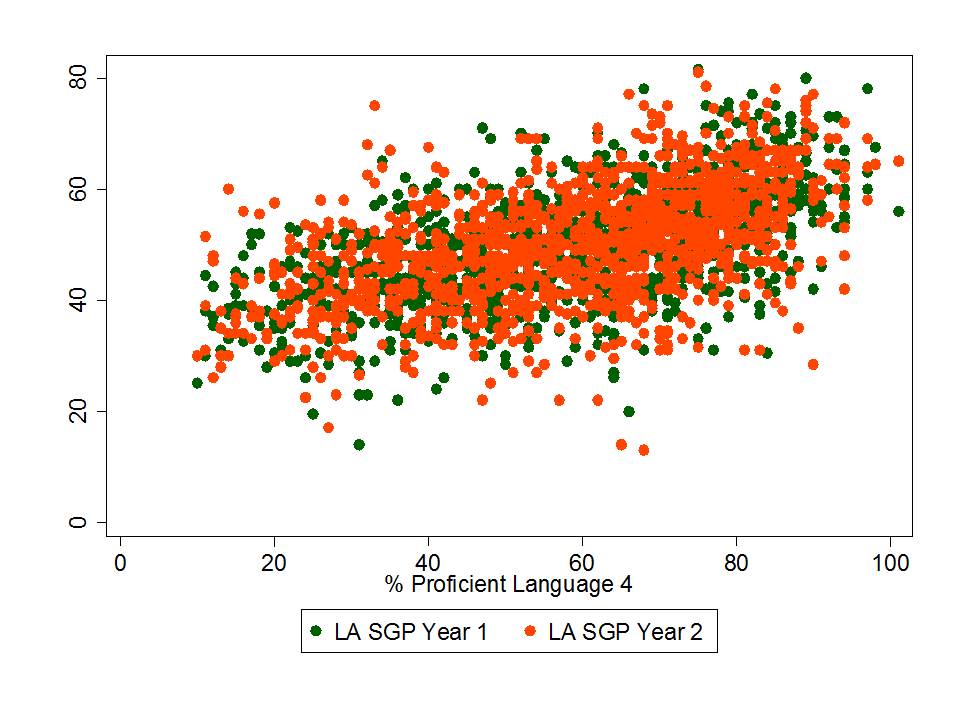

For 2010, the Regents adjusted the assessment cut scores to address the inflation issue, and as one might expect proficiency rates adjusted accordingly. The following figure shows the rates of children scoring at level 3 or 4 in 2009 and again in 2010. I have selected a few key, rounded, points for comparison. Districts where 95% of children were proficient or higher in 2009 had approximately 80% in 2010. Districts that had 80% in 2009 had approximately 50% in 2010. This means that the operational standard of adequacy using 2009 data was equivalent to 50% of children scoring level 3 or 4 in 2010. This also means that if we accept as reasonable, a standard of 80% at level 3 or 4 in 2010, that was equivalent to 95% – not 80% – in 2009.

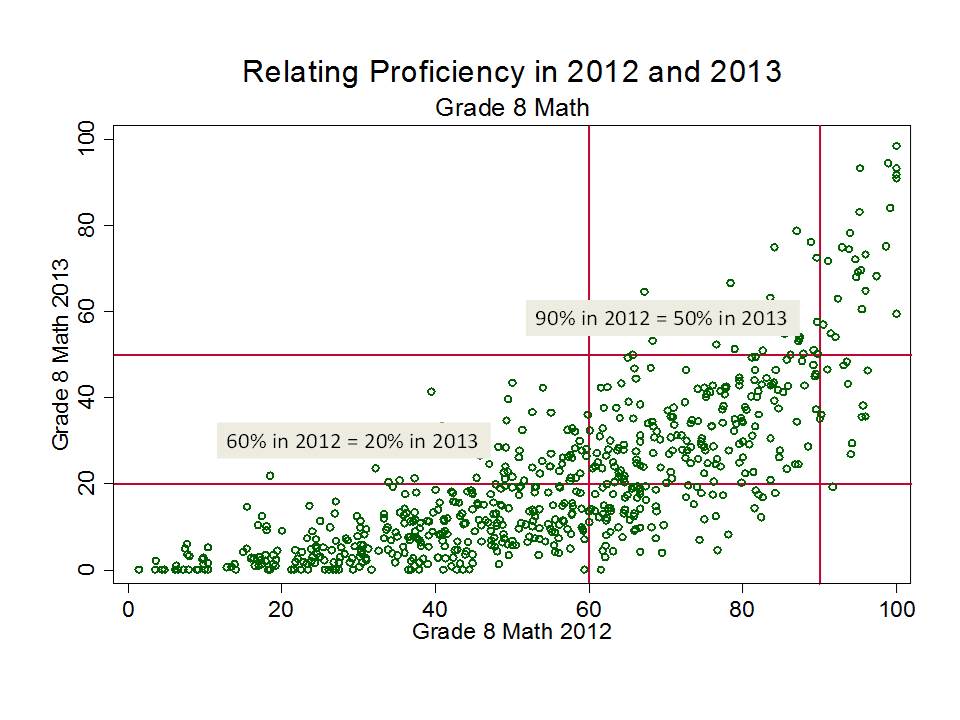

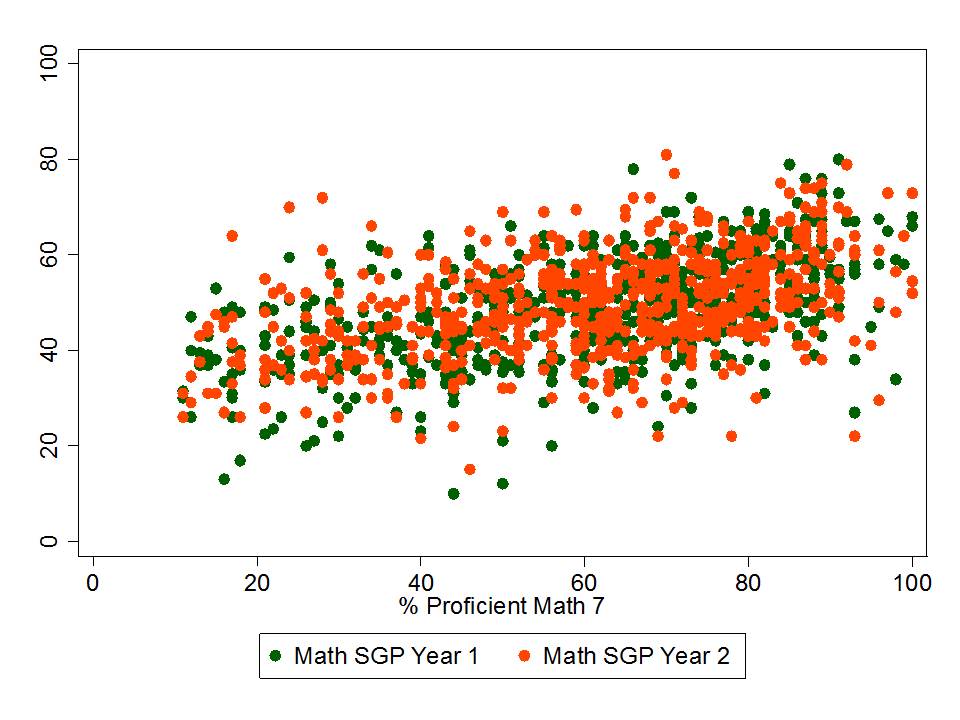

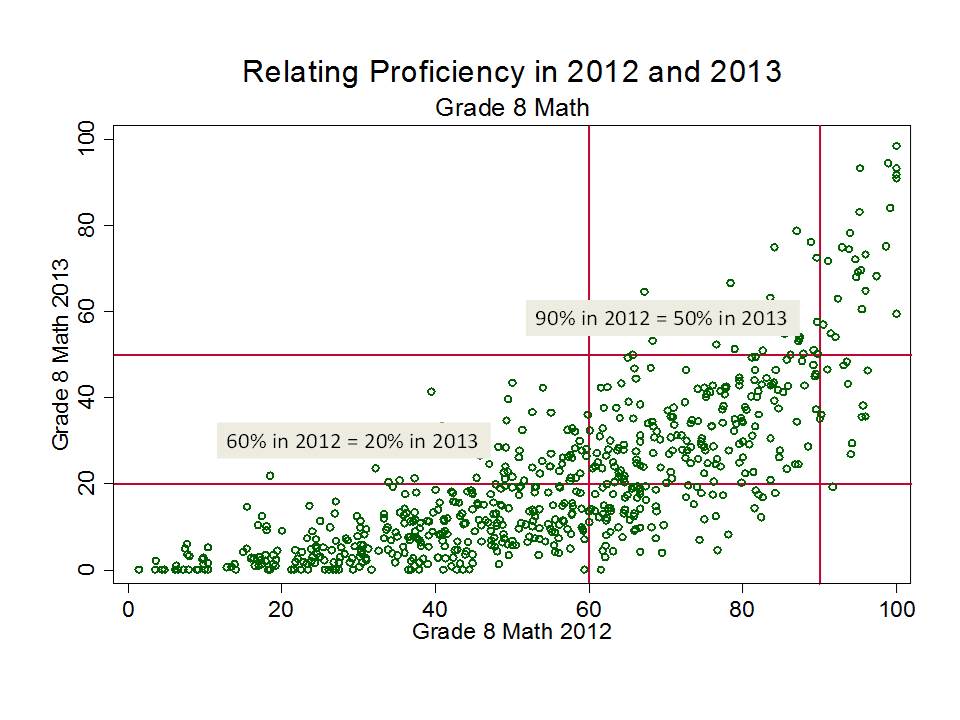

This next figure shows the resulting shift of the change in assessments from 2012 to 2013, also for 8th grade math. Again, I’ve applied ballpark cutpoint comparisons. Here, a school where 60% were proficient in 2012 was likely to have 20% proficient in 2013. A school where 90% were proficient in 2012 was likely to have 50% proficient in 2013. If, as state policymakers argue, the 2013 assessments do more accurately represent the standard for college readiness, and thus the constitutional standard of meaningful high school education, it is quite likely that the cost of achieving that constitutional standard is much higher than previously estimated. Notably, only a handful of schools surpass the 80% threshold on math proficiency for the 2013 assessments.

While it appears that the state has been chipping away at funding gaps for districts including New York City, they have not done so by substantively increasing funding, but rather by decreasing the adequate funding target. This figure shows that the underlying basic cost figure for the foundation aid formula climbed gradually as planned through 2012-13. Note that this climb was based on the assumed 80% success rate on the 2007-08 outcome standard, not considering the 2009-10 adjustment to that outcome standard. But inexplicably, the state has chosen to reduce the basic funding figure for each year since, despite raising the outcome standards dramatically.


Even worse, as explained above, the state continues to underfund the foundation aid formula by about 1/3. That is, even after lowering its target funding level, the state continues to fall over 30% short of that funding target. The primary reason the extent of underfunding has declined is that the state has lowered the target.

Raising outcome standards while cutting funding is a formula for failure.

Appendix

The current “adequacy” target (according to the foundation aid formula) is the fully phased in adequacy target per (selected) aidable pupil unit, or, as laid out above:

PNI x RCI x Base = State Prescribed Adequacy Target[3]

This formula adequacy target represents what the state itself adopted as the quantification of its own constitutional obligation to provide for a sound basic education. Later in this brief, I challenge the validity of this target, but for purposes of this section, it is appropriate to consider this figure as the state’s own definition of its constitutional obligation.

The state aid per pupil (TAFPU) to reach that state prescribed adequacy target is then:

Adj. Foundation per Pupil – Local Contribution per Pupil = State Share per Pupil

And the total state aid to be received, if the formula were both fully phased in and fully funded, is:

State Share per Pupil x TAFPU = Foundation Aid [before phase in]

Where “phase in” refers to the fact that the foundation formula is intended to scale toward full adequacy funding over three-year periods (originally four years, reaching the target in 2011). Phase in, as referred to in this case, is a reduction to the target funding, representing the progress toward fully phased in funding to be made in the coming year. In the following analyses, and as represented above, I compare current funding against foundation aid before this reduction (phase in) is applied.

Thus, the extent of underfunding is:

State Aid to Reach Adequacy Target – Actual Foundation Formula Funding (after all adjustments) = Underfunding

The underfunding of the foundation formula results from two specific calculations. First, instead of actually basing foundation aid on the above calculations – that is, the actual formula – aid is simply frozen[4] (or proportionately marginally increased) relative to prior year total (not per pupil) aid. Then, in a two-step calculation, aid is reduced using the Gap Elimination Adjustment and then partially restored for most districts.[5]

For example, for the city of Utica:

$12,046 (Foundation Aid per TAFPU) x 11,832 (TAFPU) = $133,950,644 (Foundation Aid before phase in)

But, as shown in the following table, estimated actual (frozen) foundation aid is:

Estimate for 2013-14 = $72,413,005

So the preliminary foundation aid funding gap for Utica is:

$133,950,644 (Foundation Aid before phase in) – $72,413,005 (Aid Based on Prior Year) = $61,537,659 (Preliminary Aid Gap)

But this is the gap before applying the Gap Elimination Adjustment. The deceptively named Gap Elimination Adjustment (or GEA) is really just a cut to state aid, which, on average, falls more heavily on districts more dependent on state aid, or higher need districts.

The real gap for Utica is, therefore, as follows:

$72,413,005 (Aid Based on Prior Year) – $2,843,829 (GEA) = $69,569,176 (Actual Aid)

So:

$133,950,644 (Foundation Aid before phase in) – $69,569,176 (Actual Aid) = $64,381,488 (Actual Gap)

In the table, we see that Utica actually receives only about half of the total state aid it should receive if the formula were funded. Other small city districts face similar shortfalls.
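The Utica arithmetic can be replayed directly from the printed inputs, as in the short Python sketch below. The variable names are mine. Note that subtracting the printed inputs gives gaps $20 smaller than the printed gap figures ($61,537,639 and $64,381,468), which suggests a small transcription slip in one of the printed numbers; the conclusion is unaffected.

```python
# Inputs as printed in the Utica example (2013-14).
foundation_aid_before_phase_in = 133_950_644
aid_based_on_prior_year = 72_413_005
gea = 2_843_829  # Gap Elimination Adjustment, net cut

# Gap before the GEA is applied.
preliminary_gap = foundation_aid_before_phase_in - aid_based_on_prior_year

# Aid actually received once the GEA is subtracted, and the resulting gap.
actual_aid = aid_based_on_prior_year - gea
actual_gap = foundation_aid_before_phase_in - actual_aid

# Share of formula aid that Utica actually receives.
share_received = actual_aid / foundation_aid_before_phase_in

print(preliminary_gap, actual_aid, actual_gap)
print(f"{share_received:.1%}")
```

The share received comes out at roughly 52%, consistent with the "about half" description.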

The table also provides a per pupil calculation of the degree of state aid underfunding across Small City districts and New York City. I calculate the foundation aid gap per Duplicated Combined Adjusted Average Daily Membership, or DCAADM[6], which is the district enrollment figure commonly used in the state fiscal profiles files for calculating per pupil amounts.
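The two shortfall measures reported in the tables (per DCAADM and as a percent of formula aid) can be written as small Python functions. The aid totals in the example are the printed Utica inputs; the DCAADM of 10,000 is a hypothetical placeholder, since Utica's actual count is not given in this excerpt.

```python
def shortfall_per_pupil(aid_before_phase_in, aid_after_gea, dcaadm):
    """(Foundation Aid before Phase In - Foundation After GEA) / DCAADM."""
    return (aid_before_phase_in - aid_after_gea) / dcaadm

def shortfall_percent(aid_before_phase_in, aid_after_gea):
    """(Foundation Aid before Phase In - Foundation After GEA) / Foundation Aid before Phase In."""
    return (aid_before_phase_in - aid_after_gea) / aid_before_phase_in

# Printed Utica totals; the DCAADM value is hypothetical, for illustration only.
utica_before, utica_after = 133_950_644, 69_569_176
print(shortfall_per_pupil(utica_before, utica_after, dcaadm=10_000))
print(f"{shortfall_percent(utica_before, utica_after):.1%}")
```

Dividing by DCAADM rather than TAFPU expresses the gap per enrolled student instead of per weighted pupil unit, which makes districts with different need weightings easier to compare.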

[1] See: http://www.oms.nysed.gov/faru/PDFDocuments/Primer12-13A.pdf.

“The Foundation Amount is the cost of providing general education services. It is measured by determining instructional costs of districts that are performing well. It is adjusted annually to reflect the percentage increase in the consumer price index. For 2007-08 aid, it is $5,258. It is further adjusted by the phase-in foundation percent. For 2009-10, the adjusted amount is: $5,410 x 1.038 (CPI) x 1.025 (phase-in), or $5,756. For 2010-11, the adjusted amount is: $5,708 x 0.996 x 1.078, or $6,122. For 2011-12, the adjusted amount is: $5,685 x 1.016 x 1.1314, or $6,535. For 2012-13, the adjusted amount is: $5,776 x 1.032 x 1.1038, or $6,580.”

In this case, the matching 2012-13 figure is arrived at by taking P(OP0002) 02 ADJUSTED FOUNDATION AMT/PUPIL for each district and dividing by PNI [O(PC0409) 05 PNI = 1 + EN%, MIN 1; MAX 2] then RCI [N(MI0123) 03 REGIONAL COST INDEX (RCI)], from: File DBSAD1, 3-29-12. Prior years also match. Interestingly, however, the 2013-14 aid worksheets yield a foundation level of only $6,515, or a cut to the foundation level of $65.

[3] DBSAD1, 3-29-12, P(OP0002) 02 ADJUSTED FOUNDATION AMT/PUPIL

[4] DBSAA1, 3-29-12, E(FA0197) 00 2012-13 FOUNDATION AID

[5] DBSAA1, 3-29-12, GEA [AA(FL0026) 00 2012-13 GAP ELIM ADJUST ON BT1213] + GEA Partial Restoration [AB(FL0027) 00 2012-13 GAP ELIMINATION ADJMT RESTORATION]

[6] Duplicated CAADM. This item (Duplicated Combined Adjusted Average Daily Membership or DCAADM) is the pupil count used to calculate per pupil amounts for the revenue items and expenditure categories. The pupil count is based on data from State aid worksheets and Basic Educational Data System forms. This pupil count is the best count of the number of students receiving their educational program at district expense. DCAADM includes the average daily membership (ADM) of students enrolled in district programs (including half-day kindergarten pupils weighted at 0.5); plus equivalent secondary attendance of students under 21 years of age who are not on a regular day school register plus pupils with disabilities attending Boards of Cooperative Educational Services (BOCES) full time plus pupils with disabilities in approved private school programs including State schools at Rome and Batavia plus resident students for whom the district pays tuition to another school district plus incarcerated youth. Beginning with the 1999-2000 school year, pupils resident to the district but attending a charter school are included. Beginning with the 2007-08 school year, students attending full-day Pre-K are weighted at 1.0, 1/2 day Pre-K weighted at 0.5. Since residents attending other districts were also included in the CAADM count of the receiving district, this pupil count is a duplicated count. The State total consists of the sum of the rounded pupil counts of each school district. Data Source: State Aid Suspense File. See: http://www.oms.nysed.gov/faru/Profiles/18th/revisedAppendix.html
