So, I’ve seen on more than a few occasions these last few weeks references to the recent Dobbie and Fryer article on NYC charter schools as the latest evidence that money doesn’t matter in schools: that costly inputs like class size, or overall measures of total per pupil expenditure, are simply unimportant and can easily be replaced with no-cost alternatives like those employed in no excuses charter schools (high expectations, tutoring, additional time, and wrap-around services). I’ll set aside the issue that many of these supposedly more effective alternatives do, in fact, have cost implications. Instead, I’ll focus my critique on whether this Dobbie/Fryer study provides any substantive evidence that money doesn’t matter – either broadly, or in the narrower context of NYC charter schools specifically.

Now, in many cases, it’s really just the media spin from a study that gets out of hand. It’s just the media and politically motivated tweeters who dig for the lede otherwise buried by the overly cautious researcher. Not so much in this case. Dobbie and Fryer actually make this bold statement… and make it several times and in several forms throughout their paper – as if they’re really on to something.

We find that traditionally collected input measures — class size, per pupil expenditure, the fraction of teachers with no certification, and the fraction of teachers with an advanced degree — are not correlated with school effectiveness.

http://www.nber.org/tmp/65800-w17632.pdf

Now, I would generally treat the work of such respected researchers with great caution here on my blog. Yes, my readers know well that I do go after shoddy think tank work with little reservation. But, when the work is from a respected source, like here, or here, I do tend to be more reserved and more cautious, often second guessing whether my critique is legit.

But I’ll be honest here. I find this Dobbie/Fryer piece infuriating on many levels, some of them simply inexcusable (and, as noted below, this is the 3rd in a row, so my patience is running thin). The basic structure of their study, as far as I can tell from the disturbingly sparse documentation in their working paper, is that they surveyed NYC charter schools to gather information on practices (the no excuses stuff) and on expenditures and class size. Then they evaluated the correlations between alternative forms of their charter effect estimates and individual measures, both traditional and no excuses (as well as an aggregate index of them).

Let’s be really clear here – simply testing the correlation between spending and an outcome measure – comparing higher and lower spending schools and their outcomes to see if the higher spending schools have higher effectiveness measures – WOULD TELL US LITTLE OR NOTHING, EVEN IF THE DATA WERE ACCURATE, PRECISE AND WELL DOCUMENTED. Which, by the way, they are not.

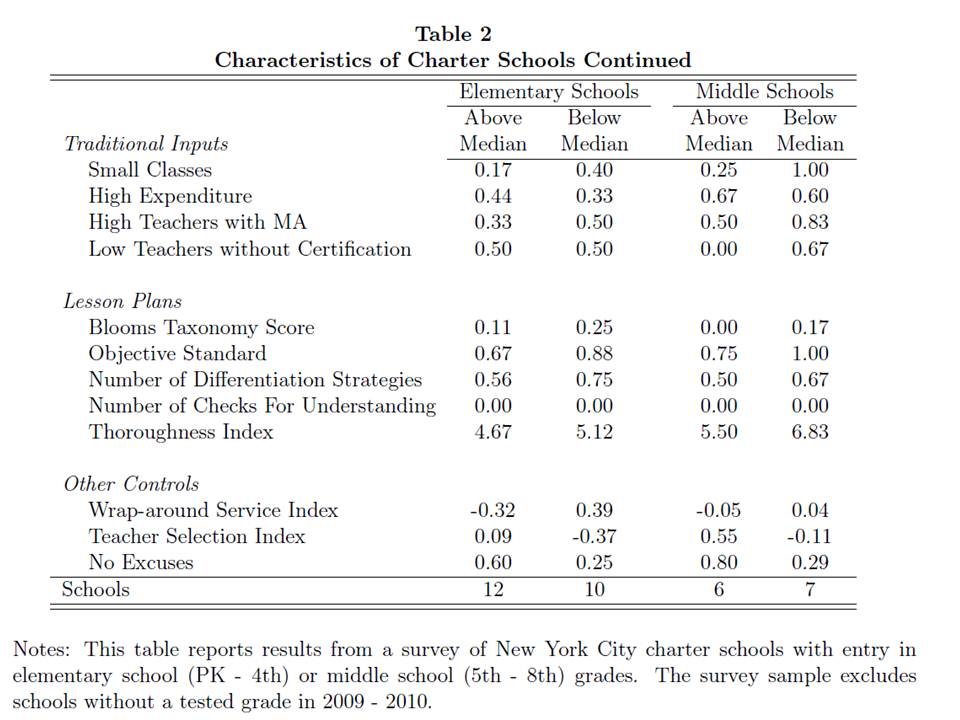

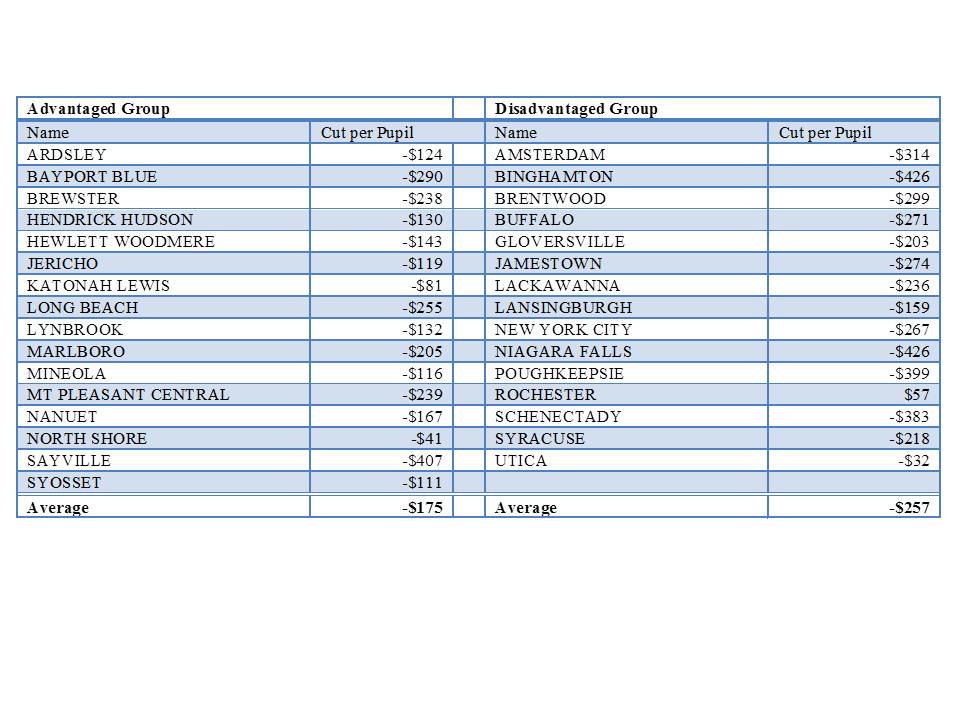

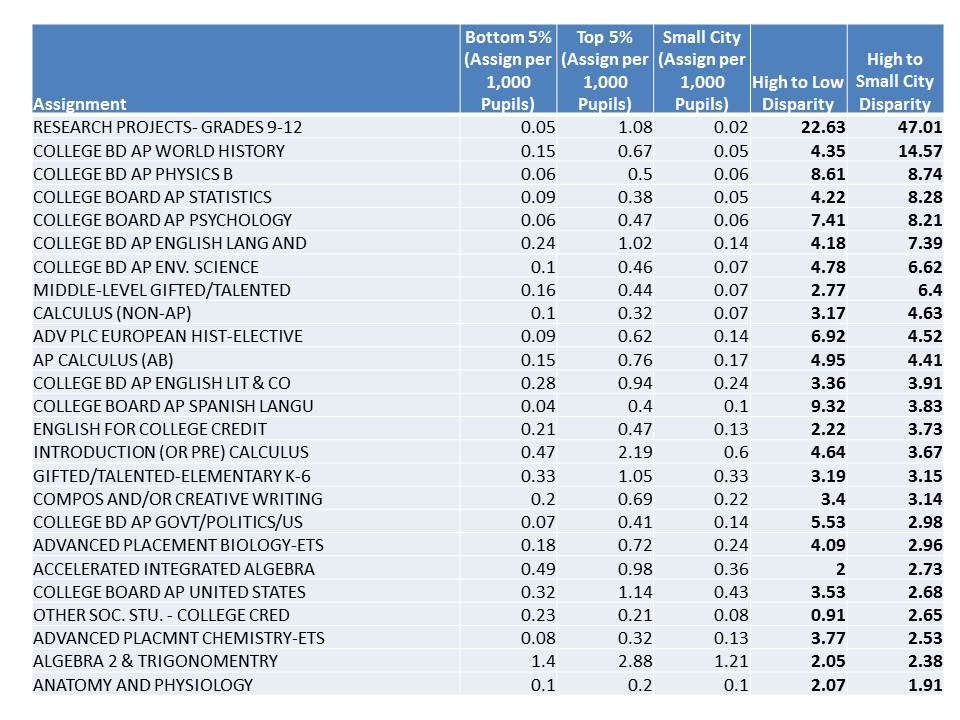

Here’s what Dobbie and Fryer give us for descriptive information on their resource measures:

FIGURE 1: D/F Descriptives

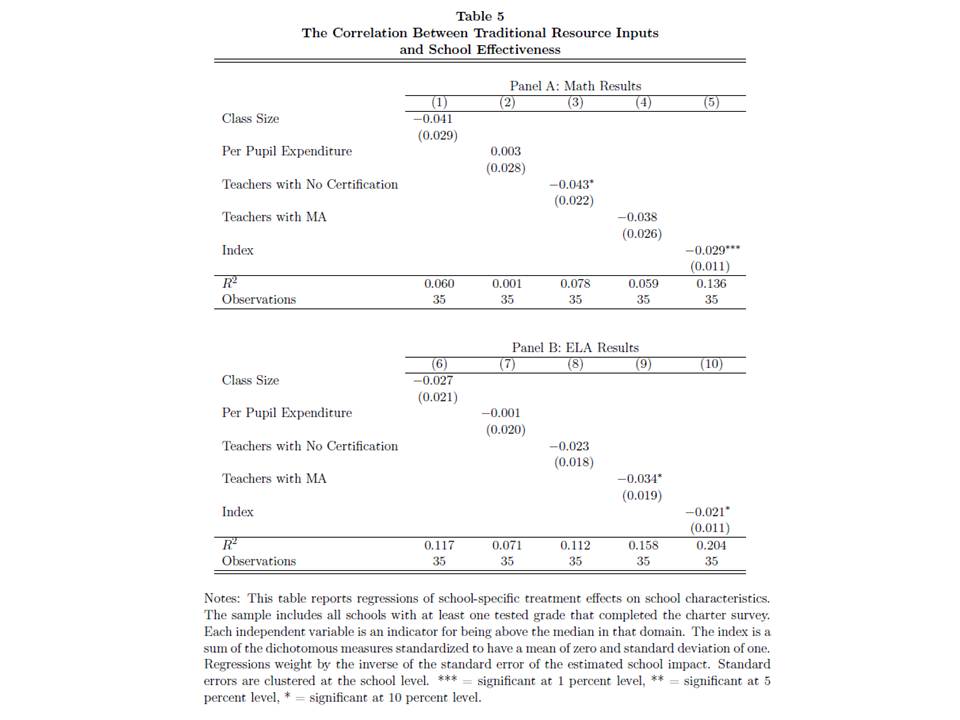

And here’s the evidence regarding the correlation between traditional resources and outcomes:

FIGURE 2: D/F Correlations (they include another table, #6, with lottery estimates)

So, why would it be problematic to look for a simple correlation between charter spending (“per pupil expenditure”) levels and school effectiveness measures?

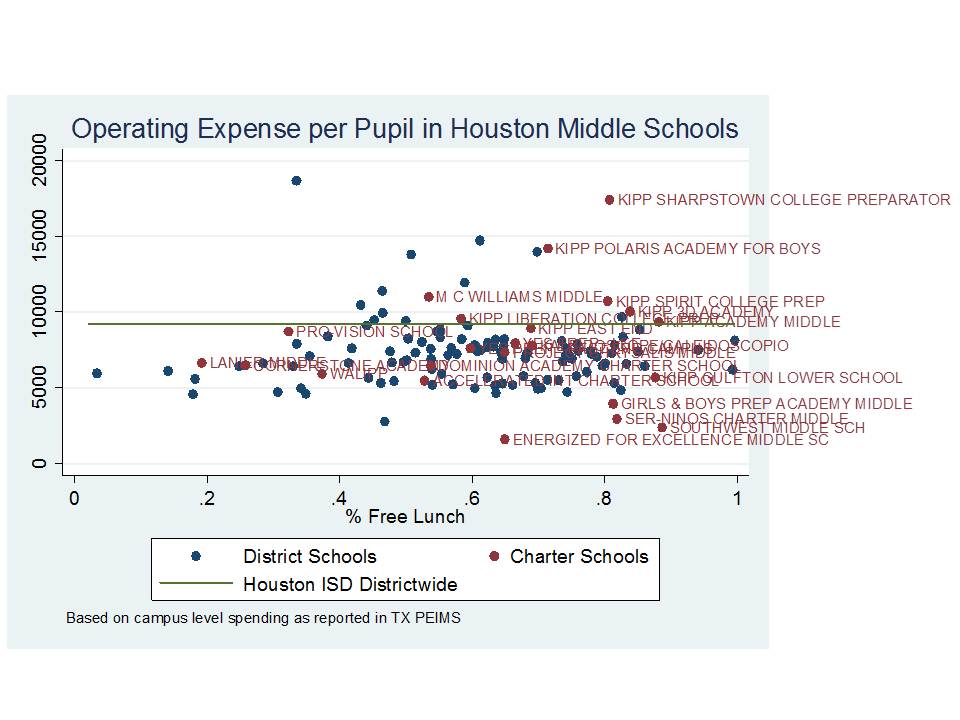

First, NYC charter schools are an eclectic mix of very small to small (nothing medium or large, really) schools at various stages of development, adding grade levels from year to year, adding schools and growing to scale over time. Some are there; others are working their way there. And economies of scale have a substantial effect on per pupil spending. So too can other start-up costs, which may not translate into same-year effectiveness measures.
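A minimal sketch of why scale matters, assuming a simple two-part cost model: school-level fixed costs spread over enrollment, plus a constant per-pupil cost. The $1.5 million and $12,000 figures are hypothetical, chosen only for illustration.

```python
def per_pupil_spending(enrollment: int,
                       fixed_costs: float = 1_500_000,
                       variable_cost_per_pupil: float = 12_000) -> float:
    """Hypothetical cost model: school-level fixed costs (leadership, office
    staff, building overhead) are spread over enrollment, while instructional
    costs scale with each additional pupil."""
    if enrollment <= 0:
        raise ValueError("enrollment must be positive")
    return fixed_costs / enrollment + variable_cost_per_pupil

for n in (150, 300, 600):
    print(n, round(per_pupil_spending(n)))
# 150 22000
# 300 17000
# 600 14500
```

Under these assumptions the same school spends about half again as much per pupil at 150 students as at 600, with no change in what it does for each child.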

Here’s a link to my detailed analysis of NYC charter school spending and the complexities of even figuring out what they spend, comparing audited annual financial report data and IRS filings: http://nepc.colorado.edu/files/NEPC-NYCharter-Baker-Ferris.pdf (as opposed to saying, hey, what do you spend anyway?)

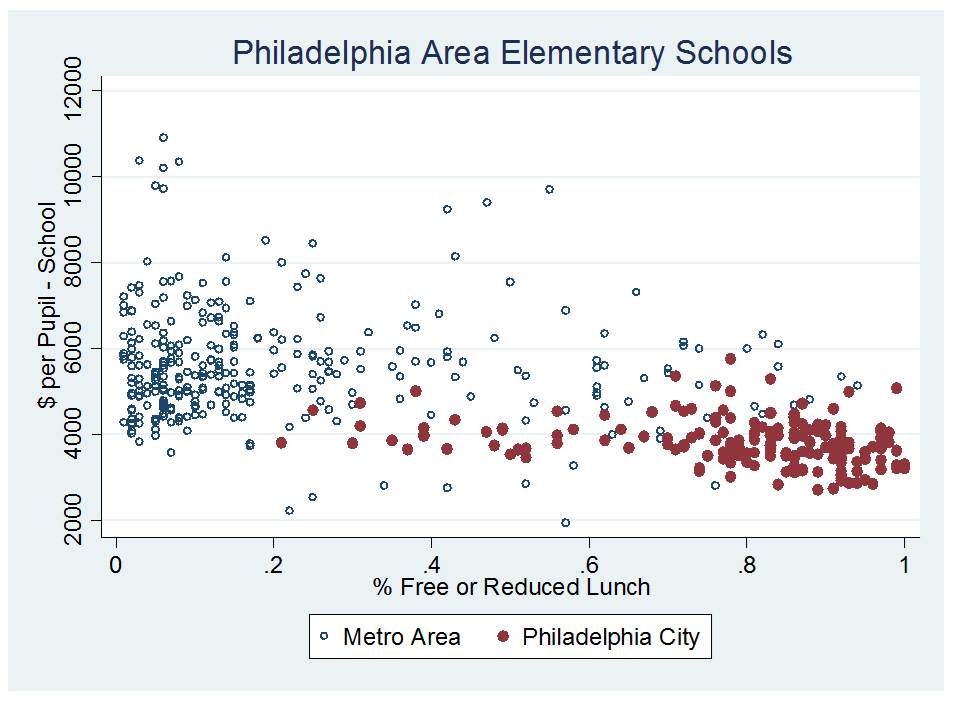

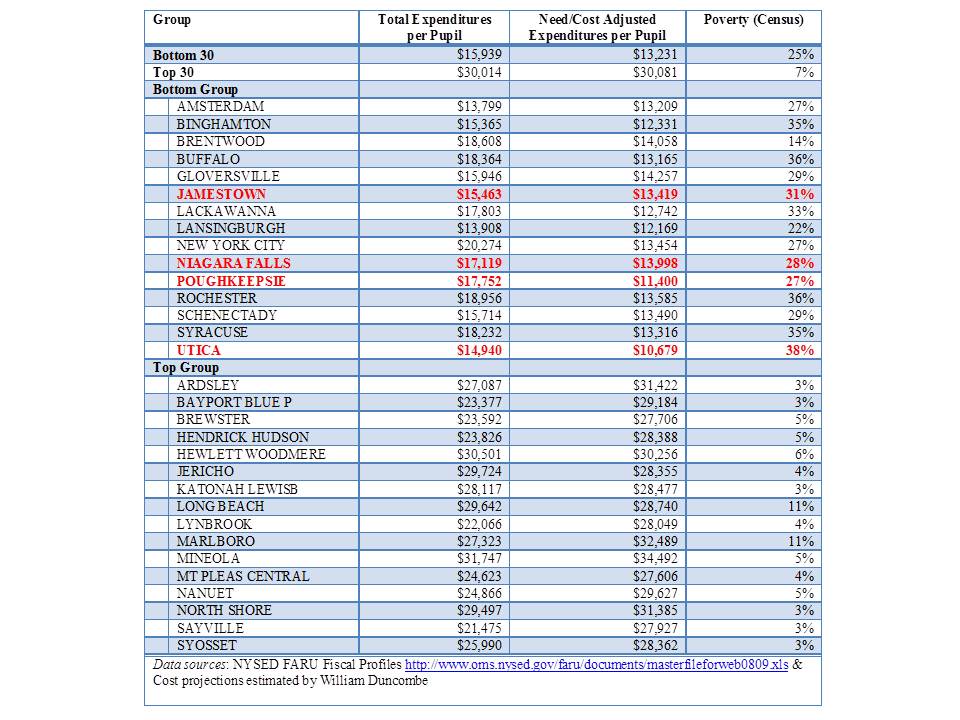

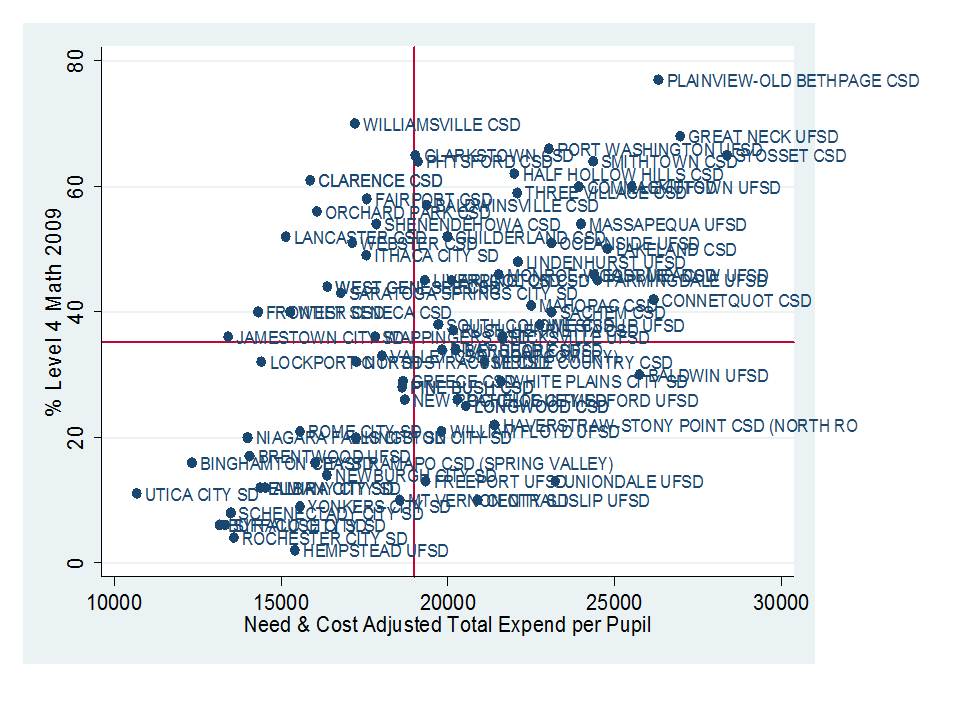

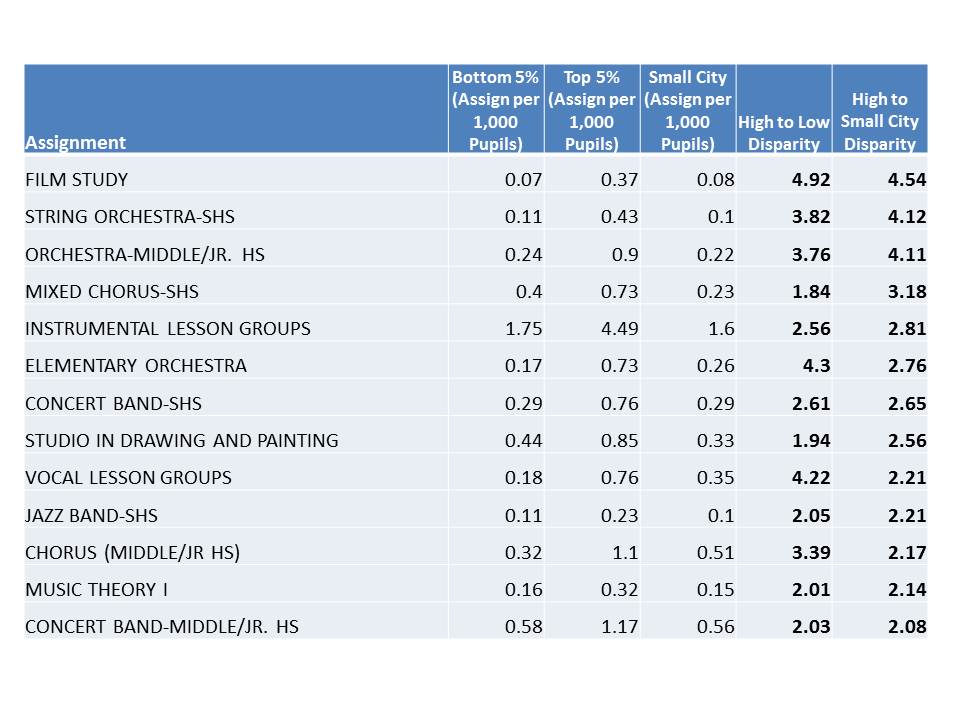

As it turns out, school size and grade range were the only two factors I (along with Richard Ferris) found to be reasonable predictors of NYC charter school per pupil spending (note that the caption on this chart in the original report is wrong – this chart relates to predictors of total per pupil spending, not facilities spending alone). At the very least, any respectable analysis of the relationship between spending and effectiveness must account for grade range/level and economies of scale. It should probably also account for student population characteristics (which may bias effectiveness estimates). But the sample sizes are also pretty darn small when trying to evaluate resource effects across NYC charter schools of similar grade level/range. That alone makes it unlikely you’d find anything.

FIGURE 3: B/F Regression of factors influencing NYC charter spending
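A minimal sketch of the kind of specification described above: per pupil spending regressed on an inverse-enrollment term (economies of scale) and grade span, with R² reported. This is not the Baker/Ferris model or data; the schools and the formula that generates their spending are hypothetical, and the solver is a small standard-library OLS so the example runs without NumPy.

```python
def ols(X, y):
    """Ordinary least squares via the normal equations (X'X)b = X'y, solved by
    Gauss-Jordan elimination with partial pivoting. Each row of X must include
    a leading 1.0 for the intercept."""
    k = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(k)]
    aug = [xtx[i] + [xty[i]] for i in range(k)]  # augmented matrix [X'X | X'y]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(k):
            if r != col:  # eliminate this column from every other row
                factor = aug[r][col] / aug[col][col]
                aug[r] = [v - factor * p for v, p in zip(aug[r], aug[col])]
    return [aug[i][k] / aug[i][i] for i in range(k)]

def r_squared(X, y, coefs):
    """Share of the variance in y explained by the fitted model."""
    fitted = [sum(b * x for b, x in zip(coefs, row)) for row in X]
    y_bar = sum(y) / len(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return 1 - ss_res / ss_tot

# Hypothetical schools: (enrollment, number of grade levels served).
schools = [(120, 3), (180, 4), (240, 5), (300, 6), (450, 7), (600, 9)]
# Spending generated from a known, made-up formula so the fit can be checked:
# $9,000 base + $1.2M of fixed costs spread over enrollment + $400 per grade level.
spending = [9_000 + 1_200_000 / n + 400 * g for n, g in schools]

# Regressors: intercept, 1 / (enrollment in hundreds) for scale economies,
# and grade span. The /100 rescaling only keeps the normal equations well conditioned.
X = [[1.0, 100 / n, float(g)] for n, g in schools]
coefs = ols(X, spending)
print([round(c, 2) for c in coefs])             # [9000.0, 12000.0, 400.0]
print(round(r_squared(X, spending, coefs), 4))  # 1.0
```

Because the made-up spending is generated exactly from the formula, the fit recovers its coefficients and R² is 1; real charter data would fit far less neatly. The same scale and grade-span controls would belong in any model relating spending to effectiveness.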

Further, NYC charter schools have different access to facilities. Some are provided NYC public school facilities (through colocation), while others are not. Having a facility provided can save a NYC charter school over $2500 per pupil per year (to be put toward other things). Dobbie and Fryer provide no documentation regarding whether these differences are accounted for in their mythical per pupil expenditure figure.
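One way to put co-located and non-co-located schools on the same footing is to impute the in-kind value of a provided facility. The sketch below assumes a flat $2,500 per pupil (a hypothetical round number drawn from the "over $2,500" savings mentioned above; actual values vary by school), and both schools are invented.

```python
from dataclasses import dataclass

@dataclass
class CharterSchool:
    name: str
    per_pupil_spending: float  # reported total spending per pupil ($)
    colocated: bool            # housed rent-free in an NYC public school building?

# Hypothetical flat value of a provided facility, per pupil per year.
IMPUTED_FACILITY_VALUE = 2_500

def comparable_spending(school: CharterSchool) -> float:
    """Add the in-kind value of a provided facility so co-located and
    non-co-located schools are compared on the same basis."""
    return school.per_pupil_spending + (IMPUTED_FACILITY_VALUE if school.colocated else 0)

a = CharterSchool("School A (hypothetical)", 15_000, colocated=True)
b = CharterSchool("School B (hypothetical)", 17_000, colocated=False)
print(comparable_spending(a), comparable_spending(b))  # 17500 17000
```

On reported figures School B looks like the bigger spender; once the facility is counted, the ordering flips.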

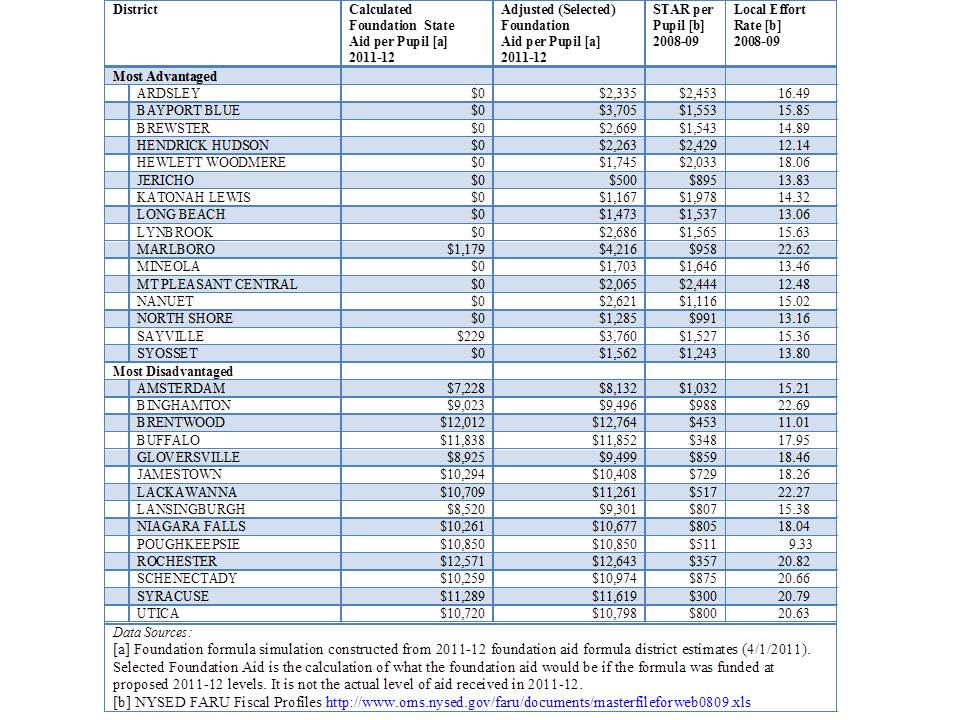

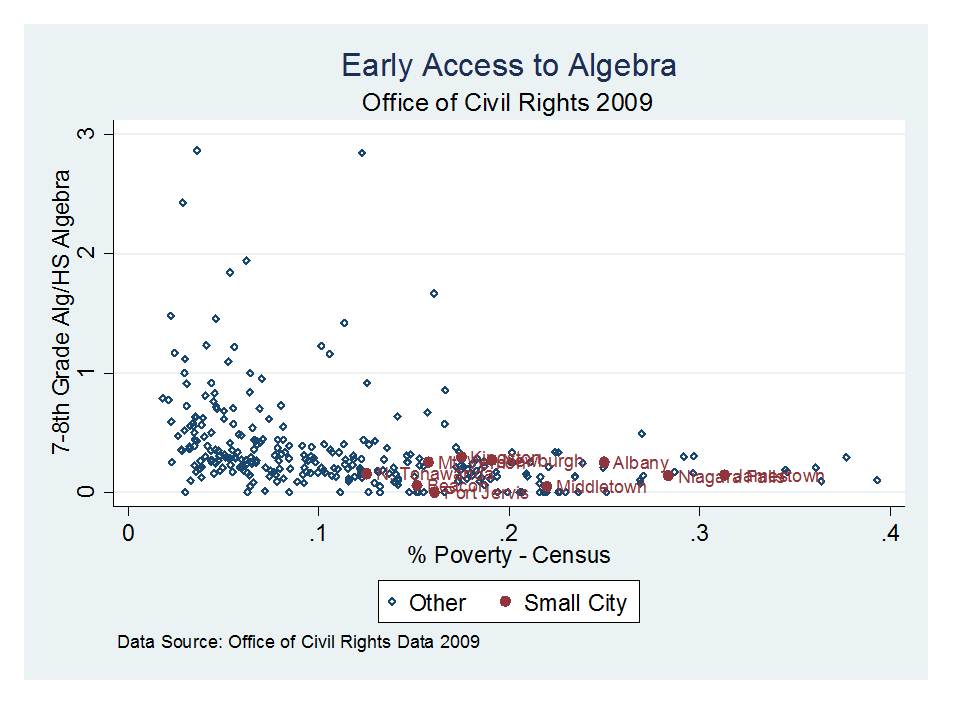

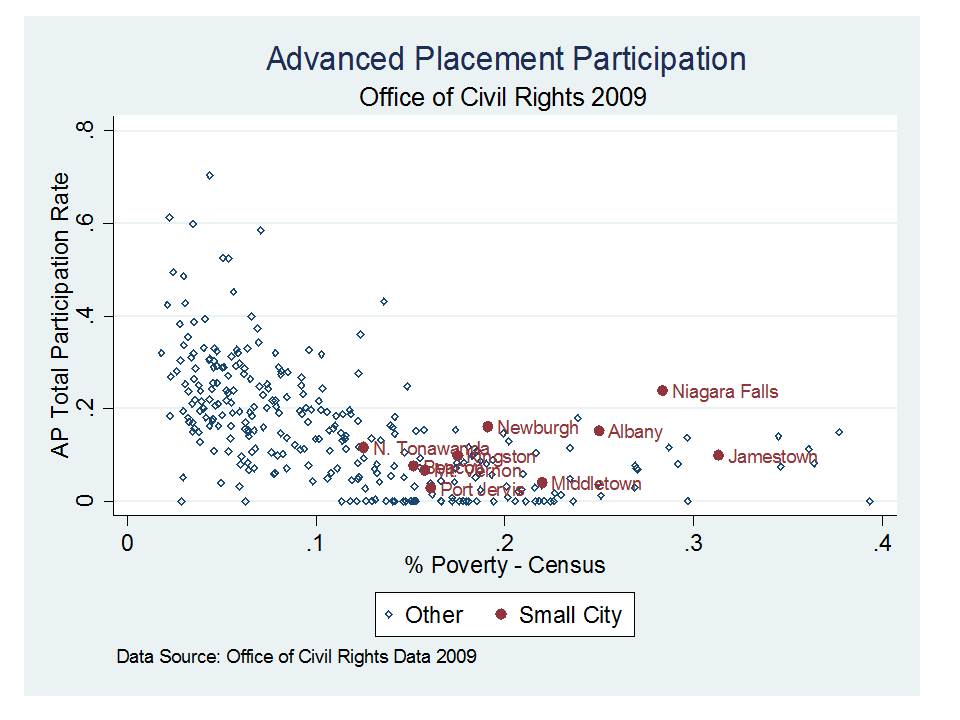

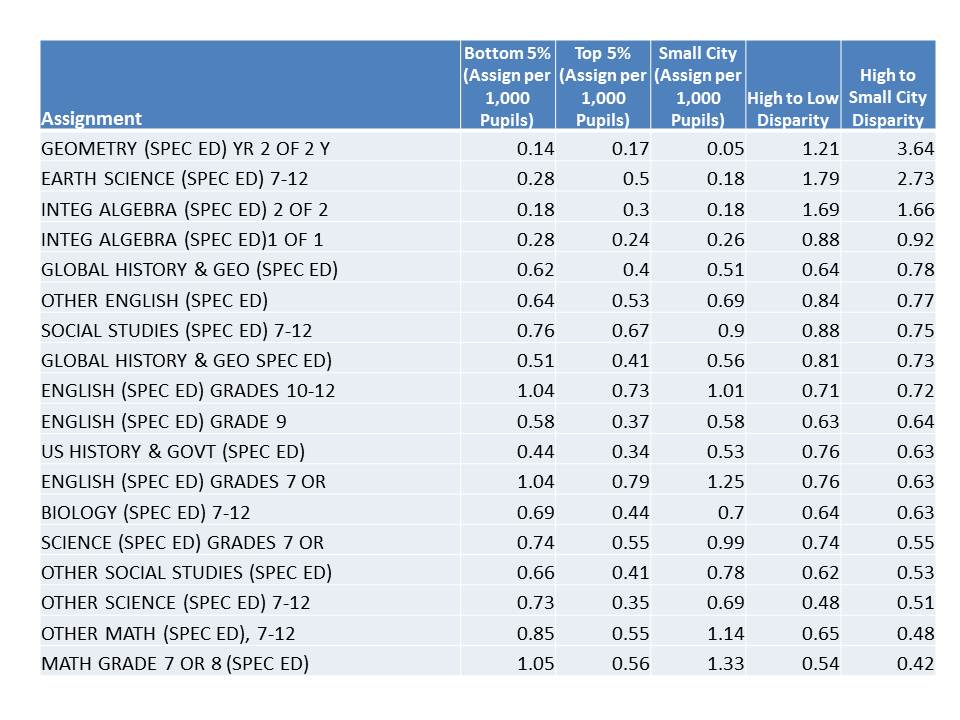

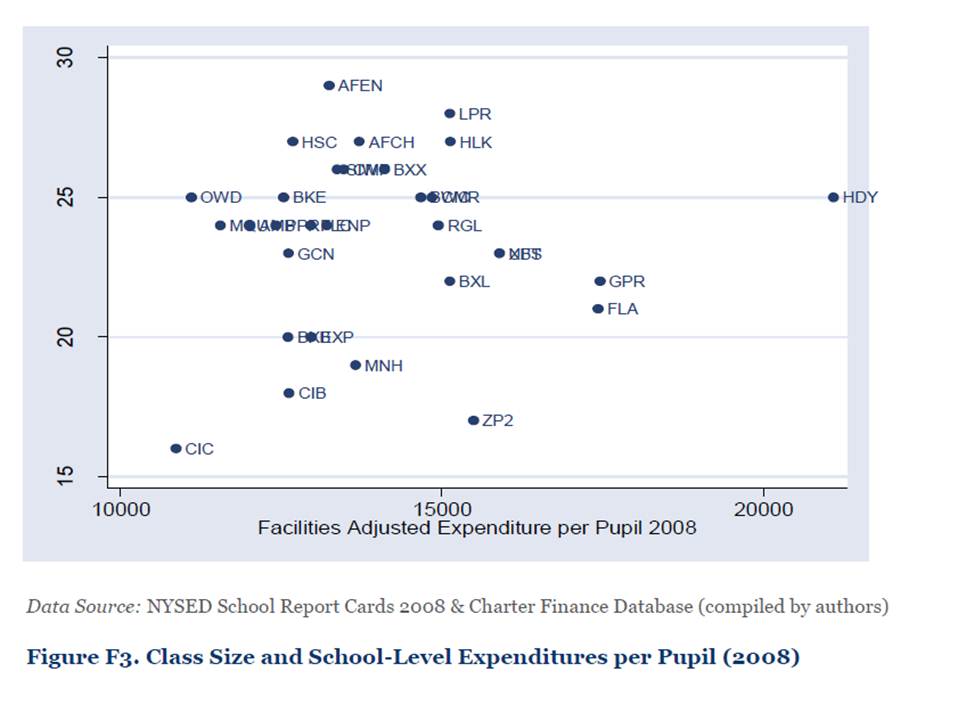

It turns out that because of the various structures, grade ranges and developmental stage of NYC charters, it’s hard to even discern a relationship between per pupil spending and class size, even after trying to account for the facilities cost differentials (typically, you’d get a pattern in this type of graph, with declining class size as per pupil spending increases).

FIGURE 4: B/F $ and Class Size
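The pattern you'd normally expect follows from simple arithmetic: holding teacher compensation fixed, classroom-teacher cost per pupil is compensation divided by class size, so smaller classes cost more per pupil. The $90,000 salary-plus-benefits figure below is hypothetical.

```python
def teacher_cost_per_pupil(teacher_compensation: float, class_size: float) -> float:
    """Classroom-teacher cost per pupil: one teacher's salary and benefits
    divided across the students in the class (a deliberate simplification)."""
    return teacher_compensation / class_size

# Hypothetical $90,000 salary-plus-benefits figure
for size in (25, 20, 15):
    print(size, teacher_cost_per_pupil(90_000, size))
# 25 3600.0
# 20 4500.0
# 15 6000.0
```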

Some more detail on NYC KIPP spending here: https://schoolfinance101.com/wp-content/uploads/2011/10/slide81.jpg


The reality is that the wacky and large expenditure variations that exist across NYC charter schools don’t seem to be correlated with much of anything, individually, but are correlated with school size and grade range (r-squared between .5 and .6 for those).

Capturing an accurate and precise representation of NYC charter school spending is messy. Not even trying is embarrassing and inexcusable.

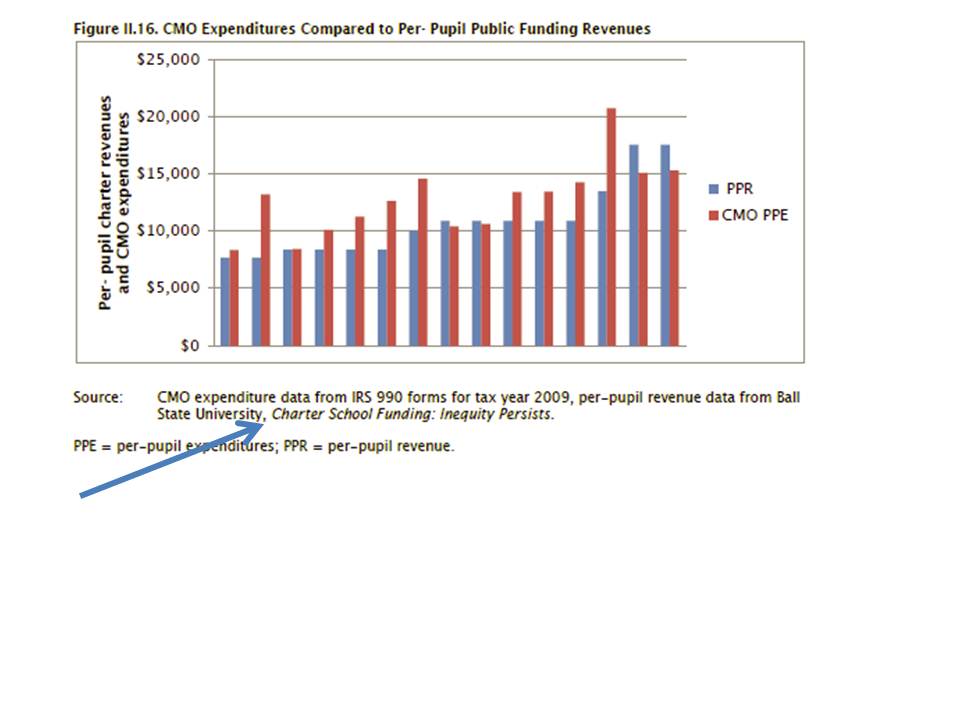

Even worse, and most frustrating about this particular paper by Dobbie and Fryer, is the absurd lack of documentation, or any real descriptives on the measures they used. Dobbie and Fryer present only a very limited descriptive on per pupil spending (above). We have no idea what Dobbie and Fryer believe are the actual ranges of per pupil spending across their sample of schools. Rather, we have only a measure of how far above the mean the high expenditure charters are (I don’t mind standardizing measures, but I like to see what I’m dealing with first!). This information is presumably drawn from their survey, with no definition whatsoever of what is even meant by “per pupil expenditures” [which is not always a simple question]. Did the costs of wrap-around services in Harlem Children’s Zone count? Dobbie and Fryer’s earlier back-of-the-napkin estimates of HCZ wrap-around costs (see below) fall well short of the revenue we identified for HCZ in our report by actually looking at their financial statements.
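Why standardized figures alone aren't enough: two hypothetical sets of per pupil spending with very different ranges produce identical z-scores, so a table reporting only standard-deviation units can't tell you which situation you're looking at.

```python
from statistics import mean, stdev

def z_scores(values):
    """Standardize: distance from the mean in sample-standard-deviation units."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]

narrow = [10_000, 12_000, 14_000]  # hypothetical per pupil spending ($)
wide = [10_000, 20_000, 30_000]    # hypothetical per pupil spending ($)
print(z_scores(narrow))  # [-1.0, 0.0, 1.0]
print(z_scores(wide))    # [-1.0, 0.0, 1.0]
```

A $4,000 spread and a $20,000 spread look exactly alike once standardized.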

Even if Dobbie and Fryer did find, in appropriately documented analyses, using more accurate/precise and appropriate spending measures, that spending was not correlated with charter effectiveness estimates in NYC, this would be a very limited finding.

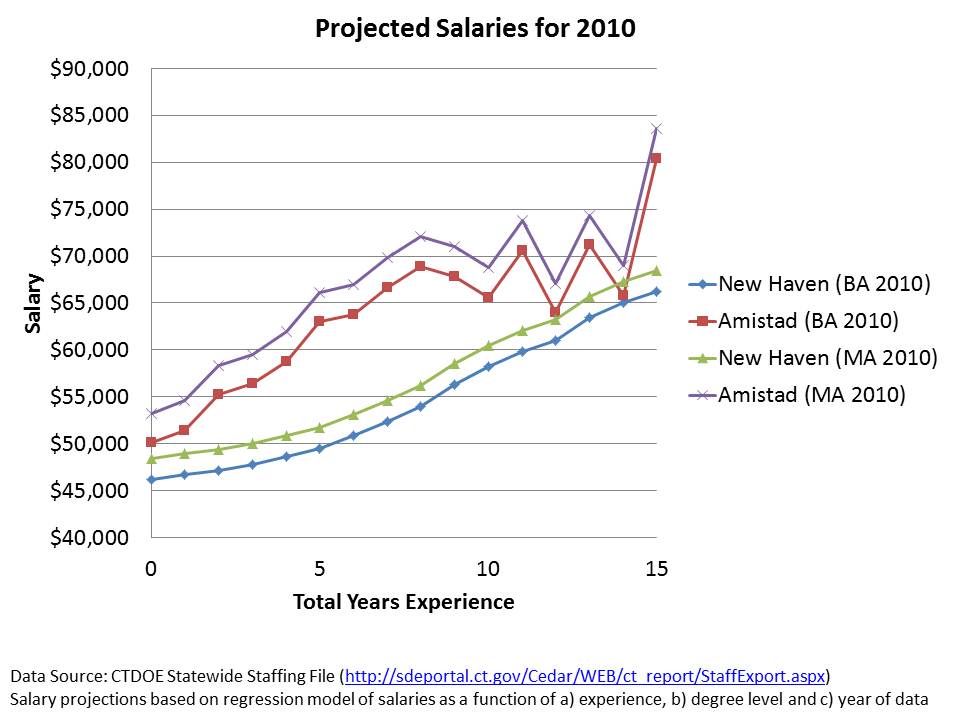

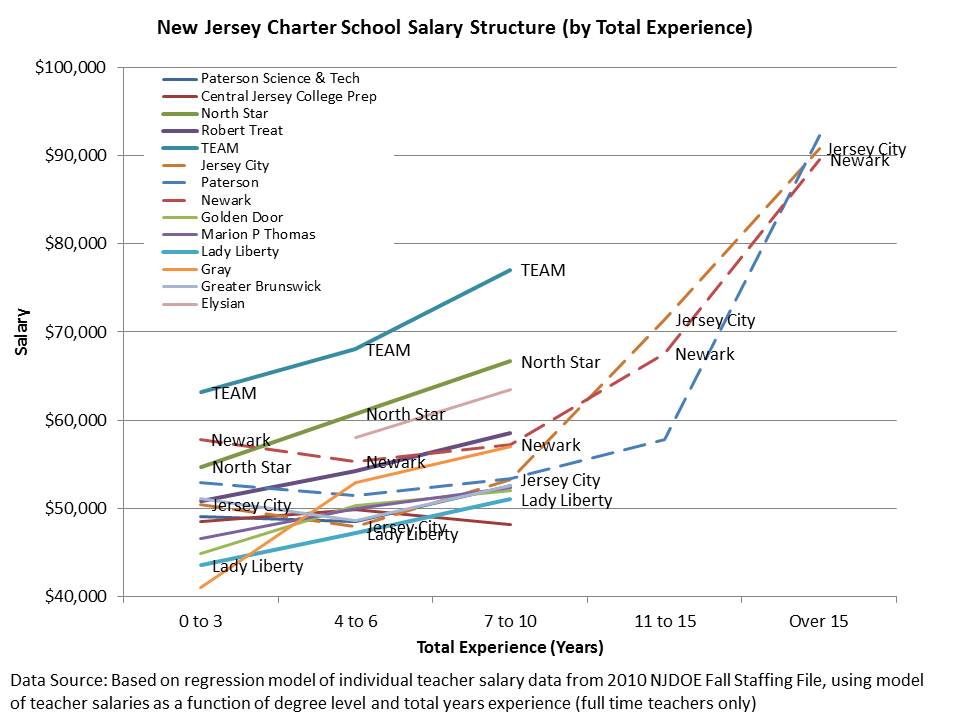

The finding is even more limited given that the supposedly resource neutral strategies used in their “no excuses” schools aren’t resource neutral at all. Yet the cost implications of these resource intensive strategies are not carefully explored (similar to the unsatisfying lack of real cost analysis in Fryer’s recent Houston Apollo program study – again, no documentation at all!).

Dobbie and Fryer’s NYC charter study adds nothing to the larger debate on the importance of class size, or financial resources toward improving school quality and/or student outcomes. A much richer, more rigorous literature on this topic already exists, and I will provide a thorough review of that literature at a future point in time.

Tip – surveys of interested parties are not how to get information on finances. Audited financial statements are probably a better starting point, and two forms of such data are available for nearly all NYC charter schools. Further, where specific programs/services are involved, a thorough resource cost analysis (ingredients method) is warranted. This is School Finance (or Econ of Ed) 101.
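A minimal sketch of a first-pass consistency check between two such sources (say, audited financial statements and IRS filings), assuming per pupil spending has already been extracted from each. The school names, figures, and the 5 percent tolerance are all hypothetical.

```python
def flag_discrepancies(report_a, report_b, tolerance=0.05):
    """Return schools whose per-pupil spending differs between two sources
    by more than `tolerance`, measured as a share of the larger figure."""
    flagged = {}
    for school in report_a.keys() & report_b.keys():  # schools present in both
        a, b = report_a[school], report_b[school]
        gap = abs(a - b) / max(a, b)
        if gap > tolerance:
            flagged[school] = round(gap, 3)
    return flagged

audited = {"School A": 15_200, "School B": 18_900, "School C": 21_000}
irs_filing = {"School A": 15_000, "School B": 22_500, "School C": 21_300}
print(flag_discrepancies(audited, irs_filing))  # {'School B': 0.16}
```

Flagged schools are where the real digging starts: figuring out which revenues and expenditures each source counts.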

Other examples of sloppy, poorly documented cost/benefit inferences from recent Dobbie and Fryer papers:

Here’s a segment identified as cost-benefit analysis from Dobbie and Fryer’s paper on Harlem Children’s Zone:

The total per-pupil costs of the HCZ public charter schools can be calculated with relative ease. The New York Department of Education provided every charter school, including the Promise Academy, $12,443 per pupil in 2008-2009. HCZ estimates that they added an additional $4,657 per-pupil for in school costs and approximately $2,172 per pupil for after-school and “wrap-around” programs. This implies that HCZ spends $19,272 per pupil. To put this in perspective, the median school district in New York State spent $16,171 per pupil in 2006, and the district at the 95th percentile cutpoint spent $33,521 per pupil (Zhou and Johnson, 2008).

http://www.economics.harvard.edu/files/faculty/21_HCZ_Nov2009_NBERwkgpaper.pdf
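The quoted total is straightforward addition. Reproducing it also shows how much of the total rests on HCZ's own estimates rather than on audited figures. The numbers are exactly as quoted; nothing else is assumed.

```python
# Figures as quoted from the HCZ working paper (2008-2009)
base_charter_funding = 12_443  # NYC Department of Education payment per pupil
added_in_school = 4_657        # HCZ's own estimate
added_wraparound = 2_172       # HCZ's own estimate ("approximately")

total = base_charter_funding + added_in_school + added_wraparound
self_reported_share = (added_in_school + added_wraparound) / total
print(total, round(self_reported_share, 2))  # 19272 0.35
```

Roughly a third of the headline figure comes from HCZ's estimates of its own add-on spending.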

This paper on Harlem Children’s Zone makes no attempt to validate the $4,657 figure, and provides no documentation from financial reports to reconcile it. In our NEPC report we discuss the range of likely expenditures for HCZ, based on mining actual IRS filings and audited financial reports; $4,657 would fall below our low estimates (though 2 years earlier). Further, it is absurd to compare HCZ spending to New York State median spending without any consideration for variations in regional costs. It is far more reasonable to compare the relevant spending components to similar schools within NYC serving similar student populations. Their statement about perspective puts absolutely nothing into perspective, or at least not into any relevant perspective.
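A rough sketch of why the statewide comparison is uninformative: deflating the HCZ figure by a regional cost index changes its ratio to the state median considerably. The index values are hypothetical, the statewide median is treated as sitting at an index of 1.0 (a simplification), and this is no substitute for the comparison to similar NYC schools argued for above.

```python
HCZ_PER_PUPIL = 19_272        # as quoted
NYS_MEDIAN_DISTRICT = 16_171  # as quoted

def ratio_to_state_median(spending: float, regional_cost_index: float) -> float:
    """Deflate spending by a regional cost index (1.0 = statewide average
    input prices), then divide by the statewide median district figure."""
    return (spending / regional_cost_index) / NYS_MEDIAN_DISTRICT

# Hypothetical index values; the quoted comparison implicitly assumes 1.0.
for index in (1.0, 1.1, 1.2, 1.3):
    print(index, round(ratio_to_state_median(HCZ_PER_PUPIL, index), 2))
# 1.0 1.19
# 1.1 1.08
# 1.2 0.99
# 1.3 0.92
```

Whether HCZ looks "above" or "below" the state median depends entirely on an assumption the quoted passage never makes explicit.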

Here’s all of the information provided in the Apollo 20 no excuses Houston public schools study:

The experiment’s cost of roughly $2,042 per student – 22 percent of the average per pupil expenditure and similar to the costs of “No Excuses” charters – could seem daunting to a cash strapped district, but taking the treatment effects at face value, this implies a return on that investment of over 20 percent.

http://www.hisd.org/HISDConnectEnglish/Images/Apollo/apollo20whitepaper.pdf
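The quoted passage does at least pin down an implied baseline: if $2,042 is 22 percent of average per pupil expenditure, that average is roughly $9,300, though the paper states neither the baseline nor its source. A minimal check, assuming the 22 percent was rounded to the nearest whole percent:

```python
cost_per_student = 2_042
stated_share = 0.22  # "22 percent", presumably rounded

implied_average = cost_per_student / stated_share
# If 22 percent was rounded, the true share lies somewhere in [0.215, 0.225).
low, high = cost_per_student / 0.225, cost_per_student / 0.215
print(round(implied_average), round(low), round(high))  # 9282 9076 9498
```

The 20 percent return figure cannot be checked the same way, since the paper gives neither the effect-to-earnings assumptions nor the discounting behind it.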

The $2,042 figure is not documented at all. This is where a resource cost analysis would be appropriate (identifying the various resources that go into providing these services, the input prices of those resources, and determining the total costs). Further, the paper cites no source showing that no excuses charters spend about the same. Where? When? And $2,000 per pupil in Texas is one thing; in New York it is something else entirely. This stuff isn’t trivial, and such omissions are shameful and inexcusable.
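A minimal sketch of the ingredients method described above: list each resource, multiply its quantity by its input price, sum, and divide by the students served. Every ingredient, quantity, and price here is hypothetical; a real analysis would also price in-kind resources and use input prices for the relevant location (Texas vs. New York).

```python
from dataclasses import dataclass

@dataclass
class Ingredient:
    name: str
    quantity: float    # units of the resource used per year
    unit_price: float  # input price per unit ($)

    @property
    def cost(self) -> float:
        return self.quantity * self.unit_price

def per_pupil_cost(ingredients, students):
    """Ingredients method: total cost = sum of quantity x price over all
    resources, divided by the number of students served."""
    return sum(i.cost for i in ingredients) / students

# All quantities and prices below are hypothetical placeholders.
program = [
    Ingredient("full-time tutors", 10, 40_000),
    Ingredient("extended-day teacher hours", 5_000, 50),
    Ingredient("instructional materials (per student)", 300, 100),
]
print(round(per_pupil_cost(program, students=300), 2))  # 2266.67
```

The value of the exercise is less the final number than the itemized list: every quantity and price is out in the open where it can be checked.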