Update: Here are a bunch of additional graphs relating Students First Report Card grades with unadjusted and adjusted NAEP Gains (hint – it’s the adjusted gains that matter since low performing states are able to post bigger gains, and also generally received higher grades from Students First). Mis_naep_ery9

Yesterday gave us the release of the 2013 NAEP results, which of course brings with it a bunch of ridiculous attempts to cast those results as supporting the reform-du-jour. Most specifically yesterday, the big media buzz was around the gains from 2011 to 2013 which were argued to show that Tennessee and Washington DC are huge outliers – modern miracles – and that because these two settings have placed significant emphasis on teacher evaluation policy – that current trends in teacher evaluation policy are working – that tougher evaluations are the answer to improving student outcomes – not money… not class size… none of that other stuff.

I won’t even get into all of the different things that might be picked up in a supposed swing of test scores at the state level over a 2 year period. Whether 2 year swings are substantive and important or not can certainly be debated (not really), but whether policy implementation can yield a shift in state average test scores in a two year period is perhaps even more suspect.

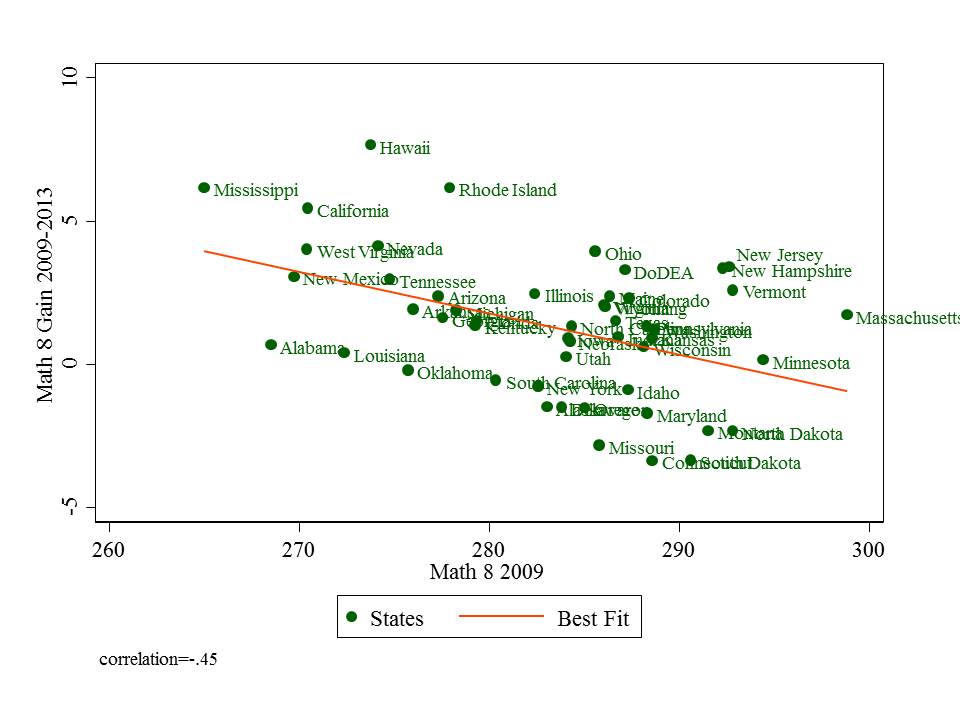

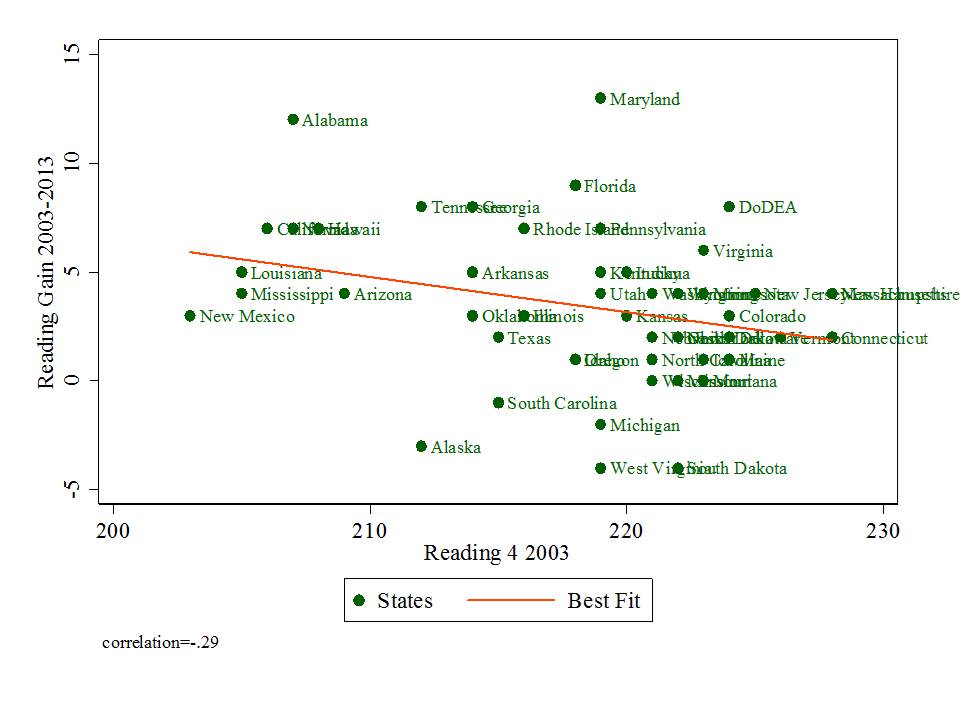

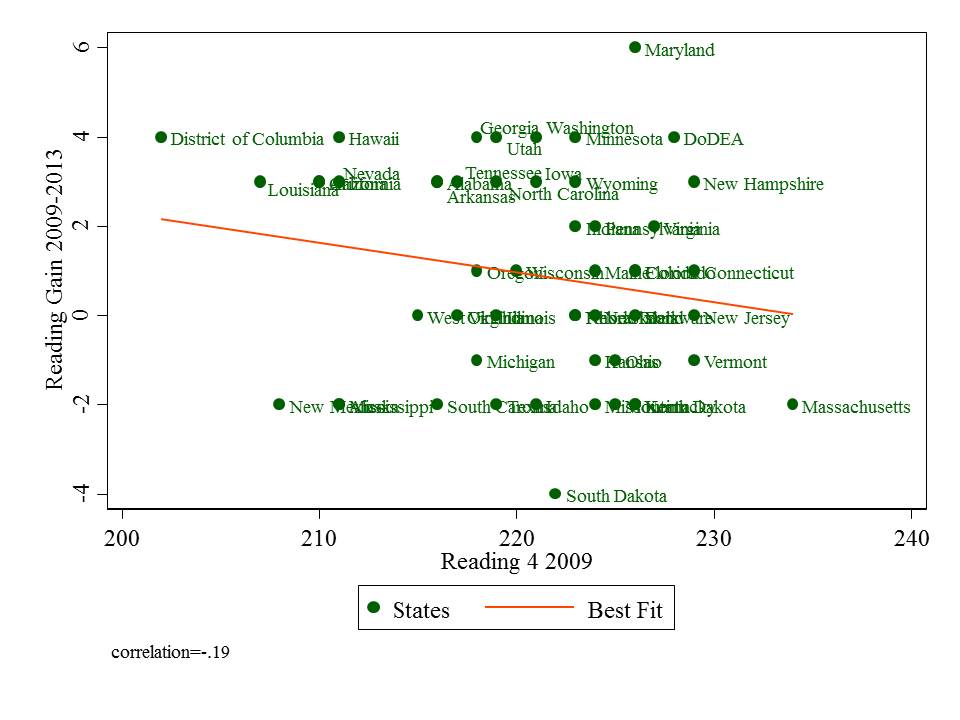

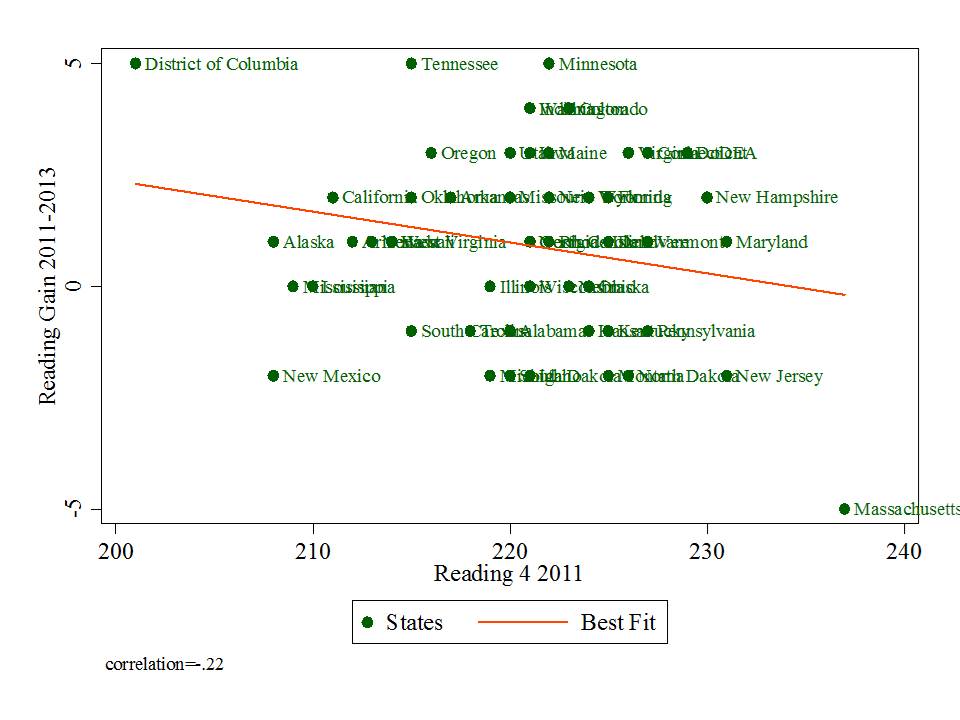

Setting all that aside, let’s just take a step back and look at the NAEP data, changes in scores from 03-13, 09-13 and 11-13 for 4th grade reading and 8th grade math. BUT, as I’ve shown before, since gains on NAEP appear correlated with starting point – lower performing states show higher gains, let’s condition those gains on starting point by representing them in scatterplots against starting points.
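For readers who want to see what "conditioning on starting point" amounts to, here is a minimal sketch. It assumes a simple straight-line fit of gain on starting score and treats each state's distance from that line as its adjusted gain. The function names and the five-state numbers are made up for illustration; they are not actual NAEP scores, and this is not necessarily how the figures in this post were produced.

```python
from statistics import mean

def fit_line(x, y):
    """Ordinary least squares for y = a + b*x; returns (a, b)."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = my - b * mx
    return a, b

def adjusted_gains(start, end):
    """Gain minus the gain predicted from starting score (the residual)."""
    gains = [e - s for s, e in zip(start, end)]
    a, b = fit_line(start, gains)
    return [g - (a + b * s) for s, g in zip(start, gains)]

# Hypothetical scale scores for five imaginary states (NOT real NAEP data).
start = [210.0, 215.0, 220.0, 225.0, 230.0]
end = [218.0, 219.0, 226.0, 227.0, 231.0]

slope = fit_line(start, [e - s for s, e in zip(start, end)])[1]
resid = adjusted_gains(start, end)

print("slope of gain on starting score:", round(slope, 2))
print("adjusted gains:", [round(r, 2) for r in resid])
```

In this toy data the slope is negative (lower starters gain more), so a state is only interesting if it sits above the line, not merely because its raw gain is large.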

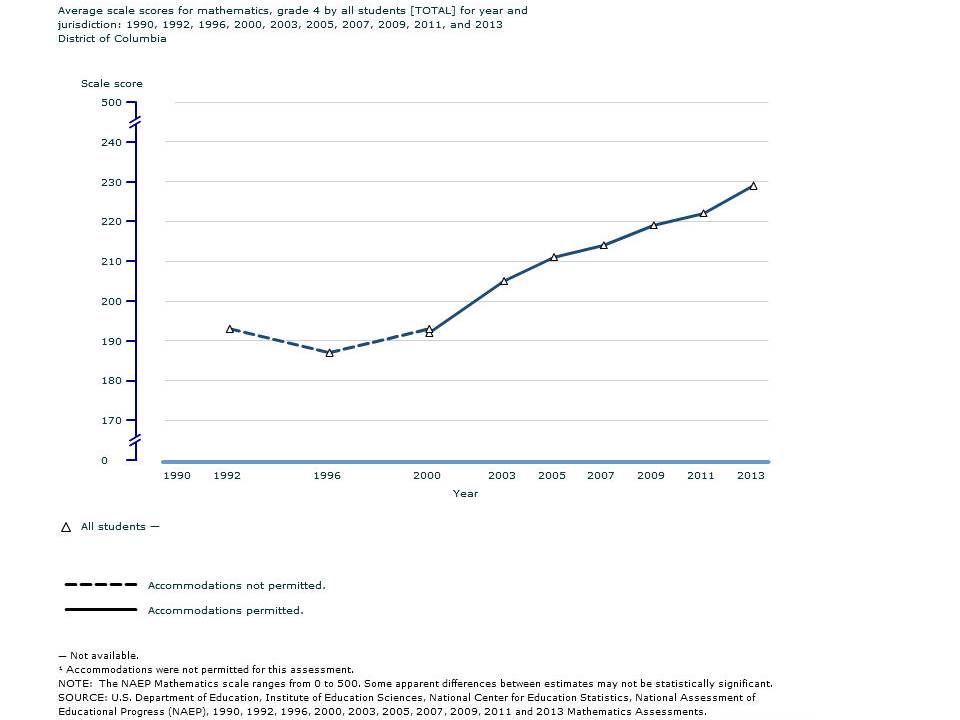

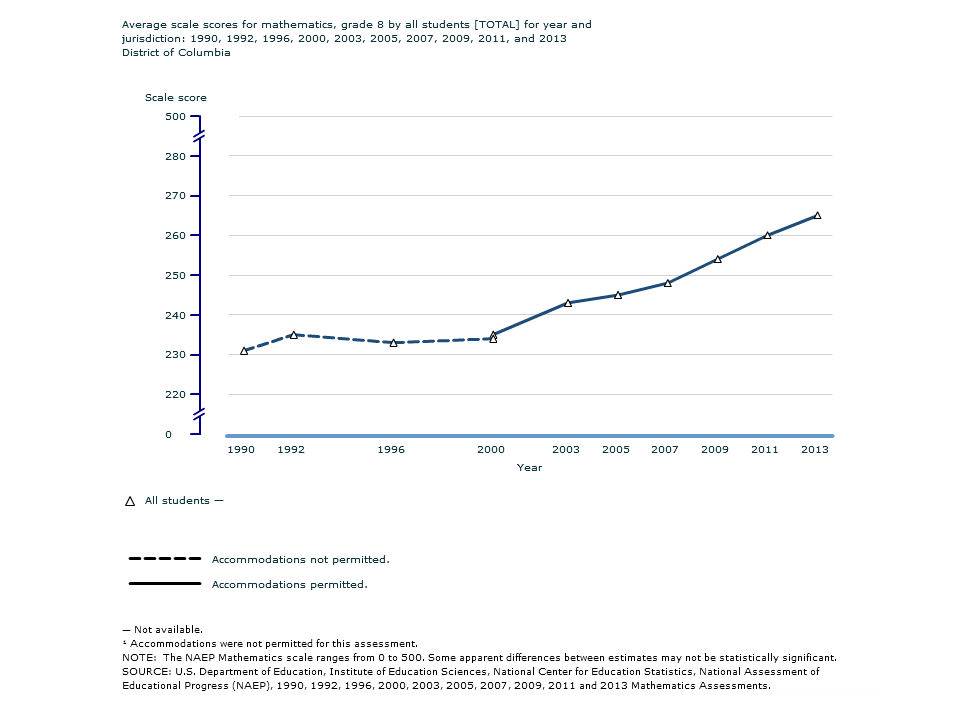

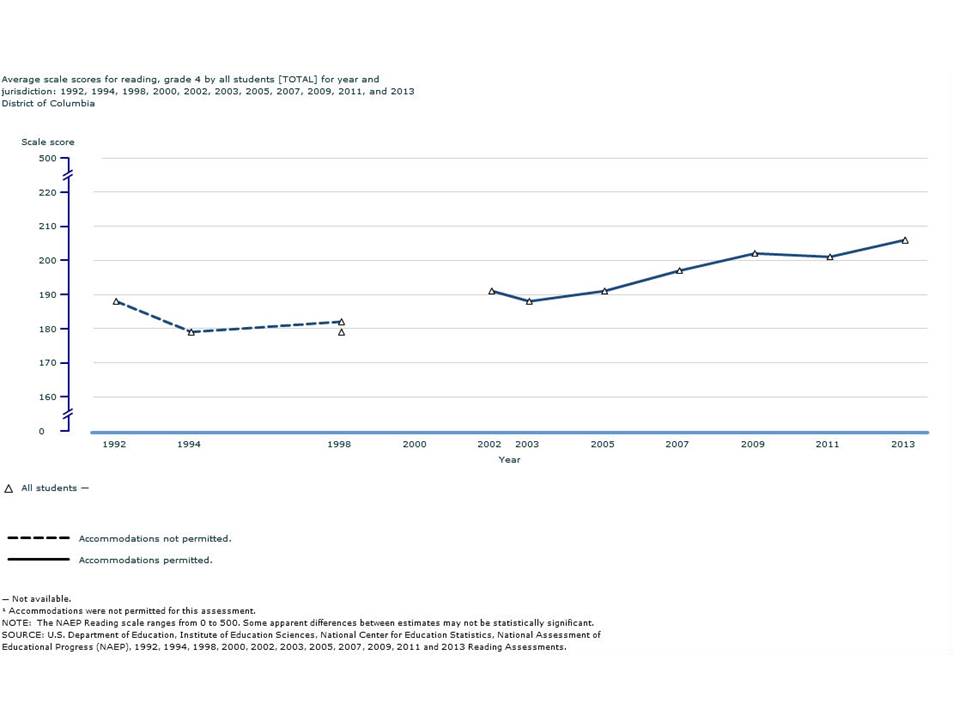

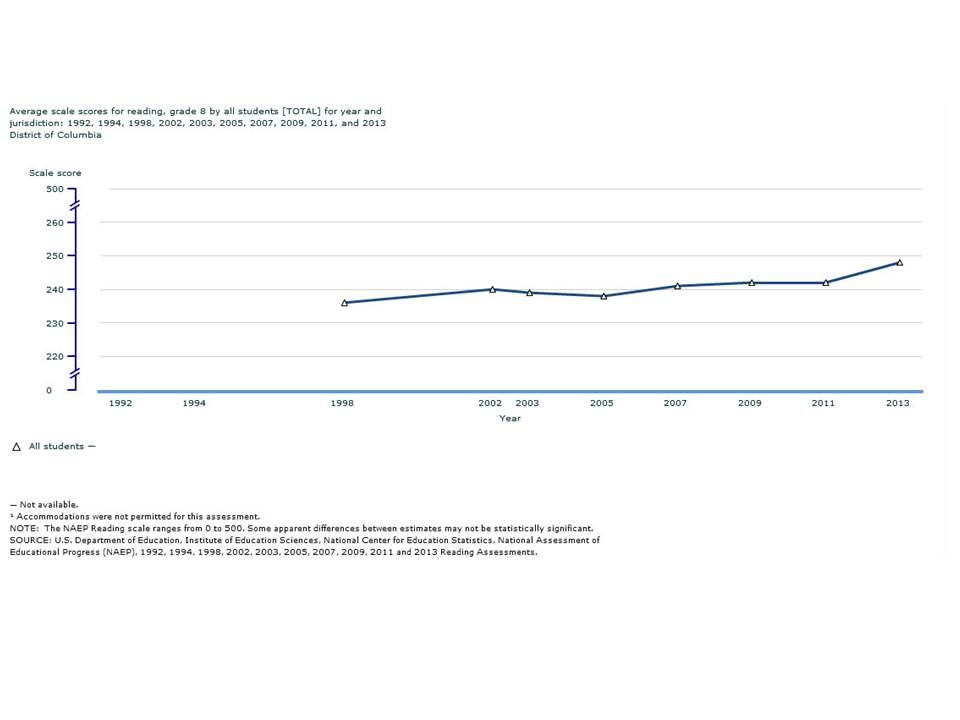

Here are the figures. In some of the figures below, I’ve cut out Washington, DC because it is such a low performing outlier. It does creep into the picture as its scores rise. But this is a rise over the longer haul, much prior to teacher evaluation reforms.

If teacher evaluation reform (or expanded choice, etc.) has caused great NAEP gains, then the graphs below should show that especially from pre-RTTT baseline year 2009 to 2013, states adopting RTTT-style teacher eval policies should be rising above the trendline – but not those curmudgeonly states that have lagged in such reform efforts.
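That check can be written down in a few lines. The sketch below assumes you already have each state's distance from the trendline (its adjusted gain) and a yes/no flag for whether it adopted the policy; both lists are hypothetical placeholders, not real state data. It is a descriptive comparison only and says nothing about causation.

```python
from statistics import mean

def summarize_by_group(residuals, adopted):
    """For adopters (True) and non-adopters (False), return
    (mean residual, share of states above the trendline)."""
    out = {}
    for flag in (True, False):
        group = [r for r, a in zip(residuals, adopted) if a == flag]
        share_above = sum(r > 0 for r in group) / len(group)
        out[flag] = (mean(group), share_above)
    return out

# Hypothetical adjusted gains and policy flags for six imaginary states.
residuals = [0.6, -1.8, 1.8, -0.6, 0.0, 1.0]
adopted = [True, True, False, False, True, False]

summary = summarize_by_group(residuals, adopted)
for flag, (avg, share) in summary.items():
    label = "adopters" if flag else "non-adopters"
    print(f"{label}: mean residual {avg:.2f}, share above line {share:.2f}")
```

If the reform story were right, adopters would show the higher mean residual and the larger share above the line. In this made-up example they do not.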

Over the 10 year period, Maryland is the miracle state in 4th grade reading. Matt Di Carlo has pointed this out in the past. Florida does okay, and Alabama is also a standout. New Jersey and Massachusetts – both initial high performers also exceed expectations given starting point. Louisiana falls right on the line.

From 2009-2013, Maryland remains the standout. Georgia, Washington, Utah and Minnesota also do pretty darn well, and yes… Tennessee is in that next batch, but even Wyoming and New Hampshire beat expectations by more, having started higher. Louisiana beats expectations. In any case, it’s hard to make a case that from 2009 to 2013, states that moved most aggressively on teacher evaluation are those that showed greatest gains.

On the recent 2-year bump, Tennessee and DC do quite well, but so too does Minnesota. Colorado (another teacher eval state) does pretty well on this one. This graph may provide the “best” (albeit painfully weak, suspect and short term) “evidence” for teacher eval states – well – except for Minnesota, which I don’t believe was leading the reformy pack on that issue. Of course there are also those who wish to point to choice policies as the driver – noting Indiana’s presence in the mix – but similar inconsistencies undermine this argument (with larger and smaller charter and voucher share states falling, well, all over the place in this figure – but that does warrant some additional figures at a later point.)

Let’s move to 8th grade math.

Grade 8 Math 2003-2013

New Jersey and Massachusetts lead the way on 10 year gains – even though they started high – with Vermont and New Hampshire doing okay as well. Hawaii also isn’t looking bad here. But Louisiana, despite starting low, posted lack-luster gains. Tennessee is right below Nevada – falling pretty much in line with expectations.

Grade 8 Math 2009-2013

From 2009 to 2013, New Jersey and Massachusetts along with Rhode Island, Hawaii, Ohio, California and Mississippi do pretty well. Not your most reformy mix of states – regarding teacher evaluation or choice programs (but for Ohio’s charter expansion). Louisiana is still sucking it up, and Tennessee falling more or less in line with expectations.

Grade 8 Math 2011-2013

Finally, in the much noisier two year bump on math from 2011 to 2013, we get a little more spreading out – because a two year bump is noisier – less certain – less decisive in any way, and also less related to initial level. Here, New Jersey and Massachusetts are still about as far above expected growth as is Tennessee, which for the first time jumps above expectations for grade 8 math growth. DC does creep into the picture here, and posts some pretty nice gains. BUT… the issue with DC is that its average starting point is so low that it’s hard to predict accurately what its gain would likely be.

Is Tennessee’s 2-year growth an anomaly? We’ll have to wait at least another two years to figure that out. Was it caused by teacher evaluation policies? That’s really unlikely, given that those states that are equally and even further above their expectations have approached teacher evaluation in very mixed ways, and other states that had taken the reformy lead on teacher policies – Louisiana and Colorado – fall well below expectations.

UPDATE: Classic example of Mis-NAEP-ery

Latest NAEP school test scores suggest that school reform helps. Big improvements in DC & Tennessee, both centers of reform.

— Nicholas Kristof (@NickKristof) November 8, 2013

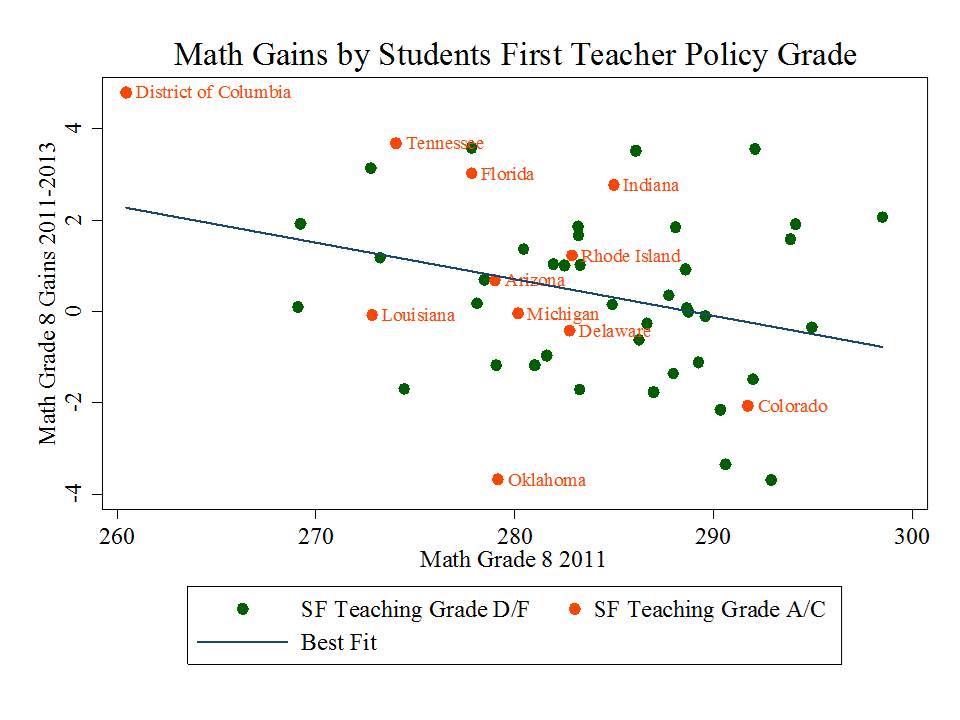

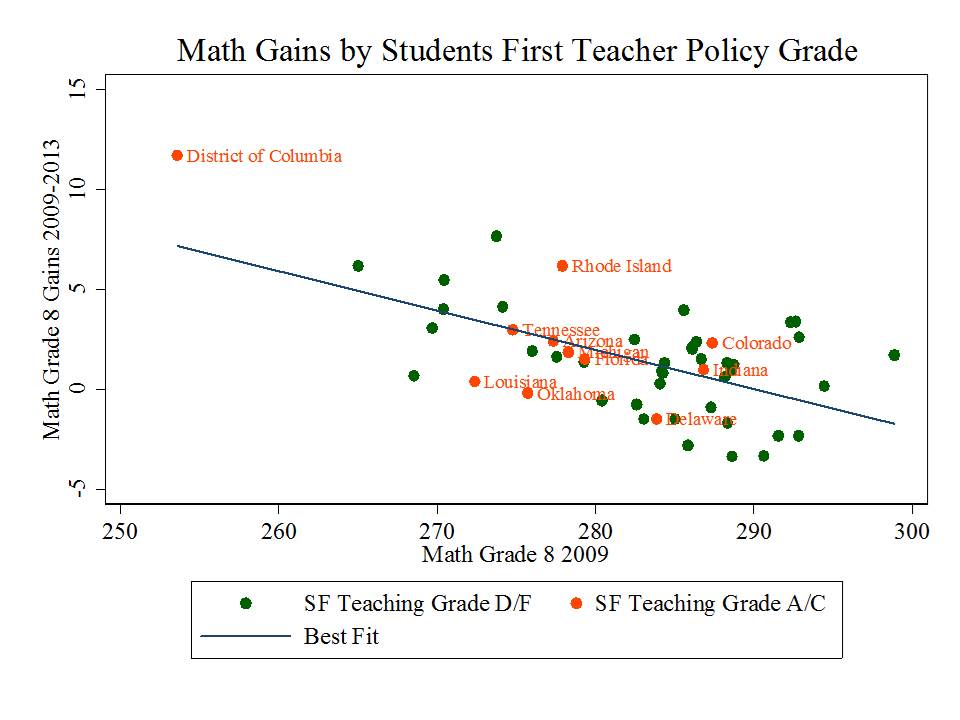

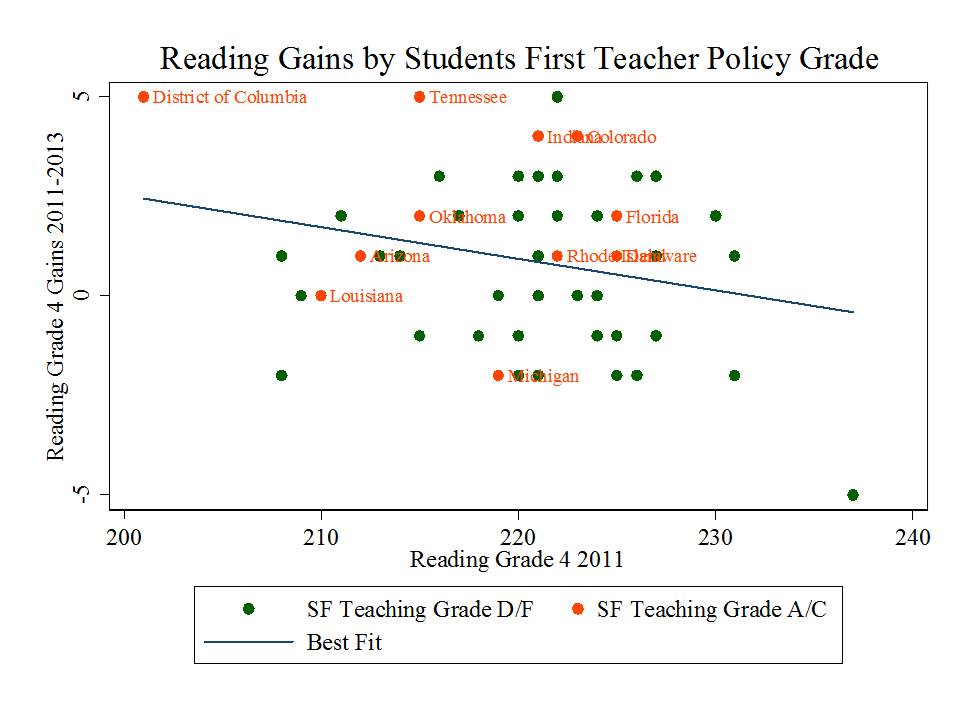

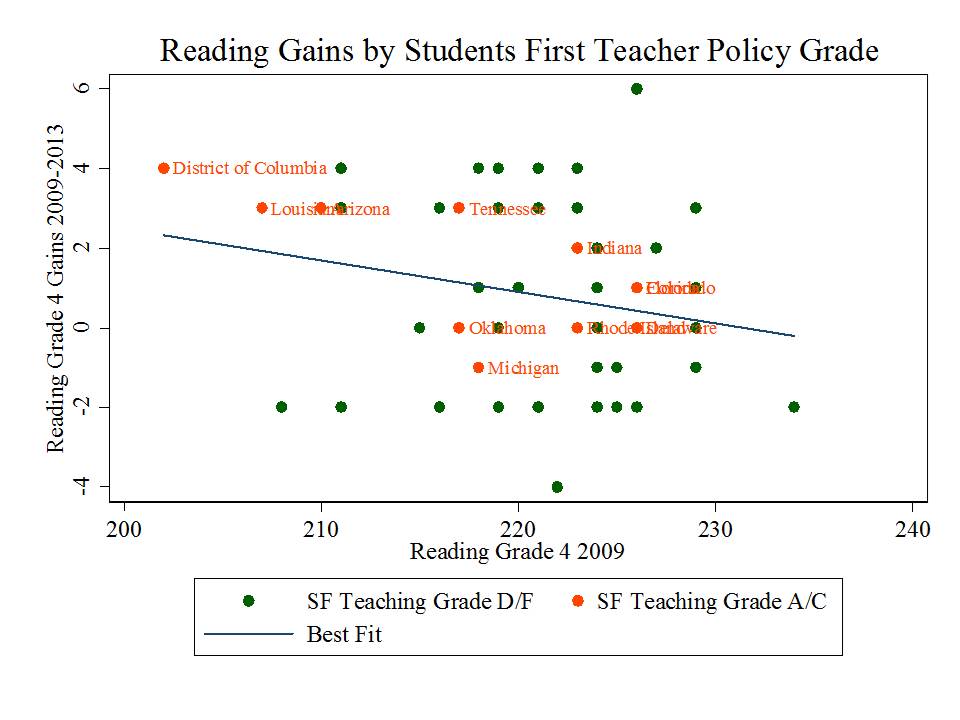

Here are some additional versions of the figures above, in which I have identified the states that received passing grades from Students First for “teaching” related policies.

Clarification: The graphs below separate states that received above/below a “teaching” grade point average of 2.0 from Students First.

Another UPDATE: Here are the trends on DC score improvements… So, in other words, are you really telling me that teacher contractual changes adopted in the last few years affected student gains starting back in 1996?

For DC, doesn’t changing student demographics need to be factored in? IIRC, there’s been significant changes the last few years.

absolutely… needs to be factored in across the board. These are cohort changes… which, as much as anything, reflect changing makeup of cohorts.

Bruce, these graphs are fascinating but they make it hard to see many states (e.g. Rhode Island) for some results. Are tables available? Keep up the tremendous work! Rick Richards

I’ll see what I can do… it is a pain. I can hand edit these, pulling state labels off one another.

Even if it’s true that reform can be linked to increased test scores, so what? Do test scores have predictive validity beyond future test scores?

Sometimes one must wonder if data really just doesn’t matter to anyone, even the data drivel reform crowd (many of whom don’t understand the data themselves). I remember the old saying that data could be made to prove any point – and this at graduate school at Teachers College (no swipe at my alma mater, but innumeracy and statistical illiteracy are the norm in education, even in a top school of education like TC). Key pt is that the data being discussed cannot and probably should not be used for making general claims about policy effects in totally uncontrolled situations, with multiple variables at many different levels affecting a very complex and also changing set of outcome measures. Bruce is at least trying to control for obviously important starting-point effects. But this misses dozens of confounding variables with known effects (and possibly hundreds more with unknown effects): cohort issues, changing demographics, changing classroom size, changing teacher demographics, characteristics and teaching styles, changes in leadership and school management approaches, changing economics and school budgets, changing politics, changing education practices, etc. Sometimes it’s just better to say – we simply do not know, and we do not have the data to tell. And it’s almost always a mistake to make sweeping decisions or even make general statements to forward policy that affects tens of millions. Sometimes (often in fact) it’s better to be humble and be very, very cautious about overextending the reach of our data quality and our analytical possibilities.

I have a question – why should there be gains all the time? We’re not even talking about test results from the same kids over years. Isn’t this the initial fallacy with this whole idea?

Indeed… these are merely cohort changes, which is why in some cases, shifting scores over time may merely reflect shifting demography. NAEP long term trend data essentially ask whether the next cohort appears, in terms of test scores, better prepared than the previous. But if that next cohort includes more kids in poverty, etc., we may have improved our delivery, but may still not see a higher outcome. We may have merely done okay at offsetting the increase in poverty. Or, the alternative: as in DC, declining poverty/gentrification, including re-entry of some into the public schooling system (via segregated charters & neighborhood schools), may actually lead to increases in scores over cohorts.