I’ve reached a point after these past few years where I feel that I’ve spent way too much time critiquing poorly constructed arguments and shoddy analyses that seem to be playing far too large a role in influencing state and federal (especially federal) education policy. I find this frustrating not because I wish that my own work got more recognition. I actually think my own work gets too much recognition as well, simply because I’ve become more “media savvy” than some of my peers in recent years.

I find it frustrating because there are numerous exceptional scholars doing exceptional work in school finance and the economics of education whose entire body of rigorous, disciplined research seems drowned out by a few prolific hacks with connections in the current policy debate. It may come as a surprise to readers of popular media, but individuals like Mike Petrilli, Eric Osberg, Rick Hess (all listed on the USDOE resource web site) or Bryan Hassel wouldn’t generally be considered credible scholars in school finance or economics of education. I’d perhaps have less concern – and be able to blow this off – if many of the assertions being made by these individuals – and others – weren’t so often completely unsupported by reasonable analysis and if those assertions didn’t lead to potentially dangerous and damaging policies.

This post is specifically about the body of methodologically flimsy research produced in recent years by Marguerite Roza, previously of the Center on Reinventing Public Education and currently an advisor to the Gates Foundation.

Why this post now? I’ve simply lost my patience.

This post is in part a response to the recent unveiling of the U.S. Dept. of Education web site on improving educational productivity http://www.ed.gov/oii-news/resources-framing-educational-productivity. Amazingly, this site lists primarily non-peer reviewed, shoddy work by Marguerite Roza and colleagues and bypasses entirely more serious research on educational productivity or methods for evaluating it. The quality of some of the examples on this site is particularly abysmal. Yet it is presented as “the work of leading thinkers in the field” (interesting that “thinkers” is used in place of “researchers”). Among the worst examples, this site lists as a credible resource the Center for American Progress Return on Investment analysis (by Ulrich Boser, a great writer on the topic of art theft but, in this case, a bit out of field).

I don’t mind so much that this stuff exists. But it certainly doesn’t belong in a serious policy conversation, nor does it represent “the work of leading thinkers in the field.”

Let’s start with a few common attributes of the worst-of-the-worst types of policy research floating around out there and warping and misguiding the education policy debates in general and school finance debates in particular. For lack of a better term, let’s just call it “hack research.”

Perhaps most importantly, hack research fails to recognize all of the credible work that’s already been done on a topic, typically because the research hack who produced it lacks entirely the discipline to bother to understand that body of work and how to build on it in order to come to new, credible findings and conclusions.

Further, hack research displays little regard for the connection between rigorous analysis and conclusions that may be drawn from it. This stems in part from the lack of discipline to actually conduct rigorous analyses.

Particularly effective hacks will not just ignore the body of existing scholarship but will do so belligerently, proclaiming that no good work has ever been done, that no credible methods of analysis exist, and that therefore the time is right for their own creative and new perspective! The hack substitute for a research method is usually some seemingly intuitive, completely shallow, poorly conceived back-of-the-napkin approach. In other words, the hack research motto is that we must think outside the box, because it’s just too much work to open and unpack that box!

Many of us start as hacks, but eventually grow out of it as we realize that there’s a lot of great stuff out there to read and exceptional scholars from which to learn. And, some non-hacky researchers will occasionally hack. Hack happens. It’s only really problematic when it’s a persistent pattern of hackyness or even gets worse over time.

The most dangerous hacks use their shtick to influence policy with catchy anecdotes, convincing policymakers and major players that they need look no further (at real research, for example) than their own hacky “research.” And the most effective hacks can spin findings that never were into pure urban legend – well-accepted myths turned realities – with serious policy implications!

Let’s take a look at a number of mythical findings from shoddy research produced by Marguerite Roza in recent years, including a few sources cited on the USDOE resources page.

Myth #1: States have largely solved between district funding disparities and within district disparities are the remaining problem of the day.

Sources of the myth: See references in Baker/Welner article (cited below)

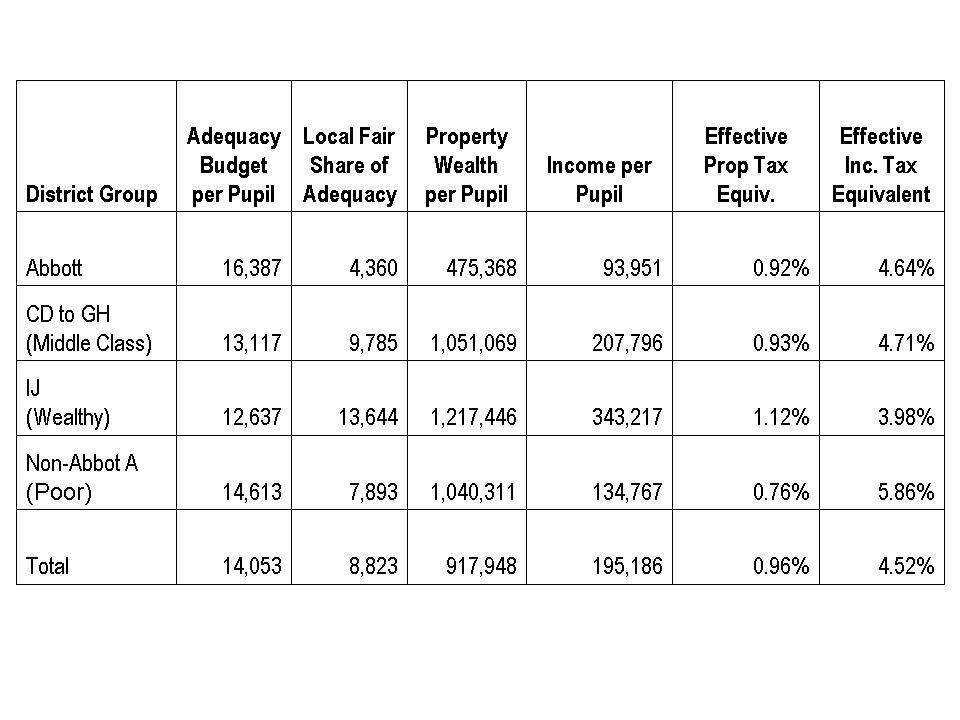

A now common myth in school finance reiterated in numerous sources produced by the Education Trust, Center for American Progress and other DC think tanks and pundits is that states have largely resolved disparities in funding between districts and that persistent disparities are primarily within districts, between schools – a function of illogical district allocation formulas.

In a recent article, Kevin Welner and I tackle this argument and dig deeply into the sources behind it, which invariably find their way back to Marguerite Roza, then of the Center on Inventing Research Findings – excuse me – Center on Reinventing Public Education (CRPE).

Kevin and I conclude in our article:

Two interlocking claims are being increasingly made around school finance: that states have largely met their obligations to resolve disparities between local public school districts and that the bulk of remaining disparities are those that persist within school districts. These local decisions are described as irrational and unfair school district practices in the allocation of resources between individual schools. In this article, we accept the basic contention of within-district inequities. But we offer a critique of the empirical basis for the claims that within-district gaps are the dominant form of persistent disparities in school finance, finding instead that claims to this effect are largely based on one or a handful of deeply flawed analyses.

Kevin Welner and I dissect in detail the problematic, “non-traditional” methods Roza and colleagues use for conducting their analyses (ignoring real methods used by real researchers in real publications), but perhaps more interesting are those cases where a narrow, measured finding pertaining to one specific estimate in one specific context becomes a national trend, a dominant reality soon thereafter. Op-Ed columns by Roza on the topic of within versus between district funding disparities include particularly egregious examples. Kevin Welner and I explain:

Following a state high court decision in New York mandating increased funding to New York City schools, Roza and Hill (2005) opined: “So, the real problem is not that New York City spends some $4,000 less per pupil than Westchester County, but that some schools in New York [City] spend $10,000 more per pupil than others in the same city.” That is, the state has fixed its end of the system enough.

This statement by Roza and Hill is even more problematic when one dissects it more carefully. What they are saying is that the average of per pupil spending in suburban districts is only $4,000 greater than spending per pupil in New York City but that the difference between maximum and minimum spending across schools in New York City is about $10,000 per pupil. Note the rather misleading apples-and-oranges issue. They are comparing the average in one case to the extremes in another.

In fact, among downstate suburban[1] New York State districts, the range of between-district differences in 2005 was an astounding $50,000 per pupil (between the small, wealthy Bridgehampton district at $69,772 and Franklin Square at $13,979). In that same year, New York City as a district spent $16,616 per pupil, while nine downstate suburban districts spent more than $26,616 (that is, more than $10,000 beyond the average for New York City). Pocantico Hills and Greenburgh, both in Westchester County (the comparison County used by Roza and Hill), spent over $30,000 per pupil in 2005.[2] These numbers dwarf even the purported $10,000 range within New York City (a range that we agree is presumptively problematic); our conclusion based on this cursory analysis is that the bigger problem likely remains the between-district disparity in funding.
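The apples-and-oranges problem in the passage above can be made concrete with a few lines of arithmetic. The sketch below is only an illustration: it uses the dollar figures quoted in the passage, the variable names are my own, and it simply lines up averages against averages and extremes against extremes.

```python
# Per-pupil spending figures for 2005, as quoted in the passage above.
BRIDGEHAMPTON = 69_772      # highest downstate suburban district cited
FRANKLIN_SQUARE = 13_979    # lowest downstate suburban district cited
NYC_DISTRICT_AVG = 16_616   # New York City as a whole

# Figures attributed to Roza and Hill (2005) in the passage.
AVG_GAP_NYC_VS_WESTCHESTER = 4_000   # a difference between averages
RANGE_WITHIN_NYC = 10_000            # a difference between extremes

# Like-for-like comparison: range between districts vs. range within NYC.
range_between_districts = BRIDGEHAMPTON - FRANKLIN_SQUARE

# Spending level that sits $10,000 above the NYC district average.
threshold_above_nyc = NYC_DISTRICT_AVG + 10_000

print(f"Average gap cited (means):         ${AVG_GAP_NYC_VS_WESTCHESTER:,}")
print(f"Between-district range (extremes): ${range_between_districts:,}")
print(f"Within-NYC range (extremes):       ${RANGE_WITHIN_NYC:,}")
print(f"NYC average + $10,000:             ${threshold_above_nyc:,}")
print(f"Ratio of ranges: {range_between_districts / RANGE_WITHIN_NYC:.1f}x")
```

The two quoted endpoints differ by $55,793, in line with the roughly $50,000 range cited in the passage, and the threshold works out to the $26,616 figure used there. Compared extreme to extreme, the between-district range is several times the purported within-city range.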

For the full take down, see:

Baker, B. D., & Welner, K. G. (2010). “Premature celebrations: The persistence of interdistrict funding disparities.” Education Policy Analysis Archives, 18(9). Retrieved [date] from http://epaa.asu.edu/ojs/article/view/718

Myth #2: America’s public school system suffers from something called Baumol’s disease, therefore the only solutions must be found outside of public education

Source: Curing Baumol’s Disease: In Search of Productivity Gains in K–12 Schooling Paul Hill, Marguerite Roza

While I don’t think this one really ever caught on, it’s so absurd that it must be addressed. Further, it’s actually cited on the USDOE resources in educational productivity page despite the fact that it offers no useful guidance whatsoever on the topic.

The objective of this policy brief by Paul Hill and Marguerite Roza of CRPE is to explain how American public education suffers from Baumol’s disease, or “the tendency of labor-intensive organizations to become more expensive over time but not any more productive.” Hill and Roza’s attempt at empirical validation that American public education suffers from Baumol’s disease is presented in two oversimplified figures: a graph showing an increased number of staff who are not core teachers (Figure 1) and a graph showing that student test scores on the National Assessment of Educational Progress have remained flat over time (Figure 2). The latter claim, that we’ve seen no improvement in NAEP scores over time, is contested.[1] And the former claim, when aggregated nationally, is not particularly meaningful. The authors provide no empirically rigorous link between the two.

Rather, the casual reader is simply to assume that public schools have added a lot of non-teaching staff and have, on average, nationally seen no yield for that increased cost. Hill and Roza posit:

“While these indicators clearly point to increased costs for education, efforts to quantify productivity changes have been hampered by measurement challenges on the outputs side of the equation. By most accounts, key indicators of outcomes have not shown comparable gains. A thirty-year look at NAEP performance for seventeen year-olds, for instance, suggests that test scores have changed very little.” (p. 3)

While this may, in fact, not be entirely untrue, the authors provide no rigorous validation that “Baumol’s Disease” is a persistent problem of American public schools.

However, without a disease with a catchy name, there would be little reason for their proposed cure. But the proposed cure is no more thoroughly vetted or precisely articulated than the disease. A central assumption in the Baumol’s disease policy brief is that American public education systems take on one single form, as represented by national averages in the TWO graphs provided, that there is little or no variation within the public education system in terms of resource use or outcomes achieved (e.g. that it all suffers Baumol’s disease), and that therefore the only possible cures are those that come from outside the public education system or at its fringes. That is, that we have nothing to learn from variation within the public education system itself, because there is no such variation. Instead, for example, the authors suggest a closer look at “home schooling, distance learning systems, foreign language learning, franchise tutoring programs, summer content camps, parent-paid instructional programs (music, swimming lessons, etc.), armed services training, industry training/development, apprentice programs, education systems abroad.” (p. 10)

Numerous more credible researchers have spent a great deal of time learning from the heterogeneity of how schools, school districts, and charter schools operate, as well as across states, including studying the relative efficiency of schools that either operate differently or change how they operate. The assumption that the only solutions must come from outside the system is patently absurd, when the “system” consists of 51 policy contexts, over 100,000 schools, 5,000 charter schools and about 15,000 public districts. And it’s just lazy, hack thinking.

While one might gain insights from other labor-intensive industries, or education at the fringes of the current public system, it would be foolish to ignore the extent of variation within the current American public education system, and across traditional public, magnet, charter and private schooling. Arguably, the authors present the view that there is little or nothing to learn from the current system specifically in order to avoid the need for conducting rigorous analysis of it. Further, while such policy briefs may be generously considered as useful conversation starters, we take serious issue with the U.S. Department of Education’s identification of sources of this type, which are purely speculative, and severely lacking in intellectual or empirical rigor, as “Key Readings on Educational Productivity.”

Myth #3: Poor, failing school districts have plenty of money but are squandering too much on Cheerleading and Ceramics when they need to be spending on basics!

Original Source of (the anecdote behind the) myth: “Now is a Great Time to Consider the Per Unit Cost of Everything in Education.”

As I explain in my recent conference paper:

Authors including Marguerite Roza and colleagues of the Center for Reinventing Public Education encourage public outrage that any school district not presently meeting state outcome standards would dare to allocate resources to courses like ceramics or activities like cheerleading. To support their argument, the authors provide anecdotes of per pupil expense on cheerleading being far greater than per pupil expense on core academic subjects like math or English.

- Imagine a high school that spends $328 per student for math courses and $1,348 per cheerleader for cheerleading activities. Or a school where the average per student cost of offering ceramics was $1,608; cosmetology, $1,997; and such core subjects as science, $739.[1]

These shocking anecdotes, however, are unhelpful for truly understanding resource allocation differences and reallocation options, and are an unfortunate and unnecessary distraction. For example, the major reason why cheerleading or ceramics expenses per pupil are seemingly high is the relatively small class sizes, compared to those in English or Math. In total, the funds allocated to either cheerleading or ceramics are unlikely to have much if any effect if redistributed to reading or math.
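A rough sketch of why a high per-pupil figure can still amount to very little money in total. The two unit costs come from the anecdote quoted above; the enrollment counts are hypothetical numbers I made up purely for illustration and do not appear in any of the sources.

```python
# Unit costs quoted in the anecdote above.
COST_PER_CHEERLEADER = 1_348
COST_PER_MATH_STUDENT = 328

# HYPOTHETICAL enrollments, assumed only for illustration (not from the source).
N_CHEERLEADERS = 20
N_MATH_STUDENTS = 1_000


def total_cost(unit_cost: float, enrollment: int) -> float:
    """Total spending implied by a per-pupil unit cost and an enrollment."""
    return unit_cost * enrollment


cheer_total = total_cost(COST_PER_CHEERLEADER, N_CHEERLEADERS)
math_total = total_cost(COST_PER_MATH_STUDENT, N_MATH_STUDENTS)

# If the entire cheerleading budget were moved into math instruction:
gain_per_math_student = cheer_total / N_MATH_STUDENTS
pct_increase = 100 * gain_per_math_student / COST_PER_MATH_STUDENT

print(f"Cheerleading total: ${cheer_total:,.0f}")
print(f"Math total:         ${math_total:,.0f}")
print(f"Gain per math student if reallocated: ${gain_per_math_student:,.2f}")
print(f"Increase in math spending per student: {pct_increase:.1f}%")
```

Under these assumed enrollments, eliminating cheerleading entirely would add under $30 per math student. Different enrollments would change the exact numbers, but the direction of the result follows from the small size of the high-unit-cost activity.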

Now, this myth is a rather strange one, because the source from which it comes, which is authored by Marguerite alone, really isn’t totally unreasonable. It’s not useful in any way shape or form, but it’s not unreasonable either. This wacky anecdote about cheerleading and ceramics spending comes from a piece in which Roza is trying to explain the importance of comparing unit costs of providing specific programs/opportunities. This is a rather “no duh” idea, and the working paper and eventual book chapter is built on uninteresting anecdotes, at best. The original point of the paper is that if administrators look at the per unit cost of everything, they might find some things that stand out, and some things that might be reasonably reorganized to be offered at a lower unit cost (for example, the cost of cheerleading was reduced by moving it from a class period drawing on salaried time, to an after school activity, paid by small stipend).

But, the spin from this piece has been that this is all that low performing, poor urban districts need to do. They’ve all got enough. They themselves are responsible for the most persistent inequities – not the states. And they are the ones wasting way too much on things like cheerleading and ceramics. Given that this spin has had far more traction than the more reasonable paper behind it, one might assert that this is precisely what Roza intended.

In my paper, I conclude:

Rather, the emergent story from the data in both states was the contrast between high spending, high outcome districts, and low spending low outcome districts and their respective high schools. On average, high spending, high outcome districts were as one might expect much lower in student poverty concentration and low spending, low outcome districts much higher in poverty. That is, after applying thorough cost adjustment including adjustments for differences in student needs. Interestingly, the most striking differences between these groups of districts were not in the availability of assigned teachers or courses in the arts, but rather in the distribution of advanced versus basic course offerings in curricular areas such as math and physical science.

Note that to begin with, low spending, low outcome schools had fewer teacher main assignments and fewer course assignments per pupil. As such, they were, from the outset, more constrained in their allocation options. Further, there is at least some evidence that when evaluating district wide resource allocation, low resource, low outcome districts see greater necessity or feel greater pressure to allocate a larger overall share of resources to elementary classrooms (based on Illinois findings).

For a more thorough analysis of this issue, see:

Baker, B. D. (2011). “Cheerleading, Ceramics and the Non-Productive Use of Educational Resources in High Need Districts: Really?” Paper presented at the Annual Meeting of the American Educational Research Association, New Orleans, LA.

Myth #4: High schools in Washington State pay math and science teachers less than other teachers despite public interest and state policies that encourage paying them more

Source: Washington State High Schools Pay Less for Math and Science Teachers than for Teachers in Other Subjects Jim Simpkins, Marguerite Roza, Cristina Sepe

This is one that suffers from both major issues identified at the beginning of this rant. First, the disconnect between the “study” and the press release:

The Press Release

http://www.crpe.org/cs/crpe/view/news/111

The analysis finds that in twenty-five of the thirty largest districts, math and science teachers had fewer years of teaching experience due to higher turnover—an indication that labor market forces do indeed vary with subject matter expertise. The subject-neutral salary schedule works to ignore these differences.

The Study

http://www.crpe.org/cs/crpe/download/csr_files/rr_crpe_STEM_Aug10.pdf

That said, the lower teacher experience levels are indicative of greater turnover among the math and science teaching ranks, lending support to the hypothesis that math and science teachers may have access to more compelling non-teaching opportunities than do their peers. (p. 5)

That is, the conclusions of the study itself and the press release are, well, not consistent. But this pattern of behavior is entirely consistent for Roza and CRPE.

In a previous post I address just how ridiculous the methods in this analysis are, in which she compares STEM teacher salaries with non-STEM teacher salaries without any controls for other factors that affect salaries (on the argument that salaries shouldn’t be based on those things – experience and degree level – anyway).

All that Roza really found in this paper was that STEM teachers tend to be younger and as a result have lower average salaries than non-STEM teachers. From that, she spun the argument that because STEM teachers don’t earn more than other teachers, but STEM fields are more competitive, STEM teachers must be leaving teaching at a higher rate, leading to a less experienced pool and lower average salaries (a vicious cycle indeed! But one that’s never validated by the ridiculous analysis).

In my post, I actually evaluate several years of teacher level data on all teachers in Washington State, finding most of her conclusions to be flat out wrong. Here’s the figure on mean STEM and non-STEM teacher salaries over time: https://schoolfinance101.com/wp-content/uploads/2010/08/slide42.jpg

I also point out that credible researchers like Lori Taylor of Texas A&M have actually done better analyses of Washington teacher wages and addressed variations in labor market competitiveness by field:

Report on Taylor Study:

http://www.wsipp.wa.gov/rptfiles/08-12-2201.pdf

Taylor Study:

http://www.leg.wa.gov/JointCommittees/BEF/Documents/Mtg11-10_11-08/WAWagesDraftRpt.pdf

Somehow, not surprisingly, Roza was unaware of either this better research or the more credible methods used in this research.

For the full take down, see: https://schoolfinance101.wordpress.com/2010/08/20/new-from-the-center-on-inventing-research-findings/

Myth #5: With our handy-dandy basket of reformy fixes, we can cut significant funding from American public schools and dramatically increase productivity!

Source: Petrilli and Roza

Stretching the School Dollar (Brief)

http://www.edexcellence.net/publications-issues/publications/stretching-the-school-dollar-policy-brief.html

In their policy brief on Stretching the School Dollar, Mike Petrilli of the Thomas B. Fordham Institute and Marguerite Roza of the Gates Foundation provide a lengthy laundry list of strategies by which school districts and states might arguably increase their productivity at lower expense, or “stretch the dollar” so to speak. This policy brief is an extension of the Frederick Hess (American Enterprise Institute) and Eric Osberg (Fordham Institute) edited book by the same title. We highlight this source because of repeated specific references to it in Secretary Duncan’s “New Normal” speeches during the Fall of 2010.[2]

Because this policy brief and book specifically list strategies that are intended to improve productivity at comparable or lower expense, it would be particularly relevant for the book or brief to either provide directly, or summarize from other sources, rigorous cost-effectiveness analysis of these options, or relative efficiency comparisons of schools and districts employing these options. But that is apparently asking way too much of Roza or Petrilli. I’ll cut Mike some slack here, because he isn’t the one actually presenting himself as a school finance expert/scholar. That’s Roza’s role in this partnership, so the burden falls on her. But after reading enough work by Roza and colleagues, I’m no longer convinced that she is even aware that there is a body of research out there on cost-effectiveness analysis or relative efficiency (more on this later). I certainly encourage her to go buy a copy of Hank Levin and Patrick McEwan’s book, not so subtly titled Cost-Effectiveness Analysis: Methods and Applications. It’s a relatively easy, non-academic read.

I’ll offer a primer on these methods and their application to these questions in a future post. There’s no need to beat a dead horse on this topic. I’ve taken down Roza and Petrilli’s reformy gift basket in two previous posts to which you can refer.

For the full take down, see:

Part 1 – Stretching the Truth, Not Dollars: School Finance in a Can: Unproven and Unsubstantiated Dollar-Stretching State Policies

Part 2 – Stretching the Truth, Not Dollars: Considering the Application of Cost-Benefit Analysis to Teacher Layoff Alternatives

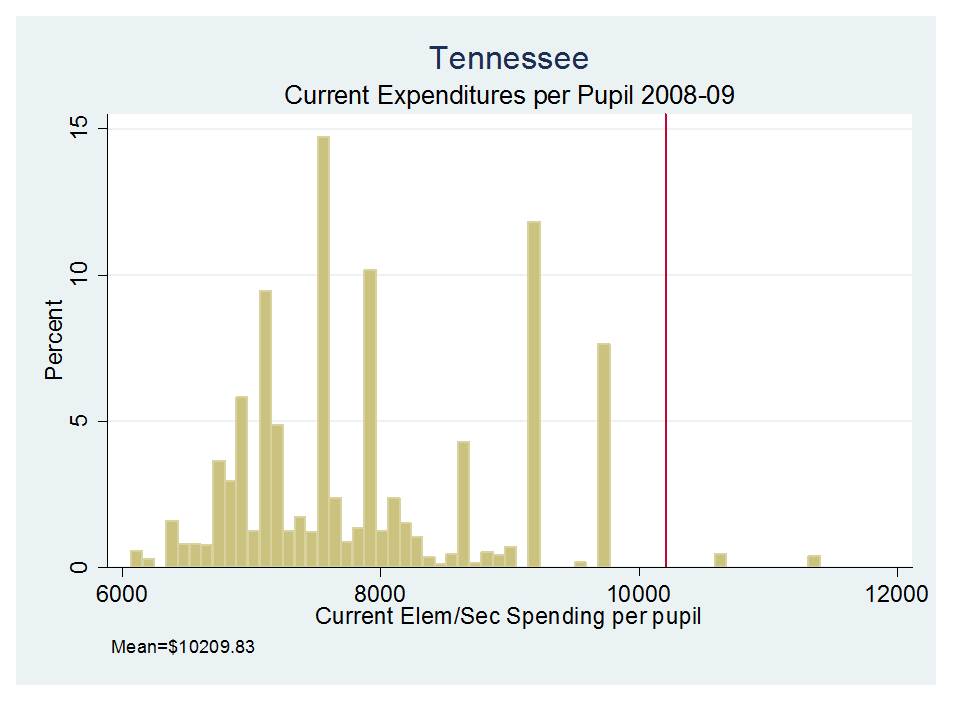

(national mean current spending per pupil in 2008-09 was $10,209.83 [w/outliers excluded])
