On Monday, the organization Students First came out with their state policy rankings, just in time to promote their policy agenda in state legislatures across the country. Let’s be clear, Students First’s state policy rankings are based on a list of what Students First thinks states should do. It’s entirely about their political preferences – largely reformy policies – template stuff that has been sweeping the reformiest states over the past few years. I’ll have more to say about these preferred policies at the end of this post.

Others have already pointed out that Students First gave good grades to states like Louisiana and Florida, and crummy grades to states like New Jersey and Massachusetts. Yet Louisiana notoriously has among the worst school systems (and lowest test scores) in the nation, whereas New Jersey and Massachusetts have pretty darn good test scores and well respected school systems. I’ll go there as well, but not as my primary focus. Clearly there’s more behind the test score differences than policy context. New Jersey and Massachusetts certainly have more educated, more affluent adult and parent populations than Louisiana, and that makes a difference.

I’ll anxiously await the day good reformers like Mike Petrilli pack their bags and leave their suburban Washington DC districts to move their kids to the amazing future schools of Louisiana! Heck, given these new Students First ratings, any Louisiana school has to be better – or at least have far more potential – than any school in Montgomery County Maryland, run by that curmudgeonly anti-reformer Superintendent Joshua Starr! In fact, in my ideal world, Louisiana would become a reformy wonderland… magnet for all the reformy types… where they could go live in peace – rate their teachers by value-added models, fire 10 to 20% each year – pay them nothing – get rid of any retirement benefits, make every school a charter school (operating primarily with imported Turkish and/or Filipino labor), engage in at least 50% online learning (sitting at a computer doing test prep modules), and provide tuition tax credits to all of the reformies who prefer a purely religious perspective interwoven across subjects. Of course, this must all be done with Louisiana’s current level of financial commitment to public schooling.

But I digress… Now back to the Students First ratings. Students First created 3 broad categories of preferred policies for their ratings – policies that it believes:

- Elevate teaching

- Empower parents

- Spend wisely and govern well

By elevate teaching, Students First means the usual basket of reformy options including elimination of traditional salary schedules, teacher evaluations based heavily on student test scores, reduction of retirement benefits and reduction or elimination of due process rights, and pay based primarily on test-score driven evaluation systems. They also prefer to expand alternative routes into the teaching profession. Of course, there’s not a whole lot of transparency into how these various elements are factored into the final grades. But there is a rubric!

By empowering parents, Students First essentially means increasing use of school report cards (more school grades & ratings – yes, a report card that endorses use of … report cards!), reporting to parents when their child is assigned to a teacher with a low rating (driven by test scores), and adoption of policies such as Parent Trigger. And of course, charters should be provided everywhere and anywhere… so everyone has the choice to attend one.

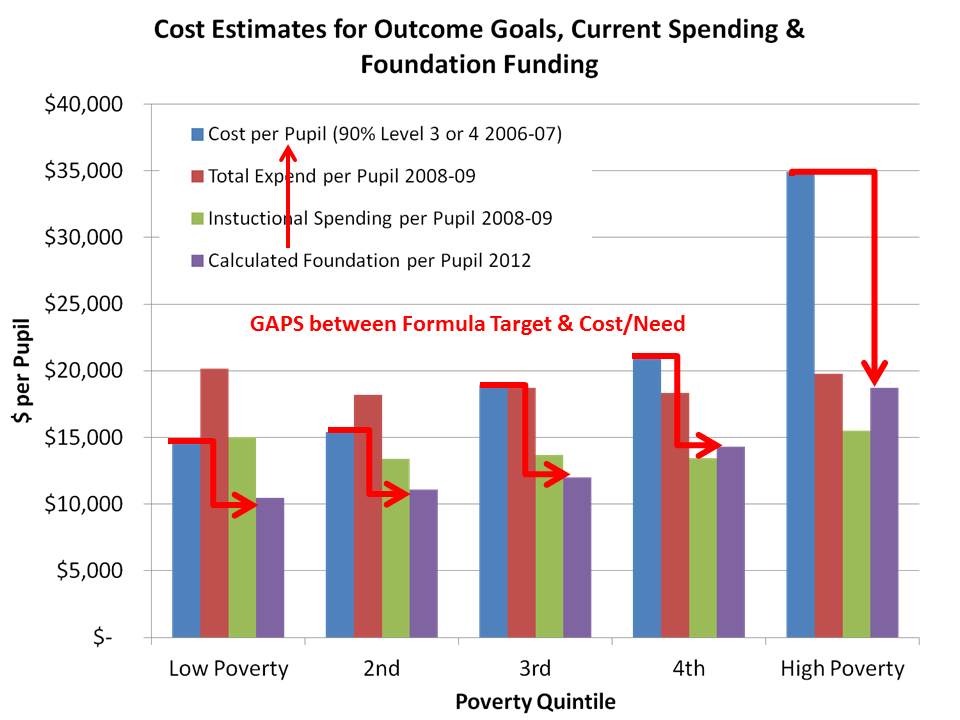

Finally, by spend wisely and govern well… I’m quite honestly not even sure what the hell they mean. They include a broad statement about all kids receiving equitable funding, but seem to imply that this means that charter kids get the same funding as district kids – a pretty narrow interpretation of fairness (and an incalculable one in states with no charters). No actual data seem to be used to rate state funding systems. Fairness is also determined by the provision of publicly financed facilities space to charter schools. Somehow, their ratings of funding totally ignore the vast majority of schools and children across which funds are distributed. In their view, mayors, not local school boards, should govern schools, and schools should report their expenditures uniformly – in a way that shows how the spending affects achievement (good luck with that as a reporting requirement/mechanism).

Every item on their list is somehow mysteriously scored on a “0” (you suck) to “4” (wow… you are REFORMERIFIC!) scale without using any actual data (apparently) to inform that ordinal rating. Then in a wonderful leap of number abuse, these ordinal scale data are averaged to create a grade point average for each broad category – on a 0-4 GPA like scale, where most values of course lie in the imaginary spaces between the original ordinal ratings (like kinda-semi-almost-reformerific = 3.49).
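To make the number abuse concrete, here’s a minimal sketch in Python. The item scores below are invented for illustration (they are not Students First’s actual ratings), and the function names are my own. The point is only that averaging 0–4 ordinal ratings produces values that sit between the defined categories, while an order-based summary like the median does not pretend the steps between ratings are equal.

```python
from statistics import mean, median

# Hypothetical 0-4 ordinal item scores for one policy category.
# Invented for illustration; NOT taken from the Students First report card.
item_scores = [4, 4, 3, 4, 2, 4, 3, 4]

def category_gpa(scores):
    """Average ordinal ratings as if they were interval data (the 'GPA' approach)."""
    return round(mean(scores), 2)

def category_median(scores):
    """Median rating: relies only on the ordering of the ratings."""
    return median(scores)

gpa = category_gpa(item_scores)
mid = category_median(item_scores)

# The GPA lands between two defined rating levels; the median does not.
print("GPA-style average:", gpa)
print("Median rating:", mid)
```

With these made-up scores the average falls halfway between a “3” and a “4”, a rating that no rubric item actually defines.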

That said, let’s dig into those grades, and other stuff that may or may not correlate with them.

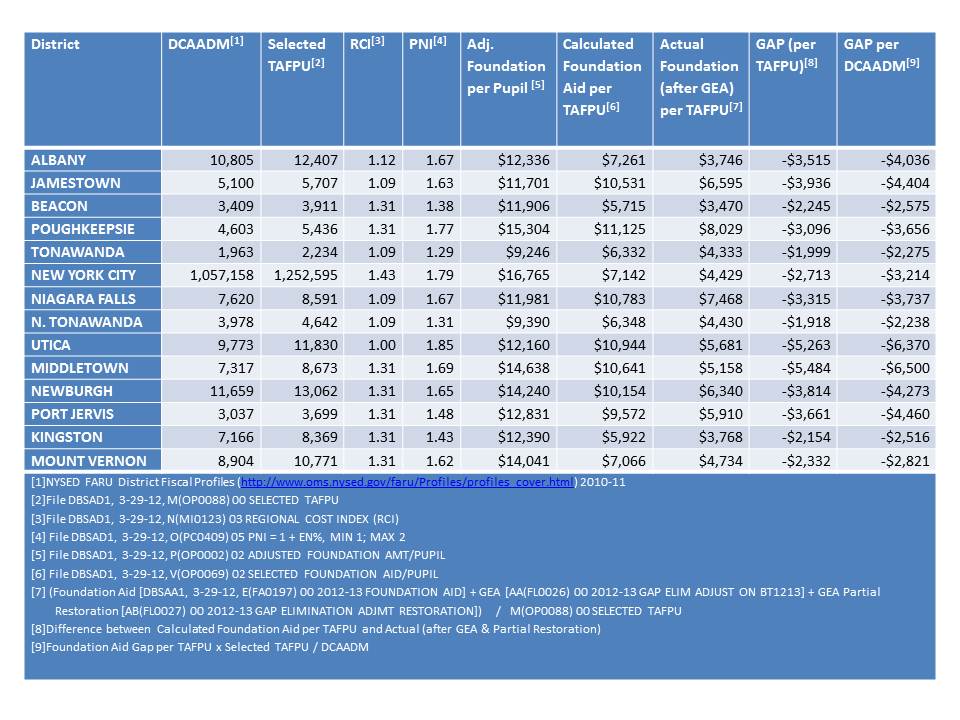

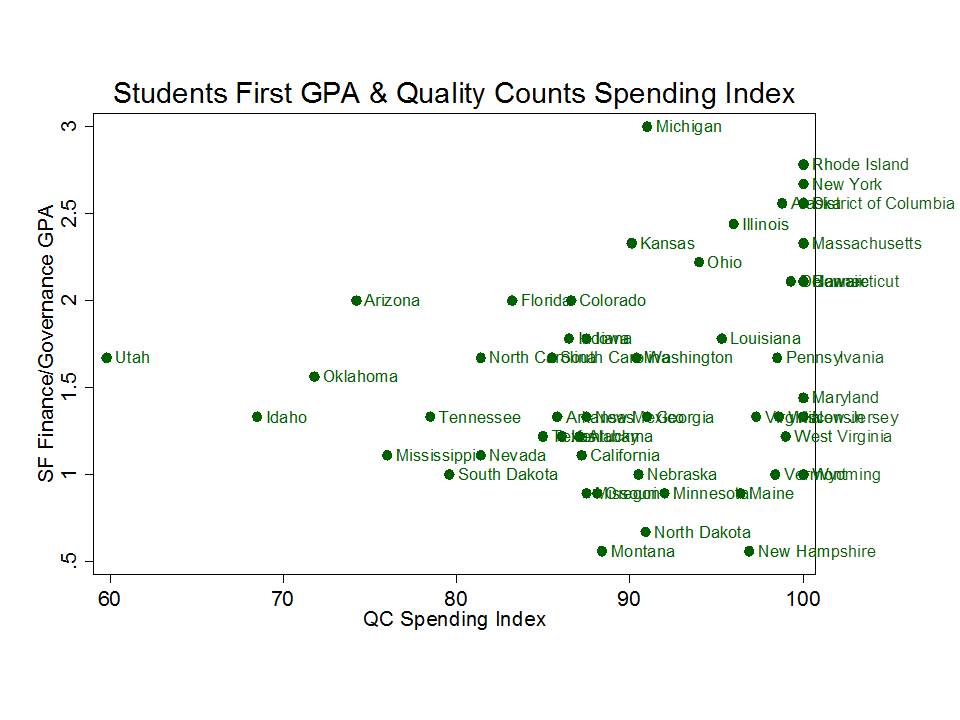

RheeFormy Funding Indicators vs. Real Funding Indicators

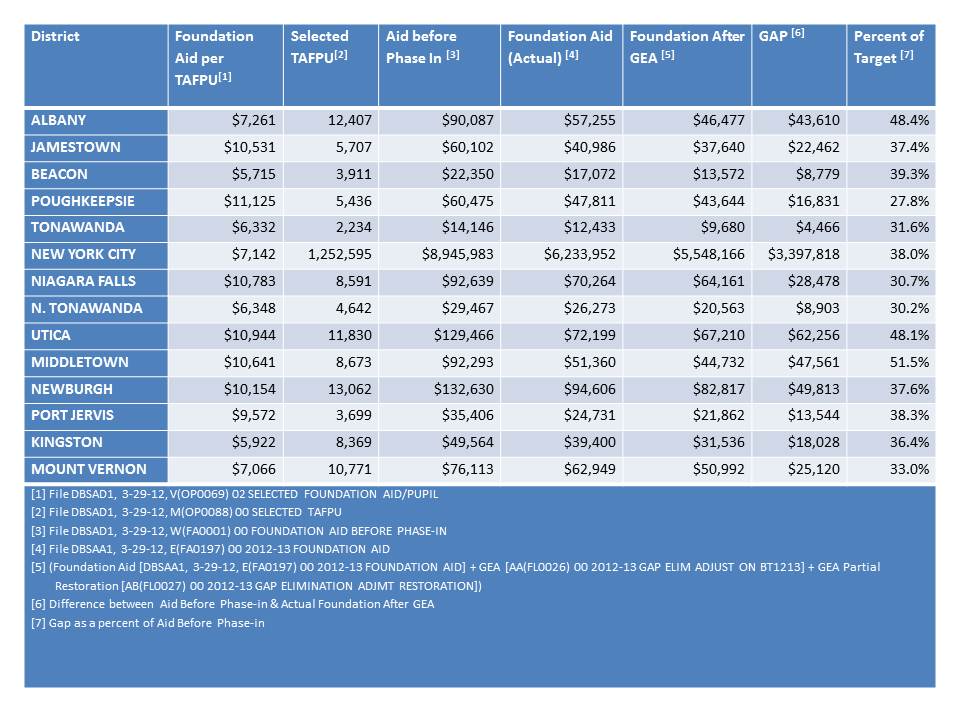

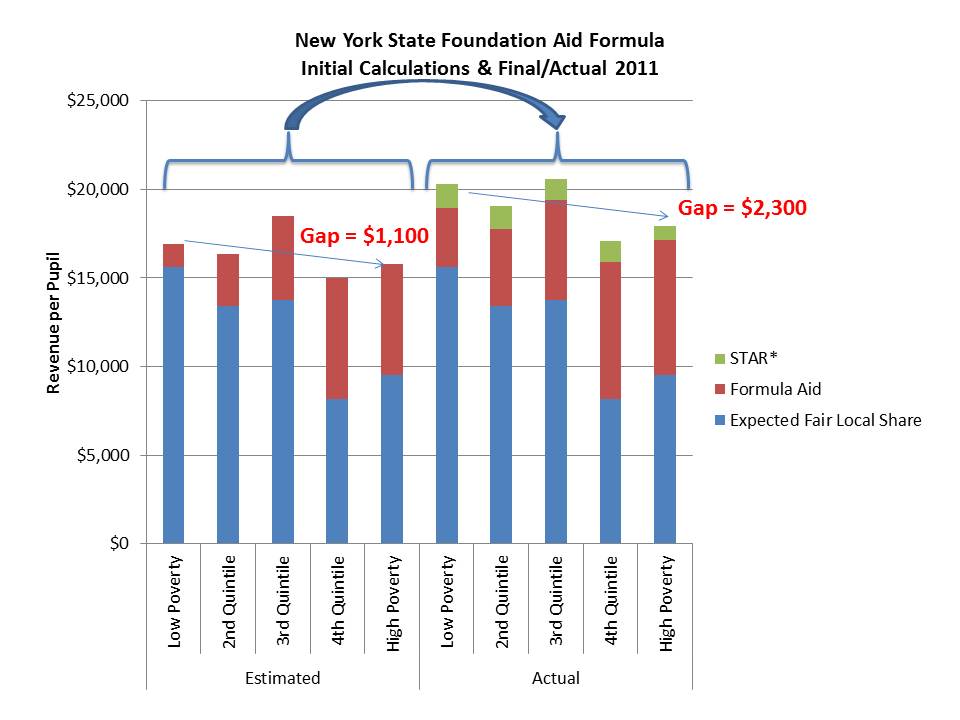

Let’s start with the funding grades, which I will relate to two of the primary indicators in our annual report on school funding fairness. First, let’s look at the relationship between the RheeFormy GPA for funding/governance and our rating on the percent of gross state product allocated to K-12 education. Top scorers on RheeFormy funding are Michigan, Rhode Island, New York, Alaska and Illinois. Right away this is rather absurd since New York and Illinois are quite well known to have among the least equitable school funding systems in the nation… but that’s the next graph. RheeFormy winners Florida and Louisiana are not particular standouts on their funding effort for education, whereas New Jersey and Massachusetts, despite being much richer to begin with, allocate a much larger share of their economic productivity to elementary and secondary education. But hey, why would anyone want to count how much effort a state actually puts into funding its schools? Right? How could that matter?

Figure 1.

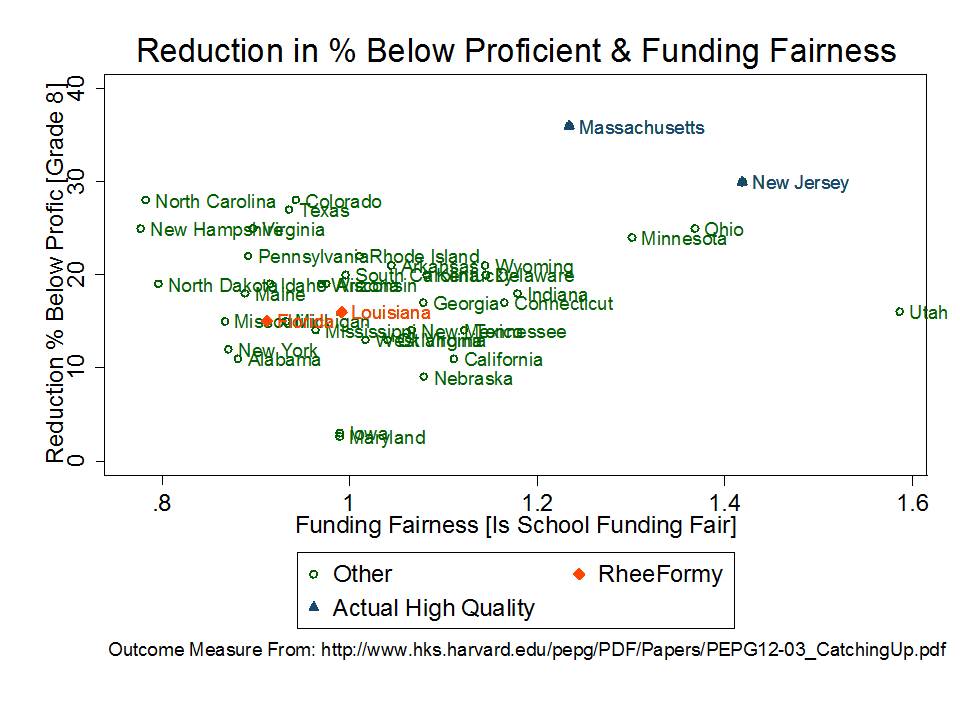

Figure 2 compares the RheeFormy finance GPA to our indicator of funding fairness. Our indicator of funding fairness compares the projected state and local revenue of high poverty school districts to that of low poverty school districts. A value of greater than 1.0 indicates a progressive system where more resources are allocated to higher poverty districts and a value of less than 1.0 indicates a regressive system. Not surprisingly, the RheeFormy standouts of Florida and Louisiana and especially New York and Illinois (which are among the top in RheeFormy finance) are all highly regressive states. Uh… that’s bad… not good. High poverty districts get the shaft in these states. But that’s apparently just fine with Students First. In fact, it seems preferable! Way to go!

Meanwhile, New Jersey and Massachusetts, along with Ohio, are relatively progressive on finance. Yes, Utah is too, but that’s because Utah spends next to nothing on most schools and slightly more than next to nothing on lower income schools.
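The fairness indicator described above boils down to a ratio, and a rough sketch in Python may help. The actual indicator uses projected revenues from a statistical model. The version below simply divides two per-pupil figures, and the dollar amounts and function names are hypothetical, chosen only to show how values above and below 1.0 are read.

```python
def fairness_ratio(high_poverty_revenue, low_poverty_revenue):
    """Ratio of per-pupil state and local revenue: high poverty over low poverty districts."""
    return high_poverty_revenue / low_poverty_revenue

def classify(ratio):
    """Label a funding system using the 1.0 threshold described in the post."""
    if ratio > 1.0:
        return "progressive"   # more resources go to higher poverty districts
    if ratio < 1.0:
        return "regressive"    # higher poverty districts get less
    return "flat"

# Hypothetical per-pupil dollar amounts, invented for illustration only.
state_a = fairness_ratio(9_000, 10_000)
state_b = fairness_ratio(12_000, 10_000)

print(round(state_a, 2), classify(state_a))
print(round(state_b, 2), classify(state_b))
```

Note that a ratio like this says nothing about the overall level of funding, which is why a state that spends very little everywhere can still look progressive.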

So, apparently, RheeFormy logic dictates that to really make progress on achievement in low income communities, money really doesn’t matter. State school finance systems don’t matter, and in fact, spending less on districts with more poor children is the way to go. Good for New York and Illinois! NOT!

Figure 2.

What we know about school finance reforms, funding level & distribution & student outcomes

…sustained improvements to the level and distribution of funding across local public school districts can lead to improvements in the level and distribution of student outcomes. While money alone may not be the answer, adequate and equitable distributions of financial inputs to schooling provide a necessary underlying condition for improving adequacy and equity of outcomes. That is, if the money isn’t there, schools and districts simply don’t have a “leverage option” that can support strategies that might improve student outcomes. If the money is there, they can use it productively; if it’s not, they can’t. But, even if they have the money, there’s no guarantee that they will. Evidence from Massachusetts, in particular, suggests that appropriate combinations of more funding with more accountability may be most promising.

See: http://www.shankerinstitute.org/images/doesmoneymatter_final.pdf

Put bluntly – equitable and adequate financing for the education of all children is a prerequisite condition for achieving equitable and adequate outcomes. The Students First rating system misses this point entirely – measuring neither the equity nor adequacy – nor effort to raise these prerequisite resources.

No, the Students First ratings don’t pretend to measure these things. But, they seem to argue that their ratings measure the prerequisite conditions for reforming education systems – where I must assume they mean making those systems better. But the reality is that the ratings focus on trivial and tangential reformy preferences, leading them to praise states that are among the worst in the nation on school funding and chastise states that are among the best.

RheeFormy Teacher Quality Indicators vs. Competitive Compensation

Next up are the RheeFormy ratings on elevating the teaching profession, where quite clearly, having competitive wages for teachers (relative to the workforce of similarly educated workers) is a non-issue. Figure 3 shows the relationship between the relative weekly wage of teachers – compared to same education level non-teachers – and the elevating teaching GPA. Teachers in Louisiana have among the largest “teaching penalties,” earning only about 70% of the weekly wage of non-teachers. That’ll certainly elevate the profession! They are right up there with other stellar reformy states including Colorado and Tennessee. Teachers in Florida do a bit better. Teachers in Massachusetts don’t do that well either, but Massachusetts is a state where non-teacher wages are quite high. So being relatively low in Massachusetts might not be as bad as being relatively low in Louisiana or Tennessee! (They also have the benefit of being able to send their own kids to pretty good public schools.) Teacher wages in New Jersey are more competitive, even in their higher non-teacher wage competitive context.

Figure 3.

In really simple terms – competitive wages are the first step toward elevating the teaching profession. Given what we actually know from research on “elevating the teaching profession” I don’t expect to see America’s best teachers flocking to Louisiana, Colorado and Tennessee anytime soon. But, I certainly hope to see those reformers in their caravan, moving their families to those reformy promise lands!

What we know about policy conditions for a strong teacher workforce

A substantial body of literature has accumulated to validate the conclusion that both teachers’ overall wages and relative wages affect the quality of those who choose to enter the teaching profession, and whether they stay once they get in. For example, Murnane and Olsen (1989) found that salaries affect the decision to enter teaching and the duration of the teaching career,[i] while Figlio (1997, 2002) and Ferguson (1991) concluded that higher salaries are associated with more qualified teachers.[ii] In addition, more recent studies have tackled the specific issues of relative pay noted above. Loeb and Page showed that:

“Once we adjust for labor market factors, we estimate that raising teacher wages by 10 percent reduces high school dropout rates by 3 percent to 4 percent. Our findings suggest that previous studies have failed to produce robust estimates because they lack adequate controls for non-wage aspects of teaching and market differences in alternative occupational opportunities.”[iii]

In short, while salaries are not the only factor involved, they do affect the quality of the teaching workforce, which in turn affects student outcomes.

Research on the flip side of this issue – evaluating spending constraints or reductions – reveals the potential harm to teaching quality that flows from leveling down or reducing spending. For example, David Figlio and Kim Rueben (2001) note that, “Using data from the National Center for Education Statistics we find that tax limits systematically reduce the average quality of education majors, as well as new public school teachers in states that have passed these limits.”[iv]

Salaries also play a potentially important role in improving the equity of student outcomes. While several studies show that higher salaries relative to labor market norms can draw higher quality candidates into teaching, the evidence also indicates that relative teacher salaries across schools and districts may influence the distribution of teaching quality. For example, Ondrich, Pas and Yinger (2008) “find that teachers in districts with higher salaries relative to non-teaching salaries in the same county are less likely to leave teaching and that a teacher is less likely to change districts when he or she teaches in a district near the top of the teacher salary distribution in that county.”[v]

And what do we know about the effectiveness of the preferred Students First policies of providing performance based pay? For recent studies specifically on the topic of “merit pay,” each of which generally finds no positive effects of merit pay on student outcomes, see:

- Glazerman, S., Seifullah, A. (2010) An Evaluation of the Teacher Advancement Program in Chicago: Year Two Impact Report. Mathematica Policy Research Institute. 6319-520

- Springer, M.G., Ballou, D., Hamilton, L., Le, V., Lockwood, J.R., McCaffrey, D., Pepper, M., and Stecher, B. (2010). Teacher Pay for Performance: Experimental Evidence from the Project on Incentives in Teaching. Nashville, TN: National Center on Performance Incentives at Vanderbilt University.

- Marsh, J. A., Springer, M. G., McCaffrey, D. F., Yuan, K., Epstein, S., Koppich, J., Kalra, N., DiMartino, C., & Peng, A. (2011). A Big Apple for Educators: New York City’s Experiment with Schoolwide Performance Bonuses. Final Evaluation Report. RAND Corporation & Vanderbilt University.

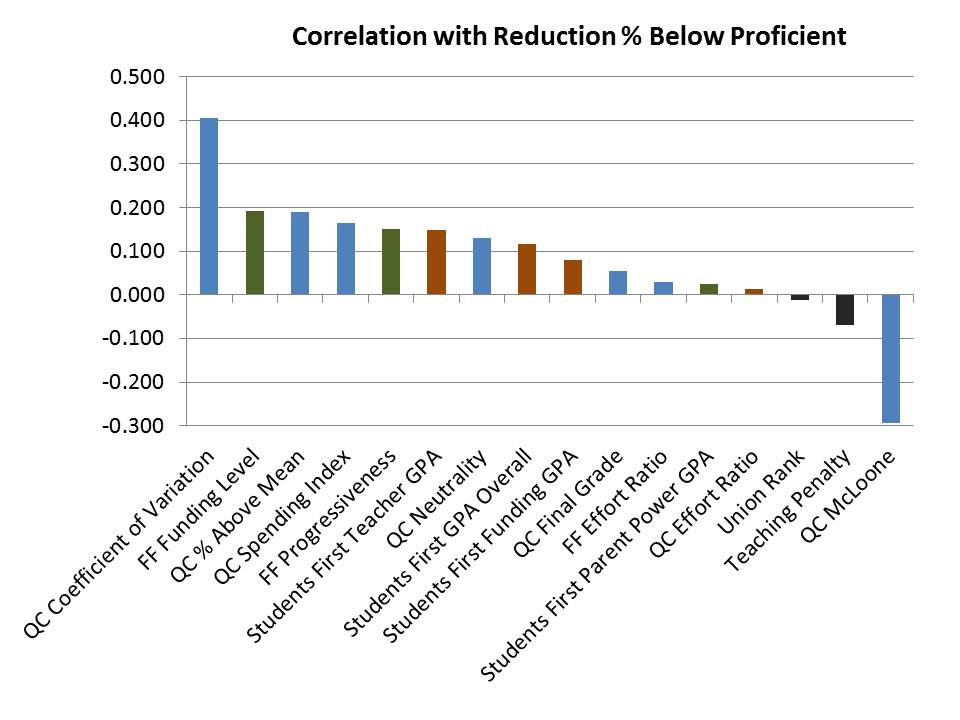

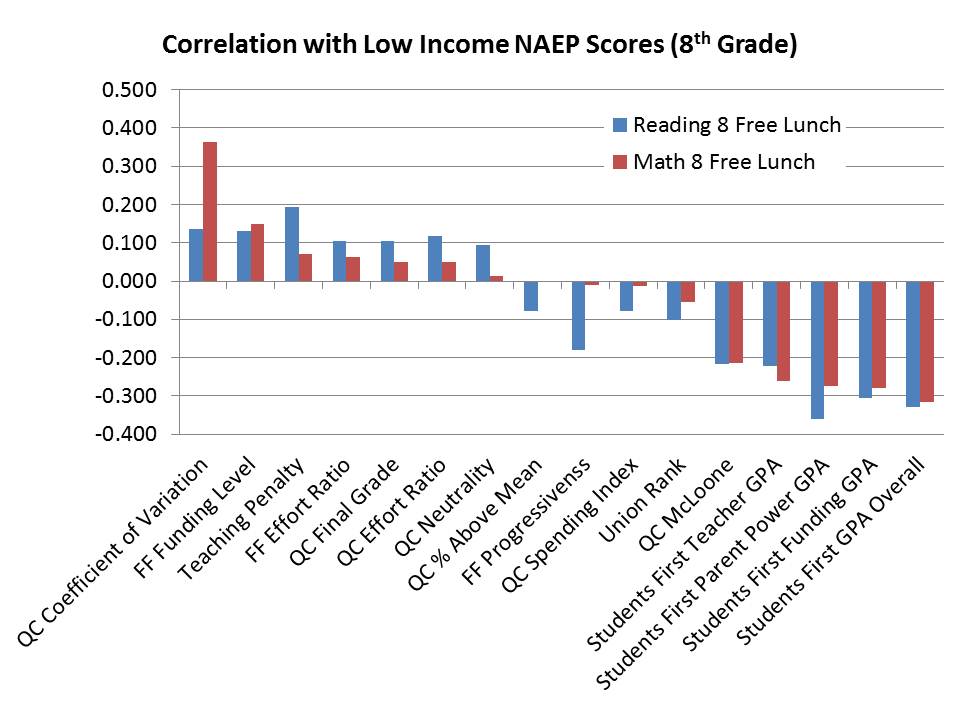

RheeFormy States are NOT a Model for our Nation!

Now for that discussion of outcomes I mentioned previously. Well, here’s what it looks like. The new RheeFormy ratings applaud the likes of Louisiana, Florida… Tennessee and even Washington DC. These are anything but stellar performers on national assessments, as shown in Figure 4 and Figure 5. But indeed, these are also states (and a city) with relatively high child poverty rates.

Figure 4.

Figure 5.

Others have argued that states like Louisiana and Florida in particular – while being low performers – have posted impressive gains on NAEP over the past 20 years (most of which predates adoption of these new RheeFormy policies). Figure 6 uses the standardized annual NAEP gains reported in THIS REPORT. It would appear here that overall winners on the Students First ratings do have pretty good NAEP gains over time. But Massachusetts and New Jersey – RheeFormy losers – actually posted gains on NAEP similar to those of Louisiana and Florida!

Figure 6.

Even more impressive, New Jersey (and also Massachusetts, though its 1990 scores were not available) posted strong NAEP gains despite starting out relatively high. As it turns out, Louisiana had nowhere to go but up. And it appears that starting out with very low NAEP scores is a pretty strong determinant of how much a state gained over time. The lower your starting point, the more you gained. But non-reformy New Jersey – that curmudgeonly high spending, fair spending state – posted gains similar to Louisiana even though it started out already among the highest performing states!
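For readers who want to see the “lower start, bigger gain” logic spelled out, here’s a small Python sketch. All of the numbers are hypothetical (they are not NAEP data), and the variable names are mine. It fits a simple line of gain on starting score for a handful of made-up states, then asks whether a made-up high-starting state gained more than its starting point alone would predict.

```python
from math import sqrt

# Hypothetical starting scores and gains for four made-up states.
# Invented for illustration; NOT actual NAEP results.
starts = [200, 210, 220, 230]
gains = [30, 24, 19, 12]

n = len(starts)
mean_x = sum(starts) / n
mean_y = sum(gains) / n

# Sums of squares and cross-products around the means.
sxx = sum((x - mean_x) ** 2 for x in starts)
syy = sum((y - mean_y) ** 2 for y in gains)
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(starts, gains))

# Pearson correlation between starting score and gain.
r = sxy / sqrt(sxx * syy)

# Ordinary least squares line: predicted gain given starting score.
slope = sxy / sxx
intercept = mean_y - slope * mean_x

def predicted_gain(start):
    """Gain expected from starting point alone, under the fitted line."""
    return intercept + slope * start

# A hypothetical high-starting state that still posted a large gain.
high_start, actual_gain = 230, 25
residual = actual_gain - predicted_gain(high_start)

print("correlation:", round(r, 3))
print("gain beyond what the starting point predicts:", round(residual, 1))
```

A strongly negative correlation is the “nowhere to go but up” pattern. A positive residual for a high-starting state is the more interesting case, since that gain can’t be chalked up to a low baseline.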

Figure 7.

And what else about those outcomes in those stick-in-the-mud union dominated states of Massachusetts and New Jersey? As it turns out, while they were working on spending their money fairly on lower income kids they were also making significant gains in reducing the percent of children scoring below proficiency on NAEP (again using data from the PEPG Catching Up report!) Yep, there they are, flying pretty high on funding fairness and on improving the outcomes of the lowest performing students. Louisiana and Florida… well… not so much!

Figure 8.

A Comment on Accountability, Empowerment, Transparency & Students First Preferred Policies

Finally, I close with a topic that should be another blog post altogether, and likely will be at some point. I’ve been struck by the logic that the preferred policies in the Students First report are intended – by their framing – to increase accountability, empowerment and transparency. Yet, in all likelihood, most of these proposals accomplish precisely the opposite – substantially eroding public accountability and oversight and compromising statutory and constitutional rights of children, employees and local taxpayers.

Now, we may have our differing perspectives on the structure of our American government and operation of government entities. But, our government has a defined structure with reasonably well conceived overarching laws.

The U.S. Constitution, state constitutions and various federal and state statutes provide important protections to students and employees and the taxpayers that finance public institutions. Importantly, our constitution protects individuals from certain treatments by our government and agents of our government. Public schools – government schools to borrow from libertarian rhetoric – fall under that umbrella, and must, for example, provide children due process before depriving them of the right to attend, and must respect – to a limited extent – students’ rights to free expression, etc. Government schools also cannot promote/endorse a particular religious viewpoint (proselytize). Many other protections of both children and employees of government institutions are invoked through Section 1983 of the U.S. Code (42 U.S.C. § 1983), which applies to entities that are ‘state actors’ (uh… government entities). Further, many state laws apply to government entities and to ‘public officials.’

We have a representative system of government with multiple levels, where public officials are elected by (and accountable to) voters (albeit often a small share of those eligible), and where additional layers of public officials may be appointed by elected public officials. And, as noted above, many laws, especially those pertaining to public disclosure, public meetings and public records apply to ‘public officials.’

The Students First state policy rating system – like many other reformy manifestos – implies that the road to ACCOUNTABILITY and TRANSPARENCY is necessarily (perhaps exclusively) paved through shifting larger numbers of students and teachers and larger shares of public funding over to the management of non-government entities and non-public officials, as well as creating entirely new layers of ‘public decision making’ by referendum/petition (Parent Trigger). Whatever gripes we may have regarding the efficiency or responsiveness of government operated services, we must think this one through carefully.

Unless detailed accountability requirements are explicitly spelled out in a whole new layer of state and federal laws, the preferred policies laid out in the Students First report and by other reformy institutions are more likely to lead to less public accountability and transparency rather than more. For example:

- Shifting substantial numbers of students into private schools or privately managed charter schools means that larger shares of students will have limited constitutional and statutory protections. When students are educated under privately managed schools – including charters – they do not (unless explicitly laid out in state charter laws) have the same constitutional protections with respect to discipline policies and they lack other important statutory protections that apply only to “state actors” (government institutions). Indeed, parents have a choice of whether to forgo these rights for their children. BUT…. advocates of these policies are deceitfully selling charter schooling as ‘public’ (with all the rights, privileges, etc.) to an unknowing public.

- Shifting substantial shares of public financing to entities governed by appointed boards of private citizens (not public officials), private management firms and private subcontractors reduces financial transparency because these institutions and individuals may – and most often do when challenged – invoke that they are not subject to open meetings, open records and other disclosure laws that necessarily apply to government entities.

Further, specific policies including parent trigger policies and ‘opportunity scholarship’ tuition tax credits may also substantially erode public accountability.

- Parent trigger attempts to subvert traditional elected representative local government by granting disproportionate power to a temporary class of citizens – parents of children attending a school at a given moment in time – to make relatively permanent decisions, by simple majority rule, regarding operations of public assets, public programs and services, including the option to make them no longer public. But these assets, programs and services belong to and serve, directly and indirectly, the larger community of eligible voters who put in place their elected school board (or the city government officials that appointed a portion or all of that board).

- Establishment of privately governed entities to manage funds collected through a tax credit program [Opportunity Scholarship Vouchers] is comparable to simply handing over an equivalent sum of funds collected as tax dollars to that entity, except that taxpayers lose any/all rights/accountability over the use of those funds! As with private management firms and schools, private entities of this type are not governed directly by public officials and therefore may not be similarly legally accountable. They may not be subject to the same level of public records or meetings disclosure, etc. This mechanism [Tuition Tax Credit] has been used as a means to get around constitutional concerns over allocation of public tax revenues to religious institutions. That is, this mechanism was created specifically to negate taxpayer standing to mount legal challenges over the use of funds. The U.S. Supreme Court has determined that when tax credit programs are structured in this way, taxpayers have no right [no standing] to bring constitutional (or likely any) challenges over the use of these funds. [http://www.supremecourt.gov/opinions/10pdf/09-987.pdf]

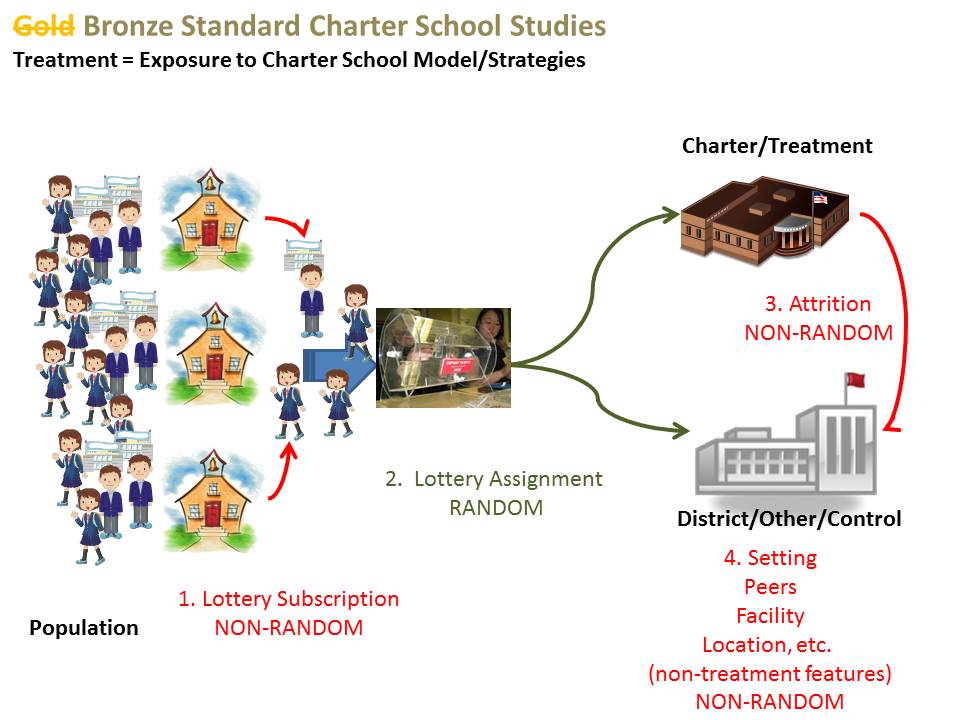

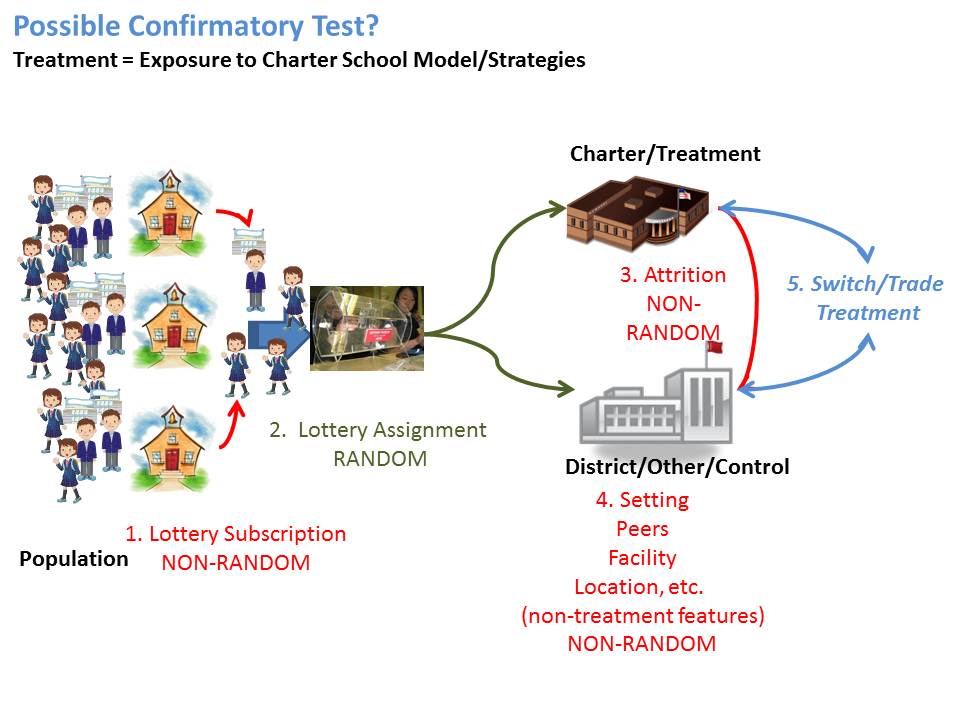

So yes – Students First has their policy preferences – and they’re certainly entitled to that. They’ve built their entire rating system on their idea of what’s good policy. They’ve not tried to ground their policy preferences in any research on the effectiveness or efficiency of those policies, nor could they. There simply is no research basis to support the vast majority of their preferences. Even where charter school policy is concerned, findings of successful charters seem to occur most often where authorizers are few and tightly regulated, and where charter market share is low (as in NYC or Boston). This is in direct contrast with the Students First preference for further deregulating and expanding the sector (as in states with relatively poor charter performance). So, in short, there’s simply no research based reason to follow the policy agenda of Students First. But the reasons they provide (accountability, transparency, blah… blah… blah…) are also not consistent with their policy agenda.

As a school finance researcher in particular, I’ve been increasingly frustrated by the lack of detailed, consistent financial reporting on charter schools, and I’ve written much on this topic. I’ve also written on private school financing, which is even more sparsely reported. The more kids who are shifted into charters, the fewer kids for whom we have reliable, comprehensive information on finances, teacher contracts, compensation packages etc. (as charter management companies repeatedly invoke that their employee contracts are private, not public documents). Similarly, financial arrangements involving land deals and capital financing are more opaque than ever – far more opaque and inaccessible than the public financing world of municipal bond financed infrastructure. And I don’t see any legitimate effort to make these institutions more transparent – NONE!

[i] Richard J. Murnane and Randall Olsen (1989) The effects of salaries and opportunity costs on length of stay in teaching: Evidence from Michigan. Review of Economics and Statistics 71 (2) 347-352

[ii] David N. Figlio (2002) Can Public Schools Buy Better-Qualified Teachers? Industrial and Labor Relations Review 55, 686-699. David N. Figlio (1997) Teacher Salaries and Teacher Quality. Economics Letters 55 267-271. Ronald Ferguson (1991) Paying for Public Education: New Evidence on How and Why Money Matters. Harvard Journal on Legislation. 28 (2) 465-498.

[iii] Loeb, S., Page, M. (2000) Examining the Link Between Teacher Wages and Student Outcomes: The Importance of Alternative Labor Market Opportunities and Non-Pecuniary Variation. Review of Economics and Statistics 82 (3) 393-408

[iv] Figlio, D.N., Rueben, K. (2001) Tax Limits and the Qualifications of New Teachers. Journal of Public Economics. April, 49-71

See also:

Downes, T. A., Figlio, D. N. (1999) Do Tax and Expenditure Limits Provide a Free Lunch? Evidence on the Link Between Limits and Public Sector Service Quality. National Tax Journal 52 (1) 113-128

[v] Ondrich, J., Pas, E., Yinger, J. (2008) The Determinants of Teacher Attrition in Upstate New York. Public Finance Review 36 (1) 112-144