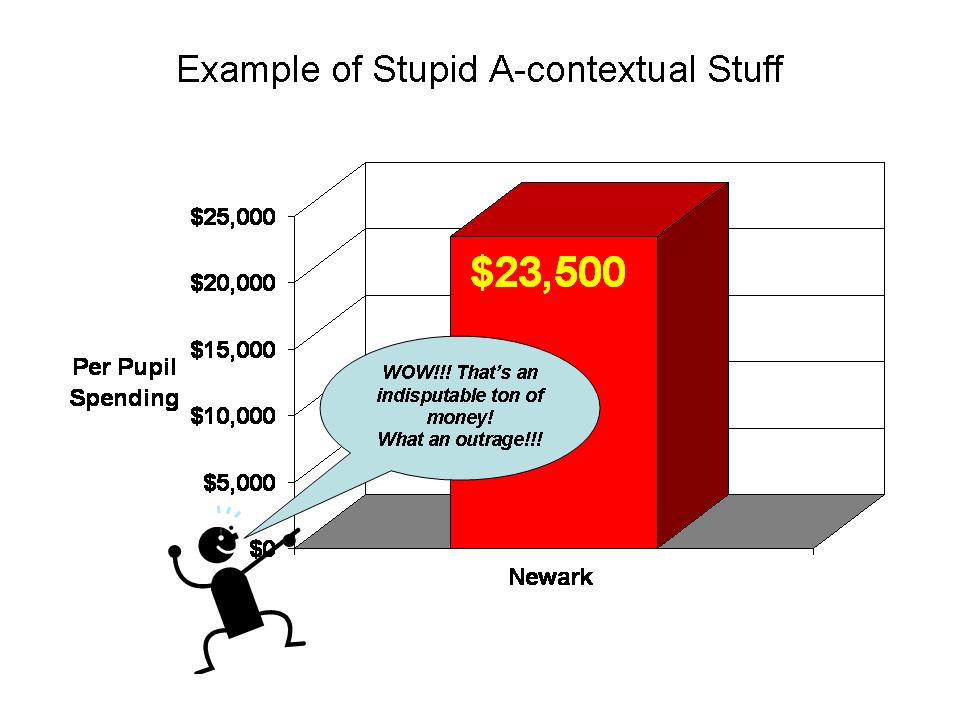

Anyone who’s been reading popular media or who watched any of NBC’s Education Nation now knows as a simple fact that Newark Public Schools spend $22,000 per pupil and that’s simply a disgracefully high amount! Just check out this Google search for “Newark” & “22,000” & “per-pupil.”

Along with this number come claims of this sort: Newark is one of, if not the, most expensive public school systems in the nation! It’s simply fact – truthiness – proofiness – or perhaps even factiness. Recent Newark claims are about as facty as these two other great claims from school finance lore:

- that Kansas City Missouri spent more than any other district in the nation for 10 years running (but of course still failed miserably); and

- that the average private per pupil cost is about $3,500 per year.

Coauthor Preston C. Green and I tear down the first of these “urban legends” of school finance in this article. I tear down the myth of the $3,500 private school in this report from 2009.

This post is about putting numbers into context. In the urban legends article above, Preston Green and I simply fact-check national data on per pupil spending and find that Kansas City came close to the top in one year in the early 1990s, but fell precipitously after that, as desegregation funding tapered off. The mythical private school number relies on a single report, from around 1999, of a very incomplete sample of “tuition” data, not spending data (and tuition does not cover full costs or spending) – yet references to that same number still persist. Time is part of the context of money!

So, where do these Newark spending figures come from, and why is it important to contextualize these numbers? First of all, the $22,000 number is a rounded figure based on the total current spending per pupil reported in the 2006-07 U.S. Census Fiscal Survey – elementary and secondary finances. The updated 2007-08 figure rises to $23,500. Wow… holy crap… that’s a lot, right?

So, we’re kind of starting with a “fact” (a number that can be linked to some reasonable source). That said, even this fact varies by source. Note that a per pupil spending figure has a numerator (spending) and a denominator (pupils). Both matter to how this number is calculated. The New Jersey Department of Education reports Newark Public Schools spending per pupil at around $16,000 to $17,000 during those same years. NJDOE also reports NPS enrollment well over 45,000. The implicit enrollment of NPS in the Census Fiscal Survey figures (backed out from total current spending and the per pupil estimate) is around 43,000. Census reports NPS enrollment at about 40,000 (but uses the 43,000 figure to generate the per pupil figure). So, this unexplained discrepancy is responsible for some of the difference. But this is all rather trivial quibbling to some extent. A much simpler, more transparent issue is the issue of context.
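To make the denominator point concrete, here is a rough sketch using only the rounded figures quoted above. It assumes the 43,000 implicit enrollment goes with the $23,500 (2007-08) figure, which the text does not state outright, and it uses 45,000 as a floor for the NJDOE count since the text only says "well over 45,000".

```python
# Rounded figures quoted in the post. Pairing them with a single year
# is an assumption made for illustration.
CENSUS_PER_PUPIL = 23_500      # Census current spending per pupil, 2007-08
IMPLICIT_ENROLLMENT = 43_000   # enrollment implied by the Census figures


def per_pupil(total_spending: float, enrollment: float) -> float:
    """Spending per pupil: numerator over denominator."""
    return total_spending / enrollment


# Back out the implied total (the numerator) from the published ratio.
total_spending = CENSUS_PER_PUPIL * IMPLICIT_ENROLLMENT

# Hold the numerator fixed and swap in the other enrollment counts.
enrollment_counts = [
    ("Census reported enrollment (about 40,000)", 40_000),
    ("Census implicit enrollment (about 43,000)", 43_000),
    ("NJDOE enrollment (floor of 45,000)", 45_000),
]

for label, enrollment in enrollment_counts:
    print(f"{label}: ${per_pupil(total_spending, enrollment):,.0f} per pupil")
```

Holding the numerator fixed, the per pupil figure moves by a couple of thousand dollars depending on whose head count is used. That is consistent with the enrollment discrepancy explaining only some of the gap to the NJDOE figure.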

Let’s walk through some examples. Here is how the media has been pitching Newark Public Schools spending:

One big bar on a graph, sitting out in space. The general public doesn’t have a particularly good idea of what that $23,500 means. So, they are simply told – Damn… that’s a lot – maybe even the most in the country! Either way, it’s a ton of money… which is unquestionably being wasted.

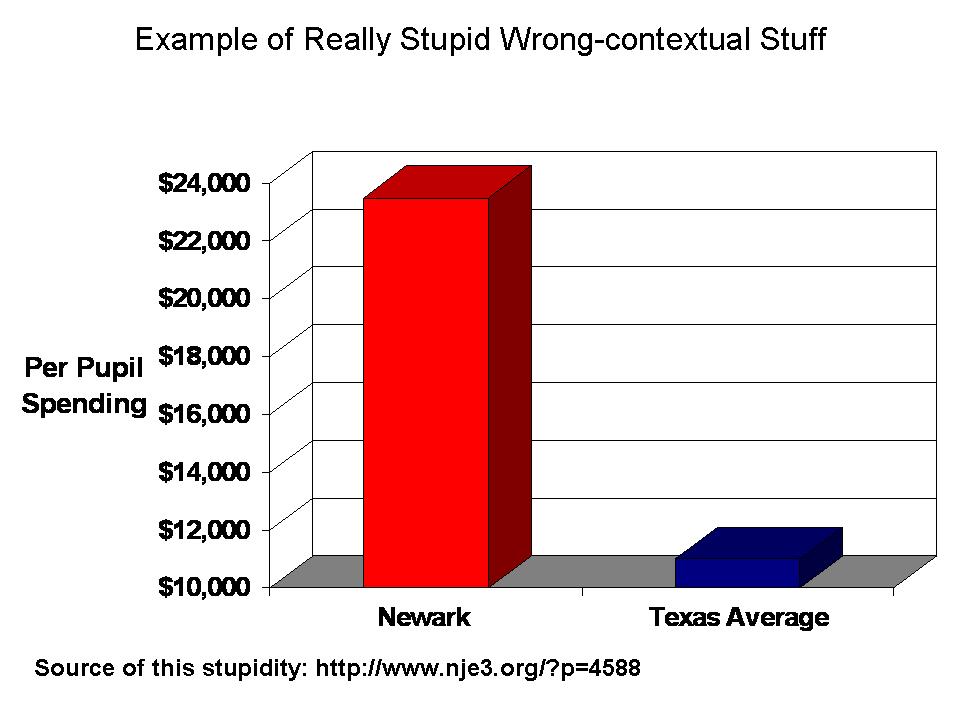

The other comparison we’ve been seeing and hearing in New Jersey is that Newark spends twice what Texas spends per pupil – and they get great outcomes and Newark… well… stinks… because they’re wasting it all. Here’s my stylized graph of that example (which you can find here: http://www.nje3.org/?p=4588)

Wow, that’s some really brilliant stuff there. For fun, I’ve used one of those fine visual tricks of cutting off the first $10,000 to emphasize the fact that Newark spends a ton, and Texas not much at all… Texas great… Newark stinky – expensive and stinky.
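To see how much work that cut-off axis is doing, here is a minimal sketch. It assumes Newark at the rounded $23,500 Census figure and takes the "twice Texas" claim literally, so the Texas value is an illustrative assumption rather than a reported number. `drawn_ratio` is a hypothetical helper name.

```python
def drawn_ratio(a: float, b: float, axis_start: float = 0.0) -> float:
    """Ratio of two bar heights as drawn when the axis begins at axis_start."""
    return (a - axis_start) / (b - axis_start)


newark = 23_500        # rounded Census figure quoted in the post
texas = newark / 2     # assumption: "twice what Texas spends", taken literally

honest = drawn_ratio(newark, texas)                        # axis starts at $0
truncated = drawn_ratio(newark, texas, axis_start=10_000)  # first $10,000 cut off

print(f"Axis from $0:      Newark bar is {honest:.1f}x the Texas bar")
print(f"Axis from $10,000: Newark bar is {truncated:.1f}x the Texas bar")
```

Under these assumptions, a true 2-to-1 difference is drawn as a gap of nearly 8 to 1.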

Setting aside this foolishness, how does Newark actually compare – in various contexts?

Is Newark the highest spending district in the nation? One of them?

Is Newark the highest spending district in the metropolitan area? (NYC Core based statistical area)

Is Newark even the highest spending in New Jersey?

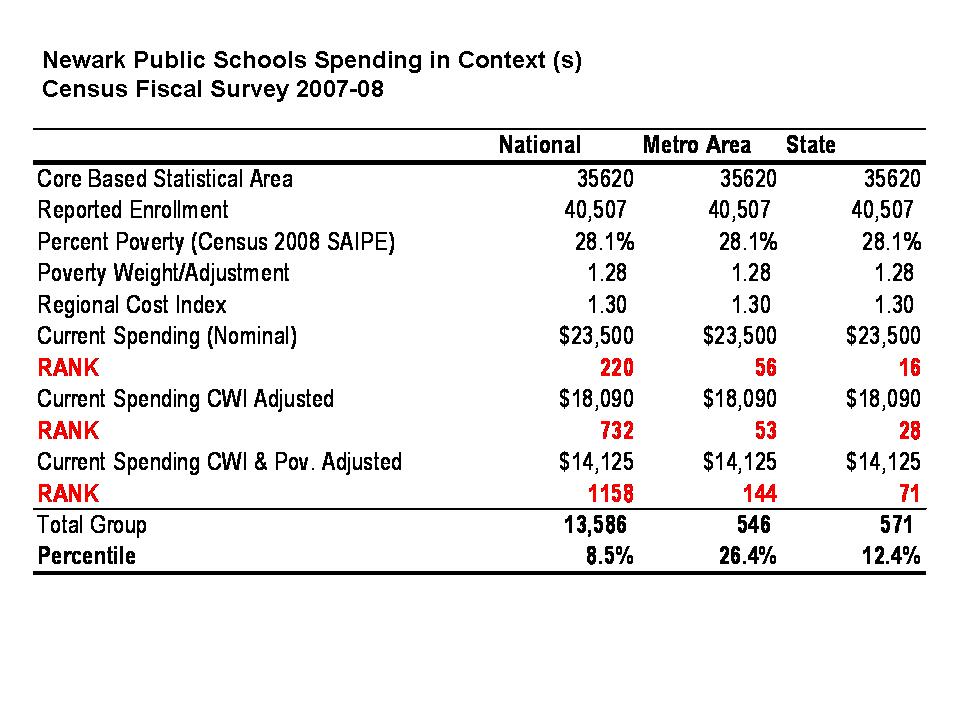

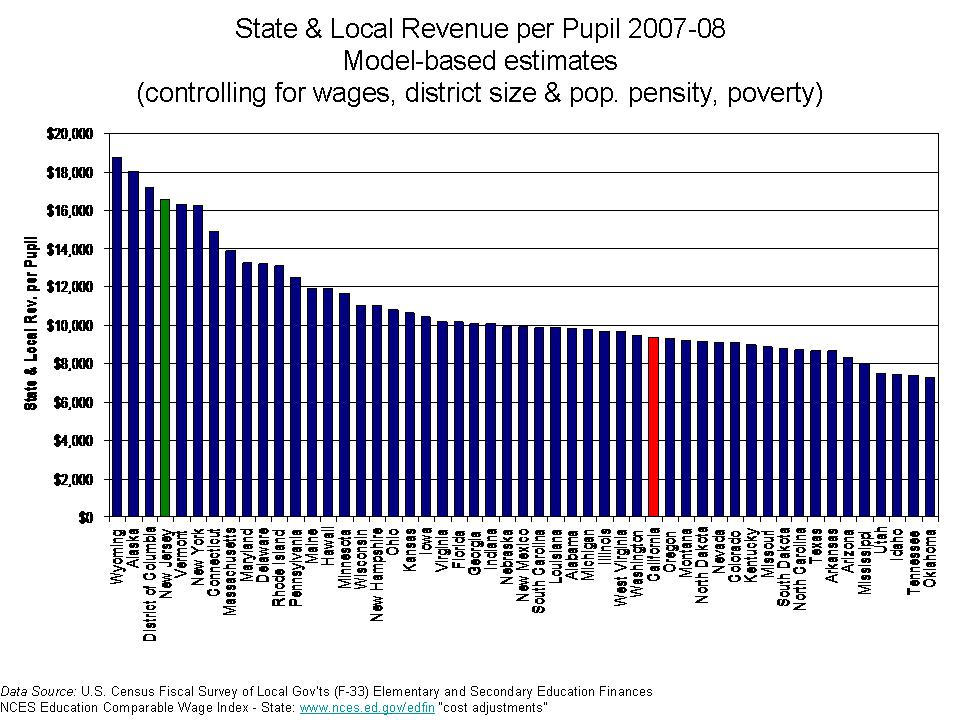

Here, I use the Census Fiscal Survey data from 2007-08 and walk through, for NPS, the same comparisons I did in the previous state ranking post.

This table shows the alternative comparisons. First, out of 13,500 or so districts nationally with sufficient data for the comparison, NPS ranked 220th without any cost or need adjustment. Not #1, but 220. That’s still pretty high – 220 out of 13,500 puts NPS in about the top 2%. However, simply adjust for regional differences in competitive wages, and NPS drops to 732, about the top 5%. Next, adjust for the additional needs of children in poverty (using a conservative adjustment as I did in the previous post) and Newark slides back to a rank of 1158 – still in the top 10%, but not top overall by any stretch.
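The mechanics behind this kind of re-ranking can be sketched as follows. This is one common way to apply such adjustments and is not necessarily the exact formula behind the table: spending is divided by a regional wage index, then by a pupil-need factor that grows with the poverty rate. The three districts, their values, and `POVERTY_WEIGHT` are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class District:
    name: str
    spending_per_pupil: float  # nominal current spending per pupil
    wage_index: float          # regional wage index, 1.0 = average labor market
    poverty_rate: float        # share of children in poverty, between 0 and 1


# Hypothetical extra cost per child in poverty; the post does not state its weight.
POVERTY_WEIGHT = 0.5


def nominal(d: District) -> float:
    return d.spending_per_pupil


def wage_adjusted(d: District) -> float:
    """Deflate spending by the regional cost of hiring staff."""
    return d.spending_per_pupil / d.wage_index


def wage_and_poverty_adjusted(d: District) -> float:
    """Additionally deflate by a need factor that rises with poverty."""
    return wage_adjusted(d) / (1 + POVERTY_WEIGHT * d.poverty_rate)


def ranking(districts, measure):
    """District names ordered from highest to lowest on the given measure."""
    return [d.name for d in sorted(districts, key=measure, reverse=True)]


# Made-up districts, not real data.
districts = [
    District("Urban A", 20_000, 1.25, 0.30),
    District("Suburb B", 18_000, 1.20, 0.05),
    District("Rural C", 15_000, 0.90, 0.15),
]

for label, measure in [
    ("Nominal", nominal),
    ("Wage adjusted", wage_adjusted),
    ("Wage + poverty adjusted", wage_and_poverty_adjusted),
]:
    print(f"{label}: {ranking(districts, measure)}")
```

In this toy example the high-wage, high-poverty district starts at the top of the nominal ranking and ends at the bottom once both adjustments are applied. That is the same direction NPS moves in the table, although with invented numbers the size of the shift means nothing.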

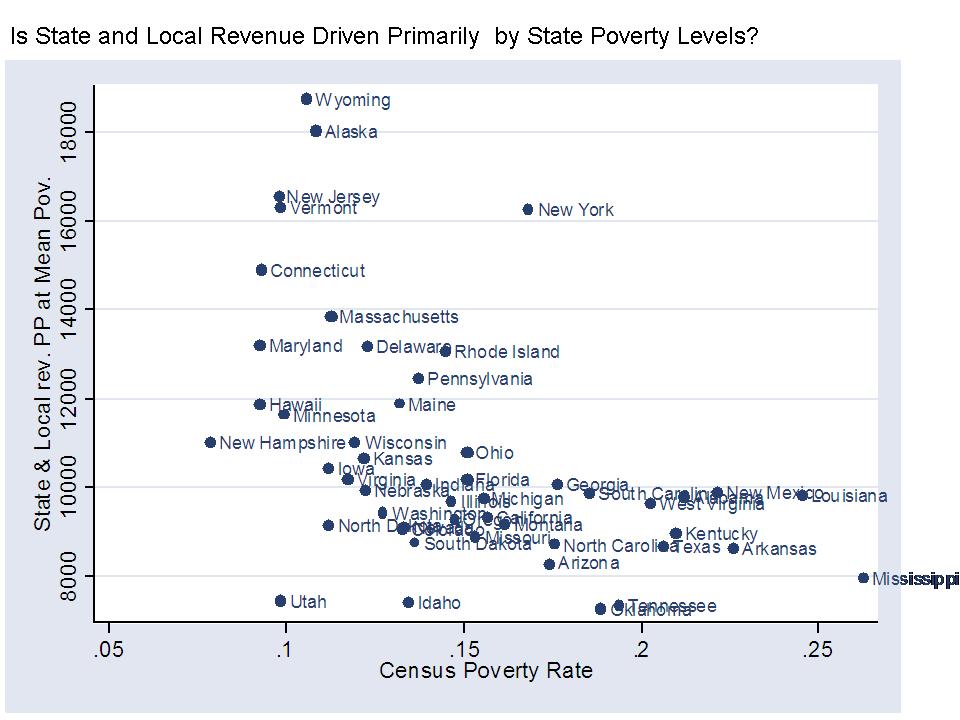

Now, as I noted in my previous post, cross state – national comparisons are tough because it’s particularly hard to equate the costs of hiring teachers and other school staff across regions and it’s also hard to equate poverty rates from one location to another.

So then, let’s focus on the NY metro area. For starters, NPS comes in 56th with no adjustments, and 53rd when we adjust for wage variation (because the NCES wage index does carve the NY metro area into a handful of labor markets). Either way, NPS is not at the top of even the NY labor market in spending. Among the 546 or so districts in the NY metro, NPS hovers around the top 10% – on the edge of it. If we take the additional step of adjusting for children in poverty, NPS drops to a rank of 144 out of 546 – just outside the top 25%.
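For anyone who wants to check the "top X%" statements, the arithmetic is just rank divided by the number of districts. The sketch below uses the ranks quoted in this post. The district counts (13,500 and 546) are the approximate "or so" figures from the text, so the percentages are approximate too.

```python
def top_share(rank: int, n_districts: int) -> float:
    """Percent of districts ranked at or above the given rank."""
    return 100 * rank / n_districts


# (comparison, NPS rank, approximate number of districts), all from the post.
comparisons = [
    ("National, unadjusted", 220, 13_500),
    ("National, wage adjusted", 732, 13_500),
    ("National, wage + poverty adjusted", 1_158, 13_500),
    ("NY metro, unadjusted", 56, 546),
    ("NY metro, wage adjusted", 53, 546),
    ("NY metro, wage + poverty adjusted", 144, 546),
]

for label, rank, n in comparisons:
    print(f"{label}: rank {rank} of {n:,} = top {top_share(rank, n):.1f}%")
```

The national ranks work out to roughly the top 1.6%, 5.4%, and 8.6%. The metro ranks work out to roughly the top 10.3%, 9.7%, and 26.4%.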

NPS ranks somewhat higher within the state of NJ than in the broader metro area, mainly because the highest spending districts in the NY metro area tend to be in New York State – Westchester and Rockland Counties and on Long Island. Yeah… NY districts spend much more than New Jersey districts in the NY metro area, and as I’ve shown previously, have much higher salaries as well.

So there you have it. Newark per pupil spending in context. Newark Public Schools are a relatively high spending district, which provides the district with more opportunities to assist its high need population than other urban, high poverty, high minority concentration districts around the country. But Newark is not some massive outlier, the most expensive district in the nation.

Note that these analyses do not question the Census per pupil spending figure. I simply accept that number – because I’ve accepted the Census data for all others in the sample. It would be inappropriate for me to “audit” NPS spending without looking for similar issues in other cities and states. The number may be screwed up, which is why I tend to stay away from the Census data for individual district analysis, without reconciling across other sources. But, it is what it is, and these data are generally pretty good (but for some specific states).

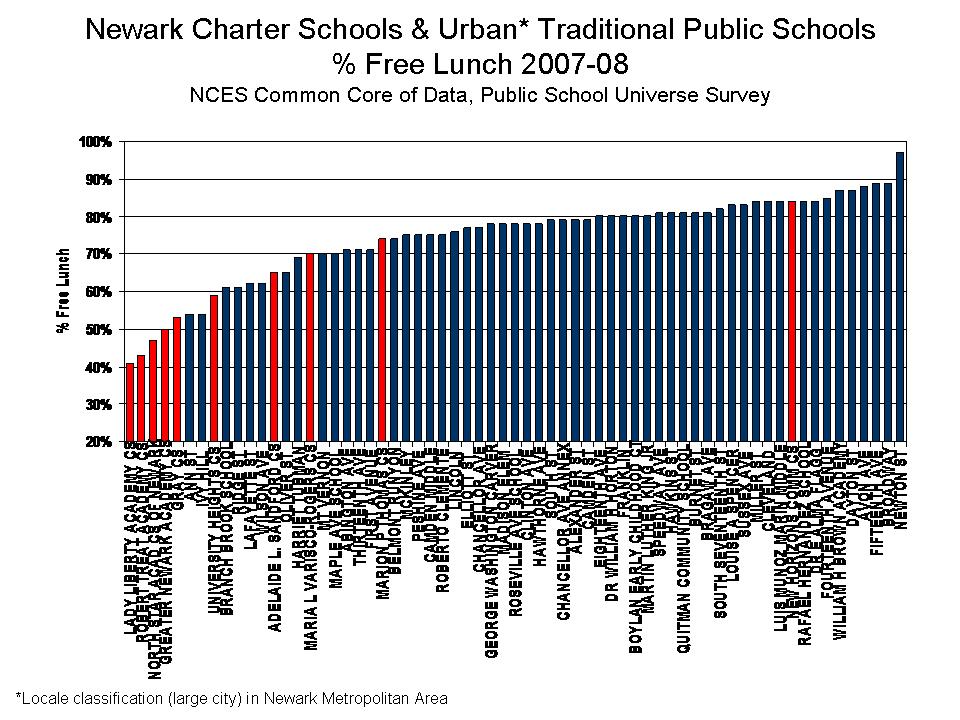

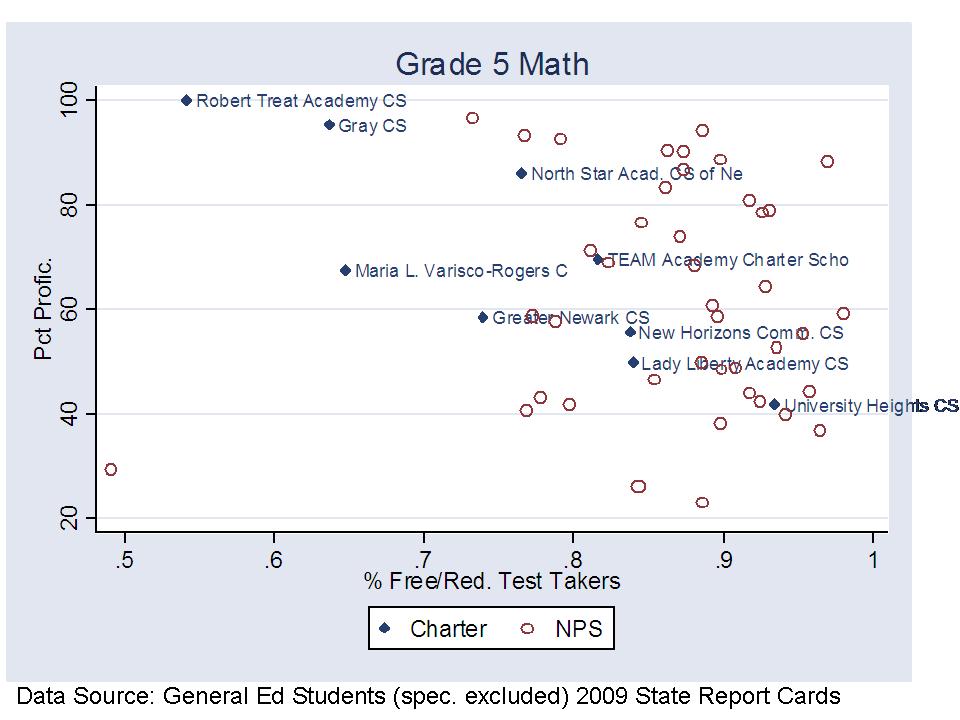

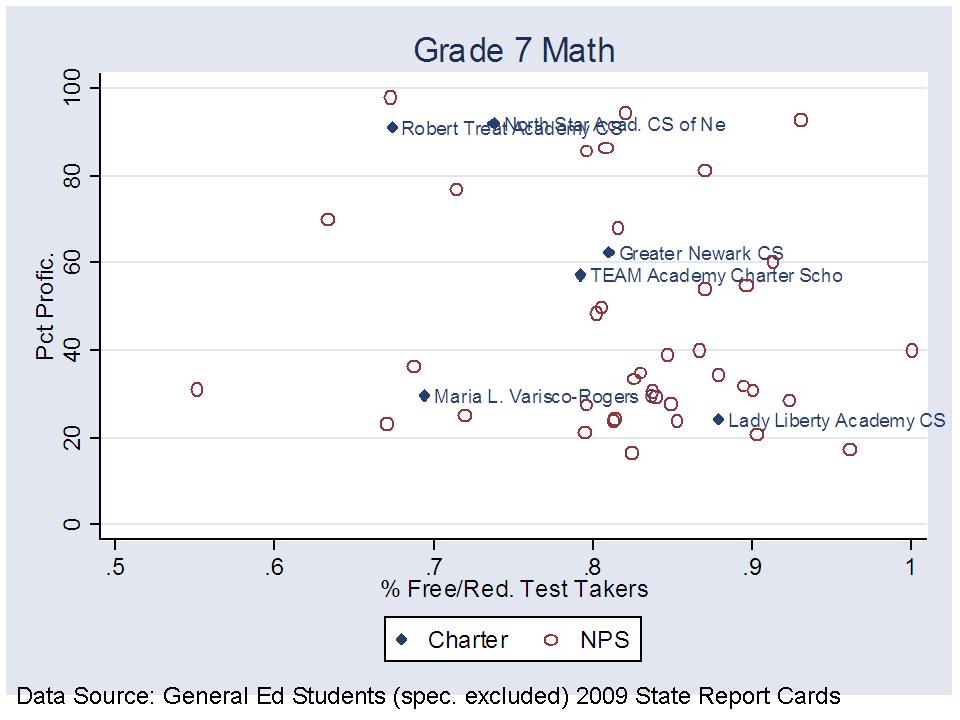

Finally, while I have shown here that NPS is still a relatively high spender, even after adjustments, I’ve not tackled the outcome question. What do we get for this funding? I would argue that pundits have grossly misrepresented this side of the equation as well. Pundits argue that NPS has a low graduation rate and that even that graduation rate is inflated because more kids graduate than actually pass the high school assessments (using the alternative assessment to get around the supposed gate). Those same pundits are quick to point out the very high graduation rates of the few secondary charter schools in NJ – as a good thing. I show in this much older post that these same charters, which graduate 97% of their students, actually had lower high school math assessment scores than poor urban districts (which had lower grad rates). Pot? Kettle? ???? Perhaps more on the outcome issue at a later point in time.

In the meantime, for a thorough discussion of the relationship between school funding reforms and student outcomes, see this article (which includes some discussion of New Jersey):

Baker, B.D., Welner, K. School Finance and Courts: Does Reform Matter, and How Can We Tell? Teachers College Record

http://www.tcrecord.org/content.asp?contentid=16106

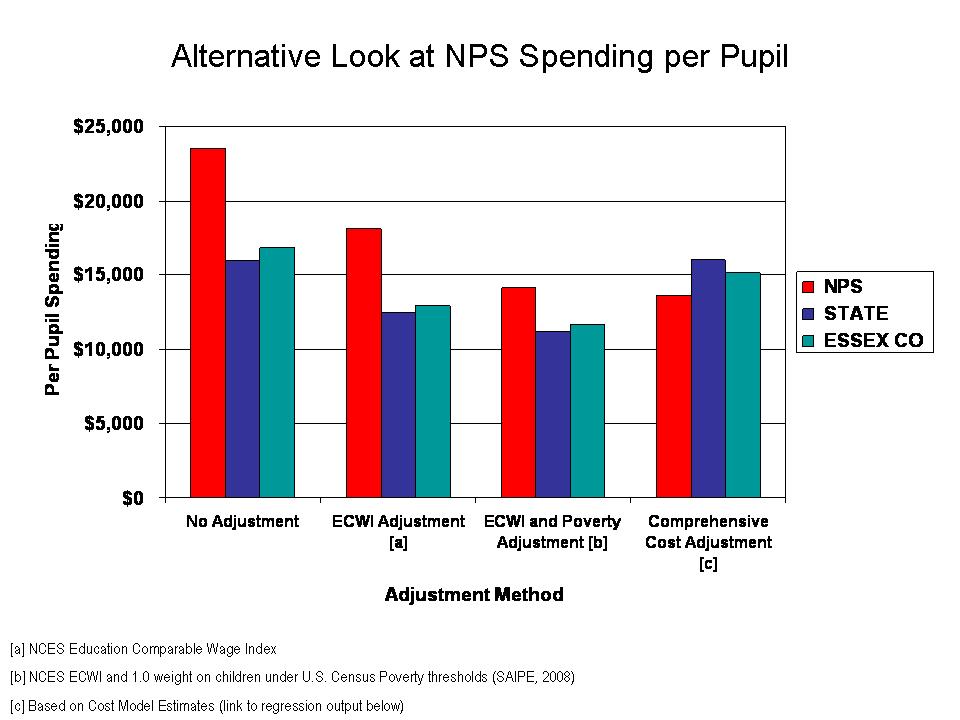

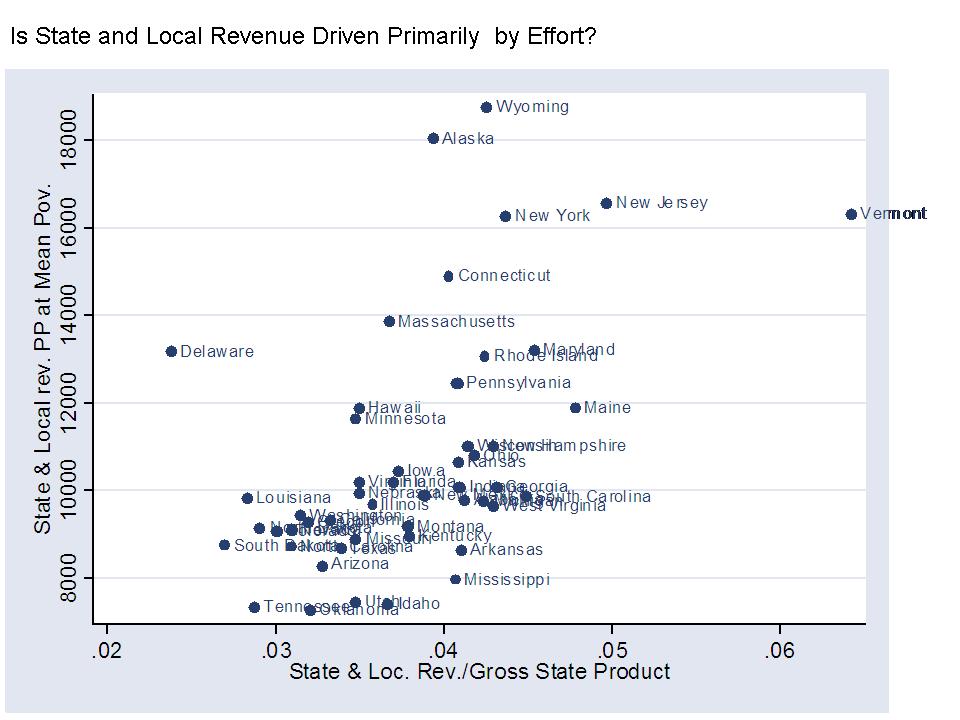

UPDATE: Here’s one additional really important comparison for NPS. The following graph compares NPS to the 216 K-12 NJ districts for which sufficient data were available for this analysis. This graph starts by comparing NPS to the other districts in the state and in Essex County using each of the above methods for adjusting spending figures – ECWI and then the ECWI and a poverty weight. But, clearly even the latter of these two approaches doesn’t catch all of the cost differences faced by Newark Public Schools. The final comparison in this graph includes a “comprehensive” cost adjustment based on a statistical model of New Jersey school districts – a “cost function” model, an approach which has been used extensively in economic research on education costs (Google Scholar Search on Cost Function Research of William Duncombe and John Yinger).

Here we see that if we account for the various costs faced by NPS, NPS actually has less per pupil than either the state average or other districts in Essex County.

Here is a link to the cost models. Note: for this analysis I actually adopted a very conservative assumption to generate the cost index for NPS. The statewide average cost index is 1.0 and NPS receives a cost index around 1.7, indicating 70% higher than state average costs, largely as a function of the student population served, but also of being in a higher wage labor market. The conservative assumption was that any variation in spending picked up by the model that was associated only with being an Abbott district (and not accounted for by differences in outcomes) was treated as “inefficiency,” and therefore not counted toward Abbott district cost index values (a significant portion of the Abbott bump in funding was effectively removed as if “waste” even though this is a suspect assumption).
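As a back-of-the-envelope illustration of what a cost index of 1.7 does to a spending figure, the sketch below divides nominal spending by the index. It assumes the rounded $23,500 Census figure as the nominal input, which may not be the figure used in the graph, and `cost_adjusted` is a hypothetical helper name.

```python
def cost_adjusted(spending_per_pupil: float, cost_index: float) -> float:
    """Spending expressed in state-average-cost dollars (index 1.0 = average)."""
    return spending_per_pupil / cost_index


NPS_NOMINAL = 23_500    # assumption: rounded 2007-08 Census figure from the post
NPS_COST_INDEX = 1.7    # approximate index reported in the post

adjusted = cost_adjusted(NPS_NOMINAL, NPS_COST_INDEX)
print(f"Nominal:       ${NPS_NOMINAL:,.0f} per pupil")
print(f"Cost adjusted: ${adjusted:,.0f} per pupil")
```

On those assumptions, the headline number shrinks to a bit under $14,000 in state-average-cost dollars. A district with an index of 1.0 keeps its nominal figure unchanged.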