Today’s (okay…yesterday… I got caught up in a few other things) New Jersey headlines once again touted the supposed successes of New Jersey Charter Schools:

http://www.nj.com/news/index.ssf/2011/01/gov_christie_releases_study_sh.html

The Star Ledger reporters, among others, were essentially reiterating the information provided them by the New Jersey Department of Education. Here’s their story.

http://www.state.nj.us/education/news/2011/0118chart.htm

And here’s a choice quote from the press release:

“These charter schools are living proof that a firm dedication to students and a commitment to best education practices will result in high student achievement in some of New Jersey’s lowest-income areas,” said Carlos Perez, chief executive officer of the New Jersey Charter School Association. He pointed to NJASK data for third grade Language Arts, where more than half the charters outperformed the schools in their home districts, and of those, more than 75 percent were located in former Abbott districts.

No spin there. Right? Just a balanced summary of achievement data, with thoughtful interpretation of what they might actually mean. Not really.

There are many, many reasons why the comparisons released yesterday are deeply problematic, and well, quite honestly, pretty darn meaningless. I could not have said it better than Matt DiCarlo of Shanker Blog did here:

“Unfortunately, however, the analysis could barely pass muster if submitted by a student in one of the state’s high school math classes (charter or regular public).”

Here are some guidelines I have posted in the past, regarding appropriate ways to compare New Jersey Charter Schools to their host districts on various measures including outcome measures:

1. When comparing across schools within poor urban settings, compare on the basis of free lunch, not free or reduced, so as to pick up variation across schools. The reduced lunch income threshold is too high to pick up variation.

2. When comparing free lunch rates across schools, either a) compare against individual schools and nearest schools, or b) compare against district averages by GRADE LEVEL. Subsidized lunch rates decline in higher grade levels (for many reasons, to be discussed later). Most charter schools serve elementary and/or middle grades. As such they should be compared to traditional public schools of the same grade level. High school students bring district averages down.

3. When comparing test score outcomes using NJ report card data, be sure to compare General Test Takers, not Total Test Takers. Total Test Takers include scores/pass rates for children with disabilities. But, as we have seen time and time again, in charts above, Charters tend not to serve these students. Therefore, it is best to exclude scores of these students from both the Charter Schools and Traditional Public Schools.

Today’s (okay, yesterday – publication lag) primary violation involves #3 above, but also relates to the first two basic rules. Let’s do a quick walk through, using the 2009 data, because the 2010 school level school reports data are not yet posted on the NJDOE web site. The bottom line is that it is relatively meaningless to simply compare raw scores or proficiency rates of charter schools to host district schools – as done by NJDOE and the Star Ledger. That is, it is meaningless unless they actually serve similar student populations, which they do not.

Below, I walk through a few quick examples of student population differences in Newark, home to the state’s high-flying charter schools (North Star Academy and Robert Treat Academy). Next, I construct a statistical model of school performance including New Jersey Charter schools and traditional public schools in their host district, controlling for student demographics and location. I first used this same model here: Searching for Superguy in New Jersey. I use that model to show adjusted performance comparisons on a few of the tests, and then I use a variation of that model to test the proficiency rate difference – on average statewide – between charter schools and schools in the host district. Finally, I address one additional factor which I am unable to fully control for in the model – the fact that some New Jersey Charter Schools – high performing ones – seem to have unusually high rates of cohort attrition between grade 6 and 8, concurrent with rising test scores. I raise this point because pushing out of students is not an option available to traditional public schools. In fact, it is the traditional public schools that must take back those students pushed out.

Demographic Examples from Newark

Here are a few slides from previous posts on the demography of Newark Charter Schools in particular, compared to other Newark Public Schools. Here are the shares of kids who qualify for free lunch by school in Newark (city boundaries). Clearly, most of the charters fall toward the left hand side of the graph with far fewer of the lowest low-income children.

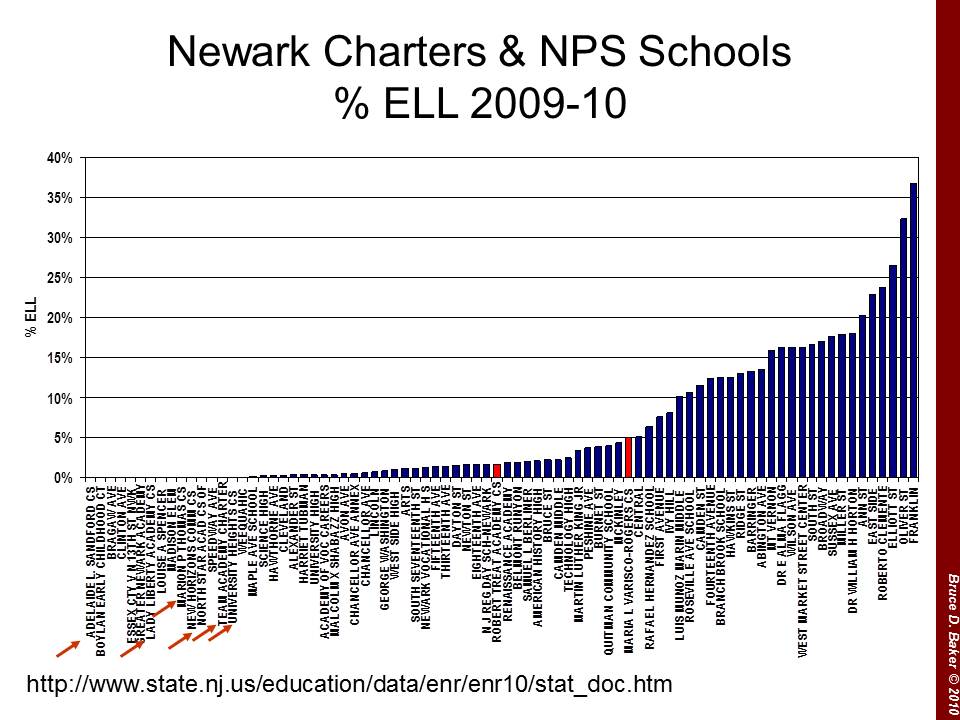

The shares of English Language Learners look similar if not more dramatic. Many NPS schools have very high rates of English Language Learners while few charters have even a modest share.

Finally, here’s a 4 year run of the most recent available special education classification rate data (More recent years of data have a dead link on the classification rates). This graph compares Essex County charter schools with Essex County public school districts. Charter Schools have invariably low special education rates, but for those focused on children with disabilities.

One cannot reasonably ignore these differences when comparing performance outcomes of kids across schools. It’s just silly and not particularly useful.

The Outcomes Corrected for the Demographics

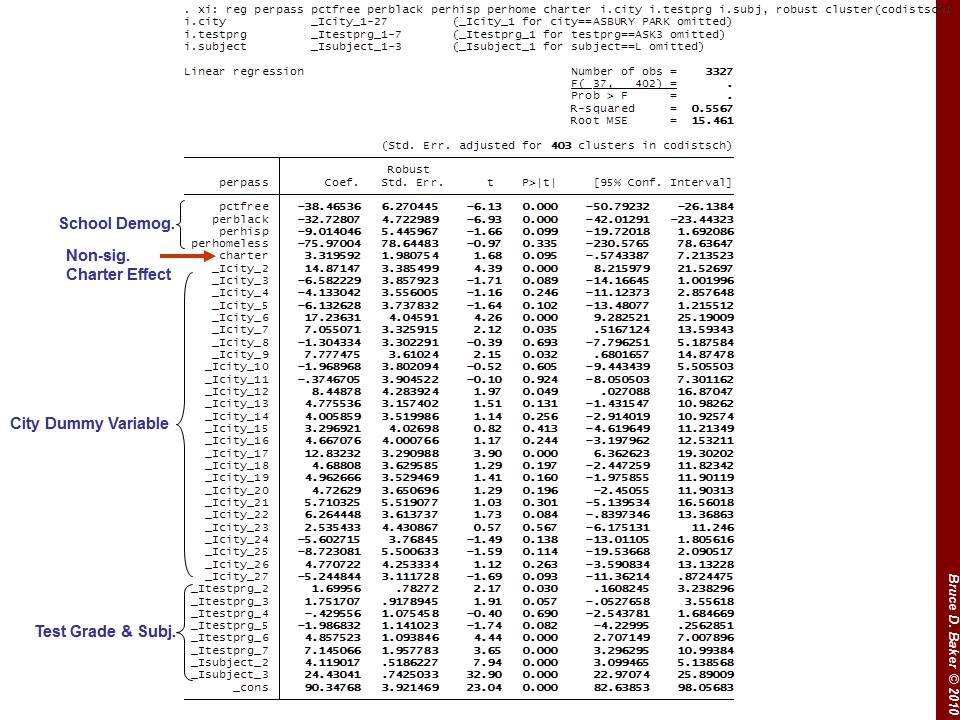

So then, what happens if we actually use some statistical adjustments to evaluate whether the charter schools outperform (on average proficiency rate) other schools in the same city on the same test? Well, I’ve done this for charter data from 2009 and previous years and will do it again for the 2010 data when available. I use variables available in the Fall Enrollment Files and from the School Report Card and information on school location from the NCES Common Core of Data in order to create a model of the expected scores for each charter school and each other school in the same city. In the model, I use only the performance of GENERAL TEST TAKERS, so as to exclude those scores of special education students (who, for the most part, don’t attend the charter schools). The model:

Outcome = f(Poverty, Race, Homelessness, City, Tested Grade, Subject)

I use the model to create a predicted performance level (proficiency rate) for each school, considering which grade level test we are looking at, in which subject, the race/ethnicity of the students (where Hispanic concentration is highly correlated with available ELL data, and Hispanic concentration data are more consistently reported), the share of students qualifying for free lunch, the percent identified as homeless and the city of location for the school. That is, each charter school is effectively compared against only other schools in the same geographic context (city).

This is a CRUDE model, which can’t really account for other factors, such as the possibility that some charter schools actually shed, or push out, lower performing students over time. More on that below. So, for each school, I get a predicted performance level – what that school is expected to achieve given the children it serves and the location. I can then compare the actual performance to the predicted performance to determine whether the school beats expectations or falls below expectations.
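For readers who want to see the mechanics, here is a minimal sketch of the "actual versus predicted" idea. It is not my actual model: the school records are made up for illustration, the function name `beat_the_odds` is hypothetical, and the city, tested grade and subject terms are left out to keep it short.

```python
import numpy as np

# Hypothetical, made-up school records for illustration only (NOT NJDOE data).
# Columns: pct_free_lunch, pct_hispanic, pct_homeless, proficiency_rate
schools = np.array([
    [85.0, 40.0, 3.0, 48.0],
    [70.0, 25.0, 1.0, 62.0],
    [90.0, 55.0, 4.0, 41.0],
    [55.0, 10.0, 0.5, 75.0],
    [78.0, 35.0, 2.0, 58.0],
    [65.0, 20.0, 1.5, 64.0],
])

def beat_the_odds(data):
    """Fit an ordinary least squares model of proficiency on demographics.

    Returns (predicted, residual), where residual = actual - predicted.
    A positive residual means the school beats its expected performance.
    """
    # Design matrix: intercept column plus the demographic columns.
    X = np.column_stack([np.ones(len(data)), data[:, :-1]])
    y = data[:, -1]
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    predicted = X @ coef
    return predicted, y - predicted

predicted, residual = beat_the_odds(schools)
for i, (p, r) in enumerate(zip(predicted, residual)):
    label = "beats expectations" if r > 0 else "falls short"
    print(f"school {i}: predicted {p:.1f}, actual {schools[i, -1]:.1f} -> {label}")
```

Because the fit includes an intercept, the residuals average out to zero by construction. That is part of why a scatter of schools around the prediction line, on its own, says nothing about whether one group is better on average.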

The next two graphs provide a visual representation of schools beating the odds and schools under-performing with respect to expectations. Charters are identified in red and named. Blue circles are traditional public schools in the same district. Note that there are about the same number of charters beating expectations as there are falling short. The same is true for non-charters. On average, both groups appear to be about average.

8th Grade Math performance looks much like 4th grade. Charters are evenly split between “good” and “bad,” as are the traditional public schools in their host districts.

The Overall Charter Difference (Or Not?)

Now, the above graphs don’t directly test whether the average charter performance is better or worse than the average non-charter performance on the same test, same grade and in the same location. But, conducting that test (for these purposes) is as simple as adding into the statistical model an indicator of whether a school is a charter school. Doing so creates a simple (oversimplified, in fact) comparison of the average performance of charters to the average performance of non-charters in the same city (on the same test, in the same grade level), while “correcting” statistically for differences in the student population. I SHOULD POINT OUT THAT ONE CAN NEVER REALLY FULLY CORRECT FOR THOSE DIFFERENCES!

Using this oversimplified method, the analysis (statistical output) below shows that the charter average proficiency rate is about 3% higher than the non-charter average – BUT THAT DIFFERENCE IS NOT STATISTICALLY SIGNIFICANT. That is, there really isn’t any difference. THAT IS, THERE REALLY ISN’T ANY DIFFERENCE.
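A rough sketch of what "adding a charter indicator" means in practice, assuming plain ordinary least squares with conventional standard errors. The data here are simulated, not the NJ report card data, and the helper `ols_with_se` is a hypothetical name. In the simulation, proficiency depends on poverty and not at all on charter status.

```python
import numpy as np

def ols_with_se(X, y):
    """Return OLS coefficients and conventional (homoskedastic) standard errors."""
    n, k = X.shape
    xtx_inv = np.linalg.inv(X.T @ X)
    coef = xtx_inv @ X.T @ y
    resid = y - X @ coef
    sigma2 = resid @ resid / (n - k)          # residual variance estimate
    se = np.sqrt(np.diag(sigma2 * xtx_inv))
    return coef, se

# Simulated schools (an assumption for illustration, not real data).
rng = np.random.default_rng(0)
n = 200
pct_free_lunch = rng.uniform(40, 95, n)
is_charter = (rng.uniform(size=n) < 0.2).astype(float)
# Simulated truth: poverty matters, charter status does not.
proficiency = 100 - 0.6 * pct_free_lunch + rng.normal(0, 10, n)

# Columns: intercept, poverty control, charter indicator.
X = np.column_stack([np.ones(n), pct_free_lunch, is_charter])
coef, se = ols_with_se(X, proficiency)

t_charter = coef[2] / se[2]
print(f"charter coefficient: {coef[2]:.2f} points, t = {t_charter:.2f}")
```

The coefficient on the indicator is the estimated charter difference after adjusting for the controls. As a rule of thumb, a t-statistic smaller than about 2 in absolute value means the difference cannot be distinguished from zero at the conventional 5% level.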

Some Other Intervening Factors: Cohort Attrition, or Pushing Out

As I mentioned above, even the “tricky statistics” I used cannot sort out such things as a school that systematically dumps, or pushes out lower performing students, where those lower performing students end up back in the host district. Such an effect would simultaneously boost the charter performance and depress the host district performance (if enough kids were pushed back). I’ve written on this topic previously. So, I’ll reuse some of the older stuff – which isn’t really that old (last Fall).

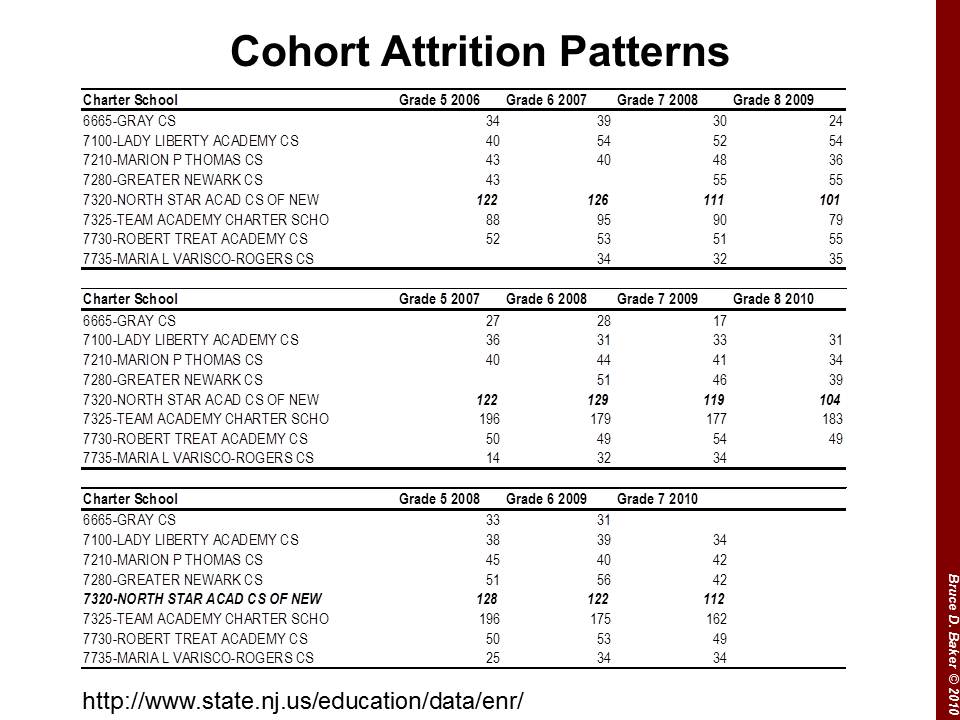

In this figure, we can see that for the 2009 8th graders, North Star began with 122 5th graders and ended with 101 in 8th. The subsequent cohort also began with 122, and ended with 104. These are sizable attrition rates. Robert Treat, on the other hand, maintains cohorts of about 50 students – non-representative cohorts indeed – but without the same degree of attrition as North Star. Now, a school could maintain cohort size even with attrition if that school were to fill vacant slots with newly lotteried-in students. This, however, is risky to the performance status of the school, if performance status is the main selling point.
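To put numbers on "sizable," here is the arithmetic for the two North Star cohorts, using only the enrollment counts quoted above. The function name `attrition_rate` is just a label for this sketch.

```python
def attrition_rate(start, end):
    """Share of the starting cohort no longer enrolled at the end."""
    return (start - end) / start

# (5th grade enrollment, 8th grade enrollment), as quoted in the text.
cohorts = {
    "North Star, 2009 8th graders": (122, 101),
    "North Star, subsequent cohort": (122, 104),
}

for name, (start, end) in cohorts.items():
    print(f"{name}: {attrition_rate(start, end):.1%}")
# North Star, 2009 8th graders: 17.2%
# North Star, subsequent cohort: 14.8%
```

Note that these are net changes in enrollment. If any vacated seats were refilled, the share of original students who left would be higher than these figures.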

Here, I take two 8th grade cohorts and trace them backwards. I focus on General Test Takers only, and use the ASK Math assessment data in this case. Quick note about those data – Scores across all schools tend to drop in 7th grade due to cut-score placement (not because kids get dumber in 7th grade and wise up again in 8th). The top section of the table looks at the failure rates and number of test takers for the 6th grade in 2005-06, 7th in 2006-07 and 8th in 2007-08. Over this time period, North Star drops 38% of its general test takers. And, cuts the already low failure rate from nearly 12% to 0%. Greater Newark also drops over 30% of test takers in the cohort, and reaps significant reductions in failures (partially proficient) in the process.

The bottom half of the table shows the next cohort in sequence. For this cohort, North Star sheds 21% of test takers between grade 6 and 8, and cuts failure rates nearly in half – starting low to begin with (starting low in the previous grade level, 5th grade, the entry year for the school). Gray and Greater Newark also shed significant numbers of students and Greater Newark in particular sees significant reductions in share of non(uh… partially)proficient students.

My point here is not that these are bad schools, or that they are necessarily engaging in any particular immoral or unethical activity. But rather, that a significant portion of the apparent success of schools like North Star is a) attributable to the demographically different population they serve to begin with and b) attributable to the patterns of student attrition that occur within cohorts over time.

Understanding Differing Perspectives

Some will say, why should I care if charters are producing higher outcomes with similar kids? What matters to me is that they are producing higher outcomes! Anyone who produces higher outcomes in Newark or Trenton should be applauded, no matter how they do it. It’s one more high performing school where there wasn’t one previously.

It is important to understand that comparisons of student outcomes that ignore differences in student populations reward – in the public eye – those schools that manage to find a way to serve more advantaged populations, either by achieving a non-representative initial lottery pool or by selective attrition. As a result, there is a disincentive for charter operators to actually make a greater effort to serve higher need populations – the ones who really need it! And there are many out there who see this as their real mission. Those charter operators who do try to serve more ELL children, more children in severe multi-generational poverty, and children with disabilities often find themselves answering tough questions from their boards of directors and the media regarding why they can’t produce the same test scores as the high-flying charter on the other side of town. These are not good incentives from a public policy perspective. They are good for the few, not the whole.

Further, one’s perspective on this point varies depending on whether one is a parent looking for options for his/her own child, or a policymaker looking for “scalable” policy options for improving educational opportunities for children statewide. From a parent (or child) perspective, one is relatively unconcerned whether the positive school effect is a function of selectivity of peer group and attrition, so long as there is a positive effect. But, from a public policy perspective, the “charter model” is only useful if the majority of positive effects are not due to peer group selectivity and attrition, but rather to the efficacy and transferability of the educational models, programs and strategies. Given the uncommon student populations served by many Newark charters and even more uncommon attrition patterns among some… not to mention the grossly insufficient data… we simply have no way of knowing whether these schools can provide insights for scalable reforms.

As they presently operate, however, many of the standout schools do not represent scalable reforms. And on average, New Jersey charters are still… just… average.
